Red Hat blog

If you're a Site Reliability Engineer, or do similar work, you've probably heard about eBPF. You might also have tried out a few bcc or bpftrace tools. Have you wondered how you can run these tools 24/7, log historical data, and set alerts based on the measured metric values? This post will guide you through setting up Performance Co-Pilot, our monitoring solution for RHEL, and enabling eBPF-sourced metrics on RHEL 8.

What is Performance Co-Pilot?

Performance Co-Pilot (PCP) is a system performance monitoring toolkit. It follows the Unix design philosophy of multiple small, focussed components that work together:

- pmcd: The Performance Metrics Collection Daemon collects performance metrics from different PMDAs
- pmda: Each Performance Metrics Domain Agent gathers metrics from a specific domain, for example from the /proc filesystem, from the MySQL database server or from bcc tools
- pmie: The Performance Metrics Inference Engine connects to pmcd and performs actions based on rules (for example “run this shell script if the load average of the last 5 minutes was above 2”)
- pmlogger: connects to pmcd and creates archive logs of metric values
- pmproxy: acts as a protocol proxy, provides a REST API and exports all metrics in the OpenMetrics format (in other words, you can scrape PCP metrics with Prometheus or OpenShift Monitoring)
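To give a feel for what a scraper does with OpenMetrics text, here is a minimal Python sketch that parses sample lines into (name, labels, value) tuples. The sample text, including the metric name spelling and the instname label, is made up for illustration and only follows the general OpenMetrics text layout; it is not captured pmproxy output.

```python
import re
from typing import Dict, List, Tuple

# Matches "name{labels} value" or "name value"; anything after the value is ignored.
SAMPLE_LINE = re.compile(r'^([A-Za-z_:][A-Za-z0-9_:]*)(?:\{(.*)\})?\s+(\S+)')
# Matches one key="value" pair inside the braces.
LABEL = re.compile(r'([A-Za-z_][A-Za-z0-9_]*)="((?:[^"\\]|\\.)*)"')


def parse_openmetrics(text: str) -> List[Tuple[str, Dict[str, str], float]]:
    """Parse OpenMetrics-style text into (metric name, labels, value) tuples."""
    samples = []
    for line in text.splitlines():
        line = line.strip()
        # Skip blank lines and "# HELP" / "# TYPE" style comment lines.
        if not line or line.startswith("#"):
            continue
        match = SAMPLE_LINE.match(line)
        if match is None:
            continue
        name, raw_labels, value = match.groups()
        labels = dict(LABEL.findall(raw_labels or ""))
        samples.append((name, labels, float(value)))
    return samples


# Hypothetical scrape result, invented for this example.
SAMPLE_TEXT = """\
# HELP disk_dev_write_bytes per-disk count of bytes written
# TYPE disk_dev_write_bytes counter
disk_dev_write_bytes{instname="nvme0n1"} 104857
disk_dev_write_bytes{instname="sda"} 0
"""

for name, labels, value in parse_openmetrics(SAMPLE_TEXT):
    print(name, labels, value)
```

In practice Prometheus or OpenShift Monitoring does this parsing for you; the sketch only shows that each scraped line carries a metric name, optional labels and a numeric value.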

For this post, we’ll focus on the different PMDAs. After all, we want to ingest eBPF metrics into our monitoring tool.

Let’s get started by installing PCP and reading our first metric:

    $ sudo dnf install -y pcp-zeroconf
    $ pmval disk.dev.write_bytes

    metric:    disk.dev.write_bytes
    host:      agerstmayr-thinkpad
    semantics: cumulative counter (converting to rate)
    units:     Kbyte (converting to Kbyte / sec)
    samples:   all

          nvme0n1                   sda
              0.0                   0.0
            6219.                   0.0
              0.0                   0.0
              0.0                   0.0
            47.94                   0.0
              0.0                   0.0

This output shows the write throughput of each disk in KB/s, in close to real time. The command reports the metric values every second until you stop it by pressing Ctrl + C. The same command can also read metric values from a different machine (-h) or historical values from an archive created by pmlogger (-a), and the reporting interval can be adjusted (-t).
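The header line "cumulative counter (converting to rate)" means the raw metric only ever increases, and the displayed value is the difference between two successive samples divided by the elapsed time. A minimal Python sketch of that conversion, using made-up sample values and ignoring counter wraparound:

```python
from typing import List, Tuple


def counter_to_rates(samples: List[Tuple[float, float]]) -> List[float]:
    """Convert (timestamp in seconds, cumulative counter) samples to per-second rates."""
    rates = []
    for (t0, v0), (t1, v1) in zip(samples, samples[1:]):
        elapsed = t1 - t0
        if elapsed <= 0:
            raise ValueError("timestamps must be strictly increasing")
        rates.append((v1 - v0) / elapsed)
    return rates


# Hypothetical cumulative Kbyte counter for one disk, sampled once per second.
samples = [(0.0, 1000.0), (1.0, 1000.0), (2.0, 7219.0), (3.0, 7219.0)]
print(counter_to_rates(samples))  # [0.0, 6219.0, 0.0]
```

Note that n samples yield n - 1 rates, since the first sample has nothing to be compared against.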

How to source metrics from eBPF?

There are multiple ways to write eBPF programs. The most popular eBPF front ends for monitoring programs are currently bcc (eBPF compiler collection), bpftrace and libbpf. PCP includes an agent for each front end, so you can use any of these front ends to gather metrics from eBPF programs.

bcc PMDA

bcc tools are written in Python (or Lua) with embedded eBPF/C code. The embedded eBPF/C code is compiled at runtime, so the Clang/LLVM compiler must be installed on the target machine. Additionally, kernel headers or the kheaders module must be present on the target machine. The bcc PMDA has been available since RHEL 7.6.

Let’s install the bcc PMDA and read our first eBPF-sourced metrics:

    $ sudo dnf install -y pcp-pmda-bcc
    $ cd /var/lib/pcp/pmdas/bcc && sudo ./Install
    $ pminfo bcc
    bcc.proc.net.udp.recv.bytes
    bcc.proc.net.udp.recv.calls
    bcc.proc.net.udp.send.bytes
    bcc.proc.net.udp.send.calls
    bcc.proc.net.tcp.recv.bytes
    bcc.proc.net.tcp.recv.calls
    bcc.proc.net.tcp.send.bytes
    bcc.proc.net.tcp.send.calls

The pminfo command lists all currently available metrics. In this case, the bcc PMDA already started one bcc tool, which measures per-process network bandwidth. Let’s enable more bcc tools: open /var/lib/pcp/pmdas/bcc/bcc.conf in your favorite editor and edit the modules option of the [pmda] section:

    [pmda]
    modules = netproc,runqlat,biolatency,tcptop,tcplife
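If you manage many hosts, you may prefer to script this edit. The following Python sketch assumes that bcc.conf can be read as plain INI by the standard configparser module, which may not hold for every version of the file; the SAMPLE_CONF text is a hypothetical excerpt, and enable_modules is a helper name invented for this example.

```python
import configparser
import io
from typing import List

# Hypothetical excerpt of bcc.conf, reduced to the option discussed above.
SAMPLE_CONF = """\
[pmda]
modules = netproc
"""


def enable_modules(conf_text: str, wanted: List[str]) -> str:
    """Return conf_text with the wanted modules appended to [pmda] modules."""
    parser = configparser.ConfigParser(interpolation=None)
    parser.read_string(conf_text)
    current = [m.strip() for m in parser.get("pmda", "modules").split(",") if m.strip()]
    for name in wanted:
        if name not in current:  # keep existing order, avoid duplicates
            current.append(name)
    parser.set("pmda", "modules", ",".join(current))
    out = io.StringIO()
    parser.write(out)
    return out.getvalue()


print(enable_modules(SAMPLE_CONF, ["runqlat", "biolatency", "tcptop", "tcplife"]))
```

Be aware that configparser drops comments when it writes a file back, so on a real system you would want to keep a backup of the original bcc.conf.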

Most bcc PMDA modules are based on the upstream bcc tools with small modifications (for example, instead of taking command-line arguments, each module is configured in the bcc.conf file). All supported bcc modules are installed in /var/lib/pcp/pmdas/bcc/modules (there are 28 modules available in the latest version of PCP).

After updating the configuration file, reinstall the bcc PMDA by executing cd /var/lib/pcp/pmdas/bcc && sudo ./Install, wait a few seconds until all bcc modules are compiled, and run pminfo bcc again. You’ll see a list of 27 different metrics. You can inspect them with pmval or pminfo, or visually with Grafana.

Figure 1: runqlat and biolatency tools visualized in Grafana

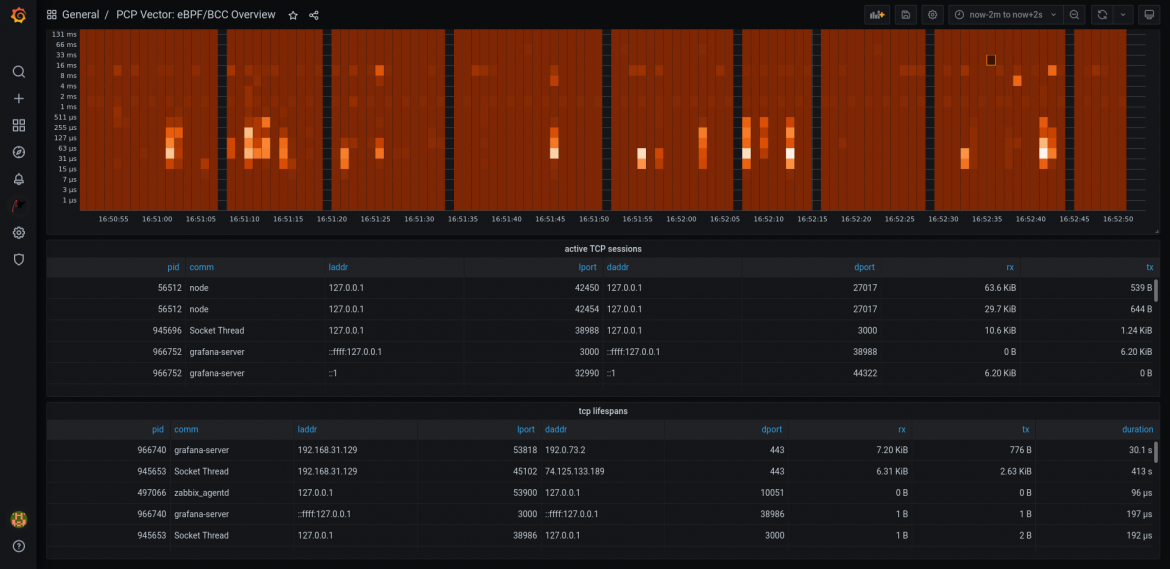

Figure 2: tcptop and tcplife tools visualized in Grafana

For instructions on how to set up Grafana, please refer to the blog post Visualizing System Performance, or consult the RHEL documentation at Setting up graphical representation of PCP metrics.

bpftrace PMDA

bpftrace is a high-level tracing language with a syntax influenced by awk and DTrace. It requires Clang/LLVM and kernel type information (the kernel-devel package, the kheaders module or BTF) to be available on the target machine. The bpftrace PMDA has been available since RHEL 8.2.

This PMDA supports two modes of operation. In the first mode, it starts all bpftrace scripts located in the /var/lib/pcp/pmdas/bpftrace/autostart directory. In the second mode, bpftrace scripts can be started on demand from Grafana with the bpftrace data source. The second mode is intended for development and test purposes only; it is disabled by default and not recommended in production environments.

The bpftrace PMDA starts each bpftrace script and exports all bpftrace variables of the script and additional status information about each bpftrace process as PCP metrics:

    $ sudo dnf install -y pcp-pmda-bpftrace
    $ cd /var/lib/pcp/pmdas/bpftrace && sudo ./Install
    $ pminfo bpftrace
    bpftrace.scripts.runqlat.data.usecs
    bpftrace.scripts.runqlat.data_bytes
    bpftrace.scripts.runqlat.code
    bpftrace.scripts.runqlat.probes
    bpftrace.scripts.runqlat.error
    bpftrace.scripts.runqlat.exit_code
    bpftrace.scripts.runqlat.pid
    bpftrace.scripts.runqlat.status
    bpftrace.scripts.biolatency.data.usecs
    bpftrace.scripts.biolatency.data_bytes
    bpftrace.scripts.biolatency.code
    bpftrace.scripts.biolatency.probes
    bpftrace.scripts.biolatency.error
    bpftrace.scripts.biolatency.exit_code
    bpftrace.scripts.biolatency.pid
    bpftrace.scripts.biolatency.status

    $ pminfo -dfT bpftrace.scripts.biolatency.data.usecs

    bpftrace.scripts.biolatency.data.usecs
        Data Type: 64-bit unsigned int  InDom: 151.100010 0x25c186aa
        Semantics: counter  Units: none
    Help:
    @usecs variable of bpftrace script
        inst [0 or "8-15"] value 1504
        inst [1 or "16-31"] value 58274
        inst [2 or "32-63"] value 64363
        inst [3 or "64-127"] value 220222
        inst [4 or "128-255"] value 87631
        inst [5 or "256-511"] value 31494
        inst [6 or "512-1023"] value 5101
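Each instance of this metric is one histogram bucket (block I/O latency in microseconds) and its value is the number of events counted in that bucket. As a rough illustration of what you can do with such data, the Python sketch below finds the bucket that contains a given percentile, using the values from the output above. The helper name percentile_bucket is invented for this example.

```python
from typing import Dict


def percentile_bucket(hist: Dict[str, int], fraction: float) -> str:
    """Return the label of the bucket that contains the given fraction of all events."""
    if not 0.0 < fraction <= 1.0:
        raise ValueError("fraction must be in (0, 1]")
    total = sum(hist.values())
    if total == 0:
        raise ValueError("histogram is empty")
    threshold = fraction * total
    running = 0
    for label, count in hist.items():  # dicts keep insertion order (lowest bucket first)
        running += count
        if running >= threshold:
            return label
    raise AssertionError("unreachable: the running total always reaches the threshold")


# Bucket counts copied from the pminfo output above.
usecs = {
    "8-15": 1504,
    "16-31": 58274,
    "32-63": 64363,
    "64-127": 220222,
    "128-255": 87631,
    "256-511": 31494,
    "512-1023": 5101,
}

print(percentile_bucket(usecs, 0.50))  # 64-127
print(percentile_bucket(usecs, 0.99))  # 512-1023
```

Because the metric has counter semantics, these values accumulate for as long as the script runs. To look at a specific time window, you would subtract the bucket counts of an earlier fetch from those of a later one before computing percentiles.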

Outlook: bpf PMDA

Another way of writing eBPF programs is using BPF CO-RE (Compile Once, Run Everywhere). BPF CO-RE requires BTF (BPF Type Format), libbpf and a recent kernel. By combining these technologies, eBPF applications can be compiled once and executed on target machines without any compiler or kernel headers installed.

The programs are written in C, making them a lightweight alternative to the bcc based tools. This PMDA is planned to be available in RHEL 9.

Conclusion

This post gave a quick overview of the current eBPF monitoring front ends and how to integrate metrics from them into PCP. By integrating eBPF metrics into the PCP ecosystem, Site Reliability Engineers can quickly access eBPF metrics from remote hosts, store historical metric values to disk (pmlogger), set alerts based on metric values (pmie) and visualize metrics in Grafana (grafana-pcp).

Further Reading: For detailed instructions on how to set up Grafana with Performance Co-Pilot, please refer to Visualizing System Performance. For general performance-related blog posts, follow our Performance Optimization Series.

Thanks to Marko Myllynen (bcc PMDA), Jason Koch (bpf PMDA), Brendan Gregg (bcc and bpftrace tools) and the PCP, eBPF and Linux communities.

About the author

Andreas Gerstmayr is an engineer in Red Hat's Platform Tools group, working on Performance Co-Pilot, Grafana plugins and related performance tools, integrations and visualizations.