In part one of our series about Distributed Compute Nodes (DCN), we described how the storage backends are deployed at each site and how to manage images at the edge. What about the OpenStack service (i.e. Cinder) that actually manages persistent block storage?

Red Hat OpenStack Platform has provided an Active/Passive (A/P) Highly Available (HA) Cinder volume service through Pacemaker running on the controllers.

Because DCN is a distributed architecture, the Cinder volume service also needs to be aligned with this model. By isolating sites to improve scalability and resilience, a dedicated set of Cinder volume services now runs at each site, and they only manage the local-to-site backend (e.g., the local Ceph).

This brings several benefits:

- Consistency with the rest of the architecture, which dedicates a storage backend and service to each site.

- Limited impact on other sites when an issue occurs with a specific Cinder volume service.

- Improved scalability and performance, because the load is distributed: each set of services manages only its local backend.

- More deployment flexibility. Any local-to-site change to the Cinder volume configuration only needs to be applied locally.

In terms of deployment, Pacemaker is used at the central site. However, it is not used at the edge, so Cinder’s newer Active/Active (A/A) HA deployment model is used there instead.

For this reason, any edge Cinder volume driver must have true A/A support, like the Ceph RADOS Block Device (RBD) driver. Active/Active volume services require a Distributed Lock Manager (DLM), which is provided by the etcd service deployed at each site.

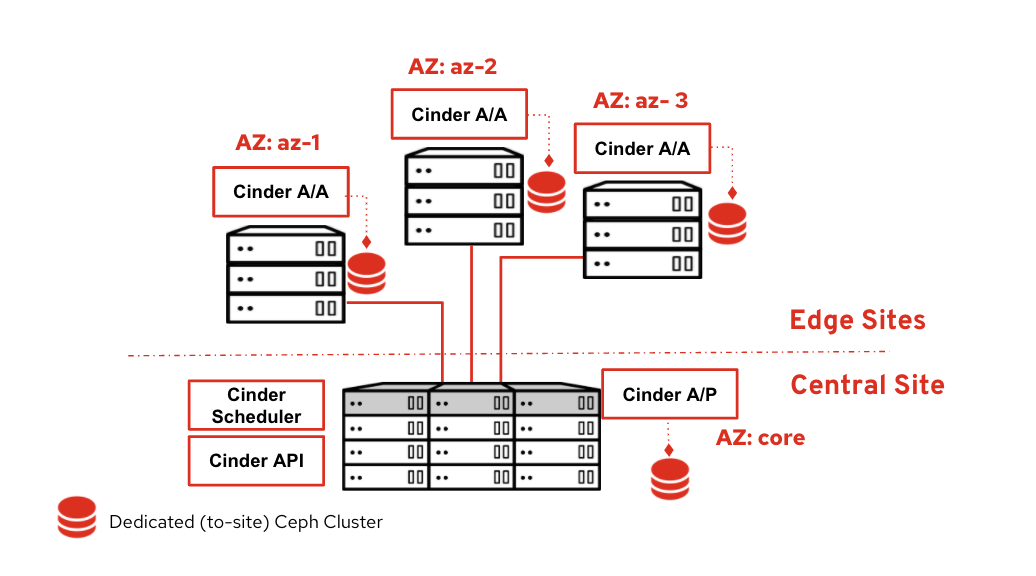

To summarize, when Cinder is deployed with DCN, the Cinder scheduler and API services run only at the central location, but a dedicated set of cinder-volume services runs at each site in its own Cinder Availability Zone (AZ).

The diagram above shows the central site running the Cinder API, scheduler and A/P volume service, as well as three edge sites that each run dedicated A/A Cinder volume services. Each site is isolated within its own AZ. The Cinder database remains at the central site.

Checking the Cinder volume status

We can check the status of the Cinder volume services with the CLI by running cinder service-list.

In the command output above, the lines in black and red represent the services running at the central site including the A/P Cinder volume service.

The lines in blue represent all of the DCN sites (in this example there are three sites, each with its own AZ). A variation of the above command is cinder --os-volume-api-version 3.11 service-list. When that microversion is specified, we get the same output but with an extra column for the cluster name.

To help manage Cinder A/A, new command line cluster options are available in microversion 3.7 and newer.

The cinder cluster-show output shows detailed information about a particular cluster. The cinder cluster-list output shows a quick health summary of all the clusters: whether they are up or down, the number of volume services that form each cluster, and how many of those services are down. That last count matters because a cluster is still reported as up even if two of its services are down.

Example output for cinder --os-volume-api-version 3.11 cluster-list --detail is shown below.

$ cinder --os-volume-api-version 3.11 cluster-list --detail
+-------------------+---------------+-------+---------+-----------+----------------+----------------------------+-----------------+----------------------------+----------------------------+
| Name              | Binary        | State | Status  | Num Hosts | Num Down Hosts | Last Heartbeat             | Disabled Reason | Created At                 | Updated at                 |
+-------------------+---------------+-------+---------+-----------+----------------+----------------------------+-----------------+----------------------------+----------------------------+
| dcn1@tripleo_ceph | cinder-volume | up    | enabled | 3         | 0              | 2020-12-17T16:12:21.000000 | -               | 2020-12-15T23:29:00.000000 | 2020-12-15T23:29:01.000000 |
| dcn2@tripleo_ceph | cinder-volume | up    | enabled | 3         | 0              | 2020-12-15T17:33:37.000000 | -               | 2020-12-16T00:16:11.000000 | 2020-12-16T00:16:12.000000 |
| dcn3@tripleo_ceph | cinder-volume | up    | enabled | 3         | 0              | 2020-12-16T19:30:26.000000 | -               | 2020-12-16T00:18:07.000000 | 2020-12-16T00:18:10.000000 |
+-------------------+---------------+-------+---------+-----------+----------------+----------------------------+-----------------+----------------------------+----------------------------+
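Because a cluster stays up while some of its services are down, it can be useful to flag clusters whose Num Down Hosts count is above zero. The following is a minimal sketch rather than part of the product tooling: the function name clusters_with_down_hosts is hypothetical, and it assumes the column order of the cluster-list output shown above (Name first, Num Hosts fifth, Num Down Hosts sixth).

```shell
# Hypothetical helper: read `cinder cluster-list --detail` table output on
# stdin and print every cluster that reports at least one down host.
# Assumes the column order shown above: Name is column 1, Num Hosts is
# column 5 and Num Down Hosts is column 6.
clusters_with_down_hosts() {
    awk -F'|' '
        # Data rows start with "|"; border rows start with "+".
        /^\|/ {
            name = $2; hosts = $6; down = $7
            gsub(/ /, "", name); gsub(/ /, "", hosts); gsub(/ /, "", down)
            # Skip the header row, whose "Num Down Hosts" cell is not numeric.
            if (down ~ /^[0-9]+$/ && down + 0 > 0)
                print name ": " down " of " hosts " hosts down"
        }
    '
}
```

It could be used as cinder --os-volume-api-version 3.11 cluster-list --detail | clusters_with_down_hosts, which prints nothing when every service in every cluster is up.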

Deployment overview with director

Now that we’ve described the overall design, how do we deploy this architecture in a flexible and scalable way?

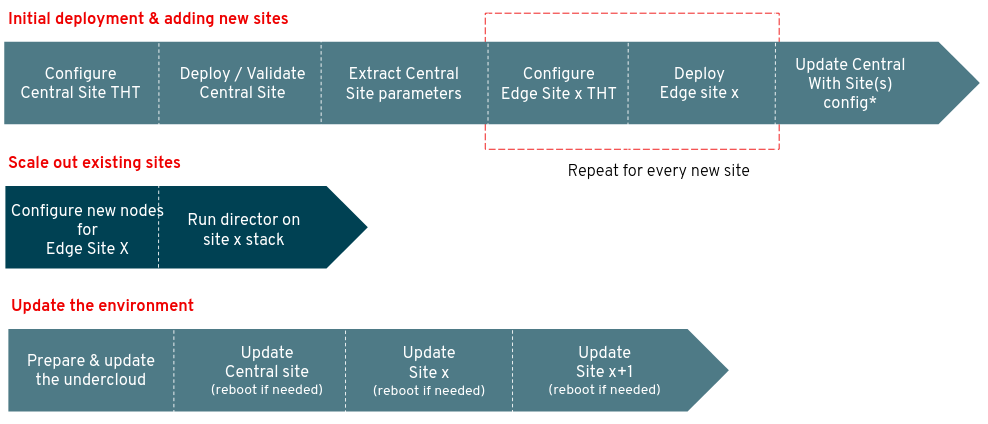

A single instance of Red Hat OpenStack Platform director running at the central location is able to deploy and manage the OpenStack and Ceph deployments at all sites.

In order to simplify management and scale, each physical site maps to a separate logical Heat stack using the director’s multi-stack feature.

The first stack is always for the central site, and each subsequent stack is for an edge site. After all of the stacks are deployed, the central stack is updated so that Glance can add the other sites as new stores (see part 1 of this blog series).

When the overall deployment needs software version updates, each site is updated individually. Workloads on the edge sites continue to run even if the central control plane becomes unavailable, so each site may be updated separately.

As seen in the darker sections of the flow chart above, scaling out a site by adding more compute or storage capacity doesn’t require updating the central site. Only that single edge site needs to be updated in order to have new nodes. Let’s look next at how edge sites are deployed.

In the diagram above, the central site is hosting the three OpenStack controllers, a dedicated Ceph cluster and an optional set of compute nodes.

The DCN site is running two types of hyper-converged compute nodes:

- The first type is called DistributedComputeHCI. In addition to hosting the services found on a standard Compute node, it hosts the Ceph Monitor, Manager and OSDs. It also hosts the services required for Cinder volume, including the DLM provided by etcd. Finally, this node type also hosts the Glance API service. Each edge site that has storage should have at least three nodes of this type so that Ceph can establish a quorum.

- The second type is called DistributedComputeHCIScaleOut, and it is like a traditional hyper-converged node (Nova and Ceph OSDs). To contact the local-to-site Glance API service, its Nova compute service is configured to connect to a local HAProxy service, which in turn proxies image requests to the Glance services running on the DistributedComputeHCI nodes.

If a DCN site needs more compute capacity but no storage, the DistributedComputeScaleOut role may be used instead; this non-HCI version does not contain any Ceph services but is otherwise the same.

Both of these node types end in “ScaleOut” because, after the three DistributedComputeHCI nodes are deployed, these other node types may be used to scale out.
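As a small illustration of that sizing rule, the sketch below prints one possible role split for an edge site with storage: the minimum of three DistributedComputeHCI nodes, with every further node assigned to DistributedComputeHCIScaleOut. The function name hci_role_counts is hypothetical, and a real site may well choose more than three DistributedComputeHCI nodes.

```shell
# Hypothetical helper: print one possible role split for an edge site with
# storage, given the total number of hyper-converged nodes wanted.
# $1 = total number of nodes at the site.
hci_role_counts() {
    # Ceph needs three DistributedComputeHCI nodes to establish a quorum.
    if [ "$1" -lt 3 ]; then
        echo "an edge site with storage needs at least 3 nodes" >&2
        return 1
    fi
    echo "DistributedComputeHCI=3"
    echo "DistributedComputeHCIScaleOut=$(( $1 - 3 ))"
}
```

For example, hci_role_counts 5 reports three DistributedComputeHCI nodes and two DistributedComputeHCIScaleOut nodes.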

End user experience

So far, we have only covered the infrastructure design and the operator experience.

What is the user experience once Red Hat OpenStack Platform DCN is deployed and ready to run workloads?

Let’s take a closer look at what it’s like to use the architecture. We’ll run some typical day-to-day user commands: uploading an image into multiple stores, spinning up a boot-from-volume workload, taking a snapshot, and then copying the snapshot to another site.

Import an image to DCN sites

First, we confirm that we have three separate stores.

glance stores-info
+----------+----------------------------------------------------------------------------------+
| Property | Value                                                                            |
+----------+----------------------------------------------------------------------------------+
| stores   | [{"default": "true", "id": "central", "description": "central rbd glance         |
|          | store"}, {"id": "http", "read-only": "true"}, {"id": "dcn0", "description":      |
|          | "dcn0 rbd glance store"}, {"id": "dcn1", "description": "dcn1 rbd glance         |
|          | store"}]                                                                         |
+----------+----------------------------------------------------------------------------------+

In the above example we can see that there’s the central store and two edge stores (dcn0 and dcn1). Next, we’ll import an image into all three stores by passing --stores central,dcn0,dcn1 (the CLI also accepts the --all-stores true option to import to all stores).

glance image-create-via-import --disk-format qcow2 --container-format bare --name cirros --uri http://download.cirros-cloud.net/0.4.0/cirros-0.4.0-x86_64-disk.img --import-method web-download --stores central,dcn0,dcn1

Glance will automatically convert the image to RAW. After the image is imported into Glance we can get its ID.

IMG_ID=$(openstack image show cirros -c id -f value)

We can then check which sites have a copy of our image by running a command like the following:

openstack image show $IMG_ID | grep properties

The properties field in the command output contains image metadata, including the stores field, which lists all three stores: central, dcn0, and dcn1.
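When scripting this check, the comma-separated stores value can be tested site by site. The sketch below is illustrative only: image_in_store and missing_stores are hypothetical helper names, and how the stores value is extracted from the command output is left to the reader, since the exact output format depends on the client version.

```shell
# Hypothetical helper: succeed if a comma-separated stores list (the format
# of the image "stores" field) contains the given store ID.
# $1 = stores list, e.g. central,dcn0,dcn1   $2 = store ID to look for.
image_in_store() {
    case ",$1," in
        *",$2,"*) return 0 ;;
        *)        return 1 ;;
    esac
}

# Hypothetical helper: print each expected store that is missing from the
# stores list. $1 = stores list, remaining arguments = expected store IDs.
missing_stores() {
    stores=$1
    shift
    for site in "$@"; do
        image_in_store "$stores" "$site" || echo "$site"
    done
}
```

For example, missing_stores "central,dcn0" central dcn0 dcn1 prints dcn1, showing that the image still needs to be copied to that site.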

Create a volume from an image and boot it at a DCN site

Using the same image ID from the previous example, create a volume from the image at the dcn0 site. To specify that we want the dcn0 site, we use the dcn0 availability zone.

openstack volume create --size 8 --availability-zone dcn0 pet-volume-dcn0 --image $IMG_ID

Once the volume is created, identify its ID.

VOL_ID=$(openstack volume show -f value -c id pet-volume-dcn0)
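Volume creation is asynchronous, so a script that boots from the volume right away should first wait for it to reach the available status. The following is a minimal sketch under that assumption; wait_for_volume is a hypothetical helper name, and the default of 30 attempts five seconds apart is an arbitrary choice.

```shell
# Hypothetical helper: poll a volume until it reaches the "available" status.
# $1 = volume name or ID
# $2 = number of attempts (optional, default 30)
# $3 = delay in seconds between attempts (optional, default 5)
wait_for_volume() {
    attempts=${2:-30}
    delay=${3:-5}
    while [ "$attempts" -gt 0 ]; do
        status=$(openstack volume show -f value -c status "$1")
        case "$status" in
            available) return 0 ;;
            error)     echo "volume $1 is in error state" >&2; return 1 ;;
        esac
        attempts=$((attempts - 1))
        sleep "$delay"
    done
    echo "timed out waiting for volume $1" >&2
    return 1
}
```

Calling wait_for_volume pet-volume-dcn0 before the server is created avoids a boot request against a volume that is still being populated from the image.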

Create a virtual machine using this volume as a root device by passing the ID. This example assumes a flavor, key, security group and network have already been created. Again we specify the dcn0 availability zone so that the instance boots at dcn0.

openstack server create --flavor tiny --key-name dcn0-key --network dcn0-network --security-group basic --availability-zone dcn0 --volume $VOL_ID pet-server-dcn0

From one of the DistributedComputeHCI nodes on the dcn0 site, we can execute an RBD command within the Ceph monitor container to directly query the volumes pool. This is for demonstration purposes only; a regular user would not be able to do so.

sudo podman exec ceph-mon-$HOSTNAME rbd --cluster dcn0 -p volumes ls -l
NAME                                         SIZE   PARENT                                            FMT  PROT  LOCK
volume-28c6fc32-047b-4306-ad2d-de2be02716b7  8 GiB  images/8083c7e7-32d8-4f7a-b1da-0ed7884f1076@snap  2          excl
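The PARENT column is what reveals a copy-on-write clone. As a rough sketch, the listing can be reduced to volume and parent pairs with awk; rbd_parents is a hypothetical helper name, and it assumes a parent is always written as pool/image@snapshot, as in the output above.

```shell
# Hypothetical helper: read `rbd ls -l` output on stdin and print each image
# name with its parent snapshot, or "-" when no parent is listed.
rbd_parents() {
    awk '
        NR == 1 { next }   # skip the NAME SIZE PARENT ... header line
        {
            parent = "-"
            # A parent is written as pool/image@snapshot, so look for a
            # field that has a "/" followed later by an "@".
            for (i = 2; i <= NF; i++)
                if (index($i, "/") > 0 && index($i, "@") > index($i, "/"))
                    parent = $i
            print $1, parent
        }
    '
}
```

Piping the rbd listing into rbd_parents prints one line per volume, which makes it easy to spot volumes that were not cloned from an image in the images pool.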

The above example shows that the volume is a copy-on-write (CoW) clone of an image in the images pool. The VM will therefore boot quickly, because only changed data needs to be copied; unchanged data only needs to be referenced.

Copy a snapshot of the instance to another site

Create a new image on the dcn0 site that contains a snapshot of the instance created in the previous section.

openstack server image create --name cirros-snapshot pet-server-dcn0

Get the image ID of the new snapshot.

IMAGE_ID=$(openstack image show cirros-snapshot -f value -c id)

Copy the image from the dcn0 site to the central site.

glance image-import $IMAGE_ID --stores central --import-method copy-image

The new image at the central site may now be copied to other sites, used to create new volumes, booted as new instances and snapshotted.

Red Hat OpenStack Platform DCN also supports encrypted volumes with Barbican, so that volumes can be encrypted at the edge with per-tenant keys while the secrets remain securely stored at the central site (Red Hat recommends using a Hardware Security Module).

If an image on a particular site is not needed anymore, we can delete it while keeping the other copies. To delete the snapshot taken on dcn0 while keeping its copy in central, we can use the following command:

glance stores-delete --store dcn0 $IMAGE_ID

Conclusion

This concludes our blog series on Red Hat OpenStack Platform Distributed Compute Nodes. You should now have a better understanding of the key edge design considerations and how RH-OSP implements them, as well as an overview of the deployment and day 2 operations.

Because what matters is the end user experience, we concluded with a typical set of steps users would take to manage their workloads at the edge.

For an additional walkthrough of design considerations and a best-practice approach to building an edge architecture, check out the recording from our webinar titled “Your checklist for a successful edge deployment.”

About the authors

Gregory Charot is a Principal Technical Product Manager at Red Hat covering OpenStack Storage, Ceph integration, Edge computing as well as OpenShift on OpenStack storage. His primary mission is to define product strategy and design features based on customers and market demands, as well as driving the overall productization to deliver production-ready solutions to the market. OpenSource and Linux-passionate since 1998, Charot worked as a production engineer and system architect for eight years before joining Red Hat—first as an Architect, then as a Field Product Manager prior to his current role as a Technical Product Manager for the Cloud Platform Business Unit.

John Fulton works for OpenStack Engineering at Red Hat and focuses on OpenStack and Ceph integration. He is currently working upstream on the OpenStack Wallaby release on cephadm integration with TripleO. He helps maintain TripleO support for the storage on the Edge use case and also works on deployment improvements for hyper-converged OpenStack/Ceph (i.e. running Nova Compute and Ceph OSD services on the same servers).
