I knew it. I’m surrounded by demos.

Wednesday’s container-centric breakout sessions got deeper into Red Hat® OpenShift, the specifics of working in the platform, and microservices. Practical use is always fun, too—and who doesn’t like impromptu, live demos?

Let’s code like a real developer

Grant Shipley, a director of OpenShift at Red Hat, came to his talk with just a laptop—and no presentation or script. He wanted to show everyone how he works daily within OpenShift to get things done. Much like yesterday, we got a little history lesson—the way things used to be with virtualization. If you weren’t using virtual machines (VMs), you were doing it wrong. Everyone was using virtualization, but a lot of the lessons learned along the way were learned the hard way. Of course, I’m talking about VM sprawl.

That’s a problem enterprises are still dealing with. VMs are out of control. They’re all over the place. Who even knows what half of their own VMs do, anyway? So, containers are the new VM—the hip technology that you have to use because it’s what’s hot. And the fear of container sprawl was addressed immediately when OpenShift was designed, making sure everyone could manage and control their containers from the beginning.

Grant logged in to his home server, running OpenShift on a VM. And when I said unscripted, I meant it. Nothing was prebuilt. No fields were autocompleted. It was all on the fly. In about a minute, he’d started a new project, chosen a Docker-based image to build, pulled that image from a container repository, and had it running. He then showed how easy it was to expose a route to the world, making his new app go live.

“This would have taken about 10 seconds if I wasn’t talking. It’s really easy.” - Grant Shipley

Grant then demonstrated the true power of OpenShift: running your app locally the same way it’ll run in production. He instantly spun up new container pods, so the app could be tested against a clustered environment. Gone are the days of going through testing and QA, only to see your app go up in smoke when it hits production on a cluster. With multiple servers, the pods get distributed across those servers too. And, with federation (coming soon in Kubernetes), you could have it clustered around the world.

Grant finished by demonstrating rollbacks. OpenShift saves each deployment, so you can roll back if something goes wrong. Even more impressive: you can do this without thinking about the clustering. Roll back to a previous version and watch as that version propagates through each pod in the cluster. But just because a container is running doesn’t mean you want to send traffic to it. OpenShift also has health checks (readiness and liveness probes) to make sure a container is working as expected before it’s exposed. Impressive.
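The two ideas in this part of the demo, saved deployments you can roll back to and pods that only get traffic once they pass a check, can be sketched as a toy model. This is not OpenShift's API: the `Deployment` and `Pod` classes, their fields, and the version strings are all hypothetical names made up for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Pod:
    version: str
    ready: bool = False  # flipped to True once a readiness check passes


@dataclass
class Deployment:
    """Toy model: remembers each rolled-out version so it can be restored."""

    replicas: int
    history: list[str] = field(default_factory=list)
    pods: list[Pod] = field(default_factory=list)

    def roll_out(self, version: str) -> None:
        self.history.append(version)
        self.pods = [Pod(version) for _ in range(self.replicas)]

    def roll_back(self) -> str:
        if len(self.history) < 2:
            raise RuntimeError("no earlier deployment to roll back to")
        self.history.pop()  # discard the version that went wrong
        previous = self.history[-1]
        self.pods = [Pod(previous) for _ in range(self.replicas)]
        return previous

    def routable_pods(self) -> list[Pod]:
        # A running pod only receives traffic once it reports ready.
        return [pod for pod in self.pods if pod.ready]


app = Deployment(replicas=3)
app.roll_out("v1")
app.roll_out("v2")
restored = app.roll_back()   # every pod is recreated on the previous version
app.pods[0].ready = True     # pretend one pod has passed its check
```

After the rollback all three pods run the earlier version, but only the one marked ready would be eligible for traffic.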

Avoiding downtime, blue/green, canaries...oh my!

The world has changed. Is that a meaningless phrase now? Maybe, but that doesn’t make it untrue. Look at the sheer amount of software around us. We have entire businesses, big businesses, built almost entirely on software. Who needs a lot of offices and real estate when you’re talking about companies like Uber, Airbnb, and Facebook? These businesses are (almost) entirely software, and they release that software often. Monthly. Weekly. Daily.

“Now every company is a software company.” - Forbes, 2011.

What about downtime? You know, those lovely maintenance messages you see on websites and apps, letting you know they won’t be available—or will be only partially available—during a given time. How do you update your software, introduce new features, get feedback on those updates, and make revisions weekly or daily without taking everything down constantly? The obvious answer is: Don’t take everything down. You need a zero-downtime deployment. Rafael Benevides, director of developer experience at Red Hat, spoke about how to approach, and do, just that, featuring a live demo of a blue/green deployment.

He deployed two containers running slightly different versions of the same app. Then, to control which version visitors to the app could see, he used a proxy to point to one container. We’ll call this the blue deployment. When the other version was deemed acceptable and ready to deploy, he commanded the proxy to flip over. The green deployment was live. This can be better; it’s too cumbersome to do manually, over and over. Let’s use OpenShift!

In OpenShift, Rafael showed how routes can be used to bind between the two versions. Now the switchover can be done with a mouse click. Deploy your new version, quickly. Something went wrong? Switch it back. Change old apps to new ones while avoiding downtime. Easy.
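The blue/green switch can be pictured with a small sketch: one public entry point that forwards to whichever version is currently active. The `Route` class below is a hypothetical stand-in, not OpenShift's route object, and the hostname and handler functions are invented for the example.

```python
class Route:
    """Toy stand-in for a route: one public hostname, one active backend."""

    def __init__(self, host, backends, active):
        if active not in backends:
            raise ValueError(f"unknown backend: {active}")
        self.host = host
        self.backends = backends  # name -> callable that handles a request
        self.active = active

    def switch_to(self, name):
        if name not in self.backends:
            raise ValueError(f"unknown backend: {name}")
        previous, self.active = self.active, name
        return previous  # keep this around to switch back if needed

    def handle(self, path):
        return self.backends[self.active](path)


def blue(path):
    return f"v1 served {path}"


def green(path):
    return f"v2 served {path}"


route = Route("app.example.com", {"blue": blue, "green": green}, active="blue")
before = route.handle("/")           # served by blue
fallback = route.switch_to("green")  # the flip: green is now live
after = route.handle("/")            # served by green
route.switch_to(fallback)            # something went wrong? switch it back
reverted = route.handle("/")
```

Both versions stay running the whole time. Only the pointer moves, which is why the switch and the switch back involve no downtime.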

From Rafael Benevides' presentation

Rafael moved on to rolling upgrades. The setup here is multiple pods of containers, all blue. So, we have a new version of the app, ready to be pushed live. Rafael added a health check (the same thing Grant talked about previously) and, as it passed the check, it replaced one pod at a time until we were running a full green deployment. The same outcome, but now as a clustered deployment—all done with a few mouse clicks. The great thing for these deployments is that you can automate all of this. As you update your apps, you can automatically kick off builds, publish those images to the registry, and roll the upgrades without babysitting the workflow.
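As a rough sketch of the rolling upgrade described here, the function below replaces pods one at a time and only retires an old pod once its replacement passes a health check. The function name, the list-of-strings representation of pods, and the `is_healthy` callback are assumptions for illustration, not how OpenShift implements it.

```python
def rolling_upgrade(pods, new_version, is_healthy):
    """Replace pods one at a time, stopping at the first failed health check.

    pods        -- list of version strings, one per running pod
    new_version -- version string to roll out
    is_healthy  -- callable(slot, version) -> bool, a stand-in health check
    Returns the pod versions after the rollout attempt.
    """
    result = list(pods)
    for slot in range(len(result)):
        if not is_healthy(slot, new_version):
            break  # leave the remaining pods on the old version
        result[slot] = new_version  # replacement is healthy, retire the old pod
    return result


# Every replacement passes its check: we end up fully green.
full = rolling_upgrade(["blue"] * 3, "green", lambda slot, version: True)

# The second replacement fails its check: the rollout stops partway.
partial = rolling_upgrade(["blue"] * 3, "green", lambda slot, version: slot < 1)
```

Stopping at the first failure is one possible policy. The point is that at every step some pods are still serving, so the app never goes fully offline.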

Rafael went on to demonstrate canary deployments, A/B testing, and the continuous integration/continuous delivery (CI/CD) pipeline capabilities of OpenShift, again with just a few mouse clicks. All of it was intuitive and simple to click through, and all of it can then be automated.
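The talk recap doesn't spell out how the canary demo was wired up, so the following is only a generic sketch of the idea behind a canary deployment: send a small, fixed share of requests to the new version and the rest to the stable one. The function name and the 10 percent figure are hypothetical, and the deterministic counter is a simplification (real routers typically balance by weight).

```python
from collections import Counter
from itertools import count


def make_canary_router(canary_percent):
    """Return a picker that sends canary_percent of every 100 calls to the canary."""
    if not 0 <= canary_percent <= 100:
        raise ValueError("canary_percent must be between 0 and 100")
    counter = count()

    def pick():
        # Within each block of 100 requests, the first canary_percent go to
        # the canary and the rest go to the stable version.
        return "canary" if next(counter) % 100 < canary_percent else "stable"

    return pick


pick = make_canary_router(10)
tally = Counter(pick() for _ in range(1000))
```

If the canary misbehaves, setting the percentage back to 0 removes it from rotation without touching the stable version.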

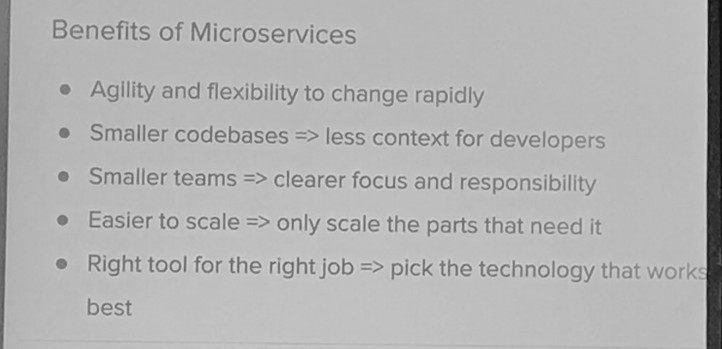

John Frizelle from Red Hat explains the challenges and benefits of microservices.

The truth about microservices

With all of this container talk, it’s easy to get lost in the technology itself while ignoring how you structure your app architecture on top of it. John Frizelle, a platform architect for Red Hat Mobile, cleared up some misunderstandings around microservices and explained that there’s a time and place for that architectural approach.

“The microservices buzz is much like teen dating. You do it because it’s exciting and everyone is doing it. You don’t really know what you are getting into, but want to do it anyways. By the time you realize what it’s all about, you’re already in a committed relationship.” - John Frizelle quoting Zohaib Khan, middleware domain architect, Red Hat

The thing about microservices is that one is easy to make. But a lot of them, all orchestrated and working together, can be extremely hard. John broke down the challenges of doing microservices the right way. Those fell into eight categories: building, testing, versioning, deployment, logging, monitoring, debugging, and connectivity. Whew! That’s, well, everything you need to know about building an app and considering new architectures, but microservices are unique in how these play out.

- Building: Identify dependencies between services. Be aware that completing one build might trigger several other builds.

- Testing: Do integration testing—mock vs. full system—as well as end-to-end testing, knowing that a failure in one part of the architecture could cause something a few hops away to fail.

- Versioning: When you update to new versions, keep in mind that you might break backward compatibility. You can build in conditional logic to handle this, but that gets unwieldy and nasty fast. You could, alternatively, stand up multiple live versions for different clients, but that can be more complex in maintenance and management.

- Deployment: This requires a huge amount of automation as it becomes too complex for human deployment. Think about how you’re going to roll services out and in what order.

- Logging: With distributed systems, you need centralized logs. Otherwise it’s impossible to manage.

- Monitoring: It’s critical to have a centralized view of the system to pinpoint the sources of problems.

- Debugging: Remote debugging isn’t an option and won’t work across dozens or hundreds of services. Unfortunately there’s no single answer to how to debug.

- Connectivity: Consider service discovery, whether centralized (etcd) or integrated (properties).
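The building and deployment points above both come down to knowing the dependency graph. Here is a minimal sketch, assuming a made-up set of four services: it works out which builds a change would trigger and an order in which services could be rolled out. The service names and helper functions are hypothetical. `graphlib.TopologicalSorter` is part of the Python standard library (3.9 and later).

```python
from graphlib import TopologicalSorter

# Hypothetical services: each key depends on the services in its set.
DEPENDS_ON = {
    "auth": set(),
    "catalog": {"auth"},
    "orders": {"auth", "catalog"},
    "frontend": {"orders"},
}


def rollout_order(depends_on):
    """Dependencies first, so each service starts after what it needs."""
    return list(TopologicalSorter(depends_on).static_order())


def triggered_builds(depends_on, changed):
    """Every service that directly or transitively depends on `changed`."""
    affected, frontier = set(), [changed]
    while frontier:
        current = frontier.pop()
        for service, deps in depends_on.items():
            if current in deps and service not in affected:
                affected.add(service)
                frontier.append(service)
    return affected


order = rollout_order(DEPENDS_ON)
rebuilds = triggered_builds(DEPENDS_ON, "auth")
```

In this example a change to `auth` triggers rebuilds of the other three services, which is the "one build might trigger several other builds" effect in miniature.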

With all that under consideration, microservices—though tough—are good for certain applications. Consider microservices as a solution to these challenges of monolithic apps:

- Size: If your monolith gets too big, it can be problematic. Your integrated development environment (IDE) might not load the app due to its sheer size. You need a huge number of resources locally to even work on it.

- Stack: If you have a monolith, it’s hard to switch to new tech. You already have all the tooling and process in place, and the initial jump is the hardest. Moving from one to two technology stacks doubles the overhead.

- Failure: If anything fails, everything fails. It’s all one system. The bigger the system, the more likely it’ll fail.

- Scaling: Scale everything for any contention in any part of the system. This results in excessive cost and resource consumption. That hot code path is ripe to be taken out and made into a microservice.

- Productivity: Developers can’t work independently. You have a single codebase, so you have a single deployment. With microservices, they can work independently without waiting.

The benefits of microservices ultimately outweigh their complexity. It’s mostly an up-front investment of time and a learning curve that you should see pay off over time. You gain agility and flexibility to change rapidly, smaller codebases, smaller teams with a clearer focus and responsibilities, and easier scaling, and you get to pick the right tool for the job.

The end is near

One day left in Red Hat Summit, and there’s still so much to learn and do. Stay tuned.

About the author

Red Hat is the world’s leading provider of enterprise open source software solutions, using a community-powered approach to deliver reliable and high-performing Linux, hybrid cloud, container, and Kubernetes technologies.

Red Hat helps customers integrate new and existing IT applications, develop cloud-native applications, standardize on our industry-leading operating system, and automate, secure, and manage complex environments. Award-winning support, training, and consulting services make Red Hat a trusted adviser to the Fortune 500. As a strategic partner to cloud providers, system integrators, application vendors, customers, and open source communities, Red Hat can help organizations prepare for the digital future.
