
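Version 2 of the Cost Management Metrics Operator, a certified container image that runs on Red Hat OpenShift, builds on existing capabilities, captures more historical data, and adds new optimization metrics. Combined with correctly specified container requests and limits, these new features can help you better understand the cost of your container environment.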

Building on existing capabilities

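Version 2 of the Cost Management Metrics Operator speeds up configuration by automatically creating its associated source in console.redhat.com. By default, metrics are sent every six hours, but the Operator can be configured to send them more often. More frequent metrics give faster insight into on-premises clusters, whose costs come from a cost model with defined rates (by contrast, clusters running on a cloud provider must wait for the cost export before costs can be distributed).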

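The Operator also supports a restricted network mode, which collects a payload inside the cluster that can then be uploaded from a network-connected system. The new metrics collected by this version of the Operator are available in the same payload through the restricted flow. This version can also disable metrics, which supports customers who only want access to specific features.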

Capturing existing metrics

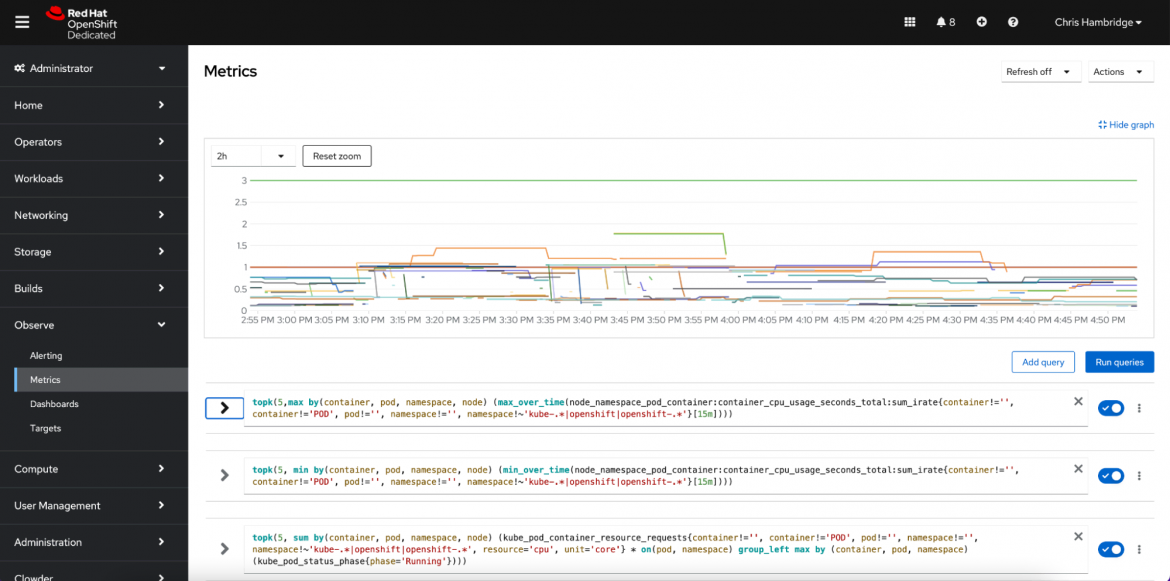

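The new version of the Cost Management Metrics Operator can now collect existing Prometheus metrics as soon as it is installed and configured on OpenShift, giving you access to more historical data. Historical data can help you: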

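- Better understand trends and patterns in the resources your cluster uses

- Improve forecasting and capacity planning

- Manage resources better and optimize costs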

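By default, the OpenShift Cluster Monitoring Operator retains metric data for 15 days, but the Cost Management Metrics Operator can collect up to 90 days of retained metric data. This is a significant improvement that can help you better understand how your cluster performs over time.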

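With more historical data, you can identify trends and patterns from the first day the Operator runs, which helps you make better decisions about your cluster's capacity needs and optimize costs accordingly.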

Optimization metrics

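In addition to the new historical data capability, version 2.0 of the Cost Management Metrics Operator calculates the minimum, maximum, average, and sum of CPU and memory usage, requests, and limits every 15 minutes. These new metrics can inform resource optimization recommendations, such as recommended container requests and limits, so that you can better manage cluster capacity.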
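To make the 15-minute statistics concrete, here is a minimal Python sketch of that kind of aggregation. It is not the Operator's implementation: the `WindowStats` type, the `summarize` function, and the sample values are hypothetical names and data chosen for illustration, and the sketch assumes samples arrive as plain (timestamp, value) pairs.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Dict, Iterable, List, Tuple


@dataclass(frozen=True)
class WindowStats:
    """Summary of one 15-minute window (hypothetical type for this sketch)."""
    minimum: float
    maximum: float
    average: float
    total: float


def window_start(ts: datetime) -> datetime:
    """Round a timestamp down to the start of its 15-minute window."""
    return ts.replace(minute=ts.minute - ts.minute % 15, second=0, microsecond=0)


def summarize(samples: Iterable[Tuple[datetime, float]]) -> Dict[datetime, WindowStats]:
    """Group samples into 15-minute windows and compute min, max, average, and sum."""
    buckets: Dict[datetime, List[float]] = {}
    for ts, value in samples:
        buckets.setdefault(window_start(ts), []).append(value)
    return {
        start: WindowStats(min(vals), max(vals), sum(vals) / len(vals), sum(vals))
        for start, vals in sorted(buckets.items())
    }


if __name__ == "__main__":
    # Made-up CPU usage samples (in cores) for a single container.
    cpu_usage = [
        (datetime(2023, 1, 1, 10, 0), 0.25),
        (datetime(2023, 1, 1, 10, 5), 0.5),
        (datetime(2023, 1, 1, 10, 10), 0.75),
        (datetime(2023, 1, 1, 10, 15), 1.0),
    ]
    for start, stats in summarize(cpu_usage).items():
        print(start.isoformat(), stats)
```

The same function could be applied to request and limit series as well as usage; comparing the resulting windows is what makes a sizing recommendation possible.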

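With optimized workloads, you can create a cost-saving environment, reduce excess workload capacity, and shrink the number of nodes in your OpenShift cluster.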

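Correctly specifying container requests and limits is a key part of managing cluster capacity. If you overestimate resource needs, you may pay for more resources than you actually use. If you underestimate them, you may see degraded performance or even out-of-memory errors. By making better-informed resource allocation decisions, you can run your OpenShift cluster more efficiently and cut unnecessary spending.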
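The trade-off between overestimating and underestimating can be sketched as a simple check against observed usage. The example below is illustrative only and does not reproduce any recommendation logic from cost management: the `Sizing` type, the `assess` function, and the 20% headroom threshold are assumptions made for this sketch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Sizing:
    """Configured request/limit and observed usage for one resource of one container."""
    request: float
    limit: float
    avg_usage: float
    max_usage: float


def assess(s: Sizing, headroom: float = 0.2) -> str:
    """Classify a container's sizing using a hypothetical headroom rule.

    - Peak usage reaching the limit suggests the container is starved
      (CPU throttling, or out-of-memory kills for memory).
    - A request well above peak usage plus headroom suggests reserved,
      paid-for capacity that is never used.
    """
    if s.max_usage >= s.limit:
        return "under-provisioned"
    if s.request > s.max_usage * (1 + headroom):
        return "over-provisioned"
    return "ok"


if __name__ == "__main__":
    # Made-up values: CPU in cores.
    print(assess(Sizing(request=2.0, limit=4.0, avg_usage=0.3, max_usage=0.5)))
    print(assess(Sizing(request=1.0, limit=2.0, avg_usage=0.7, max_usage=0.9)))
```

A real policy would likely look at usage over a longer period and treat CPU and memory differently, since exceeding a memory limit terminates the container while exceeding a CPU limit only throttles it.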

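Version 2.0 of the Cost Management Metrics Operator collects the data you need to make informed decisions. The new metrics it collects improve your understanding of the resources you use and help you adjust your container requests and limits accordingly.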

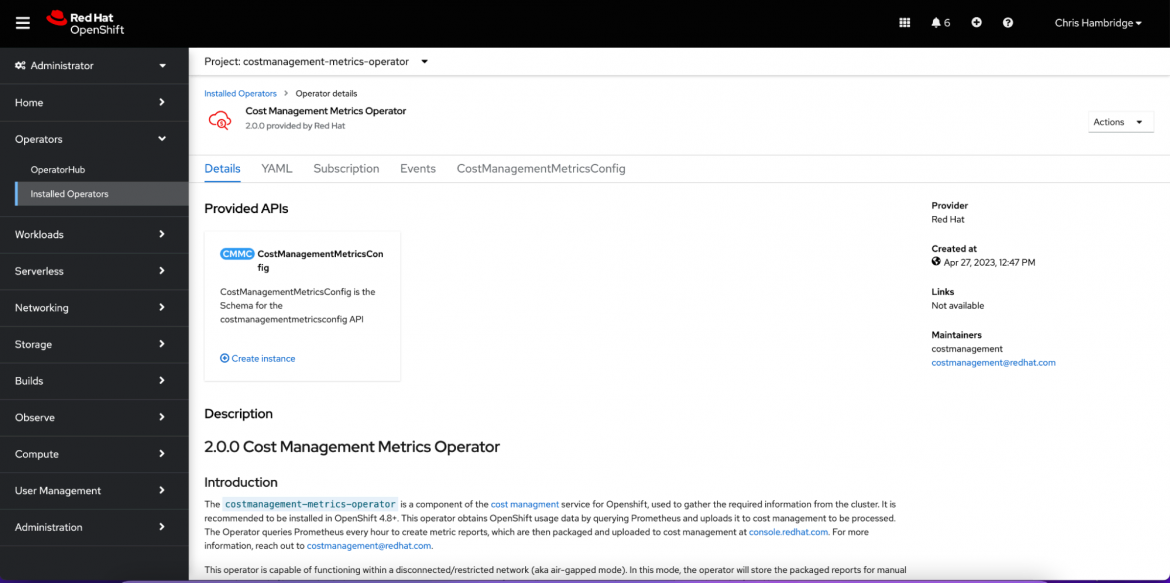

Install the Operator

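Version 2.0 of the Cost Management Metrics Operator includes a range of exciting new features that help you optimize your OpenShift cluster and save money in the process.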

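Because it collects more historical data and provides resource optimization recommendations, this release is a valuable tool for any organization that uses OpenShift. By correctly specifying container requests and limits, you can cut unnecessary spending, run your clusters more efficiently, and have a positive impact on the environment.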

About the author

Chris Hambridge started his software engineering career in 2006 and joined Red Hat in 2017. He has a master's degree in Computer Science from the Georgia Institute of Technology and is passionate about cloud-native development and DevOps, with a focus on pragmatic solutions to everyday problems.