The SSH server is a critical, ubiquitous service that provides one of the main access points into Red Hat Enterprise Linux (RHEL) for management purposes. Over my career as a system administrator, I cannot think of any RHEL systems I have worked on that were not running it.

RHEL 8.4 adds new roles to manage the SSH server and SSH client configurations: the sshd and ssh roles, respectively. This post will walk you through an example of how to use the sshd RHEL System Role to manage the SSH server configuration. In the next post, you’ll see how to adapt to different real-world scenarios where servers need a slightly different configuration.

Why automate SSH server configuration?

Having a properly configured and secured SSH server is a key component of hardening a RHEL system. This is why security benchmarks such as the CIS benchmark and DISA STIG specify SSH server configuration options that need to be set. This makes the SSH server a great candidate for automation.

While it is possible to manually configure the SSH server, doing so is time consuming and prone to error. Additionally, if you manually configure SSH, there is no guarantee it will stay properly configured (e.g., when a fellow system administrator is troubleshooting and makes a few “temporary” changes to the configuration that end up being permanent).

Red Hat introduced RHEL System Roles in RHEL 7. These are Ansible roles and collections that provide a stable and consistent interface to manage and automate multiple releases of RHEL. RHEL System Roles are a feature included in RHEL subscriptions and are supported by Red Hat.

Prerequisites and considerations

For information on installing the RHEL System Roles, a list of available roles, and other general information, refer to the articles Red Hat Enterprise Linux (RHEL) System Roles and Introduction to RHEL System Roles.

Care must be taken when configuring SSH as a misconfiguration could potentially lock you out of the system.

For example, consider the AllowGroups setting, which specifies a list of groups; a user must be a member of at least one of them to log in. A misconfiguration of a setting such as this can easily prevent you, or all users, from accessing a system over SSH. There are numerous other settings that could potentially result in similar lockouts.
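To make the risk concrete, here is a minimal sketch of setting AllowGroups through the role's sshd variable (the same variable used in the playbook later in this post). The group names wheel and sshusers are assumptions chosen for illustration, not values from my environment.

```yaml
# Sketch only: restrict SSH logins to members of two groups.
# "wheel" and "sshusers" are assumed group names; substitute your own.
- hosts: all
  become: true
  roles:
    - role: redhat.rhel_system_roles.sshd
  vars:
    sshd:
      # The account Ansible connects as must belong to one of these groups,
      # otherwise the next playbook run is locked out along with everyone else.
      AllowGroups: wheel sshusers
```

Before applying something like this, it is worth confirming on each host that the account you log in with, and the account Ansible uses, are members of at least one of the listed groups.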

For more information on configuring the SSH server, refer to the Using secure communications between two systems with OpenSSH documentation.

It is recommended to thoroughly test the SSH configuration in a non-production environment before deploying to production. It is also recommended to test it on a server you have an alternative way to log in to, for example console access or access through the Web Console (which provides a terminal).

Like other RHEL System Roles, Ansible variables determine the behavior of the sshd role. While I will cover an overview of some of the important role variables, it is recommended that you review the README.md file for the role, which is available in the /usr/share/doc/rhel-system-roles/sshd/ directory after installing the rhel-system-roles package. This file describes the other variables that can be used with the role, along with additional details about how the role behaves. If you have a Red Hat Ansible Automation Platform subscription, the role and documentation are available in Ansible Automation Hub.

By default, the sshd RHEL System Role will generate an sshd_config that matches the operating system’s default configuration. Additional variables provided to the role can provide customization. Alternatively, the sshd_skip_defaults variable can be set to true, which will result in the role not using the operating system’s default configuration.

The role, by default, will also automatically make a backup of the existing sshd_config configuration file before creating the new configuration. These backup files are placed under the /etc/ssh directory and contain a date/time stamp in the filename (for example: sshd_config.513347.2021-06-09@20:04:55~). This backup functionality can be disabled by setting the sshd_backup role variable to false.
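The two toggles described above can be sketched as play variables. This is an illustration rather than a recommended configuration: the options listed under sshd are taken from the default file shown later in this post, and a real configuration built with sshd_skip_defaults would need to list every option you rely on.

```yaml
# Sketch only: turn off both default behaviors described above.
- hosts: all
  become: true
  roles:
    - role: redhat.rhel_system_roles.sshd
  vars:
    sshd_skip_defaults: true   # do not start from the OS default configuration
    sshd_backup: false         # do not keep a timestamped copy of the old file
    sshd:
      # With the defaults skipped, only what is listed here ends up in the file.
      # These entries are illustrative, not a complete configuration.
      UsePAM: yes
      AuthorizedKeysFile: .ssh/authorized_keys
      Subsystem: sftp /usr/libexec/openssh/sftp-server
      PermitRootLogin: no
```

Leaving sshd_backup at its default is the safer choice while you are still testing, since the backup gives you a known-good file to restore from the console.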

Example environment overview

In this example, I have installed the RHEL System Roles package on a RHEL 8 server that will act as my Ansible control node. I also have two RHEL 7 servers and two RHEL 8 servers as managed nodes. I would like to use the sshd role to configure the SSH server on these nodes.

I created an ansible user account on the control node and used ssh-keygen to generate an SSH private/public key pair for the account. On each of the other four servers, I created an ansible user account, configured the account with sudo access to root, and copied the public key from the control node’s ansible account to the ansible user’s authorized_keys file.
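I did these steps by hand, but they could also be scripted. The following is a minimal sketch, not what I actually ran: it assumes you run it as an account that already has root access on the managed nodes, that the public key was written to /home/ansible/.ssh/id_rsa.pub on the control node, and that passwordless sudo is acceptable. The file name /etc/sudoers.d/ansible is also an assumption.

```yaml
# Sketch only: bootstrap the "ansible" account on the managed nodes.
- hosts: all
  become: true
  tasks:
    - name: Create the ansible user account
      user:
        name: ansible
        state: present

    - name: Authorize the control node's public key for the ansible user
      authorized_key:
        user: ansible
        state: present
        # Assumed key path; ssh-keygen may have written a different file name.
        key: "{{ lookup('file', '/home/ansible/.ssh/id_rsa.pub') }}"

    - name: Grant the ansible user sudo access to root
      copy:
        dest: /etc/sudoers.d/ansible
        # NOPASSWD is an assumption; adjust to your own sudo policy.
        content: "ansible ALL=(ALL) NOPASSWD: ALL\n"
        owner: root
        group: root
        mode: "0440"
        # Reject the file if it does not parse, so sudo is never left broken.
        validate: /usr/sbin/visudo -cf %s
```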

I would also like to manage the control node’s SSH server configuration, so I configured the ansible account on the control node to have sudo access to root as well.

My desired SSH server configuration for these five servers is:

- The /etc/ssh/sshd_config file has the owner/group set to root/root, and the 0600 file permissions
- The following options are set in the sshd_config file:
  - X11Forwarding false
  - MaxAuthTries 4
  - ClientAliveInterval 300
  - LoginGraceTime 60
  - AllowTcpForwarding no
  - PermitRootLogin no
  - MaxStartups 10:30:60

Setting up the inventory

The Ansible inventory file specifies a list of servers that Ansible can interact with, as well as optionally defining Ansible variables for the hosts. There are other capabilities of inventory files, such as grouping servers, specifying ranges of hosts, etc. For a complete overview of the Ansible inventory file, refer to the Ansible inventory documentation.

Note that in this post, I will intentionally keep the inventory very simple and place the role variables directly in the playbook. It is generally recommended, however, to store the variables outside of the playbook to prevent the need to edit the playbook whenever variables need to be changed or updated.

In the next post I will cover how to store the variables within the group_vars and host_vars inventory directories and how to handle situations where some servers need to have configuration that deviates from the others (for example, the requirement to have just one server enable PermitRootLogin).

I’ll create a directory structure for my playbook and the inventory by running:

$ mkdir -p sshd_playbook/inventory

Then I’ll create my inventory file at sshd_playbook/inventory/inventory.yml, with the following contents:

    all:
      hosts:
        rhel8-server1.example.com:
        rhel8-server2.example.com:
        rhel7-server1.example.com:
        rhel7-server2.example.com:
        controlnode.example.com:
          ansible_connection: local

This simple inventory defines the five hosts in the all group and sets the ansible_connection variable to local for controlnode.example.com.

Ansible supports multiple ways to connect to nodes, controlled by the ansible_connection variable. I’m setting this to local because I would like to manage the sshd_config file on my control node and, by default, Ansible tries to connect over SSH to each host. The ansible_connection set to local instructs Ansible to directly run the commands on the controlnode.example.com host, which is appropriate as it is the host I’ll be running the playbook from.

Creating and running the playbook

Now that the inventory has been configured, the next step is to create the playbook. As previously mentioned, I will add the role variables into the playbook for the sake of simplicity. This is not generally recommended, however, as it would require the playbook be edited anytime one of the variables needs to be changed or updated. In part two of this post, I will cover how to define these variables in the inventory rather than the playbook.

I’ll create the sshd_playbook/sshd.yml file with the following contents:

    - hosts: all
      become: true
      roles:
        - role: redhat.rhel_system_roles.sshd
      vars:
        sshd_config_owner: root
        sshd_config_group: root
        sshd_config_mode: 0600
        sshd:
          X11Forwarding: false
          MaxAuthTries: 4
          ClientAliveInterval: 300
          LoginGraceTime: 60
          AllowTcpForwarding: no
          PermitRootLogin: no
          MaxStartups: 10:30:60

At this point I’m ready to run the playbook, which will apply our desired SSH server configuration on the five hosts.

I’ll change directory into the sshd_playbook directory and use the ansible-playbook command to run the playbook, specifying the playbook name and inventory file that should be used:

    $ cd sshd_playbook
    $ ansible-playbook sshd.yml -i inventory/inventory.yml

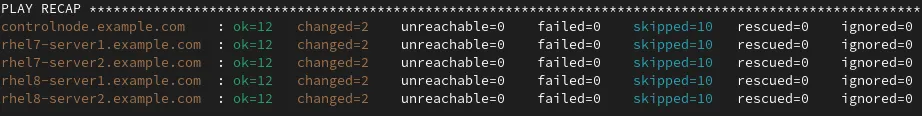

The playbook runs and at the end a summary is shown:

I can quickly validate the result by displaying the contents of the sshd_config file on one of the hosts:


    $ ssh rhel8-server1 sudo cat /etc/ssh/sshd_config
    # Ansible managed
    HostKey /etc/ssh/ssh_host_rsa_key
    HostKey /etc/ssh/ssh_host_ecdsa_key
    HostKey /etc/ssh/ssh_host_ed25519_key
    AcceptEnv LANG LC_CTYPE LC_NUMERIC LC_TIME LC_COLLATE LC_MONETARY LC_MESSAGES
    AcceptEnv LC_PAPER LC_NAME LC_ADDRESS LC_TELEPHONE LC_MEASUREMENT
    AcceptEnv LC_IDENTIFICATION LC_ALL LANGUAGE
    AcceptEnv XMODIFIERS
    AllowTcpForwarding no
    AuthorizedKeysFile .ssh/authorized_keys
    ChallengeResponseAuthentication no
    ClientAliveInterval 300
    GSSAPIAuthentication yes
    GSSAPICleanupCredentials no
    LoginGraceTime 60
    MaxAuthTries 4
    MaxStartups 10:30:60
    PasswordAuthentication yes
    PermitRootLogin no
    PrintMotd no
    Subsystem sftp /usr/libexec/openssh/sftp-server
    SyslogFacility AUTHPRIV
    UsePAM yes
    X11Forwarding no

The seven configuration options that I specified in the playbook were properly configured. Additionally, there is a comment at the top of the file that indicates that the file is Ansible managed.
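Reading the file on one host works for a spot check. To check every host, the seven options could be asserted in a small playbook. This is a minimal sketch that assumes sshd is installed at /usr/sbin/sshd and relies on sshd -T, which prints the effective configuration with lowercase keywords; the register name sshd_effective is arbitrary.

```yaml
# Sketch only: confirm the effective sshd settings on every host.
- hosts: all
  become: true
  tasks:
    - name: Dump the effective sshd configuration
      command: /usr/sbin/sshd -T
      register: sshd_effective
      changed_when: false   # read-only check, never reports a change

    - name: Confirm the seven options set by the playbook
      assert:
        that:
          - "'x11forwarding no' in sshd_effective.stdout_lines"
          - "'maxauthtries 4' in sshd_effective.stdout_lines"
          - "'clientaliveinterval 300' in sshd_effective.stdout_lines"
          - "'logingracetime 60' in sshd_effective.stdout_lines"
          - "'allowtcpforwarding no' in sshd_effective.stdout_lines"
          - "'permitrootlogin no' in sshd_effective.stdout_lines"
          - "'maxstartups 10:30:60' in sshd_effective.stdout_lines"
```

Because sshd -T reports what the daemon would actually use, this also catches cases where the file looks right but the option is not taking effect as expected.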

Rerunning the playbook

At this point, the five servers have the desired SSH server configuration; however, since configuration files have a tendency to drift over time, it makes sense to routinely rerun our sshd RHEL System Role to ensure that the servers have the correct configuration.

The example playbook we used is idempotent. This means we can run it over and over again and as long as nothing has changed the sshd_config file, the playbook won’t make any changes. If there have been changes to the sshd_config file, Ansible will detect this and change the file back to what is specified by the role variables.

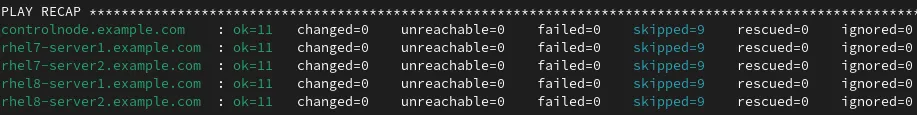

I’ll try rerunning the playbook, which results in a PLAY RECAP that looks like this:

This time the PLAY RECAP looks different because it reports changed=0 for all of the servers. This means Ansible checked the sshd_config file and it matched what our role variables specified, so no changes were made.

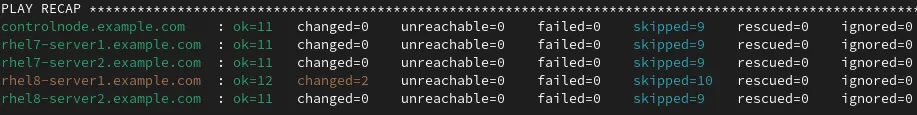

Next, I’ll log in to rhel8-server1 and manually make a change to the sshd_config file (changing LoginGraceTime from 60 to 90).

I’ll then run the playbook again:

Note that Ansible detected my manual changes to the configuration on rhel8-server1 and returned the configuration back to what was specified by our role variables. Ansible notes changed=2 on rhel8-server1 because it had to re-create the sshd_config file and restart the sshd daemon. The other servers report changed=0 because their configuration was correct and didn’t require any changes.

Ansible Tower provides a lot of advanced functionality, including the ability to run playbooks on a routine schedule.
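If Ansible Tower is not available, one lightweight alternative (a substitute I am suggesting here, not part of the setup described above) is a cron job on the control node. The sketch below assumes the sshd_playbook directory lives in /home/ansible and that the play is run as the ansible user, so the entry lands in that user's crontab. The schedule is arbitrary.

```yaml
# Sketch only: schedule a nightly rerun from the control node with cron.
- hosts: controlnode.example.com
  tasks:
    - name: Rerun the sshd playbook every night at 02:00
      cron:
        name: rerun sshd playbook
        minute: "0"
        hour: "2"
        # Assumed location of the sshd_playbook directory created earlier.
        job: "cd /home/ansible/sshd_playbook && ansible-playbook sshd.yml -i inventory/inventory.yml"
```

Unlike Tower, cron gives you no job history or notifications, so you would need to capture the playbook output yourself if you want a record of which hosts had drifted.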

Conclusion

In today’s environment, system administrators are constantly being asked to do more with less. RHEL System Roles, which are included in RHEL subscriptions, can help you manage your RHEL servers in a more efficient, consistent and automated manner. You can review the list of available RHEL System Roles to get started.

For more information on Ansible, review the list of available e-books. Take RHEL System Roles for a quick test drive in our hands-on interactive lab environment that walks you through a common RHEL System Roles use case.

About the author

Brian Smith is a product manager at Red Hat focused on RHEL automation and management. He has been at Red Hat since 2018, previously working with public sector customers as a technical account manager (TAM).