In this post:

- Overview of Podman and Amazon Elastic File System (EFS).
- Walkthrough of setting up a photo gallery on a RHEL EC2 instance.
- Steps to make storage and compute highly available.

Podman is a daemonless container engine for developing, managing, and running OCI containers on your Red Hat Enterprise Linux (RHEL) system. In this post, I will create a photo gallery running in a Podman container on a RHEL Amazon Elastic Compute Cloud (EC2) instance. The photos displayed by the website are stored on an Amazon Elastic File System (EFS) file system that is mounted by EC2 instances across multiple Availability Zones (AZs).

Amazon Elastic File System (Amazon EFS) provides a serverless, set-and-forget elastic file system that can be used with AWS cloud services and on-premises resources. It’s built to scale on demand to petabytes without disrupting applications. Amazon EFS helps eliminate the need to provision and manage capacity to accommodate growth.

Setting up the photo gallery

For the photo gallery, we will use the linuxserver/photoshow container image.

Here’s an overview of what we will see in each step:

- Step 1: The Podman container runs on a RHEL EC2 instance and stores the images on the instance's local file system (no high availability [HA]).
- Step 2: We repeat Step 1, but this time we store the images on an EFS file system (storage-level HA).
- Step 3: We add a second EC2 instance in another AZ and put an Application Load Balancer in front of both instances (compute-level HA).
- Step 4: We handle scaling by adding an Auto Scaling group (scaling).

Step 1: Running the photo gallery in a local file system

Let's start by downloading the photoshow image. Note that this image isn't supported, but we're using it as an example to demonstrate how to back a containerized application with Amazon EFS.

[ec2-user@ip-172-31-58-150 ~]$ sudo podman pull linuxserver/photoshow
Completed short name "linuxserver/photoshow" with unqualified-search registries (origin: /etc/containers/registries.conf)
Trying to pull registry.access.redhat.com/linuxserver/photoshow:latest...
name unknown: Repo not found
Trying to pull registry.redhat.io/linuxserver/photoshow:latest...
unable to retrieve auth token: invalid username/password: unauthorized: Please login to the Red Hat Registry using your Customer Portal credentials. Further instructions can be found here: https://access.redhat.com/RegistryAuthentication
Trying to pull docker.io/linuxserver/photoshow:latest...
Getting image source signatures
Copying blob 81dcd2d513e2 done
Copying blob e2ffab35716e done
Copying blob 806049dd093a done
Copying blob 4f0deb8c1753 done
Copying blob 3e4cde9a3e41 done
Copying blob b9792afffe6d done
Copying blob ab52860dc901 done
Copying blob 189097d34002 done
Copying blob cf3b05e1df27 done
Copying blob 060360320f5e done
Copying config 8ccdbed1b3 done
Writing manifest to image destination
Storing signatures
8ccdbed1b323d7002a20520219b60f7e010491b365655fa58da5b412221db09f
[ec2-user@ip-172-31-58-150 ~]$ sudo podman images
REPOSITORY                     TAG     IMAGE ID      CREATED     SIZE
ghcr.io/linuxserver/photoshow  latest  eb0ad054517e  5 days ago  222 MB

Next, make sure that no containers are already running on the EC2 instance.

[ec2-user@ip-172-31-58-150 ~]$ sudo podman ps -a
CONTAINER ID  IMAGE  COMMAND  CREATED  STATUS  PORTS  NAMES

We start by creating a photo directory on the host machine, with config, pictures, and thumb subdirectories inside it.

[ec2-user@ip-172-31-58-150 ~]$ pwd
/home/ec2-user
[ec2-user@ip-172-31-58-150 ~]$ mkdir photo
[ec2-user@ip-172-31-58-150 ~]$ mkdir -p photo/config
[ec2-user@ip-172-31-58-150 ~]$ mkdir -p photo/pictures
[ec2-user@ip-172-31-58-150 ~]$ mkdir -p photo/thumb
[ec2-user@ip-172-31-58-150 ~]$ ls -l photo/
total 8
drwxrwxr-x. 7 ec2-user ec2-user   64 May 20 00:16 config
drwxrwxr-x. 2 ec2-user ec2-user 4096 May 20 03:12 pictures
drwxrwxr-x. 4 ec2-user ec2-user   32 May 20 00:16 thumb
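Bash brace expansion can collapse the three mkdir -p calls above into one. The helper below is a small sketch, not part of the original walkthrough. The function name make_photo_dirs is hypothetical, and the default base directory assumes the ec2-user home directory used in this post.

```bash
#!/usr/bin/env bash
# Sketch: create the host directories that the photoshow container bind-mounts.
# Brace expansion turns one mkdir call into base/config, base/pictures, base/thumb.
make_photo_dirs() {
  local base="${1:-$HOME/photo}"   # /home/ec2-user/photo for ec2-user, as in this post
  mkdir -p "$base"/{config,pictures,thumb}
}
```

Source the file and run make_photo_dirs to get the same layout as the session above. Because of -p, rerunning it is harmless.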

Now we run the container with podman run. Each -v option maps a host directory (the source) to the path where it should be mounted inside the container (the destination).

[ec2-user@ip-172-31-58-150 ~]$ sudo podman run -d \
  --name=photoshow \
  -e PUID=1000 -e PGID=1000 -e TZ=Europe/London \
  -p 8080:80 \
  -v /home/ec2-user/photo/config:/config:Z \
  -v /home/ec2-user/photo/pictures:/Pictures:Z \
  -v /home/ec2-user/photo/thumb:/Thumbs:Z \
  --restart unless-stopped \
  linuxserver/photoshow

The :Z option tells Podman to relabel each host directory with a private, unshared SELinux label, so only this container can access it.
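To rerun this step, you can wrap the podman run command in a small shell function. This is a sketch, not the author's script. run_photoshow is a hypothetical name. Defaulting to the fully qualified image name docker.io/linuxserver/photoshow:latest is also an assumption: docker.io is where the pull above eventually succeeded, and a full name avoids the short-name search across the unqualified-search registries.

```bash
#!/usr/bin/env bash
# Sketch: start the photoshow container with the three bind mounts from Step 1.
# BASE holds config/, pictures/ and thumb/; IMAGE is fully qualified (assumption)
# so Podman does not walk the unqualified-search registries.
run_photoshow() {
  local base="${1:-/home/ec2-user/photo}"
  local image="${2:-docker.io/linuxserver/photoshow:latest}"
  # :Z gives each host directory a private SELinux label for this container.
  sudo podman run -d \
    --name=photoshow \
    -e PUID=1000 -e PGID=1000 -e TZ=Europe/London \
    -p 8080:80 \
    -v "$base/config:/config:Z" \
    -v "$base/pictures:/Pictures:Z" \
    -v "$base/thumb:/Thumbs:Z" \
    --restart unless-stopped \
    "$image"
}
```

Call run_photoshow with no arguments to reproduce the command above, or pass a different base directory. The Step 2 command in this post uses --mount without :Z, so this helper is not meant as a drop-in for the EFS variant.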

[ec2-user@ip-172-31-58-150 ~]$ sudo podman ps -a
CONTAINER ID  IMAGE                  COMMAND  CREATED        STATUS            PORTS                 NAMES
0d011645d26d  linuxserver/photoshow           9 minutes ago  Up 9 minutes ago  0.0.0.0:8080->80/tcp  photoshow

We then use wget to download a few images into the ~/photo/pictures directory on the host EC2 instance. That directory is mounted at /Pictures inside the container.

[ec2-user@ip-172-31-58-150 ~]$ ls photo/pictures/
Argentina_WC.png  FIFA_World_Cup.jpg  Germany_WC.png  Korea-Japan_WC.png  Qatar_WC.png   SouthAfrica_WC.png  USA_WC.png
Brasil_WC.png     France_WC.png       Italia_WC.png   Mexico_WC.png       Russia_WC.png  Spain_WC.png        WGermany_WC.png
[ec2-user@ip-172-31-58-150 ~]$
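The post doesn't list the image URLs it downloaded. The sketch below assumes a hypothetical text file with one URL per line and fetches each entry with wget -P, which saves the file into the given directory. The function name download_pictures and the URL file are illustrative only.

```bash
#!/usr/bin/env bash
# Sketch: download each URL listed in a text file into the pictures directory.
# The URL list file is hypothetical; the post does not say which URLs it used.
download_pictures() {
  local url_file="$1"
  local dest="${2:-$HOME/photo/pictures}"   # host directory mounted at /Pictures
  local url
  while IFS= read -r url; do
    # Skip blank lines and lines starting with '#'.
    [[ -z "$url" || "$url" == \#* ]] && continue
    # -q: quiet output; -P: directory to save the file into.
    wget -q -P "$dest" "$url" || echo "download failed: $url" >&2
  done < "$url_file"
}
```

For example, run download_pictures urls.txt. Failed downloads are reported on stderr, and the loop moves on to the next URL.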

The following command shows that no Podman volumes were created. The directories are bind mounts, not named volumes.

[ec2-user@ip-172-31-58-150 images]$ sudo podman volume ls

Let's inspect the container to see what was mounted:

[ec2-user@ip-172-31-58-150 images]$ sudo podman inspect photoshow
……. ……. …….
"Mounts": [
    {
        "Type": "bind",
        "Name": "",
        "Source": "/home/ec2-user/photo/thumb",
        "Destination": "/Thumbs",
        "Driver": "",
        "Mode": "",
        "Options": [
            "rbind"
        ],
        "RW": true,
        "Propagation": "rprivate"
    },
    {
        "Type": "bind",
        "Name": "",
        "Source": "/home/ec2-user/photo/config",
        "Destination": "/config",
        "Driver": "",
        "Mode": "",
        "Options": [
            "rbind"
        ],
        "RW": true,
        "Propagation": "rprivate"
    },
    {
        "Type": "bind",
        "Name": "",
        "Source": "/home/ec2-user/photo/pictures",
        "Destination": "/Pictures",
        "Driver": "",
        "Mode": "",
        "Options": [
            "rbind"
        ],
        "RW": true,
        "Propagation": "rprivate"
    }
],
……. …….
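Instead of scrolling through the full inspect JSON, you can pass podman inspect a Go template with --format and print only the mount pairs. This sketch assumes the field names visible in the JSON above (Mounts, Source, Destination). show_mounts is a hypothetical helper name.

```bash
#!/usr/bin/env bash
# Sketch: print only the Source -> Destination pairs of a container's mounts.
# Field names (Mounts, Source, Destination) follow the podman inspect JSON.
show_mounts() {
  local name="${1:-photoshow}"
  sudo podman inspect --format \
    '{{range .Mounts}}{{.Source}} -> {{.Destination}}{{println}}{{end}}' \
    "$name"
}
```

Running show_mounts should print one line per bind mount. That gives a quick way to confirm whether the container points at the local directories or, later, at /mnt/efs_drive.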

Let’s log into the container and check the directories that we created:

[ec2-user@ip-172-31-58-150 ~]$ sudo podman exec -it photoshow /bin/bash
root@0d011645d26d:/# ls
Pictures  app  config  dev  etc  init  libexec  mnt  proc  run  srv  tmp  var
Thumbs  bin  defaults  docker-mods  home  lib  media  opt  root  sbin  sys  usr  version.txt
root@0d011645d26d:/# ls -l /Pictures /config /Thumbs
/Pictures:
total 2456
-rw-rw-r-- 1 abc users 70015 Apr 9 00:56 Argentina_WC.png
-rw-rw-r-- 1 abc users 173472 May 20 03:53 Brasil_WC.png
-rw-rw-r-- 1 abc users 879401 May 20 03:53 FIFA_World_Cup.jpg
-rw-rw-r-- 1 abc users 81582 Jul 17 2018 France_WC.png
-rw-rw-r-- 1 abc users 124180 May 20 03:53 Germany_WC.png
-rw-rw-r-- 1 abc users 84614 Jul 17 2018 Italia_WC.png
-rw-rw-r-- 1 abc users 126259 Sep 13 2019 Korea-Japan_WC.png
-rw-rw-r-- 1 abc users 157670 Jul 17 2018 Mexico_WC.png
-rw-rw-r-- 1 abc users 125000 May 20 03:53 Qatar_WC.png
-rw-rw-r-- 1 abc users 188832 May 20 03:53 Russia_WC.png
-rw-rw-r-- 1 abc users 248316 May 20 03:53 SouthAfrica_WC.png
-rw-rw-r-- 1 abc users 104383 May 19 10:36 Spain_WC.png
-rw-rw-r-- 1 abc users 98021 Jul 18 2018 USA_WC.png
-rw-rw-r-- 1 abc users 26622 Jul 18 2018 WGermany_WC.png
/Thumbs:
total 4
drwxr-x--- 2 abc users 67 May 20 03:54 Conf
drwxr-x--- 2 abc users 4096 May 20 04:13 Thumbs
/config:
total 0
drwxr-xr-x 2 abc users 38 May 20 01:16 keys
drwxr-xr-x 4 abc users 54 May 20 02:00 log
drwxrwxr-x 3 abc users 42 May 20 01:16 nginx
drwxr-xr-x 2 abc users 44 May 20 01:16 php
drwxrwxr-x 3 abc users 41 May 20 01:16 www
root@0d011645d26d:/#

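Go to http://54.202.174.232:8080 (the public IP address of the EC2 instance) to check the photo gallery. Make sure the instance's security group allows inbound TCP traffic on port 8080.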

Step 2: Storage level high availability

What happens if the EC2 instance goes down? Can I still access my images in that case? Is the data highly available?

Our application used the local file system in the previous scenario. To make the data HA, let’s use EFS to store our images. In this step, you will see how to set up EFS and use it with our application container running in Podman.

First, create an EFS file system, in this case called “demo.”
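The post creates the file system in the console. Below is a rough AWS CLI equivalent, written as a sketch. It assumes configured credentials and region, and the security group and subnet IDs are placeholders you supply. Each EC2 instance reaches EFS through a mount target in its own AZ, so the mount target's security group has to allow inbound NFS (TCP port 2049) from the instances. create_demo_efs is a hypothetical helper name.

```bash
#!/usr/bin/env bash
# Sketch: create the "demo" EFS file system and one mount target per subnet.
# Usage: create_demo_efs SG_ID SUBNET_ID...   (all IDs are placeholders)
create_demo_efs() {
  local sg_id="$1"; shift                 # SG that allows NFS (TCP 2049) from the instances
  local fs_id state subnet
  fs_id="$(aws efs create-file-system \
      --creation-token demo \
      --tags Key=Name,Value=demo \
      --query FileSystemId --output text)" || return 1
  # Mount targets can only be added once the file system is "available".
  while :; do
    state="$(aws efs describe-file-systems --file-system-id "$fs_id" \
        --query 'FileSystems[0].LifeCycleState' --output text)" || return 1
    [ "$state" = available ] && break
    sleep 5
  done
  # One mount target per subnet (one subnet per AZ).
  for subnet in "$@"; do
    aws efs create-mount-target \
      --file-system-id "$fs_id" \
      --subnet-id "$subnet" \
      --security-groups "$sg_id" >/dev/null || return 1
  done
  echo "$fs_id"
}
```

The function prints the new file system ID (for example, fs-…). The EFS DNS name used in /etc/fstab has the form <file-system-id>.efs.<region>.amazonaws.com.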

Next, add the EFS entry to /etc/fstab as shown below. Then mount the EFS file system on the EC2 host.

[ec2-user@ip-172-31-58-150 pictures]$ cat /etc/fstab
#
# /etc/fstab
# Created by anaconda on Sat Oct 31 05:00:52 2020
#
# Accessible filesystems, by reference, are maintained under '/dev/disk/'.
# See man pages fstab(5), findfs(8), mount(8) and/or blkid(8) for more info.
#
# After editing this file, run 'systemctl daemon-reload' to update systemd
# units generated from this file.
#
UUID=949779ce-46aa-434e-8eb0-852514a5d69e / xfs defaults 0 0
fs-33656734.efs.us-west-2.amazonaws.com:/ /mnt/efs_drive nfs defaults,vers=4.1 0 0
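The entry above uses defaults,vers=4.1. AWS documents a fuller set of NFS mount options for EFS (nfsvers=4.1,rsize=1048576,wsize=1048576,hard,timeo=600,retrans=2,noresvport), plus _netdev in fstab so the mount waits for networking at boot. The sketch below appends such an entry only if the mount point isn't already listed. The helper name and the duplicate check are assumptions, not part of the original setup.

```bash
#!/usr/bin/env bash
# Sketch: add an EFS line to fstab unless the mount point is already listed.
add_efs_fstab_entry() {
  local fs_dns="$1"                        # e.g. fs-33656734.efs.us-west-2.amazonaws.com
  local mount_point="${2:-/mnt/efs_drive}"
  local fstab="${3:-/etc/fstab}"
  # NFS options from the AWS EFS documentation; _netdev waits for the network at boot.
  local opts="nfsvers=4.1,rsize=1048576,wsize=1048576,hard,timeo=600,retrans=2,noresvport,_netdev"
  # Field 2 of a non-comment fstab line is its mount point.
  if awk -v mp="$mount_point" '$1 !~ /^#/ && $2 == mp { found = 1 } END { exit !found }' "$fstab"; then
    echo "$fstab already has an entry for $mount_point" >&2
    return 0
  fi
  printf '%s:/ %s nfs4 %s 0 0\n' "$fs_dns" "$mount_point" "$opts" >> "$fstab"
}
```

Run it from a root shell (for example, after sudo -i). Create the mount point first with mkdir -p /mnt/efs_drive, then run systemctl daemon-reload as the fstab header suggests.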

Let’s mount /mnt/efs_drive.

[ec2-user@ip-172-31-58-150 pictures]$ sudo mount /mnt/efs_drive
[ec2-user@ip-172-31-58-150 pictures]$ df -h
Filesystem                                 Size  Used Avail Use% Mounted on
devtmpfs                                   3.8G     0  3.8G   0% /dev
tmpfs                                      3.8G  168K  3.8G   1% /dev/shm
tmpfs                                      3.8G   17M  3.8G   1% /run
tmpfs                                      3.8G     0  3.8G   0% /sys/fs/cgroup
/dev/xvda2                                  10G  9.6G  426M  96% /
tmpfs                                      777M   68K  777M   1% /run/user/1000
fs-33656734.efs.us-west-2.amazonaws.com:/  8.0E     0  8.0E   0% /mnt/efs_drive
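The Step 2 podman run command bind-mounts /mnt/efs_drive/photo/config, pictures, and thumb. Podman expects a bind-mount source to exist, so those directories must be on EFS before the container starts. The post doesn't show that step. One way to do it is sketched below, assuming you want to carry over the Step 1 data. seed_efs_photo_dir is a hypothetical name.

```bash
#!/usr/bin/env bash
# Sketch: prepare /mnt/efs_drive/photo before the Step 2 container starts.
# Creates config/, pictures/ and thumb/ on EFS and, if the Step 1 directory
# exists, copies its contents across. Paths default to the ones in this post.
seed_efs_photo_dir() {
  local src="${1:-/home/ec2-user/photo}"   # Step 1 data on the local disk
  local dest="${2:-/mnt/efs_drive/photo}"  # same layout on the EFS mount
  mkdir -p "$dest"/{config,pictures,thumb} || return 1
  if [ -d "$src" ]; then
    # -a keeps ownership, modes and timestamps; "$src/." copies the contents, not the directory itself.
    cp -a "$src/." "$dest/" || return 1
  fi
}
```

Confirm the mount with findmnt /mnt/efs_drive first. Then run the function from a root shell so that cp -a can preserve ownership.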

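The new container reuses the name photoshow, so stop and remove the Step 1 container first (for example, with sudo podman rm -f photoshow). Then run the container using Podman, this time bind-mounting directories from the EFS mount.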

[ec2-user@ip-172-31-58-150 ~]$ sudo podman run -d --name=photoshow \
  -e PUID=1000 -e PGID=1000 -e TZ=Europe/London \
  -p 8080:80 \
  --mount type=bind,source=/mnt/efs_drive/photo/config,destination=/config \
  --mount type=bind,source=/mnt/efs_drive/photo/pictures/,destination=/Pictures \
  --mount type=bind,source=/mnt/efs_drive/photo/thumb/,destination=/Thumbs \
  --restart unless-stopped \
  ghcr.io/linuxserver/photoshow
95c78443d893334c4d5538dc03761f828d5e7a59427c87ae364ab1e7f6d30e15
[ec2-user@ip-172-31-58-150 ~]$ sudo podman ps -a
CONTAINER ID  IMAGE                          COMMAND  CREATED         STATUS             PORTS                 NAMES
95c78443d893  ghcr.io/linuxserver/photoshow           18 seconds ago  Up 17 seconds ago  0.0.0.0:8080->80/tcp  photoshow
[ec2-user@ip-172-31-58-150 ~]$

Let's inspect the container.

[ec2-user@ip-172-31-58-150 ~]$ sudo podman inspect photoshow
…………… …………… ……………
"Mounts": [
    {
        "Type": "bind",
        "Name": "",
        "Source": "/mnt/efs_drive/photo/config",
        "Destination": "/config",
        "Driver": "",
        "Mode": "",
        "Options": [
            "rbind"
        ],
        "RW": true,
        "Propagation": "rprivate"
    },
    {
        "Type": "bind",
        "Name": "",
        "Source": "/mnt/efs_drive/photo/pictures",
        "Destination": "/Pictures",
        "Driver": "",
        "Mode": "",
        "Options": [
            "rbind"
        ],
        "RW": true,
        "Propagation": "rprivate"
    },
    {
        "Type": "bind",
        "Name": "",
        "Source": "/mnt/efs_drive/photo/thumb",
        "Destination": "/Thumbs",
        "Driver": "",
        "Mode": "",
        "Options": [
            "rbind"
        ],
        "RW": true,
        "Propagation": "rprivate"
    }
],
…………… …………… …………...

Step 3: Adding compute level high availability

This is fine, but what if the EC2 instance goes down? I have data in an EFS file system, but how are the clients going to access it?

For this, we have to make both our compute and our storage HA. Our storage is already HA because of EFS, so now let's make the EC2 layer HA as well.

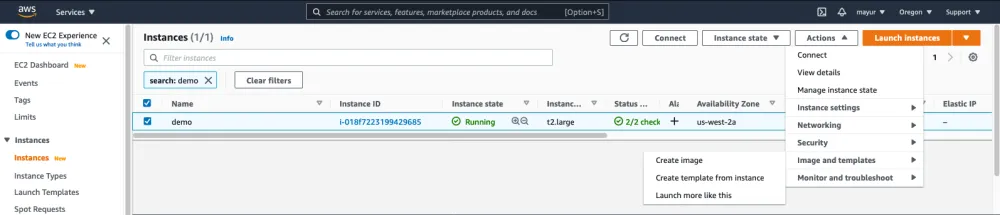

We first create an Amazon Machine Image (AMI) of our running EC2 instance, as shown in the screenshots, and launch a new EC2 instance from it in a different Availability Zone (AZ). Both instances now access the same data stored on EFS.
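The AMI and the second instance can also be created from the AWS CLI. This is a sketch under stated assumptions. The instance ID, the subnet in the second AZ, the instance type, and the security group are placeholders, and the photoshow- AMI name prefix is hypothetical. By default, create-image reboots the source instance to get a consistent image. Adding --no-reboot skips the reboot at the cost of that consistency.

```bash
#!/usr/bin/env bash
# Sketch: image the running instance, then launch a copy in another AZ's subnet.
# Usage: launch_replica_in_other_az INSTANCE_ID SUBNET_ID INSTANCE_TYPE SG_ID
launch_replica_in_other_az() {
  local instance_id="$1" subnet_id="$2" instance_type="$3" sg_id="$4"
  local ami_id
  # create-image reboots the source instance unless --no-reboot is given.
  ami_id="$(aws ec2 create-image \
      --instance-id "$instance_id" \
      --name "photoshow-$(date +%Y%m%d%H%M%S)" \
      --query ImageId --output text)" || return 1
  # Block until the AMI can be used to launch instances.
  aws ec2 wait image-available --image-ids "$ami_id" || return 1
  # Print the new instance ID.
  aws ec2 run-instances \
    --image-id "$ami_id" \
    --instance-type "$instance_type" \
    --subnet-id "$subnet_id" \
    --security-group-ids "$sg_id" \
    --count 1 \
    --query 'Instances[0].InstanceId' --output text
}
```

/etc/fstab on the source instance already contains the EFS entry, so an instance launched from this AMI should mount /mnt/efs_drive at boot. Podman has no daemon, so also check that the photoshow container actually comes back up on the new instance. A systemd unit for the container is a common way to handle that.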

Let’s add an Application Load Balancer to distribute the client requests to the two EC2 instances in the two AZs.


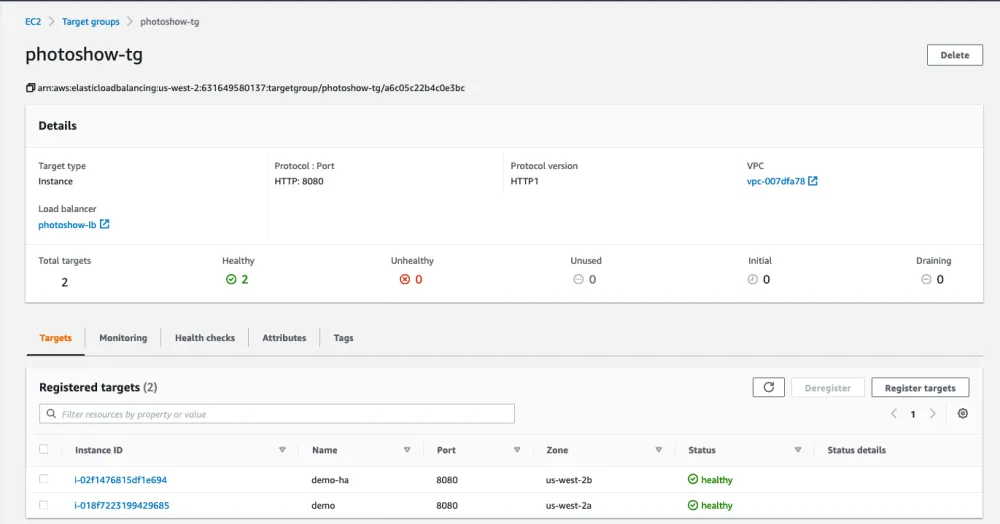

The Application Load Balancer forwards the requests to the Target group that includes the two EC2 instances hosting our application containers.
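For reference, the load balancer setup maps roughly onto four elbv2 CLI calls:

- a target group on port 8080, the host port the containers publish;
- registering the two instances as targets;
- the load balancer itself, across one subnet per AZ;
- a listener on port 8080 that forwards to the target group.

This is a sketch. The target group name photoshow-tg and all IDs are placeholders. The load balancer name matches the photoshow-lb DNS name used in this post. The load balancer's security group needs to allow port 8080 from clients, and the instances' security group needs to allow port 8080 from the load balancer.

```bash
#!/usr/bin/env bash
# Sketch: target group + ALB + listener for the photo gallery.
# Usage: create_photoshow_alb VPC_ID SG_ID SUBNET_A SUBNET_B INSTANCE_ID...
# All IDs are placeholders; photoshow-tg is a hypothetical target group name.
create_photoshow_alb() {
  local vpc_id="$1" sg_id="$2" subnet_a="$3" subnet_b="$4"
  shift 4
  local tg_arn lb_arn id
  local targets=()
  # Target group on port 8080, the host port each container publishes (-p 8080:80).
  tg_arn="$(aws elbv2 create-target-group --name photoshow-tg \
      --protocol HTTP --port 8080 --vpc-id "$vpc_id" --target-type instance \
      --query 'TargetGroups[0].TargetGroupArn' --output text)" || return 1
  # Register every instance ID passed after the first four arguments.
  for id in "$@"; do targets+=("Id=$id"); done
  aws elbv2 register-targets --target-group-arn "$tg_arn" \
      --targets "${targets[@]}" >/dev/null || return 1
  # ALB spanning one subnet in each AZ.
  lb_arn="$(aws elbv2 create-load-balancer --name photoshow-lb \
      --subnets "$subnet_a" "$subnet_b" --security-groups "$sg_id" \
      --query 'LoadBalancers[0].LoadBalancerArn' --output text)" || return 1
  # Listener on 8080 that forwards everything to the target group.
  aws elbv2 create-listener --load-balancer-arn "$lb_arn" \
      --protocol HTTP --port 8080 \
      --default-actions "Type=forward,TargetGroupArn=$tg_arn" >/dev/null || return 1
  # Print the DNS name clients will use.
  aws elbv2 describe-load-balancers --load-balancer-arns "$lb_arn" \
      --query 'LoadBalancers[0].DNSName' --output text
}
```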


To connect to the application, enter the load balancer's DNS name with port 8080 in a web browser (photoshow-lb-207083175.us-west-2.elb.amazonaws.com:8080).


Step 4: Scaling

So far so good, but what happens when our requests increase and we need additional resources to handle them?

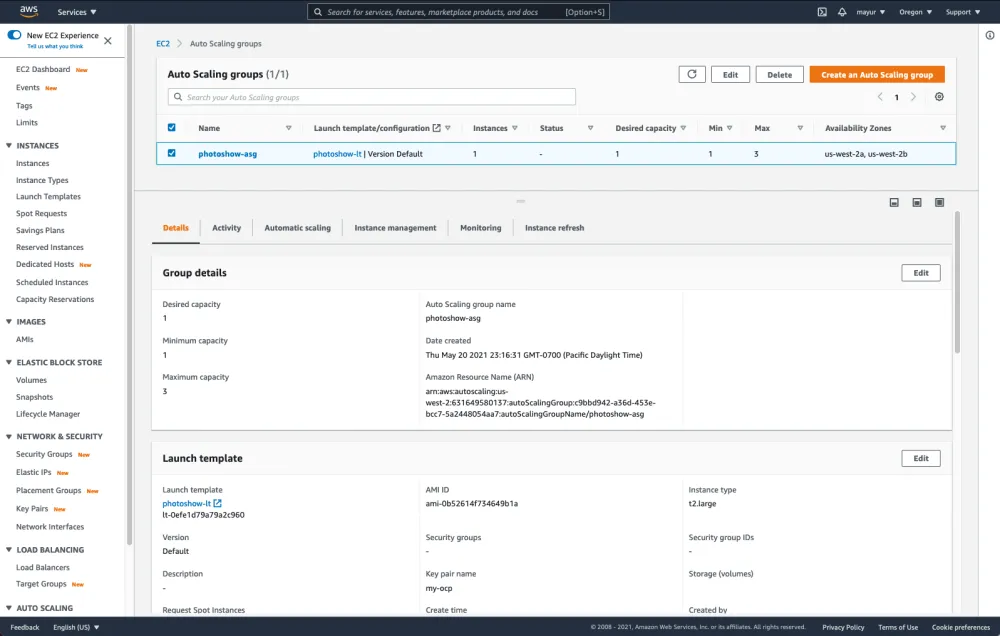

That is exactly where Auto Scaling comes into the picture. I added an Auto Scaling group called photoshow-asg with a desired capacity of 1, a minimum capacity of 1, and a maximum capacity of 3 to handle any increase in user requests.
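An Auto Scaling group with these sizes can be sketched with the AWS CLI. The sketch assumes a launch template built from the AMI of the photo gallery instance (the template name is a hypothetical placeholder) and the target group ARN from the load balancer setup. The CPU target-tracking policy at 50% is also an assumption, since the post only says the instances scaled based on load.

```bash
#!/usr/bin/env bash
# Sketch: Auto Scaling group matching the post (desired 1, min 1, max 3).
# Usage: create_photoshow_asg LAUNCH_TEMPLATE_NAME TARGET_GROUP_ARN "subnet-a,subnet-b"
create_photoshow_asg() {
  local launch_template="$1" tg_arn="$2" subnets="$3"
  # \$Latest is passed literally so the group uses the newest template version.
  # --target-group-arns registers new instances with the load balancer's target group.
  aws autoscaling create-auto-scaling-group \
    --auto-scaling-group-name photoshow-asg \
    --launch-template "LaunchTemplateName=$launch_template,Version=\$Latest" \
    --min-size 1 --max-size 3 --desired-capacity 1 \
    --vpc-zone-identifier "$subnets" \
    --target-group-arns "$tg_arn" || return 1
  # Assumed policy: keep average CPU across the group near 50%.
  aws autoscaling put-scaling-policy \
    --auto-scaling-group-name photoshow-asg \
    --policy-name photoshow-cpu-target \
    --policy-type TargetTrackingScaling \
    --target-tracking-configuration \
    '{"PredefinedMetricSpecification":{"PredefinedMetricType":"ASGAverageCPUUtilization"},"TargetValue":50.0}' \
    >/dev/null
}
```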

I checked to see if the photo gallery can still be accessed from the URL, and also checked the scaling of the EC2 instances based on the load.


OK, but I don’t want to give the DNS name of a load balancer to family and friends so they can check out my photos. How uncool is that!

Valid point! That is where Route 53 can help. I have a domain registered with Route 53, and I’m going to use it to access the photo gallery.

Go to Route 53 -> Hosted zones -> <your registered domain> and create a CNAME record that points to the load balancer's DNS name.
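The console steps correspond to a single Route 53 change batch. The sketch below builds an UPSERT for a CNAME record and submits it with aws route53 change-resource-record-sets. The hosted zone ID, the record name, and the 300-second TTL are placeholders. A CNAME can't be created at the zone apex (for example, example.com itself), so use a subdomain such as photos.example.com, or an alias record at the apex.

```bash
#!/usr/bin/env bash
# Sketch: create or update a CNAME that points a friendly name at the ALB.
# Build the JSON change batch; TTL defaults to 300 seconds (an arbitrary choice).
cname_change_batch() {
  local record_name="$1" target_dns="$2" ttl="${3:-300}"
  printf '{"Changes":[{"Action":"UPSERT","ResourceRecordSet":{"Name":"%s","Type":"CNAME","TTL":%d,"ResourceRecords":[{"Value":"%s"}]}}]}\n' \
    "$record_name" "$ttl" "$target_dns"
}

# Submit the change to the hosted zone (zone ID and names are placeholders).
upsert_gallery_cname() {
  local zone_id="$1" record_name="$2" target_dns="$3"
  aws route53 change-resource-record-sets \
    --hosted-zone-id "$zone_id" \
    --change-batch "$(cname_change_batch "$record_name" "$target_dns")"
}
```

The listener in this setup is on port 8080, so visitors would still need to add :8080 to the URL unless you also add a listener on port 80.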

Conclusion

In this post, we built a highly available and scalable solution using Podman and Amazon EFS on RHEL 8. This is a supported configuration, as both RHEL 7 and RHEL 8 are supported with EFS. You can reach out to Red Hat Support if there is an issue with RHEL or Podman, or to AWS Support if there is an issue with EFS.

This solution is great, but if you also want to simplify agile development with embedded continuous integration and continuous deployment (CI/CD), add a container catalog and image streams, or integrate your existing pipeline, then you should definitely look into Red Hat OpenShift on AWS.

Choose between self-hosted Red Hat OpenShift Container Platform, the managed offerings Red Hat OpenShift Dedicated and Red Hat OpenShift Service on AWS (ROSA), or whatever mixture of these suits your organization’s needs, and manage your Kubernetes clusters with one solution.

About the author

Mayur Shetty is a Principal Solution Architect with Red Hat’s Global Partners and Alliances (GPA) organization, working closely with cloud and system partners. He has been with Red Hat for more than five years and was part of the OpenStack Tiger Team.