Virtio-net failover is a virtualization technology that allows a virtual machine (VM) to switch from a Virtual Function I/O (VFIO) device to a virtio-net device when the VM needs to be migrated from one host to another.

On one hand, the Single Root I/O Virtualization (SR-IOV) technology allows a device such as a network card to be split into several devices (the Virtual Functions). With the help of VFIO, the kernel of the VM can drive these devices directly. This is attractive in terms of performance, because it can reach the same level as a bare metal system. The cost of this performance is that a VFIO device cannot be migrated.

On the other hand, virtio-net is a paravirtualized networking device that has good performance and can be migrated. The trade-off is that its performance is not as good as that of a VFIO device.

Virtio-net failover tries to bring the best of both worlds: performance of a VFIO device with the migration capability of a virtio-net device.

Here I’ll discuss the principles of virtio-net failover, including the building blocks and how they interoperate to bring failover to our VM.

Principles

Virtio-net failover relies on several blocks of technology to migrate a VM using a VFIO device:

- Live migration, to move the VM from one host to another
- Virtio-net, to keep the network connection while the migration is in progress
- VFIO, to use a host hardware device
- Failover, to switch from one networking device to another in a transparent way

Virtio-net failover building blocks

Let’s take a closer look at each of these technologies.

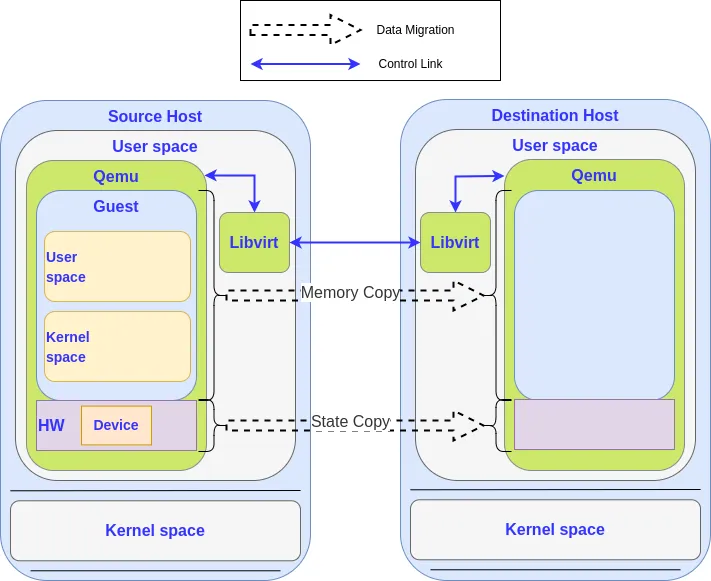

Live migration

The basic idea behind live migration is to be able to move a VM from one host to another without stopping the VM.

Migrating a VM consists of copying its internal state from one hypervisor to another. The state of a VM is defined by the content of its memory and by the internal state of the devices and CPUs of the machine.

The migration of the memory is the most important part of the data migration process. Depending on the size of the memory and the bandwidth of the network, it can take several seconds or even minutes to finish. As we don’t want to stop the VM during the migration, the memory is migrated iteratively. In the first round, all the memory is copied while the system continues to run and use it. A dirty memory page log records which pages are modified in the meantime, and each following round copies only the pages that have been modified since the previous round.

At some point the VM is stopped and all the remaining memory is migrated. Once that’s done, the state of the devices is migrated, which is small and fast to do. It’s only possible because the hypervisor knows which information needs to be saved/restored. This is why most of the VFIO devices cannot be migrated: the hypervisor doesn’t know the details of the internal state of a VFIO device. It cannot read and write the information to push the new state to the destination machine.
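The iterative memory copy described above can be sketched as a small simulation. This is only an illustration under stated assumptions, not how any particular hypervisor implements it: the function name, the `dirty_percent` model (the guest dirties a fixed percentage of the pages sent in each round), and the `stop_threshold` and `max_rounds` parameters are all hypothetical.

```python
import random


def precopy_migrate(num_pages, dirty_percent, max_rounds=30,
                    stop_threshold=50, seed=0):
    """Simulate iterative pre-copy of guest memory.

    Returns (rounds, pages_sent_live, pages_sent_stopped).
    All parameters are illustrative assumptions, not hypervisor settings.
    """
    rng = random.Random(seed)
    to_send = set(range(num_pages))  # first round: every page is copied
    pages_sent_live = 0
    rounds = 0

    # Keep copying while the VM runs, until few enough pages remain
    # (or we give up converging after max_rounds).
    while len(to_send) > stop_threshold and rounds < max_rounds:
        rounds += 1
        pages_sent_live += len(to_send)
        # Assumption: while this round was in flight, the running guest
        # dirtied a number of pages proportional to the amount just sent.
        n_dirty = len(to_send) * dirty_percent // 100
        # The dirty page log tells us which pages to resend next round.
        to_send = set(rng.sample(range(num_pages), n_dirty))

    # Stop-and-copy phase: the VM is paused and the remaining dirty pages
    # are sent, followed by the (small) device state.
    pages_sent_stopped = len(to_send)
    return rounds, pages_sent_live, pages_sent_stopped


if __name__ == "__main__":
    print(precopy_migrate(num_pages=10_000, dirty_percent=20))
```

In this model the migration converges only when the guest dirties memory more slowly than the pages can be sent. With `dirty_percent=100` the loop never shrinks the set of pages to send and stops at `max_rounds`, leaving everything to the stop-and-copy phase.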

You can find detailed information about live migration in Live Migrating QEMU-KVM Virtual Machines.

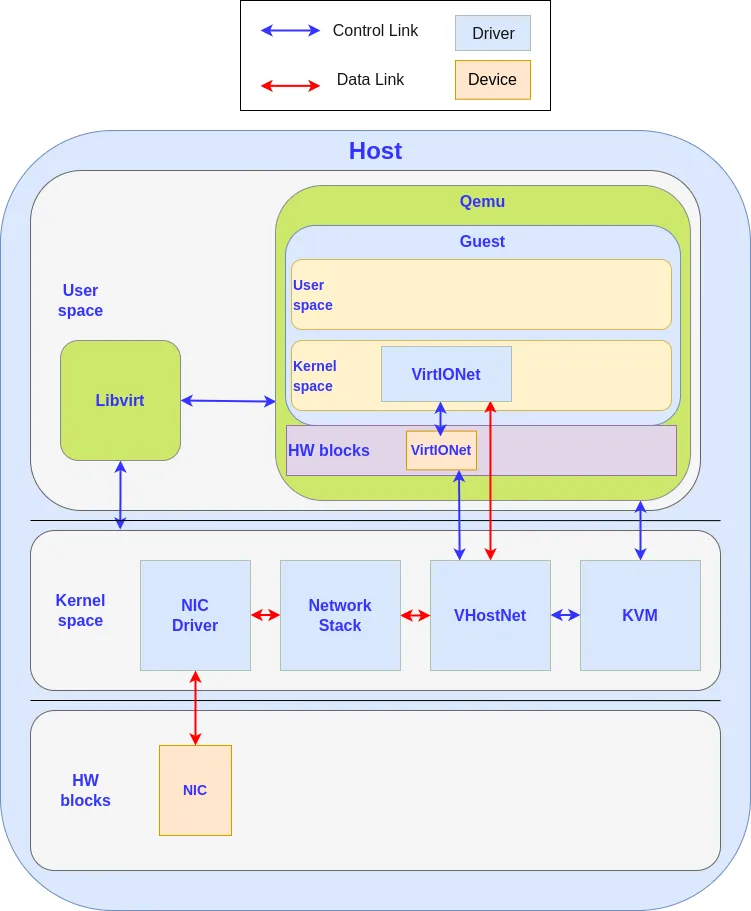

Virtio-net

Virtio-net is a paravirtualized networking device. A paravirtualized device is a software device that the hypervisor presents to a VM as if it were a hardware device, but in a way that is optimized to work more efficiently in a VM environment.

It’s generally easier to write the code for a paravirtualized device than it is to write the code to emulate a hardware device. Moreover, as the operating system is aware of the software nature of the device, the communication between the driver and the device can be optimized to be simpler and more efficient.

The driver of the guest kernel talks with the virtio-net device using memory mapped I/O (MMIO) and interrupts, like for a real hardware device. The interface is defined to be easy to use and implement. Moreover, as the guest driver is aware of its virtualized nature, the hypervisor can give it direct access to some host resources, like in the vhost interface (direct access to the networking stack or to block I/O stack). On PC machines, virtio devices are embedded in a PCI card to ease configuration and management.

Virtio-net failover relies on the PCI bus hotplug capability to automatically unplug a VFIO card before the migration.

If you want more details, you can read Introduction to virtio-networking and vhost-net or Migration — QEMU documentation.

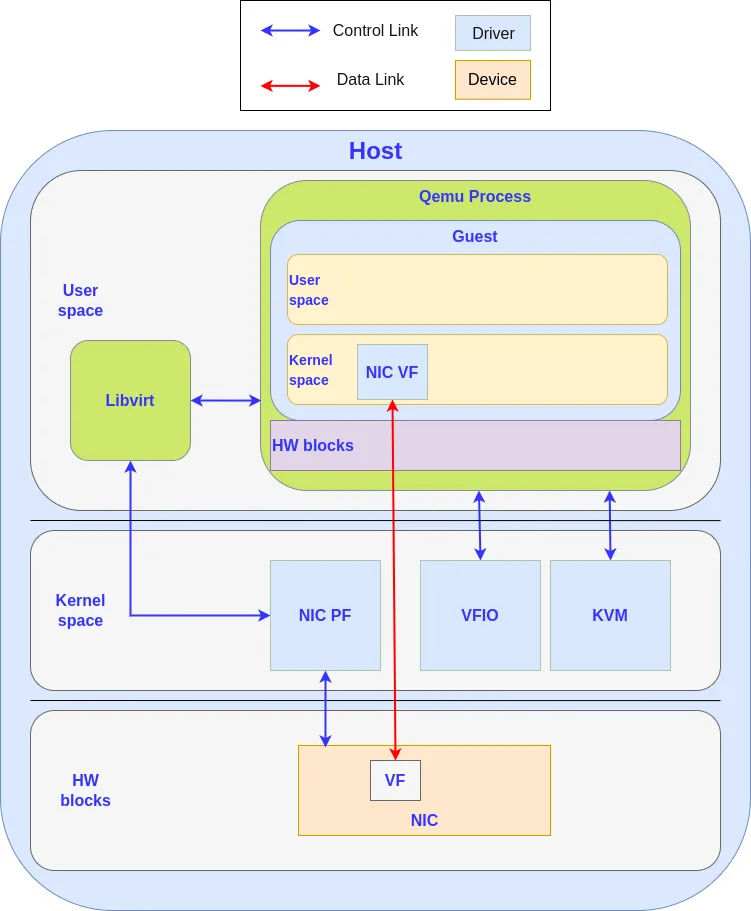

VFIO

Virtual Function I/O (VFIO) is a Linux framework that provides access to a device in a secure and programmable context. VFIO allows you to implement PCI passthrough by isolating a PCI device from the rest of the host and putting it under the control of a user process.

Modern Input-Output Memory Management Units (IOMMUs) incorporate isolation properties, so devices can be isolated from each other and prevented from accessing arbitrary memory. This isolation allows a Virtual Function to be assigned directly to a VM in a secure way.
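To make the isolation idea concrete, here is a toy model of an IOMMU as a per-device translation table. It is a conceptual sketch only: the class, method names and page size are assumptions chosen for illustration and do not correspond to the Linux VFIO or IOMMU APIs.

```python
PAGE_SIZE = 4096  # illustrative page size


class ToyIommu:
    """Toy per-device DMA translation table (not a real IOMMU API)."""

    def __init__(self):
        # device id -> {I/O virtual page number: host physical page number}
        self._tables = {}

    def map(self, device, iova, host_addr):
        """Allow `device` to reach one host page through address `iova`."""
        table = self._tables.setdefault(device, {})
        table[iova // PAGE_SIZE] = host_addr // PAGE_SIZE

    def translate(self, device, iova):
        """Translate a DMA address, refusing anything that was not mapped."""
        table = self._tables.get(device, {})
        page = iova // PAGE_SIZE
        if page not in table:
            raise PermissionError(
                f"{device}: DMA to unmapped address {iova:#x} blocked")
        return table[page] * PAGE_SIZE + iova % PAGE_SIZE


if __name__ == "__main__":
    iommu = ToyIommu()
    iommu.map("vf0", 0x1000, 0x80000)
    print(hex(iommu.translate("vf0", 0x1010)))
    try:
        iommu.translate("vf1", 0x1010)  # vf1 has no mapping for this page
    except PermissionError as err:
        print(err)
```

Each device sees only the pages that were explicitly mapped for it, which is what makes it safe to hand a Virtual Function to a VM.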

In most cases, a VFIO device cannot be migrated because the hypervisor is not able to save the internal state of the device and restore it after migration (work on this subject is ongoing, see VFIO device Migration — QEMU documentation). If you want more details about VFIO, you can read VFIO - "Virtual Function I/O" — The Linux Kernel documentation.

Failover

Failover is a term that comes from the high availability (HA) domain, where it is used to provide reliability, availability and serviceability (RAS) to a system.

The principle of failover is to bind two devices together, called the primary and the standby, in a redundant way. The system only uses the primary device, but if the primary device becomes unavailable, unusable or disconnected, the failover manager detects the problem, disables the primary device and switches to the standby device.

The standby is used to maintain service availability. While the standby is in use, an operator can remove the faulty device and replace it with a healthy one. Once the problem is corrected, the new device can be used as the new standby device while the old standby device becomes the new primary device. Alternatively, the replacement device can be restored as the primary device and the other switched back to standby.
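The primary/standby principle can be sketched in a few lines. This is a minimal model with hypothetical class and method names, not the code of any real failover manager; it shows the switch to the standby and the two replacement policies described above.

```python
class Device:
    """A network device that may become unavailable."""

    def __init__(self, name):
        self.name = name
        self.available = True


class FailoverPair:
    """Binds a primary and a standby device (illustrative names only)."""

    def __init__(self, primary, standby):
        self.primary = primary
        self.standby = standby

    def active(self):
        # Use the primary whenever it is available, else the standby.
        return self.primary if self.primary.available else self.standby

    def send(self, packet):
        return f"{packet} via {self.active().name}"

    def replace_failed_primary(self, new_device, restore_as_primary=False):
        if restore_as_primary:
            # Policy 2: the replacement takes the primary role back.
            self.primary = new_device
        else:
            # Policy 1: the old standby is promoted to primary and the
            # replacement becomes the new standby.
            self.primary, self.standby = self.standby, new_device


if __name__ == "__main__":
    pair = FailoverPair(Device("nic0"), Device("nic1"))
    print(pair.send("p1"))
    pair.primary.available = False  # the primary fails
    print(pair.send("p2"))
    pair.replace_failed_primary(Device("nic2"))
    print(pair.send("p3"))
```

Which replacement policy makes sense depends on whether the two devices are equivalent. If they are identical, promoting the standby avoids a second switch.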

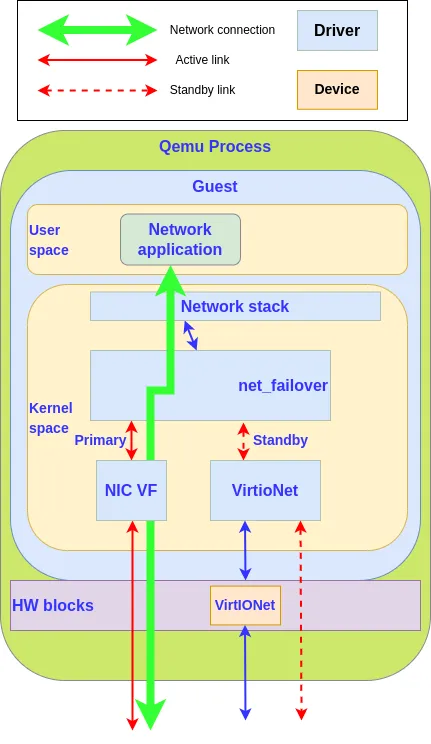

Virtio-net failover operation

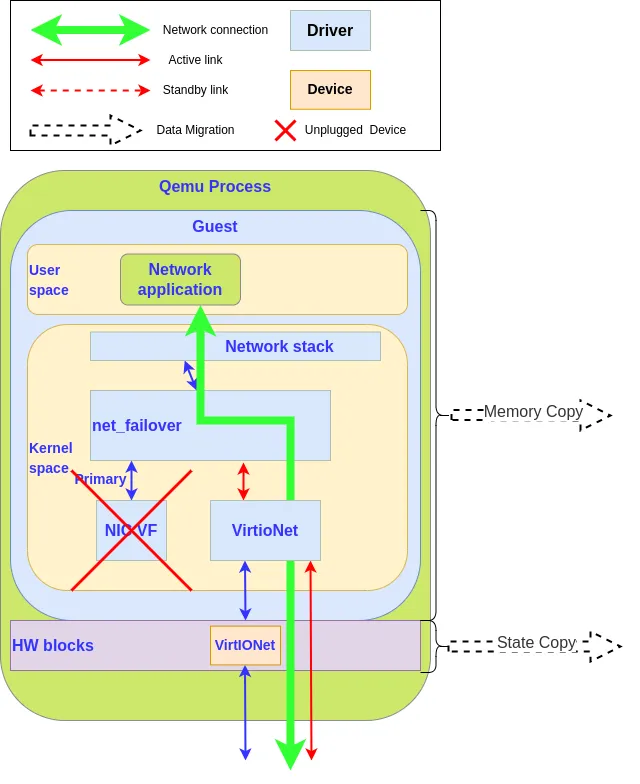

Virtio-net failover uses the failover principle to bind two devices together, but in this case the VFIO device is deliberately chosen as the primary device and is used during normal operation of the system. When a migration occurs, the hypervisor triggers a primary device fault by unplugging the device. This forces the failover manager (in our case the guest kernel net_failover driver) to disable the primary device and use the standby device, the virtio-net device, which can survive a VM live migration.

The hypervisor also takes the role of the operator: it restores the disabled device on the migration destination side by hotplugging a new VFIO device. In this case, the net_failover driver is configured to restore the VFIO device as the primary and to keep the virtio-net device as the standby, because the devices are not identical.

To implement virtio-net failover, we need support at guest kernel level and at hypervisor level:

- The hypervisor detects when a migration starts and unplugs the VFIO device, because it cannot be migrated. It also blocks the migration while the VFIO card is being unplugged.
- The guest kernel needs the ability to switch transparently between the VFIO device and the virtio-net device.
- In normal operation, the VFIO device is used for its performance. When the hypervisor asks to unplug the card, the kernel unplugs it and switches the networking traffic from the VFIO device to the virtio-net device.
- While the migration is in progress, the VM is not stopped, and all the networking traffic that usually crosses the VFIO device is redirected to the virtio-net device. There is no service interruption.
- At the end of the migration, on the destination side, the hypervisor plugs in a VFIO device, and the traffic switches back from the virtio-net device to the VFIO device.
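The sequence above can be traced with a short simulation. This is a sketch of the order of events only: the `Guest` class, its method names and the log messages are hypothetical and do not reflect the QEMU or Linux kernel interfaces.

```python
class Guest:
    """Guest side: prefers the VFIO primary whenever it is plugged in."""

    def __init__(self):
        self.vfio_present = True  # primary; the virtio-net standby is
                                  # assumed to be always present

    def active_device(self):
        return "vfio" if self.vfio_present else "virtio-net"

    def on_unplug_request(self):
        # The kernel unplugs the card and moves traffic to the standby.
        self.vfio_present = False

    def on_hotplug(self):
        # A new VFIO device appears and becomes the primary again.
        self.vfio_present = True


def migrate_with_failover(guest):
    """Return a log of (step, device carrying the traffic)."""
    log = [("source: normal operation", guest.active_device())]

    # The hypervisor sees the migration request and holds the migration
    # until the primary device has been unplugged.
    guest.on_unplug_request()
    log.append(("source: primary unplugged", guest.active_device()))

    # Live migration runs; the VM keeps running on the standby device.
    log.append(("migration in progress", guest.active_device()))

    # On the destination, the hypervisor hotplugs a new VFIO device.
    guest.on_hotplug()
    log.append(("destination: primary hotplugged", guest.active_device()))
    return log


if __name__ == "__main__":
    for step, device in migrate_with_failover(Guest()):
        print(f"{step:35} traffic on {device}")
```

At every step some device carries the traffic, which is the point of the design: the guest is never left without a network path during the migration.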

Summary

Virtio-net failover allows a hypervisor to migrate a VM with a VFIO device without interrupting the network connection. To reach this goal we need collaboration between the hypervisor and the guest kernel: the hypervisor unplugs the card, the guest kernel switches the network connection to the virtio-net device, and then they restore the original state on the destination host.

We’ll provide a more detailed description of the configuration and usage of the virtio-net failover in a future article.

About the author

Laurent Vivier is a senior software engineer at Red Hat and has been part of the Virtualization Team since 2015. After some time spent on the support of KVM on POWER, he is now working on networking virtualization.