Organizations are deploying edge infrastructure to run important applications closer to where they are used, while still wanting a single interface for administering everything from one location. You can do this using Red Hat Virtualization.

Planning

The first step in implementing an edge site is determining the specifications of the site, that is, how you define your edge site. In this post we’ll walk through the things you should consider when planning an edge site, and how to plan to manage and scale the site as your needs evolve. Questions to ask include:

- What will be the size of the site?

- Can the edge site have its own local storage?

- Can you afford to spend CPU cycles at the edge site on control processes? Or do you wish to allocate all of your CPU cycles to your workloads?

- Do you wish to contain your edge resources (storage, network, CPU) only within the edge site?

- How often will you scale your edge site?

Wouldn’t it be nice if scaling your edge site were just a matter of stacking and powering on your physical servers, with the rest of the configuration, such as automatic discovery, IP assignment, loading of the operating system, and loading of your chosen hypervisor, handled automatically from a centralized place?

It sounds like it could be complicated and difficult to implement, maintain, and scale. However, the good news is that you can achieve all of the above with the help of Red Hat Virtualization. Red Hat Virtualization is an enterprise-grade virtualization platform built on Red Hat Enterprise Linux.

Virtualization allows users to provision new virtual servers and workstations more easily and can provide more efficient use of physical server resources. With Red Hat Virtualization, you can manage your virtual infrastructure - including hosts, virtual machines, networks, storage, and users - from a centralized graphical user interface or RESTful API.

This post details one way to implement edge infrastructure in an easier and more scalable fashion than managing systems individually. The implementation addresses most of the edge characteristics highlighted above, but there are many ways you can implement your edge infrastructure. Which one you choose will depend on your answers to the planning questions above.

Assumptions

The edge implementation discussed in this post assumes that:

- This is one of several implementation options for edge use case deployments using Red Hat Virtualization.

- There is shared storage available at the centralized region where Red Hat Virtualization Manager will be hosted.

- There is local storage available at each of the edge sites, preferably in the form of Red Hat Gluster Storage. An NFS server or an attached disk is also acceptable.

- Storage networking can be implemented using IP or Fibre Channel and supports Network File System (NFS), Internet Small Computer System Interface (iSCSI), GlusterFS, Fibre Channel Protocol (FCP), or any POSIX compliant networked filesystem.

- Providing high availability for management requires two physical servers at the region.

- Connectivity between the region and the edge sites should be adequate. If latency is too high or throughput too low, Red Hat Virtualization Manager may have issues managing the nodes.

- In order to keep the edge sites isolated from each other, we create a Red Hat Virtualization Data Center at each of the edge sites so that resources like network and storage are contained within the site.

- This implementation assumes there is no requirement to live migrate VMs from one edge site to another. Hence, edge sites are assumed to be independent of each other.

In this implementation, we are defining “region” as a centralized place from where an administrator would like to monitor and maintain their edge sites. In other words, the “region” is a place where our Red Hat Virtualization Manager lives. This could be at the central office, or at a regional data center.
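
To make the layout concrete, here is a minimal Python sketch of the topology these assumptions describe: one shared-storage data center at the region and one data center per edge site. The `DataCenter` class and the helper function are illustrative only and are not part of any Red Hat Virtualization API. The names “Default,” “east-data-center,” and “rhv-central.example.com” come from the example used in this post, while the edge host names and the west site are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class DataCenter:
    """Illustrative model of one data center (not an RHV API object)."""
    name: str
    storage_type: str  # "shared" at the region, "local" at a single-host edge site
    hosts: list = field(default_factory=list)


def hosts_in_multiple_data_centers(data_centers):
    """Return hosts that are listed under more than one data center."""
    owner = {}
    duplicates = set()
    for dc in data_centers:
        for host in dc.hosts:
            # setdefault records the first owner and returns it on later lookups.
            if owner.setdefault(host, dc.name) != dc.name:
                duplicates.add(host)
    return sorted(duplicates)


# Region: hosts Red Hat Virtualization Manager on shared storage.
region = DataCenter("Default", "shared", ["rhv-central.example.com"])
# Edge sites: one data center each. Host names below are hypothetical.
east = DataCenter("east-data-center", "local", ["east-host1.example.com"])
west = DataCenter("west-data-center", "local", ["west-host1.example.com"])

topology = [region, east, west]
```

An empty result from `hosts_in_multiple_data_centers(topology)` is a quick sanity check that no host has been planned into two sites, which is what keeps the edge sites independent of each other.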

Red Hat Virtualization Manager and edge design

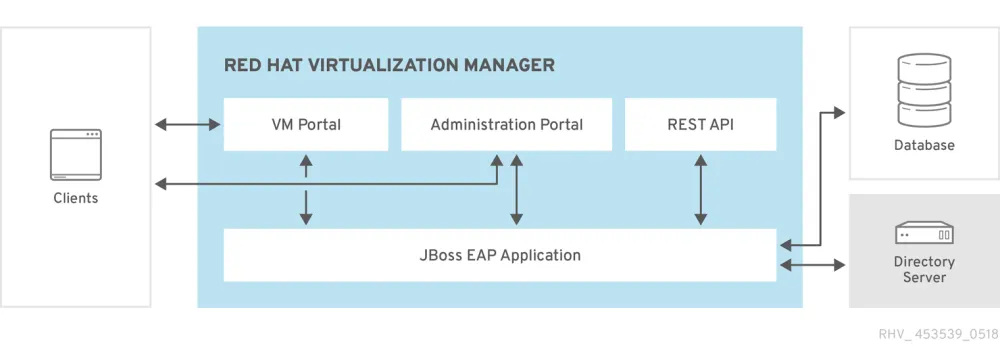

So, what is Red Hat Virtualization Manager? The Red Hat Virtualization Manager provides centralized management for a virtualized environment. A number of different interfaces can be used to access the Red Hat Virtualization Manager. Each interface facilitates access to the virtualized environment in a different manner.

The Red Hat Virtualization Manager provides graphical interfaces and an Application Programming Interface (API). Red Hat Virtualization Manager will reside in the region and will be hosted on two physical hosts for high availability (HA) purposes. The recommended hardware requirements can be found in the Red Hat Virtualization Manager installation guide.

For the edge site, if the operator wishes to have only one host then local storage (attached to the host) can be used. The caveat to using local storage is that live migration cannot occur, which also means that you will have no high availability. However, if the operator wishes to have more than one host in the edge site then it is advisable to use external storage like Gluster. This will allow for HA and live migration within the edge site. Configuring external storage at the edge site is out of scope for this discussion.

This screenshot shows the storage type as “shared,” which is allocated to the region. Note it is marked as “Default.”

The next screenshot demonstrates storage used at an edge site. It is marked as “east-data-center” and storage type is marked as “Local.”

In this example we’re only demonstrating a single host at the edge site and using its local attached disk for storage. Next, we have a screenshot of Red Hat Virtualization Manager (hosted engine) which is in the region. In this example we are using a single host in the region, which is also hosting Red Hat Virtualization Manager.

For high availability purposes we recommend having two physical hosts and shared storage, but this demonstrates that you can work with a single host. The hosted engine runs as a virtual machine on host “rhv-central.example.com” and is attached to the data center named “Default.”
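
The storage guidance for edge sites can be summarized as a small decision rule. The function below is only a sketch of that rule in Python; the function name and the returned keys are made up for illustration, and it encodes the advice from this post rather than any product limit.

```python
def plan_edge_storage(host_count):
    """Suggest a storage layout for an edge site based on its host count."""
    if host_count < 1:
        raise ValueError("an edge site needs at least one host")
    if host_count == 1:
        # Single host: locally attached disk is enough, but there is
        # nowhere to migrate to, so no live migration and no HA.
        return {"storage": "local", "live_migration": False, "high_availability": False}
    # Multiple hosts: external storage (for example Gluster) lets VMs
    # move between hosts inside the site.
    return {"storage": "external", "live_migration": True, "high_availability": True}


single_host_site = plan_edge_storage(1)
multi_host_site = plan_edge_storage(3)
```

In the example in this post, “east-data-center” corresponds to the single-host case.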

Finally, let’s talk about the networking. The administrator has to confirm that there is enough network bandwidth available between the region and the edge sites to carry the ovirtmgmt network. Note that ovirtmgmt can be used to carry management, display, and/or migration traffic (if applicable).

By default, one logical network is defined during the installation of the Red Hat Virtualization Manager: the ovirtmgmt management network. This screenshot shows the ovirtmgmt network in the “Default” Data Center, which is the “region” in our case.

Note that in this example I’m not using bonding on the interfaces. It is, however, advisable to bond the two interfaces together if possible. Bonded network interfaces combine the transmission capability of the network interface cards included in the bond to act as a single network interface, so they may provide greater transmission speed than a single network interface card.

Also, a bonded interface can remain up unless all network interface cards in the bond fail, so bonding provides better fault tolerance. One limitation is that the network interface cards that form a bonded network interface must be of the same make and model so that all network interface cards in the bond support the same options and modes.

There will be three types of networks flowing through each of the edge sites:

- ovirtmgmt

- Logical networks (as many as the workloads require)

- Storage network (IP based)

This screenshot captures the logical network and ovirtmgmt network flowing through the data center “east-data-center.”

You can see that the networks are bound to different NICs. All of this may be customized per the workload’s requirements.
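
The two bonding properties described earlier, that member NICs should match in make and model and that the bond stays up until every member fails, can be sketched in a few lines of Python. This is a planning aid only and does not configure anything; the `Nic` class, both functions, and the vendor and model strings are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Nic:
    name: str
    make: str
    model: str


def can_bond(nics):
    """True if there are at least two NICs and all share one make and model."""
    return len(nics) >= 2 and len({(n.make, n.model) for n in nics}) == 1


def bond_is_up(link_up_by_nic):
    """A bond stays up as long as at least one member NIC still has link."""
    return any(link_up_by_nic.values())


# Hypothetical inventory for one edge host.
eth0 = Nic("eth0", "VendorA", "X1")
eth1 = Nic("eth1", "VendorA", "X1")
eth2 = Nic("eth2", "VendorB", "Y2")

matching_pair_ok = can_bond([eth0, eth1])       # same make and model
mixed_pair_ok = can_bond([eth0, eth2])          # different make and model
one_link_lost = bond_is_up({"eth0": False, "eth1": True})
```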

Overall this is what the implementation will look like:

When an administrator spins up a VM from the centralized region, this is how it will look. In this case, “east-vm1” is the name of the VM running in Data Center “east-data-center” and cluster “east-data-center.”

This is how the VM will attach to the logical network “east-traffic1.” Also look at the volume attached to this VM.

Clarifications

This particular implementation of Red Hat Virtualization differs slightly from Red Hat Hyperconverged Infrastructure because:

- Red Hat Virtualization Manager in this implementation sits at a centralized place (the region) and is not present at each of the edge sites, unlike in Red Hat Hyperconverged Infrastructure for Remote Office Branch Office (ROBO) cases.

- Because of its centralized location, Red Hat Virtualization Manager handles management and scaling for all of the edge sites. Most importantly, it does not put any extra burden on edge site resources such as CPU cycles. To sum up, edge site resources are only used for hosting edge use cases.

- Red Hat Virtualization Manager is utilizing its own shared storage at the centralized location, at the region level.

- This implementation is highly scalable - you can keep adding edge sites up to the limit of what a single Red Hat Virtualization Manager can handle.

Conclusions

As you can see from the post, with this design one can implement an edge deployment with less complexity. You don’t have to allocate extra physical servers at the edge site for Red Hat Virtualization Manager, which means you can dedicate your CPU cycles to your workloads. If required by your workloads, you can enable fast data path features that Red Hat Virtualization offers out of the box, like single root input/output virtualization (SR-IOV), non-uniform memory access (NUMA), and CPU pinning, directly on your edge sites.

From the scaling perspective, you can add more edge sites on the go as demand increases or your business expands. You can rack, stack, and power on your physical servers at new or existing edge sites. Once they are on, all you have to do is create a new data center (if adding a new site) and add those hosts to the required cluster. Red Hat Virtualization Manager takes care of the rest and makes them available for your workloads.
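
Since Red Hat Virtualization Manager also exposes a RESTful API, the two scaling steps (create a data center for a new site, then add hosts to a cluster) can be scripted. The Python sketch below only builds the requests and does not send them. The Manager URL, site and host names, and password are hypothetical. The endpoint paths and XML element names reflect my understanding of the version 4 REST API and should be checked against the REST API guide for your release. Creating the cluster is left out, so the cluster is assumed to exist already.

```python
import xml.etree.ElementTree as ET

API_BASE = "https://rhvm.example.com/ovirt-engine/api"  # hypothetical Manager URL


def data_center_request(name, local):
    """Build (method, url, body) for creating a data center."""
    root = ET.Element("data_center")
    ET.SubElement(root, "name").text = name
    ET.SubElement(root, "local").text = "true" if local else "false"
    return ("POST", f"{API_BASE}/datacenters", ET.tostring(root, encoding="unicode"))


def host_request(name, address, cluster, root_password):
    """Build (method, url, body) for adding a host to an existing cluster."""
    root = ET.Element("host")
    ET.SubElement(root, "name").text = name
    ET.SubElement(root, "address").text = address
    ET.SubElement(root, "root_password").text = root_password
    ET.SubElement(ET.SubElement(root, "cluster"), "name").text = cluster
    return ("POST", f"{API_BASE}/hosts", ET.tostring(root, encoding="unicode"))


def scale_out(site, hosts, cluster, new_site, local_storage, root_password):
    """Return the ordered requests needed to bring new hosts into a site."""
    requests = []
    if new_site:
        # A new edge site gets its own data center first.
        requests.append(data_center_request(site, local_storage))
    for host in hosts:
        requests.append(host_request(host, host, cluster, root_password))
    return requests


# Hypothetical new single-host site using local storage.
plan = scale_out(
    site="west-data-center",
    hosts=["west-host1.example.com"],
    cluster="west-data-center",
    new_site=True,
    local_storage=True,
    root_password="changeme",
)
```

Each tuple in `plan` could then be sent with the HTTP client of your choice, using the Manager’s credentials and CA certificate.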

Red Hat Virtualization can be used to handle a range of workloads, topology designs, and customized use cases involving our certified partners. If you’re interested in learning more about Red Hat Virtualization for edge workloads, contact our Red Hat sales team for more information.
