As organizations embrace agentic AI for cluster operations, the central challenge shifts from whether AI can control a cluster to whether it can do so safely and with accountability. How do we give large language models (LLMs) meaningful context and operational capability within our clusters without compromising security or relying on brittle, script-based wrappers?

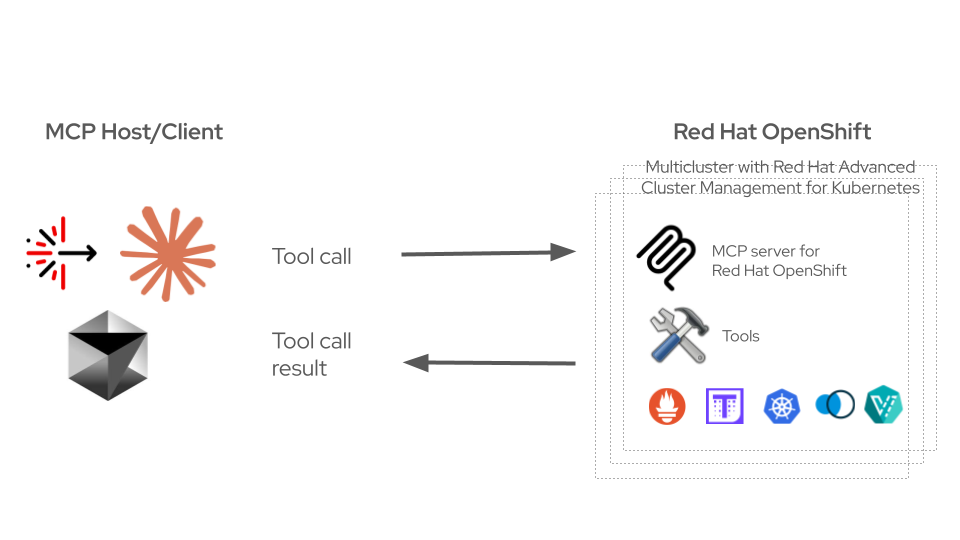

To address this challenge, Red Hat has introduced the Model Context Protocol (MCP) server for Red Hat OpenShift, available as a technology preview. MCP is an open source standard for connecting AI applications to external data and tools. The MCP server for Red Hat OpenShift uses MCP to give LLM agents controlled access to OpenShift clusters, so your agents can interact with those clusters safely and intelligently, following rules you define.

MCP server for Red Hat OpenShift

The MCP server for Red Hat OpenShift introduces a suite of capabilities designed to bring AI-driven operations to the enterprise, focusing on security, observability, and scale.

Architected for fleet-wide scale

Platform engineers rarely manage single clusters. To support fleets of OpenShift clusters, the MCP server natively supports multicluster operations. Instead of relying on static, distributed kubeconfig files, the server introduces OAuth and OIDC integration, using token exchange protocols. Keycloak is currently supported. With this architecture, the MCP server can reside on a Red Hat Advanced Cluster Management for Kubernetes hub, and authenticated agents can query and act on managed OpenShift clusters dynamically and more safely, without requiring impersonation flows, while maintaining strict identity verification across the fleet. A short demo of token exchange with the MCP server for Red Hat OpenShift accompanies this post.
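To make the token exchange step more concrete, here is a minimal sketch of what an exchange request could look like. It assumes the exchange follows OAuth 2.0 Token Exchange (RFC 8693) against Keycloak's OIDC token endpoint. The host, realm, client ID, and audience values are hypothetical placeholders, and the sketch only builds the request rather than sending it. It is not the MCP server's own implementation.

```python
from urllib.parse import urlencode

# Standard identifiers defined by RFC 8693 (OAuth 2.0 Token Exchange).
TOKEN_EXCHANGE_GRANT = "urn:ietf:params:oauth:grant-type:token-exchange"
ACCESS_TOKEN_TYPE = "urn:ietf:params:oauth:token-type:access_token"


def token_endpoint(base_url, realm):
    """Return the OIDC token endpoint for a Keycloak realm.

    Assumes the path layout used by recent Keycloak releases; older
    deployments may prefix the path with /auth.
    """
    return f"{base_url.rstrip('/')}/realms/{realm}/protocol/openid-connect/token"


def build_exchange_form(subject_token, audience, client_id):
    """Build the form fields for an RFC 8693 token exchange request.

    subject_token: the token the agent already holds.
    audience:      the target the new token should be valid for
                   (hypothetical: a name identifying a managed cluster).
    client_id:     the OAuth client performing the exchange. Client
                   authentication (for example a secret) is omitted here.
    """
    return {
        "grant_type": TOKEN_EXCHANGE_GRANT,
        "subject_token": subject_token,
        "subject_token_type": ACCESS_TOKEN_TYPE,
        "requested_token_type": ACCESS_TOKEN_TYPE,
        "audience": audience,
        "client_id": client_id,
    }


if __name__ == "__main__":
    # All values below are placeholders for illustration only.
    form = build_exchange_form("<agent-access-token>", "managed-cluster-1", "mcp-server")
    print("POST", token_endpoint("https://keycloak.example.com", "openshift"))
    print(urlencode(form))
```

The point of the exchange is that the agent's identity travels with each request: the token presented to a managed cluster is derived from the caller's own token, not from a shared kubeconfig credential.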

RBAC and auditability

In addition to the OAuth and OIDC integration, the MCP server for Red Hat OpenShift does not attempt to reinvent Kubernetes security. Instead, it enforces it through:

- Read-only by default: The MCP server's modular toolset architecture defaults to read-only operations. Write capabilities must be explicitly enabled, and administrators can entirely disable destructive operations via configuration flags.

- Role-based access control (RBAC) enforcement and resource denial: The MCP server respects standard Kubernetes RBAC. Additionally, administrators can explicitly block the server from interacting with sensitive resources, such as Secrets or ConfigMaps, using the denied_resources configuration block.

- Audit trail: To maintain visibility for platform teams, the server injects a specific user-agent string into Kubernetes API calls. This helps ensure that API server audit logs clearly distinguish actions initiated by an AI agent from those initiated by a human operator.
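As an illustration of how the audit trail could be used, the sketch below partitions Kubernetes audit events by their `userAgent` field and counts verbs per resource. It assumes audit logs in the JSON-lines format of the Kubernetes audit backend. The marker string `mcp-server` is a hypothetical stand-in, since the exact user-agent the server sends is not given here, and the sample events are invented for the demonstration.

```python
import json
from collections import Counter

# Hypothetical marker: replace with the user-agent substring that actually
# appears in your audit logs for requests made through the MCP server.
AGENT_UA_MARKER = "mcp-server"


def split_by_origin(audit_lines, marker=AGENT_UA_MARKER):
    """Partition audit events into (agent_events, other_events).

    Each input line is one JSON-encoded audit event. An event is
    attributed to the agent when its userAgent contains the marker.
    """
    agent, other = [], []
    for line in audit_lines:
        line = line.strip()
        if not line:
            continue  # skip blank lines between events
        event = json.loads(line)
        user_agent = event.get("userAgent", "")
        (agent if marker in user_agent else other).append(event)
    return agent, other


def verbs_by_resource(events):
    """Count events per (verb, resource) pair, using '?' for missing fields."""
    counts = Counter()
    for event in events:
        ref = event.get("objectRef") or {}
        counts[(event.get("verb", "?"), ref.get("resource", "?"))] += 1
    return counts


if __name__ == "__main__":
    # Invented sample events, trimmed to the fields this sketch reads.
    sample = [
        '{"verb": "list", "userAgent": "mcp-server/0.1", "objectRef": {"resource": "pods"}}',
        '{"verb": "delete", "userAgent": "oc/4.17", "objectRef": {"resource": "pods"}}',
    ]
    agent_events, other_events = split_by_origin(sample)
    print("agent:", dict(verbs_by_resource(agent_events)))
    print("other:", dict(verbs_by_resource(other_events)))
```

A report like this gives platform teams a quick way to check whether agent activity stays within the read-only defaults, for example by looking for write verbs among the agent-attributed events.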

Deep observability and telemetry

OpenTelemetry support is fully integrated into the MCP server and exposes granular metrics and distributed traces directly to OpenShift monitoring. The MCP server also exposes observability toolsets directly to the LLM:

- Thanos and Prometheus: Agents can execute both instant and range PromQL queries to analyze historical trends and real-time metrics. As this is a technology preview, comprehensive evaluations of these tools are planned but not yet available.

- Red Hat OpenShift Service Mesh (Kiali) integration: The MCP server exposes Kiali-backed tools so agents can move from high-level mesh and namespace health to per-service and per-workload signals, including traces, metrics, and logs, plus Istio configuration when the task needs to explain or change mesh behavior.

- Node-level metrics: Tools like nodes_stats_summary expose the kubelet's summary API, granting agents access to cgroup v2 Pressure Stall Information (PSI) for CPU, memory, and I/O.
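The difference between instant and range PromQL queries can be sketched against the standard Prometheus HTTP API, which Thanos Querier also implements. The sketch below only builds request URLs. The base URL and the example query are placeholders, and the MCP tools' own parameter names may differ from the HTTP API parameters shown here.

```python
from urllib.parse import urlencode


def instant_query_url(base_url, promql, at=None):
    """URL for an instant query: one evaluation at a single point in time.

    at: optional Unix timestamp in seconds; when omitted, the server
        evaluates the query at its current time.
    """
    params = {"query": promql}
    if at is not None:
        params["time"] = at
    return f"{base_url.rstrip('/')}/api/v1/query?{urlencode(params)}"


def range_query_url(base_url, promql, start, end, step_seconds):
    """URL for a range query: repeated evaluations between start and end.

    start, end:   Unix timestamps in seconds.
    step_seconds: resolution, meaning the spacing between evaluations.
    """
    if end <= start:
        raise ValueError("end must be later than start")
    if step_seconds <= 0:
        raise ValueError("step_seconds must be positive")
    params = {"query": promql, "start": start, "end": end, "step": step_seconds}
    return f"{base_url.rstrip('/')}/api/v1/query_range?{urlencode(params)}"


if __name__ == "__main__":
    base = "https://thanos-querier.example.com"  # placeholder host
    query = "sum(rate(container_cpu_usage_seconds_total[5m])) by (namespace)"
    # Real-time view: a single sample per series.
    print(instant_query_url(base, query))
    # Historical trend: one hour of samples at one-minute resolution.
    print(range_query_url(base, query, start=1700000000, end=1700003600, step_seconds=60))
```

An instant query suits questions like "what is the value right now", while a range query returns a time series suited to trend analysis, at the cost of more data per request.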

Extensible infrastructure management

Beyond core Kubernetes resources, the MCP server ships with multiple toolsets and custom prompts designed for complex infrastructure management across the Red Hat OpenShift ecosystem, including:

- Helm: Helm chart and release management.

- NetEdge: Troubleshooting for DNS and ingress, as well as monitoring for debugging connectivity and routing issues.

- OpenShift Service Mesh (kiali): Management tools covering mesh topology and health, Istio configuration management, resource and workload detail, metrics, logs, and traces, plus pod performance against requests and limits.

- Red Hat OpenShift Virtualization (kubevirt): VM lifecycle management and troubleshooting. This toolset is available as a developer preview.

- Prometheus: Prometheus tools for querying cluster metrics and alerts directly. As this is a technology preview, comprehensive evaluations of these tools are planned but not yet available.

If you’ve got a fleet of MCP servers, read about the MCP gateway to see how to federate, govern, and control AI agent traffic at scale.

Evaluations

Quality evaluation has been a top concern from the very start. For tool quality, we used mcpchecker, an open source integration testing framework that validates MCP servers by running AI agents against them. It verifies that tools execute across a variety of scenarios, and that they are discoverable and usable across a suite of different LLMs and agents, so that agents can use these tools successfully.

Try MCP server for Red Hat OpenShift today!

The technology preview is available now at Model Context Protocol (MCP) server for Kubernetes and OpenShift. Follow the instructions in the MCP server for Red Hat OpenShift User Guide to get started. Connect it to your AI client of choice, and start exploring what agentic cluster operations actually feel like in practice.

MCP lifecycle operator

In addition to the MCP server for Red Hat OpenShift, we’re also releasing the MCP lifecycle operator as a developer preview. The operator makes it easy to deploy, run, and manage MCP servers in OpenShift and Kubernetes. Learn more about how the MCP lifecycle operator will handle the full lifecycle of MCP servers with production-grade automation and ecosystem integrations.

We'd love your feedback, whether it's a bug, a missing tool, or an idea for what comes next.

Join the conversation in the OpenShift Commons Slack #general channel, open a GitHub issue, or send a pull request.

About the authors

Calum Murray is a Software Engineer focused on Applied AI initiatives for OpenShift. He specializes in building at the intersection of AI and cloud-native infrastructure, including the MCP server for Red Hat OpenShift, MCP evaluations, and Agent Skill evaluations. Previously, he focused on developing OpenShift Serverless.

Calum is an active open source community leader, serving as a Cloud Native Computing Foundation (CNCF) Ambassador and project maintainer.

Ju Lim works on the core Red Hat OpenShift Container Platform for hybrid and multi-cloud environments to enable customers to run Red Hat OpenShift anywhere. Ju leads the product management teams responsible for installation, updates, provider integration, and cloud infrastructure.
