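The AI agent landscape is complex. Teams use LangChain, LlamaIndex, CrewAI, or AutoGen, or they build custom solutions from scratch. That is a good thing: it is how the creative phase should work. But once an agent leaves a developer's laptop and starts touching real production data, calling external application programming interfaces (APIs), or running on shared infrastructure, unrestricted freedom stops being an advantage and becomes a risk.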

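The industry has moved through several phases: model APIs (for example, chat completions), agent APIs (for example, Assistants and, later, the OpenAI Responses API), the framework era, and now the era of harnesses and coding agents. The top layer keeps changing and is becoming increasingly fungible. What has not changed is the gap between "it works on my laptop" and "it runs in production safely, at scale, with an audit trail."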

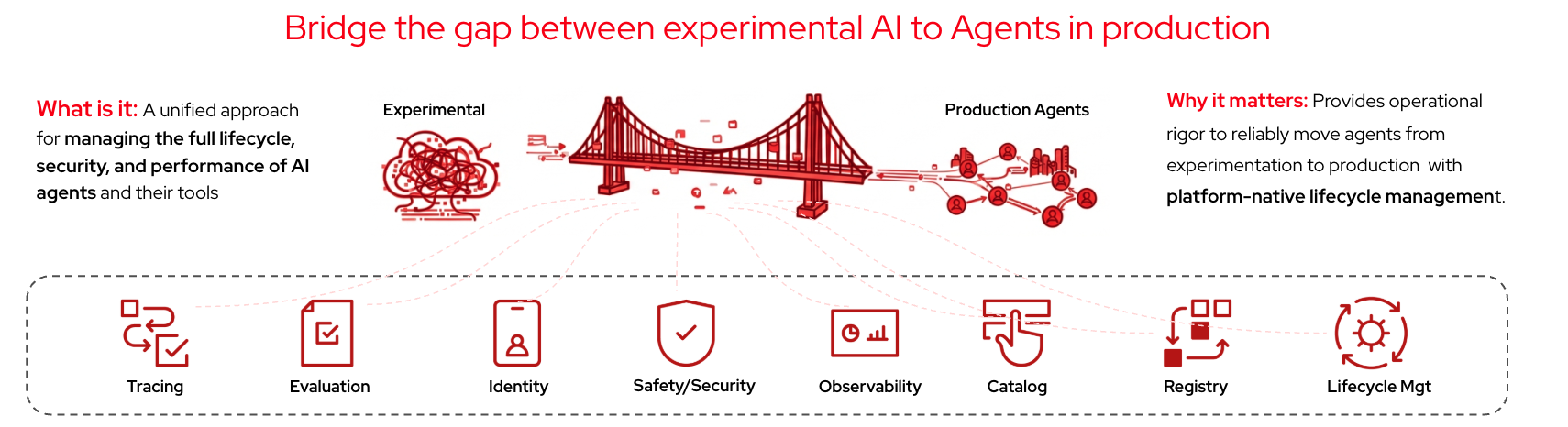

Red Hat's AgentOps strategy rests on one core principle: bring your own agent (BYOA). The platform is framework-agnostic. What matters is that the agent has an identity, runs with least privilege, is observed, passes safety checks, and can be audited after it runs. The platform provides security, governance, observability, and lifecycle management. The agent, however, remains yours.

What Red Hat AI provides

This series previews how BYOA works in practice, covering both what is available today and what we are building next.

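Red Hat AI runs OpenClaw, a personal AI assistant that routes agent interactions from multiple channels (WhatsApp, Telegram, Slack, Discord, and others) through a central WebSocket gateway. Red Hat does not simply wrap it in a framework of its own; it builds on platform infrastructure instead. OpenClaw is just one example, and the same approach applies to any agent runtime.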

Out of the box, OpenClaw does little sandboxing. It does not enforce role-based access control (RBAC), trace tool calls, or gate access to external services. Red Hat AI adds each of these layers with native capabilities of Red Hat OpenShift and Red Hat OpenShift AI, without changing the agent's code. The rest of this post describes each layer in turn.

From cloud-native to agent-native

Red Hat is not building agent infrastructure from scratch. Instead, it reuses the tools and deployment patterns OpenShift already has and extends them to agentic workloads through Red Hat AI.

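Agents need isolation: OpenShift sandboxed containers (based on the Kata Containers project and available as a GA layered product), together with the upcoming agent sandbox integration, give each agent session its own kernel-isolated execution environment. This protects the host, and other agents' data, from a compromised agent.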
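As a rough illustration of per-session isolation at the Kubernetes level, the Python sketch below builds a Pod manifest that selects a Kata-based RuntimeClass. It assumes the RuntimeClass is named kata, which is the name the OpenShift sandboxed containers operator commonly creates. The image reference is a placeholder, and the sketch does not model the upcoming agent sandbox integration.

```python
import json


def sandboxed_agent_pod(session_id: str, image: str, runtime_class: str = "kata") -> dict:
    """Return a Pod manifest that runs a single agent session in its own sandbox.

    Assumption: the cluster exposes a RuntimeClass named "kata", which is the
    name the OpenShift sandboxed containers operator commonly creates.
    """
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {
            "name": f"agent-session-{session_id}",
            "labels": {"app": "agent", "agent-session": session_id},
        },
        "spec": {
            # The Kata runtime starts the pod inside a lightweight VM with its own
            # guest kernel, so a compromised agent does not share the host kernel.
            "runtimeClassName": runtime_class,
            "containers": [
                {
                    "name": "agent",
                    "image": image,
                    # Defense in depth inside the sandbox as well.
                    "securityContext": {
                        "allowPrivilegeEscalation": False,
                        "runAsNonRoot": True,
                        "capabilities": {"drop": ["ALL"]},
                    },
                }
            ],
        },
    }


# One Pod per session; the image reference is a hypothetical placeholder.
print(json.dumps(sandboxed_agent_pod("abc123", "registry.example.com/openclaw-agent:latest"), indent=2))
```

Creating one such Pod per session is what keeps one session's compromise from reaching another session's memory or files.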

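Agents need identity: SPIFFE/SPIRE provides cryptographic workload identity, and scoped service account tokens supplement it. There are no hardcoded keys. Instead, the platform verifies the agent and injects short-lived, scoped service account tokens at the platform level. This capability is part of Red Hat AI and is planned to ship with the Kagenti integration for the agent lifecycle.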
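The flow described above can be sketched in plain Python: the platform verifies the workload and then hands it a short-lived, scoped credential. This is not the SPIRE API. WorkloadToken, issue, and accept are hypothetical names, and the five-minute TTL is an arbitrary example. A real deployment would obtain SVIDs from the SPIRE agent's Workload API rather than minting them in-process. What the sketch does show is the SPIFFE ID format (spiffe://trust-domain/path) and the three checks a relying service makes: trust domain, audience, and expiry.

```python
import time
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass(frozen=True)
class WorkloadToken:
    spiffe_id: str     # e.g. spiffe://example.org/ns/agents/sa/openclaw (illustrative)
    audience: str      # the service this token is scoped to
    expires_at: float  # Unix timestamp; short-lived by design


def parse_spiffe_id(spiffe_id: str) -> tuple:
    """Split a SPIFFE ID into (trust_domain, path), rejecting malformed IDs."""
    parsed = urlparse(spiffe_id)
    if parsed.scheme != "spiffe" or not parsed.netloc or not parsed.path:
        raise ValueError(f"not a SPIFFE ID: {spiffe_id!r}")
    if parsed.query or parsed.fragment or parsed.port is not None:
        raise ValueError(f"SPIFFE IDs may not carry a query, fragment, or port: {spiffe_id!r}")
    return parsed.netloc, parsed.path


def issue(spiffe_id: str, audience: str, ttl_seconds: int = 300, now: float = None) -> WorkloadToken:
    """Hypothetical issuer: mint a token that expires ttl_seconds from now."""
    parse_spiffe_id(spiffe_id)  # refuse to issue for a malformed identity
    now = time.time() if now is None else now
    return WorkloadToken(spiffe_id, audience, now + ttl_seconds)


def accept(token: WorkloadToken, trust_domain: str, audience: str, now: float = None) -> bool:
    """Accept only unexpired tokens from the expected trust domain and audience."""
    now = time.time() if now is None else now
    domain, _path = parse_spiffe_id(token.spiffe_id)
    return domain == trust_domain and token.audience == audience and now < token.expires_at
```

Because the token is bound to one audience and expires quickly, a leaked token is useful only briefly and only against one service.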

Agents need multitenancy: Namespace isolation, NetworkPolicy, and ResourceQuota provide it, and Red Hat verifies that these boundaries hold under adversarial testing.
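The following is a minimal sketch of the per-tenant boundary objects, expressed as Python dictionaries that you could serialize and apply. The namespace name, object names, and quota values are illustrative assumptions. A real setup would add narrowly scoped allow policies on top of this default deny, for example for DNS and for egress to the MCP Gateway.

```python
import json


def tenant_guardrails(namespace: str, cpu: str = "4", memory: str = "8Gi", max_pods: int = 10) -> list:
    """Return baseline isolation objects for one agent tenant namespace."""
    deny_all = {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": "default-deny-all", "namespace": namespace},
        "spec": {
            # An empty podSelector selects every pod in the namespace. Declaring
            # both policy types without any rules denies all ingress and egress.
            "podSelector": {},
            "policyTypes": ["Ingress", "Egress"],
        },
    }
    quota = {
        "apiVersion": "v1",
        "kind": "ResourceQuota",
        "metadata": {"name": "agent-quota", "namespace": namespace},
        # Caps total consumption so one tenant's agents cannot starve another's.
        "spec": {"hard": {"limits.cpu": cpu, "limits.memory": memory, "pods": str(max_pods)}},
    }
    return [deny_all, quota]


for obj in tenant_guardrails("team-a-agents"):
    print(json.dumps(obj))
```

Because the quota constrains limits.cpu and limits.memory, pods in the namespace must declare those limits, or inherit defaults from a LimitRange, to be admitted.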

Agents need policy guardrails across multiple layers: OPA/Gatekeeper and Kyverno policies apply at the Kubernetes level, and the Model Context Protocol (MCP) Gateway handles tool-level authorization. At the model inference boundary, Guardrails Orchestrator and NeMo Guardrails apply (more on these in the next section).

Making agents safer and production-ready

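For many enterprise teams, safety has become the biggest obstacle to putting agents and models into production. Not performance. Not cost. Trust. Enterprises need confidence that their AI complies with regulations and protects them from brand and legal risk, especially when it serves external users.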

Red Hat AI takes a safety approach that spans the full lifecycle. It covers detection, testing, and risk mitigation before production, and it defends against emerging threats at runtime.

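Before an agent goes live: Garak (planned as part of Red Hat AI) provides model-level adversarial vulnerability scanning for jailbreaks, prompt injection, and other attack vectors. Integration through the TrustyAI operator is planned. Garak can be used through EvalHub (the evaluation control plane) and Kubeflow Pipelines, so that adversarial scans run in CI/CD before a model is promoted to production.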
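To make the "scan before promotion" step concrete, here is a hypothetical CI gate. It consumes summarized scan results and decides whether a build may be promoted. The ProbeResult shape and the 5% threshold are assumptions for illustration. They are not Garak's report format, so in practice you would first parse Garak's own output into something like this.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProbeResult:
    probe: str     # name of the attack category that was exercised
    attempts: int  # number of adversarial prompts sent
    failures: int  # attempts where the model exhibited the unwanted behavior


def promotion_gate(results: list, max_failure_rate: float = 0.05) -> tuple:
    """Return (promote?, blocking_probes) for a candidate model or agent.

    A probe blocks promotion if its failure rate exceeds the threshold, or if it
    produced no attempts at all (an untested category is treated as a failure).
    """
    blocking = []
    for r in results:
        # Short-circuiting on attempts == 0 also avoids dividing by zero.
        if r.attempts == 0 or r.failures / r.attempts > max_failure_rate:
            blocking.append(r.probe)
    return (len(blocking) == 0, blocking)
```

A pipeline step would call promotion_gate and fail the stage whenever the first element is False. The list of blocking probes then tells the team which attack category to investigate.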

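At runtime (guardrails at the inference boundary): The TrustyAI Guardrails Orchestrator (generally available since OpenShift AI 3.0) inspects model inputs and outputs. NeMo Guardrails (Technology Preview) adds programmable conversational guardrails. Both operate at the inference boundary. They intercept individual LLM calls and check each response for safety before it reaches the agent. In addition, a model risk view that is coming to the model catalog will surface these safety signals alongside model metadata, so teams can weigh use-case-specific risk when they choose a model.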
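The inference-boundary pattern can be sketched as a wrapper around any model call: check the input, call the model, check the output, and only then hand the response to the agent. This is a stand-in, not the Guardrails Orchestrator or NeMo Guardrails API. The two regex detectors are deliberately simple placeholders for the classifier-based detectors a real deployment would use.

```python
import re
from typing import Callable, List

# A detector takes text and returns the names of any rules it triggered.
Detector = Callable[[str], List[str]]


def regex_detector(name: str, pattern: str) -> Detector:
    compiled = re.compile(pattern)

    def detect(text: str) -> List[str]:
        return [name] if compiled.search(text) else []

    return detect


class GuardrailViolation(Exception):
    pass


# Placeholder detectors (illustrative only).
PROMPT_INJECTION = regex_detector("prompt_injection", r"(?i)ignore (all )?previous instructions")
SSN_LIKE = regex_detector("ssn_like", r"\b\d{3}-\d{2}-\d{4}\b")


def guarded_call(llm: Callable[[str], str], prompt: str,
                 input_detectors: List[Detector], output_detectors: List[Detector]) -> str:
    """Run detectors on the prompt, call the model, then run detectors on the reply."""
    hits = [h for d in input_detectors for h in d(prompt)]
    if hits:
        raise GuardrailViolation(f"input blocked: {hits}")
    response = llm(prompt)
    hits = [h for d in output_detectors for h in d(response)]
    if hits:
        raise GuardrailViolation(f"output blocked: {hits}")
    return response  # only now does the agent see the model's answer
```

The agent never talks to the model directly. Every call goes through guarded_call, so a blocked input never reaches the model and a blocked output never reaches the agent.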

Observability, tracing, and evaluation

Agents are stochastic, so without execution traces you cannot debug them, or trust them, in production.

Red Hat AI provides the foundation for agent observability through its support for MLflow. This support is currently in developer preview, with general availability (GA) planned for a future release.

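MLflow Tracing captures prompts, reasoning steps, tool calls, LLM API requests, and token costs. All traces are OpenTelemetry-compatible, so you can send them to any OTel-compatible sink. Beyond tracing, MLflow's scoring and evaluation features let you assess agent quality over time. These workflows will be invocable through EvalHub, the evaluation control plane that is part of OpenShift AI.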
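To show the shape of the data a trace carries, here is a stdlib-only sketch of nested spans. The spans share a trace ID and record parent links and attributes, loosely following the OpenTelemetry span model. This is not MLflow's API. The names span, FINISHED, and run_agent are hypothetical, and FINISHED stands in for an exporter that would ship spans to an OTel-compatible sink.

```python
import time
import uuid
from contextlib import contextmanager
from contextvars import ContextVar

_current_span = ContextVar("current_span", default=None)
FINISHED = []  # stand-in for an exporter that would send spans to a sink


@contextmanager
def span(name: str, **attributes):
    """Open a span as a child of whatever span is currently active."""
    parent = _current_span.get()
    record = {
        "trace_id": parent["trace_id"] if parent else uuid.uuid4().hex,  # 128-bit
        "span_id": uuid.uuid4().hex[:16],                                # 64-bit
        "parent_id": parent["span_id"] if parent else None,
        "name": name,
        "attributes": dict(attributes),
        "start": time.time(),
    }
    token = _current_span.set(record)
    try:
        yield record
    finally:
        record["end"] = time.time()
        _current_span.reset(token)
        FINISHED.append(record)  # spans are recorded as they finish


def run_agent(question: str) -> str:
    with span("agent.run", question=question):
        with span("llm.call", model="example-model") as llm_span:
            answer = f"echo: {question}"  # stand-in for a real model call
            llm_span["attributes"]["tokens.prompt"] = len(question.split())
        with span("tool.call", tool="search_docs"):
            pass  # stand-in for a real tool invocation
        return answer
```

Each span points to its parent and shares the trace ID, so a single agent run can be reconstructed as a tree: the LLM call and the tool call both hang under agent.run.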

Managing tool calls at scale

Agents call tools. The question is how to govern those calls.

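The MCP Gateway, currently in developer preview, was developed with the OpenShift networking team and is built on Envoy. It sits in front of all MCP servers and acts as a single, secure endpoint. The gateway adds three capabilities. Identity-based tool filtering ensures agents see only the tools they are approved for. OAuth2 token exchange gives scoped access to each backend. Credential management ensures sensitive tool calls go through the appropriate authorization checks. The platform enforces access control and the application manages its credentials, so credentials do not leak from one server to another.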
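Identity-based tool filtering can be pictured as a function that maps validated token claims to the subset of the catalog the caller may see. The sketch below assumes a hypothetical roles claim and a hand-written catalog. It does not show the real MCP Gateway's configuration model, or the per-backend OAuth2 token exchange (in the style of RFC 8693) that the gateway performs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tool:
    name: str
    server: str         # which upstream MCP server provides the tool
    required_role: str  # hypothetical role a caller needs in order to see it


# Hypothetical aggregated catalog across several MCP servers.
CATALOG = [
    Tool("search_docs", "docs-server", "agent:read"),
    Tool("create_ticket", "ticket-server", "agent:write"),
    Tool("delete_namespace", "ops-server", "platform:admin"),
]


def visible_tools(claims: dict, catalog: list = CATALOG) -> list:
    """Return only the tools whose required role appears in the caller's claims."""
    roles = set(claims.get("roles", []))
    return [tool for tool in catalog if tool.required_role in roles]
```

Filtering at list time means an agent without the platform:admin role never learns that delete_namespace exists, which narrows what a manipulated agent can even attempt.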

Authorization is enforced at the Gateway API level through Kuadrant's AuthPolicy. AuthPolicy integrates Authorino for JSON Web Token (JWT) validation and Open Policy Agent (OPA) rule evaluation.
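For readers who want to see what JWT validation involves, the sketch below signs and verifies an HS256 token using only the standard library, then checks issuer, audience, and expiry. This is a simplification. Authorino is configured declaratively through AuthPolicy and typically validates tokens against an identity provider's published keys (for example, RS256 via JWKS) rather than a shared secret like this one. The issuer URL and audience are placeholders.

```python
import base64
import hashlib
import hmac
import json
import time


def _b64url_encode(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode()


def _b64url_decode(part: str) -> bytes:
    return base64.urlsafe_b64decode(part + "=" * (-len(part) % 4))  # restore padding


def sign_hs256(claims: dict, secret: bytes) -> str:
    header = _b64url_encode(json.dumps({"alg": "HS256", "typ": "JWT"}).encode())
    payload = _b64url_encode(json.dumps(claims).encode())
    sig = hmac.new(secret, f"{header}.{payload}".encode(), hashlib.sha256).digest()
    return f"{header}.{payload}.{_b64url_encode(sig)}"


def verify_hs256(token: str, secret: bytes, issuer: str, audience: str, now: float = None) -> dict:
    """Return the claims if signature, issuer, audience, and expiry all check out."""
    header_b64, payload_b64, sig_b64 = token.split(".")
    if json.loads(_b64url_decode(header_b64)).get("alg") != "HS256":
        raise ValueError("unexpected algorithm")
    expected = hmac.new(secret, f"{header_b64}.{payload_b64}".encode(), hashlib.sha256).digest()
    if not hmac.compare_digest(expected, _b64url_decode(sig_b64)):  # constant-time compare
        raise ValueError("bad signature")
    claims = json.loads(_b64url_decode(payload_b64))
    now = time.time() if now is None else now
    if claims.get("iss") != issuer:
        raise ValueError("wrong issuer")
    aud = claims.get("aud")
    if audience not in (aud if isinstance(aud, list) else [aud]):
        raise ValueError("wrong audience")
    if now >= claims.get("exp", 0):  # a missing exp is treated as expired
        raise ValueError("token expired")
    return claims
```

Only after these checks pass would a policy engine such as OPA evaluate rules against the returned claims.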

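For OpenClaw, the agent needs only one environment variable, MCP_URL, to reach the unified tool catalog. Which tools the agent can actually call is determined by the claims in its token, not by the prompt. A prompt injection attack that tries to trick the agent into calling an unauthorized tool is blocked at the infrastructure layer, because the gateway never trusts prompt content and validates only the token claims.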
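The key property here is that the authorization decision takes only the tool name and the validated claims as inputs; the prompt is not one of them. The following is a minimal sketch of that contract. It assumes a hypothetical allowed_tools claim and a placeholder gateway URL.

```python
import os


class Forbidden(Exception):
    """Raised when a tool call is not covered by the caller's token claims."""


def authorize_call(tool_name: str, claims: dict) -> bool:
    """Gateway-side check. Note what is not an input: the prompt.

    Whatever text convinced the agent to request tool_name, the decision rests
    only on claims validated from the token (the claim name is hypothetical).
    """
    if tool_name not in claims.get("allowed_tools", []):
        raise Forbidden(f"{claims.get('sub', 'unknown')} is not allowed to call {tool_name}")
    return True


def mcp_endpoint() -> str:
    """The agent's only wiring to the tool catalog: a single environment variable."""
    url = os.environ.get("MCP_URL")
    if not url:
        raise RuntimeError("MCP_URL is not set")
    return url
```

Even if an injected instruction convinces the agent to request delete_namespace, the call fails, because the claims never granted that tool.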

Choosing an API for production agents

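Many teams started with chat, moved through chat completions and the OpenAI API to frameworks, and have now arrived at agent harnesses. The APIs that agents use are consolidating as well. One of the leading APIs for agentic workloads is the Responses API, and OpenAI has now opened up this direction through the OpenResponses specification.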

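Red Hat AI provides a fully compliant implementation of the OpenResponses specification. With it, teams can run agent workloads on self-hosted or hybrid model infrastructure instead of routing every prompt, tool call, and reasoning artifact through a third-party service.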
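Pointing an agent at self-hosted infrastructure mostly means changing where its requests go. The sketch below prepares, without sending, a request to a Responses-style /v1/responses endpoint, and it extracts text from a response body. It assumes the request and response follow the OpenAI Responses API shape: model, input, and tools in the request, and an output list of message items in the response. The base URL, model name, and tool definition are hypothetical.

```python
import json
import urllib.request


def build_responses_request(base_url: str, model: str, user_input: str,
                            tools: list = None, api_key: str = "none") -> urllib.request.Request:
    """Prepare (but do not send) a POST to a Responses-style endpoint."""
    body = {"model": model, "input": user_input}
    if tools:
        body["tools"] = tools
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/v1/responses",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json", "Authorization": f"Bearer {api_key}"},
        method="POST",
    )


def output_text(response_body: dict) -> str:
    """Concatenate output_text parts from message items in a Responses-style body."""
    parts = []
    for item in response_body.get("output", []):
        if item.get("type") != "message":
            continue  # skip reasoning items, function calls, and so on
        for part in item.get("content", []):
            if part.get("type") == "output_text":
                parts.append(part.get("text", ""))
    return "".join(parts)


# Hypothetical function tool in the Responses API's flat tool format.
SEARCH_TOOL = {
    "type": "function",
    "name": "search_docs",
    "description": "Search internal documentation.",
    "parameters": {"type": "object", "properties": {"query": {"type": "string"}}, "required": ["query"]},
}
```

Moving from a hosted service to an in-house runtime then comes down to changing base_url. The request body and the response parsing stay the same.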

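OpenResponses-compatible runtimes for self-managed or hybrid environments are still scarce. Red Hat AI offers one of the most mature implementations aimed at this gap. It gives OpenClaw users a practical path to move execution onto infrastructure they control while keeping agent behavior built on the OpenAI Responses API.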

Some teams want a self-hosted path without the Responses API orchestration layer. For them, vLLM, which is part of Red Hat AI, exposes an OpenAI-compatible /v1/chat/completions endpoint that OpenClaw can use directly.
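The same idea applies to the Chat Completions path. This sketch builds a request for an OpenAI-compatible /v1/chat/completions endpoint, such as the one vLLM serves, and pulls the reply out of a response body. The host and model name are placeholders. The example uses port 8000 because vLLM's OpenAI-compatible server listens on that port by default.

```python
import json
import urllib.request


def build_chat_request(base_url: str, model: str, messages: list,
                       api_key: str = "none") -> urllib.request.Request:
    """Prepare (but do not send) a POST to an OpenAI-compatible chat completions endpoint."""
    body = {"model": model, "messages": messages}
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/v1/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json", "Authorization": f"Bearer {api_key}"},
        method="POST",
    )


def first_message_content(response_body: dict) -> str:
    """Extract the assistant reply from a chat completions response body."""
    return response_body["choices"][0]["message"]["content"]
```

Passing the request to urllib.request.urlopen would send it. The example stops short of that so that it runs without a live server.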

Agent lifecycle with Kagenti

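Many teams struggle to move agents they developed locally, on a laptop, into production. Kagenti, planned as part of OpenShift AI, closes this gap. The kagenti-operator automatically discovers agents through the A2A-based AgentCard CRD. Through AgentRuntime configuration, it then injects identity (SPIFFE/SPIRE), tracing, and tool governance (MCP Gateway) without changing agent code. The platform manages the entire lifecycle, from discovery through runtime governance. The OpenShift AI UI roadmap also includes an agent catalog and registry, along with an MCP catalog for tool servers.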
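As a rough picture of "discover, then inject," the sketch below reads an A2A-style agent card and produces the environment an operator might inject. It is hypothetical throughout. It is not the kagenti-operator's logic or the AgentCard CRD schema, it checks only a subset of the fields the A2A specification defines, and the endpoints are placeholders. The environment variable names follow existing conventions: MCP_URL from this post, OTEL_EXPORTER_OTLP_ENDPOINT from OpenTelemetry SDK configuration, and SPIFFE_ENDPOINT_SOCKET as used by SPIFFE Workload API client libraries. Whether Kagenti uses these variables is an assumption.

```python
# Subset of agent card fields this sketch insists on (illustrative).
REQUIRED_FIELDS = ("name", "description", "url", "version", "skills")


def injection_plan(agent_card: dict, mcp_url: str, otlp_endpoint: str, spiffe_socket: str) -> dict:
    """Turn a discovered agent card into a (hypothetical) injection plan.

    The agent's own code is untouched; everything platform-specific arrives
    through environment variables added to its workload.
    """
    missing = [field for field in REQUIRED_FIELDS if field not in agent_card]
    if missing:
        raise ValueError(f"agent card is missing fields: {missing}")
    return {
        "agent": agent_card["name"],
        "skills": [skill["id"] for skill in agent_card["skills"]],
        "env": {
            "MCP_URL": mcp_url,                            # tool governance via the gateway
            "OTEL_EXPORTER_OTLP_ENDPOINT": otlp_endpoint,  # tracing
            "SPIFFE_ENDPOINT_SOCKET": spiffe_socket,       # workload identity
        },
    }
```

The design point is the one the post makes: the agent declares what it is, and the platform decides what surrounds it.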

About this series

This blog series walks through each of the layers described above in detail, using OpenClaw as the example agent runtime. Each post stands on its own, so you can read them in order or jump straight to the topic you need.

The one constant across every post is the BYOA principle: you are not asked to rewrite your agent. Red Hat holds the enterprise platform, not the agent, to strict standards.

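For more on the Red Hat AI product stack for building, evaluating, and hardening the safety of AI applications, see the official product documentation and the following upstream projects at the core of the Red Hat AI AgentOps practice.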


About the author

Adel Zaalouk is a product manager at Red Hat who enjoys blending business and technology to achieve meaningful outcomes. He has experience working in research and industry, and he's passionate about Red Hat OpenShift, cloud, AI and cloud-native technologies. He's interested in how businesses use OpenShift to solve problems, from helping them get started with containerization to scaling their applications to meet demand.
