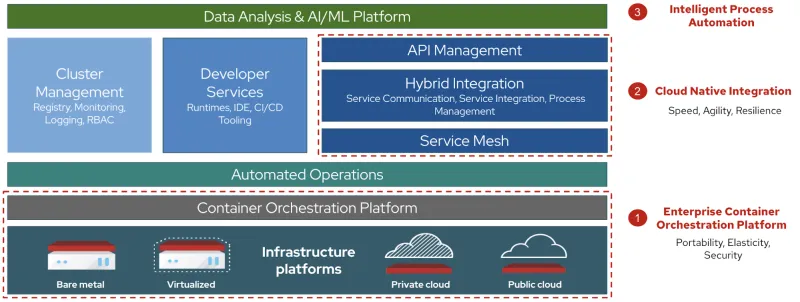

Red Hat sees three fundamental areas that financial institutions should focus on to modernize their payment services and benefit from the same technologies that new entrants to the payments industry already use: microservices, cloud-native integration, and intelligent process automation.

Unsurprisingly, these three fundamental areas are also the drivers of the ongoing payment platform modernization journey of one of our prominent partners, Temenos, as explained in a recent virtual roundtable discussion with PYMNTS.

Enterprise Container Orchestration Platform

Traditional payment systems have typically been developed on a model that combines the application services layer, the application user interface, and the underlying database into a single unit.

The first fundamental change is to begin moving away from these monolithic architectures, and identify ways to progressively break up these complex payment systems into specialized microservices, each with its own data layer and its own business logic.

These services can then be encapsulated into lightweight containers and gain the inherent benefits of containerization including portability (the ability to be deployed and run on any infrastructure), developer agility, automation of deployment, fault isolation, scalability, automation of management, and improved security through container immutability.

These architectural changes help the financial institution take advantage of the ability of cloud computing infrastructure to scale on demand, dynamically adjusting computing capacity as demand for payment services changes. Because infrastructure resources can be scaled up or down, organizations pay only for the resources they use.
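As a rough illustration of scaling on demand, the sketch below applies the proportional rule documented for the Kubernetes Horizontal Pod Autoscaler: desired replicas = ceil(current replicas × current metric / target metric). The function name, the payments-per-second metric, and the replica bounds are assumptions chosen for this example, not settings from any Red Hat or Temenos product.

```python
import math


def desired_replicas(current_replicas: int,
                     current_load_per_replica: float,
                     target_load_per_replica: float,
                     min_replicas: int = 1,
                     max_replicas: int = 20) -> int:
    """Return the replica count that brings per-replica load near the target.

    The load values are a hypothetical metric, for example the average
    number of payments per second handled by each replica.
    """
    if current_replicas < 1:
        raise ValueError("current_replicas must be at least 1")
    if target_load_per_replica <= 0:
        raise ValueError("target_load_per_replica must be positive")

    # Proportional rule: scale the replica count by observed/target load.
    raw = math.ceil(
        current_replicas * current_load_per_replica / target_load_per_replica
    )
    # Clamp to the configured bounds so the service never scales to zero
    # or beyond the capacity the operator is willing to pay for.
    return max(min_replicas, min(max_replicas, raw))
```

With these assumed numbers, `desired_replicas(4, 150.0, 100.0)` returns 6 (scale up under load), while `desired_replicas(4, 25.0, 100.0)` returns 1 (scale down when demand drops).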

In executing this modernization journey, portability is often overlooked. Implementing Red Hat OpenShift across the different infrastructure platforms is one way to address portability across public and private clouds.

We see the cloud strategies of financial institutions mostly evolving towards a hybrid cloud model. Their computing environment plans rely on a mix of on-premises, private cloud, and third-party public cloud infrastructure services. They depend on orchestration between the various platforms to allow development and operations teams to work together in an interconnected, consistent computing environment where apps can be moved from one environment to another. This is crucial for retaining the deployment agility that business or regulatory constraints may require, such as local data residency requirements.

Cloud-Native Integration

When traditional payment applications become more decentralized, traditional integration architectures that rely on a service bus model, which connects all service data into a centralized hub, become the next bottleneck.

Functionally, they make it challenging to quickly connect to new data sources or to implement new functional logic into integration services. Technically, scaling the service bus requires constant capacity planning and operational management due to shared infrastructure resources between integration services.

For this reason, moving away from traditional integration towards cloud-native integration based on distributed, decoupled, and decentralized microservice-based integration is crucial to achieve agility, scalability and resilience.

There are also specific characteristics and benefits that the adoption of cloud-native integration supports:

- Decoupling: through the use of independent integration microservices and event-driven communication architecture based on Red Hat AMQ Streams, a distributed producer/consumer model can be adopted for service communication whereby services do not need to know about one another, and can be developed, tested, deployed, and scaled independently.

- Composability: integration logic for real-time systems often involves the requirement for assembling business orchestration logic in rules-based workflows based on dynamic execution rules. Cloud-native integration supports this design pattern by allowing the logic to be encapsulated over multiple service artifacts orchestrated over a common process automation layer.
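The decoupling idea can be sketched with a toy in-memory broker. This is only an illustration of the producer/consumer pattern: `InMemoryBroker`, the topic name, and the two services are hypothetical, and the class is not the AMQ Streams or Apache Kafka API. A real broker would also persist events and deliver them asynchronously.

```python
from typing import Callable, Dict, List

Event = Dict[str, object]
Handler = Callable[[Event], None]


class InMemoryBroker:
    """Toy stand-in for an event-streaming broker (illustrative only)."""

    def __init__(self) -> None:
        self._subscribers: Dict[str, List[Handler]] = {}

    def subscribe(self, topic: str, handler: Handler) -> None:
        # Consumers register interest in a topic, not in a specific producer.
        self._subscribers.setdefault(topic, []).append(handler)

    def publish(self, topic: str, event: Event) -> None:
        # The producer only knows the topic name; it never calls a consumer
        # service directly.
        for handler in self._subscribers.get(topic, []):
            handler(event)


# Two independent consumers (hypothetical services).
ledger_entries: List[str] = []
notifications: List[str] = []


def ledger_service(event: Event) -> None:
    ledger_entries.append(str(event["payment_id"]))


def notification_service(event: Event) -> None:
    notifications.append(f"Payment {event['payment_id']} received")


broker = InMemoryBroker()
broker.subscribe("payments.received", ledger_service)
broker.subscribe("payments.received", notification_service)

# The producer publishes one event; both consumers react independently.
broker.publish("payments.received", {"payment_id": "P-001", "amount": 125.50})
```

Adding a third consumer, such as a fraud-screening service, would need only another `subscribe` call, with no change to the producer or to the existing consumers.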

Cloud-native, distributed integration is critical to handling not just the scaling needs due to increased volumes, but also the complex real-time and rule-based processing requirements created by evolving industry standards, such as ISO 20022, as well as the need to validate payments and deal with exceptions in real-time.
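A minimal sketch of rule-based validation with exception routing might look like the following. The `Payment` fields, the rule names, and the currency list are simplified assumptions for illustration and do not reflect the ISO 20022 message schema.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Sequence, Tuple


@dataclass(frozen=True)
class Payment:
    # Hypothetical, simplified fields; not the ISO 20022 message schema.
    payment_id: str
    amount: float
    currency: str
    debtor_account: str


# A rule returns None when the payment passes, or a reason when it fails.
Rule = Callable[[Payment], Optional[str]]


def positive_amount(p: Payment) -> Optional[str]:
    return None if p.amount > 0 else "amount must be positive"


def supported_currency(p: Payment) -> Optional[str]:
    allowed = {"EUR", "USD", "GBP"}  # illustrative list only
    return None if p.currency in allowed else f"unsupported currency {p.currency}"


def debtor_present(p: Payment) -> Optional[str]:
    return None if p.debtor_account.strip() else "missing debtor account"


def validate(payment: Payment, rules: Sequence[Rule]) -> List[str]:
    """Run every rule and collect the reasons for any failures."""
    reasons = []
    for rule in rules:
        reason = rule(payment)
        if reason is not None:
            reasons.append(reason)
    return reasons


def route(payments: Sequence[Payment], rules: Sequence[Rule]
          ) -> Tuple[List[Payment], List[Tuple[Payment, List[str]]]]:
    """Split payments into accepted ones and exceptions with their reasons."""
    accepted: List[Payment] = []
    exceptions: List[Tuple[Payment, List[str]]] = []
    for payment in payments:
        reasons = validate(payment, rules)
        if reasons:
            exceptions.append((payment, reasons))
        else:
            accepted.append(payment)
    return accepted, exceptions


RULES: List[Rule] = [positive_amount, supported_currency, debtor_present]
batch = [
    Payment("P-001", 250.0, "EUR", "ACC-1"),
    Payment("P-002", -10.0, "XYZ", ""),
]
accepted, exceptions = route(batch, RULES)
```

Because each rule is a small independent function, the rule list can be reassembled as a standard evolves without touching the routing logic.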

Intelligent Process Automation

As the volume of real-time, digital payments increases, the next challenge for payment organizations is to reduce internal, human-dependent processes. This includes dealing with payment exceptions, and also the growing threats in financial crime that require constant review and investigation of suspicious transactions.

It is not possible to do both in a scalable and cost-effective manner without leveraging intelligent process automation, which refers to the application of artificial intelligence and related new technologies, such as machine learning, to traditional process automation focusing on the automation of human tasks. In particular, intelligent process automation can support the smart automation of detection and handling rules across large volumes of payment and consumer data.

While many specialized AI/ML solutions have emerged to support financial institutions in these endeavours, few institutions realize early in this journey that their teams of data scientists, once hired, will spend more time building and maintaining the tools for collecting, labelling, cleaning and feeding the data into these learning models than building the models themselves.

For this reason, we believe it is an essential part of a payment modernization strategy to build a distributed data and analytics platform that supports data engineers, data scientists, application developers and operations staff in the process of preparing the data, developing and deploying the models, and integrating them into the overall payment service.

The platform should support an efficient delivery model (DevOps/DataOps) that provides the agility required to adapt quickly to changing rules, limit missed transactions and false positives, and meet new compliance requirements within short timeframes, with limited to no change in underlying platform code to ensure stability in deployment.
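One way to picture adapting rules without changing platform code is to keep detection thresholds in configuration rather than in the program. The sketch below is an assumption-laden toy: the JSON keys, threshold values, and transaction fields are hypothetical, and a real deployment would more likely load the configuration from a managed source and combine it with trained models.

```python
import json
from typing import Dict, List


def load_thresholds(config_text: str) -> Dict[str, float]:
    """Parse detection thresholds from a JSON document (hypothetical keys)."""
    raw = json.loads(config_text)
    return {
        "max_amount": float(raw["max_amount"]),
        "max_daily_count": float(raw["max_daily_count"]),
    }


def review_reasons(transaction: Dict[str, float],
                   thresholds: Dict[str, float]) -> List[str]:
    """Return the reasons a transaction should be sent for review, if any."""
    reasons = []
    if transaction["amount"] > thresholds["max_amount"]:
        reasons.append("amount above threshold")
    if transaction["daily_count"] > thresholds["max_daily_count"]:
        reasons.append("too many payments today")
    return reasons


# Two versions of the rule configuration; only the data changes.
CONFIG_V1 = '{"max_amount": 10000, "max_daily_count": 20}'
CONFIG_V2 = '{"max_amount": 5000, "max_daily_count": 20}'

transaction = {"amount": 7500.0, "daily_count": 3}
before = review_reasons(transaction, load_thresholds(CONFIG_V1))
after = review_reasons(transaction, load_thresholds(CONFIG_V2))
```

The same transaction passes under the first configuration and is flagged under the second, even though the functions themselves are unchanged.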

Open source has been critical in fostering innovation in AI/ML. Red Hat is a leader of the Open Data Hub project, a community project that brings together Apache Spark, Apache Kafka, Kubeflow, Jupyter and other tools for data scientists. It includes built-in automation/operators for installation on Red Hat OpenShift so payment organizations can exploit the additional data available in ISO 20022.

A modern cloud platform can help you take advantage of real-time processing, adapt to changing messaging standards, reduce operating costs, and innovate with digital ecosystems. Visit our page "Move forward, faster" to learn how Red Hat can help make payments simpler, faster, and more efficient for you.