In this article, we provide a solution that enables almost latency-free API management for Java-based microservice APIs. We build on Manfred Bortenschlager’s white paper Achieving Enterprise Agility With Microservices And API Management, and we provide a practical way to add the management layer Manfred outlines to internal microservice-to-microservice API calls.

API Management and Microservices

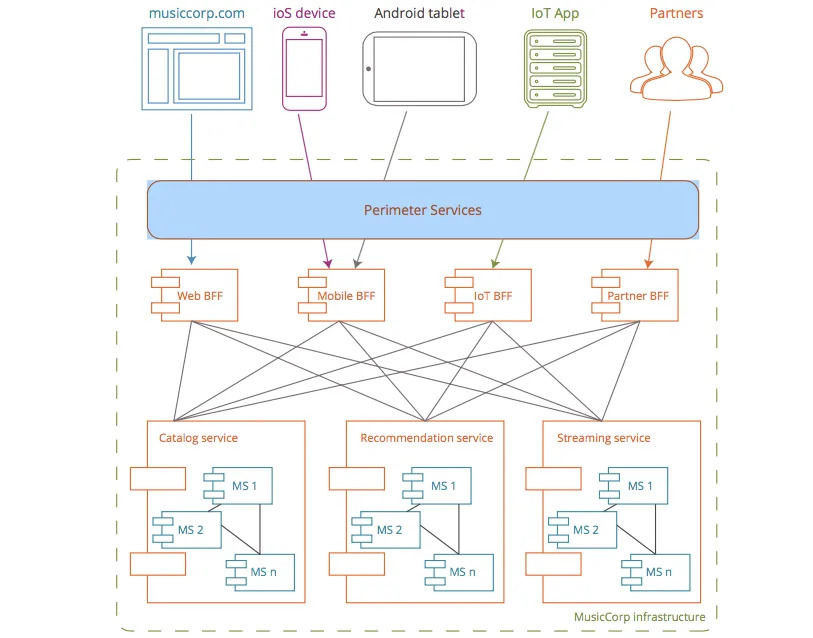

Figure 1 - a typical microservices architecture with depictions of externally and internally consumable microservices

In the white paper Manfred describes a typical microservices architecture consisting of:

- A perimeter service layer that is typically implemented by an API gateway which manages and secures components that follow the backend for frontend (BFF) pattern. The perimeter service exposes APIs to external consumers.

- Internal microservices that are clustered into functional elements and communicate via APIs.

The most common and most decoupled way to achieve API management is to deploy API gateways on the API provider’s infrastructure. These gateways act as traffic controllers that authenticate, authorize, and report on API traffic to the 3scale API Management Platform. These extensive management features are achievable with very low latency overhead through our caching and asynchronous architectural features. Additionally, the gateways provide routing and security protections such as defense against DDoS attacks.

In certain situations, however, where the traffic has already been authenticated and low latency is absolutely critical -- like in microservice-based architectures -- an alternative to API gateways is appropriate.

Red Hat 3scale’s Low-Latency API Gateway Architecture

For context, let’s describe how 3scale conceptually achieves its very low latencies in the API gateway.

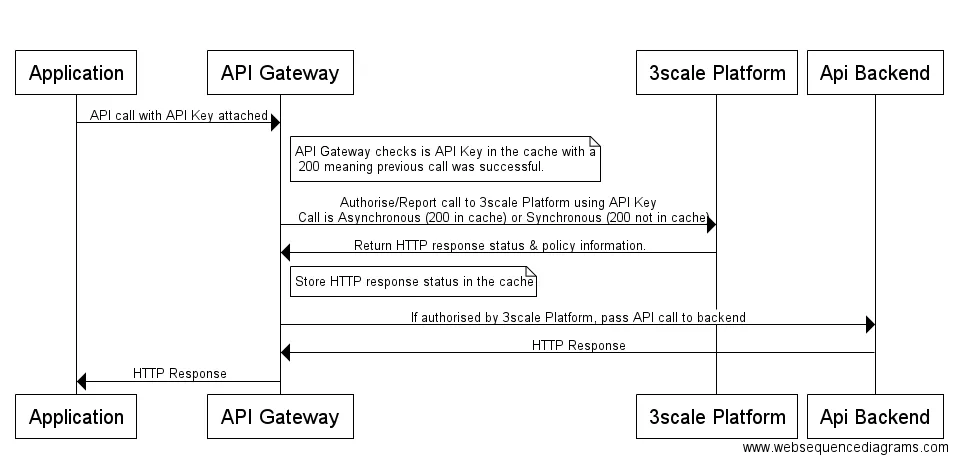

Figure 2: UML describing externally originating API calls - routed through the gateway

Essentially, an API call is sent from the client application (mobile app, web app, IoT device, etc.). Instead of being routed directly to the API, the call is directed to the gateway, which communicates with 3scale to authenticate, authorize, and report the call so that it is registered and later viewable in the analytics component of the 3scale API Management Platform. What enables our low latencies is the nature of the communication between the gateway and the 3scale Platform.

Let me elaborate. As soon as a call reaches the gateway, the local cache is examined for the presence of a 200 (the HTTP status code for “OK”) stored against the incoming API key concatenated with the 3scale identifier of the API resource being hit. If it is present, the call is allowed straight through and 3scale is contacted asynchronously, i.e., after the call has been forwarded to the customer’s API backend. If it is not, as with an empty cache entry or a 403 where the previous call was unauthorized, a synchronous (blocking) call is made to 3scale.
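The cache decision described above can be sketched in a few lines of Java. This is only an illustration of the logic, not the gateway’s actual code: the class and method names are hypothetical, and the `:` separator in the cache key is an assumption (the text only says the two values are concatenated).

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

/** Illustrative sketch of the gateway's cache decision; all names are hypothetical. */
class GatewayCacheDecision {

    /** Which path a call takes once it reaches the gateway. */
    enum Path { ASYNC_REPORT, SYNC_AUTHORIZE }

    // Last HTTP status received from 3scale, keyed by API key + resource identifier.
    private final Map<String, Integer> lastStatus = new ConcurrentHashMap<>();

    // Assumption: the key is a plain concatenation with a ':' separator.
    static String cacheKey(String apiKey, String serviceId) {
        return apiKey + ":" + serviceId;
    }

    /** Records the status 3scale returned for this key and resource. */
    void remember(String apiKey, String serviceId, int status) {
        lastStatus.put(cacheKey(apiKey, serviceId), status);
    }

    /** A cached 200 lets the call through; an empty entry or a 403 forces a blocking call. */
    Path decide(String apiKey, String serviceId) {
        Integer status = lastStatus.get(cacheKey(apiKey, serviceId));
        return (status != null && status == 200) ? Path.ASYNC_REPORT : Path.SYNC_AUTHORIZE;
    }
}
```

A first call for a given key finds no entry and takes the synchronous path; once a 200 has been remembered, later calls take the asynchronous path until a 403 replaces it.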

As the vast majority of calls do encounter this 200, most calls are simply allowed straight through. The result is our low-latency solution, which typically adds only 10-20 ms to the latency of an API call without API management.

Red Hat 3scale’s Plug-in Wrapper Architecture

In addition to the API gateway solution described above, we are proposing a refined solution with even lower latency that is especially suited for internal API-based microservice-to-microservice communication. 3scale exposes various code plug-ins to enable API management calls (authorize and report) to be made from your codebase. One of these is the Java plug-in, available here. For this solution, we have taken this plug-in and surrounded it with a wrapper. In it, we use the exact same behavior we described above for the gateway: each incoming call checks the local cache, in our case a Java HashMap, for the presence of the incoming API key concatenated with the 3scale identifier of the API resource being hit, with a “true” stored against it. “True” indicates that the previous call by this client to this resource was authorized by 3scale. If “true” is found, we allow the call straight through and make an asynchronous authorize + report (aka AuthRep) call to 3scale. If “true” is not found, we make a synchronous call to 3scale.
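As a rough sketch of this behavior (not the code from the wrapper repository), the flow might look like the following. The `BiPredicate` passed to the constructor is a hypothetical stand-in for the real AuthRep call made through the 3scale Java plug-in, whose API is not reproduced here. The sketch also swaps the HashMap for a `ConcurrentHashMap`, on the assumption that the cache is shared between request threads, and assumes a `:` separator in the cache key.

```java
import java.util.Map;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ConcurrentHashMap;
import java.util.function.BiPredicate;

/** Illustrative sketch of a caching AuthRep wrapper; all names are hypothetical. */
class CachedAuthRepWrapper {

    // "true" means the previous call for this key and resource was authorized.
    private final Map<String, Boolean> cache = new ConcurrentHashMap<>();

    // Hypothetical stand-in for the plug-in's AuthRep call: (apiKey, serviceId) -> authorized?
    private final BiPredicate<String, String> authRep;

    CachedAuthRepWrapper(BiPredicate<String, String> authRep) {
        this.authRep = authRep;
    }

    boolean isAllowed(String apiKey, String serviceId) {
        String key = apiKey + ":" + serviceId; // separator is an assumption

        if (Boolean.TRUE.equals(cache.get(key))) {
            // Fast path: let the call through, then report to 3scale in the background
            // and refresh the cached result for the next call.
            CompletableFuture.runAsync(() -> cache.put(key, authRep.test(apiKey, serviceId)));
            return true;
        }

        // Slow path: no "true" cached, so block on 3scale before deciding.
        boolean authorized = authRep.test(apiKey, serviceId);
        cache.put(key, authorized);
        return authorized;
    }
}
```

One consequence of this design is that a client whose access is revoked can still get one more call through, because the revocation only reaches the cache when the background AuthRep call completes.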

Figure 3: UML describing internal microservice-to-microservice API calls - low latency API management through the plug-in wrapper. Also uses caching and asynchronous calls.

Low-Latency Plug-in Wrapper: Implementation and Performance

The code and instructions for the implementation are at https://github.com/tnscorcoran/3scaleJavaPluginWrapper. You simply download the 3scale Java plug-in, download the plug-in wrapper (both are Maven projects), and install them (detailed instructions can be found in this GitHub repo). The calls to the wrapper should ideally be located in their own component, be it a servlet filter, an interceptor, an aspect, or another cross-cutting concern. This choice is up to the implementor.
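To illustrate what keeping the wrapper calls in their own component can look like, here is a minimal decorator sketch in plain Java. It is not a servlet filter and does not come from the repository: `ManagedHandler`, the `Predicate` standing in for the wrapper’s authorization check, and the `"403 Forbidden"` response string are all hypothetical. The point is only that the endpoint’s business logic never calls the wrapper itself.

```java
import java.util.function.Function;
import java.util.function.Predicate;

/** Illustrative decorator that keeps API management out of the business logic. */
class ManagedHandler implements Function<String, String> {

    // Hypothetical stand-in for the plug-in wrapper's check: apiKey -> authorized?
    private final Predicate<String> authorized;

    // The unmodified endpoint logic, taking the caller's API key and returning a response body.
    private final Function<String, String> endpoint;

    ManagedHandler(Predicate<String> authorized, Function<String, String> endpoint) {
        this.authorized = authorized;
        this.endpoint = endpoint;
    }

    @Override
    public String apply(String apiKey) {
        // The cross-cutting check runs first; the endpoint only sees authorized calls.
        if (!authorized.test(apiKey)) {
            return "403 Forbidden";
        }
        return endpoint.apply(apiKey);
    }
}
```

A servlet filter, interceptor, or aspect would follow the same shape, with the framework rather than a constructor wiring the check in front of the endpoint.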

As an illustration of the low latencies achievable, I have two implementations of a mock endpoint running on a local Tomcat server. The endpoint is /catalog/product, a mock version of one of the services used as an example in Manfred’s microservice architecture article. This endpoint is called by another microservice. Therefore, it’s applicable to this use case.

Details of both endpoints:

1. A simple implementation that returns some product data.

2. An identical implementation to 1) that includes a call to the Java plug-in wrapper to add API management to this service.

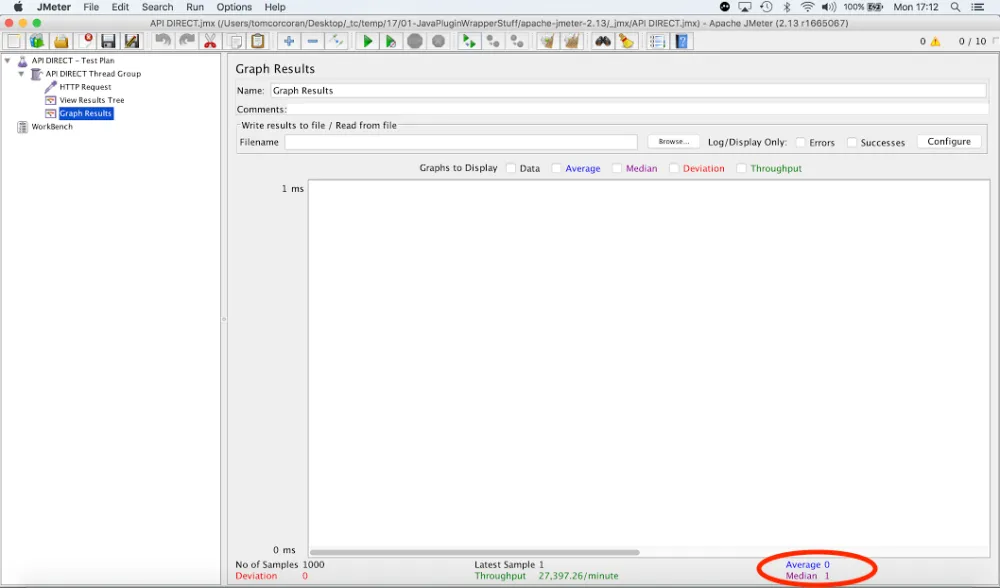

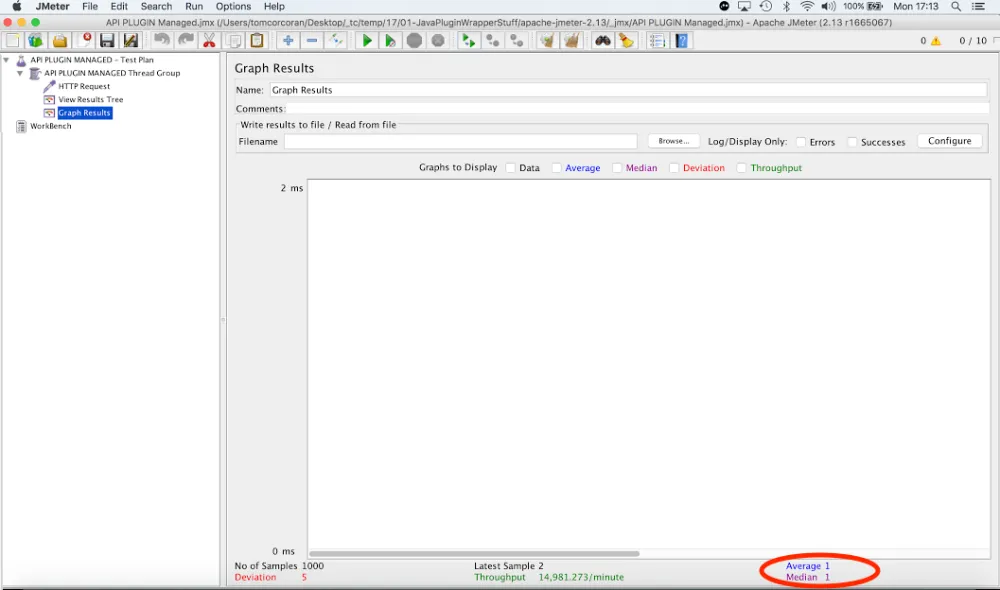

In both cases, we fire 1000 requests at the endpoint and measure the latency. In my example, the inclusion of API management adds 1 ms of latency on average to the calls (the unmanaged endpoint averaged 0 ms; the managed endpoint averaged 1 ms). With hardware and code optimization, this can be brought down to practically zero.

Figure 4: Baseline latency performance of a local mocked version of the internally consumable /catalog/product service. It reported 0ms on average across 1000 hits.

Figure 5: Comparative latency performance of an identical endpoint to Figure 4 - with the addition of the management component - the Java plug-in wrapper. Adds approx 1 ms of latency in a non-optimised environment.
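For readers who want to run a comparable measurement themselves, the sketch below times a batch of calls and reports the average per call. It is not the tool used to produce Figures 4 and 5; `LatencyProbe` and `averageMillis` are hypothetical names, and the `Runnable` is a placeholder for whatever issues one request to the endpoint under test.

```java
/** Illustrative timing helper; not the tool used for the figures in this article. */
class LatencyProbe {

    /**
     * Runs the given call the requested number of times and returns the
     * average duration per call in milliseconds.
     */
    static double averageMillis(Runnable call, int requests) {
        if (requests <= 0) {
            throw new IllegalArgumentException("requests must be positive");
        }
        long start = System.nanoTime();
        for (int i = 0; i < requests; i++) {
            call.run();
        }
        long elapsedNanos = System.nanoTime() - start;
        return elapsedNanos / 1_000_000.0 / requests;
    }

    public static void main(String[] args) {
        // Placeholder workload; replace with a call to the managed or unmanaged endpoint.
        double avg = averageMillis(() -> Math.sqrt(42.0), 1000);
        System.out.println("average ms per call: " + avg);
    }
}
```

Running the same probe against both endpoints gives the difference attributable to the management component. Because it uses `System.nanoTime()`, the probe also resolves sub-millisecond averages rather than rounding to whole milliseconds.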

Conclusion

The 3scale API Gateway offers outstanding benefits in terms of API security, authentication, authorization, and routing capabilities.

In certain cases, however, particularly for internal microservice-to-microservice API calls, there is benefit to utilizing the 3scale plug-in wrapper solution that we introduced in this article in order to leverage the superb and extensive API management capabilities offered by 3scale at extremely low latencies.

For more see the 3scale website, and for a live demonstration of this solution, see https://youtu.be/fYb0_AhWs7g.
