This post discusses the merits of standardizing on container base images, and is an excerpt from the new ebook on Red Hat Universal Base Images.

Standard Operating Environments (SOEs) and Linux containers

Traditional IT organizations have long understood the value of a Standard Operating Environment (SOE). Historically, administrators implemented an SOE as a disk image, kickstart, or virtual machine image for mass deployment within an organization. This reduced operational overhead, fostered automation, increased reliability by reducing variance, and set security controls that increased the overall security posture of an environment.

SOEs often include the base operating system (kernel and user space programs), custom configuration files, standard applications used within an organization, software updates, and service packs. It is far easier to troubleshoot a failed service at 2 a.m. if every server is running the same configuration. Some major advantages of an SOE are reduced cost as well as an increase in agility. The effort to deploy, configure, maintain, support, and manage systems and applications can all be reduced.

Understanding the value of an SOE, a mature IT organization tightly controls the number of different operating systems and OS versions. The ideal number is one, but that isn’t usually feasible, so there are efforts to keep the number as small as possible. IT organizations expend considerable effort to make sure that boxes aren’t added to the network with ad-hoc OS versions and configurations.

Linux containers and Standard Operating Environments

So what does this have to do with containers? Containers have dramatically improved the development, deployment, and maintenance of applications. The ease with which containers can be deployed, and the isolation they offer, simplifies many aspects of IT management. The advent of containers, and to some degree DevOps practices, has led to the notion that traditional IT practices like SOEs and configuration management best practices are no longer relevant.

With containers, it’s easy to think you can use whatever technology you want, wherever you want, whenever you want, without having a negative impact on your IT landscape. Containers can have a much smaller footprint and therefore have a much smaller surface area that could be vulnerable, but they still have the components of a stripped-down Linux OS inside. Those components still need to be maintained like traditional OS deployments. However, with containers, the number of versions to track quickly multiplies.

The version explosion: how many different versions am I running?

When developers think about building a containerized application, their focus is typically on running a handful of containers. Even if building a big microservices application with dozens or hundreds of containers, the containers likely share a similar heritage, so developers really don’t think about the many versions of similar software that could be in play.

To really understand the impact of the decisions developers make, you need to consider the consumers of your software and the IT environments for which they are responsible. Given the benefits containers offer, most IT organizations ultimately wind up running hundreds of container images, while large corporations could easily be running thousands of different images.

To understand their perspective, consider what happens if a critical vulnerability or bug is discovered in a heavily used library like the OpenSSL cryptography library or the C library (glibc). The first task is identifying all the places the vulnerable versions are running. To do that they need to know what version is running on every system, which includes every container.
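As a minimal sketch of that first task, assume the organization already has an inventory that maps each image to the package versions it contains. The inventory structure, the image names, and the `find_affected_images` helper below are hypothetical and only illustrate the lookup; a real inventory would come from an image scanner or from the package manager inside each image.

```python
from typing import Dict, List, Set

# Hypothetical inventory: image name -> {package name -> installed version}.
# All names and versions here are made up for illustration.
Inventory = Dict[str, Dict[str, str]]


def find_affected_images(inventory: Inventory, package: str,
                         vulnerable_versions: Set[str]) -> List[str]:
    """Return the sorted names of images that ship a vulnerable version."""
    return sorted(
        image
        for image, packages in inventory.items()
        if packages.get(package) in vulnerable_versions
    )


example_inventory: Inventory = {
    "shop/frontend": {"openssl": "1.1.1k", "glibc": "2.28"},
    "shop/orders": {"openssl": "3.0.7", "glibc": "2.34"},
    "shop/cache": {"openssl": "1.1.1k"},
    "shop/static": {},  # no OpenSSL recorded for this image
}

affected = find_affected_images(example_inventory, "openssl", {"1.1.1k"})
print(affected)
```

The lookup itself is trivial. The hard part is keeping the inventory complete and accurate, and that gets harder as the number of distinct base images grows.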

Without an SOE, or at least policies to govern what base images are used, an organization could wind up with considerable maintenance challenges, and relying on containers can make this happen faster.

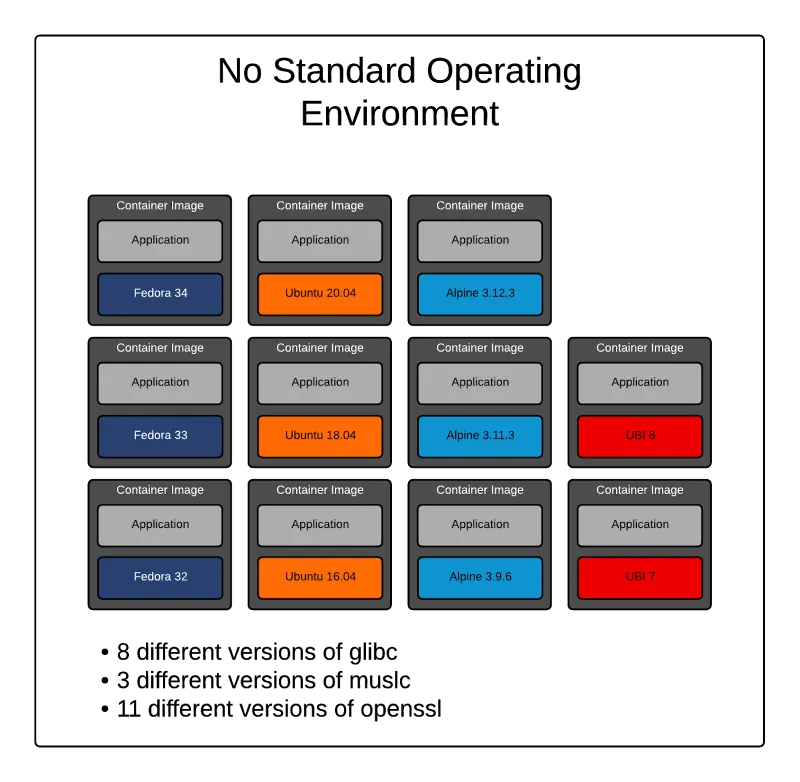

Figure 1 - Multiple versions of the same software due to lack of an SOE.

In the environment depicted in Figure 1, there are 11 different versions of OpenSSL and 8 different versions of the glibc C library. The situation could be even worse than that! There might be common source version numbers across OS versions, but the actual packages differ due to different patch levels or different configuration flags used at compilation.
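To make the difference between "same source version" and "same package" concrete, here is a small sketch that counts both. The record layout, the `release` field (standing in for a distribution's patch level or build flags), and all values are assumptions for illustration, not data from Figure 1.

```python
from collections import defaultdict
from typing import Dict, Iterable, Set, Tuple

# Each record is (image, package, upstream_version, release).
# All values below are invented for illustration.
Record = Tuple[str, str, str, str]


def distinct_builds(records: Iterable[Record]) -> Dict[str, Set[Tuple[str, str]]]:
    """Group the distinct (version, release) pairs seen for each package."""
    builds: Dict[str, Set[Tuple[str, str]]] = defaultdict(set)
    for _image, package, version, release in records:
        builds[package].add((version, release))
    return dict(builds)


records = [
    ("img-a", "openssl", "1.1.1k", "distro-x-7"),
    ("img-b", "openssl", "1.1.1k", "distro-y-2"),  # same source, different build
    ("img-c", "openssl", "3.0.7", "distro-x-1"),
    ("img-a", "glibc", "2.28", "distro-x-5"),
    ("img-b", "glibc", "2.28", "distro-x-5"),      # identical build, counted once
]

for package, builds in sorted(distinct_builds(records).items()):
    upstream = {version for version, _release in builds}
    print(f"{package}: {len(upstream)} upstream versions, {len(builds)} distinct builds")
```

In this made-up data, OpenSSL appears in two upstream versions but three distinct builds, which is the gap the paragraph above describes.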

Another complication is that different distributions don’t use the same conventions for naming and versioning packages. One distribution might package all files for a piece of software into a single larger package, where others break it into a number of smaller packages.

This scenario might seem contrived. However, consider that a typical application landscape includes language runtimes, database, web, and cache servers. So there might be base images for Java, Python, PHP, MySQL, PostgreSQL, Redis, Apache HTTPD, Apache Tomcat, and Nginx to satisfy application dependencies.

The availability of pre-built container images for software components in public registries gives developers a wealth of choices. The developer selecting the image for a database might focus on choosing the latest version, but not investigate what OS forms the base of those images. Or they might choose an image based simply on the smallest image size, even though the base Linux distribution for that image might not be something an enterprise would choose to run in their environment.
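One way to investigate what OS forms the base of an image is to read the `/etc/os-release` file that most current Linux distributions ship. The sketch below parses the contents of such a file; how the text is extracted from the image is out of scope here, and the `parse_os_release` helper and the sample content are illustrative assumptions rather than part of any particular tool.

```python
from typing import Dict


def parse_os_release(text: str) -> Dict[str, str]:
    """Parse the KEY=value lines of an os-release file into a dict."""
    fields: Dict[str, str] = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        # Values may be wrapped in single or double quotes; this sketch
        # strips them but does not handle escaped characters.
        if len(value) >= 2 and value[0] == value[-1] and value[0] in "\"'":
            value = value[1:-1]
        fields[key] = value
    return fields


# Sample content made up for illustration.
sample = '''\
NAME="Example Linux"
ID=example
VERSION_ID="9.3"
# comment
'''

info = parse_os_release(sample)
print(info["ID"], info["VERSION_ID"])
```

Recording the `ID` and `VERSION_ID` of every image in use gives a quick picture of how many distinct base operating systems an environment actually contains.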

Due to the potential for software versions to multiply in an environment, having an SOE for containers is just as important as an SOE for operating systems.
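A base image policy can be enforced mechanically, for example in a build pipeline. The following is a rough sketch, assuming builds are described by Containerfiles or Dockerfiles and that the policy is a list of approved image prefixes. The registry names and helper functions are hypothetical, and the parser ignores details such as `ARG` substitution in `FROM` lines.

```python
from typing import Iterable, List


def base_images(containerfile: str) -> List[str]:
    """Return the external image reference of every FROM instruction."""
    images: List[str] = []
    stages = set()  # names of earlier build stages declared with "AS <name>"
    for line in containerfile.splitlines():
        parts = line.strip().split()
        if not parts or parts[0].upper() != "FROM":
            continue
        # Skip options such as --platform=... that may precede the image.
        args = [p for p in parts[1:] if not p.startswith("--")]
        if not args:
            continue
        image = args[0]
        # "FROM <stage>" refers to an earlier stage, not an external image.
        if image not in stages:
            images.append(image)
        if len(args) >= 3 and args[1].upper() == "AS":
            stages.add(args[2])
    return images


def unapproved_bases(containerfile: str, approved_prefixes: Iterable[str]) -> List[str]:
    """List base images that do not start with any approved prefix."""
    prefixes = tuple(approved_prefixes)
    return [img for img in base_images(containerfile) if not img.startswith(prefixes)]


# Hypothetical build file and policy, for illustration only.
example = """\
FROM registry.example.com/approved/base:1 AS build
RUN make
FROM docker.example.org/random/tiny:latest
COPY --from=build /app /app
"""

print(unapproved_bases(example, ["registry.example.com/approved/"]))
```

A prefix allowlist is deliberately simple. A stricter policy might pin exact tags or digests, at the cost of more maintenance.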

For more information, see the InfoWorld article "Containers need standard operating environments too" by Scott McCarty.

The benefits of UBI as an SOE

We introduced the Red Hat Universal Base Image (UBI) in 2019. Red Hat’s goal in creating UBI is to produce a base image for all the needs of developers, software partners, and community projects. UBI is a freely redistributable subset of Red Hat Enterprise Linux (RHEL) for building container-based software.

The bits provided in UBI are the same as those provided in RHEL. They only differ in the terms and conditions for using them. This is the same software used extensively for some of the world’s most critical workloads, such as high performance computing (HPC), financial services, and AI/ML.

It’s used in highly secure environments like governments and banking, I/O intensive applications like transaction processing, and performance critical applications with requirements for specialized hardware and/or low latencies.

- Software partners: By choosing UBI, software companies can use the same container images for software they are making freely available or selling to enterprises. The enterprise customers of these developers can choose support options from Red Hat that meet their needs.

- Enterprises and other organizations: Developers, operations, and security teams in many IT organizations have extensive experience with Red Hat Enterprise Linux. UBI gives them familiar base images, packages, and package management tools that they can easily support without retraining.

UBI shares the same 10+ year lifecycle as the version of RHEL on which it is based. UBI components are updated when the corresponding RHEL components are updated.

There are no subscription management or registration requirements for using UBI. This, combined with the long support lifecycle, makes UBI an excellent choice for free software projects and automated build systems like CI/CD pipelines.

Finally, Red Hat is committed to using UBI as the base image for Red Hat products. Because UBI is critical to the success of Red Hat’s own products, you can be confident using it as the basis for your software as well.

New ebook: Red Hat Universal Base Images

To learn more about building on and delivering on UBI, we invite you to download this free ebook from either one of these two Red Hat locations:

About the authors

Mike Guerette has worked in various marketing roles (product management, partner/business development, product marketing, and developer relations) involving enterprise software. He's been in a Red Hat developer relations role for more than 7 years. He founded the Red Hat developer blog, and co-founded Red Hat Developer Toolset and Red Hat Software Collections for Red Hat Enterprise Linux. He currently works on Red Hat's Partner team, focused on helping ISV developers.

At Red Hat, Scott McCarty is Senior Principal Product Manager for RHEL Server, arguably the largest open source software business in the world. Focus areas include cloud, containers, workload expansion, and automation. Working closely with customers, partners, engineering teams, sales, marketing, other product teams, and even in the community, he combines personal experience with customer and partner feedback to enhance and tailor strategic capabilities in Red Hat Enterprise Linux.

McCarty is a social media start-up veteran, an e-commerce old timer, and a weathered government research technologist, with experience across a variety of companies and organizations, from seven person startups to 20,000 employee technology companies. This has culminated in a unique perspective on open source software development, delivery, and maintenance.
