There are hundreds of thousands of tasks required to administer a large fleet of servers. Automation can take some of the more mundane tasks off your plate. As an example, once you have built your Standard Operating Environment (SOE) and need to manage the care and feeding of it, you may want to run this through an automation pipeline to get the grunt work done while you are focused on more critical tasks.

So let's look at automating the initial publication of our monthly content in Red Hat Satellite.

Note: This article assumes that you have a functional Satellite or Foreman instance configured for provisioning and an Ansible automation controller (formerly Ansible Tower) instance configured to run Ansible plays. You can see previous articles here for guidance on setting things up.

Wait a minute... Once we publish the content, how do we know it is any good? Will it work with our existing systems? With newly provisioned systems? How do we test that? Looks like we have our work cut out for us. We need a plan.

A simple plan

The guide "10 Steps to Build an SOE" provides insight into what content and life cycles need to be brought together. The content views and composite content views in this article roughly follow an SOE model.

We can view the content life cycle in Satellite through this lens. Update the content, publish the content, validate the content, promote the content to the next life-cycle environment, rinse and repeat...

If we break it down into discrete steps for automation we need to get a little more granular:

- Update the Content View Filters to include the latest patches.
- Publish the content views (CVs) with the latest patches to the Library.
- Publish the composite content views (CCVs) to the Library.
- Promote the CVs and CCVs to the first SOE environment.
- Build some test machines.
- Smoke test the new builds to see that builds didn’t get broken.
- Install and configure standard software packages on the test machines.
- If basic tests succeed, promote the CVs and CCVs to the next environment.
- Rinse and repeat as necessary to get these CVs and CCVs up the environment stack.

We could also look at keeping the test machine builds around, so both fresh builds and patched systems can be tested on iterations. Let’s just start here though—it is plenty!

The SOE environment

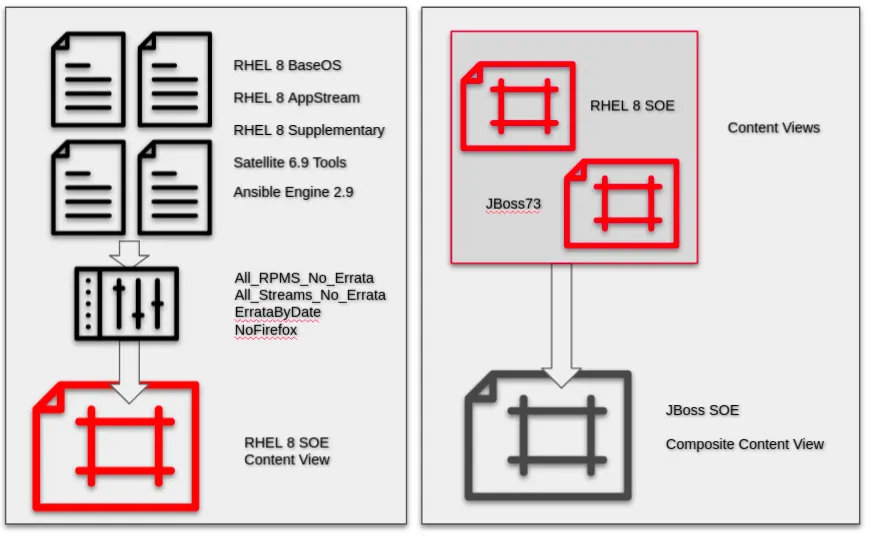

The SOEs in this article start out fairly simple because this is a demo environment and not a production environment. The same principles will apply to a production environment. Figure 1 shows an example of the environments and content views we will use.

Both Red Hat repositories and third-party repositories are brought together to create a variety of curated content for the environment. We are using some standard filters throughout:

- All RPMs with no Errata: includes all RPMs that haven’t been patched in the current release.
- All Streams with no Errata: includes all application streams that haven’t been patched in the current release.
- Errata by Date: includes all security, bug fix and enhancement errata that have been updated up to a selected date.

These basic filters give us a stable view on the content and reproducible builds.

Once we have included everything that we want, we can get more granular and eliminate packages and patches that are unnecessary. As an example, Firefox tends to have more frequent patches and is usually considered inappropriate for headless servers, so let’s exclude Firefox from the view. “Include filters” are processed first, then “Exclude filters.”

Figure 1: The relationship between repositories, content filters, content views and composite content views.

The end date of the ErrataByDate filter determines the last published errata to be included in the view. We periodically update this filter to pull in new errata and then publish a new CV/CCV version. Naming filters systematically makes automation simpler.

1. Automate the filter end date

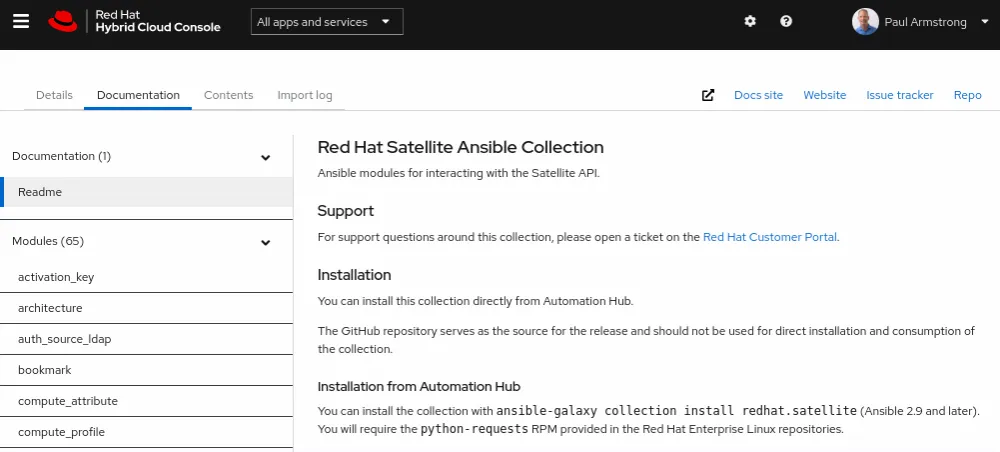

The new, fully supported Ansible collection redhat.satellite makes this automation easier. You can install it from the Automation Hub on the Red Hat Hybrid Cloud Console. Red Hat Ansible Automation Platform can be configured to automatically download collections and update them as part of an Ansible Automation Platform project.
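As a rough sketch of the project-level setup mentioned above: Ansible Automation Platform reads a collections/requirements.yml file in the project and installs the collections it lists when the project syncs. The file below is an illustration and is not taken from this article's repository. It assumes the controller already has a credential for Automation Hub.

```yaml
# collections/requirements.yml
# Assumption: the controller is already configured with access to Automation Hub.
---
collections:
  - name: redhat.satellite
```

On a workstation, the same file can be passed to `ansible-galaxy collection install -r collections/requirements.yml`.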

The complete documentation of the collection is provided on the Automation Hub. You can review this as you work along with the example.

The code that goes along with this article is available in a GitHub repository here.

The definitions of our content views and composite content views are specified as YAML lists in a variable file. We created a limited-scope user for managing the publication process. The critical user data for connecting to our Satellite Server is stored in encrypted format using Ansible Vault. Here is part of the all.yml variable file for our Satellite project.

Note that we keep the sensitive data in a separate file managed by Ansible Vault. The separate vault variable file is encrypted and contains the vault_* variables that are referenced in the YAML.

Separation in this way allows for managing the passwords as a group in a single file. This is only one of the methods available for managing credentials. Inline credentials may also be used in more automated pipelines where the credentials are managed more granularly. You may also find other ways of organizing your data. As an example, Red Hat Identity Management Vaults can be used for storing this data centrally and utilizing certificate authentication to retrieve the data.
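A minimal sketch of that separation, assuming a group_vars/all/ directory layout. The path and the values are placeholders for illustration, not the contents of the actual repository; only the vault_* variable names come from the variable file.

```yaml
# group_vars/all/vault.yml (hypothetical path)
# Encrypt it with: ansible-vault encrypt group_vars/all/vault.yml
---
vault_sat_publisher_username: "cv-publisher"   # placeholder value
vault_sat_publisher_password: "change-me"      # placeholder value
```

When running from the command line, `ansible-playbook --ask-vault-pass` prompts for the vault password. In automation controller, a vault credential attached to the job template serves the same purpose.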

Note the filter_enddate variable is set at the time of the Ansible run.

    sat_server_url: "https://sat6.parmstrong.ca"
    sat_organization: "Default Organization"
    sat_publisher_username: "{{ vault_sat_publisher_username }}"
    sat_publisher_password: "{{ vault_sat_publisher_password }}"
    sat_validate_certs: true
    description: "Published by ansible-tower on {{ ansible_date_time.date }}"
    filter_enddate: "{{ ansible_date_time.date }}"
    num_cvs_to_keep: 3
    content_views:
      - name: "RHEL8_SOE"
        desc: "Red Hat Enterprise Linux 8 Standard Operating Environment Content"
        org: "{{ sat_organization }}"
        repositories:
          - name: "Red Hat Ansible Engine 2.9 RPMs for RHEL 8 Server x86_64"
            product: "Red Hat Ansible Engine"
          - name: "Red Hat Enterprise Linux 8 for x86_64 - AppStream RPMs 8"
            product: "Red Hat Enterprise Linux for x86_64"
          - name: "Red Hat Enterprise Linux 8 for x86_64 - BaseOS RPMs 8"
            product: "Red Hat Enterprise Linux for x86_64"
          - name: "Red Hat Enterprise Linux 8 for x86_64 - Supplementary RPMs 8"
            product: "Red Hat Enterprise Linux for x86_64"
          - name: "Red Hat Enterprise Linux 8 for x86_64 - AppStream Kickstart 8.4"
            product: "Red Hat Enterprise Linux for x86_64"
          - name: "Red Hat Enterprise Linux 8 for x86_64 - BaseOS Kickstart 8.4"
            product: "Red Hat Enterprise Linux for x86_64"
          - name: "Red Hat Satellite Tools 6.9 for RHEL 8 x86_64 RPMs"
            product: "Red Hat Enterprise Linux Server"
        filters:
          - name: "AllPackagesNoErrata"
            type: "rpm"
            inclusion: "true"
            description: "Include all packages with no errata for all repositories"
            original_packages: true
            repositories: "[]"
          - name: "AllStreamsNoErrata"
            type: "module"
            inclusion: "true"
            description: "Include all packages with no errata for all repositories"
            original_packages: true
            repositories: "[]"
          - name: "ErrataByDate"
            type: "erratum"
            inclusion: "true"
            description: "Include all errata updated as of the 1st of the month"
            repositories: "[]"
            rules:
              - name: "errata-by-date-{{ filter_enddate }}"
                end_date: "{{ filter_enddate }}"
                date_type: "issued"
                types:
                  - "enhancement"
                  - "bugfix"
                  - "security"
          - name: "NoFireFox"
            type: "rpm"
            inclusion: "false"
            description: "Do not provide Firefox to servers"
            original_packages: true
            repositories: "[]"
            rules:
              - name: "firefox*"
                basearch: "x86_64"
        date_filter_name: "ErrataByDate"
        environments:
          - "SOE_Development"

The remainder of the content views are described similarly. The above information is consumed by our Ansible code to promote and manage the content. Please note that for this article, it is assumed that the environments, content views and filters already exist. There is more code on my GitHub site that actually goes through the process of building all the environments, views, etc. using the same definition file.

Once we have our data organized, updating all the filters is simple. We can set the filter_enddate to the date that we run the play for simplicity. See the cvpublisher role.

    - name: Update the Errata-by-date filter for content views
      redhat.satellite.content_view_filter:
        username: "{{ sat_publisher_username }}"
        password: "{{ sat_publisher_password }}"
        server_url: "{{ sat_server_url }}"
        name: "{{ item.date_filter_name }}"
        organization: "{{ organization }}"
        content_view: "{{ item.name }}"
        description: "{{ description }}"
        filter_type: "erratum"  # required for date filter
        date_type: "updated"  # default
        end_date: "{{ filter_enddate }}"
        inclusion: true
      loop: "{{ content_views }}"
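The filter description in the variable file mentions the 1st of the month, while filter_enddate defaults to the date of the run. If you would rather pin the end date to the first day of the current month no matter when the play runs, something like the following could go ahead of the filter update. This is a sketch, not part of the cvpublisher role, and it assumes facts have been gathered so that ansible_date_time is available.

```yaml
# Sketch only: overrides the filter_enddate value from the variable file.
# ansible_date_time.month is already zero-padded (for example "05").
- name: Pin the errata end date to the first day of the current month
  ansible.builtin.set_fact:
    filter_enddate: "{{ ansible_date_time.year }}-{{ ansible_date_time.month }}-01"
```

A value set with set_fact takes precedence over the one in the variable file, but extra variables supplied at launch would still win, so a workflow can override the date when needed.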

2. Automate publishing the content views

With the filters updated, we can publish new versions of our content views to the Library...

    - name: Publish the new content view version
      redhat.satellite.content_view_version:
        username: "{{ sat_publisher_username }}"
        password: "{{ sat_publisher_password }}"
        server_url: "{{ sat_server_url }}"
        content_view: "{{ item.name }}"
        organization: "{{ organization }}"
        description: "{{ description }}"
      loop: "{{ content_views }}"

3. Automate publishing the composite content views

…and then publish our composite content views.

    - name: Publish the new composite content views version
      redhat.satellite.content_view_version:
        username: "{{ sat_publisher_username }}"
        password: "{{ sat_publisher_password }}"
        server_url: "{{ sat_server_url }}"
        content_view: "{{ item.name }}"
        organization: "{{ organization }}"
        description: "{{ description }}"
      loop: "{{ composite_content_views }}"

With our data organized, very little code is left to manage, and the code is extremely portable. This process would typically take an admin many hours of work to do manually, or significant time to script in bash using the hammer command!
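The connection parameters are repeated in every task. One way to trim that further is Ansible's module_defaults with the collection's action group. The play below is a sketch under assumptions: it assumes your ansible-core and redhat.satellite versions support the `group/redhat.satellite.satellite` action group (check the collection README), and it uses sat_organization from the variable file for the organization.

```yaml
# Sketch only: shared connection settings for every redhat.satellite module in the play.
- name: Publish content views with shared connection settings
  hosts: localhost
  gather_facts: true  # ansible_date_time is used by the description variable
  module_defaults:
    group/redhat.satellite.satellite:
      username: "{{ sat_publisher_username }}"
      password: "{{ sat_publisher_password }}"
      server_url: "{{ sat_server_url }}"
      validate_certs: "{{ sat_validate_certs }}"
  tasks:
    - name: Publish the new content view version
      redhat.satellite.content_view_version:
        content_view: "{{ item.name }}"
        organization: "{{ sat_organization }}"
        description: "{{ description }}"
      loop: "{{ content_views }}"
```

This also puts the sat_validate_certs setting from the variable file to use, which the individual tasks do not pass explicitly.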

4. Promote the content views and composite content views

Now we promote the views to our SOE development environment. The following Ansible code loops over the list of content views and their associated life-cycle environments and promotes them. See the cvpromoter role for more details.

    - name: Promote the configured content views
      redhat.satellite.content_view_version:
        username: "{{ sat_publisher_username }}"
        password: "{{ sat_publisher_password }}"
        server_url: "{{ sat_server_url }}"
        organization: "{{ organization }}"
        content_view: "{{ item.name }}"
        description: "{{ description }}"
        current_lifecycle_environment: Library
        lifecycle_environments: "{{ item.environments }}"
      loop: "{{ content_views }}"

    - name: Promote the configured composite content views
      redhat.satellite.content_view_version:
        username: "{{ sat_publisher_username }}"
        password: "{{ sat_publisher_password }}"
        server_url: "{{ sat_server_url }}"
        organization: "{{ organization }}"
        content_view: "{{ item.name }}"
        description: "{{ description }}"
        current_lifecycle_environment: Library
        lifecycle_environments: "{{ item.environments }}"
      loop: "{{ composite_content_views }}"

We can provide different lists of content and composite content views in our control file with a limited list of life-cycle environments to control how we will manage these in a pipeline. One of the options we can take advantage of is using Ansible Automation Controller (formerly known as Ansible Tower) and providing variable overrides in different stages of a workflow. This can allow us to develop sophisticated pipelines for the publishing and testing of our updated content.
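To make such overrides work for later stages, the source environment has to be a variable too, since the tasks above always promote from Library. A minimal sketch follows; `promote_from` is a hypothetical variable introduced here for illustration and is not part of the cvpromoter role.

```yaml
# Sketch only: promote from a configurable source environment.
- name: Promote content views from one stage to the next
  redhat.satellite.content_view_version:
    username: "{{ sat_publisher_username }}"
    password: "{{ sat_publisher_password }}"
    server_url: "{{ sat_server_url }}"
    organization: "{{ sat_organization }}"
    content_view: "{{ item.name }}"
    # promote_from is hypothetical; it falls back to Library for the first stage
    current_lifecycle_environment: "{{ promote_from | default('Library') }}"
    lifecycle_environments: "{{ item.environments }}"
  loop: "{{ content_views }}"
```

A later workflow node could then supply extra variables along these lines, where "SOE_QA" is an invented environment name:

```yaml
promote_from: "SOE_Development"
content_views:
  - name: "RHEL8_SOE"
    environments:
      - "SOE_QA"  # hypothetical next life-cycle environment
```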

5. Build test machines

In this section we use our Satellite collection to deploy systems and build them with our new content views. If you examine the create_host.yml file in the Satellite project, the Ansible module used to create the test system is redhat.satellite.host. This module can be used to create a host using any of the available methods that Satellite supports—bare metal using discovered hosts, hypervisor compute resources or cloud compute resources.

I’ve included the section that builds the new host. We use slightly different sets of parameter values to build a bare-metal machine, an on-premises virtual machine or a virtual machine on a cloud provider. This is more configuration than code (the call for a bare-metal system does not use compute_resource or compute_profile). The code ahead of this task simply sets up these values.

    - name: "Deploy the virtual host"
      redhat.satellite.host:
        username: "{{ sat_publisher_username }}"
        password: "{{ sat_publisher_password }}"
        server_url: "{{ sat_server_url }}"
        organization: "{{ sat_organization }}"
        location: "{{ sat_location }}"
        name: "{{ host.fqdn }}"
        comment: "{{ host_build_comment }}"
        hostgroup: "{{ host.hostgroup }}"
        compute_resource: "{{ compute_resource }}"
        compute_profile: "{{ host.compute_profile }}"
        mac: "{{ target_mac }}"
        build: true
        state: present
        compute_attributes:
          start: "1"
      register: deploy_response
      # The bool filter also accepts string values such as "true" passed as extra vars
      when: host.compute_resource != "Baremetal" and deploy | bool

We call this code as many times as necessary to build the hosts that will represent each of our content views or composite content views that are used in an end-state system. These will be our test machines.
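Before any tests can run, the new hosts need to finish building and come back up. One simple way to wait for that, sketched here with the built-in wait_for module, is to poll the SSH port from the control node. The delay and timeout values are guesses that you would tune to your own build times.

```yaml
# Sketch only: block until the freshly built host answers on the SSH port.
- name: Wait for the new host to accept SSH connections
  ansible.builtin.wait_for:
    host: "{{ host.fqdn }}"
    port: 22
    delay: 120     # assumed: give the installer time to start before polling
    timeout: 3600  # assumed: fail the task if the build takes more than an hour
  delegate_to: localhost
  when: deploy | bool
```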

6. Run smoke tests

These are basic tests to ensure that we didn’t break something fundamental in the publishing or promoting of the content, or worse, a regression has crept in upstream that will critically affect our builds. Where there is smoke, there is fire.

The smoke tests that you define will vary depending on your environment. Typically, they are very simple tests: whether the system boots and starts, whether it can configure its time accurately, whether it can perform forward and reverse lookups on its own FQDN(s), whether it can discover services through DNS, whether it can authenticate standard user accounts against specified directories, etc.

These are simple verifications that the system has basic functionality. Please see the code in the smoketest GitHub repository for some of the tests that I use as demonstrations.
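To give a flavor of what such checks can look like, here is a small sketch that is independent of the smoketest repository. The `test_machines` group and the `smoke_test_user` variable are hypothetical names, and the time check assumes a systemd version that provides `timedatectl show`, as RHEL 8 does.

```yaml
# Sketch only: a handful of basic checks against the freshly built hosts.
- name: Basic smoke tests
  hosts: test_machines  # hypothetical inventory group
  gather_facts: true
  tasks:
    - name: Forward lookup of the host's own FQDN
      ansible.builtin.command:
        argv:
          - getent
          - hosts
          - "{{ ansible_facts['fqdn'] }}"
      changed_when: false

    - name: Reverse lookup of the host's primary IPv4 address
      ansible.builtin.command:
        argv:
          - getent
          - hosts
          - "{{ ansible_facts['default_ipv4']['address'] }}"
      changed_when: false

    - name: Confirm that the system clock is synchronized
      ansible.builtin.command: timedatectl show --property=NTPSynchronized --value
      register: ntp_sync
      changed_when: false
      failed_when: ntp_sync.stdout | trim != "yes"

    - name: Confirm that a standard directory account resolves
      ansible.builtin.getent:
        database: passwd
        key: "{{ smoke_test_user }}"  # hypothetical variable naming a known account
```

Each task fails the play when its check fails, which is all a workflow needs in order to stop the promotion.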

7. Install and configure standard packages

These are the standard packages that are configured in your content views or composite content views. The standard packages may be packages that are provided by Red Hat, provided by an open source repository or provided by a third-party vendor. Whatever the case, in this step we are testing that:

- We published our packages and streams appropriately to our content and composite content views and haven’t missed any content.
- Our standard package installations function appropriately and accept a standard configuration that is used in our environments.

In this section, we are consuming application configurations that are templated from development, QA or production sources that allow us to determine that the installation and configuration processes are not broken (to the best of our ability). This may be arbitrarily complex—usually for a content test, this effort is limited. Apply the configuration and determine if the application starts and the appropriate processes are running.
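As a minimal illustration of "apply the configuration and determine if the application starts," the tasks below install a package from the new content, start it and assert that it is running. Apache httpd is used purely as an example. The real tests would apply your own templated configuration before starting the service.

```yaml
# Sketch only: install from the new content view, start the service, verify it runs.
- name: Install the web server from the new content
  ansible.builtin.dnf:
    name: httpd
    state: present

- name: Start the web server
  ansible.builtin.service:
    name: httpd
    state: started

- name: Collect the state of all services
  ansible.builtin.service_facts:

- name: Verify that the web server is running
  ansible.builtin.assert:
    that:
      - ansible_facts.services['httpd.service'].state == 'running'
    fail_msg: "httpd did not start after installation"
```

A failed install points at missing content in the view, while a failed start points at a package or configuration regression.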

In our examples we are using some standard Ansible sample code to deploy a LAMP stack, a JBoss standalone application and a WordPress deployment. The code for these is in the ansible-examples repository on my GitHub. The projects are lamp_simple_rhel7 (updated for RHEL 8), jboss-standalone, and wordpress-nginx_rhel7 (we will substitute the Drupal project for WordPress later when it is complete). Feel free to use any of them or create your own.

8. If the tests succeed, we can promote the content views

This section will invoke the same Ansible code to promote the content views and composite content views. If the tests fail, we will log the information and send out some notifications. Optionally, we can roll back the promotions or delete the promoted content version.
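Within a single play, that gate could be sketched with a block and rescue. The `content_tests.yml` task file is a hypothetical name. The cvpromoter role is the one referenced earlier. In practice the tests run on the test machines while promotion talks to Satellite, so a workflow with separate success and failure nodes is likely a better fit than this simplified form.

```yaml
# Sketch only: promote when the tests pass, report and fail when they do not.
- name: Test the new content, then promote it only on success
  block:
    - name: Run the smoke and package tests
      ansible.builtin.include_tasks: content_tests.yml  # hypothetical task file

    - name: Promote to the next environment
      ansible.builtin.include_role:
        name: cvpromoter
  rescue:
    - name: Record which task failed
      ansible.builtin.debug:
        msg: "Content validation failed at '{{ ansible_failed_task.name }}'; promotion skipped."

    - name: Fail the job so a workflow can branch to a notification node
      ansible.builtin.fail:
        msg: "Content tests failed; see the job output for details."
```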

Ready, set, automate

In summary, this post has walked you through the plan for automating content promotion for content views and composite content views along a life-cycle environment in Red Hat Satellite. The Ansible example code provided demonstrates the use of the redhat.satellite collection as a supported method of automating Satellite. This is a small taste of the capabilities that can be provided through the combination of the management tools from Red Hat.

I plan to show you how to set up Ansible Automation Platform to run the automation workflow in a future post. In the meantime, check out the exciting automation announcements from AnsibleFest 2021 here.

About the author

Paul Armstrong helps customers meet business outcomes through an in-depth understanding of complex business problems and by designing, building and documenting complete solutions using Red Hat and open source technologies.
