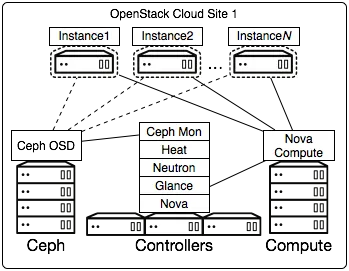

Those familiar with OpenStack already know that deployment has historically been a bit challenging. That's mainly because deployment includes a lot more than just getting the software installed - it’s about architecting your platform to use existing infrastructure as well as planning for future scalability and flexibility. OpenStack is designed to be a massively scalable platform, with distributed components on a shared message bus and database backend. For most deployments, this distributed architecture consists of Controller nodes for cluster management, resource orchestration, and networking services, Compute nodes where the virtual machines (the workloads) are executed, and Storage nodes where persistent storage is managed.

The Red Hat recommended architecture for fully operational OpenStack clouds includes predefined and configurable roles that are robust, resilient, ready to scale, and capable of integrating with a wide variety of existing 3rd party technologies. We do this by leveraging the logic embedded in Red Hat OpenStack Platform Director (based on the upstream TripleO project).

With Director, you'll use OpenStack language to create a truly Software Defined Data Center. You’ll use Ironic drivers for your initial bootstrapping of servers, and Neutron networking to define management IPs and provisioning networks. You will use Heat to document the setup of your server room, and Nova to monitor the status of your control nodes. Because Director comes with pre-defined scenarios optimized from our 20 years of Linux know-how and best practices, you will also learn how OpenStack is configured out of the box for scalability, performance, and resilience.

Why do kids in primary school learn multiplication tables when we all have calculators? Why should you learn how to use OpenStack in order to install OpenStack? Mastering these pieces is a good thing for your IT department and your own career, because they provide a solid foundation for your organization’s path to a Software Defined Data Center. Eventually, you’ll have all your Data Center configuration in text files stored on a Git repository or on a USB drive that you can easily replicate within another data center.

In a series of coming blog posts, we’ll explain how Director has been built to accommodate the business requirements and the challenges of deploying OpenStack and its long-term management. If you are really impatient, remember that we publish all of our documentation in the Red Hat OpenStack Platform documentation portal (link to version 8).

Lifecycle of your OpenStack cloud

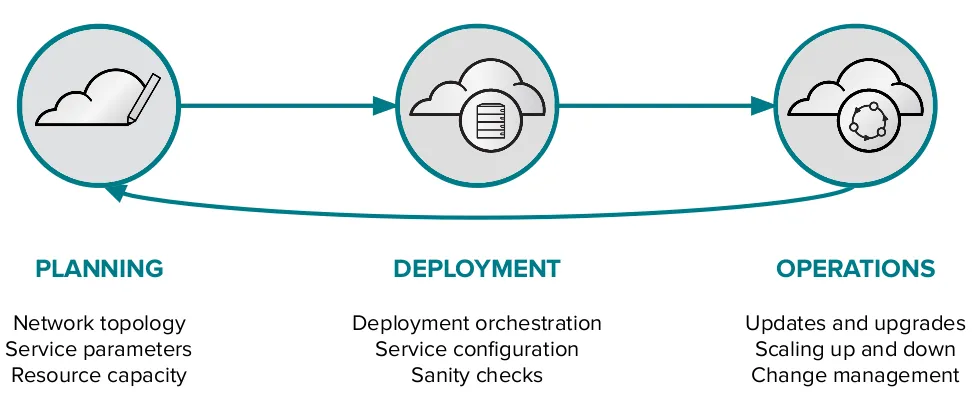

Director is defined as a lifecycle management platform for OpenStack. It has been designed from the ground up to bridge the gap between the planning and design (day-0), the installation tasks themselves (day-1), and the ongoing operation, administration and management of the environment (day-2).

- Firstly, the pre-deployment planning stage (day-0). Director provides configuration files to define the target architecture, including networking and storage topologies, OpenStack service parameters, integrations with third-party plugins, and everything else needed to suit the requirements of an organisation. It also verifies that target hardware nodes are ready to be deployed and that their performance is equivalent (we call that “black-sheep detection”).

- Secondly, the deployment stage (day-1). This is where the bulk of the Director functionality is executed. One of the most important steps is verifying that the proposed configuration is sane: there’s no point in trying to deploy a configuration that pre-flight validation already shows will fail. Assuming that the configuration is valid, Director takes care of the end-to-end orchestration of the deployment, including hardware preparation, software deployment, and, once the environment is up and running, configuring OpenStack to perform as expected.

- Lastly, the operations stage in the long run (day-2). Red Hat has listened to our OpenStack customers and their Operations teams, and designed Director accordingly. It can check the health of an environment and perform changes, such as adding or replacing OpenStack nodes, applying minor release updates (security updates), and automatically upgrading between major versions, for example from Kilo to Liberty.
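
The “black-sheep detection” idea from the day-0 stage can be illustrated with a small sketch. This is not Director's implementation: the function name `find_black_sheep`, the benchmark figures, and the 10% tolerance are all assumptions made up for illustration. The sketch flags any node whose benchmark score deviates from the group median by more than a relative tolerance.

```python
from statistics import median


def find_black_sheep(scores, tolerance=0.10):
    """Return the names of nodes whose score deviates from the group
    median by more than `tolerance` (a fraction of the median).

    Hypothetical helper for illustration only, not a Director API.
    """
    if not scores:
        return []
    mid = median(scores.values())
    if mid == 0:
        # Avoid dividing by zero: any non-zero score is an outlier.
        return sorted(name for name, score in scores.items() if score != 0)
    return sorted(
        name
        for name, score in scores.items()
        if abs(score - mid) / mid > tolerance
    )


# Made-up disk throughput figures (MB/s) for four supposedly identical servers.
benchmarks = {
    "node-1": 510.0,
    "node-2": 498.0,
    "node-3": 505.0,
    "node-4": 310.0,
}
outliers = find_black_sheep(benchmarks)
print(outliers)
```

Using the median rather than the mean keeps a single slow node from dragging the reference value toward itself, which is the reason for that choice in this sketch.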

Despite being a relatively new offering from Red Hat, Director has strong technology foundations: a convergence of many years of upstream engineering work, established technology for Linux and Cloud administration, and newer DevOps automation tools. This has allowed us to create a powerful, best-of-breed deployment tool that's in line with the overall direction of the OpenStack project (with TripleO), as well as the OPNFV installation projects (with Arno).

Feature Overview

Upon initial creation of the Red Hat OpenStack Platform Director, we improved all the major TripleO components and extended them to perform tasks that go beyond just the deployment. Currently, Director is able to perform the following tasks:

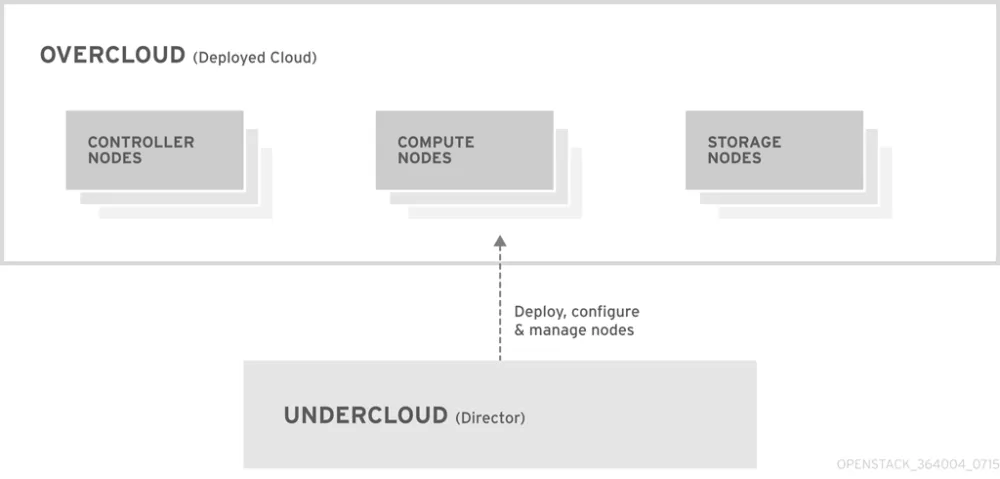

- Deploy a management node (called undercloud) as the bootstrap OpenStack cloud. From there, we define the organisation’s production-use overcloud, combining our reference configurations and user-provided customisations. Director provides command line utilities (and a graphical web interface) as a shortcut to access the undercloud OpenStack RESTful APIs.

- The undercloud interacts with bare metal hardware via Ironic (to do PXE boot and power management), which relies on an extensive array of supported drivers. Red Hat collaborates with vendors so that their hardware will be compatible with Ironic, giving customers flexibility in the hardware platforms they choose to consume.

- During overcloud deployment, Director can inspect the hardware and automatically assign roles to specific nodes, so nodes are chosen based on their system specification and performance profile. This vastly reduces the administrative overhead, especially with large scale deployments.

- Director ships with a number of validation tools to verify that any user-provided templates (like the networking files) are correct, which can also be useful when performing updates or upgrades. For that, we leverage Ansible in the upgrade sanity check scripts. Once deployed, you can automatically test the overcloud using Director’s Tempest toolset. Tempest runs hundreds of end-to-end tests to verify that the overcloud is working as expected and conforms to the upstream API specification. Red Hat is committed to shipping the standard API specification and not breaking update and upgrade paths for customers, so providing an automated mechanism for checking compatibility is of paramount importance.

- In terms of the deployment architecture itself, Red Hat has built a highly available reference architecture containing our recommended practices for availability, resiliency, and scalability. The default Heat templates shipped within Director have been engineered with this reference architecture in mind, so a customer deploying OpenStack with Director can leverage our extensive work with customers and partners to provide maximum stability, reliability, and security features for their platform. For instance, Director can deploy SSL/TLS based OpenStack endpoints for better security via encrypted communications.

- The majority of our production customers are using Ceph with OpenStack. That’s why Ceph is the default storage backend within Director, which automatically deploys Ceph monitors on controller nodes and Ceph OSDs on dedicated storage nodes. Alternatively, Director can connect the OpenStack installation to an existing Ceph cluster. Director supports a wide variety of Ceph configurations, all based on our recommended best practices.

- Last, but not least, the overcloud networks defined within Director can now be configured as either IPv4 or IPv6. Feel free to check our OpenStack IPv6 networking guide. The exceptions are the provisioning network (PXE) and the VXLAN/GRE tunnel endpoints, which can only be IPv4 at this stage. Dual stack IPv4 and IPv6 networking is available only for non-infrastructure networks, for example, tenant, provider, and external networks.

- For 3rd-party plugin support, our partners are working with the upstream OpenStack TripleO community to add their components, like other SDN or SDS solutions. The Red Hat Partner Program of certified extensions allows our customers to enable and automatically install those plugins via Director (for more information, visit our documentation on Partner integrations with Director).
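
The IPv4/IPv6 rule from the list above (the provisioning network and the tunnel endpoints must stay on IPv4) lends itself to a simple pre-flight check. The sketch below is only an illustration of that rule using Python's standard `ipaddress` module. The function `check_networks`, the network names, and the address ranges are assumptions, and do not reflect Director's own validation code or template format.

```python
import ipaddress

# Rule taken from the text: these networks can only be IPv4 at this stage.
# The names themselves are illustrative, not Director identifiers.
IPV4_ONLY = {"provisioning", "tunnel"}


def check_networks(networks):
    """Return a list of problems found in a {name: CIDR} mapping.

    Hypothetical helper for illustration only.
    """
    problems = []
    for name, cidr in networks.items():
        net = ipaddress.ip_network(cidr)
        if name in IPV4_ONLY and net.version != 4:
            problems.append(f"{name}: must be IPv4, got {cidr}")
    return problems


# Example plan using documentation and unique-local address ranges.
plan = {
    "provisioning": "192.0.2.0/24",
    "tunnel": "fd00:fd00:fd00:5000::/64",
    "internal_api": "fd00:fd00:fd00:2000::/64",
    "external": "2001:db8::/64",
}
problems = check_networks(plan)
for problem in problems:
    print(problem)
```

Here only the `tunnel` entry is reported, because it is the one IPv4-only network that was given an IPv6 range.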

In our next post, we’ll explain the components of Director (TripleO) in further detail, how they help you deploy and manage the Red Hat OpenStack Platform, and take a deep dive into how they work together. This will help you understand what is, in our opinion, the most important feature of all: Automated OpenStack Updates and Upgrades. Stay tuned!
