The manufacturing industry has consistently used technology to fuel innovation, production optimization and operations. Now, with the combination of edge computing and AI/ML, manufacturers can bring processing power closer to their data. Acting on that data faster can help them proactively discover potential errors at the assembly line, improve product quality, and reduce potential downtime through predictive maintenance. And in the unlikely event of a drop in communications with a central/core site, individual manufacturing plants can continue to operate.

But this revolution of process and data requires many complex systems: sensors, line servers, wireless gateways, operational dashboards, servers and tablets for the maintenance teams on the floor to monitor the whole system. Unlike the factory systems of the past, which were custom built and managed by operations technology (OT), the desired approach is to support use cases that collect, analyze and act on machine, plant, and factory floor data in real time, and to do so in a standardized manner.

To achieve this manufacturing vision, along with the continued digitalization of factories, manufacturers must now take a hard look at their operations and technology to meet these new opportunities. It requires factory systems to mirror the best practices of a modern IT environment based on containers, Kubernetes, agile development, AI/ML and automation. All of these technologies, coincidentally, are components of open hybrid cloud, an IT footprint that can be used to accommodate these manufacturing technologies, from the edge of the network to the factory floor.

What does it take? Let’s break it down

Let’s examine a manufacturing use case that needs to capture sensor information from line servers and route it to the on-site factory cluster, where data can be analyzed in real time with AI/ML models. This data can then be sent to the centralized data center or cloud for further processing and storage. We’ll cover how, within this use case, what is done in a single plant or location can scale consistently to multiple sites to meet the needs of modern manufacturing organizations, including:

- A production model: manage the complexity and relationships of manufacturing “silos” and links in the supply chain.
- Real-time processing and analysis of data: ability to monitor orders, materials, machine status, shipments.
- Failure prediction (predictive maintenance): anticipate and act on production line events before they occur.
- Security: conformance to corporate and government IT security policies.
- Compliance: auditing of factory and core data center systems using operational and historical record keeping.
- Modularity: transition from monolithic production systems to microservices.
- Factory modernization: IT systems that can adapt to factory production line changes.
- Safety: workers and equipment can be monitored continuously and many issues can be detected much earlier.

Meeting the needs of consistency and intelligence at the edge

To meet the needs of modern manufacturing organizations, it's important to acknowledge that manufacturing customers are under tremendous pressure to drive innovation and efficiency on their plant floors. This requires a holistic approach that combines modern IT products and emerging methodologies, with the goal of avoiding the tedious and error-prone manual configuration of different systems and applications at scale.

One of the top considerations in building the edge architecture for this one manufacturing plant is that it must scale to hundreds of factories without much manual intervention or a large increase in the resources needed to manage them.

In addition, it needs sophisticated data messaging between potentially disconnected systems, robust storage for data from the factory line servers to the corporate data lake, and integrated machine learning (ML) components and pipelines for delivering factory automation intelligence. Also, the hardware footprint for manufacturing systems can vary dramatically, from low-power, low-memory units for data collection on the factory floor all the way up to multi-core, large-memory nodes in a central data center.

Two fundamental strategies that can help achieve this are:

- GitOps and blueprints
- AI/ML

GitOps and Blueprints

GitOps is a paradigm whereby enterprises use git as the declarative source of truth for the continuous deployment (CD) of system updates. Administrators can carefully and efficiently manage system complexity by pushing reviewed commit changes of configuration settings and artifacts to git, where they are then automatically pushed into operational systems. The idea is that the current state of complex distributed systems can be reflected within git as code.
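The idea of git holding the declared state can be illustrated with a small comparison between what is declared and what is running. The following is only a minimal sketch: the workload names, image tags and the `plan_changes` and `reconcile` helpers are hypothetical, and plain dictionaries stand in for both the repository contents and the live system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Change:
    """One action needed to move the running system toward the declared state."""
    action: str  # "create", "update" or "delete"
    name: str


def plan_changes(declared: dict, running: dict) -> list:
    """Compare the state declared in git with the state observed in the system."""
    changes = []
    for name, spec in declared.items():
        if name not in running:
            changes.append(Change("create", name))
        elif running[name] != spec:
            changes.append(Change("update", name))
    for name in running:
        if name not in declared:
            changes.append(Change("delete", name))
    return changes


def reconcile(declared: dict, running: dict) -> dict:
    """Apply the planned changes and return the new running state."""
    result = dict(running)
    for change in plan_changes(declared, running):
        if change.action == "delete":
            del result[change.name]
        else:
            result[change.name] = declared[change.name]
    return result


# Hypothetical declared state (as committed to git) and observed state.
declared = {
    "line-dashboard": {"image": "dashboard:1.4", "replicas": 2},
    "sensor-router": {"image": "router:2.0", "replicas": 1},
}
running = {
    "line-dashboard": {"image": "dashboard:1.3", "replicas": 2},
    "legacy-collector": {"image": "collector:0.9", "replicas": 1},
}

for change in plan_changes(declared, running):
    print(change.action, change.name)
```

After `reconcile` runs, the running state equals the declared state, so a reviewed commit is the only way to change what the system does.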

In Kubernetes, GitOps is a powerful abstraction since it mirrors the underlying design goals of that platform: a Kubernetes cluster is always reconciling to a point of truth with respect to what developers and administrators have declared should be the target state of the cluster. An ideal GitOps model for Kubernetes employs a pipeline approach where YAML-based configuration and container image changes can originate as git commits that then trigger activities in the pipeline that result in updates to applications and indeed the cluster itself. Below is a graphical representation of a GitOps flow:

So for manufacturing environments, we can see the utility of something like GitOps for a consistent, declarative approach to managing individual cluster changes and upgrades. But we also know that edge manufacturing environments are heterogeneous in their size and type of hardware resources.

This is where the concept of blueprints comes in: declarative specifications organized in layers, so that infrastructure settings that can be shared are shared, while still allowing for customization where it is required. Blueprints are used to define all the components used within an edge reference architecture, such as hardware, software, management tools, and point of delivery (PoD) tooling.
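One way to picture the layering is as a series of dictionaries merged in order, where later layers override earlier ones only where they say something different. This is a rough sketch rather than the format of any particular blueprint tool: the keys, values and the `merge_layers` and `render_blueprint` helpers are assumptions made for illustration.

```python
import copy


def merge_layers(base: dict, overlay: dict) -> dict:
    """Return a new dict in which overlay values override base values.

    Nested dicts are merged key by key; the inputs are left unmodified.
    """
    merged = copy.deepcopy(base)
    for key, value in overlay.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = merge_layers(merged[key], value)
        else:
            merged[key] = copy.deepcopy(value)
    return merged


def render_blueprint(*layers: dict) -> dict:
    """Merge layers from most shared (first) to most specific (last)."""
    result = {}
    for layer in layers:
        result = merge_layers(result, layer)
    return result


# Hypothetical layers: shared defaults, a hardware profile, one site.
base = {
    "messaging": {"protocol": "mqtt", "retention_hours": 24},
    "storage": {"replicas": 3},
    "monitoring": {"enabled": True},
}
small_factory = {"storage": {"replicas": 1}}
plant_a = {"messaging": {"retention_hours": 72}, "site": {"name": "plant-a"}}

blueprint = render_blueprint(base, small_factory, plant_a)
print(blueprint)
```

Shared settings such as the messaging protocol are written once in the base layer, while the small-footprint profile and the site layer carry only their differences.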

AI/ML to turn insights into action

Given that this use case involves the generation of large amounts of sensor data, we need to turn to AI/ML capabilities to produce actionable insights from that data. Human operators need to be able to view HMI/SCADA (human-machine interface/supervisory control and data acquisition) dashboards in the factory that show not only the real-time status of production lines but also predictive information about factory health. The data from the line servers serves two purposes for AI/ML:

- To drive the development, testing and deployment of machine learning models for predictive maintenance
- To provide live data streams into those deployed models for inference at the factory
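To make the second purpose concrete, here is a minimal sketch of scoring a live stream of readings as they arrive. It uses a rolling z-score as a simple statistical stand-in for a trained model; the class name, window size, threshold and vibration values are all assumptions for illustration, and a real deployment would call the deployed model instead.

```python
from collections import deque
from statistics import mean, stdev


class RollingAnomalyDetector:
    """Flag readings that deviate strongly from recent history."""

    def __init__(self, window: int = 20, threshold: float = 3.0, min_history: int = 5):
        self.readings = deque(maxlen=window)  # most recent readings only
        self.threshold = threshold            # z-score above which we flag
        self.min_history = min_history        # readings needed before scoring

    def observe(self, value: float) -> bool:
        """Score one reading against the window, then add it to the window."""
        is_anomaly = False
        if len(self.readings) >= self.min_history:
            mu = mean(self.readings)
            sigma = stdev(self.readings)
            if sigma > 0 and abs(value - mu) / sigma > self.threshold:
                is_anomaly = True
        self.readings.append(value)
        return is_anomaly


# Hypothetical vibration readings from one machine, with a single spike.
vibration = [1.0, 1.1, 0.9, 1.0, 1.05, 0.95, 1.0, 5.0, 1.0]

detector = RollingAnomalyDetector()
flags = [detector.observe(v) for v in vibration]
print(flags)
```

The same `observe` call shape would apply if the scoring step were replaced by a request to a served model, which is where the first purpose (model development and training) feeds in.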

The below diagram depicts a sample AI/ML workflow:

Addressing industrial manufacturing use cases with Red Hat

Red Hat provides a technology advantage for manufacturers today with a portfolio of building blocks organized around Red Hat OpenShift, the industry’s most comprehensive enterprise Kubernetes platform. Red Hat products can be deployed and managed as scalable infrastructure for manufacturing use cases in an automated and repeatable way, and also coordinate the data messaging, storage, and machine learning (ML) pipeline.

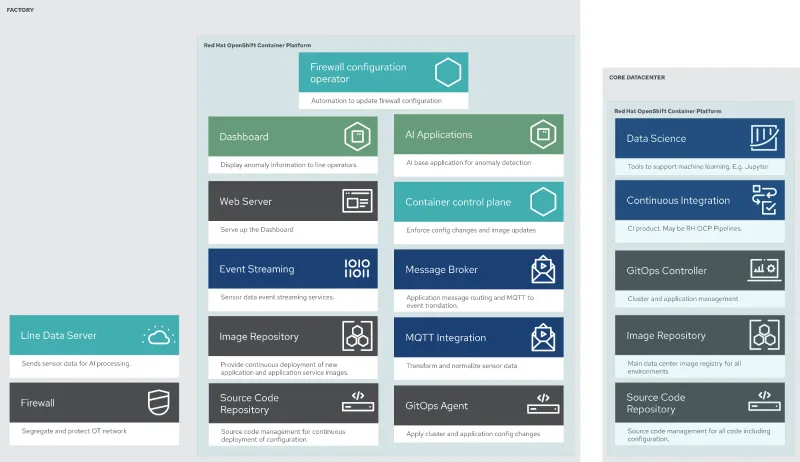

Using a modular approach to building the edge architecture (see below) provides the flexibility to deploy any of the components shown where it makes sense — from the core data center to the factory floor, to address the needs of various manufacturing use cases.

What’s also important is having a reliable message queue that can hand off data to a scalable storage system as part of an open hybrid cloud model. Such a system manages the flow of data from the sensors all the way to the long term storage systems. Here is a breakdown of how the Red Hat portfolio works together to address the needs of our manufacturing use case:

- OpenShift provides the robust, holistically secure and scalable enterprise container platform.
- Data coming from sensors is transmitted over MQTT (Message Queuing Telemetry Transport) to Red Hat AMQ, which routes sensor data for two purposes: model development in the core data center and live inferencing in the factory data centers. The data is then relayed on to Red Hat AMQ Streams (Kafka) for further distribution within the factory data center and out to the core data center.
- The lightweight Apache Camel K provides MQTT integration that normalizes and routes sensor data to the other components.
- That sensor data is mirrored into a data lake that is provided by Red Hat OpenShift Container Storage. Data scientists then use various tools from the open source project Open Data Hub to perform model development and training, pulling and analyzing content from the data lake into notebooks where they can apply ML frameworks.
- Once the models have been tuned and are deemed ready for production, the artifacts are committed to git, which kicks off an image build of the model using OpenShift Pipelines (Tekton).
- The model image is pushed into the integrated registry of OpenShift running in the core data center, and from there it is pushed back down to the factory data center for use in inferencing.
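The normalize-and-route step in this flow can be sketched in a few lines. This is not how Red Hat AMQ or Camel K are configured; it is an illustrative stand-in in plain Python, where the `site/line/sensor` topic layout, the JSON payload with a `value` field, the destination names and the `Router` class are all hypothetical, and in-memory lists take the place of queues and topics.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class SensorReading:
    """Common record shape used after normalization."""
    site: str
    line: str
    sensor: str
    value: float


def normalize(topic: str, payload: bytes) -> SensorReading:
    """Turn a raw message into a common record.

    Assumes a 'site/line/sensor' topic and a JSON body with a 'value' field.
    """
    site, line, sensor = topic.split("/")
    body = json.loads(payload)
    return SensorReading(site, line, sensor, float(body["value"]))


class Router:
    """Fan each normalized reading out to every registered destination."""

    def __init__(self):
        self.destinations = {}  # name -> list standing in for a queue

    def add_destination(self, name: str) -> None:
        self.destinations[name] = []

    def route(self, topic: str, payload: bytes) -> SensorReading:
        reading = normalize(topic, payload)
        record = json.dumps(asdict(reading))
        for queue in self.destinations.values():
            queue.append(record)
        return reading


router = Router()
router.add_destination("factory-inference")  # live inferencing at the factory
router.add_destination("core-training")      # model development in the core

router.route("plant-a/line-3/temperature", b'{"value": "71.5", "unit": "C"}')
router.route("plant-a/line-3/vibration", b'{"value": 0.02}')

print(router.destinations["core-training"])
```

Both destinations receive the same normalized records, which mirrors the two purposes described above: training data for the core data center and a live stream for inference at the factory.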

In addition, Red Hat’s partner ecosystem is strategic to solving these use cases. The Seldon Core open source platform for deploying machine learning models is used in this solution to provide the cloud-native model wrapping, deployment, and serving.

Putting it all together

Building on top of the open source, highly customizable Kubernetes-based Red Hat OpenShift platform can prepare your technology stacks for the future. And when used in combination with the various elements of Red Hat’s software portfolio, you can benefit from a powerful foundation for building out a true AI/ML driven, edge-based manufacturing process, complete with sensors and the open hybrid cloud behind it all.

To learn more about Red Hat’s edge computing approach, visit our article on why Red Hat for edge computing.

About the authors

Frank Zdarsky is Senior Principal Software Engineer in Red Hat’s Office of the CTO responsible for Edge Computing. He is also a member of Red Hat’s Edge Computing leadership team. Zdarsky’s team of seasoned engineers is developing advanced Edge Computing technologies, working closely with Red Hat’s business, engineering, and field teams as well as contributing to related open source community projects. He is serving on the Linux Foundation Edge’s technical advisory council and is a TSC member of the Akraino project.

Prior to this, Zdarsky was leading telco/NFV technology in the Office of the CTO and built a forward deployed engineering team working with Red Hat’s most strategic partners and customers on enabling OpenStack and Kubernetes for telco/NFV use cases. He has also been an active contributor to open source projects such as OpenStack, OpenAirInterface, ONAP, and Akraino.

Software, solution and cloud architect. Innovator, global collaborator, developer and machine learning engineer.
