Most businesses could be making better use of data science, but are limited by their tools and workflows. This is why we're offering Red Hat OpenShift Data Science -- to help our customers apply what Red Hat has learned about data science and machine learning (ML) from our own internal IT projects and working with customers across many industries. Here's what it is and how it can help you.

Data science and machine learning are helping to drive business decisions and generate income and insights for organizations across a spectrum of industries, from oil and gas to financial services, and many in between. However, developing and deploying ML workflows can be challenging. This work is often limited by lack of access to data, inadequate compute resources, difficulty in managing interdependent libraries and package versions, and security constraints. We are aiming to address those challenges with Red Hat OpenShift Data Science.

What is Red Hat OpenShift Data Science?

Red Hat OpenShift Data Science is an add-on to Red Hat OpenShift managed cloud services. It is initially being offered on Amazon Web Services (Red Hat OpenShift Dedicated and Red Hat OpenShift Service on AWS). It provides a sandbox environment for data scientists to develop, train and test machine learning models and deploy them for use in intelligent applications.

Red Hat OpenShift Data Science provides a supported, self-service environment where data scientists and machine learning engineers can carry out their daily work, from gathering and preparing data to testing and training ML models. With Red Hat OpenShift Data Science, customers can access a range of AI/ML technologies from Red Hat partners and independent software vendors (ISVs), enabling organizations to build their own flexible sandbox environment containing some of the latest data science tools.

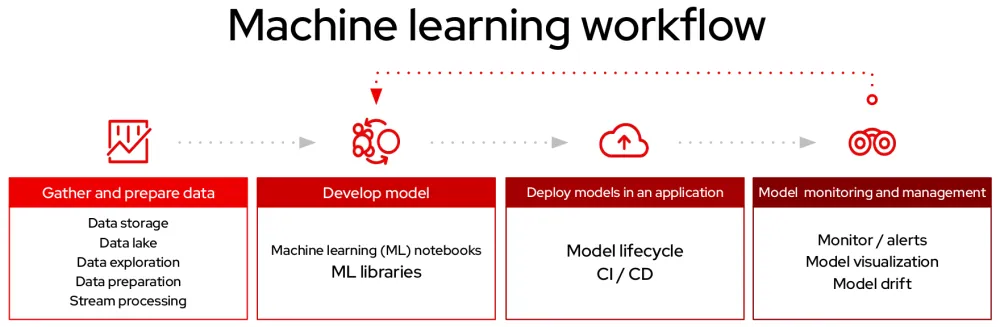

The machine learning workflow

Before exploring Red Hat OpenShift Data Science more thoroughly, let's first recap the stages of the ML workflow that data scientists typically follow when applying AI/ML to solve a business problem.

The workflow begins with gathering and preparing data. Data often has to be federated from a range of sources, and exploring and understanding data plays a key role in the success of a data science project.

Once the data has been gathered, cleaned and processed, the second stage of the ML workflow, model training, can begin. During training, a model's parameters are tuned to fit a set of training data. In practice, data scientists train a range of models and compare their performance while weighing trade-offs such as training time and memory constraints.
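To make the idea of training several models and comparing them concrete, here is a minimal sketch in plain Python. The two candidate models (a mean baseline and a single-feature least-squares fit), the toy data, and all function names are illustrative assumptions, not part of OpenShift Data Science; a real project would more likely use a framework such as TensorFlow or PyTorch.

```python
import time


def fit_mean(xs, ys):
    """Baseline: always predict the mean of the training targets."""
    mean_y = sum(ys) / len(ys)
    return lambda x: mean_y


def fit_linear(xs, ys):
    """Ordinary least squares for a single input feature."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return lambda x: slope * x + intercept


def mean_squared_error(model, xs, ys):
    """Average squared difference between predictions and targets."""
    return sum((model(x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def compare_models(candidates, train, test):
    """Fit each candidate, recording fit time and held-out error."""
    results = []
    for name, fit in candidates.items():
        start = time.perf_counter()
        model = fit(*train)
        elapsed = time.perf_counter() - start
        results.append({
            "name": name,
            "fit_seconds": elapsed,
            "test_mse": mean_squared_error(model, *test),
        })
    # Lowest held-out error first; fit time is kept for the trade-off discussion.
    return sorted(results, key=lambda row: row["test_mse"])


# Toy data (made up for illustration): y = 2x + 1.
train = ([0.0, 1.0, 2.0, 3.0], [1.0, 3.0, 5.0, 7.0])
test = ([4.0, 5.0], [9.0, 11.0])

results = compare_models({"mean_baseline": fit_mean, "linear": fit_linear}, train, test)
for row in results:
    print(row)
```

Recording fit time next to held-out error keeps both sides of the trade-off visible in one table, so a slightly less accurate model that trains far faster can still be a reasonable choice.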

After model training, the next step of the workflow is deployment to production. Traditionally, this step involved a hand-off from a data scientist to a developer, but at Red Hat we increasingly see data scientists taking responsibility for integrating models into applications.

Finally, data scientists need to monitor the performance of models in production, tracking prediction and performance metrics.
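As a rough illustration of what tracking prediction metrics can look like, the sketch below keeps a rolling window of recent predictions and flags when accuracy drops below a threshold. The class name, window size, and threshold are hypothetical choices made for this example; they are not an OpenShift Data Science API.

```python
from collections import deque


class RollingAccuracyMonitor:
    """Track accuracy over the most recent predictions (illustrative only)."""

    def __init__(self, window_size=100, alert_below=0.8):
        # deque with maxlen discards the oldest outcome automatically.
        self.outcomes = deque(maxlen=window_size)
        self.alert_below = alert_below

    def record(self, predicted, actual):
        """Store whether a single prediction matched the observed outcome."""
        self.outcomes.append(predicted == actual)

    def accuracy(self):
        """Return accuracy over the window, or None if nothing is recorded."""
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def needs_attention(self):
        """True when windowed accuracy has fallen below the alert threshold."""
        current = self.accuracy()
        return current is not None and current < self.alert_below


# Made-up (predicted, actual) pairs for illustration.
monitor = RollingAccuracyMonitor(window_size=4, alert_below=0.75)
for predicted, actual in [(1, 1), (0, 0), (1, 0), (1, 1)]:
    monitor.record(predicted, actual)
accuracy_before = monitor.accuracy()
alert_before = monitor.needs_attention()

# One more miss pushes the oldest (correct) prediction out of the window.
monitor.record(0, 1)
accuracy_after = monitor.accuracy()
alert_after = monitor.needs_attention()
```

A fixed-size window reflects recent behaviour rather than lifetime averages, which is usually what matters when a model starts to drift.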

How OpenShift Data Science supports the machine learning workflow

By providing a unified, self-service sandbox environment with integrated tooling and access to a range of open source data science projects and proprietary software, Red Hat OpenShift Data Science enables data scientists to focus on the task at hand, and develop and train models rapidly in a more secure, supported environment.

For instance, OpenShift Data Science enables the JupyterLab service by default, allowing users to develop models and implement analytic techniques in Jupyter Notebooks. With a range of tried and tested notebook images to choose from, users can quickly load Red Hat provided container images and develop models using the latest frameworks, including TensorFlow and PyTorch.

With the ability to connect to GPUs on-demand, model training and testing can be accelerated, reducing the time needed to develop models and gain insights. This is conducive to rapid prototyping and experimentation use cases.

Putting models into production

Built on top of Red Hat OpenShift, the industry’s leading enterprise Kubernetes platform, Red Hat OpenShift Data Science enables cross-functional teams to work on the same platform, leading to a simpler integration experience when deploying models. Using the Source-to-Image (S2I) toolkit in Red Hat OpenShift, builds can turn ML experiments into containerised models that are automatically deployed as part of an intelligent application.
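What gets containerised in such a build is ordinary application code. The sketch below shows one possible shape for it: a small WSGI prediction service written with only the Python standard library. The `predict` function is a hypothetical stand-in for a trained model, and the JSON request format is an assumption made for this example; how a particular builder image discovers and runs the entry point is not covered here.

```python
import io
import json


def predict(features):
    """Hypothetical stand-in for a trained model: mean of the inputs."""
    return sum(features) / len(features)


def application(environ, start_response):
    """WSGI entry point: read JSON {"features": [...]} and return a prediction."""
    try:
        length = int(environ.get("CONTENT_LENGTH") or 0)
        payload = json.loads(environ["wsgi.input"].read(length))
        body = json.dumps({"prediction": predict(payload["features"])})
        status = "200 OK"
    except (ValueError, KeyError, TypeError, ZeroDivisionError):
        # Malformed JSON, a missing key, or an empty feature list.
        body = json.dumps({"error": "expected JSON like {\"features\": [1, 2, 3]}"})
        status = "400 Bad Request"
    data = body.encode("utf-8")
    start_response(status, [
        ("Content-Type", "application/json"),
        ("Content-Length", str(len(data))),
    ])
    return [data]


def call_locally(raw_body):
    """Invoke the WSGI app in-process, without starting a server."""
    captured = {}

    def start_response(status, headers):
        captured["status"] = status

    environ = {
        "REQUEST_METHOD": "POST",
        "CONTENT_LENGTH": str(len(raw_body)),
        "wsgi.input": io.BytesIO(raw_body),
    }
    chunks = application(environ, start_response)
    return captured["status"], json.loads(b"".join(chunks))


status, response = call_locally(b'{"features": [1, 2, 3]}')
print(status, response)
```

Keeping the model behind a plain `predict` function means the same code can be exercised from a notebook during development and from the web entry point after deployment.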

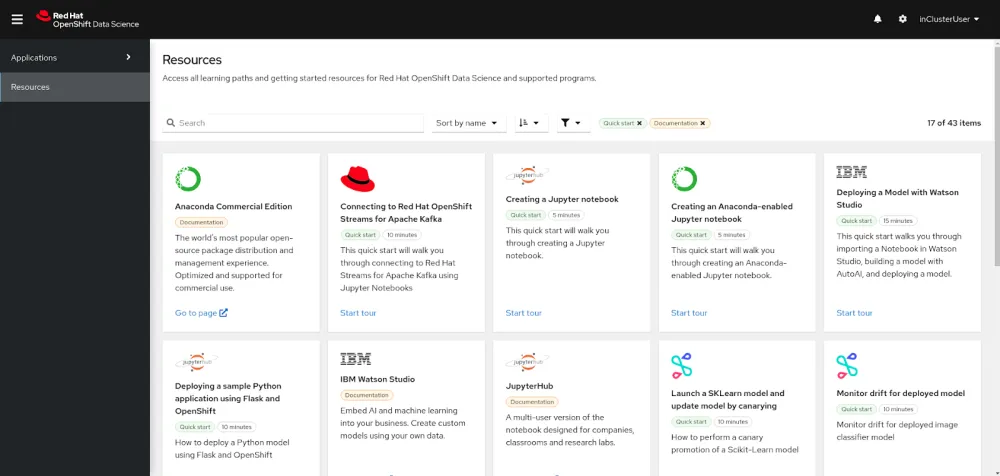

Open door for third-party ML tools

Red Hat OpenShift Data Science takes the "bring your own partner" approach to commercial ISV tools. A range of AI/ML offerings have been certified and are planned to be available, along with the OpenShift Data Science offering itself, in Red Hat Marketplace later this year. These offerings can be combined with Red Hat OpenShift Data Science to give a bespoke experience, making use of the wider AI/ML ecosystem. Integration with Red Hat OpenShift Streams for Apache Kafka allows data scientists to test and develop models on streaming data.

Initial partners include:

Starburst Galaxy

Starburst Galaxy is a fully managed platform, designed to let you access your data where it lives across hybrid clouds.

Anaconda Commercial Edition

Anaconda Commercial Edition provides curated access to an extensive set of data science packages to be used in Jupyter projects.

IBM Watson Studio

IBM Watson Studio is used to build, run and manage AI models at scale with Watson Machine Learning and Watson OpenScale.

Seldon Deploy

Seldon Deploy helps simplify and accelerate the process of deploying and managing ML models.

Red Hat OpenShift provides enterprise-grade Kubernetes, and has been the place to develop distributed systems for years. By conducting data science on the same platform, we increase the opportunity for cross-team collaboration, simplify the integration experience with other application components and make it easier and quicker for businesses to leverage the insights from ML.

With Red Hat OpenShift Data Science, data scientists can focus on developing models and gaining insights from data without having to worry about managing infrastructure and hardware.

Learn more about OpenShift Data Science

Ready to learn more about Red Hat OpenShift Data Science? Red Hat's Chris Chase has recorded a demo video that conveys the value of this new service. You can also learn more by visiting our OpenShift Data Science page.

About the author

Sophie Watson is a data scientist at Red Hat, where she helps customers to solve business problems using machine learning in the hybrid cloud. She has previously researched Bayesian Statistics and Recommendation Engines, and is focused on using her data science and statistics skills to inform next-generation infrastructure for intelligent application development.
