In the world of hybrid cloud infrastructure, data is our most valuable currency. But for data to be valid and valuable, it must be taken in context. Recently, VMware published a blog post claiming that, based on a study conducted by Principled Technologies, VMware Cloud Foundation (VCF) 9.0 with vSphere Kubernetes Service (VKS) delivers a "5.6x pod density" advantage over Red Hat OpenShift.

At first glance, the number is striking. As any systems architect knows, however, the validity of a benchmark lies not in the result, but in the methodology. When we look under the hood of this study, we find a comparison that highlights a fundamental misunderstanding of scale and a missed opportunity for a true "apples-to-apples" evaluation.

1. The Geometry of the test: 300 versus 4

The most critical element of any experiment is controlling the variables. In this study, both platforms were given the same physical hardware (four Dell PowerEdge servers). The configurations were very different, however:

The main Principled Technologies report does not mention how many virtual worker nodes VKS was running. The companion "science behind the report" methodology document does. In the appendix (pt-pod-density.yaml, page 5), the cluster configuration specifies "replicas: 300" (p. 5).

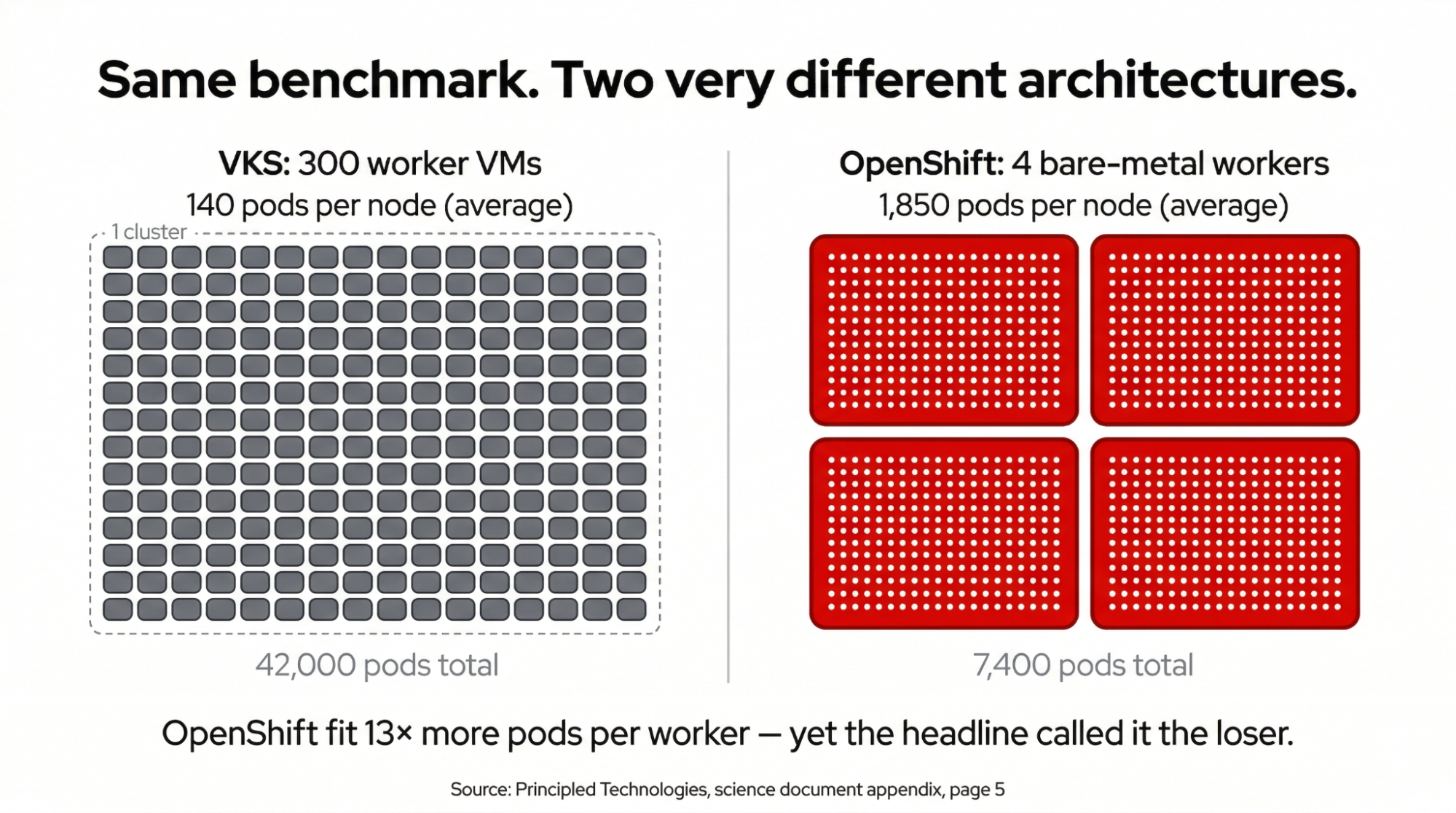

VKS was running 300 virtual worker nodes across 4 physical hosts. That is 75 virtual machines (VMs) per server, each configured as a "best-effort-medium" VM class (2 vCPUs, 8 GB RAM, no resource reservations). OpenShift was configured as a bare-metal cluster with 4 worker nodes, 1 per physical server.

In the published results, VKS reached 42,000 pods across its 300 workers, averaging 140 pods per node, while OpenShift reached 7,400 pods across its 4 workers, averaging 1,850 pods per node. OpenShift’s per-node density is over 13 times higher than VKS’s. The 5.6x headline reflects how the environments were sized—300 nodes versus 4, not how efficiently either platform runs pods.

The methodology document also reveals a configuration asymmetry in maxPods settings. The YAML configuration sets VKS to a maxPods limit of 200 per node (notably, the prose text of the same document states "a maximum of 300 pods per node," an internal discrepancy—we reference the YAML as the deployed configuration). OpenShift was set to 5,000 per node, well above the default of 250, a setting that actually gave OpenShift an advantage in packing pods onto its 4 nodes. Even so, the topology determined the outcome: VKS’s theoretical ceiling was 60,000 (300 nodes x 200 per node), while OpenShift’s was 20,000 (4 nodes x 5,000 per node). The test compared 2 fundamentally different architectures and reported only the aggregate total.

2. Queuing theory versus platform speed

The report also highlights "faster pod readiness" for VKS. To the observer, this is a basic principle of queuing theory rather than a software engineering breakthrough.

Attempting to board 1,000 people onto 4 buses creates a line. Boarding onto 300 minivans simultaneously makes the line disappear. By using 300 virtual nodes, VKS minimized scheduling contention. Conversely, by pushing 4 bare-metal nodes to their absolute stability limits, the test created a bottleneck that wouldn't exist in a properly architected production environment.

The pods used in this test (via kube-burner) consume minimal CPU and memory relative to production workloads. Unlike a real workload, there is no resource contention or queuing at the node level during startup. The queuing advantage of 300 nodes over 4 is real, but it applies only to empty scheduling slots, not to pods that actually consume resources. We cover this in more detail in section 4, below.

3. The missing comparison: Red Hat OpenShift Virtualization

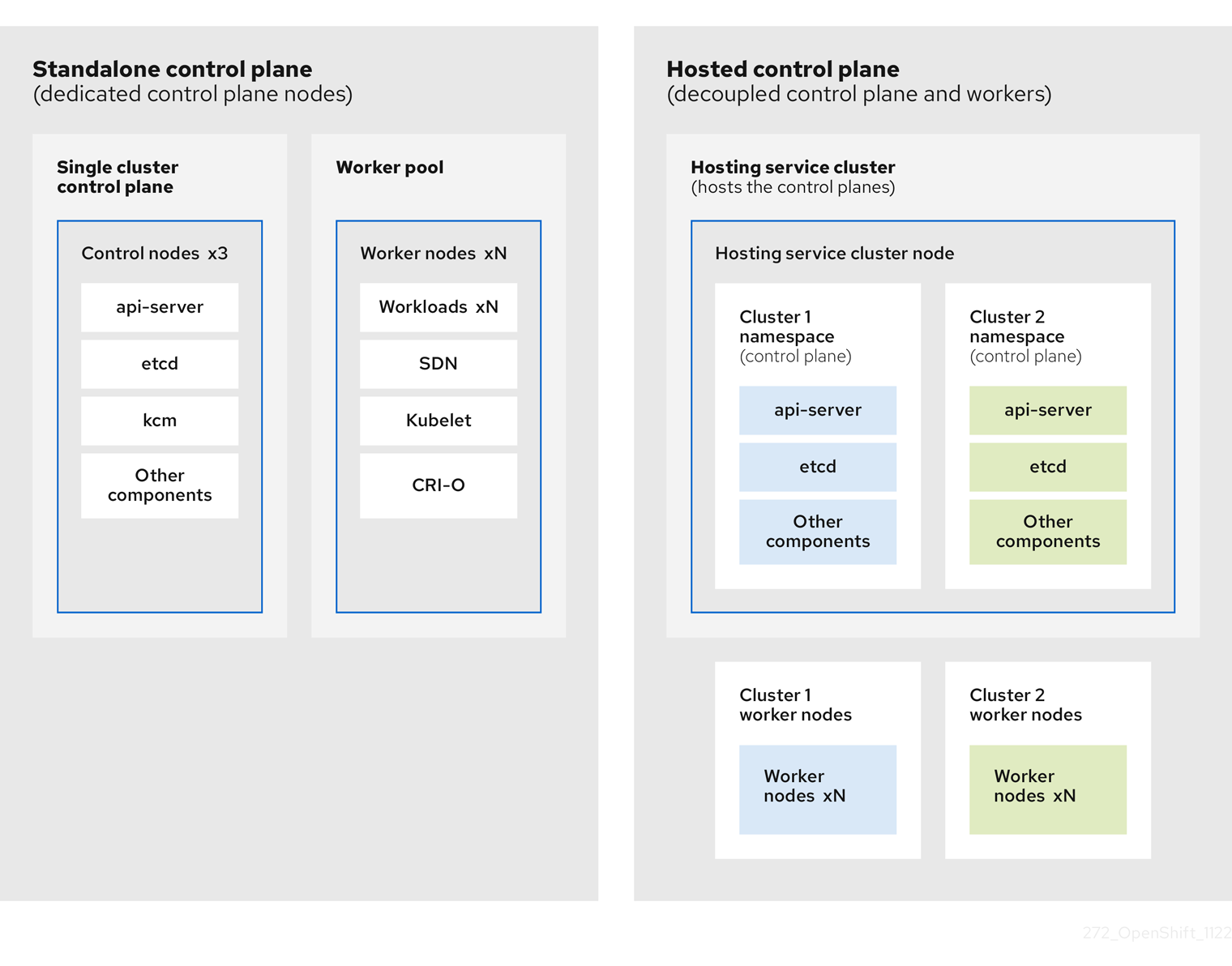

If the goal was to test how a Kubernetes application scales on top of a hypervisor, there is a standard OpenShift configuration for that: OpenShift on OpenShift Virtualization.

By running virtual OpenShift compute nodes using OpenShift Virtualization, a kernel-based virtual machine (KVM) hypervisor, we achieve the same architectural topology as VKS on ESXi. Curiously, this "like-to-like" comparison was omitted. We believe customers deserve to see how platforms perform when the playing field is level: virtual-to-virtual or bare-metal-to-bare-metal.

The published test relies on a specific hypervisor dependency. OpenShift, however, offers a unified experience across bare metal, VMware, Amazon Web Services (AWS), Microsoft Azure, Google Cloud, IBM Cloud®, Oracle Cloud, and OpenShift Virtualization. Whether you’re running high-performance database workloads directly on silicon or dynamically spinning up clusters on top of VMs using our hosted control planes, the management interface remains the same. You choose the infrastructure based on the workload, not the license.

The right architecture depends on the workload. Some use cases call for a small number of bare-metal nodes, others benefit from virtualized workers, and many organizations end up running a mix of both. OpenShift supports all 3 approaches.

A benchmark that pits 300 Kubernetes workers against 4 Kubernetes workers isn't measuring performance, it's measuring the effects of segmentation.

4. The anatomy of a synthetic workload

The "workload" in the kube-burner test is designed to stress the Kubernetes control plane (the brains of the system) rather than the data plane (the actual compute). The science document linked in the report reveals that the specific variant used was kubelet-density-heavy, which creates a basic PostgreSQL pod and a client application pod per iteration. These are heavier than empty "pause" containers, but they are still synthetic probes, not real business applications. They are meant to see how many slots the orchestrator can fill before it stops responding or the nodes lose their heartbeat.

Other kube-burner variations, like cluster-density, add a bit more complexity by creating a flurry of API objects—Secrets, ConfigMaps, and Services—alongside the pods. Even in the "heavy" versions, the workload usually consists of a basic PostgreSQL pod and a client pod that performs simple, repetitive loops. These are not applications, they are probes meant to see how many "slots" the orchestrator can fill before it stops responding or the nodes lose their heartbeat.

5. Benchmark versus business reality

In a real-world business scenario, pods are not just "slots" to be filled. A production pod delivering business value,like a Java-based microservice or a Python-powered inference engine,carries a significant "payload":

- Resource requirements: Real applications often require 512MB to 4GB of RAM and significant CPU slices. You cannot fit 1,800 of these on a single physical node without instantly exhausting physical resources.

- I/O and networking: Business workloads generate heavy disk I/O and complex network traffic. Kube-burner’s "sleep" pods generate zero internal traffic, meaning the test ignores the very "noisy neighbor" effects that usually crash high-density clusters in the real world.

- Startup logic: Real applications have initialization sequences, database migrations, and health check dependencies. Kube-burner measures how fast a "hollow" container can be marked "ready," which is akin to measuring how fast you can park empty cars in a lot versus how long it takes to actually board and dispatch a fully loaded bus.

Ultimately, while kube-burner is an excellent tool for finding the "breaking point" of a platform's management layer, it is a limit test, not a capacity plan. High pod density with empty pods proves how many "placeholders" the system can handle, it does not reflect how many paying customers or transactions your infrastructure can actually support.

While we are proud that OpenShift was able to produce 1,850 pods per node, we recognize that this is not reflective of real customer workloads, which can and will saturate a worker (physical or virtual) at much lower pod counts. The honest answer is that the headline number on both sides of this test is disconnected from production reality.

6. Accounting for the "virtualization tax"

Running 300 separate VMs introduces overhead worth considering. Each VM runs its own guest operating system (OS) kernel, its own kubelet, and its own container runtime. The aggregate vCPU demand (600) exceeds the physical core count across all 4 hosts (192), meaning the VMs were overcommitted, and the best-effort-medium VM class carried no resource reservations. That combination works for nearly-idle synthetic pods but would not hold up under the same test if those pods were doing real work.

Dense virtualized workers are a production pattern, and Red Hat recommends this topology for hosted control planes hosting scenarios. With the default maxPods limit of 500 and roughly 70 pods per hosted control plane, customers hit a ceiling of around 6 hosted control planes per bare-metal worker fairly quickly. The recommended way past that ceiling is to run VM workers on top of the bare-metal hosts and place the hosted control plane pods inside those VMs, which is precisely the topology under test here.

The same pattern also helps when customers need kernel-level isolation between pods rather than namespace-level isolation, and our OpenShift Virtualization approach uses the same model. The issue with the Principled Technologies test isn't virtualized workers as an architecture, it's that this particular configuration overcommitted the hosts and ran workloads light enough to disguise it.

7. Moving forward with integrity

It is worth noting that the methodology document confirms that Broadcom fully set up and configured both test environments, including the Red Hat OpenShift cluster. The choice to compare 300 virtual workers against 4 bare-metal nodes across different maxPods configurations was a design decision that shaped the outcome before a single pod was deployed.

At Red Hat, we welcome rigorous testing. It’s how the industry improves. In keeping with our open-by-default philosophy, we do not ask our users to get our permission to publish benchmarks of our software.

We believe that benchmarks should empower customers to make informed decisions by comparing equivalent configurations on a level playing field. When evaluating pod density claims, we encourage looking beyond aggregate totals and to ask for per-node results on comparable architectures with representative production workloads.

Our commitment is to provide a platform that delivers maximum density and performance, whether you choose the raw power of bare metal or the flexibility of virtualization.

If you are evaluating how to run VMs alongside containers on a single platform, or are exploring a path away from legacy virtualization, OpenShift Virtualization provides a production-ready, KVM-based approach that eliminates the need for a separate hypervisor layer.

To learn more about how it works and where it fits, visit the Red Hat OpenShift Virtualization product page. For installation requirements, supported platforms, and configuration details, the full OpenShift Virtualization 4.21 technical documentation is available at docs.redhat.com.

Essai de produit

Red Hat OpenShift Container Platform | Essai de produit

À propos de l'auteur

Steve Gordon is senior director of Product Management at Red Hat

Plus de résultats similaires

Votre plateforme d'applications est-elle prête pour l'avenir ?

Validation de l'expertise ciblée : mises à jour majeures des certifications Red Hat

Becoming a Coder | Command Line Heroes

Where Coders Code | Command Line Heroes

Parcourir par canal

Automatisation

Les dernières nouveautés en matière d'automatisation informatique pour les technologies, les équipes et les environnements

Intelligence artificielle

Actualité sur les plateformes qui permettent aux clients d'exécuter des charges de travail d'IA sur tout type d'environnement

Cloud hybride ouvert

Découvrez comment créer un avenir flexible grâce au cloud hybride

Sécurité

Les dernières actualités sur la façon dont nous réduisons les risques dans tous les environnements et technologies

Edge computing

Actualité sur les plateformes qui simplifient les opérations en périphérie

Infrastructure

Les dernières nouveautés sur la plateforme Linux d'entreprise leader au monde

Applications

À l’intérieur de nos solutions aux défis d’application les plus difficiles

Virtualisation

L'avenir de la virtualisation d'entreprise pour vos charges de travail sur site ou sur le cloud