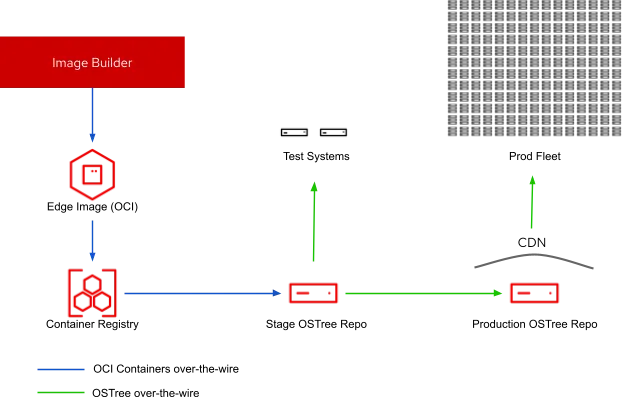

Red Hat Enterprise Linux (RHEL) 8.4 brings a set of new features that make it easier to manage image updates for edge systems. RHEL for Edge uses Image Builder as the engine to create rpm-ostree images. This model combines the long life cycle and package flexibility of RHEL with A/B transactional updates, rollbacks controlled by application health checks, and network-efficient updates over the wire. In this post, we will walk through how to set up a simple yet powerful staging environment for edge image updates.

An illustrative example for staging updates

This table represents the hostnames and their respective functions as they relate to the example architecture:

| Function | Hostname |
|---|---|
| Image Builder Node | imagebuilder.example.com |
| Stage Repository | ostree-stage.example.com |
| Production Repository | ostree-prod.example.com |
| Container Registry (provided by Red Hat Quay) | quay.io |

We will start by building the initial edge image in Image Builder and then set up the stage and production ostree repositories. An in-depth overview of HTTPS certificates, GPG signing, and CDN configuration is outside the scope of this post; for the purposes of this discussion, assume these have already been provisioned and configured. We also assume that Red Hat Enterprise Linux 8.4 is being used and that the systems are properly registered.

Creating edge images in Image Builder

Start by creating a blueprint, the image definition that specifies the attributes and customizations needed for this image. On the Image Builder host, imagebuilder.example.com, create a file named gateway.toml with the following content:

name = "gateway"
description = "RHEL for Edge Commit"
version = "0.0.1"

[[customizations.user]]
name = "core"
password = "edge"
groups = ["wheel"]

Note: Password hashes and SSH keys are supported and highly recommended, but for the purposes of this example, a clear-text password is used for readability.
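Following that recommendation, the blueprint can authenticate the core user with an SSH public key instead of a password. The minimal sketch below prints the same gateway blueprint with the user's `key` field in place of `password`. The helper name `write_gateway_blueprint` and the key path in the example are hypothetical placeholders, so check the blueprint reference for your release before relying on it.

```bash
#!/bin/bash
# Hypothetical helper: print the gateway blueprint using an SSH public key
# for the "core" user instead of a clear-text password.
# Usage: write_gateway_blueprint <version> <ssh-public-key> > gateway.toml
write_gateway_blueprint() {
    local version="$1"
    local pubkey="$2"
    cat <<EOF
name = "gateway"
description = "RHEL for Edge Commit"
version = "${version}"

[[customizations.user]]
name = "core"
key = "${pubkey}"
groups = ["wheel"]
EOF
}

# Example (assumes ~/.ssh/id_ed25519.pub exists on the Image Builder host):
# write_gateway_blueprint 0.0.1 "$(cat ~/.ssh/id_ed25519.pub)" > gateway.toml
```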

Next, use composer-cli to push the blueprint to the blueprint library of osbuild-composer, the back-end Image Builder service:

# composer-cli blueprints push gateway.toml

Then, create the initial image:

# composer-cli compose start-ostree gateway rhel-edge-container
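When scripting builds, it helps to capture the compose UUID at submission time. The sketch below assumes that composer-cli acknowledges a new build with a line of the form `Compose <UUID> added to the queue`. The helper name `start_edge_compose` is hypothetical, and any extra arguments (such as `--url` for update builds) are passed through unchanged.

```bash
#!/bin/bash
# Hypothetical helper: submit an edge container compose and print its UUID.
# Assumes composer-cli confirms with "Compose <UUID> added to the queue".
# Extra arguments (for example --url for update builds) are passed through.
start_edge_compose() {
    local blueprint="$1"
    shift
    local output uuid
    output="$(composer-cli compose start-ostree "$blueprint" rhel-edge-container "$@")" || return 1
    # Take the second word of the confirmation line.
    uuid="$(awk '/^Compose .* added to the queue/ { print $2 }' <<<"$output")"
    if [ -z "$uuid" ]; then
        echo "could not find a compose UUID in: $output" >&2
        return 1
    fi
    echo "$uuid"
}

# Example:
# UUID="$(start_edge_compose gateway)"
```

Capturing the UUID this way avoids copying it by hand from the status listing in later steps.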

Getting the image into a registry (Quay)

Recently, Image Builder added the ability to push images directly to cloud providers. Pushing directly to container registries is a capability we are looking at adding in future releases. In the meantime, you can push images to any OCI-compliant registry using podman or skopeo directly from the Image Builder system.

Before you begin these steps, verify the status of the image build. A status of FINISHED indicates that the image has been built successfully and is ready for distribution:

# composer-cli compose status
<UUID> FINISHED Mon Mar 22 17:55:24 2021 gateway 0.0.2 rhel-edge-container 2147483648
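Rather than rerunning the status command by hand, a small polling loop can wait for the build to finish. This is a sketch under the assumption that each status line begins with `<UUID> <STATUS>`, as in the sample output above; `wait_for_compose` and its 30-second default interval are illustrative choices, not part of composer-cli.

```bash
#!/bin/bash
# Hypothetical helper: poll "composer-cli compose status" until the given
# compose is FINISHED (return 0), FAILED (return 1), or missing (return 1).
# Assumes each status line starts with "<UUID> <STATUS>".
wait_for_compose() {
    local uuid="$1"
    local interval="${2:-30}"   # seconds between polls
    local status
    while true; do
        status="$(composer-cli compose status | awk -v id="$uuid" '$1 == id { print $2 }')"
        case "$status" in
            FINISHED) return 0 ;;
            FAILED)   echo "compose $uuid failed" >&2; return 1 ;;
            "")       echo "compose $uuid not found" >&2; return 1 ;;
        esac
        # Any other status (for example WAITING or RUNNING): try again later.
        sleep "$interval"
    done
}

# Example:
# wait_for_compose "$UUID" && composer-cli compose image "$UUID"
```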

Download the OCI-compliant image tar archive to the local Image Builder file system:

# composer-cli compose image <UUID>

Next, we will use skopeo to push the tarball downloaded by the previous command directly into our container registry. podman can, of course, take care of the load, tag, and push steps, but skopeo completes these tasks with a single command. In this example, Quay is used as the registry, but in practice any OCI-compliant container registry is a viable option.

# skopeo copy oci-archive:<UUID>-rhel84-container.tar docker://quay.io/[account]/edge-stage:latest
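The download and push steps can be tied together by a helper that locates the archive by UUID. This sketch assumes that the file written by `composer-cli compose image` starts with the compose UUID and ends in `.tar`, as in the file name used above; `push_edge_archive` is a hypothetical name, and the destination reference is passed to skopeo unchanged.

```bash
#!/bin/bash
# Hypothetical helper: find the archive downloaded for a compose UUID in the
# current directory and push it to a registry with skopeo.
# Assumes the file name starts with the UUID and ends in ".tar".
push_edge_archive() {
    local uuid="$1"
    local dest="$2"   # e.g. docker://quay.io/[account]/edge-stage:latest
    local archives=( "${uuid}"-*.tar )
    # Without a match the glob stays literal, so check the file really exists.
    if [ ! -f "${archives[0]}" ]; then
        echo "no archive found for compose $uuid" >&2
        return 1
    fi
    # If several archives match, the first one in glob order is pushed.
    skopeo copy "oci-archive:${archives[0]}" "$dest"
}

# Example:
# push_edge_archive "$UUID" docker://quay.io/[account]/edge-stage:latest
```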

Configuring the stage OSTree repository

The purpose of the stage repository is to serve the container image pushed to Quay previously, so the only software package that is required is podman. From the ostree-stage.example.com system, first install podman:

# yum -y install podman

Once podman has been installed, running the image is as easy as:

# podman run --name edge-stage --rm -d -p 80:80 quay.io/[account]/edge-stage:latest
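Before pointing clients at the stage host, you may want to confirm that it is actually serving an ostree repository. The sketch below fetches the repository's `config` file over HTTP and checks for an archive-mode entry. It assumes curl is available on the machine running the check and that the repository is served under `/repo`, matching the URL the production mirror uses later in this post; the name `check_ostree_repo` is hypothetical.

```bash
#!/bin/bash
# Hypothetical helper: succeed if base_url serves an ostree archive repository.
# Fetches the repository "config" file and looks for a "mode=archive..." line.
check_ostree_repo() {
    local base_url="${1%/}"   # drop one trailing slash, if present
    local config
    config="$(curl -fsS "${base_url}/config")" || return 1
    grep -q '^mode=archive' <<<"$config"
}

# Example:
# check_ostree_repo http://ostree-stage.example.com/repo && echo "stage repository is serving"
```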

At this point, client systems will be able to provision themselves using content from the stage repository and/or update to this RHEL for Edge image version. However, with a few additional steps, the stage repository can be configured to watch our image registry for updates automatically, removing the need for user intervention to serve the latest edge images.

Adding --label io.containers.autoupdate=image when running the container enables automatic updates. By also generating a systemd unit file, we can ensure that the container is always running and that podman checks for image updates daily.

First, stop the existing running edge-stage container:

# podman stop edge-stage

Now, start a new container with the label that enables automatic updates, and then generate the systemd configuration that starts the container automatically when the machine boots:

# podman run --name edge-stage -d -p 80:80 --label io.containers.autoupdate=image quay.io/[account]/edge-stage:latest
# podman generate systemd --new -n -f edge-stage
# cp container-edge-stage.service /etc/systemd/system/ ; systemctl daemon-reload
# systemctl enable --now container-edge-stage podman-auto-update.timer
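To confirm that the container started by systemd still carries the auto-update label, you can read the label back with a Go template. This is a sketch: `has_autoupdate_label` is a hypothetical name, and accepting `registry` as well as `image` rests on the assumption that newer podman releases use `registry` as the name for the same policy.

```bash
#!/bin/bash
# Hypothetical helper: succeed if the named container has the auto-update
# label that "podman auto-update" acts on.
has_autoupdate_label() {
    local name="$1"
    local value
    value="$(podman inspect --format '{{ index .Config.Labels "io.containers.autoupdate" }}' "$name")" || return 1
    # "image" is the value used in this post; "registry" is assumed to be
    # the newer name for the same policy.
    case "$value" in
        image|registry) return 0 ;;
        *) return 1 ;;
    esac
}

# Example, after the systemd unit has started the container:
# has_autoupdate_label edge-stage && echo "auto-update enabled"
# systemctl list-timers podman-auto-update.timer
```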

Configuring the production OSTree repository

On the production instance (ostree-prod.example.com), we will first install the Apache web server and ostree software:

# yum -y install httpd ostree

Ensure that the Apache (httpd) service is started and that the firewall allows HTTP traffic:

# systemctl enable --now httpd
# firewall-cmd --add-service=http
# firewall-cmd --permanent --add-service=http

Create the ostree repository that the prod environment will use to mirror each version released to the stage environment. This is as easy as creating a directory structure inside the directory Apache serves content from (/var/www/html):

# mkdir -p /var/www/html/ostree
# cd /var/www/html/ostree
# ostree --repo=repo init --mode=archive
# ostree --repo=repo remote add --no-gpg-verify edge-stage http://ostree-stage.example.com/repo

Sync the initial content:

# ostree --repo=repo pull --mirror edge-stage rhel/8/x86_64/edge
# ostree summary -u --repo=repo
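A quick way to confirm the initial sync is to inspect the repository on disk. The sketch below assumes the standard archive-repository layout, in which a mirror pull records the ref under `refs/heads/` and `ostree summary -u` writes a `summary` file at the top level. `check_prod_mirror` is a hypothetical helper that prints the commit clients will receive.

```bash
#!/bin/bash
# Hypothetical helper: sanity-check the production mirror after
# "pull --mirror" and "summary -u". Mirror pulls store refs under refs/heads/.
check_prod_mirror() {
    local repo="${1:-/var/www/html/ostree/repo}"
    local ref="${2:-rhel/8/x86_64/edge}"
    if [ ! -s "${repo}/refs/heads/${ref}" ]; then
        echo "ref ${ref} missing in ${repo}" >&2
        return 1
    fi
    if [ ! -f "${repo}/summary" ]; then
        echo "summary file missing in ${repo}" >&2
        return 1
    fi
    # Print the commit checksum that clients will receive for this ref.
    cat "${repo}/refs/heads/${ref}"
}

# Example:
# check_prod_mirror /var/www/html/ostree/repo rhel/8/x86_64/edge
```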

Now our prod repository is ready to serve clients. Promotions to production can be synchronized from the stage repository using a script that we will name update_prod.sh:

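#!/bin/bash
# Stop at the first failure so a failed pull never updates the summary.
set -e
ostree --repo=/var/www/html/ostree/repo pull --mirror edge-stage rhel/8/x86_64/edge
# Precompute a static delta so clients can fetch the update more efficiently.
ostree static-delta generate --repo=/var/www/html/ostree/repo rhel/8/x86_64/edge
# Refresh the summary file so clients see the new commit.
ostree summary -u --repo=/var/www/html/ostree/repo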
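Before running update_prod.sh, a promotion job might first check whether stage actually has something new. This sketch compares the commit that the stage repository advertises for the edge ref (read over HTTP from its `refs/heads/` path, which assumes stage serves a standard archive repository) with the commit production currently holds, obtained with `ostree rev-parse`. The function name, the overridable variables, and the return codes (0 for a new commit, 1 for no change, 2 for an error) are illustrative assumptions.

```bash
#!/bin/bash
# Hypothetical promotion gate to run before update_prod.sh.
# Returns 0 if stage advertises a different commit than prod holds,
# 1 if they match, and 2 if either commit cannot be read.
STAGE_URL="${STAGE_URL:-http://ostree-stage.example.com/repo}"
PROD_REPO="${PROD_REPO:-/var/www/html/ostree/repo}"
REF="${REF:-rhel/8/x86_64/edge}"

stage_has_new_commit() {
    local stage_commit prod_commit
    # An archive repository served over HTTP exposes each ref as a small
    # file containing the commit checksum.
    stage_commit="$(curl -fsS "${STAGE_URL}/refs/heads/${REF}")" || return 2
    prod_commit="$(ostree --repo="${PROD_REPO}" rev-parse "${REF}")" || return 2
    [ -n "$stage_commit" ] || return 2
    [ "$stage_commit" != "$prod_commit" ]
}

# Example:
# if stage_has_new_commit; then ./update_prod.sh; fi
```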

Further automation of these promotion activities can be achieved, and gated, with standard Jenkins pipelines, Ansible playbooks, or simple systemd timers, but these are topics for subsequent discussions.

Putting the pieces together

Now that our repositories are in place, imagine that we are targeting a monthly cadence for sending OS updates to our edge systems. We can generate each image update on our Image Builder system using the following command:

# composer-cli compose start-ostree gateway rhel-edge-container --url http://ostree-stage.example.com/repo/

Once the build finishes, obtain the UUID of the updated image and download the image:

# composer-cli compose status
# composer-cli compose image <UUID>
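Instead of picking the UUID out of the listing by eye, you can filter it. The sketch below assumes each status line ends with `<blueprint> <version> <type> <size>`, as in the sample output shown earlier, and that the blueprint version is bumped for every monthly update so the highest version identifies the newest build. `latest_finished_uuid` is a hypothetical helper, and it relies on GNU `sort -V` for version ordering.

```bash
#!/bin/bash
# Hypothetical helper: print the UUID of the FINISHED rhel-edge-container
# compose with the highest blueprint version for one blueprint.
# Assumes status lines end with "<blueprint> <version> <type> <size>".
latest_finished_uuid() {
    local blueprint="$1"
    local uuid
    uuid="$(composer-cli compose status |
        awk -v bp="$blueprint" '$2 == "FINISHED" && $(NF-3) == bp && $(NF-1) == "rhel-edge-container" { print $(NF-2), $1 }' |
        sort -V -k1,1 |
        tail -n 1 |
        awk '{ print $2 }')"
    if [ -z "$uuid" ]; then
        echo "no finished compose found for blueprint $blueprint" >&2
        return 1
    fi
    echo "$uuid"
}

# Example:
# UUID="$(latest_finished_uuid gateway)" && composer-cli compose image "$UUID"
```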

Then, push the downloaded archive to the image registry:

# skopeo copy oci-archive:<UUID>-rhel84-container.tar docker://quay.io/[account]/edge-stage

At this point, the ostree update will be available in the stage environment as soon as podman-auto-update.timer runs. This is the new process for generating updates showcased by this scenario, and it could not be simpler! Once you are ready to promote the changes to production, update the production repository by executing the update_prod.sh script defined earlier on the prod repository machine:

# ./update_prod.sh

If ostree-prod.example.com is behind a CDN, it can handle sending updates to a massive number of clients. Our example assumes that clients are configured to pull updates from their corresponding ostree mirror. RHEL for Edge systems can use rpm-ostree’s ability to automatically stage updates in the background and then apply them with systemd timers during maintenance windows. These configurations are explored in greater detail, along with a full end-to-end demo, in our GitHub demo repository.
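On the client side, automatic staging is controlled by the `AutomaticUpdatePolicy` setting in `/etc/rpm-ostreed.conf`, whose values include `none`, `check`, and `stage`. The helper below is a hypothetical sketch that edits an rpm-ostreed.conf-style file with GNU sed; it assumes the file has a `[Daemon]` section, as the stock file typically does. Applying the staged update during a maintenance window is not covered here.

```bash
#!/bin/bash
# Hypothetical helper: set rpm-ostree's automatic update policy
# (none, check, or stage) in an rpm-ostreed.conf-style file using GNU sed.
set_update_policy() {
    local conf="$1"
    local policy="$2"
    if grep -q '^#\?AutomaticUpdatePolicy=' "$conf"; then
        # Replace the existing (possibly commented-out) setting in place.
        sed -i "s/^#\?AutomaticUpdatePolicy=.*/AutomaticUpdatePolicy=${policy}/" "$conf"
    else
        # Assumes a [Daemon] section exists; append the setting right after it.
        sed -i "/^\[Daemon\]/a AutomaticUpdatePolicy=${policy}" "$conf"
    fi
}

# Example on an edge client (as root):
# set_update_policy /etc/rpm-ostreed.conf stage
# rpm-ostree reload
# systemctl enable --now rpm-ostreed-automatic.timer
```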

The enhancements in RHEL 8.4 enable new capabilities at the edge. Based on the concepts introduced in this discussion and in the prior article, “What’s new for RHEL for Edge in 8.4”, you should now be able to:

- Build RHEL for Edge images and publish them to container registries.
- Automate the staging of content for deployment to systems at the edge.
- Mirror updates to a production repository serving a fleet of systems.

By incorporating these concepts, you can move toward managing many, many edge devices, and their lifecycle, using Red Hat Enterprise Linux and RHEL for Edge!

About the authors

Ben Breard is a Senior Principal Product Manager at Red Hat, focusing on Red Hat Enterprise Linux and Edge Offerings.

Andrew Block is a Distinguished Architect at Red Hat, specializing in cloud technologies, enterprise integration and automation.
