Today, it is essential to provide services to customers continuously, with no interruptions or with only minimal disruption. The Red Hat Enterprise Linux (RHEL) High Availability Add-On can help you achieve this goal by improving the reliability, scalability and availability of production systems. High availability (HA) clusters eliminate single points of failure and fail services over from one cluster node to another when a node stops working.

In this article, I demonstrate how to use the ha_cluster RHEL system role to configure an HA cluster that runs an Apache HTTP server with shared storage in active/passive mode.

RHEL system roles are a collection of Ansible roles and modules included in RHEL that provide consistent workflows and streamline the execution of manual tasks. For more information on RHEL HA clustering, see the Configuring and managing high availability clusters documentation.

Environment overview

The example environment has a control node system named controlnode and two managed nodes, rhel8-node1 and rhel8-node2, all running RHEL 8.6. Both managed nodes are powered through an APC power switch with the hostname apc-switch.

I want to create a cluster named rhel8-cluster that consists of the nodes rhel8-node1 and rhel8-node2. The cluster will run an Apache HTTP server in active/passive mode with a floating IP address, serving pages from an ext4 file system on an LVM logical volume. Fencing will be provided by apc-switch.

Both cluster nodes are connected to shared storage that holds an ext4 file system on an LVM logical volume, and an Apache HTTP server has been installed and configured on both nodes. For guidance, see the chapters on configuring an LVM volume with an ext4 file system in a Pacemaker cluster and on configuring an Apache HTTP server in Configuring and managing high availability clusters.

I have already set up an Ansible service account named ansible on all three servers and configured SSH key authentication so that the ansible account on controlnode can log in to each of the nodes. In addition, the ansible service account has access to the root account through sudo on each node. I have also installed the rhel-system-roles and ansible packages on controlnode. For details on these tasks, see the introductory article on RHEL system roles.

Defining the inventory file and role variables

The first step is to create a new directory structure on the controlnode system:

[ansible@controlnode ~]$ mkdir -p ha_cluster/group_vars

These directories will be used as follows:

- The ha_cluster directory will contain the playbook and the inventory file.

- The ha_cluster/group_vars directory will contain the variable files for the inventory groups; each file applies to the hosts in the Ansible group of the same name.

I need to define an Ansible inventory file that lists and groups the hosts I want the ha_cluster system role to configure. I will create the inventory file at ha_cluster/inventory.yml with the following content:

---
all:
  children:
    rhel8_cluster:
      hosts:
        rhel8-node1:
        rhel8-node2:

The inventory defines a group named rhel8_cluster and assigns the two managed nodes to it.
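As a quick sanity check of the grouping, the sketch below writes the same inventory into a scratch directory and, if the ansible-inventory command happens to be installed, prints the group tree. The scratch directory and the workdir and inventory variable names are assumptions made so the example is self-contained; in the setup described here the file lives at ha_cluster/inventory.yml.

```shell
#!/bin/sh
# Sketch: write the example inventory to a scratch directory and inspect it.
# The scratch location is an assumption for illustration only.
workdir=$(mktemp -d)
inventory="$workdir/inventory.yml"

# Indentation matters in YAML: each level is nested two spaces deeper.
cat > "$inventory" <<'EOF'
---
all:
  children:
    rhel8_cluster:
      hosts:
        rhel8-node1:
        rhel8-node2:
EOF

# ansible-inventory ships with Ansible; skip the check if it is not installed.
if command -v ansible-inventory >/dev/null 2>&1; then
    ansible-inventory -i "$inventory" --graph
else
    echo "ansible-inventory not found; skipping the graph" >&2
fi
```

If the grouping is right, both managed nodes should appear under the rhel8_cluster group in the printed tree.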

Next, I will define the role variables that control the behavior of the ha_cluster role when it runs. The README.md file for the ha_cluster role is available at /usr/share/doc/rhel-system-roles/ha_cluster/README.md and contains important information about the role, including a list of the available role variables and how to use them.

One of the variables that must be defined for the ha_cluster role is ha_cluster_hacluster_password, which sets the password for the hacluster user. I will use Ansible Vault to encrypt the password so that it is not stored in plain text.

[ansible@controlnode ~]$ ansible-vault encrypt_string 'your-hacluster-password' --name ha_cluster_hacluster_password
New Vault password:
Confirm New Vault password:
ha_cluster_hacluster_password: !vault |
          $ANSIBLE_VAULT;1.1;AES256
          3761353364666461323130643739313936343135663237393633656164393161306535
          39656265373663636632383930323230343731666164373766353161630a3034343163
          3331626434373633653762663263373536393330393437366662626337396239333331
          6461616136396165326339626639663437626338343530360a39366664336634663237
          3330393836313262633264313732666161306263333034623866343334306663333361
          66653932663535376538656466383762343065
Encryption successful

Replace your-hacluster-password with the real password. When the command runs, it prompts for a vault password; the same vault password will be needed later to decrypt the variable when the playbook runs. After I type the vault password and then type it again to confirm it, the encrypted variable is shown in the output. I will place it in the variables file that I create in the next step.

Now I will create a file that defines the variables for the cluster nodes listed in the rhel8_cluster inventory group. The file is ha_cluster/group_vars/rhel8_cluster.yml and has the following content:

---
ha_cluster_cluster_name: rhel8-cluster
ha_cluster_hacluster_password: !vault |
  $ANSIBLE_VAULT;1.1;AES256
  3761353364666461323130643739313936343135663237393633656164393161306535
  39656265373663636632383930323230343731666164373766353161630a3034343163
  3331626434373633653762663263373536393330393437366662626337396239333331
  6461616136396165326339626639663437626338343530360a39366664336634663237
  3330393836313262633264313732666161306263333034623866343334306663333361
  66653932663535376538656466383762343065
ha_cluster_fence_agent_packages:
  - fence-agents-apc-snmp
ha_cluster_resource_primitives:
  - id: myapc
    agent: stonith:fence_apc_snmp
    instance_attrs:
      - attrs:
          - name: ipaddr
            value: apc-switch
          - name: pcmk_host_map
            value: rhel8-node1:1;rhel8-node2:2
          - name: login
            value: apc
          - name: passwd
            value: apc
  - id: my_lvm
    agent: ocf:heartbeat:LVM-activate
    instance_attrs:
      - attrs:
          - name: vgname
            value: my_vg
          - name: vg_access_mode
            value: system_id
  - id: my_fs
    agent: ocf:heartbeat:Filesystem
    instance_attrs:
      - attrs:
          - name: device
            value: /dev/my_vg/my_lv
          - name: directory
            value: /var/www
          - name: fstype
            value: ext4
  - id: VirtualIP
    agent: ocf:heartbeat:IPaddr2
    instance_attrs:
      - attrs:
          - name: ip
            value: 198.51.100.3
          - name: cidr_netmask
            value: 24
  - id: Website
    agent: ocf:heartbeat:apache
    instance_attrs:
      - attrs:
          - name: configfile
            value: /etc/httpd/conf/httpd.conf
          - name: statusurl
            value: http://127.0.0.1/server-status
ha_cluster_resource_groups:
  - id: apachegroup
    resource_ids:
      - my_lvm
      - my_fs
      - VirtualIP
      - Website

With these variables, the ha_cluster role will create a cluster named rhel8-cluster on the nodes.

The cluster will have one fence device, myapc, of type stonith:fence_apc_snmp. The device is reachable at the hostname apc-switch with the login apc and the password apc. The cluster nodes are powered through this device: rhel8-node1 is plugged into socket 1 and rhel8-node2 into socket 2. Because no other fence devices will be used, I set the ha_cluster_fence_agent_packages variable, which overrides its default value and prevents other fence agents from being installed.
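The pcmk_host_map value packs the node-to-socket wiring into one string of node:socket pairs separated by semicolons. Purely to illustrate that format (this is not how Pacemaker itself parses the attribute), the following shell sketch looks up the socket for a given node. The outlet_for function name is hypothetical.

```shell
#!/bin/sh
# Sketch: look up a node's socket in a "node:socket;node:socket" string.
# outlet_for is a made-up helper for illustration, not a Pacemaker or pcs tool.
# Usage: outlet_for NODE HOST_MAP
outlet_for() (
    IFS=';'                          # split the map on semicolons
    for entry in $2; do
        # ${entry%%:*} is the node name, ${entry#*:} is the socket number
        if [ "${entry%%:*}" = "$1" ]; then
            printf '%s\n' "${entry#*:}"
            exit 0
        fi
    done
    exit 1                           # node not present in the map
)

host_map='rhel8-node1:1;rhel8-node2:2'

for n in rhel8-node1 rhel8-node2; do
    printf '%s -> socket %s\n' "$n" "$(outlet_for "$n" "$host_map")"
done
# prints:
#   rhel8-node1 -> socket 1
#   rhel8-node2 -> socket 2
```

The function body runs in a subshell, so the temporary change to IFS does not leak into the calling shell.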

Four resources will run in the cluster:

- The LVM volume group my_vg will be activated by the my_lvm resource of type ocf:heartbeat:LVM-activate.

- The ext4 file system will be mounted from the shared storage device /dev/my_vg/my_lv onto /var/www by the my_fs resource of type ocf:heartbeat:Filesystem.

- The floating IP address 198.51.100.3/24 for the HTTP server will be managed by the VirtualIP resource of type ocf:heartbeat:IPaddr2.

- The HTTP server will be represented by the Website resource of type ocf:heartbeat:apache, with its configuration file stored at /etc/httpd/conf/httpd.conf and its status page for monitoring available at http://127.0.0.1/server-status.

All of the resources are placed in the apachegroup group so that they run on a single node and start in the specified order: my_lvm, my_fs, VirtualIP, Website.
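In Pacemaker, the members of a resource group start in the order listed and stop in the reverse order. The shell sketch below only illustrates that ordering for apachegroup; it does not talk to the cluster, and the variable names are made up for the example.

```shell
#!/bin/sh
# Sketch: derive the stop order of a resource group from its start order.
# This models the ordering rule only; it does not query or change a cluster.
group_members='my_lvm my_fs VirtualIP Website'

start_order=$group_members
stop_order=''
for resource in $group_members; do
    # Prepend each member so the final list is the start order reversed.
    stop_order="$resource${stop_order:+ $stop_order}"
done

echo "start order: $start_order"
echo "stop order:  $stop_order"
# prints:
#   start order: my_lvm my_fs VirtualIP Website
#   stop order:  Website VirtualIP my_fs my_lvm
```

This is why the volume group is listed first: the file system cannot be mounted before my_lvm has activated my_vg, and the web server is stopped before the storage underneath it is released.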

Creating the playbook

The next step is to create the playbook file at ha_cluster/ha_cluster.yml with the following content:

---
- name: Deploy a cluster
  hosts: rhel8_cluster
  roles:
    - rhel-system-roles.ha_cluster

This playbook calls the ha_cluster system role for all of the systems defined in the rhel8_cluster inventory group.

Running the playbook

At this point, everything is in place and I am ready to run the playbook. For this demonstration, I use a RHEL control node and run the playbook from the command line. I use the cd command to move into the ha_cluster directory and then the ansible-playbook command to run the playbook.

[ansible@controlnode ~]$ cd ha_cluster/
[ansible@controlnode ha_cluster]$ ansible-playbook -b -i inventory.yml --ask-vault-pass ha_cluster.yml

I specify that the ha_cluster.yml playbook should be run, that it should run as root (the -b flag), that the inventory.yml file should be used as my Ansible inventory (the -i flag), and that I should be prompted for the vault password to decrypt the ha_cluster_hacluster_password variable (the --ask-vault-pass flag).

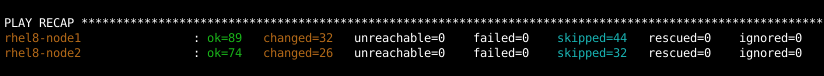

After the playbook completes, I verify that none of the tasks failed.

Validating the configuration

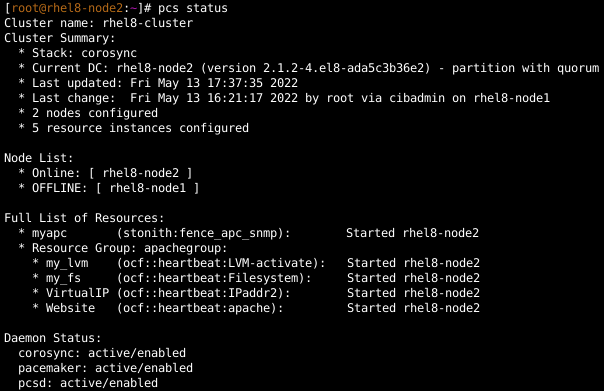

To validate that the cluster has been set up and is running its resources, I log in to rhel8-node1 and display the cluster status.

I also check that rhel8-node2 displays the same output.

Next, I open a web browser and connect to the IP address 198.51.100.3 to verify that the website is accessible.

To test failover, I pull a network cable out of rhel8-node1. After a while, the cluster performs the failover and fences rhel8-node1. I then log in to rhel8-node2 and display the cluster status. It shows that all of the resources have moved from rhel8-node1 to rhel8-node2. I also reload the website in the web browser to verify that it is still accessible.
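Because failover takes a little while, the manual browser reload can be replaced by a small retry loop. The sketch below defines a hypothetical helper, wait_for, that reruns a command until it succeeds or the attempts run out. The helper name, the attempt count and the delay are assumptions chosen for illustration.

```shell
#!/bin/sh
# Sketch: retry a command until it succeeds or the attempts are used up.
# wait_for is a made-up helper, not part of pcs, Pacemaker or Ansible.
# Usage: wait_for ATTEMPTS DELAY_SECONDS COMMAND [ARGS...]
wait_for() {
    attempts=$1
    delay=$2
    shift 2
    i=1
    while [ "$i" -le "$attempts" ]; do
        if "$@"; then
            return 0                 # the command succeeded
        fi
        if [ "$i" -lt "$attempts" ]; then
            sleep "$delay"           # pause before the next attempt
        fi
        i=$((i + 1))
    done
    return 1                         # every attempt failed
}

# Offline demonstration with commands that always succeed or always fail.
wait_for 3 0 true && echo "command succeeded"
wait_for 2 0 false || echo "command kept failing"
```

For instance, `wait_for 30 2 curl -fsS -o /dev/null http://198.51.100.3/` would poll the floating IP address for roughly a minute, assuming curl is installed on the machine doing the check and that machine can reach the address.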

I then reconnect rhel8-node1 to the network and reboot it so that it rejoins the cluster.

Conclusion

The ha_cluster RHEL system role helps you configure RHEL high availability clusters that run a variety of workloads quickly and consistently. In this post, I showed how to use the role to configure an Apache HTTP server that serves a website from shared storage in active/passive mode.

Red Hat offers many other RHEL system roles that automate other important aspects of a RHEL environment. To explore them, see the list of available RHEL system roles and start managing your RHEL servers in a more efficient, consistent and automated way.

Want to learn more about Red Hat Ansible Automation Platform? Read our e-book, The automation architect's handbook.

About the author

Tomas Jelinek is a Software Engineer at Red Hat with over seven years of experience with RHEL High Availability clusters.
