This is the second part of a series on how to integrate Ansible and Jenkins. If you missed the first part, you’ll want to read it before this one, since we’re going to use the same scenario to demonstrate the Ansible Tower integration with Jenkins.

Red Hat Ansible Tower is the next step for your infrastructure automation. With it, you can manage and control your playbook execution, orchestrate multiple executions, and integrate with third-party inventories and services.

Ansible Tower opens a world of possibilities when integrated with Jenkins. Compared with the Ansible engine integration, it cuts a lot of boilerplate configuration from Jenkins, such as controlling where to deploy an application and managing the playbook execution. Most importantly, it avoids configuring SSH keys on the Jenkins side. Jenkins doesn’t need to know where the application is going to be deployed: it could be in a public cloud, in a blade next to it, or even in a virtual guest on your laptop. Leave the heavy lifting to Ansible.

Ansible Tower Overview

Before we start to integrate Jenkins with Tower, it’s worth spending a couple of minutes to understand the big picture and how its components work. Here’s a 10 minute video with a demonstration of the basic concepts on the Tower website that’s worth watching.

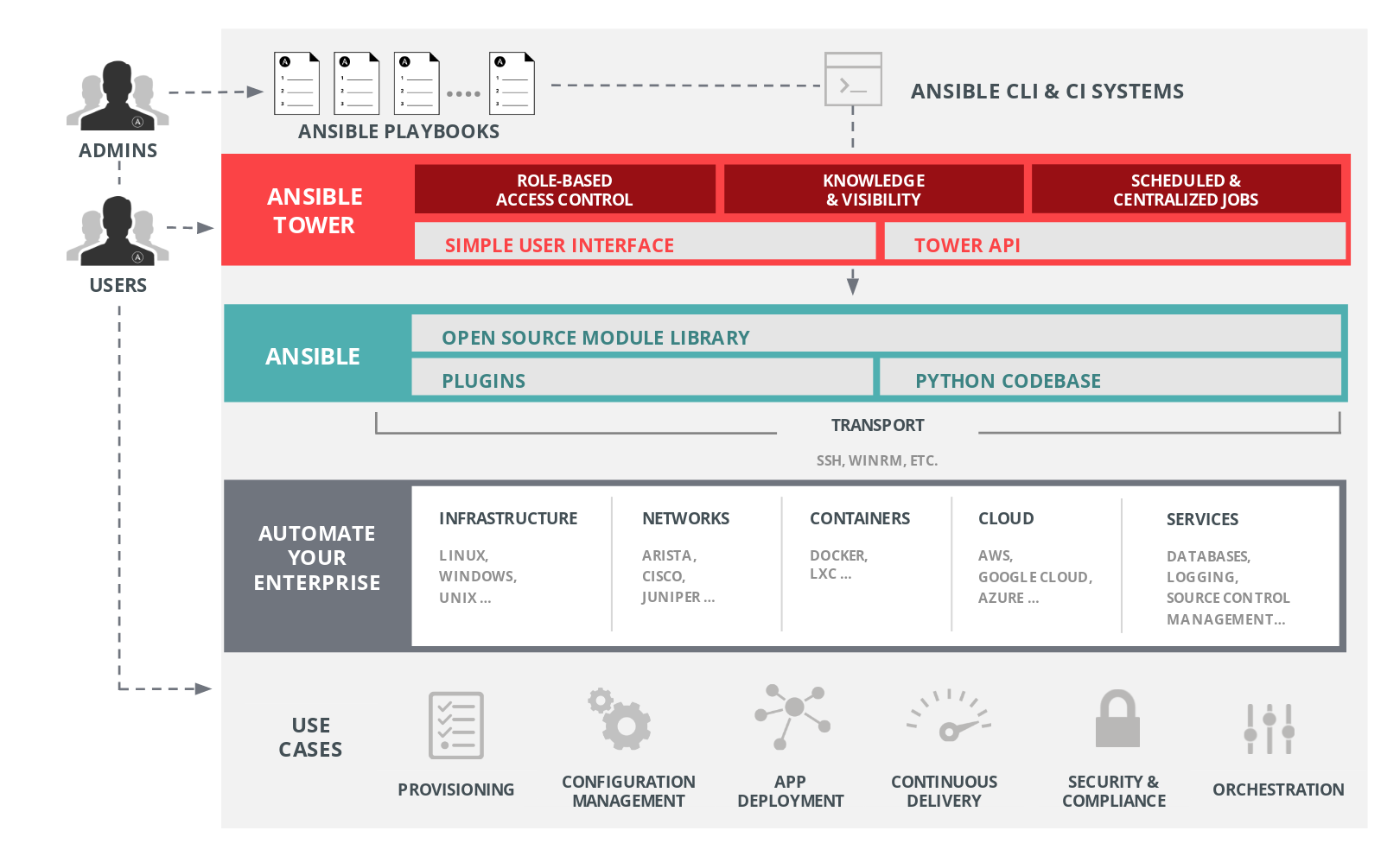

Figure 1 gives an overview of the Tower components. It’s important to become familiar with it since you’re going to deep dive in Tower architecture later in this post.

The following are the most important components and how they link with each other:

- Credentials: used by Ansible Tower for authentication when running jobs against machines, synchronizing repositories, and performing any other task that requires authentication outside Tower. The SSH keys used to access the machines to be provisioned are set in this component.

- Projects: a project is a logical collection of Ansible playbooks. It is the first component to configure when you start working with Tower. It’s possible to add playbooks manually to the Tower directory, but linking the project to a source control management (SCM) system is the recommended approach, since it makes playbook changes easier to handle.

- Inventories: a collection of hosts against which jobs can be launched, the same as an Ansible inventory file. The difference is that you can manage inventories from a rich web console or API and have them synced with one of Ansible Tower’s supported cloud providers, CMDBs or other internal systems.

- Job template: a definition and set of parameters for running an Ansible job. Job templates are useful for configuring and running the same job multiple times. It’s the component that links projects, inventories and credentials together.

- Job: an instance of a playbook execution. Tower uses a job template to start a job execution. Logs and execution data can be checked later in the Tower web console.

These components play an important role in the automation workflow and will be referenced later in this post to configure our environment to deploy the Soccer Stats application.
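Each of these components is also exposed as a collection in Tower's REST API. As a rough orientation, the sketch below maps the component names above to their collection paths, assuming Tower's API v2 under `/api/v2/`. The helper name `tower_endpoint` is made up for this illustration.

```shell
# Hypothetical helper: map a Tower component name to its API v2 collection path.
# Assumes the API is served under /api/v2/ on your Tower host.
tower_endpoint() {
  case "$1" in
    credentials)   echo "/api/v2/credentials/" ;;
    projects)      echo "/api/v2/projects/" ;;
    inventories)   echo "/api/v2/inventories/" ;;
    job_templates) echo "/api/v2/job_templates/" ;;
    jobs)          echo "/api/v2/jobs/" ;;
    *)             echo "unknown component: $1" >&2; return 1 ;;
  esac
}

# Usage: print the path for the job templates collection
#   tower_endpoint job_templates
```

Appending one of these paths to your Tower base URL gives the address you could query with an authenticated HTTP client.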

If you want to go further inside Red Hat Ansible Tower, take a look at the official documentation. There are a lot of resources including guides, white papers, books and videos.

Integrating Tower with Jenkins

Now that you are familiar with Ansible Tower basic concepts, it’s time to put Jenkins and the CI/CD process into the equation. Having the Tower environment configured, we’re going to set up Jenkins with Tower data and then create the pipeline that will be used to deploy our application.

Installing and configuring Ansible Tower

Getting started with Tower is pretty straightforward, since there are three options to have an environment ready. We chose the Vagrant box option because it was easier to reuse the Vagrant file from the previous lab. It was just a matter of adding a new machine:

    # vagrant up tower --provider virtualbox
    config.vm.define "tower" do |tower|
      # Use the machine-scoped variable so the setting applies to this machine only
      tower.vm.hostname = "tower.local"
      tower.vm.box = "ansible/tower"
      tower.vm.network :private_network, ip: "192.168.56.2"
      tower.vm.provider :virtualbox do |v|
        v.gui = false
        v.memory = 2048
        v.cpus = 2
      end
    end

To run Vagrant and have Ansible Tower ready to be used in your local environment, follow the steps below:

- Run “vagrant up tower --provider virtualbox” against the Vagrantfile

- Run “vagrant ssh tower” to get your credentials (look at the login welcome message). You might not see the message if you previously installed Ansible Tower using this method. In that case, update your box and try again.

- Go to https://192.168.56.2/, authenticate with the credentials acquired in the previous step, and claim your trial key.
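Before opening the browser, it can help to confirm that Tower is actually answering. The sketch below assumes Tower's API v2 exposes an unauthenticated ping endpoint at `/api/v2/ping/` and that the Vagrant box serves a certificate your machine does not trust (hence `-k`). The helper name `tower_ping_url` is made up for this illustration.

```shell
# Hypothetical helper: build the URL of Tower's ping endpoint for a given host or IP.
tower_ping_url() {
  printf 'https://%s/api/v2/ping/\n' "$1"
}

# Usage (needs the tower VM to be up; -k skips TLS certificate verification):
#   curl -k -s "$(tower_ping_url 192.168.56.2)"
```

If the call returns a JSON document, the web console should be reachable at the same address.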

Now let’s configure the Ansible Tower components, which completes our first step towards integrating Tower and Jenkins in the CI/CD process.

The User

The first thing to configure is the Jenkins user that will execute Ansible Tower jobs. Add a new user for Jenkins by going to the gear icon and selecting “User”. Choose a user name and password for Jenkins to execute our jobs and save it as a “Normal User”. Leave the other tabs for now.

The Project

Create a new Ansible Tower project under the “Project” menu. Choose a name and description, and leave the organization field with the default one. In the “SCM Type” combo box, choose git and fill the “SCM URL” text input with your playbook’s repo URL. For this lab you might fork the Soccer Stats repository. Then click on the “Permissions” tab and add the Jenkins user to the project.

The Inventory

In the previous lab using the Ansible engine, we had to create the inventory during the Jenkins Pipeline runtime via build parameters. Now, we can leave the responsibility for inventory management to Ansible Tower, relieving Jenkins of this burden.

For this lab, you might use the Vagrant file provided by the vagrant-alm repository to create the target virtual machine:

    config.vm.define "app_box", primary: true do |app|
      # Use the machine-scoped variable so the setting applies to this machine only
      app.vm.hostname = "app.local"
      app.vm.network :private_network, ip: "192.168.56.11"
      app.vm.provider :virtualbox do |v|
        v.gui = false
        v.memory = 512
      end
    end

Make sure that Ansible Tower and the virtual machines can reach each other through the network.
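One way to check this is to probe the target machines from inside the Tower VM (for example after “vagrant ssh tower”). The following is a minimal sketch, not part of the original lab: the function name `check_hosts` and the `PING_CMD` override are made up for this illustration, and the addresses in the usage comment are the ones from the Vagrant files above.

```shell
# PING_CMD can be overridden (e.g. PING_CMD=true) to dry-run the loop without a network.
PING_CMD="${PING_CMD:-ping -c 1}"

# Probe every host given as an argument; return non-zero if any is unreachable.
check_hosts() {
  rc=0
  for host in "$@"; do
    # $PING_CMD is intentionally unquoted so that its arguments are split into words.
    if $PING_CMD "$host" >/dev/null 2>&1; then
      echo "$host reachable"
    else
      echo "$host UNREACHABLE"
      rc=1
    fi
  done
  return "$rc"
}

# Usage, from inside the Tower VM:
#   check_hosts 192.168.56.11 app.local
```

A name that fails while its IP succeeds usually points to a missing hosts entry rather than a network problem.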

Add the virtual machine to Tower’s inventory component. To do this, go to “Inventories”, click on “Add” and then:

- Fill in a name for your inventory and set the organization with the Default value

- Go to the “Permissions” tab and add the Jenkins user

- In the “Groups” tab, create the group “app_server”

- Add your hosts in the “Hosts” tab. If you are using the Vagrant file, add the “app.local” host or the IP assigned to the virtual machine where you wish to deploy the application

- Save your new inventory

Another option is to integrate Tower with one of its supported cloud providers. It’s beyond the scope of this article to explain how to do this, but if you’re keen to learn a little bit more, give it a try. For this lab, it doesn’t matter, since Tower will provision whichever machines you put in the inventory.

The Credentials

You have to give Ansible Tower access to your machines. One of the most common ways to do that is using SSH. If you used the Vagrant file from the vagrant-alm repository, after creating the “app” machine, Vagrant will run a playbook to add a Jenkins user and its public key into the “authorized_keys” file of this machine. This user could be renamed to “ansible”, “tower” or anything meaningful to you. Just keep the same name in every step from here.

Add the SSH private key for the Jenkins user to the Tower’s credential component. Go to the “gear” menu, located at the top right of the Tower initial page and then click on “Credentials”. Import the SSH private key from our git repository to it by filling these fields in:

- Name of the user: jenkins

- Organization: Default

- Credential Type: machine

- Username: jenkins

- SSH Private Key: copy and paste from the vagrant-alm repository.

Click on “Save”, then go to the “Permissions” tab and grant access to Tower’s Jenkins user.

The Job Template

Now it’s time to put everything together in a job template. Go to the “Templates” menu and click on “Add” to add a new job template. A new page with some fields will appear. Fill it in with the following data:

- Name: a template name that is meaningful to you

- Job Type: Run

- Inventory: the inventory created at the previous section

- Project: the project created previously

- Playbook: if you used the Soccer Stats git repository during project creation, choose the playbook “provision/playbook.yml”

- Credential: the Jenkins user SSH credentials

- Verbosity: normal. If you are having problems with your playbook execution, a good way to find out what’s going on is to increase the log verbosity

- Leave the option “Enable Privilege Escalation” checked

- Extra variables: add the variables that our pipeline will pass to this job, one per line:

  - APP_NAME: 'soccer-stats'

  - ARTIFACT_URL: ''

- Save your work and then give the Jenkins user permissions to access it

And that’s it for now. You have your Red Hat Ansible Tower installation ready to execute a job that will deploy the Soccer Stats REST application to the elected machines.

Try out your configuration by running the job: click on the “rocket” icon in the table listing the job templates. As the value for the “ARTIFACT_URL” parameter, enter the Nexus repository URL of the Java WAR file that you wish to deploy.
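The same test run can in principle be triggered from the command line. The sketch below only builds the request body. It assumes Tower's API v2 launch endpoint (`/api/v2/job_templates/<id>/launch/`), HTTP basic authentication, and a template that accepts extra variables at launch time. The helper name `launch_payload`, the template ID, the password and the artifact URL are placeholders.

```shell
# Hypothetical helper: build the JSON body for a job template launch request.
# Values are inserted verbatim, so they must not contain double quotes or backslashes.
launch_payload() {
  printf '{"extra_vars": {"APP_NAME": "%s", "ARTIFACT_URL": "%s"}}\n' "$1" "$2"
}

# Usage (template ID 7, PASSWORD and the artifact URL are placeholders):
#   curl -k -s -u jenkins:PASSWORD -H 'Content-Type: application/json' \
#        -X POST -d "$(launch_payload soccer-stats http://nexus.example/app.war)" \
#        https://tower.local/api/v2/job_templates/7/launch/
```

If the template is not configured to accept variables at launch, Tower may ignore or reject them, so treat this as a starting point rather than a drop-in command.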

Configuring Jenkins

Now that you have your Ansible Tower installation ready, it’s time to configure Jenkins accordingly to integrate both platforms.

Provision a new Jenkins machine by using the Vagrant file from the vagrant-alm repository:

    config.vm.define "jenkins_box" do |jenkins|
      # Use the machine-scoped variable so the setting applies to this machine only
      jenkins.vm.hostname = "jenkins.local"
      jenkins.vm.network :private_network, ip: "192.168.56.3"
      jenkins.vm.provider :virtualbox do |v|
        v.gui = false
        v.memory = 2048
      end
    end

This machine already has the Ansible Tower plugin for Jenkins installed, thanks to the Vagrant provisioning step that uses the Ansible engine.

Log in to the Jenkins console with the admin user (password admin123). In the Jenkins menu go to Credentials, System, Global Credentials and add a new credential with the Jenkins user name and password configured during the Ansible Tower setup:

- Scope: Global

- Username: jenkins

- Password: your chosen password

- ID: tower

- Description: Ansible Tower Credentials

Those credentials are going to be used later by the Ansible Tower plugin to connect to the Tower API during the pipeline execution.

Go to the Jenkins menu again and click on Manage Jenkins, Configure System and locate “Ansible Tower” to set the information about the Tower installation:

- Name: tower

- URL: https://tower.local

- Credentials: jenkins (those credentials set in the previous step)

- Force Trust Cert: checked

- Enable Debugging: checked

Click on “Test Connection” and verify that everything is working. If not, check the connection between the Jenkins and Tower machines and check that you set up the user jenkins in Tower correctly.
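If the test fails, the two causes named above can be told apart from a shell on the Jenkins machine. This is a sketch under assumptions: it relies on Tower's API v2 serving the authenticated endpoint `/api/v2/me/` with basic authentication, and on curl printing `000` as the HTTP code when no response was received. The helper name `explain_status` is made up for this illustration.

```shell
# Hypothetical helper: translate an HTTP status code from Tower into a hint.
explain_status() {
  case "$1" in
    200) echo "connection and credentials OK" ;;
    401) echo "Tower reachable, but the user or password was rejected" ;;
    000) echo "could not reach Tower at all (DNS, network, or TLS problem)" ;;
    *)   echo "unexpected HTTP status $1" ;;
  esac
}

# Usage (PASSWORD is a placeholder; -k skips TLS certificate verification):
#   explain_status "$(curl -k -s -o /dev/null -w '%{http_code}' \
#       -u jenkins:PASSWORD https://tower.local/api/v2/me/)"
```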

Next, let’s configure the Maven tool to build the Soccer Stats application properly. Go to the Jenkins menu, click on Manage Jenkins, Global Tool Configuration and locate the Maven section. Click on “Maven Installations” and add a new one:

- Name: m3

- MAVEN_HOME: /opt/apache-maven-3.5.4 (this value is provided by our Jenkins installation playbook variable)

- Save your work

You should already have a Nexus repository set up if you followed part one of this series. If you don’t have it, provision it using the vagrant-alm repository Vagrant file with “vagrant up nexus_box --provider virtualbox”:

    config.vm.define "nexus_box", primary: true do |nexus|
      # Use the machine-scoped variable so the setting applies to this machine only
      nexus.vm.hostname = "nexus.local"
      nexus.vm.network :private_network, ip: "192.168.56.5"
      nexus.vm.provider :virtualbox do |v|
        v.gui = false
        v.memory = 1024
      end
    end

Jenkins will upload the artifact to the Nexus server during the pipeline execution. For this to work, the Nexus credentials have to be set in the Jenkins credentials store. Go to the Credentials menu, System, Global credentials, and add a new credential:

- Scope: Global

- Username: admin

- Password: admin123

- ID: nexus

- Description: Nexus Credentials

Log in to the Nexus dashboard with the user admin and password admin123. Go to the “gear” icon, click on repositories and locate the maven-releases repository. Click on it and update the following fields:

- Layout policy: permissive

- Strict Content Type Validation: unchecked

- Deployment policy: disable redeploy

This is the repository where Jenkins will upload the Soccer Stats application binaries for Ansible Tower to download and deploy to the virtual machines.

Running Tower Jobs with Jenkins Pipelines

Now that your servers are running and configured accordingly, the next step is to put them to work.

For this integration to work, a new pipeline has to be created on the Jenkins side. A pipeline similar to the one from the first part of this series is going to be used. All the details about it were explained in that post. If you have any questions about its internals, read the section “Example Pipeline Flow” of part one.

To add a new pipeline flow on the Jenkins side, log in to the Jenkins dashboard, click on “New Item” and choose “Pipeline” as the new project type, with the name “soccer-stats-pipeline-tower”:

- Check the option “Discard old builds” and keep a maximum of three builds to save disk space

- Check the option “Do not allow concurrent builds”

- Enter the git URL of the Soccer Stats project: https://github.com/ricardozanini/soccer-stats/.

- Check the option “This project is parameterized” and add two parameters:

  - Name: FULL_BUILD

    - Type: Boolean value

    - Default Value: checked

  - Name: HOST_PROVISION

    - Type: Choice value

    - Options: all the options you have on your inventory plus the option “all”, one per line.

The parameter “HOST_PROVISION” doesn’t need to exist. It’s just there to limit where Ansible Tower should deploy the application. In real-world scenarios this has to be controlled directly by Tower through the “Inventory” component. This parameter was added for demonstration purposes only. You might want to remove it.

The other parameter, “FULL_BUILD”, tells the pipeline flow whether the application needs a full build from the source or whether it should just take the last binary from Nexus and deploy the application.

In the “Pipeline” section of the project configuration, fill in the fields as follows:

- Definition: Pipeline script from SCM

- Repositories:

  - Repository URL: https://github.com/ricardozanini/soccer-stats (or your fork)

  - Credentials: none

- Branches to build: */master

- Script Path: JenkinsfileTower

Leave the other options with the default values and save your work.

The most important part of the pipeline that will delegate to Ansible Tower the responsibility to provision the machine and deploy the Soccer Stats application is the “Deploy” stage, since everything else remains like the previous version:

    stage('Deploy') {
        node {
            def pom = readMavenPom file: "pom.xml"
            // Turn the Maven groupId into a repository path ("a.b" becomes "a/b")
            def repoPath = "${pom.groupId}".replace(".", "/") + "/${pom.artifactId}"
            def version = pom.version

            // params.FULL_BUILD is a real Boolean; a bare FULL_BUILD can resolve to the string "true"/"false"
            if (!params.FULL_BUILD) {
                // Not building from source: ask Nexus for the latest released version
                sh "curl -o metadata.xml -s http://${NEXUS_URL}/repository/${NEXUS_REPO}/${repoPath}/maven-metadata.xml"
                version = sh(script: 'xmllint metadata.xml --xpath "string(//latest)"', returnStdout: true).trim()
            }
            def artifactUrl = "http://${NEXUS_URL}/repository/${NEXUS_REPO}/${repoPath}/${version}/${pom.artifactId}-${version}.war"

            // An empty limit means no limit; interpolating null would send the literal string "null"
            def hostLimit = (params.HOST_PROVISION == null || params.HOST_PROVISION == "all") ? "" : params.HOST_PROVISION

            ansibleTower(
                towerServer: 'tower',
                templateType: 'job',
                jobTemplate: '7',
                importTowerLogs: true,
                inventory: '2',
                removeColor: false,
                verbose: true,
                credential: '2',
                limit: hostLimit,
                extraVars: """---
    ARTIFACT_URL: "${artifactUrl}"
    APP_NAME: "${pom.artifactId}"
    """
            )
        }
    }

The first part of the “Deploy” stage is pretty straightforward since it’s defining the Nexus repository URL where the application binaries were deployed.
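To make the URL construction concrete, here is the same string assembly as a small shell sketch. It mirrors the pipeline logic (dots in the group ID become slashes, then the artifact ID, version and file name are appended). The function name `artifact_url` and all the sample values in the usage comment are made up for this illustration.

```shell
# Hypothetical helper mirroring the pipeline's artifactUrl computation.
# Arguments: Nexus host[:port], repository name, Maven groupId, artifactId, version.
artifact_url() (
  nexus_url=$1
  repo=$2
  group_id=$3
  artifact_id=$4
  version=$5
  # com.example.app becomes com/example/app, followed by the artifact ID
  repo_path="$(printf '%s' "$group_id" | tr '.' '/')/$artifact_id"
  printf 'http://%s/repository/%s/%s/%s/%s-%s.war\n' \
    "$nexus_url" "$repo" "$repo_path" "$version" "$artifact_id" "$version"
)

# Usage (sample values only):
#   artifact_url nexus.local:8081 maven-releases com.example.soccer soccer-stats 1.0.0
```

The function body runs in a subshell, so its variables do not leak into the calling shell.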

Having the Nexus URL set, the pipeline calls the Ansible Tower plugin API sending the relevant parameters:

- towerServer: the name of the Ansible Tower installation defined in the section “Configuring Jenkins”

- templateType: job

- jobTemplate: the ID of the job template defined in the Ansible Tower. This ID can be grabbed at the Tower URL

- inventory: the inventory ID

- credential: the user credential ID that should be used to run the job

- extraVars: YAML format file with the variables defined in the job template
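Since the IDs are read from Tower URLs, a tiny helper can extract them. The sketch assumes the ID is the last path segment, as in the job URL https://tower.local/#/jobs/95 shown in the console output of this post. That template, inventory and credential pages follow the same shape is an assumption, and the helper name `id_from_url` is made up for this illustration.

```shell
# Hypothetical helper: print the last path segment of a Tower web console URL.
# Does not handle URLs that carry a query string after the ID.
id_from_url() {
  url="${1%/}"                  # drop one trailing slash, if present
  printf '%s\n' "${url##*/}"    # keep everything after the last slash
}

# Usage:
#   id_from_url "https://tower.local/#/jobs/95"
```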

Running the pipeline flow is pretty simple. Click on the pipeline and then “Build with Parameters”. Make sure the virtual machines where the application should be deployed are up and running. Don’t bother installing anything since the playbook will handle this for you. Check the option “FULL_BUILD” and choose which host to provision.

After some time building the application, Jenkins will connect to Ansible Tower and you should see the Ansible engine logs in the Jenkins console output:

    Beginning Ansible Tower Run on tower
    Requesting tower to run job template 7
    Template Job URL: https://tower.local/#/jobs/95
    Identity added: /tmp/awx_95_pQ4xuw/credential_2 (/tmp/awx_95_pQ4xuw/credential_2)

At the end of the pipeline execution, you should see a summary of the Tower execution:

    PLAY RECAP *********************************************************************
    app.local   : ok=29  changed=3   unreachable=0  failed=0
    app2.local  : ok=29  changed=14  unreachable=0  failed=0
    localhost   : ok=2   changed=1   unreachable=0  failed=0
    Found environment variables to inject but the EnvInject plugin was not found
    Tower completed the requested job

Now the application is ready to be accessed at the URL: http://app.local:8080. If you SSH into the machine, you’ll see that Java was installed and the soccer-stats service is running locally in “active” state:

    $ vagrant ssh app_box
    $ java -version
    openjdk version "1.8.0_181"
    OpenJDK Runtime Environment (build 1.8.0_181-b13)
    OpenJDK 64-Bit Server VM (build 25.181-b13, mixed mode)
    $ systemctl status soccer-stats.service
    ● soccer-stats.service - soccer-stats
       Loaded: loaded (/usr/lib/systemd/system/soccer-stats.service; enabled; vendor preset: disabled)
       Active: active (running) since Sat 2018-09-08 15:47:10 UTC; 17min ago
     Main PID: 4501 (soccer-stats.wa)
       CGroup: /system.slice/soccer-stats.service
               ├─4501 /bin/bash /home/soccer-stats/soccer-stats.war
               └─4516 /usr/bin/java -Dsun.misc.URLClassPath.disableJarChecking=true -jar /home/soccer-stats/soccer-stats.war

Summary

Using the previous part of this series, “Integrating Ansible with Jenkins in a CI/CD process”, as inspiration, we took a similar path to integrate Ansible Tower with Jenkins. First, we briefly explained how Ansible Tower works, and then created a new Tower installation. With the servers running, integrating Ansible Tower and Jenkins was a matter of plugin configuration, thanks to the Tower REST API.

The pipeline execution took the application sources, built them, and uploaded the result to the artifact repository (Nexus). This way, Tower could access the application binaries during the deploy to install the application as a service with all its required dependencies, in this case the Java Virtual Machine.

The beauty of this integration is that Jenkins never touches the machine. Instead it delegates to Ansible Tower all the heavy work to provision the machine, having the application ready to be used. Jenkins doesn’t even know where the application is and what needs to be done.

Ansible Tower is a powerful tool to have in a CI/CD process, since it takes the responsibility for the environment provision and inventory management, leaving Jenkins with only one job: orchestrate the process. And thanks to its role-based access control features, anyone in the team, from Devs to Ops, can leverage it. It’s time to integrate!

About the author

Ricardo Zanini is a TAM in the Latin America region. He has expertise in integration, middleware and software engineering. Ricardo has been studying software quality since his first professional years and today helps strategic customers achieve success with Red Hat Middleware products and quality processes.
