Performance is a key focus of every Red Hat Enterprise Linux (RHEL) release. RHEL 8.4 introduces several performance enhancements that give system administrators and performance engineers simpler troubleshooting, richer visualization of performance metrics, and deeper performance insights. This post walks through some of these capabilities.

The newly improved RHEL web console

Just imagine that you only have a couple of minutes, and you want to quickly access live and historical performance metrics across CPU, memory, network and storage resources to understand their utilization, saturation and errors. What do you do?

With the new interface in the RHEL 8.4 web console, administrators can review key metrics and more easily identify where to start troubleshooting performance issues. They get a better understanding of what's going on with their local systems, as well as across the network environment.

If you’re new to the RHEL web console, the performance metrics can be found under System -> Overview, in the Usage card. Before you can see the history of performance metrics, you need to install the cockpit-pcp package.

You can click the “Install cockpit-pcp” button within the Usage card section to install this package.
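If you would rather work from a terminal, the same setup can be sketched as a small shell function. This is a minimal sketch, assuming a RHEL 8 host and a root shell. The function name `enable_metrics_history` is made up for illustration; the package and service names are the ones shipped with RHEL.

```shell
# Sketch: enable historical metrics for the web console from a terminal.
# Assumption: run as root on RHEL 8. The function name is hypothetical.
enable_metrics_history() {
    # Install the web console's PCP integration (pulls in PCP itself).
    dnf install -y cockpit-pcp &&
    # Start the PCP archive logger now and on every boot so history accumulates.
    systemctl enable --now pmlogger.service
}
```

Calling `enable_metrics_history` runs both steps in order and stops if the package installation fails. History only starts accumulating once pmlogger is running, so older time ranges stay empty at first.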

This new interface is divided into two sections. Across the top, we have four cards showing real-time data for CPU, Memory, Storage, and Network:

For CPU, we show utilization and saturation, followed by utilization metrics for the services using the most CPU. For memory, we show RAM utilization and swap usage, followed by memory utilization metrics for the top services. For disks, we show read/write I/O metrics and disk utilization percentages. For networking, we show bytes in and out for each network interface.

Along the bottom, provided you have PCP (Performance Co-Pilot) metric collection installed and enabled as explained earlier, you will see something similar to:

This graphic presents performance data ranging from the most recent samples to metrics collected several weeks ago. On the left, you get a list of events that may be of interest from a performance perspective, along with timestamps synced to the local machine clock. On the right, you get metrics for CPU, Memory, Disks, and Network visualized in four columns.

For each column, utilization metrics are on the left of the center line and saturation metrics are on the right. Hovering over the graph shows a tooltip with the precise numbers at a particular point in time. This gives you a better sense of where to start looking when troubleshooting and when a performance problem might have first appeared.
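To make the utilization versus saturation distinction concrete, here is a rough sketch of one common proxy for CPU saturation: the load average divided by the number of CPUs. Whether the web console computes its saturation value exactly this way is an assumption on our part, and the helper name `cpu_saturation` is made up for illustration. Note that the Linux load average also counts tasks in uninterruptible sleep, so treat the result as a hint rather than a precise measurement.

```shell
# cpu_saturation LOAD NCPUS: print the load per CPU. Values above 1.00
# suggest that runnable tasks are queuing for CPU time.
# Assumption: load average per CPU is only a rough saturation proxy.
cpu_saturation() {
    awk -v load="$1" -v cpus="$2" 'BEGIN { printf "%.2f\n", load / cpus }'
}

# On a live Linux system, feed in the 1-minute load average and CPU count.
if [ -r /proc/loadavg ]; then
    read -r load1 _ < /proc/loadavg
    cpu_saturation "$load1" "$(nproc)"
fi
```

For example, `cpu_saturation 6 4` prints `1.50`: a 4-CPU host with a load of 6 can be fully utilized and still have work waiting, which is the situation the saturation side of each column is meant to expose.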

Deeper insights with flame graphs

Now, there are times when the simple troubleshooting with the RHEL web console just cannot narrow down the root cause of a performance problem. In these cases, you need deeper insights into what’s going on, and for this reason, we have on-CPU flame graphs in RHEL.

This functionality allows you to profile the system and see where your CPU is spending most of its cycles. Being able to visualize this quickly is a significant step forward from having to look at pages full of stack trace data.

Before proceeding, make sure the perf and js-d3-flame-graph packages are installed on your system.

To capture flame graph data, you can run the perf command with the options shown below. After you’ve let the command run for a period of time (say 30 seconds), stop data collection by pressing Ctrl-C in the terminal.

[root@bigdemo1 ~]# perf script record flamegraph -F 99 -g
^C
[ perf record: Woken up 3 times to write data ]
[ perf record: Captured and wrote 1.194 MB perf.data (3448 samples) ]

To output the flame graph, you can run:

[root@bigdemo1 ~]# perf script report flamegraph

The command then reports:

dumping data to flamegraph.html
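If you would rather not time the Ctrl-C by hand, the record and report steps can be combined into a single bounded run. The sketch below wraps the one-step `perf script flamegraph` form in a hypothetical helper, `capture_flamegraph`, that profiles all CPUs for a fixed number of seconds. It assumes a root shell with perf and js-d3-flame-graph installed, and it writes flamegraph.html to the current directory.

```shell
# capture_flamegraph [SECONDS]: profile all CPUs at 99 Hz for a fixed
# duration (default 30 seconds) and write flamegraph.html in one step.
# Assumption: run as root; the function name is made up for illustration.
capture_flamegraph() {
    perf script flamegraph -a -F 99 sleep "${1:-30}"
}
```

Running `capture_flamegraph 60` profiles for a minute. Here `-a` samples every CPU and `sleep` simply bounds the collection window, so the profile is not limited to the sleep process itself.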

When you open the resulting flamegraph.html file in a browser, you will see something like the following:

The graph represents the 30 seconds of activity on the CPU that we profiled with perf. You can read this visualization from the bottom to the top to see the flow of the stack traces. Horizontally, the more space a frame occupies, the more samples it appeared in, and so the more time it spent on the CPU. Kernel stacks are shown in a shade of orange and user space stacks have a “warm” hue.

If you look closely at the above chart, the first line on the bottom simply says “root”, indicating the root of the stack frame trees. Moving up a line, we get the processes, which in this flame graph are “SetroubleshootP, mysqld, apache2, 240998, rpm, ksoftirqd, kworker, grafana-server, and redis-server”.

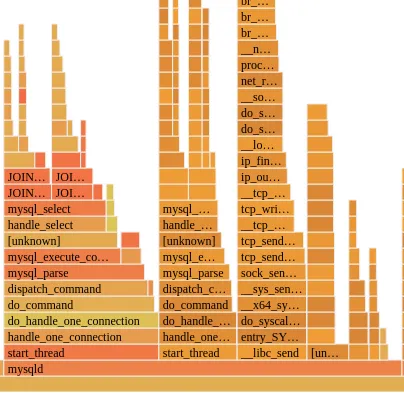

If you zoom in a bit more (you can click on each stack frame), you will notice that mysqld happens to have the most red activity on the host:

As we can see from the above, the “hottest” code path is the “start_thread” path that initiates a mysql_select that invokes a MySQL JOIN operation. Since JOINs are CPU intensive in a SQL database, this picture is expected and not necessarily something to worry about. If we didn’t already know that, the flame graph would tell us that our JOINs are taking a decent bit of CPU time.

While there is nothing necessarily wrong with the CPU consumption pattern in this particular case, there are many cases where an application is unfairly bottlenecking the CPU and “running hot”.
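The width rule described earlier (a frame is as wide as its share of the samples) can be sketched with a few lines of shell. The sample data below is invented for illustration and is not taken from the profile above. Each line stands for one sample, with frames separated by `;` from the root to the function that was on the CPU. The helper name `frame_widths` is also hypothetical.

```shell
# Hypothetical input: one line per sample, frames separated by ';'
# from the root (left) to the function on the CPU (right).
samples='root;mysqld;start_thread;mysql_select;JOIN::exec
root;mysqld;start_thread;mysql_select;JOIN::exec
root;mysqld;start_thread;handle_connection
root;redis-server;main;aeProcessEvents'

# frame_widths DEPTH: for every distinct stack prefix of DEPTH frames,
# print the percentage of all samples that pass through it. That
# percentage is the relative width of the frame in the flame graph.
frame_widths() {
    awk -F';' -v depth="$1" '
        { total++ }
        NF >= depth {
            key = $1
            for (i = 2; i <= depth; i++) key = key ";" $i
            count[key]++
        }
        END {
            for (key in count)
                printf "%s %.0f%%\n", key, 100 * count[key] / total
        }
    ' | sort
}

printf '%s\n' "$samples" | frame_widths 2
```

With this made-up data, depth 2 yields `root;mysqld 75%` and `root;redis-server 25%`, which is why mysqld would take up three quarters of its row. Keying on the whole prefix rather than on the frame name alone keeps identically named functions under different parents in separate boxes, as a flame graph does.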

Now, we have only scratched the surface when it comes to performance enhancements in RHEL 8.4.

Stay tuned for our upcoming posts, where we will share additional performance tools in RHEL such as improvements to our PCP and Grafana stack. In the meantime, we hope that you’ll find these new features extremely useful and efficient in helping you get to the root of performance problems. It’s time to get started with RHEL 8.4.

About the author

Karl Abbott is a Senior Product Manager for Red Hat Enterprise Linux focused on the kernel and performance. Abbott has been at Red Hat for more than 15 years, previously working with customers in the financial services industry as a Technical Account Manager.
