In the past few posts we have discussed the existing virtio-networking architectures, both the kernel-based one (vhost-net/virtio-net) and the userspace/DPDK-based one (vhost-user/virtio-pmd). Now we'll turn our attention to emerging virtio-networking architectures aimed at providing wire-speed performance to VMs.

In this post we will describe the building blocks for providing the data plane and control plane needed to achieve wire-speed performance. We will cover the SR-IOV technology and how it currently addresses this problem. We will then describe the virtio-networking approaches to this challenge, namely virtio full HW offloading and vDPA (virtual data path acceleration), with an emphasis on the benefits vDPA brings. We will conclude by comparing the previous virtio-networking architectures with the ones presented here.

This post is intended for those interested in understanding the essence of the different virtio-networking architectures, including vDPA, without going into all the details (and there are a lot of details). As usual, this post will be followed by a technical deep-dive post and a hands-on post.

Data plane and control plane for direct access to NIC

In the previous vhost-net/virtio-net and vhost-user/virtio-pmd architectures, the NIC was terminated in OVS in the kernel or in OVS-DPDK respectively, and the virtio backend interfaces were attached to another OVS port.

To speed things up, we now connect the NIC directly to the guest.

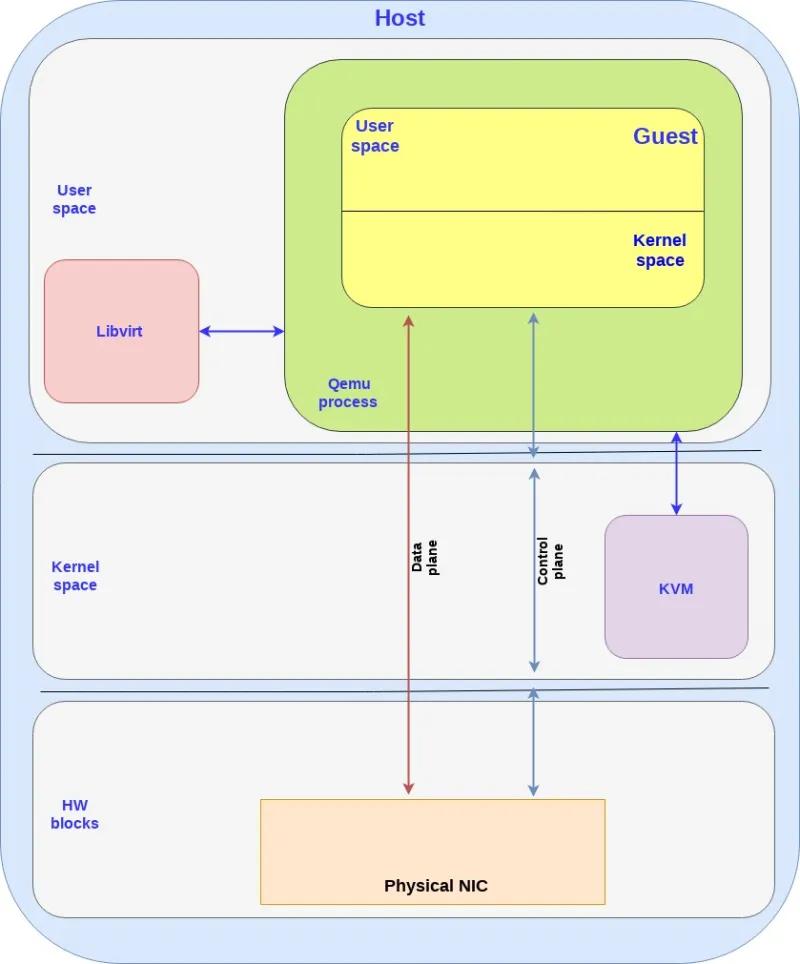

As in the previous virtio architectures, we split the communication channel towards the NIC into a control plane and a data plane:

- Control plane: used for configuration changes and capability negotiation between the NIC and the guest, both for establishing and for terminating the data plane.

- Data plane: used for transferring the actual data (packets) between the NIC and the guest. Connecting the NIC directly to the guest implies that the NIC must support the virtio ring layout.
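To make the requirement that the NIC "support the virtio ring layout" concrete, here is a minimal Python sketch of the split virtqueue layout from the virtio 1.x specification, written with `ctypes`. It only models the field layout of a descriptor and of a used-ring element, and computes how many bytes each of the three areas (descriptor table, available ring, used ring) occupies for a given queue size. The helper `split_ring_sizes` is hypothetical, and the packed virtqueue format is not covered.

```python
import ctypes


# One split-virtqueue descriptor (virtio 1.x fields are little-endian).
class VringDesc(ctypes.LittleEndianStructure):
    _fields_ = [
        ("addr", ctypes.c_uint64),   # address of the buffer in guest memory
        ("len", ctypes.c_uint32),    # buffer length in bytes
        ("flags", ctypes.c_uint16),  # NEXT / WRITE / INDIRECT
        ("next", ctypes.c_uint16),   # index of the chained descriptor if NEXT is set
    ]


# One entry of the used ring, written by the device.
class VringUsedElem(ctypes.LittleEndianStructure):
    _fields_ = [
        ("id", ctypes.c_uint32),     # head index of the completed descriptor chain
        ("len", ctypes.c_uint32),    # bytes the device wrote into the buffer
    ]


def split_ring_sizes(queue_size: int, event_idx: bool = True) -> dict:
    """Bytes used by each area of a split virtqueue (hypothetical helper)."""
    if queue_size <= 0 or queue_size > 32768 or queue_size & (queue_size - 1):
        raise ValueError("split virtqueue size must be a power of 2, at most 32768")
    extra = 2 if event_idx else 0  # optional used_event / avail_event field
    return {
        # descriptor table: one fixed-size descriptor per entry
        "desc": ctypes.sizeof(VringDesc) * queue_size,
        # available ring: flags + idx + ring[queue_size] (+ used_event)
        "avail": 2 + 2 + 2 * queue_size + extra,
        # used ring: flags + idx + ring[queue_size] (+ avail_event)
        "used": 2 + 2 + ctypes.sizeof(VringUsedElem) * queue_size + extra,
    }
```

For a 256-entry queue with the event-index fields, this gives 4096 bytes of descriptors, 518 bytes of available ring and 2054 bytes of used ring. The guest driver and the NIC must interpret exactly these bytes the same way, which is what lets the data plane go straight from the NIC to guest memory without translation.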

This diagram presents those interfaces:

Note the following points:

- For additional information on the KVM, libvirt and QEMU processes, please see the previous posts. A list of the posts so far is at the end of this post.

- The data plane goes directly from the NIC to the guest. In practice this is implemented with shared memory, allocated by the guest and accessible to both the guest and the NIC, bypassing the host kernel. This implies that both sides must use exactly the same ring layout; otherwise translations are required, and translations carry a performance penalty.

- The control plane, on the other hand, may pass through the host kernel or the QEMU process, depending on the implementation. We will get back to this later.

SR-IOV for isolating VM traffic

In the vhost-net/virtio-net and vhost-user/virtio-pmd architectures we had a software switch (OVS or other) that could take a single NIC on a phy-facing port and distribute its traffic to several VMs, with one VM-facing port per VM.

The most straightforward way to attach a NIC directly to a guest is device assignment, where we assign a full NIC to the guest kernel driver.

The problem is that the server has a single physical NIC exposed through PCI, so the question is: how can we create “virtual ports” on the physical NIC as well?

Single root I/O virtualization (SR-IOV) is a standard for a type of PCI device assignment that can share a single device among multiple virtual machines. In other words, it allows different VMs in a virtual environment to share a single NIC. This means a single root function, such as an Ethernet port, can appear as multiple separate physical devices, which addresses our problem of creating “virtual ports” on the NIC.

SR-IOV has two main types of functions:

- Physical functions (PFs): full PCI functions that are discovered, managed and configured like normal PCI devices. There is a single PF per NIC (or one per port on multi-port NICs), and it provides the actual configuration for the full NIC device.

- Virtual functions (VFs): simple PCI functions that control only part of the device and are derived from physical functions. Multiple VFs can reside on the same NIC.

We need to configure both the VFs, which represent virtual interfaces on the NIC, and the PF, which is the main NIC interface. For example, we can have a 10 Gb/s NIC with a single external port and 8 VFs. The speed and duplex of the external port are PF settings, while rate limiters are VF settings.
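On a Linux host, VFs are usually created by writing the desired count to the PF's `sriov_numvfs` sysfs attribute (bounded by `sriov_totalvfs`), and per-VF settings such as a TX rate limit are applied through the PF, for example with iproute2's `ip link set dev <pf> vf <n> max_tx_rate <Mbit/s>`. The Python sketch below assumes a hypothetical PF name (`ens1f0`), takes the sysfs root as a parameter so it can be tried against a scratch directory, and only builds the `ip` command rather than running it. Writing to the real sysfs requires root and an SR-IOV capable NIC and driver.

```python
from pathlib import Path


def enable_vfs(pf: str, num_vfs: int, sysfs_net: str = "/sys/class/net") -> int:
    """Create num_vfs VFs on the PF netdev `pf` through sysfs."""
    dev = Path(sysfs_net) / pf / "device"
    total = int((dev / "sriov_totalvfs").read_text().strip())
    if not 0 <= num_vfs <= total:
        raise ValueError(f"{pf} supports between 0 and {total} VFs")
    numvfs = dev / "sriov_numvfs"
    current = int(numvfs.read_text().strip())
    if current == num_vfs:
        return current  # already configured, nothing to do
    if current != 0:
        # The kernel refuses to switch directly between two non-zero
        # VF counts, so disable the existing VFs first.
        numvfs.write_text("0")
    numvfs.write_text(str(num_vfs))
    return num_vfs


def vf_rate_limit_cmd(pf: str, vf: int, max_tx_rate_mbps: int) -> list:
    """iproute2 command capping VF `vf`'s TX rate, configured via the PF."""
    return ["ip", "link", "set", "dev", pf, "vf", str(vf),
            "max_tx_rate", str(max_tx_rate_mbps)]
```

On a real host the command list could be passed to `subprocess.run(..., check=True)`. Note the split the paragraph describes: the VF count lives on the PF, while the rate limit is a per-VF property that is still set through the PF.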

The hypervisor maps virtual functions to virtual machines. Each VF can be mapped to only one guest at a time, although a VM can have multiple VFs.
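The one-guest-per-VF rule can be captured with a small bookkeeping structure. This is a purely hypothetical Python model (`VfPool` is not a libvirt or kernel API) of what a hypervisor-side manager has to enforce: a VF has at most one owner, while one VM may own several VFs.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class VfPool:
    """Hypothetical VF-to-VM assignment table for one PF."""
    num_vfs: int
    owner: Dict[int, str] = field(default_factory=dict)  # VF index -> VM name

    def assign(self, vf: int, vm: str) -> None:
        if not 0 <= vf < self.num_vfs:
            raise IndexError(f"no such VF: {vf}")
        current = self.owner.get(vf)
        if current is not None and current != vm:
            # A VF can be mapped to a single guest at a time.
            raise RuntimeError(f"VF {vf} is already assigned to {current}")
        self.owner[vf] = vm

    def release(self, vf: int) -> None:
        self.owner.pop(vf, None)

    def vfs_of(self, vm: str) -> List[int]:
        # A VM may hold several VFs.
        return sorted(vf for vf, name in self.owner.items() if name == vm)
```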

Let’s see how SR-IOV can be mapped to the host kernel, to host userspace DPDK or directly to the guest:

- OVS kernel with SR-IOV: we use SR-IOV to provide multiple phy-facing ports from the OVS perspective (with separate MACs, for example), although in practice we have a single physical NIC. This is done through the VFs. We map a section of the kernel memory (for each VF) to the specific VF on the NIC.

- OVS-DPDK with SR-IOV: SR-IOV lets traffic bypass the host kernel and go directly from the NIC to OVS-DPDK in user space. We map the host userspace memory to the VF on the NIC.

- SR-IOV for guests: we map the memory area of the guest to the NIC, bypassing the host altogether.

Note that when using device assignment, the ring layout is shared between the physical NIC and the guest. However, it’s proprietary to the specific NIC being used, hence it can only be implemented using the specific NIC driver provided by the NIC vendor.

Another note: there is also a fourth, common option, which is to assign the device to a DPDK application inside the guest userspace.

SR-IOV for mapping NIC to guest

Focusing on the SR-IOV-to-guest use case, the next question is how to efficiently send and receive packets to and from the NIC once the memory is mapped directly.

We have two approaches to solve this:

- Using the guest kernel driver: in this approach we use the NIC's (vendor-specific) driver in the guest kernel, while directly mapping the IO memory so that the HW device can directly access the guest kernel memory.

- Using the DPDK PMD in the guest: in this approach we use the NIC's (vendor-specific) DPDK PMD in the guest userspace, while directly mapping the IO memory so that the HW device can directly access the memory of the specific userspace process in the guest.

In this section we will focus on the approach using the DPDK PMD in the guest.
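Before a VF PMD can drive the VF from guest userspace, the VF usually has to be detached from any guest kernel driver and bound to `vfio-pci`. DPDK ships `dpdk-devbind.py` for this; the Python sketch below shows equivalent sysfs steps using the kernel's `driver_override` mechanism. It assumes the `vfio-pci` module is loaded and that vfio is usable in the guest (for example, because a vIOMMU is exposed to it). The PCI address `0000:00:05.0` is a placeholder, and the sysfs root is a parameter so the function can be exercised against a scratch directory.

```python
from pathlib import Path


def bind_to_vfio(bdf: str, sysfs_pci: str = "/sys/bus/pci") -> None:
    """Rebind the PCI function `bdf` (e.g. "0000:00:05.0") to vfio-pci."""
    pci = Path(sysfs_pci)
    dev = pci / "devices" / bdf
    # 1. Restrict future driver matching for this device to vfio-pci.
    (dev / "driver_override").write_text("vfio-pci")
    # 2. Detach it from its current driver (e.g. the vendor VF kernel driver).
    unbind = dev / "driver" / "unbind"
    if unbind.exists():
        unbind.write_text(bdf)
    # 3. Re-probe: with the override in place, only vfio-pci will claim it.
    (pci / "drivers_probe").write_text(bdf)
```

Once the VF is owned by vfio-pci, a DPDK application in the guest can open it, and the vendor's VF PMD drives the data plane from userspace.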

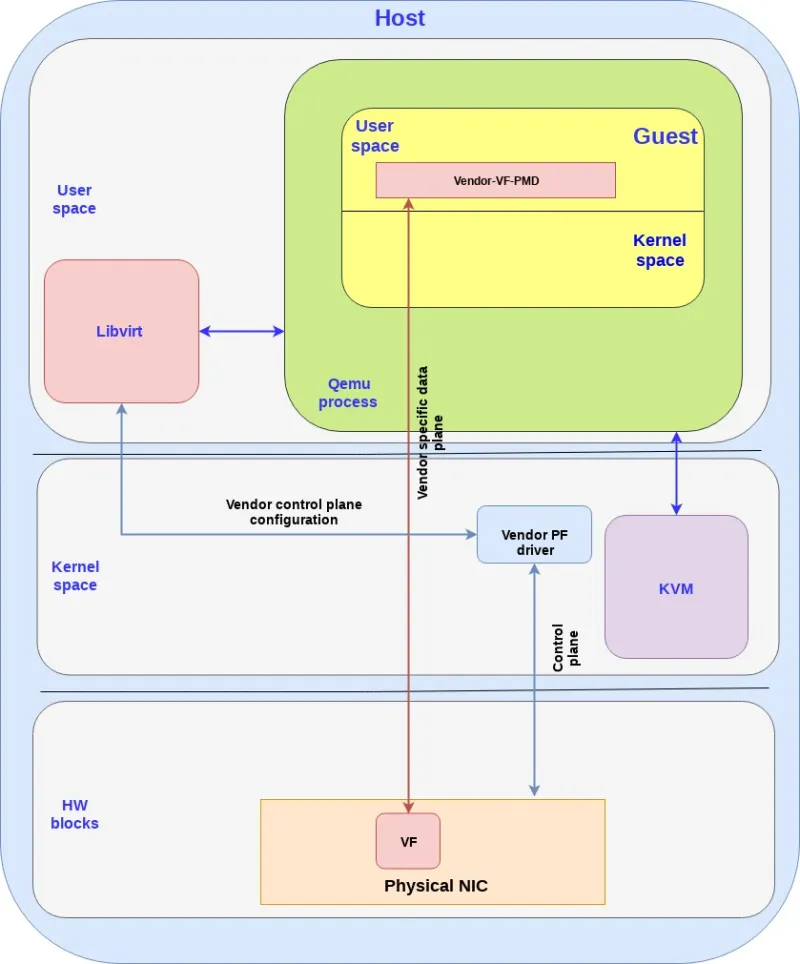

The following diagram brings this all together:

Note the following points:

- The data plane is vendor specific and goes directly to the VF.

- For SR-IOV, vendor-specific NIC drivers are required both in the host kernel (PF driver) and in the guest userspace (VF PMD) to enable this solution.

- The host kernel driver and the guest userspace PMD don’t communicate directly. The PF/VF drivers are configured through other interfaces (e.g. the host PF driver can be configured by libvirt).

- The vendor VF PMD in the guest userspace is responsible for configuring the NIC's VF, while the vendor PF driver in the host kernel manages the full NIC.
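As an illustration of configuring a VF through the host rather than through the guest PMD, libvirt can assign a VF to a guest with an `<interface type='hostdev'>` element; libvirt then programs the VF's MAC address (and optional VLAN tag) through the PF driver. The Python sketch below generates such an element with the standard library. The PCI address, MAC and VLAN values are placeholders, and the helper `vf_interface_xml` is hypothetical.

```python
import xml.etree.ElementTree as ET
from typing import Optional


def vf_interface_xml(bdf: str, mac: str, vlan: Optional[int] = None) -> str:
    """Build a libvirt <interface type='hostdev'> element for one VF."""
    domain, bus, slot_func = bdf.split(":")      # e.g. "0000:03:10.1"
    slot, function = slot_func.split(".")
    iface = ET.Element("interface", type="hostdev", managed="yes")
    source = ET.SubElement(iface, "source")
    ET.SubElement(source, "address", type="pci",
                  domain=f"0x{domain}", bus=f"0x{bus}",
                  slot=f"0x{slot}", function=f"0x{function}")
    # libvirt applies these settings to the VF through the host PF driver.
    ET.SubElement(iface, "mac", address=mac)
    if vlan is not None:
        ET.SubElement(ET.SubElement(iface, "vlan"), "tag", id=str(vlan))
    return ET.tostring(iface, encoding="unicode")
```

The resulting element belongs in the `<devices>` section of the guest's domain XML.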

To summarize, by leveraging SR-IOV and the DPDK PMD we are able to provide wire speed from the guest to the NIC. However, this approach comes at a cost.

The solution described is vendor specific, meaning that it requires a match between the drivers running in the guest and the actual physical NIC. This implies, for example, that if the NIC firmware is upgraded, the guest application driver may need to be upgraded as well. If the NIC is replaced with a NIC from another vendor, the guest must use another PMD to drive it. Moreover, a VM can only be migrated to a host with the exact same configuration: the same NIC with the same version, in the same physical location, plus a vendor-specific solution tailored for migration.

So the question we want to address is how to provide SR-IOV wire speed to the VM while using a standard interface and, most importantly, a generic driver in the guest that decouples it from specific host configurations or NICs.

In the next two solutions we will show how virtio could be used to solve that problem.

Virtio full HW offloading

The first approach we present is virtio full HW offloading, where both the virtio data plane and the virtio control plane are offloaded to the HW. This implies that the physical NIC (while still using VFs to expose multiple virtual interfaces) supports the virtio control spec, including discovery, feature negotiation, establishing/terminating the data plane, and so on. The device also supports the virtio ring layout, so once the memory is mapped between the NIC and the guest they can communicate directly.

In this approach the guest can communicate directly with the NIC via PCI, so there is no need for any additional drivers in the host kernel. The approach, however, requires the NIC vendor to implement the virtio spec fully inside its NIC (each vendor with its own proprietary implementation), including the control plane, which is usually implemented in SW on the host OS but in this case must be implemented inside the NIC.
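With full HW offloading, the NIC itself has to implement the device side of the virtio control plane. The Python sketch below models the core of the virtio 1.x device initialization sequence: reset, set ACKNOWLEDGE and DRIVER, negotiate features, set FEATURES_OK and check that the device kept it, then set DRIVER_OK. The status and feature bit values come from the virtio specification; `FakeVirtioDevice` is a hypothetical stand-in for the NIC, its refusal of drivers that do not accept VIRTIO_F_VERSION_1 is an assumption of this model, and virtqueue setup is elided.

```python
# Device status bits from the virtio 1.x specification.
ACKNOWLEDGE, DRIVER, DRIVER_OK, FEATURES_OK, FAILED = 1, 2, 4, 8, 128

# A few feature bit numbers from the virtio specification.
VIRTIO_NET_F_CSUM = 0
VIRTIO_NET_F_MAC = 5
VIRTIO_NET_F_MRG_RXBUF = 15
VIRTIO_F_VERSION_1 = 32


def bit(n: int) -> int:
    return 1 << n


class FakeVirtioDevice:
    """Hypothetical device side, i.e. what a fully offloaded NIC implements."""

    def __init__(self, device_features: int):
        self.device_features = device_features
        self.driver_features = 0
        self.status = 0

    def write_status(self, value: int) -> None:
        if value == 0:  # writing 0 resets the device
            self.driver_features = self.status = 0
            return
        if value & FEATURES_OK:
            unknown = self.driver_features & ~self.device_features
            # Assumption of this model: refuse drivers that skip VERSION_1.
            legacy = (self.device_features & bit(VIRTIO_F_VERSION_1)
                      and not self.driver_features & bit(VIRTIO_F_VERSION_1))
            if unknown or legacy:
                value &= ~FEATURES_OK  # reject this feature set
        self.status = value


def driver_init(dev: FakeVirtioDevice, wanted: int):
    """Driver side of the initialization sequence; returns the agreed features."""
    dev.write_status(0)                               # reset
    dev.write_status(ACKNOWLEDGE)                     # device has been noticed
    dev.write_status(ACKNOWLEDGE | DRIVER)            # driver knows how to drive it
    dev.driver_features = dev.device_features & wanted
    dev.write_status(dev.status | FEATURES_OK)
    if not dev.status & FEATURES_OK:                  # device rejected the set
        dev.write_status(dev.status | FAILED)
        return None
    # ... virtqueue discovery and ring address setup would happen here ...
    dev.write_status(dev.status | DRIVER_OK)          # data plane may start
    return dev.driver_features
```

The intersection `device_features & wanted` is the heart of negotiation: the result is what both sides support. In the full HW offloading model, every one of these steps must be answered by the NIC itself.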

The following diagram shows the virtio full HW offloading architecture:

Note the following:

- In reality the control plane is more complicated and requires interactions with memory management units (IOMMU and vIOMMU), which will be described in the next technical deep-dive post.

- There are additional blocks in the host kernel, QEMU process and guest kernel which have been dropped in order to simplify the flow.

- There is also an option to move the virtio data plane and control plane into the guest kernel instead of the guest user space (as described for the SR-IOV case as well). This means using the virtio-net driver in the guest kernel to talk directly with the NIC, instead of the virtio-pmd in the guest userspace as shown above.

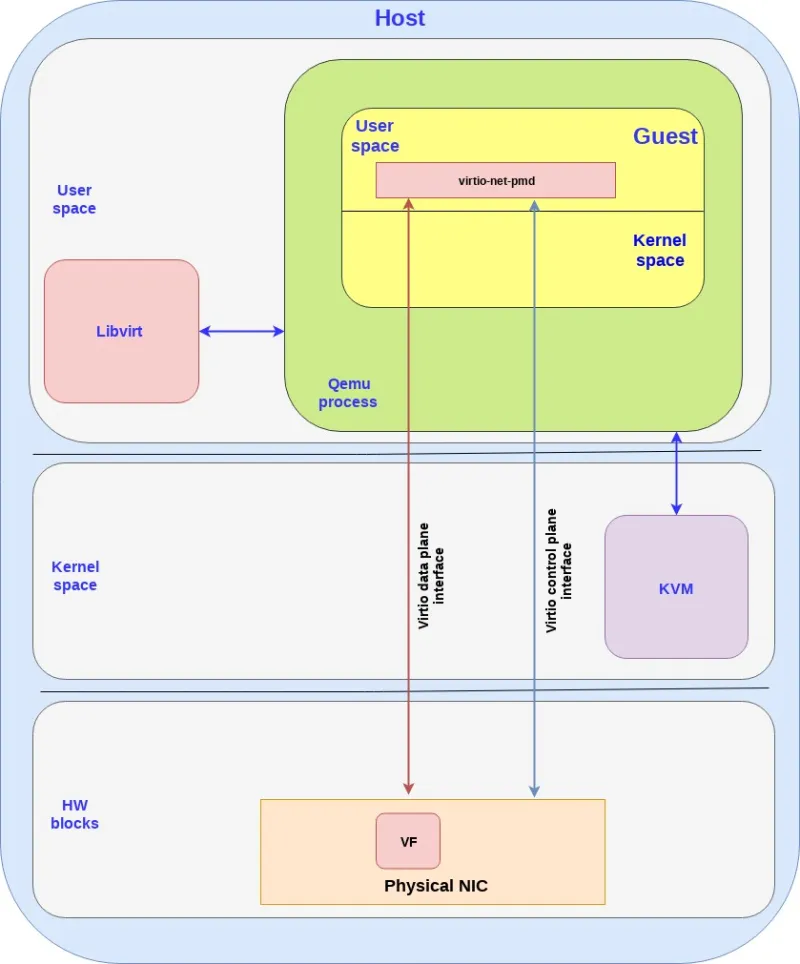

vDPA - standard data plane

Virtual data path acceleration (vDPA) is, in essence, an approach to standardize the NIC SR-IOV data plane using the virtio ring layout and to place a single standard virtio driver in the guest, decoupled from any vendor implementation, while adding a generic control plane and SW infrastructure to support it. Given that it’s an abstraction layer on top of SR-IOV, it is also future proof, ready to support emerging technologies such as Scalable IOV (see the relevant spec).

Similar to virtio full HW offloading, the data plane goes directly from the NIC to the guest using the virtio ring layout. However, each NIC vendor can now continue using its own driver (with a small vDPA add-on), and a generic vDPA driver is added to the kernel to translate the vendor NIC driver/control plane to the virtio control plane.

vDPA is a much more flexible approach than virtio full HW offloading, enabling NIC vendors to support the virtio ring layout with significantly less effort while still achieving wire-speed performance on the data plane.
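The split of work described above, a small vendor add-on below and a generic vDPA layer above, can be sketched as an interface. The Python sketch below is a simplified analogy: the operation names are borrowed from the Linux kernel's `struct vdpa_config_ops` (such as `set_vq_address`, `set_vq_num`, `set_vq_ready` and `get_device_features`), but the signatures are simplified and this is not a binding to the kernel API. `GenericVdpaFrontend` and `ToyVendorNic` are hypothetical.

```python
from abc import ABC, abstractmethod


class VdpaOps(ABC):
    """The small per-vendor 'vDPA add-on' (names loosely follow vdpa_config_ops)."""

    @abstractmethod
    def get_device_features(self) -> int: ...

    @abstractmethod
    def set_driver_features(self, features: int) -> None: ...

    @abstractmethod
    def set_vq_num(self, idx: int, num: int) -> None: ...

    @abstractmethod
    def set_vq_address(self, idx: int, desc: int, driver: int, device: int) -> None: ...

    @abstractmethod
    def set_vq_ready(self, idx: int, ready: bool) -> None: ...


class GenericVdpaFrontend:
    """Vendor-neutral layer turning virtio control-plane requests into ops calls."""

    def __init__(self, ops: VdpaOps):
        self.ops = ops

    def negotiate(self, guest_features: int) -> int:
        accepted = self.ops.get_device_features() & guest_features
        self.ops.set_driver_features(accepted)
        return accepted

    def start_queue(self, idx: int, num: int, desc: int, driver: int, device: int) -> None:
        # Only the ring's size and location cross the control plane; the ring
        # itself stays in guest memory and the NIC accesses it directly.
        self.ops.set_vq_num(idx, num)
        self.ops.set_vq_address(idx, desc, driver, device)
        self.ops.set_vq_ready(idx, True)


class ToyVendorNic(VdpaOps):
    """Hypothetical vendor driver that just records what it is told."""

    def __init__(self, features: int):
        self.features, self.accepted, self.vqs = features, 0, {}

    def get_device_features(self) -> int:
        return self.features

    def set_driver_features(self, features: int) -> None:
        self.accepted = features

    def set_vq_num(self, idx: int, num: int) -> None:
        self.vqs.setdefault(idx, {})["num"] = num

    def set_vq_address(self, idx: int, desc: int, driver: int, device: int) -> None:
        self.vqs.setdefault(idx, {}).update(desc=desc, driver=driver, device=device)

    def set_vq_ready(self, idx: int, ready: bool) -> None:
        self.vqs.setdefault(idx, {})["ready"] = ready
```

Swapping `ToyVendorNic` for another vendor's implementation leaves `GenericVdpaFrontend`, and therefore the guest's view, unchanged, which is the point of the design.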

The next diagram presents this approach:

Note the following:

- There are additional blocks in the host kernel, QEMU process and guest kernel which have been dropped in order to simplify the flow.

- As with SR-IOV and virtio full HW offloading, the data plane and control plane can terminate in the guest kernel rather than the guest user space (with the same pros and cons previously mentioned).

vDPA has the potential to be a powerful solution for providing wire-speed Ethernet interfaces to VMs:

- Open public specification: anyone can see, consume and be part of enhancing the specification (the virtio specification) without being locked into a specific vendor.

- Wire-speed performance: similar to SR-IOV, with no mediators or translators in between.

- Future proof for additional HW platform technologies: ready to also support Scalable IOV and similar platform-level enhancements.

- Single VM certification: a single standard generic guest driver, independent of the NIC vendor, means you can certify your VM consuming an acceleration interface only once, regardless of the NICs and versions used (both for your guest OS and for your container/userspace image).

- Transparent protection: the guest uses a single interface which is protected on the host side by two interfaces (a.k.a. backend protection). If, for example, the vDPA NIC is disconnected, the host kernel can identify this quickly and automatically switch the guest to another virtio interface, such as a simple vhost-net backend.

- Live migration: live migration is possible between NICs from different vendors and of different versions, since the ring layout in the guest is now standard.

- A standard accelerated interface for containers: this will be discussed in future posts.

- The bare-metal vision, a single generic NIC driver: looking forward, the virtio-net driver can be enabled as a bare-metal driver, using the vDPA SW infrastructure in the kernel so that a single generic NIC driver can drive different HW NICs (similar to, e.g., the NVMe driver for storage devices).
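Two of these benefits, transparent protection and cross-vendor live migration, come down to the same host-side question: can a given backend serve the features the guest driver has already negotiated? The Python sketch below is a hypothetical model of that check, not vDPA or QEMU code. It assumes that a backend is usable only if it is healthy and offers every negotiated feature bit, and that backends are tried in a fixed order of preference.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Backend:
    name: str          # label only, e.g. "vdpa" or "vhost-net"
    features: int      # virtio feature bits this backend offers
    healthy: bool = True


@dataclass
class GuestVirtioPort:
    """Hypothetical host-side view of ONE virtio-net interface in the guest."""
    negotiated: int                                         # bits the guest accepted
    backends: List[Backend] = field(default_factory=list)  # preference order

    def can_use(self, b: Backend) -> bool:
        # Which vendor's NIC sits behind the backend does not matter; only
        # whether it is up and offers everything the guest negotiated.
        return b.healthy and (self.negotiated & ~b.features) == 0

    def active(self) -> Backend:
        for b in self.backends:
            if self.can_use(b):
                return b
        raise RuntimeError("no usable backend for this guest interface")
```

The same `can_use` test applies to a migration destination: in this model, a NIC from a different vendor qualifies as long as it offers the negotiated features, because the guest only ever sees the standard virtio ring.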

Comparing virtio architectures

Summarizing what we have learned in the previous posts in this series, and in this post, we have covered four virtio architectures for providing Ethernet networks to VMs: vhost-net/virtio-net, vhost-user/virtio-pmd, virtio full HW offloading and vDPA.

Let’s compare the pros and cons of each of the approaches:

Summary

In this post we have completed the solution overview of the four virtio-networking architectures for providing Ethernet interfaces to VMs, ranging from slow (vhost-net) to faster (vhost-user) to wire speed (virtio full HW offloading and vDPA).

We’ve highlighted how vDPA provides a number of advantages over the other virtio solutions as well as over SR-IOV. We’ve also provided a comparison of all four virtio technologies. The next post will provide the details of the building blocks used for virtio full HW offloading and vDPA, followed by a hands-on post.

This concludes the last solution overview post focusing on VMs. In future solution posts we will move on to vDPA for containers and hybrid clouds, including on-prem, AWS and Alibaba bare-metal servers.

Prior posts / Resources

- Introducing virtio-networking: Combining virtualization and networking for modern IT

- Introduction to virtio-networking and vhost-net

- Deep dive into Virtio-networking and vhost-net

- Hands on vhost-net: Do. Or do not. There is no try

- How vhost-user came into being: Virtio-networking and DPDK

- A journey to the vhost-users realm

- Hands on vhost-user: A warm welcome to DPDK
