The Kubernetes documentation's considerations for performance and scalability state that Kubernetes supports up to 5000 nodes in a single cluster, where each node runs the Kubernetes agents. The OpenShift documentation for performance and scalability states a tested maximum of 2000 nodes, where each node runs the OpenShift agents. However, scaling and performance numbers depend on many factors, such as infrastructure configuration, platform limits, and workload size, so your mileage may vary from what these documents state. One such configuration detail is whether the OpenShift Networking provider is OpenShift SDN or OVN Kubernetes. This post describes how the OVN Kubernetes CNI has been enhanced to let OpenShift clusters scale to higher node counts.

We start by introducing OVN Kubernetes, its legacy architecture, and that architecture's limitations at scale. Then we look at the architectural changes to OVN Kubernetes in the OpenShift 4.14 release and how they improve cluster scaling, network performance, stability, and security for OpenShift users. These changes are completely transparent to users: the benefits take effect through normal OpenShift minor version upgrades.

What is OVN Kubernetes?

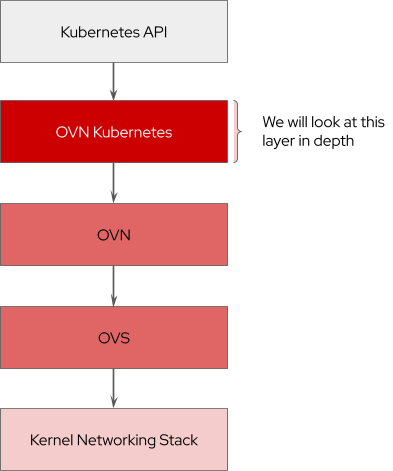

OVN Kubernetes (Open Virtual Networking - Kubernetes) is an open-source project that provides a robust networking solution for Kubernetes clusters, with OVN (Open Virtual Networking) and Open vSwitch (Open Virtual Switch) at its core. It is a conformant Kubernetes network plugin written to the CNI (Container Network Interface) specification. OVN Kubernetes is the default CNI plugin for Red Hat OpenShift Networking starting with the 4.12 release.

The OVN Kubernetes plugin watches the Kubernetes API. It responds to Kubernetes cluster events by creating and configuring the corresponding OVN logical constructs in the OVN database. OVN, an abstraction on top of Open vSwitch, converts these logical constructs into logical flows stored in its own database and then programs the corresponding OpenFlow flows on each node, which enables networking in the Kubernetes cluster.
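The watch-and-translate loop described above can be illustrated with a toy model in Go, the language OVN Kubernetes itself is written in. This is a sketch under stated assumptions, not the plugin's code: the types `PodEvent`, `LogicalSwitchPort`, and `nbDB` and the function `handlePodEvent` are hypothetical, the Northbound database is an in-memory map, and the naming scheme (one logical switch per node, ports named `<namespace>_<pod>`) is assumed for illustration.

```go
package main

import "fmt"

// PodEvent is a hypothetical, simplified stand-in for a Kubernetes pod watch event.
type PodEvent struct {
	Type      string // "ADDED" or "DELETED"
	Namespace string
	Name      string
	NodeName  string
}

// LogicalSwitchPort is a simplified, hypothetical model of a Northbound
// logical switch port row.
type LogicalSwitchPort struct {
	Switch string // assumed: one logical switch per node, named after the node
	Name   string // assumed naming scheme: "<namespace>_<pod>"
}

// nbDB is an in-memory stand-in for the OVN Northbound database.
type nbDB map[string]LogicalSwitchPort

// handlePodEvent translates one pod event into a Northbound change.
func handlePodEvent(db nbDB, ev PodEvent) {
	lsp := LogicalSwitchPort{Switch: ev.NodeName, Name: ev.Namespace + "_" + ev.Name}
	switch ev.Type {
	case "ADDED":
		db[lsp.Name] = lsp
	case "DELETED":
		delete(db, lsp.Name)
	}
}

func main() {
	db := nbDB{}
	handlePodEvent(db, PodEvent{"ADDED", "demo", "web-0", "worker-1"})
	handlePodEvent(db, PodEvent{"ADDED", "demo", "web-1", "worker-2"})
	handlePodEvent(db, PodEvent{"DELETED", "demo", "web-0", "worker-1"})
	fmt.Println(len(db), db["demo_web-1"].Switch) // prints "1 worker-2"
}
```

In the real system, OVN northd and ovn-controller would then turn such rows into logical flows and OpenFlow flows; the sketch stops at the Northbound step.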

The key functionalities and features that OVN Kubernetes provides include:

- Sets up pod networking, including IP Address Management (IPAM) allocation and a virtual Ethernet (veth) interface for each pod.

- Programs overlay-based networking for Kubernetes clusters using Generic Network Virtualization Encapsulation (GENEVE) tunnels, which enable pod-to-pod communication.

- Implements Kubernetes Services & EndpointSlices through OVN Load Balancers.

- Implements Kubernetes NetworkPolicies and AdminNetworkPolicies through OVN Access Control Lists (ACLs).

- Supports Multiple External Gateways (MEG), which allow multiple dynamically or statically assigned egress next-hop gateways by using OVN ECMP routing features.

- Implements Quality of Service (QoS) Differentiated Services Code Point (DSCP) marking for traffic leaving the cluster through OVN QoS.

- Provides the ability to send egress traffic from cluster workloads to destinations outside the cluster using an admin-configured source IP (EgressIP).

- Provides the ability to restrict egress traffic from cluster workloads (Egress Firewall) using OVN Access Control Lists.

- Provides the ability to offload networking tasks from the CPU to the NIC using OVS Hardware Offload, which increases data-plane performance.

- Supports IPv4/IPv6 Dual-Stack clusters.

- Implements Hybrid Networking to provide support for mixed Windows/Linux clusters using VXLAN tunnels.

- Provides IP Multicast using OVN IGMP snooping and relays.

OVN Kubernetes Legacy Architecture

Before we dive into the architectural changes in OVN Kubernetes, we need to understand the legacy architecture used in OpenShift 4.13 and earlier, and its limitations.

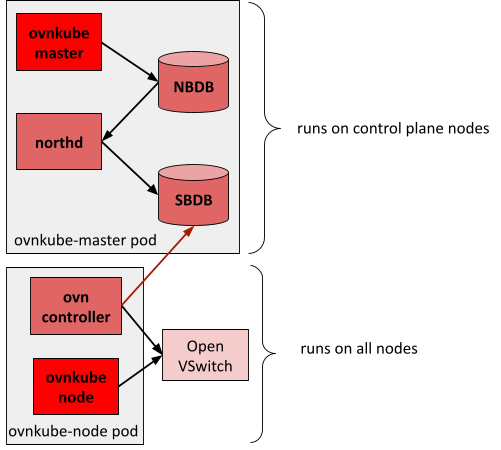

Control Plane Components

These components run only on the control plane nodes of a cluster, as containers in the ovnkube-master pod in the openshift-ovn-kubernetes namespace:

- ovnkube-master (OVN Kubernetes Master): This is the central brain of the OVN Kubernetes plugin. It watches the Kubernetes API and receives cluster events for Nodes, Namespaces, Pods, Services, EndpointSlices, NetworkPolicies, and other custom resources defined through CRDs (Custom Resource Definitions). It translates each cluster event into OVN logical elements such as Routers, Switches, Routes, Policies, Load Balancers, NATs, ACLs, PortGroups, and AddressSets, and inserts these logical elements into the leader of OVN's Northbound database. It is also responsible for creating the logical network topology for the cluster and for handling pod IPAM allocations, which it records as annotations on the Pod objects.

- nbdb (OVN Northbound database): This is an OVN native component that stores the logical entities created by OVN Kubernetes master.

- northd (OVN North daemon): This is an OVN native daemon that constantly watches the Northbound database and converts the logical entities into lower layer OVN logical flows. It inserts these logical flows into OVN’s Southbound database.

- sbdb (OVN Southbound database): This is an OVN native component that stores the logical flows created by OVN northd.

The nbdb and sbdb containers on the three control plane nodes each form a cluster that uses the RAFT consensus protocol. RAFT keeps the three database replicas consistent: at any given time one replica is the leader and the other two are followers.
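The three-replica layout follows from RAFT's majority rule: a write commits only once a strict majority of replicas has accepted it. The following minimal Go sketch is generic RAFT arithmetic, not OVN code, and the helper names `quorum` and `tolerableFailures` are hypothetical. It shows that three replicas need two to agree and can therefore survive the loss of one.

```go
package main

import "fmt"

// quorum returns how many replicas must acknowledge a write before a RAFT
// cluster of size n commits it: a strict majority.
func quorum(n int) int {
	return n/2 + 1
}

// tolerableFailures returns how many replicas can be lost while the rest can
// still form a majority, elect a leader, and keep committing writes.
func tolerableFailures(n int) int {
	return n - quorum(n)
}

func main() {
	for _, n := range []int{1, 3, 5} {
		fmt.Printf("replicas=%d quorum=%d tolerates=%d\n", n, quorum(n), tolerableFailures(n))
	}
	// replicas=1 quorum=1 tolerates=0
	// replicas=3 quorum=2 tolerates=1
	// replicas=5 quorum=3 tolerates=2
}
```

The same rule explains why losing consensus is so disruptive: if a majority cannot agree, the whole replicated database stops accepting changes.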

Per Node Components

These components run on all the nodes in a cluster. They are containers running in the ovnkube-node pod in the openshift-ovn-kubernetes namespace:

- ovnkube-node (OVN Kubernetes Node): This process runs on each node and is invoked as a CNI plugin by the kubelet/CRI-O components. It implements the CNI ADD and CNI DEL requests. It reads the IPAM annotation written by the OVN Kubernetes Master and creates the corresponding virtual Ethernet interface for each pod in the host networking stack. It also programs the custom OpenFlow flows and iptables rules required to implement Kubernetes Services on each node.

- ovn-controller (OVN Controller): This is an OVN native daemon that watches the logical flows in the OVN Southbound database, converts them into OpenFlow flows (physical flows), and programs them into the Open vSwitch instance running on each node.

Open vSwitch runs as a systemd service on each node. It is a programmable virtual switch that implements the OpenFlow specification and enables networking through software-defined concepts.
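To illustrate the handoff from ovnkube-master to ovnkube-node, the following Go sketch decodes a pod IPAM annotation. It assumes the annotation key `k8s.ovn.org/pod-networks` and a simplified JSON shape with `ip_addresses`, `mac_address`, and `gateway_ips` fields; the real annotation may carry additional fields, and the `podNetwork` type and `parsePodNetworks` function are hypothetical. Creating the veth pair itself is out of scope here.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// podNetwork is an assumed, simplified shape of one entry in the pod's IPAM
// annotation; the real schema may contain more fields.
type podNetwork struct {
	IPAddresses []string `json:"ip_addresses"`
	MACAddress  string   `json:"mac_address"`
	GatewayIPs  []string `json:"gateway_ips"`
}

// parsePodNetworks decodes the annotation value into a map keyed by network
// name ("default" for the cluster network in this sketch).
func parsePodNetworks(value string) (map[string]podNetwork, error) {
	var nets map[string]podNetwork
	if err := json.Unmarshal([]byte(value), &nets); err != nil {
		return nil, fmt.Errorf("invalid pod-networks annotation: %w", err)
	}
	return nets, nil
}

func main() {
	// Hypothetical annotation as the control plane might have written it.
	annotations := map[string]string{
		"k8s.ovn.org/pod-networks": `{"default":{"ip_addresses":["10.128.2.5/23"],"mac_address":"0a:58:0a:80:02:05","gateway_ips":["10.128.2.1"]}}`,
	}
	nets, err := parsePodNetworks(annotations["k8s.ovn.org/pod-networks"])
	if err != nil {
		panic(err)
	}
	def := nets["default"]
	// The node side would now configure the pod's interface with these values.
	fmt.Println(def.IPAddresses[0], def.MACAddress, def.GatewayIPs[0])
	// prints "10.128.2.5/23 0a:58:0a:80:02:05 10.128.2.1"
}
```

Splitting the work this way means the node never allocates addresses itself; it only consumes what the control plane decided.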

Limitations at Scale

This architecture had several challenges:

- Instability: The OVN Northbound and Southbound databases are central to the entire cluster, and their replicas are kept consistent by the RAFT consensus protocol. This has caused many split-brain issues in the past, where the database replicas lose consensus and diverge over time, especially at scale. These issues cannot be solved through workarounds and often require rebuilding the OVN databases from scratch. The impact is not isolated: it brings the entire cluster's networking control plane to a halt. This has been a particularly painful problem during upgrades.

- Scale bottlenecks: As shown in the diagram above, each ovn-controller connects to the centralized Southbound database for logical flow information. In a large cluster with N nodes, this means each of the three Southbound database replicas handles roughly N/3 connections from ovn-controllers (in HA mode). This has caused scaling issues in the past, where the centralized Southbound databases could no longer keep up with serving data to the ovn-controllers in large clusters.

- Performance bottlenecks: The OVN Kubernetes brain, which is central to the entire cluster, stores and processes changes to all Kubernetes Pod, Service, and Endpoint objects across all nodes. In turn, each ovn-controller watches the global Southbound database for changes from all nodes, which leads to higher operational latency and high resource consumption on the control plane nodes.

- Non-restrictable infrastructure traffic: Because ovn-controllers talk to the central Southbound databases, infrastructure network traffic flows from each worker node to the control plane nodes. With HyperShift, where the hosted cluster's control plane runs on a management cluster, this traffic crosses cluster boundaries in a way that cannot easily be restricted.

OVN Kubernetes Interconnect Architecture

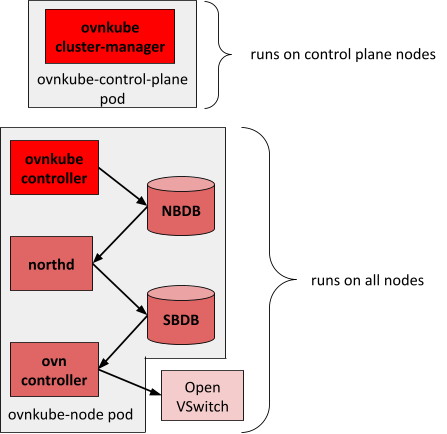

To solve these challenges, OpenShift 4.14 introduces a new OVN Kubernetes architecture, called interconnect, that pushes most of the control plane complexity down to each node in the cluster.

Control Plane Components

The control plane is very lightweight: a single container called ovnkube-cluster-manager (OVN Kubernetes ClusterManager) runs in the ovnkube-control-plane pod in the openshift-ovn-kubernetes namespace. This is the central brain of the OVN Kubernetes plugin; it watches the Kubernetes API server and receives Kubernetes Node events. Its main responsibility is to allocate a non-overlapping pod subnet to every node in the cluster. It has no knowledge of the underlying OVN stack.
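Allocating non-overlapping node subnets amounts to carving fixed-size blocks out of the cluster network. The following Go sketch uses only the standard library's `net/netip` package; the `subnetAllocator` type and its methods are hypothetical and do not describe the ClusterManager's actual allocator. The example values mirror OpenShift's default install-time `clusterNetwork` (10.128.0.0/14 with a `hostPrefix` of 23). A production allocator would also need to handle IPv6, dual-stack, and releasing subnets of deleted nodes, none of which is modeled here.

```go
package main

import (
	"encoding/binary"
	"errors"
	"fmt"
	"net/netip"
)

// subnetAllocator hands out consecutive, non-overlapping IPv4 node subnets of
// a fixed size from one cluster CIDR. Hypothetical sketch only.
type subnetAllocator struct {
	cluster   netip.Prefix            // e.g. 10.128.0.0/14
	hostBits  int                     // prefix length of each node subnet, e.g. 23
	next      uint64                  // index of the next unused node subnet
	allocated map[string]netip.Prefix // node name -> assigned subnet
}

func newSubnetAllocator(cidr string, hostPrefix int) (*subnetAllocator, error) {
	p, err := netip.ParsePrefix(cidr)
	if err != nil {
		return nil, err
	}
	if !p.Addr().Is4() || hostPrefix < p.Bits() || hostPrefix > 32 {
		return nil, errors.New("only IPv4 with hostPrefix between the CIDR length and 32 is supported")
	}
	return &subnetAllocator{cluster: p.Masked(), hostBits: hostPrefix, allocated: map[string]netip.Prefix{}}, nil
}

// Allocate returns the node's subnet, assigning the next free one on first
// use, so repeated calls for the same node return the same subnet.
func (a *subnetAllocator) Allocate(node string) (netip.Prefix, error) {
	if s, ok := a.allocated[node]; ok {
		return s, nil
	}
	total := uint64(1) << (a.hostBits - a.cluster.Bits()) // number of node subnets
	if a.next >= total {
		return netip.Prefix{}, errors.New("cluster network exhausted")
	}
	base := a.cluster.Addr().As4()
	start := binary.BigEndian.Uint32(base[:]) + uint32(a.next)<<(32-a.hostBits)
	var b [4]byte
	binary.BigEndian.PutUint32(b[:], start)
	s := netip.PrefixFrom(netip.AddrFrom4(b), a.hostBits)
	a.allocated[node] = s
	a.next++
	return s, nil
}

func main() {
	alloc, err := newSubnetAllocator("10.128.0.0/14", 23)
	if err != nil {
		panic(err)
	}
	for _, node := range []string{"worker-0", "worker-1", "worker-2", "worker-0"} {
		s, _ := alloc.Allocate(node)
		fmt.Println(node, s)
	}
	// worker-0 10.128.0.0/23, worker-1 10.128.2.0/23,
	// worker-2 10.128.4.0/23, then worker-0 10.128.0.0/23 again
}
```

With these defaults, each node receives a /23, so the /14 cluster network has room for 2^(23-14) = 512 node subnets.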

Per Node Components

The data plane is heavier: each node runs its own copy of the local OVN Kubernetes brain, called ovnkube-controller (OVN Kubernetes Controller), together with the OVN databases, the OVN North daemon, and the OVN Controller, all as containers in the ovnkube-node pod in the openshift-ovn-kubernetes namespace. The OVN Kubernetes Controller combines the legacy OVN Kubernetes Master and OVN Kubernetes Node containers. This local brain watches for Kubernetes events that are relevant to its node and creates the necessary entities in the local OVN Northbound database. The local OVN North daemon converts these constructs into logical flows and stores them in the local OVN Southbound database. The OVN Controller connects to the local Southbound database and programs the OpenFlow flows into Open vSwitch.
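Scoping the local brain to "events relevant to this node" can be sketched as a partition of pods into local and remote sets. In this hypothetical Go sketch (the `Pod` type and `splitForNode` function are assumptions for illustration, not OVN Kubernetes APIs), local pods receive full processing, such as creating their logical switch ports, while remote pods contribute only their IP addresses, the kind of data a NetworkPolicy address set still needs.

```go
package main

import "fmt"

// Pod is a minimal, hypothetical view of a pod: its name, node, and IP.
type Pod struct {
	Name     string
	NodeName string
	IP       string
}

// splitForNode partitions pods for one node's controller: pods scheduled on
// this node are returned for full processing, while pods on other nodes
// contribute only their IPs (for example, to policy address sets).
func splitForNode(node string, pods []Pod) (local []Pod, remoteIPs []string) {
	for _, p := range pods {
		if p.NodeName == node {
			local = append(local, p)
		} else {
			remoteIPs = append(remoteIPs, p.IP)
		}
	}
	return local, remoteIPs
}

func main() {
	pods := []Pod{
		{"web-0", "worker-1", "10.128.2.5"},
		{"web-1", "worker-2", "10.128.4.7"},
		{"db-0", "worker-1", "10.128.2.9"},
	}
	local, remote := splitForNode("worker-1", pods)
	fmt.Println(len(local), remote) // prints "2 [10.128.4.7]"
}
```

Keeping remote-pod handling this thin is what lets each node's OVN stack process far less data than the old cluster-wide brain.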

Improvements

- Stability: The OVN Northbound and Southbound databases are local to each node. Because they run in standalone mode, RAFT is no longer needed, which avoids the "split-brain" issues entirely. If one of the databases goes down, the impact is isolated to that node. This has improved the stability of the OVN Kubernetes stack and simplified the resolution of customer escalations.

- Scale: As shown in the diagram above, each ovn-controller connects to its local Southbound database for logical flow information. In a large cluster with N nodes, each Southbound database therefore handles only one connection, from its own local ovn-controller. This removes the scale bottlenecks of the centralized model and lets clusters scale horizontally with node count.

- Performance: The OVN Kubernetes brain is now local to each node in the cluster, and it stores and processes changes only to the Kubernetes Pod, Service, and Endpoint objects that are relevant to that node (note: some features, such as NetworkPolicies, still need to process pods running on other nodes). In turn, the OVN stack processes less data, which improves operational latency. Another benefit is that the control plane stack is now lighter.

- Security: Because the infrastructure network traffic between ovn-controller and the OVN Southbound database is now contained within each node, overall cross-node (OpenShift) and cross-cluster (HostedControlPlane) chatter decreases, which improves traffic security.
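A back-of-the-envelope comparison makes the scale difference concrete. The following Go sketch assumes that, in the legacy model, ovn-controller clients spread evenly across three Southbound replicas; the function `sbConnectionsPerDB` is hypothetical, and the node counts are illustrative inputs, not measurements.

```go
package main

import "fmt"

// sbConnectionsPerDB estimates how many ovn-controller clients each Southbound
// database instance serves. Assumption: in the legacy model, clients spread
// evenly across the three centralized RAFT replicas.
func sbConnectionsPerDB(nodes int, interconnect bool) int {
	if interconnect {
		return 1 // only the node's own ovn-controller connects
	}
	const replicas = 3
	return (nodes + replicas - 1) / replicas // ceil(N/3)
}

func main() {
	for _, n := range []int{120, 500, 2000} {
		fmt.Printf("nodes=%d legacy=%d interconnect=%d\n",
			n, sbConnectionsPerDB(n, false), sbConnectionsPerDB(n, true))
	}
}
```

In the legacy model, the per-database load grows linearly with the node count; in the interconnect model it stays constant, because each added node brings its own Southbound database.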

Readers might wonder whether, since the complexity has been pushed from the control plane to the data plane, they need to configure extra resources on their worker nodes. Our extensive scale testing so far has not indicated a need for any such drastic measures. However, as mentioned at the beginning of this post, no one size fits all.

Drawbacks

Because there is now a local OVN Kubernetes brain per node instead of a centralized brain, the load on the Kubernetes API server is similar to that of the legacy OpenShift SDN network plugin, and higher than that of the legacy OVN Kubernetes architecture in OpenShift 4.13 and earlier.

The new architecture places separate OVN Kubernetes controllers and OVN databases on each node, so one potential challenge is troubleshooting whether the entities created in each of the separate OVN databases are correct for a given feature, especially at scale.

Summary

Starting with the OpenShift 4.14 release, OVN Kubernetes changed from a centralized to a distributed architecture. This has improved cluster scaling, network performance, stability, and security in clusters running the OVN Kubernetes CNI. We welcome your feedback on how your clusters perform with this new architecture.
