Have you ever wondered about container performance, and which containers might be using more resources than others? In this post, we’ll use Performance Co-Pilot (PCP) and pmda-podman on Red Hat Enterprise Linux 8 Beta to find out.

What will we look at?

In this post, we will create a MediaWiki setup on RHEL 8 Beta, consisting of:

- A container with PostgreSQL
- A container with Apache HTTP, PHP and the MediaWiki code

MediaWiki setups on a single system are quite common. After initial deployment, such setups get used more and more, and eventually one of the containers may need to be migrated to a second system with more resources.

Obviously, the container consuming more resources should be migrated to the system with more resources, but which of the two containers is that? Performance Co-Pilot (PCP), with its component pmda-podman, is part of RHEL 8, so we will use it to compare the resource consumption of both containers.

As you might be aware, Red Hat offers many application containers directly via the Red Hat Container Catalog. Rather than using these, we will containerize the applications ourselves. There are also custom applications for which no image exists, and the steps below can help to containerize them.

Containerizing PostgreSQL

For this post, I have decided to create containers with PostgreSQL and Apache/PHP to familiarize myself with the tools. I will not explain each of these steps in depth, as the main goal of this post is to illustrate pmda-podman usage. As a first step, we have to install the tools required for containerization on our RHEL 8 system:

# yum install buildah podman

Once RHEL 8 is released, one will have to log in with RHN user credentials, like this:

# podman login registry.redhat.io

We will now use buildah to fetch a container image (afterwards I will just call it ‘image’) of rhel8-beta and make it locally available:

# container=$(buildah from registry.access.redhat.com/rhel8-beta)
# echo $container
rhel8-beta-working-container

My RHEL 8 system was installed using HTTP from a repository available via local network. As part of the installation, file /etc/yum.repos.d/base.repo was deployed on the system, which contains details so the system can access the repo to install further packages. With the next commands we will copy the repo file into the newly created container, install PostgreSQL and tools, and update the packages in the container.

# buildah copy $container /etc/yum.repos.d/base.repo \
    /etc/yum.repos.d/base.repo
# buildah run $container /bin/bash
# yum -y install postgresql-server tmux psmisc nc
# yum -y update
# yum clean all

Now we have to initialize the PostgreSQL database files. The script normally used for this relies on systemctl, which we cannot use in this container due to the way it was created. By contrast, a number of Red Hat's official containers do use systemd.

We create a copy which we will modify, replacing the places where systemctl is used with the actual output of systemctl on the container host.

# cp /usr/bin/postgresql-setup /usr/bin/postgresql-setup2
# sed -i 's,`systemctl show -p.*,NeedDaemonReload=no",' /usr/bin/postgresql-setup2
# sed -i 's,systemd_env="$(systemctl show -p Environment.*,systemd_env="Environment=PG_OOM_ADJUST_FILE=/proc/self/oom_score_adj PG_OOM_ADJUST_VALUE=0 PGDATA=/var/lib/pgsql/data" \\,' /usr/bin/postgresql-setup2
# sed -i 's,envfiles="$(systemctl show -p EnvironmentFiles.*,envfiles="" \\,' /usr/bin/postgresql-setup2

Now we can initialize the database files as user postgres, and then configure PostgreSQL to accept connections over the network.

# su - postgres
$ /usr/bin/postgresql-setup2 --initdb
$ exit    # exit user postgres
# sed -i 's,^host,#host,' /var/lib/pgsql/data/pg_hba.conf
# echo 'host all all all md5' >>/var/lib/pgsql/data/pg_hba.conf
# echo "listen_addresses = '*'" >>/var/lib/pgsql/data/postgresql.conf
# exit    # exit container, back on rhel8
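The two pg_hba.conf edits above can be tried on a scratch file before touching a real data directory. The following is a minimal sketch: the helper name open_pg_hba and the sample rules in the scratch file are assumptions made for illustration, and GNU sed (as shipped with RHEL) is assumed for the -i option.

```shell
# open_pg_hba is a hypothetical helper name, not a PostgreSQL tool.
# It applies the same two edits as above to whatever file it is given.
open_pg_hba() {
    local hba_file="$1"
    # Comment out every existing rule that starts with "host"
    sed -i 's,^host,#host,' "$hba_file"
    # Append one rule: any database, any user, any address, md5 password auth
    echo 'host all all all md5' >>"$hba_file"
}

# Scratch file with made-up default rules, for demonstration only
demo_hba=$(mktemp)
cat >"$demo_hba" <<'EOF'
local   all   all                  peer
host    all   all   127.0.0.1/32   ident
host    all   all   ::1/128        ident
EOF

open_pg_hba "$demo_hba"
cat "$demo_hba"
```

The local rule is left untouched, the two original host rules end up commented out, and the single md5 rule is the only active host rule afterwards.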

Now we configure the command the container should use to start the database later, and create a new image which includes the changes which we just performed.

# buildah config --cmd \
    "su - postgres -c '/usr/bin/postmaster -D /var/lib/pgsql/data'" $container
# buildah commit $container localhost/postgres-test

Now we can start a container from the newly created image postgres-test, access the container with a bash shell, and create a database user and the database itself.

# podman run -p 5432:5432 --name psql --hostname psql -d postgres-test
# podman exec -it psql bash
# su - postgres
$ createuser -S -D -R -P -E wikiuser    # pass wikiuser
$ createdb -O wikiuser wikidb
$ exit    # exit user postgres
# exit    # exit container bash

Our PostgreSQL is now available on TCP port 5432 of the host system. The database can also be accessed with the psql client from the container host after installing package postgresql:

$ psql -h 127.0.0.1 -W wikidb wikiuser

Containerizing Apache/PHP and MediaWiki

Now let’s set up the other container, and install the required packages.

# container=$(buildah from registry.access.redhat.com/rhel8-beta)
# echo $container
rhel8-beta-working-container-1
# buildah copy $container /etc/yum.repos.d/base.repo /etc/yum.repos.d/base.repo
# buildah run $container -- /usr/bin/bash
# yum install -y php wget less procps-ng lsof psmisc php-cli php-common \
    php-fpm php-gd php-gmp php-intl php-json php-mbstring php-pear php-process \
    php-xml httpd mod_ssl php-pgsql tmux openssl php-opcache

At this point our container image does not have the complete files of the tzdata package available. This issue has been reported as bz1668185. As long as this is unfixed, we need to either reinstall the tzdata package, or update to a newer version.

# yum update -y tzdata

Let’s now deploy MediaWiki (the self-signed HTTPS certs are created in a later step):

# cd /tmp
# wget https://releases.wikimedia.org/mediawiki/1.32/mediawiki-1.32.0.tar.gz
# cd /var/www/html
# tar xfv /tmp/mediawiki-1.32.0.tar.gz
# exit

We will now commit our container into image apache-test, and start a new container from the image.

# buildah commit $container localhost/apache-test
# podman run -p 80:80 -p 443:443 -it --name apache \
    --hostname apache apache-test /usr/bin/bash

We cannot use systemctl inside the container to start Apache, so /usr/libexec/httpd-ssl-gencerts is not run either. On a normal RHEL 8 system, this script would automatically generate self-signed HTTPS certs for us. We could now run the script manually, or generate the certs as follows:

# openssl req -new -newkey rsa:4096 > new.cert.csr
# openssl rsa -in privkey.pem -out new.cert.key
# openssl x509 -in new.cert.csr -out /etc/pki/tls/certs/localhost.crt \
    -req -signkey new.cert.key -days 730
# cp new.cert.key /etc/pki/tls/private/localhost.key
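The three openssl steps above prompt interactively for a passphrase and the certificate subject. As a non-interactive alternative (a different approach from the one used in this post, not a transcript of it), a key and self-signed cert can be produced in a single openssl req -x509 call. In this sketch the helper name make_selfsigned and the subject CN=localhost are assumptions; the 730 days match the command above.

```shell
# make_selfsigned is a hypothetical helper name.
# Arguments: key file, cert file, optional RSA key size (default 4096 as above).
make_selfsigned() {
    local key_file="$1" crt_file="$2" bits="${3:-4096}"
    # -x509 emits a self-signed cert instead of a CSR,
    # -nodes leaves the private key unencrypted so httpd can start unattended,
    # -subj supplies the subject (assumed value) so nothing is prompted for.
    openssl req -x509 -newkey "rsa:${bits}" -nodes \
        -keyout "$key_file" -out "$crt_file" \
        -days 730 -subj "/CN=localhost" 2>/dev/null
}

# Demonstration in a scratch directory; 2048 bits only to keep the demo quick
cert_dir=$(mktemp -d)
make_selfsigned "$cert_dir/localhost.key" "$cert_dir/localhost.crt" 2048
openssl x509 -in "$cert_dir/localhost.crt" -noout -subject -enddate
```

Inside the container, the two output paths would be /etc/pki/tls/private/localhost.key and /etc/pki/tls/certs/localhost.crt, as in the commands above.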

We will now run httpd without daemonizing, and then start php-fpm. Both will occupy our terminal, so we will use tmux, a modern replacement for screen, to manage the two windows. Alternatively, multiple shells could be started via podman exec -it apache bash.

# tmux
# /usr/sbin/httpd -DFOREGROUND

Apache httpd is now running in the foreground. We can press Ctrl+b and then c to create a new tmux window. From there, we will run php-fpm:

# mkdir /run/php-fpm
# /usr/sbin/php-fpm --nodaemonize

Podman has at this point already set up everything so we can access port 80 and port 443. In my case, RHEL 8 runs as a KVM guest. Podman did configure iptables so network requests from the outside to the KVM guest will be redirected to the service in the container: so with a browser running on the hypervisor, I can access http://<ip-of-rhel8-guest>:80 and https://<ip-of-rhel8-guest>:443 .

With this, we can access the MediaWiki URL, in my case: https://192.168.4.62/mediawiki-1.32.0/ .

We can now complete the MediaWiki setup. For the apache-container to access the PostgreSQL database, we have to find out the IP of the psql container using the following command:

# podman inspect psql | grep 10.
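The grep above can match more than the address line, because the pattern is a regular expression in which the dot matches any character. A slightly stricter filter is sketched below. It assumes the inspect output is JSON containing an "IPAddress" key; the helper name first_ip_address and the sample JSON excerpt are made up for illustration.

```shell
# first_ip_address is a hypothetical filter: it reads inspect-style JSON on
# stdin and prints the first IPv4 address stored under an "IPAddress" key.
first_ip_address() {
    grep -oE '"IPAddress": *"[0-9]+(\.[0-9]+){3}"' \
        | head -n 1 \
        | grep -oE '[0-9]+(\.[0-9]+){3}'
}

# Made-up excerpt of inspect output, for demonstration only
sample_json='{
    "NetworkSettings": {
        "Gateway": "10.88.0.1",
        "IPAddress": "10.88.0.5",
        "MacAddress": "0a:58:0a:58:00:05"
    }
}'

container_ip=$(printf '%s\n' "$sample_json" | first_ip_address)
echo "$container_ip"
```

Against the real container this would be used as podman inspect psql | first_ip_address. The gateway line is ignored because only the "IPAddress" key is matched.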

The database name is wikidb and the database user is wikiuser, as we configured earlier. With these details, we can complete the MediaWiki setup and download the LocalSettings.php file, which we then copy into the apache container:

# apachemnt=$(podman mount apache)
# cp LocalSettings.php $apachemnt/var/www/html/mediawiki-*/

From the installer page in the browser, we can now access the configured MediaWiki.

Setting up PCP with pmda-podman

Now that both containers are ready, let’s set up pmda-podman on the RHEL 8 host. Our first step is to install the required packages. We install pcp-zeroconf as a quick way to get PCP configured on this system; it will automatically start pmcd for us. After installation of the pcp-pmda-podman package, we will then install the pmda:

# yum -y install pcp-pmda-podman pcp-system-tools pcp-zeroconf
# cd /var/lib/pcp/pmdas/podman/
# ./Install

The next step is to verify the pmda-podman setup. No output means that no issues were found:

# pcp verify --containers

If this went well, and produced no output, we can use the next command to retrieve container-related metrics from PCP. We will ask for the name of the currently usable containers on the system, and whether they are running or not. I am abbreviating the output for better readability:

[root@rhel8a ~]# pminfo --fetch containers.name containers.state.running

containers.name
    inst [0 or "ac..9d"] value "rhel8-beta-working-container"
    inst [1 or "9c..3c"] value "psql"
    inst [2 or "5d..39"] value "rhel8-beta-working-container-1"
    inst [3 or "ae..da"] value "rhel8-beta-working-container-2"
    inst [4 or "49..ce"] value "apache"

containers.state.running
    inst [0 or "ac..9d"] value 0
    inst [1 or "9c..3c"] value 1
    inst [2 or "5d..39"] value 0
    inst [3 or "ae..da"] value 0
    inst [4 or "49..ce"] value 1
[root@rhel8a ~]#
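With more than a handful of containers, matching instance numbers between the two metrics by eye gets tedious. The following sketch joins them with awk and prints only the names of running containers. The filter name running_containers is hypothetical, and the sample input is the abbreviated output shown above; it assumes containers.name is listed before containers.state.running, as in that command.

```shell
# running_containers is a hypothetical filter for the output of
# "pminfo --fetch containers.name containers.state.running" on stdin.
running_containers() {
    awk '
        /^containers\./ { metric = $1; next }   # remember the current metric block
        $1 == "inst" {
            id = $2; gsub(/\[/, "", id)         # "[1" -> "1", the instance number
            val = $NF                           # last field holds the value
            if (metric == "containers.name") {
                gsub(/"/, "", val)              # strip the quotes around the name
                name[id] = val
            } else if (metric == "containers.state.running" && val == "1") {
                print name[id]
            }
        }
    '
}

# Abbreviated output from above, used as sample input
sample_output='containers.name
    inst [0 or "ac..9d"] value "rhel8-beta-working-container"
    inst [1 or "9c..3c"] value "psql"
    inst [4 or "49..ce"] value "apache"

containers.state.running
    inst [0 or "ac..9d"] value 0
    inst [1 or "9c..3c"] value 1
    inst [4 or "49..ce"] value 1'

running=$(printf '%s\n' "$sample_output" | running_containers)
echo "$running"
```

On the host, the same filter would be fed directly from the pminfo command instead of the sample text.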

From this output we see that containers named psql and apache are running on this system. Using

[root@rhel8a ~]# pminfo -t --container psql cgroup

we can see all the cgroup metrics which are available for this container. Among these are the CPUs and NUMA zones assigned to the container, memory usage and memory size, I/O stats and much more. Here is an excerpt from the output:

cgroup.cpuacct.stat.user [Time spent by tasks of the cgroup in user mode]
cgroup.cpuacct.stat.system [Time spent by tasks of the cgroup in kernel mode]
cgroup.memory.usage [Current physical memory accounted to each cgroup]
cgroup.memory.stat.pgpgin [Number of charging events to the memory cgroup]
cgroup.memory.stat.pgpgout [Number of uncharging events to the memory cgroup]
cgroup.memory.stat.pgfault [Total number of page faults]
cgroup.blkio.all.sectors [Per-cgroup total (read+write) sectors]
cgroup.blkio.all.time [Per-device, per-cgroup total (read+write) time]

Comparing container resource consumption

Now, with pmda-podman in place, we can look at the details of our two containers and compare them. The following command will continuously monitor the current CPU, memory and I/O metrics of the psql container:

[root@rhel8a ~]# pmrep --container psql cgroup.cpuacct.stat.user \
    cgroup.cpuacct.stat.system cgroup.cpuacct.usage \
    cgroup.memory.stat.pgpgin cgroup.memory.stat.pgpgout \
    cgroup.memory.stat.pgfault cgroup.blkio.all.sectors \
    cgroup.blkio.all.time

In a second terminal, we can run the same command for container apache. With this in place, we can put our workload on mediawiki and compare which of the containers uses more resources.
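Watching two terminals side by side works for a quick look; for a comparison over a longer run it helps to reduce each capture to one number per metric. The sketch below averages every column of a CSV file. It assumes a simplified layout (one header row, a timestamp in the first column, no quoted commas), and the helper name column_means, the column names and the sample values are all made up for illustration.

```shell
# column_means is a hypothetical helper: it prints "<column name> <mean>"
# for every column after the first (timestamp) one. Empty cells are skipped.
column_means() {
    awk -F, '
        NR == 1 { for (i = 2; i <= NF; i++) header[i] = $i; cols = NF; next }
        {
            for (i = 2; i <= cols; i++)
                if ($i != "") { sum[i] += $i; count[i]++ }
        }
        END {
            for (i = 2; i <= cols; i++)
                if (count[i] > 0) printf "%s %.2f\n", header[i], sum[i] / count[i]
        }
    ' "$1"
}

# Made-up sample: two metrics over three intervals
demo_csv=$(mktemp)
cat >"$demo_csv" <<'EOF'
Time,cpu.user,cpu.system
12:00:01,10,2
12:00:02,20,4
12:00:03,30,6
EOF

column_means "$demo_csv"
```

pmrep can write CSV itself via its -o csv and -F options, so one file per container could be captured and passed to this helper; the real header layout may differ from the simplified sample and would need checking first.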

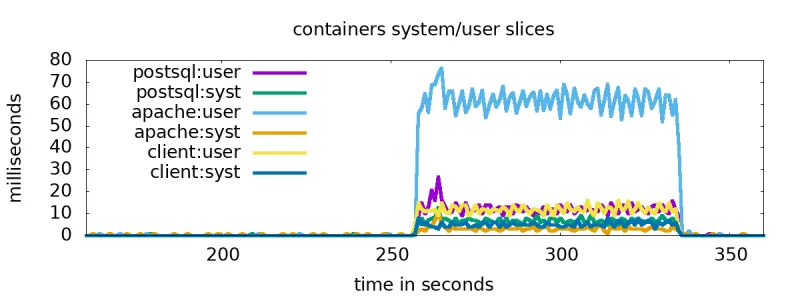

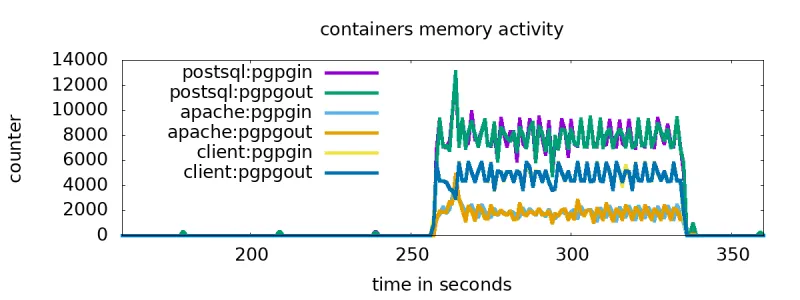

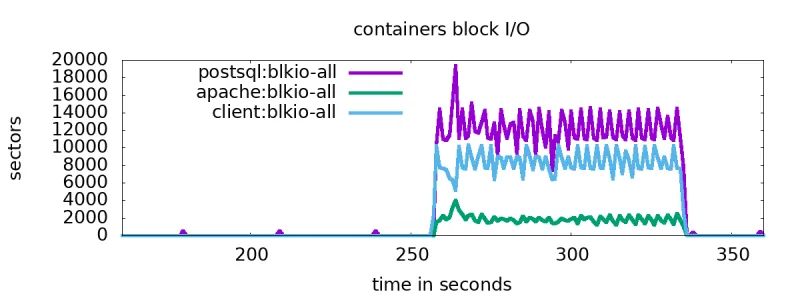

I created a further container client, and used it to run a loop which constantly modified a page in the wiki. I then used the output of the above command for the containers to create graphs with gnuplot. Let’s look at the results:

Here we see the user and system load of the three containers. The script doing modifications starts around second 260 and runs until second 340. The apache container is the top consumer, and our client and database containers are loadwise on the same level.

Slightly different picture for memory activity: the database does most memory accesses.

As for I/O: the database does most I/O. Even the client does more I/O than the apache container, it is not running a daemon but calling curl in a loop. So considering that for a growing MediaWiki instance the CPU is the limiting factor, the apache/php container would be the best candidate to be migrated to a stronger system.

Conclusion

We have looked at basic containerization of two applications, and used pmda-podman to compare resources consumed by three containers. More information about pmda-podman can be found here.

About the author

Christian Horn is a Senior Technical Account Manager at Red Hat. After working with customers and partners since 2011 at Red Hat Germany, he moved to Japan, focusing on mission critical environments. Virtualization, debugging, performance monitoring and tuning are among the returning topics of his daily work. He also enjoys diving into new technical topics, and sharing the findings via documentation, presentations or articles.
