Modern business depends on applications. Across industries, organizations use applications to create differentiated services, interact with customers, and connect partners and employees.

Even so, traditional business models can’t keep up with the pace of change brought on by cloud-native technologies and modern application development approaches. These technologies and methodologies help organizations speed innovation and improve agility, giving them a competitive advantage.

As a result, it’s not a question of whether to digitally transform and modernize, but a question of when. For most organizations, creating innovative, differentiated digital services and customer experiences means shifting to a more responsive, agile IT culture. Fast-changing customer and market demands necessitate rapid, flexible application development and delivery models.

However, most organizations still need to maintain at least some of their current infrastructure and processes to make the most of their existing investments. They cannot simply start over and completely rebuild their technology foundation and organizational practices. Cloud-native transformation takes time, and most organizations approach it as an ongoing, iterative journey that incorporates shifts in technology, processes, and culture.

Start with the right technology foundation

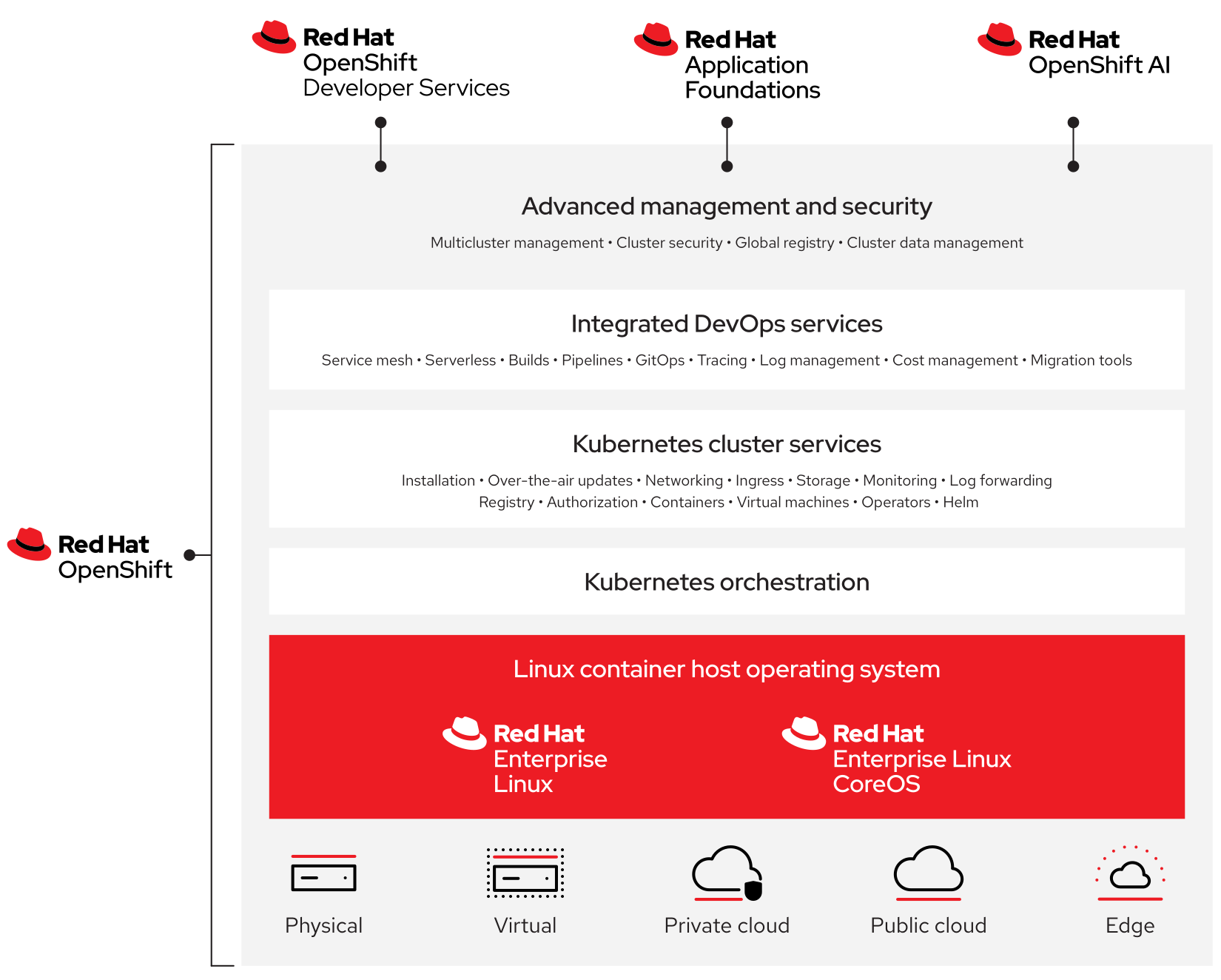

Container technologies and DevSecOps approaches are key components in successful cloud-native journeys. Deploying a Kubernetes-powered application platform can help you make the most of these components across hybrid and multicloud environments. The right platform will provide the agility, consistency, efficiency, and scalability needed to build, deploy, run, and manage applications across datacenter, edge, and public cloud infrastructures—all without locking you into a specific public cloud or vendor.

Look for an application platform that provides:

- A trusted and consistent foundation for application deployment across environments.

- A comprehensive set of cloud-native development and operations services and tools.

- Consistent, streamlined security and management capabilities.

- Multiple consumption models, including self-managed on-site, self-managed cloud, and fully managed cloud service options.

While choosing the right technology foundation is a starting point, you also need to consider your people and processes. People are at the core of any enterprise-wide initiative, and cloud-native operations are no different. To adopt cloud-native approaches across your organization, all teams, including development, security, and operations, must be on board, participate, and trust each other. Processes move projects from start to finish. Clear processes for creating, deploying, managing, and adapting applications and infrastructure, and for incorporating security throughout their life cycles, are essential for broad adoption of cloud-native approaches.

This e-book discusses 8 considerations for adopting a cloud-native approach to application development and delivery.