For the last 15 years, the IT industry has tried to simplify managing workloads by bringing them into huge data centers. Cloud computing has allowed companies to scale on demand, lower costs, and (often) externalize operations.

Today, more and more devices are connected and creating more and more data. Smart home devices, sensors, and cars have mobile connectivity to track consumption, driving habits, location, and more. All this data gets sent back to centralized data centers. Running artificial intelligence (AI) and machine learning (ML) models close to the devices instead allows you to process that data and get preliminary insights before sending it to the cloud.

However, the more data you generate, the more expensive it is to transfer it to the cloud. This proliferation of data means it's increasingly important to process it close to where it is generated.

So what's the goal? You want to run AI/ML algorithms on very small devices worldwide without creating problems. What if you could use the same mechanisms you use in the cloud to run these workloads? This would enable a developer to write an application and run it whether it gets deployed in a cloud environment or an edge computing device.

This is why we created MicroShift, which may help IT systems architects who are looking to manage cloud-native and edge workloads at scale.

Why MicroShift?

MicroShift is a research project that explores how you can optimize OpenShift and Kubernetes for small form factor and edge computing.

Edge devices deployed in the field pose very different operational, environmental, and business challenges from cloud computing. These motivate different engineering tradeoffs for Kubernetes at the far edge than for cloud or near-edge scenarios. MicroShift's design goals cater to this:

- Make frugal use of system resources (CPU, memory, network, and storage)

- Tolerate severe networking constraints

- Update (especially rollback) securely, safely, speedily, and seamlessly (without disrupting workloads)

- Build on and integrate cleanly with edge-optimized operating systems like Fedora IoT and RHEL for Edge

- Provide a consistent development and management experience with standard OpenShift

Demo: AI/ML at the edge

This demo aims to run a face detection and face recognition AI model in a cloud-native fashion using MicroShift in an edge computing scenario. To do this, we used the NVIDIA Jetson family boards (tested on Jetson TX2 and Jetson Xavier NX).

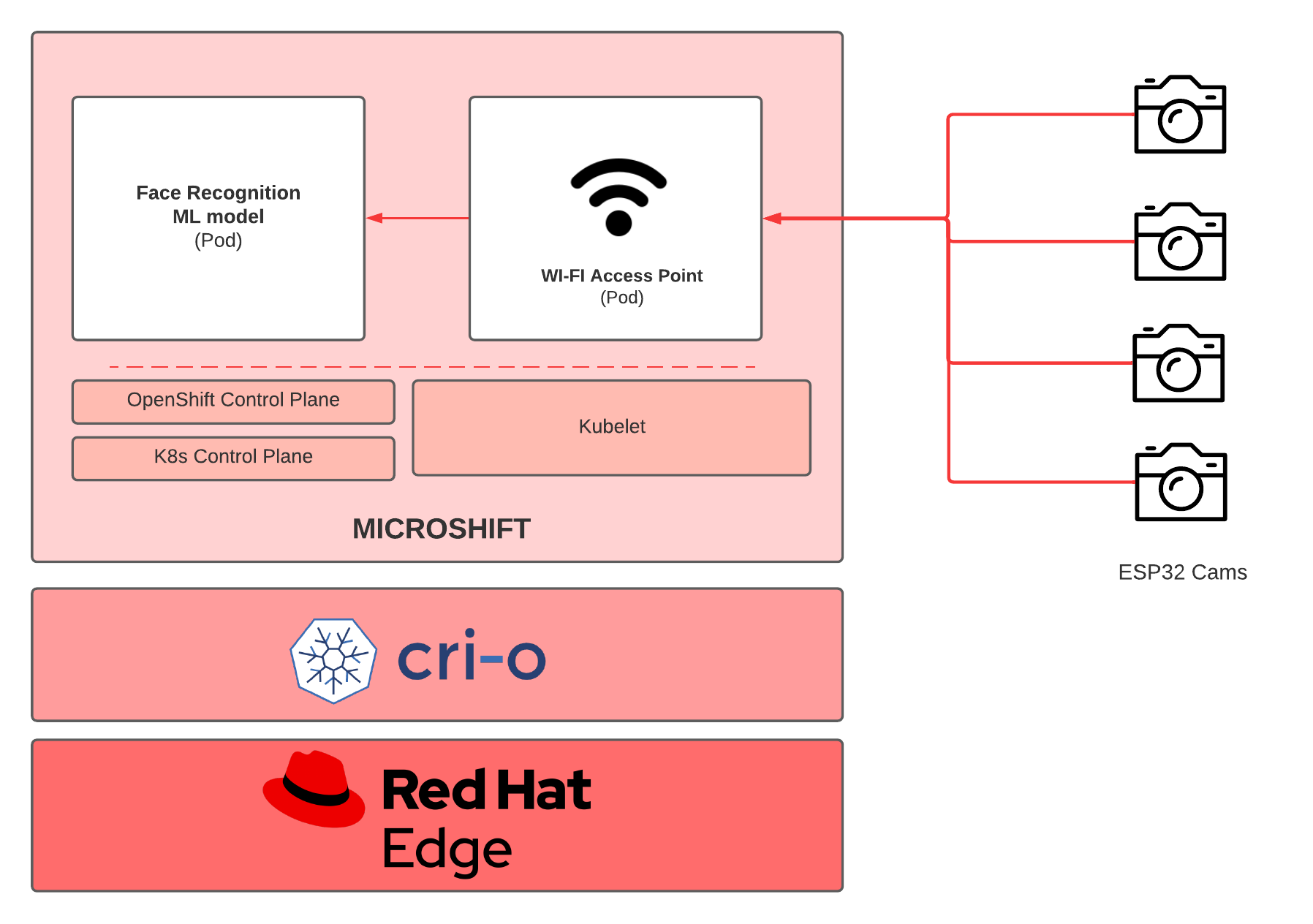

In the diagram above, you can see the basic components of this face recognition use case. There is a lot of documentation about deploying MicroShift, so we'll assume you have it running on your NVIDIA Jetson board.

Two different pods run as deployments. One pod is a WiFi access point that exposes a wireless network with a predefined SSID. The ESP32 cameras use this network to send their video stream to the Jetson board. The other pod is a straightforward web server that receives registration requests from the cameras. Once a camera is registered, the server starts a new video-streaming thread to process a certain number of frames. We based the face recognition library on Dlib, and we use OpenCV to (for example) draw the squares on top of the faces. We want to show two different algorithms (one using just the CPU and the other using the Jetson board's embedded GPU) and compare their resource consumption.
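The register-then-stream flow described above can be sketched in a few lines of Python. This is only an illustration, not the demo's actual server code: the CameraRegistry class, the fake_stream and fake_detector helpers, and the MAX_FRAMES value are all hypothetical names and numbers chosen for this example, and the real server uses Flask, Dlib, and OpenCV rather than these stand-ins.

```python
import threading
from itertools import islice
from typing import Callable, Dict, Iterable, List

MAX_FRAMES = 5  # assumed cap; the article does not state the demo's real value


class CameraRegistry:
    """Tracks registered cameras and runs one streamer thread per registration."""

    def __init__(self, open_stream: Callable[[str], Iterable[bytes]],
                 process_frame: Callable[[bytes], str]) -> None:
        self._open_stream = open_stream      # hypothetical: yields frames for a camera IP
        self._process_frame = process_frame  # hypothetical: stands in for face recognition
        self._lock = threading.Lock()
        self.results: Dict[str, List[str]] = {}

    def register(self, ip: str) -> threading.Thread:
        """Record the camera and start a streamer thread for it."""
        with self._lock:
            self.results[ip] = []
        thread = threading.Thread(target=self._stream, args=(ip,), daemon=True)
        thread.start()
        return thread

    def _stream(self, ip: str) -> None:
        """Process at most MAX_FRAMES frames, then let the thread finish."""
        for frame in islice(self._open_stream(ip), MAX_FRAMES):
            outcome = self._process_frame(frame)
            with self._lock:
                self.results[ip].append(outcome)


def fake_stream(ip: str) -> Iterable[bytes]:
    """Stand-in for a camera feed: yields ten dummy frames."""
    return (f"{ip}-frame-{i}".encode() for i in range(10))


def fake_detector(frame: bytes) -> str:
    """Stand-in for the Dlib-based recognition step."""
    return f"processed {frame.decode()}"


registry = CameraRegistry(fake_stream, fake_detector)
registry.register("192.168.66.89").join()  # wait for the streamer thread to end
print(len(registry.results["192.168.66.89"]))  # prints 5
```

Bounding the work per thread keeps a single registration from occupying the CPU or GPU indefinitely, which matters on a small board; a camera that registers again simply gets a fresh thread.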

All the code is open source, and you can find it on Red Hat's Emerging Technologies GitHub.

ESP32 cameras

This demo uses ESP32CAM boards, which are very cheap Arduino-friendly cameras that you can easily program.

The code was built using PlatformIO with the Arduino framework. VSCode IDE has a very convenient plugin that allows you to choose the platform you use and compile the code.

Many tutorials exist for using an ESP32 camera with PlatformIO. One of the most relevant files to look at is platformio.ini, located in the esp32cam directory:

    [env:esp32cam]
    #upload_speed = 460800
    upload_speed = 921600
    platform = espressif32
    board = esp32cam
    framework = arduino
    lib_deps = yoursunny/esp32cam@0.0.20210226
    monitor_port=/dev/ttyUSB0
    monitor_speed=115200
    monitor_rst=1
    monitor_dtr=0

Once you connect the camera to your laptop, a new /dev/ttyUSB0 appears, and you can flash or debug new firmware into the cameras using this port. To flash the firmware, run make flash. If you want to see the cameras' debug output, use make debug right after make flash.

If you want the cameras to connect to a different SSID, modify the file esp32cam/src/wifi_pass.h:

    const char* WIFI_SSID = "camwifi";
    const char* WIFI_PASS = "thisisacamwifi";

WiFi access point

You don't want to depend on an available wireless network that is not under your control because it could be unsecured. Furthermore, the demo has to be self-contained so that users can test it. This is why there's a pod that creates a WiFi access point with the credentials mentioned in the above section:

    SSID: camwifi
    Password: thisisacamwifi

If you want to expose a different SSID and use a different password, change the wifi-ap/hostapd.conf file.

Now, deploy this pod on MicroShift:

    oc apply -f wifi-ap/cam-ap.yaml

After a few seconds, you should see it running:

    oc get pods
    NAME                         READY   STATUS    RESTARTS   AGE
    cameras-ap-b6b6c9c96-krm45   1/1     Running   0          3s

Looking at the pod logs, you can see how the process inside provides IP addresses to the cameras or any device connected to the wireless network.
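If you want to script the "is it running yet?" check instead of reading the oc get pods table by eye, a minimal Python sketch could look like the following. The parse_pods and all_ready helpers are hypothetical names introduced here, and the sample text is the output shown above; how you capture the command's output on your own system (for example, by piping it into the script) is left as an assumption.

```python
from typing import Dict, List


def parse_pods(table: str) -> List[Dict[str, str]]:
    """Turn the whitespace-separated `oc get pods` table into a list of dicts."""
    lines = [line for line in table.strip().splitlines() if line.strip()]
    header = lines[0].lower().split()
    # zip() pairs each column name with the matching field of the row.
    return [dict(zip(header, line.split())) for line in lines[1:]]


def all_ready(pods: List[Dict[str, str]]) -> bool:
    """True when every pod is Running and all of its containers are ready."""
    def ready(pod: Dict[str, str]) -> bool:
        have, want = pod["ready"].split("/")
        return pod["status"] == "Running" and have == want
    return bool(pods) and all(ready(pod) for pod in pods)


# Sample input copied from the command output in this article.
sample = """
NAME                         READY   STATUS    RESTARTS   AGE
cameras-ap-b6b6c9c96-krm45   1/1     Running   0          3s
"""

pods = parse_pods(sample)
print(pods[0]["name"], all_ready(pods))
```

The sketch only relies on the first three columns, so it should tolerate extra text in the later ones.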

AI models

The final step is deploying the AI models that perform face detection and face recognition. This pod is basically a Flask server that gets the streams of the cameras once they are connected and starts working on a discrete number of frames.

Deploy the AI models on MicroShift:

    oc apply -f server/cam-server.yaml

After a few seconds:

    oc get pods
    NAME                         READY   STATUS    RESTARTS   AGE
    cameras-ap-b6b6c9c96-krm45   1/1     Running   0          4m37s
    camserver-cc996fd86-pkm45    1/1     Running   0          42s

You also need to create a service and expose a route for this pod:

    oc expose deployment camserver
    oc expose service camserver --hostname microshift-cam-reg.local

MicroShift has built-in mDNS capabilities, and this route gets automatically announced, so the cameras can register to this service and start streaming video.
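To make the registration step concrete, here is a small Python sketch that builds the kind of request a camera sends to the route. The path and the ip and token query parameters follow the GET /register request recorded in the camserver log later in this article; the registration_url helper is a hypothetical name, and the real camera firmware is Arduino code that may assemble the request differently.

```python
from urllib.parse import parse_qs, urlencode, urlsplit

REGISTER_HOST = "microshift-cam-reg.local"  # route hostname used in this article


def registration_url(camera_ip: str, token: str, host: str = REGISTER_HOST) -> str:
    """Build the URL a camera would request to register itself."""
    query = urlencode({"ip": camera_ip, "token": token})
    return f"http://{host}/register?{query}"


url = registration_url("192.168.66.89", "a53ca190")
print(url)

# Round trip: the receiving side can recover the same fields from the query.
fields = parse_qs(urlsplit(url).query)
print(fields["ip"][0], fields["token"][0])
```

Because the hostname is announced over mDNS, the cameras do not need to know the Jetson board's IP address in advance; they only need the .local name.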

Looking at the camserver logs, you can see this registration process:

    oc logs camserver-cc996fd86-pkm45
     * Serving Flask app 'server' (lazy loading)
     * Environment: production
       WARNING: This is a development server. Do not use it in a production deployment.
       Use a production WSGI server instead.
     * Debug mode: off
     * Running on all addresses.
       WARNING: This is a development server. Do not use it in a production deployment.
     * Running on http://10.85.0.36:5000/ (Press CTRL+C to quit)
    [2022-01-21 11:18:46,203] INFO in server: camera @192.168.66.89 registered with token a53ca190
    [2022-01-21 11:18:46,208] INFO in server: starting streamer thread
    [2022-01-21 11:19:34,674] INFO in server: starting streamer thread
    10.85.0.1 - - [21/Jan/2022 11:19:34] "GET /register?ip=192.168.66.89&token=a53ca190 HTTP/1.1" 200 -

Finally, open a browser with the following URL:

    http://microshift-cam-reg.local/video_feed

This URL shows the feeds of all the cameras you've registered, and you can see how faces get detected.
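If the feed stays empty, one quick check is whether any camera actually registered. The following Python sketch extracts the registration entries from the camserver log. The regular expression is written against the log lines shown in this article only, so treat the exact message format as an assumption that may differ in other versions of the server; registered_cameras is a hypothetical helper name.

```python
import re
from typing import Dict

# Matches entries such as:
#   ... INFO in server: camera @192.168.66.89 registered with token a53ca190
REGISTERED = re.compile(
    r"camera @(?P<ip>\d{1,3}(?:\.\d{1,3}){3}) registered with token (?P<token>\w+)"
)


def registered_cameras(log_text: str) -> Dict[str, str]:
    """Map each registered camera IP to the most recent token seen in the log."""
    return {m.group("ip"): m.group("token") for m in REGISTERED.finditer(log_text)}


# Two lines copied from the log output in this article.
log = """\
[2022-01-21 11:18:46,203] INFO in server: camera @192.168.66.89 registered with token a53ca190
[2022-01-21 11:18:46,208] INFO in server: starting streamer thread
"""

print(registered_cameras(log))  # prints {'192.168.66.89': 'a53ca190'}
```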

Conclusion

Running AI workloads at the edge is already happening. The challenge is managing thousands of these devices at scale and using the same tools you use in the cloud. MicroShift enables these cloud-native capabilities in small, field-deployed devices and brings an OpenShift-like experience to developers and administrators.

For more information, watch our presentation AI on the edge with MicroShift at DevConf.CZ 2022.

About the authors

Ricardo is a Principal Software Engineer working in Red Hat's Office of the CTO in the Emerging Technologies organization as Initiative lead. Ricardo is currently focused on the different kinds of architectures in the AI space like SLMs and multimodality. He has been part of the MicroShift and Edge Manager projects since their inception.

He is a former member of the Akraino Technical Steering Committee and Project Technical Lead of the Kubernetes-Native-Infrastructure blueprint family. He's been doing R&D related to OpenStack, as well as contributing to the OpenDaylight project and OPNFV. He is passionate about new technologies and everything related to the Open Source world. Ricardo holds an MSc degree in Telecommunications from the Technical University of Madrid (UPM). He loves music, photography, and outdoor sports.

Miguel is currently working at the Red Hat CTO Office Emergent technologies / EDGE for the MicroShift project. Previously he worked on the Submariner project in the area of multi-cluster communication and security. He started contributing to OpenStack 7 years ago on the Neutron project (virtual networks) fixing bugs and contributing to new frameworks like Quality of Service, where he gained a core reviewer status. Afterward, Miguel joined the networking-ovn project to support OVN from Neutron, contributing the L3HA support patches to Open vSwitch/OVN. Miguel loves working for open source communities, networking, and automation.
