Change is inevitable. Today's enterprise applications are more complex and integrated and require more security and reliability than yesterday's. And I do mean yesterday; requirements for any given IT organization can change by the day!

How can an organization modernize thousands of applications simultaneously in a landscape of changing security, reliability, and operations requirements? Our answer relies on the value of a hybrid cloud platform. How application teams and end users interact with your hybrid cloud platform directly impacts their day-to-day coding and deployment.

This article focuses on four tactics to improve hybrid cloud interfaces on platforms and ultimately scale application modernization in such a dynamic environment.

1. Extend interfaces to integrate services

When application teams need to modernize for a new platform deployment, the organization will likely impose new integrations and requirements. These could be security-related, focused on improved reliability, or aimed at optimizing the application's cost or resourcing. Often these requirements aren't focused on the application runtime itself but on the ecosystem around the runtime. I usually summarize this challenge as "No enterprise application is only its runtime."

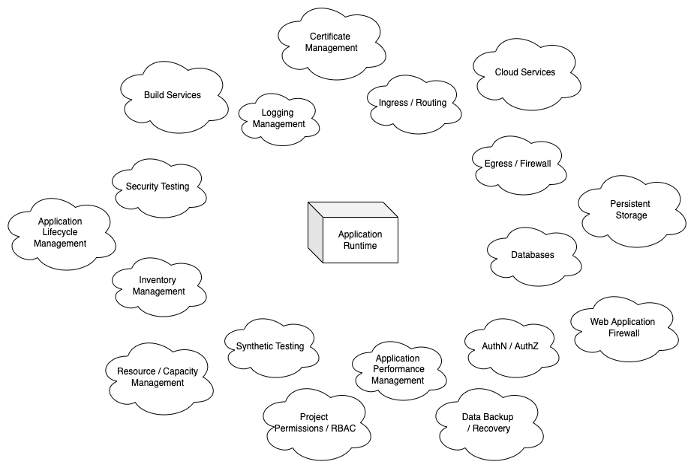

Imagine an application team needs to create solutions for today's modern enterprise application architecture and the integrations and management processes that come with it:

Even if only a few application teams need to solve for this modern landscape, the risk is that multiple solutions to the same modernization activity will overload the organization. This is where extending the interfaces helps: a central platform can integrate with other key services and deliver all modern application requirements.

One integration that we created within IBM CIO Hybrid Cloud for meeting logging integration requirements is a custom logging operator (based on the Kubernetes operator pattern). Since we built the platform using OpenShift as the hybrid cloud abstraction, any automation defined using an operator approach can translate to public or private cloud and different regions, environments, and configurations. Within the CIO Hybrid Cloud platform, applications can easily forward logs to a central logging service based on a few lines of configuration. Operators within Kubernetes provide a framework for separating the what from the how so that application teams can focus on what they want to do:

"I need to have application logs sent to an external index."

and not how to do it.

In our experience, when rearchitecting an application to meet new requirements, the how to do it is where the application team spends much of its time. The platform can remove that "people cost" to provide common solutions for application modernization.
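To make the what-versus-how split concrete, here is a minimal sketch in Python. It is not the CIO Hybrid Cloud logging operator; the class, field names, endpoint URL, and defaults are all hypothetical and exist only to illustrate the pattern. The application team supplies a small declarative request, and platform-owned logic expands it into the full forwarding configuration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LogForwardingRequest:
    """The 'what': all an application team declares (hypothetical fields)."""
    namespace: str
    index: str  # name of the external log index


def reconcile(request: LogForwardingRequest) -> dict:
    """The 'how': platform-owned logic that expands the request.

    The endpoint, TLS setting, and selector are invented platform
    defaults for illustration, not values from a real operator.
    """
    return {
        "namespace": request.namespace,
        "output": {
            "url": f"https://logs.example.internal/{request.index}",
            "tls": True,
        },
        "selector": {"logging": "enabled"},
    }


request = LogForwardingRequest(namespace="payments", index="payments-prod")
desired = reconcile(request)
print(desired["output"]["url"])  # https://logs.example.internal/payments-prod
```

Because `reconcile` is a pure function of the request, running it again with the same request yields the same desired state, which is the property that lets the platform team change the "how" without application teams changing their few lines of configuration.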

2. Use common patterns for interfaces

When moving a large application portfolio to a modern, hybrid cloud platform, it's important that the independent interfaces for the platform provide common results. This is generally a challenge once the platform is scaled, less so on initial application onboarding.

Using the example above, if the logging operator samples logs at a particular rate for one workload and doesn't sample logs for another, the application team will likely be confused about what is occurring under the hood. This could also negatively impact the application's health and introduce a larger support need when the application team requires support from the platform team. Fortunately, the logging operator doesn't do that, but this concern could apply to the differences between command-line interface (CLI) vs. graphical user interface (GUI) interaction or parts of the platform's infrastructure.

We applied this "commonality of interfaces" approach to our CIO Hybrid Cloud virtualization offering. Our platform deploys both container and virtualization workloads to OpenShift-based environments (the latter managed by OpenShift Virtualization).

As expected with container workloads, ephemeral volumes to boot the container runtime are deleted when the container process ends. When adding virtualization within the same platform, part of the design enforces an ephemeral boot disk with the virtualization runtime so that when the virtual machine stops or restarts, the boot disk is deleted.

This design organically drives application teams to define application configuration as code, which is required when deploying containers. Regardless of container or virtualization deployment, the application team gets a similar experience in the workload lifecycle.
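The shared lifecycle can be modeled in a few lines of Python. This is only a toy model under stated assumptions: the `Workload` class and its fields are hypothetical and do not correspond to any OpenShift or OpenShift Virtualization API. It shows why an ephemeral boot disk pushes teams toward configuration as code: anything changed by hand on the boot volume is gone after a restart, while declared configuration comes back.

```python
from dataclasses import dataclass, field


@dataclass
class Workload:
    kind: str  # "container" or "vm"
    config_as_code: dict  # declared configuration, kept outside the boot volume
    boot_volume: dict = field(default_factory=dict)

    def start(self) -> None:
        # Every start builds a fresh boot volume from the declared configuration.
        self.boot_volume = dict(self.config_as_code)

    def stop(self) -> None:
        # Ephemeral by design: the boot volume is discarded for both kinds.
        self.boot_volume = {}


def restart_and_read(workload: Workload, key: str):
    """Restart the workload and return what the boot volume holds for key."""
    workload.stop()
    workload.start()
    return workload.boot_volume.get(key)


vm = Workload(kind="vm", config_as_code={"app_port": 8080})
vm.start()
vm.boot_volume["manual_change"] = "edited by hand"  # not captured as code

print(restart_and_read(vm, "app_port"))       # 8080
print(restart_and_read(vm, "manual_change"))  # None
```

Swapping `kind="vm"` for `kind="container"` changes nothing in the behavior, which is the point of the design: one lifecycle, two runtimes.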

3. Design self-service interfaces

The first two tactics ultimately support self-service on the platform. When modernizing a portfolio of thousands of applications, you cannot rely on human touchpoints to complete this activity. Both the way people interact with the platform and the actions application teams take need to be accessible.

An accessible interface is more than one that users are authorized to use: accessibility means that knowledge and support are wrapped around interactions with the platform. Take the ephemeral boot disk for virtual machines from the last section. What if you made this lifecycle design choice but didn't communicate it to end users? The application team would likely assume that their boot disk persists across a reboot when it does not.

Our platform provides rich documentation that defines these interactions with the platform for end users. Our portal also has inline help and workflows baked into how end users interact with the hybrid cloud. And, if users have issues interacting with the platform, they can use links in the GUI for the support team and consultation requests.

For example, the portal notes the expected integration time (around five minutes) when a workload is registered for authorization with our central groups management interface. The registration automatically integrates the workload with centralized role-based access control, and stating the expected time up front means end users don't need to contact the platform team (but again, the platform team is there for any support needs).

Support interactions, in turn, create a backlog of improvements to the portal, measured by the requests flowing back to the support team. Anytime a ticket or consultation request is created, we can review the underlying need and provide additional built-in knowledge or workflows.

4. Gather feedback from your interfaces

All of the approaches for architecting a hybrid cloud require that a platform team continually review the ways end users interact with interfaces. The way we review interface data answers a few questions:

- What are the most used interfaces on the platform?

- Are there any interfaces that we can sunset?

- Are there any interfaces that contain errors?

- What can end users not interact with in our platform, and do we need to create a new interface?
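As a rough illustration of how the first three questions could be answered from interaction data, here is a small Python sketch. The interface names, the event records, and the catalog are all made up for the example; a real platform would draw them from its own portal and CLI telemetry. The fourth question usually cannot be answered from usage data alone, since it concerns interfaces that do not exist yet, and is better informed by support and consultation requests.

```python
from collections import Counter

# Hypothetical interaction records: (interface name, succeeded?)
events = [
    ("portal:create-namespace", True),
    ("cli:create-namespace", True),
    ("portal:create-namespace", True),
    ("portal:create-namespace", True),
    ("portal:request-vm", False),
    ("portal:request-vm", True),
    ("cli:forward-logs", True),
]

# Hypothetical catalog of every interface the platform exposes.
catalog = {
    "portal:create-namespace",
    "cli:create-namespace",
    "portal:request-vm",
    "cli:forward-logs",
    "portal:legacy-quota-form",
}

calls = Counter(name for name, _ in events)
failures = Counter(name for name, ok in events if not ok)

# Most used interface.
most_used = calls.most_common(1)[0][0]

# Interfaces in the catalog that nobody called: candidates to sunset.
sunset_candidates = sorted(catalog - set(calls))

# Share of failed calls, listed only for interfaces that had failures.
error_rates = {
    name: failures[name] / calls[name] for name in calls if failures[name]
}

print(most_used)          # portal:create-namespace
print(sunset_candidates)  # ['portal:legacy-quota-form']
print(error_rates)        # {'portal:request-vm': 0.5}
```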

One of the best sources of feedback for platform interfaces is... you! In my years of experience designing and building hybrid cloud platforms, not using your own platform is one of the pitfalls platform teams can fall into. I would be remiss if I didn't mention I've fallen into this trap myself. Take some time to build and deploy modern applications on your own platform, note the areas of improvement, and record the positive interactions you have when deploying on a hybrid cloud platform.

This originally appeared on Hybrid Cloud How-tos and is republished with permission.

About the author

Ben Pritchett is a Principal Systems Engineer within Red Hat IT, focused on Platforms as a Service. His primary responsibilities are designing and managing container platforms for enterprise IT. This requires a broad understanding of IT, including knowledge of application development workflows all the way to public/private cloud infrastructure management. Since 2015 he has worked on production deployments of Kubernetes and OpenShift.
