Many well-established organizations are embarking on large digital transformation programs to modernize architectures that predate the internet and digital commerce. These architectures are the sum of siloed systems, often mimicking the organization's communication structure, that are woven together through disjointed point-to-point integration patterns. These environments pose a challenge for modernization efforts.

Our team works with organizations to address this challenge using a pattern we developed called the Event-Driven Book of Reference. This article describes the motivating factors and challenges behind modernization, how the Event-Driven Book of Reference facilitates modernization, and some lessons learned from implementing this pattern at scale.

Modernization goals and challenges

Most organizations focus on accelerating innovation and modernizing applications.

Accelerating innovation

Organizations are looking for a nimble architecture that enables the rapid development of new experiences and applications while ensuring that existing core systems are not adversely impacted. This goal is usually inhibited by an existing architecture that suffers from data silos, complex interdependencies, and an abundant number of fragile core systems that may not scale for new use cases. We see three focus areas for innovation:

- Experience: A thin vertical slice that enables an experience that wasn't possible before

- Data modernization: Bringing data together from multiple sources into a modern common platform

- Integration simplification: Moving away from the high cost and complexity of legacy integration platforms

Modernizing applications

New use cases require new technical capability, and new technical capability requires new software. Yet, existing core systems are still a critical part of the business, making complete replacements costly and high risk. The Strangler pattern is usually recommended as an alternative to modernize existing systems by upgrading one vertical slice of capability at a time. This means the new and the old will coexist until the complete system is replaced, which usually means further integration complexity. Applications dependent on the systems must now manage orchestrating across both the new and the old until a replacement is viable.

This tight coupling is a headache for companies looking to begin modernization. In most cases, it's better to modernize applications incrementally. However, there are cases where you might wish to replace a homegrown custom-built system with a commercial off-the-shelf (COTS) product or software-as-a-service (SaaS) offering.

What is an Event-Driven Book of Reference?

The Event-Driven Book of Reference is an event-driven data platform that ingests data from all systems of record and translates it into industry-aligned data models for consumption. More technically, it is a warm, read-only replica of all business events across all systems of record, and it serves as the Strangler Facade in the Strangler pattern. This decouples writes from reads, with the added bonus of hiding the detailed data models of the systems of record behind an industry-aligned data model for consumption. In turn, this drives wide adoption of the Command Query Responsibility Segregation (CQRS) pattern across the organization.

A caveat about this architecture is that the Book of Reference is eventually consistent. This means that for use cases where an inconsistent read can have monetary implications, coupling reads and writes is essential. For example, a system that checks for account limits before authorizing a purchase should not be based on an eventually consistent system. But most customer-facing use cases, such as online banking, can be suitable for this architecture. In our experience, most use cases do not require strong consistency, and the Book of Reference can be adopted as the place for reads.
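The split between write and read paths, and the consistency gap it creates, can be illustrated with a minimal Python sketch. The names here (`SystemOfRecord`, `BookOfReference`, `outbox`, `BalanceChanged`) are hypothetical, and an in-memory list stands in for the event stream; this is an illustration under those assumptions, not the implementation used with any client.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class SystemOfRecord:
    """Hypothetical core system: owns all writes and emits one event per change."""
    balances: dict = field(default_factory=dict)
    outbox: list = field(default_factory=list)  # stand-in for an event stream

    def deposit(self, account_id: str, amount: int) -> None:
        new_balance = self.balances.get(account_id, 0) + amount
        self.balances[account_id] = new_balance
        # Publish the state change so downstream readers can catch up.
        self.outbox.append(
            {"type": "BalanceChanged", "account_id": account_id, "balance": new_balance}
        )


@dataclass
class BookOfReference:
    """Read-only replica: its state changes only by consuming events."""
    read_model: dict = field(default_factory=dict)
    offset: int = 0  # position of the next unread event

    def consume(self, events: list) -> None:
        for event in events[self.offset:]:
            self.read_model[event["account_id"]] = event["balance"]
        self.offset = len(events)

    def get_balance(self, account_id: str) -> Optional[int]:
        return self.read_model.get(account_id)
```

Between a call to `deposit` and the next call to `consume`, `get_balance` returns a stale value. That window is the eventual consistency described above, and it is why a check such as an account limit should read from the system of record instead.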

Exploring the architecture

The Event-Driven Book of Reference's architecture consists of:

- Ingestion connectors

- Raw landing zone

- Ingestion processing and data quality

For data storage, there is a landing zone that stores the raw events ingested directly from the systems of record, and a curated zone that holds the industry-aligned representation of those events. All data is stored in an append-only, persistent, and immutable log. The data in the landing zone is ephemeral: its retention period is usually set just long enough to leave some cushion for replaying events after a failure. The retention policy for the curated zone, however, is generally driven by the business requirements around the business events it represents. Often, it follows the same retention policy as the underlying system of record that sources the data. In cases where the industry-aligned data model is an aggregation across multiple systems of record, the longest retention period among them is used.
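As a rough sketch of the storage rules above, the following Python models an append-only log that supports replay, plus the "longest retention wins" rule for aggregated curated models. `AppendOnlyLog` and `curated_retention` are hypothetical names, the log lives in memory, and the retention value is recorded as configuration only; nothing here expires data.

```python
from datetime import timedelta


class AppendOnlyLog:
    """In-memory stand-in for an immutable, append-only event log."""

    def __init__(self, retention: timedelta) -> None:
        self.retention = retention  # configuration only; not enforced in this sketch
        self._events = []

    def append(self, event: dict) -> int:
        """Store a copy of the event and return its offset; entries are never modified."""
        self._events.append(dict(event))
        return len(self._events) - 1

    def replay(self, from_offset: int = 0) -> list:
        """Return copies of all events from an offset, e.g. to reprocess after a failure."""
        return [dict(event) for event in self._events[from_offset:]]


def curated_retention(source_retentions: dict) -> timedelta:
    """Retention for a curated model: the longest retention among its source systems."""
    if not source_retentions:
        raise ValueError("a curated model needs at least one source system")
    return max(source_retentions.values())
```

For example, a curated model that aggregates a source retained for one year and another retained for seven years would itself be retained for seven years.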


At times, batch jobs are used to hydrate the curated zone with historical data. Otherwise, the data feeding into the Book of Reference should arrive in real time. All state changes are broadcast to the landing zone as they happen, so the data pipelines must be streaming applications. However, some back-end processes inevitably run as scheduled batches, such as nightly posted-transaction processing at banks. This means you must often adopt a batch data processing platform as well, preferably the same one used for the historical batch jobs. Read access to the curated zone can be provided either through direct access to the data or through APIs built on materialized views. An in-memory data grid can host the materialized views in cases where speed and scale are nonnegotiable.

An example architecture

We prefer using Apache Kafka as the Book of Reference. In one implementation, we integrated with the systems of record through Kafka Connect and wrote Kafka Streams applications to transform the feeds into industry-aligned models stored in topics in a curated zone. For all batch jobs, we used Informatica PowerCenter. Then, we either gave direct access to the topics for notification use cases or built APIs for more complex read patterns, such as historical queries. Since this client was in the financial services sector, we adopted the Banking Industry Architecture Network (BIAN) to guide the design of the industry-aligned data models.
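The transformation step at the heart of this pipeline can be sketched independently of Kafka. The Python below is a plain function standing in for the per-record logic that a streaming application would run; it is not Kafka Streams code. The raw field names (`ACCT_NO`, `CCY`, `BAL_CENTS`) and the curated model are invented for illustration and are not taken from BIAN or from any client system.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class CuratedBalanceEvent:
    """Hypothetical industry-aligned model exposed in the curated zone."""
    account_id: str
    currency: str
    balance: Decimal
    source_system: str


def to_curated(raw: dict, source_system: str = "legacy-core") -> CuratedBalanceEvent:
    """Translate one raw landing-zone record into the curated model."""
    return CuratedBalanceEvent(
        account_id=raw["ACCT_NO"].strip(),        # drop fixed-width padding
        currency=raw["CCY"].upper(),              # normalize currency codes
        balance=Decimal(raw["BAL_CENTS"]) / 100,  # integer cents to decimal units
        source_system=source_system,
    )
```

Keeping the translation a pure function of one record makes it easy to reuse for both streaming feeds and historical batch loads.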

The figure below shows the Event-Driven Book of Reference architecture.

A vertical slice

A vertical slice is the set of all applications that together enable a specific business capability or a customer experience. An example is the account summary page in an online banking app. A vertical slice would be all systems and applications that make that customer view possible, such as the system of record that stores balances, the back-end service that serves that data, and the micro frontend that visualizes the data in the web application.

In this architecture, the systems of record that are part of the vertical slice are ingested into the Event-Driven Book of Reference, and materialized views are built on top of the topics in the curated zone.
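A materialized view for the account summary example could look roughly like the Python sketch below. It folds curated balance events into a per-customer structure that an API could serve. The class name, the event fields (`customer_id`, `account_id`, `balance`), and the last-write-wins rule are assumptions made for illustration.

```python
from collections import defaultdict


class AccountSummaryView:
    """Materialized view: latest balance per account, grouped by customer."""

    def __init__(self) -> None:
        self._by_customer = defaultdict(dict)

    def apply(self, event: dict) -> None:
        """Fold one curated balance event into the view (last write wins)."""
        self._by_customer[event["customer_id"]][event["account_id"]] = event["balance"]

    def summary(self, customer_id: str) -> dict:
        """Return the data an account summary page would request for one customer."""
        accounts = dict(self._by_customer.get(customer_id, {}))
        return {"accounts": accounts, "total": sum(accounts.values())}
```

The view is disposable: because the curated zone is an immutable log, the view can be rebuilt at any time by replaying the events from the start.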

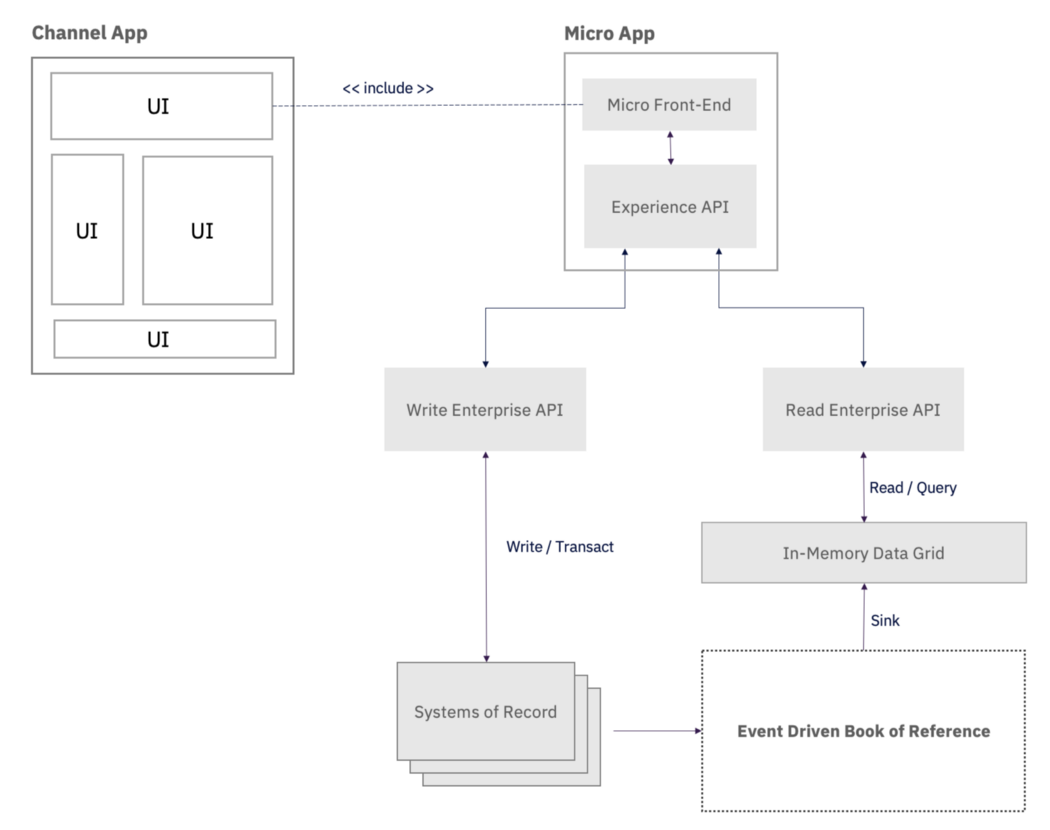

The figure below shows a vertical slice through the architecture.

How the Book of Reference facilitates modernization

Industry-aligned data models decouple consumers from the underlying implementation of existing and new core systems. This decoupling means that the new and the old systems can coexist and then swap when the new system proves to meet all the old requirements.
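To make the coexistence idea concrete, here is a small Python sketch in which two translators, one for an old system and one for its replacement, emit the same curated shape. All function names and record formats are hypothetical, and the sketch assumes non-negative balances.

```python
from decimal import Decimal


def from_legacy_core(raw: dict) -> dict:
    """Translate a hypothetical legacy record into the shared curated shape."""
    return {"account_id": raw["ACCT_NO"], "balance_cents": raw["BAL_CENTS"]}


def from_new_core(raw: dict) -> dict:
    """Translate a hypothetical replacement system's record into the same shape."""
    cents = int(Decimal(raw["balance"]) * 100)  # balance arrives as a decimal string
    return {"account_id": raw["accountId"], "balance_cents": cents}


def render_balance(curated: dict) -> str:
    """Consumer code: depends only on the curated shape, never on a source format."""
    units, cents = divmod(curated["balance_cents"], 100)
    return f"{curated['account_id']}: {units}.{cents:02d}"
```

Because `render_balance` never sees a source-specific field, switching the feed from `from_legacy_core` to `from_new_core` requires no change on the consumer side.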


In a perfect implementation, system modernization is transparent to the consuming applications. Using this pattern, one of our clients managed to modernize critical core systems while also building a flagship customer-facing product and then shipping this new experience to both new and legacy customers. While the technical teams built this customer experience, the modernization efforts were transparent to them; they interfaced only with the Event-Driven Book of Reference during development. We found that by abstracting the systems of record completely from the application teams, our client shortened its development cycle, measured by the time from ideation to shipping to production, by approximately 16 months.

Lessons learned

Here are some lessons we learned while implementing this pattern at scale:

- Most legacy systems do not have instrumentation built in to feed into an event stream. Their existing data models do not lend themselves well to an event-driven and industry-aligned data model. This requires building complex applications for the translation component. We recommend pushing as much of the translation to the underlying system of record as possible to minimize the complexity of the data pipelines.

- Standardized industry-aligned data models are challenging to build. We recommend using preexisting standards, if your industry has them. For example, using BIAN has proved to be successful for some of our financial services clients.

- Since the Book of Reference unifies all systems of record, global keys are necessary to uniquely identify entities that span systems of record. For example, in one case, we dealt with account numbers that were not unique across regions. This worked in the existing system because each region was handled separately. So we introduced globally unique identifiers, a first for our client, in the curated zone. Because existing architectures usually do not require global keys, we found that a suitable one rarely exists and must be introduced.
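One possible way to derive global keys like those in the last lesson is a deterministic, name-based UUID over the region and the region-scoped account number, sketched below in Python. This is an assumed scheme for illustration only; the article does not describe how the client's identifiers were generated, and the namespace value and function name are hypothetical.

```python
import uuid

# Hypothetical namespace; any fixed UUID works as long as it never changes.
ACCOUNT_NAMESPACE = uuid.uuid5(uuid.NAMESPACE_DNS, "accounts.example.com")


def global_account_id(region: str, account_number: str) -> str:
    """Derive a stable, globally unique key from a region-scoped account number."""
    name = f"{region.strip().upper()}:{account_number.strip()}"
    return str(uuid.uuid5(ACCOUNT_NAMESPACE, name))
```

Because the identifier is computed from its inputs, every pipeline that sees the same region and account number produces the same key without a central lookup, while the same account number in two regions maps to two different keys.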

In summary

To conclude, an Event-Driven Book of Reference facilitates modernization. It serves as the facade in the Strangler pattern, decoupling consuming applications from the systems of record and providing the coexistence layer that enables full core replacement. It also provides a foundation for adopting the CQRS pattern, where reads are based on industry-aligned data models and writes are based on the systems of record.

This decoupling provides a lasting foundation for future growth. Several of our clients have implemented it, and it has proven to be a successful pattern for modernization.

This article originally appeared on Medium and is republished with permission.

About the authors

Shahir A. Daya is an IBM Distinguished Engineer and Business Transformation Services Chief Architect in IBM Consulting in Canada, where he is responsible for overall solution design and technical feasibility for client transformation programs. He is an IBM Senior Certified Architect and an Open Group Distinguished Chief/Lead IT Architect with more than twenty-five years at IBM. Shahir has received the prestigious IBM Corporate Award for exceptional technical accomplishments. He has experience with complex high-volume transactional applications and systems integration. Shahir has led teams of practitioners to help IBM and its clients with application architecture for several years. His industry experience includes banking, financial services, and travel & transportation. Shahir focuses on cloud-native architecture and modern SW engineering practices for high performing development teams.

He has supported the design and stand-up of several joint clients and IBM delivery centers adopting modern SW engineering practices to deliver modernization programs. Shahir is also responsible for defining the sectors' technical strategy relating to asset development/harvesting, new technical areas of focus, and thought leadership for the specific technology area. He provides technical oversight and direction on critical projects.

Shahir holds a B.A.Sc. in Computer Engineering from the University of Toronto and has co-authored 3 IBM Redbooks on Microservices and Hybrid Cloud Integration. He is an inventor with several issued U.S. Patents. Shahir is a member of the Institute of Electrical and Electronics Engineers (IEEE) and the Association for Computing Machinery (ACM). Shahir is an active mentor in the University of Toronto Engineering Alumni Mentorship Program and the University of Toronto Women in Science and Engineering (WISE) Mentorship Program. He is also a coach for the FIRST LEGO Robotics Jr. League.

You can follow Shahir on Twitter and Medium.

Mehryar is an Application Architect and Managing Consultant at IBM Consulting in Canada. He has over three years of experience writing software and leading engineering teams in building data platforms. His main area of expertise is Streaming Systems and Event-Driven Architecture. Mehryar is an alumnus from the University of British Columbia, where he studied Computer Science and Economics.
