Data is needed at every level of enterprise architecture—from planning to implementation—yet data manipulation and management are advanced skills that take years to master. Not everyone who works with data has the time or capability to learn how to do complex queries on a database or to understand how to join database schemas to work with aggregated data. These are tasks that require specialized skills and tools.

However, many data management tasks can be done using commodity desktop applications that you work with daily. These applications allow you to access, organize, and manipulate data in sophisticated and surprisingly easy ways.

Here's a case in point.

I used to have to run a query every day for a project our department was monitoring. I got tired of the daily tedium that went with pulling this data. This work was ripe for automation. All I needed was an excellent tool to help me out.

Google Workspace came to the top of my mind because it's easily accessible, always online, and already used by the enterprise. Also, it's secure. I was drowning in Google Docs, so I knew adoption would be easy. Once I looked into the backend capabilities, I was pleasantly surprised by how robust this everyday tool could be for enterprise automation.

Google has created a language called Apps Script that gives power and functionality to the Google Workspace Platform. I read some documentation, slapped together some code, and said goodbye to my daily manual query. Mission accomplished!

Here's how I built my automated data flow using Google Workspace.

[ Do you have tips and tricks that help you do your job better? Consider sharing them as everyone benefits when enterprise architects write about their jobs. ]

Automating the task

My main challenge in implementing an automated solution was reporting. My strategy was to use Google Sheets as my presentation layer. I'd connect my Google Sheets frontend to a database on the backend by creating some custom code in Apps Script to do the data binding, as shown below.

To take full advantage of the opportunity the Google Workspace tools provide, I needed to do three things:

- Identify my data source

- Make a connection

- Configure the triggers that would update the frontend daily

Here's how I did these tasks.

Find the data source

I needed to identify my data source and connect my Google Sheets frontend to it to automate reporting effectively. Regarding the data source, I gave some thought to my data requirements and the questions I was trying to answer. Once I had a clear idea about these prerequisites, I could choose a data source. I can utilize databases and APIs as some relevant data sources. I decided to take a document-centric approach and store my data in a NoSQL database for this purpose.

Once I decided on my data source, I needed to determine how to connect it from the Google Sheets frontend.

Make the connection!

There are a ton of different ways you can connect your data to Google Sheets. There are also many open source packages you can use to help automatically feed data into a Google Sheets Workspace. LodashGS is one of the open source packages I often use with Apps Script.

To create the Apps Script, I used the Script editor, which is found in the Google Sheets menu under the Tools menu.

I added the code that queries my NoSQL database into the Script editor:

function updateSheet(){

// Get a reference to the spreadsheet to update

var sheet = SpreadsheetApp.getActive().getSheetByName("Sheet1")

// Get the data from the datasource by providing a URL to the database

// and the authentication token

var getData = UrlFetchApp.fetch(URL+ {'headers': {'Authorization': 'Basic TOKEN'}

// Convert the raw data to a JSON object

var jsonObj = JSON.parse(getData)

// Traverse the JSON object and set the values to the firstName and

// lastName value to the first and second columns in the spreadsheet

for(var i=0;i<jsonObj.length;i++){

sheet.getRange(i+2, 1).setValue(jsonObj[i].firstName)

sheet.getRange(i+2, 2).setValue(jsonObj[i].lastName)

}

}

After coding the connection to the data source, I needed to set some triggers.

Set the triggers

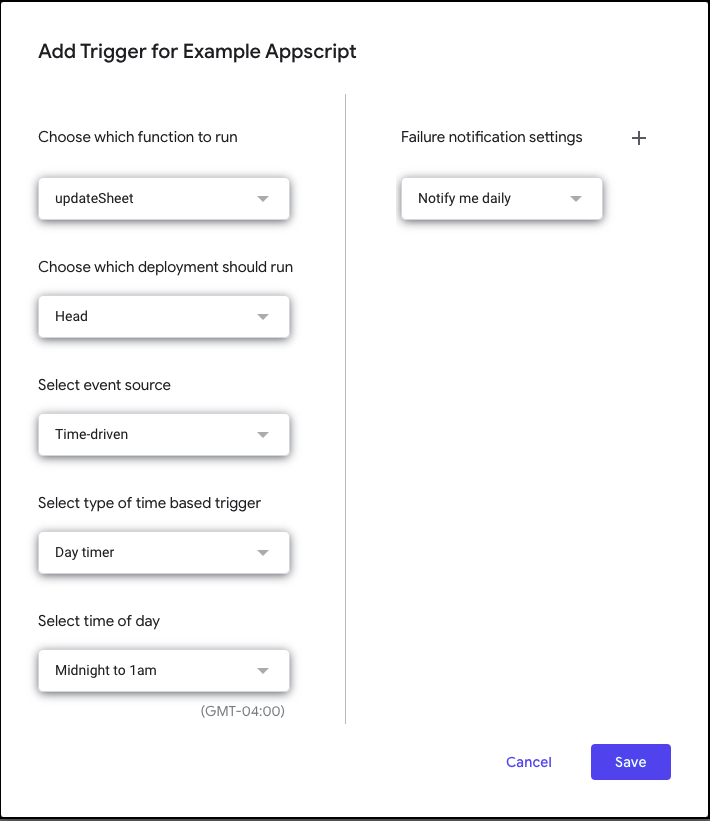

A key aspect of automating my reporting chores is setting triggers to respond to activities within my data source. Triggers allow me to avoid manually interacting with the application process directly. Since I want to update my frontend daily, I decided to write a trigger that executes every day at a set time. It turns out I could write that trigger in Google Sheets. Notice in the screen below that the trigger defined in the user interface dialog sets the trigger to use a Day timer to fire the trigger between midnight and 1 AM.

Now the trigger is in charge of executing my Apps Script every day. No more manual intervention.

You'll probably have a different set of events you'll want to respond to. For example, you might want to update your viewing frontend when there's a change in the data store. In this case, you'll write a trigger to execute when the state of your data store changes.

The important thing to understand is that using triggers will go a long way in your automation process using Google Sheets.

So, once the data store is identified, the connection logic is written in Apps Script, and the trigger code is created in Google Sheets, you're good to go in terms of viewing your data.

[ Download this free guide from Red Hat: 5 steps to automate your business. ]

Viewing your data

Structuring how data is presented in Google Sheets is a matter of programming. Code in my Apps Script creates the columns and rows needed to present the underlying data accurately. In the Apps Script above, there are comments that point out in the code how to format the Google Sheets' columns and rows according to the structure of data in the NoSQL database.

Getting the hang of formatting columns and rows in Google Sheets using Apps Script can take a bit of time for those new to the technique. But, given the benefits of automation, acquiring some expertise with Apps Script is time well spent.

Commodity-based automation

Data management is and will be a critical and costly aspect of enterprise architecture. Any measures that you can take to simplify working with data are advantageous, both operationally and financially. Fortunately, technology is constantly evolving, and everyday tools such as Google Workspace can support enterprise automation. You'd be surprised by the number of commodity applications you can adapt to do significant work in large-scale enterprise architectures. Taking the time to think about the tasks you do continually and finding a commodity-based automation solution that works for you will make implementing your enterprise architecture a more efficient, cost-effective undertaking.

About the author

Jesse is a Learning Data Architect and Analyst for Red Hat devoted to data-driven learning. He specializes in providing insight into database storage, engineering, and utilization methods. He also creates automated and secure solutions to provide operational reporting that extends into deeper analytics.

Jesse currently resides in Raleigh, NC where, when he's not massaging data, he's trying a new coffee or chilling with his pup, Kobe.

You can connect with Jesse on LinkedIn.

More like this

Build security into ITOps from the start with automation

Managing IT Operations when AI outpaces your patching cycle

Operating System Management | Compiler

Technically Speaking | Taming AI agents with observability

Browse by channel

Automation

The latest on IT automation for tech, teams, and environments

Artificial intelligence

Updates on the platforms that free customers to run AI workloads anywhere

Open hybrid cloud

Explore how we build a more flexible future with hybrid cloud

Security

The latest on how we reduce risks across environments and technologies

Edge computing

Updates on the platforms that simplify operations at the edge

Infrastructure

The latest on the world’s leading enterprise Linux platform

Applications

Inside our solutions to the toughest application challenges

Virtualization

The future of enterprise virtualization for your workloads on-premise or across clouds