TLDR;-)

In the previous blog, we saw how Ingress Controllers can be used to enable public access to private OpenShift clusters deployed with the IPI methodology. Today, we’re covering one of OpenShift’s managed offerings, Azure Red Hat OpenShift (ARO), to see how we can enable the same type of scenario and where it differs.

Why a Managed OpenShift solution?

Back in March 2021, Red Hat launched Red Hat OpenShift Service on AWS (ROSA for short). This was another milestone for the managed OpenShift offerings available to our customers.

ROSA now complements the Azure Red Hat OpenShift (ARO) and OpenShift Dedicated (OSD) managed offerings. These three services are managed directly by a group of specialists from Red Hat known as Site Reliability Engineers, or SREs (see this excellent blog on the SRE team).

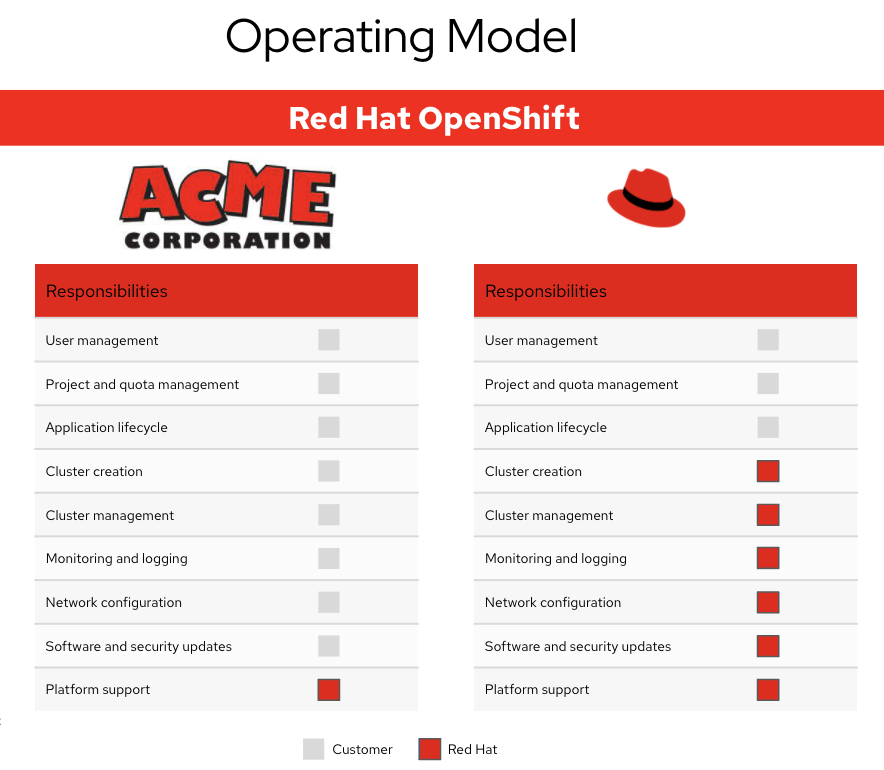

The benefits of having a managed environment are many. The following figure illustrates how much involvement is required when moving from managing an environment on your own (the left column, which is like the IPI install we demonstrated in the previous blog) to having it managed by a dedicated group of specialists (the right column).

Now, even though a managed capability comes with lots of benefits, there are still some specific components and design decisions that customers will need to be mindful of when using a managed environment.

What are the different Managed OpenShift solutions?

The Red Hat managed OpenShift offerings are effectively tied to the cloud providers’ capabilities (e.g. AWS, Azure and GCP). For instance:

- OpenShift Dedicated (OSD) - Red Hat OpenShift Dedicated is a fully managed service available on Amazon Web Services (AWS) and Google Cloud. The deployment of the OSD cluster is handled by the Red Hat SRE team, either within the customer’s cloud account or within a dedicated AWS or Google Cloud account.

- Microsoft Azure Red Hat OpenShift (ARO) - Microsoft Azure Red Hat OpenShift provides a model that allows Red Hat to manage clusters deployed into a customer’s existing Azure account. The deployment of the ARO cluster is handled by the customer via CLI within their specified Azure account.

- Red Hat OpenShift Service on AWS (ROSA) - Red Hat OpenShift Service on AWS provides a model that allows Red Hat to manage clusters deployed into a customer’s existing Amazon Web Services (AWS) account. The deployment of the ROSA cluster is handled by the customer via CLI within their specified AWS account.

All right, so can I enable public access to an ARO private cluster?

The step-by-step guide for building an ARO private cluster is available here.

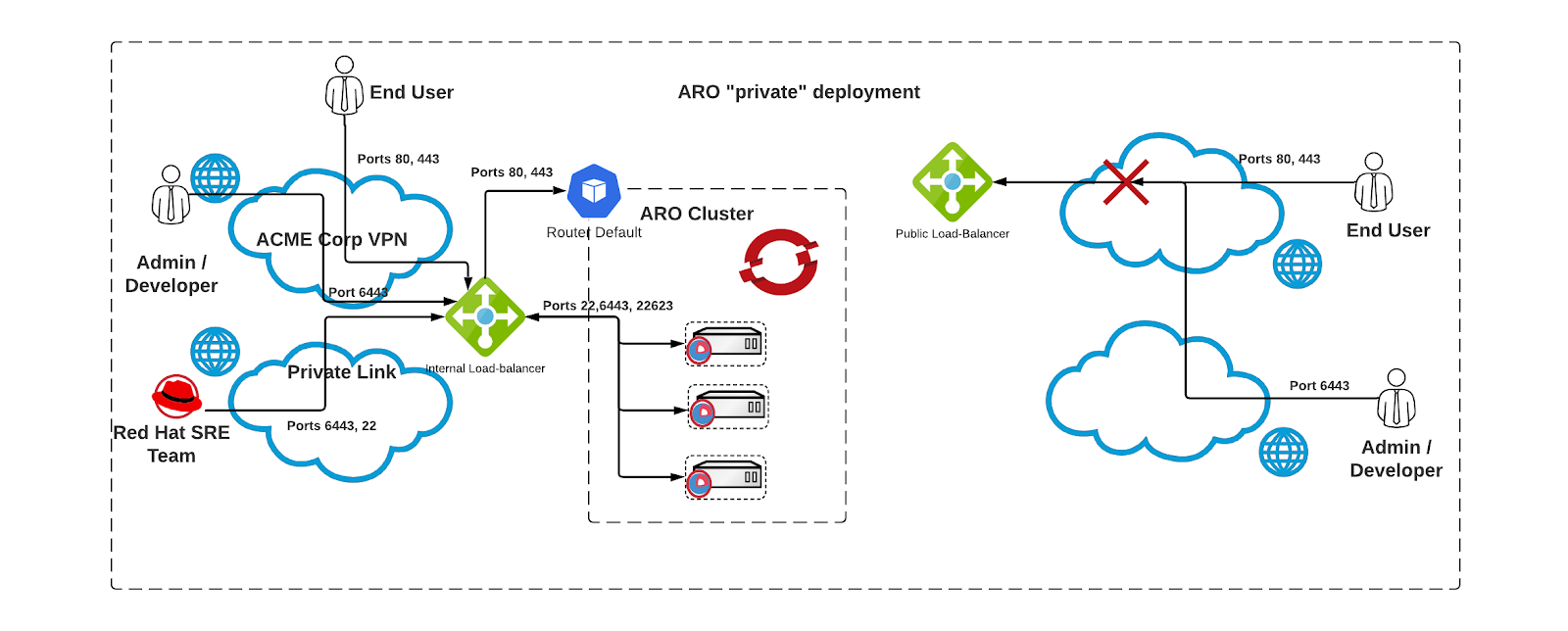

Compared to a standard IPI install, there are some subtle differences (essentially to cater for the SRE access needed to manage the platform). For a private ARO deployment, two load balancers are created: one internal and one external.

The SRE team gains access to the ARO cluster via the Internal Load-balancer through a private link. This is presented in the figure below.

Specifically for this blog, I have used the following parameters to create my ARO private cluster (note that apiserver-visibility and ingress-visibility are both set to Private).

simon@Azure:~$ LOCATION=australiaeast

simon@Azure:~$ CLUSTER=simon-private-aro

simon@Azure:~$ RESOURCEGROUP="v5-$LOCATION"

simon@Azure:~$ az aro create --resource-group $RESOURCEGROUP --name $CLUSTER --vnet aro-private-vnet --master-subnet master-subnet --worker-subnet worker-subnet --apiserver-visibility Private --ingress-visibility Private --pull-secret @/home/simon/secret.txt

As usual, to get access to this cluster, I will use a jumphost in Azure (e.g. one sitting in the same private network as my ARO cluster).

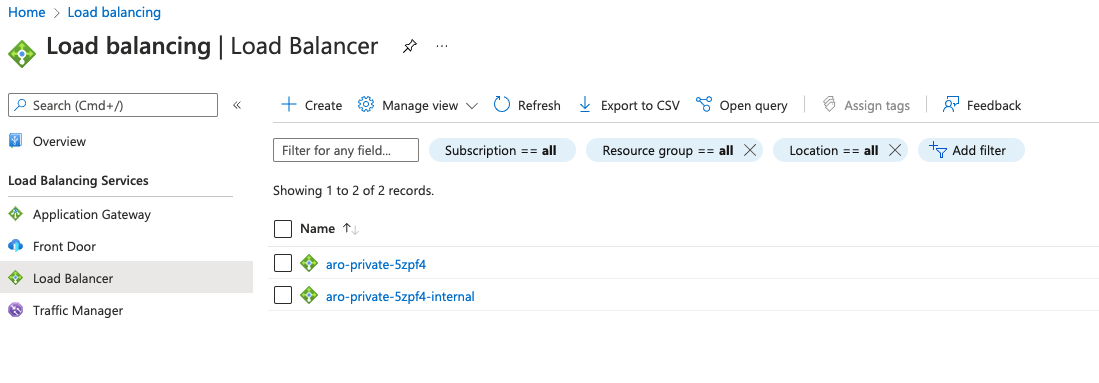

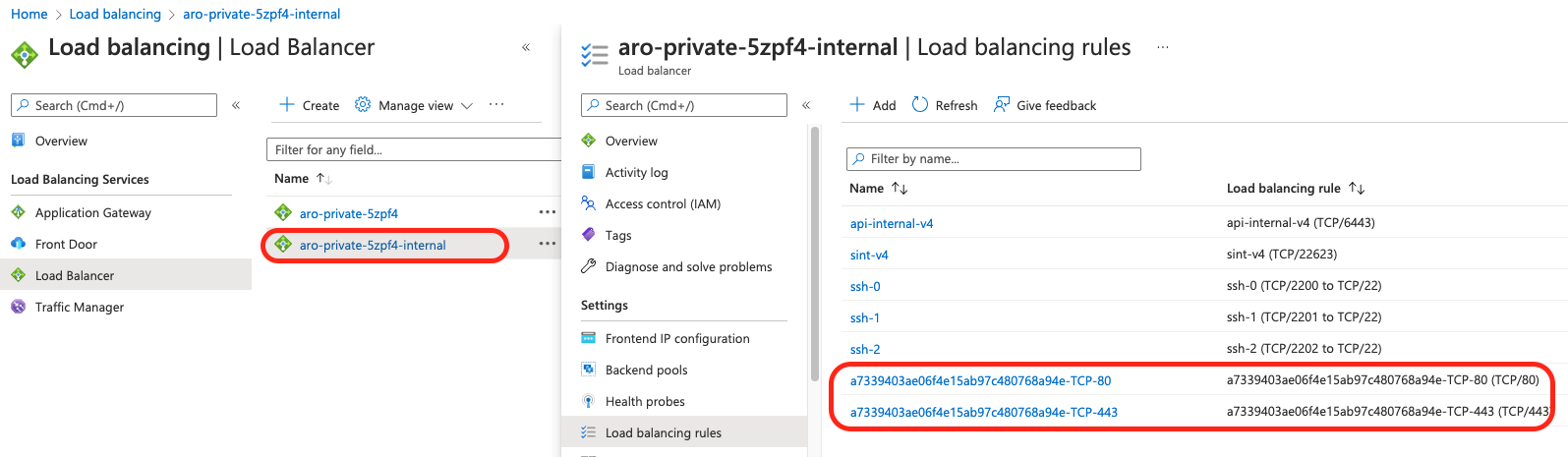

I am including here a few screenshots showing the load-balancers and the associated ports used.

We now need to get the credentials for this ARO cluster.

az aro list-credentials --name $CLUSTER --resource-group $RESOURCEGROUP

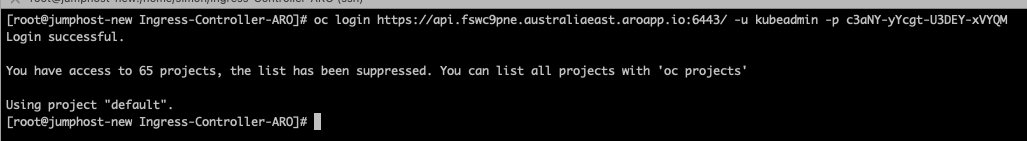

Then log in from the jumphost to the ARO cluster.

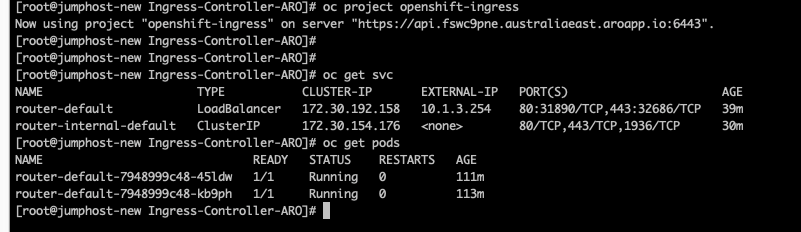
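The login step can be sketched as a small shell function. This is only an illustration, assuming the CLUSTER and RESOURCEGROUP variables set earlier, that both az and oc are installed on the jumphost, and that the kubeadmin account is used; the function name aro_login is hypothetical.

```shell
# Sketch: log in to the private ARO cluster from the jumphost.
# Assumes CLUSTER and RESOURCEGROUP are already set, as earlier in this post.
aro_login() {
  local api_url password
  # URL of the cluster's API server
  api_url=$(az aro show --name "$CLUSTER" --resource-group "$RESOURCEGROUP" \
    --query apiserverProfile.url --output tsv) || return 1
  # kubeadmin password, as returned by list-credentials
  password=$(az aro list-credentials --name "$CLUSTER" --resource-group "$RESOURCEGROUP" \
    --query kubeadminPassword --output tsv) || return 1
  oc login "$api_url" --username kubeadmin --password "$password"
}
```

Running aro_login on the jumphost should leave oc pointing at the private cluster. From outside the private network the same call is expected to fail, since the API server is not publicly reachable.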

You can check that the default ingress controller is configured properly.
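One possible way to run that check from the jumphost is sketched below. It assumes the default names used by the ingress operator (an IngressController called default and a service called router-default); the function name show_default_ingress is hypothetical.

```shell
# Sketch: inspect the default IngressController and the router service behind it.
show_default_ingress() {
  # Publishing strategy reported by the ingress operator (type and load balancer scope)
  oc get ingresscontroller default --namespace openshift-ingress-operator \
    --output jsonpath='{.status.endpointPublishingStrategy}{"\n"}'
  # Router service created for the default IngressController
  oc get service router-default --namespace openshift-ingress
}
```

On a cluster created with ingress-visibility set to Private, one would expect the reported scope to be internal and the service to expose a private IP address.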

We will now deploy the same sample application we have used in the previous blog and create a route for it.

oc new-project demo

oc new-app https://github.com/sclorg/django-ex.git

oc expose service django-ex

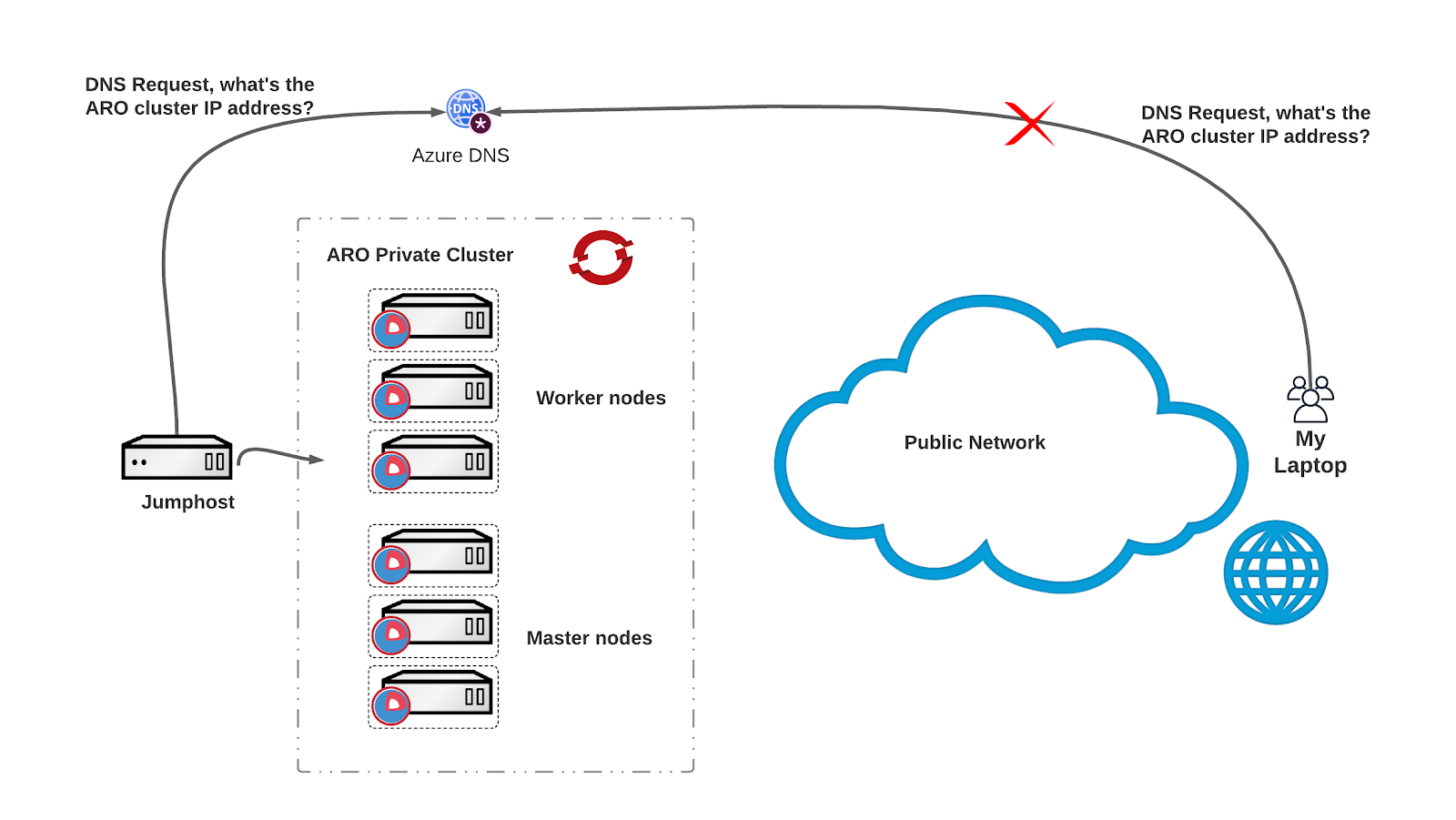

We can now check that the application is reachable from the jumphost but not from the outside world (e.g. my laptop). To fully understand the setup, review the following diagram:

We are now going to deploy another ingress controller. I’m reusing the same YAML file that I shared in the previous blog (see here).

Following the successful deployment of the ingress controller, we can see that two new pods (router-ingress-external) and an associated service (router-ingress-external) have been deployed in the openshift-ingress namespace. Notice that the External-IP address of the router-ingress-external service matches the IP address of the load balancer.
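The YAML file itself is not reproduced in this post. As a rough sketch only, an external IngressController could look like the manifest below. The name ingress-external is inferred from the router-ingress-external pods, and the domain from the DNS entry used in this setup; the type: public route label is purely an assumption for illustration and may differ from the original file.

```shell
# Sketch: write an external IngressController manifest to a local file.
cat > ingress-external.yaml <<'EOF'
apiVersion: operator.openshift.io/v1
kind: IngressController
metadata:
  name: ingress-external              # results in router-ingress-external pods and service
  namespace: openshift-ingress-operator
spec:
  domain: simon-public.melbourneopenshift.com
  endpointPublishingStrategy:
    type: LoadBalancerService
    loadBalancer:
      scope: External                 # request a public load balancer
  routeSelector:
    matchLabels:
      type: public                    # assumed label: only routes carrying it are served
EOF
# Then, from the jumphost and once logged in:
#   oc apply -f ingress-external.yaml
```

The routeSelector keeps the external router from picking up every route in the cluster, so only routes that opt in through the label become public.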
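To read that address without going through the console, something like the following could be used. It is a sketch assuming the service is named router-ingress-external as above; the function name external_router_ip is hypothetical.

```shell
# Sketch: print the public IP assigned to the external router service.
external_router_ip() {
  oc get service router-ingress-external --namespace openshift-ingress \
    --output jsonpath='{.status.loadBalancer.ingress[0].ip}'
}
```

The value printed is the one the DNS entry for the public domain needs to point to.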

We now simply need to point the DNS entry for the globally routable domain to the IP address of the load-balancer. In my setup I have reused AWS Route53 and the same domain name (*.simon-public.melbourneopenshift.com) but there’s nothing stopping you from using Azure DNS services or any other DNS provider.

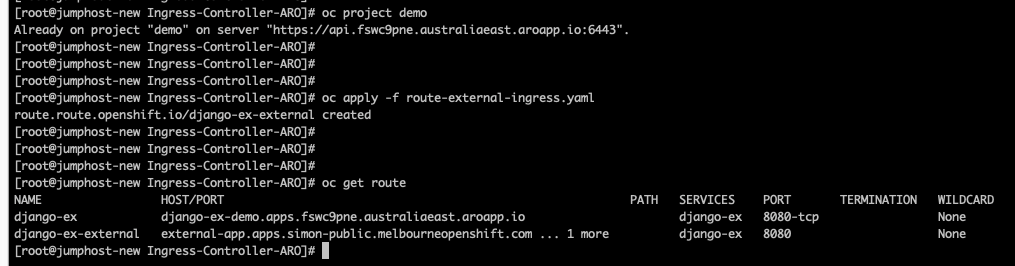

Let’s now deploy the new route for the application associated with the new Ingress-controller, and again, I am using the same file from the previous blog. And as before, let’s try to browse to this endpoint.
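That route file is not shown in this post either. A minimal sketch of what such a route might contain follows, assuming the external ingress controller selects routes by a label such as type: public. Both the label and the hostname are hypothetical; only the route name, the service name and the demo project come from this walkthrough.

```shell
# Sketch: write a route manifest intended for the external ingress controller.
cat > django-ex-external.yaml <<'EOF'
apiVersion: route.openshift.io/v1
kind: Route
metadata:
  name: django-ex-external
  namespace: demo
  labels:
    type: public                                       # assumed label used for route selection
spec:
  host: django-ex.simon-public.melbourneopenshift.com  # hypothetical hostname under the public domain
  to:
    kind: Service
    name: django-ex
EOF
# Then, from the jumphost:
#   oc apply -f django-ex-external.yaml
```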

And there you go again! As you can see in the screenshot, the django-ex-external route is now associated with the external ingress controller, exposing the application on our private cluster to the world!
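The same check can be made from a terminal instead of a browser. The helper below is a sketch; probe_host is a hypothetical name, and the hostname passed to it is whatever host the route was given.

```shell
# Sketch: print the HTTP status code returned by a route host.
# curl reports 000 when no HTTP response is received (e.g. timeout or no route to host).
probe_host() {
  curl --silent --output /dev/null --max-time 10 \
    --write-out '%{http_code}\n' "http://$1/"
}
```

Called from a laptop with the external route's hostname, a 200 would indicate that the application is reachable through the public load balancer. Called with the hostname of the original internal route, it is expected to fail or time out from outside the private network.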

What’s next?

In this blog, we’ve looked at how Ingress Controllers can be used to expose applications to the external world for a private ARO deployment. What’s interesting is that the same method and YAML files have been used for all implementations!

Which, to me, is just another reason it’s super cool to use OpenShift…

About the author

Simon Delord is a Solution Architect at Red Hat. He works with enterprises on their container/Kubernetes practices and driving business value from open source technology. The majority of his time is spent on introducing OpenShift Container Platform (OCP) to teams and helping break down silos to create cultures of collaboration. Prior to Red Hat, Simon worked with many Telco carriers and vendors in Europe and APAC specializing in networking, data-centres and hybrid cloud architectures. Simon is also a regular speaker at public conferences and has co-authored multiple RFCs in the IETF and other standard bodies.