Rationale

The premise behind GitOps is to use Git as the source of truth for infrastructure and application configuration, taking advantage of Git workflows, while relying on automation that realizes the configuration described in Git repositories (GitOps operators, when deploying to Kubernetes).

That said, both infrastructure and application configurations require access to sensitive assets, most commonly called secrets (e.g., authentication tokens and private keys), to operate correctly, access data, or otherwise communicate securely with third-party systems.

However, storing confidential data in Git is a security vulnerability and should not be allowed, even when the Git repository is private and implements access controls to limit its audience. Once a secret has been pushed to Git in clear text (or in an easily reversible form), it must be considered compromised and should be revoked immediately.

So, how can we overcome this limitation and give users and customers implementing GitOps mechanisms to provision secrets to their applications without compromising confidentiality? Several approaches and open source projects in the Kubernetes ecosystem address these challenges, each in its own way. This document provides an overview of several popular options in this space.

Secrets management methodologies

There are two main architectural approaches when it comes to managing secrets in GitOps:

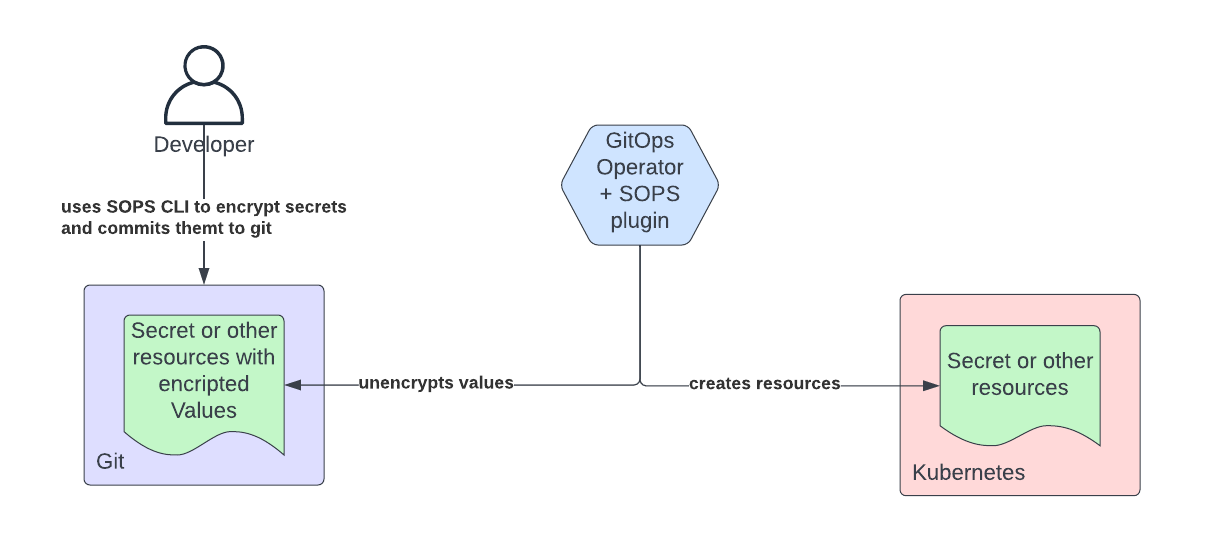

- Encrypted secrets are stored in Git repositories, and automation decrypts them and renders them as Kubernetes Secrets.

- References to secrets are stored in Git repositories, and automation retrieves the actual secrets based on these references and renders them as Kubernetes Secrets.

Encrypted Secrets in Git

There are at least two popular open source projects that chose to follow the methodology of storing encrypted secrets in Git: Bitnami’s Sealed Secrets and Mozilla's SOPS.

The following considerations apply to both projects:

- Plain-text secrets are handled by humans, who encrypt them and store them in Git.

- The identity of the Git committer of the encrypted secrets can be easily tracked down by reviewing the commit logs in Git. This information can be used to mount social engineering attacks.

- If an encryption key is compromised, it can be hard to track down and revoke all of the secrets that were encrypted with it, as these secrets can be spread across a large number of repositories.

Other, less popular projects that follow the same approach are Kamus and Tesoro (from the Kapitan framework). We will not examine them in detail, as they don’t add much to the discussion.

Bitnami Sealed Secrets

The Sealed Secrets project uses public-key cryptography to encrypt secrets while remaining rather easy to use.

Sealed Secrets consists of two main components:

- A Kubernetes controller that holds the private and public keys used to decrypt and encrypt secrets and is responsible for reconciliation.

- A simple CLI (kubeseal) that is used by developers to encrypt their secrets before committing them to a Git repository.

Sealed Secrets introduces a new custom resource (SealedSecret) to the Kubernetes API. The controller watches for changes to these custom resources, decrypts the encrypted secrets within the SealedSecret resource and reconciles the outcome to a Kubernetes Secret. The private keys used for decrypting the secrets are stored in etcd as Kubernetes Secrets.
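The reconciliation step can be pictured as a function that maps a SealedSecret manifest to a Secret manifest. The Python sketch below is illustrative only and is not the controller's code: `reconcile_sealed_secret` and the `decrypt(ciphertext, label)` callable are hypothetical stand-ins for the controller's private-key decryption, and passing `namespace/name` as a label is an assumption used to model the namespace scoping discussed in this section.

```python
import base64
from typing import Callable, Dict

# Hypothetical decryptor: decrypt(ciphertext, label) -> plaintext bytes.
Decryptor = Callable[[bytes, bytes], bytes]


def reconcile_sealed_secret(sealed: dict, decrypt: Decryptor) -> dict:
    """Render a Secret manifest from a SealedSecret-like manifest (sketch)."""
    meta = sealed["metadata"]
    name, namespace = meta["name"], meta["namespace"]
    # Assumed label tying the ciphertext to its destination; a decryptor that
    # checks it will reject an object moved to another namespace or name.
    label = f"{namespace}/{name}".encode()

    data: Dict[str, str] = {}
    for key, encoded in sealed["spec"]["encryptedData"].items():
        plaintext = decrypt(base64.b64decode(encoded), label)
        # Secret.data values are base64-encoded strings.
        data[key] = base64.b64encode(plaintext).decode()

    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {"name": name, "namespace": namespace},
        "type": "Opaque",
        "data": data,
    }
```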

The kubeseal CLI facilitates the encryption process: it lets the developer take a normal Kubernetes Secret resource and convert it into a SealedSecret resource definition. When the developer can connect to the control plane of the Kubernetes cluster, the public key required for encryption can be fetched automatically from the controller running in the cluster. Otherwise, the user has to provide the public key as input.

The Sealed Secrets controller supports automatic rotation of the private key, as well as expiry of previously used keys to enforce the re-encryption of secrets. In addition, a random nonce is used when encrypting the data, which makes reverse engineering through brute force (i.e., matching ciphertext to plain text) very expensive.

However convenient this method seems, it carries the risk of the original Secret resource being accidentally checked into Git. The original Secret resource needs to remain available to the developer, whether to make modifications (e.g., adding a new value or changing an existing one) or simply to view a previously encrypted value. This risk can be partially mitigated with proactive measures, such as pre-commit hooks on the Git repository that reject Kubernetes Secrets being checked in.
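A pre-commit check of this kind can be very small. The sketch below assumes the hook runner (for example, the pre-commit framework) passes the staged file paths as arguments; `contains_plain_secret` and `main` are hypothetical names, and the line-based regex only catches top-level `kind: Secret` declarations in YAML files, so treat it as a safety net rather than a guarantee.

```python
import re
import sys
from pathlib import Path
from typing import List

# A top-level "kind: Secret" line, optionally quoted and followed by a comment.
# "kind: SealedSecret" or "kind: ExternalSecret" do not match.
_KIND_SECRET = re.compile(r"^kind:\s*[\"']?Secret[\"']?\s*(#.*)?$", re.MULTILINE)


def contains_plain_secret(text: str) -> bool:
    """Return True if any YAML document in text declares kind: Secret."""
    return _KIND_SECRET.search(text) is not None


def main(paths: List[str]) -> int:
    """Report offending files; a non-zero return value should abort the commit."""
    offenders = [
        p for p in paths
        if p.endswith((".yaml", ".yml"))
        and contains_plain_secret(Path(p).read_text(encoding="utf-8"))
    ]
    for p in offenders:
        print(f"refusing to commit a plain Kubernetes Secret: {p}", file=sys.stderr)
    return 1 if offenders else 0
```

A hook wrapper would call `sys.exit(main(sys.argv[1:]))` so that a non-zero status aborts the commit. Note that the sketch reads files from the working tree, which can differ from what is actually staged.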

Furthermore, if the private key that resides in the cluster is lost (through accidental removal or in a disaster) and there is no backup of it, all secrets have to be re-encrypted with the public key of the new key pair and then committed again to every Git repository that contains them.

The controller supports multi-tenancy at the namespace level, in that the destination namespace must be specified explicitly: a SealedSecret that was encrypted for namespace A cannot be decrypted when placed in namespace B, and vice versa. However, a single controller key pair is used for every namespace, so tenant isolation ultimately depends on the controller and on how well its private key is protected, rather than on separate per-tenant keys.

The Sealed Secrets approach also does not scale well as the number of clusters increases. Each cluster has its own Sealed Secrets controller and private keys, so if the same secret needs to be deployed to multiple clusters, a separate SealedSecret object must be created for each cluster, increasing the maintenance overhead and the complexity of the GitOps configuration.

Finally, a compromise of the private keys, whose security essentially depends on the cluster RBAC and on how well etcd is protected, extends beyond the cluster itself. Every Git repository containing secrets sealed with those keys must also be considered compromised, and, given the distributed nature of Git, those secrets may be hard to track down and revoke.

Mozilla SOPS

SOPS (Secrets OPerationS), or sops, is a special-purpose encryption and decryption tool that is neither scoped to Kubernetes Secret resources nor limited to Kubernetes at all. Multiple input formats are supported, including YAML, JSON, ENV, INI, and binary files.

SOPS also integrates with commonly used Key Management Systems (KMS), such as AWS KMS, GCP KMS, Azure Key Vault, and HashiCorp Vault. It’s worth noting that these key management systems are not used to store the secrets themselves; rather, they provide the encryption keys used to secure the data. If no such system is available, a PGP key pair can be used instead. This flexibility lets users evolve their setup as their requirements change and enables rapid adoption by developers. For example, PGP can be used in development environments while KMS is reserved for higher-level environments, without swapping out any of the underlying tooling.
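SOPS chooses which key to use from creation rules, typically kept in a `.sops.yaml` file, by matching each rule's path regular expression against the file being encrypted; the first matching rule wins. The Python sketch below models only that selection logic, under stated assumptions: the rules, paths, PGP fingerprint, and KMS ARN are hypothetical placeholders, and the encryption itself is still performed by SOPS.

```python
import re
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class CreationRule:
    path_regex: str
    provider: str  # "pgp" or "kms" in this sketch
    key: str       # PGP fingerprint or KMS key ARN (placeholders below)


# Hypothetical rules: PGP for development, a cloud KMS for production.
RULES: List[CreationRule] = [
    CreationRule(r".*/dev/.*", "pgp", "DEV-PGP-FINGERPRINT"),
    CreationRule(r".*/prod/.*", "kms", "arn:aws:kms:REGION:ACCOUNT:key/KEY-ID"),
]


def select_rule(path: str, rules: List[CreationRule] = RULES) -> Optional[CreationRule]:
    """Return the first rule whose regex matches the path (first match wins)."""
    for rule in rules:
        if re.search(rule.path_regex, path):
            return rule
    return None
```

Because rule order matters, a catch-all rule belongs at the end of the list; placed first, it would shadow every environment-specific rule.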

However, because SOPS is a command line tool, its usability in the Kubernetes-native world is limited. While there are plugins for tools such as Helm that decrypt values files (values.yaml) stored alongside a Helm chart before installation into a cluster, support in GitOps operators is still limited.

In the case of Argo CD, users have to build custom container images with SOPS baked in, and then use Helm or Kustomize extensions to perform the decryption.

Support for SOPS in Flux is more mature by comparison, as SOPS decryption can be configured directly in Flux’s manifests.

The sops-secrets-operator attempts to combine the concepts of SOPS and Sealed Secrets: it follows the Sealed Secrets workflow, but uses SOPS for encryption instead of its own encryption scheme.

The SOPS approach does not scale well as the number of teams and individuals grows. The fact that humans have to perform the encryption and manage PGP keys, or authenticate to other encryption services, is a weakness of this approach: ultimately, humans become the weakest link in the security chain. Integrating with a Key Management System is preferable to using PGP keys directly. While this does not eliminate the human factor or the need to interact directly with secrets, it at least removes the burden of managing the keys.

However, if an organization has access to a key management system, other, perhaps better, approaches are possible. Let’s take a look at them in the next section.

Reference to Secrets Stored in Git

In this approach, Git stores a manifest that references a secret held in a key management system. A GitOps operator deploys that manifest to the Kubernetes cluster, and a dedicated operator then retrieves the secret and applies it to the cluster as a Kubernetes Secret.
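The key property of this approach is that the manifest in Git carries only a lookup key, never the value. A minimal Python sketch, assuming a hypothetical manifest schema (`secretKey`/`remoteKey` entries) and an in-memory mapping standing in for the key management system:

```python
import base64
from typing import Mapping


def resolve_reference(manifest: dict, store: Mapping[str, str]) -> dict:
    """Render a Secret from a reference-only manifest (hypothetical schema)."""
    data = {}
    for item in manifest["spec"]["data"]:
        # Git holds only the remote key; the value is looked up at runtime.
        value = store[item["remoteKey"]]
        data[item["secretKey"]] = base64.b64encode(value.encode("utf-8")).decode("ascii")
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {
            "name": manifest["metadata"]["name"],
            "namespace": manifest["metadata"]["namespace"],
        },
        "type": "Opaque",
        "data": data,
    }
```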

Two main projects implement this approach: ExternalSecrets and the Kubernetes Secrets Store CSI Driver.

Both projects require a key management system. This is typically an enterprise-level system that manages secrets for the entire organization and offers capabilities beyond simply distributing encryption keys.

ExternalSecrets

The ExternalSecrets project was initially developed by GoDaddy to enable the secure use of external secret management systems, such as HashiCorp Vault, AWS Secrets Manager, Azure Key Vault, Alibaba KMS, and GCP Secret Manager, within Kubernetes. Thanks to community contributions and an easy-to-contribute plugin model, the number of key management systems supported by the project continues to grow.

ExternalSecrets is a Kubernetes controller that reconciles Secrets into the cluster from a Custom Resource (ExternalSecret) that includes a reference to a secret in an external key management system. The Custom Resource specifies the backend containing the confidential data and, by defining a template, how that data should be transformed into a Secret. The template can include dynamic elements (in lodash format) and can be used to add labels or annotations to the final Secret resource, or to mutate some of the data after it has been loaded from the backend store.
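The templating step can be sketched as follows. This is a hypothetical illustration rather than the project's API: `render_secret` and the template layout are invented for the example, and Python's `string.Template` (`$name` placeholders) is swapped in for the lodash templates the project uses.

```python
from string import Template
from typing import Dict


def render_secret(name: str, fetched: Dict[str, str], template: dict) -> dict:
    """Apply a template to values fetched from a backend (sketch).

    string.Template stands in for lodash templates; a missing value
    raises KeyError instead of producing a half-rendered Secret.
    """
    meta = template.get("metadata", {})
    string_data = {
        key: Template(fmt).substitute(fetched)
        for key, fmt in template.get("stringData", {}).items()
    }
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {
            "name": name,
            # Labels and annotations are copied onto the final Secret.
            "labels": dict(meta.get("labels", {})),
            "annotations": dict(meta.get("annotations", {})),
        },
        # stringData is the plain-text counterpart of Secret.data.
        "stringData": string_data,
    }
```

Here the template combines two backend values into one connection string, which is the kind of data mutation the paragraph above describes.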

The ExternalSecrets resources themselves can be safely stored in Git, as they do not contain any confidential information.

ExternalSecrets itself does not perform any cryptographic operations, such as encrypting or decrypting secrets, and fully relies on the security of the Secret Store backends.

Support for multi-tenancy in this operator has matured over time, with successive approaches to ensure that tenants are isolated from one another and cannot retrieve each other's secrets. The most recent and most mature approach uses a namespace-scoped SecretStore resource with namespace-local credentials, so that each tenant uses different credentials to authenticate to the key management system.
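The isolation property of namespace-scoped stores is that store resolution always includes the requesting namespace. The sketch below models this with hypothetical `SecretStore` and `StoreRegistry` classes; they only borrow the resource name and are not the project's actual types.

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass(frozen=True)
class SecretStore:
    namespace: str
    name: str
    credential: str  # namespace-local credential for the backend


class StoreRegistry:
    """Resolve store references strictly within the requesting namespace."""

    def __init__(self) -> None:
        self._stores: Dict[Tuple[str, str], SecretStore] = {}

    def add(self, store: SecretStore) -> None:
        self._stores[(store.namespace, store.name)] = store

    def credential_for(self, namespace: str, store_name: str) -> str:
        # The lookup key includes the caller's namespace, so a tenant can
        # never resolve a store (or credential) defined in another namespace.
        store = self._stores.get((namespace, store_name))
        if store is None:
            raise PermissionError(f"no SecretStore {store_name!r} in {namespace!r}")
        return store.credential
```

Two tenants can each define a store with the same name and still receive their own credential.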

It’s worth noting that there is limited support for secret renewal in the ExternalSecrets project. This feature is needed when the secrets returned by the key management system are short-lived and require rotation.
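When a backend issues short-lived secrets, a controller has to decide when to fetch a fresh value. One common policy, assumed here purely for illustration, is to refresh after a fixed fraction of the lifetime has elapsed rather than at expiry; `next_refresh` is a hypothetical helper, not part of ExternalSecrets.

```python
from datetime import datetime, timedelta


def next_refresh(issued_at: datetime, ttl: timedelta, fraction: float = 2 / 3) -> datetime:
    """Return when to re-fetch a short-lived secret (hypothetical policy)."""
    if not 0 < fraction < 1:
        raise ValueError("fraction must be strictly between 0 and 1")
    # Refreshing before expiry leaves headroom for a slow or briefly
    # unavailable backend.
    return issued_at + ttl * fraction
```

For a one-hour lease with `fraction=0.5`, the next fetch is due 30 minutes after issuance.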

Kubernetes Secrets Store CSI driver

The Secrets Store CSI driver is another project that aims to bring secrets from external secret stores into a Kubernetes cluster. Supported backends include HashiCorp Vault, Azure Key Vault, and GCP Secret Manager. Additional backends can be developed externally and integrated as so-called “providers” following a plugin model. Unlike the ExternalSecrets project, the Secrets Store CSI driver (as the name suggests) is not implemented as a controller that reconciles the data into Secret resources; instead, it uses a separate volume, mounted into a Kubernetes pod, to hold the secrets. This makes adoption more complex, if not impossible, for use cases in which secrets are not consumed directly by pods.
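From the application's point of view, consuming secrets through the CSI driver means reading files from a mounted volume instead of reading environment variables or a Secret. A minimal Python sketch, assuming a hypothetical mount path of `/mnt/secrets-store` (the real path is whatever the pod's `volumeMounts` entry declares) and one file per secret object:

```python
from pathlib import Path

# Hypothetical mount path; use the mountPath declared in the pod spec.
DEFAULT_MOUNT = Path("/mnt/secrets-store")


def read_mounted_secret(name: str, mount: Path = DEFAULT_MOUNT) -> str:
    """Read one secret exposed as a file in the CSI volume."""
    # Reading the file on every call (instead of caching it at startup)
    # lets the application pick up new content if the file is updated.
    return (mount / name).read_text(encoding="utf-8").strip()
```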

Although the Secrets Store CSI driver does provide optional features that synchronize content to a Secret resource in Kubernetes, because it is a CSI implementation, the driver and the Secrets it creates are ultimately bound to a workload. Another limiting factor is that, if the workloads are removed, the optionally created Secret is removed as well.

At the moment, there is limited support for rotating secrets, as this capability is still in alpha status and not supported by all providers.

Similar to ExternalSecrets, the Secrets Store CSI driver does not perform any cryptographic operations by itself but rather leverages backend systems to securely encrypt and store secret data.

The providers used to connect to the backend systems are developed and maintained by external third parties. While this approach facilitates the development of new providers, it also raises questions about code security, maturity, and compatibility. However, the Secrets Store CSI driver defines rules and patterns to follow when developing a provider, and the risk seems negligible. That said, when adopting a provider, it is important to understand how it handles multi-tenancy, as some providers use a single shared credential to access secrets for all tenants.

Overall, the objective of the Secrets Store CSI driver project is not entirely clear. On the one hand, it seems to subscribe to the idea that Kubernetes Secrets should not be used and that secrets fetched from a key management system should instead be mounted into pods as temporary in-memory volumes. Yet it also includes the capability to create Kubernetes Secrets, ultimately exposing secret information via etcd. On the other hand, if we accept the risk of exposing secret information via etcd, the project does not cover the use case of a Kubernetes Secret that is not mounted into a pod.

Conclusion

We have seen two architectural approaches to managing secrets with GitOps: storing encrypted secrets in Git and storing references to secrets in Git. The latter approach appears more promising, as it can scale across teams, people, and clusters without compromising security. The former approach is perhaps suitable when an enterprise key management system is not available, or when one is at the beginning of a GitOps journey. The challenge with this approach is that it may not scale to the size of an entire enterprise.

Within the “reference to secrets stored in Git” approach, if your goal is a solid way to create Kubernetes Secrets, the ExternalSecrets project is a good fit. If your goal is to feed secrets to a pod, the Secrets Store CSI driver is preferable, as it does not require the creation of a Kubernetes Secret within the cluster. However, in the latter case, there is also the option of using a sidecar to fetch and refresh secrets from the Key Management System (several Key Management Systems offer a container image for this purpose).
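The core of such a sidecar is a loop that fetches the current value and rewrites a file on a volume shared with the application container. The sketch below shows one iteration; `fetch` is a hypothetical callable standing in for the Key Management System client, and vendor-provided sidecar images implement their own, more complete logic.

```python
import os
import tempfile
from pathlib import Path
from typing import Callable


def refresh_once(fetch: Callable[[], str], target: Path) -> None:
    """Fetch the current secret and atomically replace the shared file."""
    value = fetch()
    # Write to a temporary file in the same directory, then rename it over the
    # target, so the application never reads a partially written secret.
    # mkstemp creates the file readable and writable only by the current user.
    fd, tmp_path = tempfile.mkstemp(dir=target.parent, prefix=".tmp-")
    try:
        with os.fdopen(fd, "w", encoding="utf-8") as handle:
            handle.write(value)
        os.replace(tmp_path, target)
    except BaseException:
        os.unlink(tmp_path)
        raise
```

A sidecar would call `refresh_once` in a loop with a sleep between iterations; the write-then-rename pattern relies on `os.replace` being atomic when source and target are on the same file system.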

As discussed previously, avoiding the creation of Kubernetes Secrets relieves some of the need to secure etcd, but it is (at this point in the Kubernetes project) almost impossible to achieve, as too many core features and add-ons rely on the existence of Kubernetes Secrets. Realistically, then, one has to strike a balance between the secrets that can be reflected as Kubernetes Secrets and those that are consumed directly by pods.

Additionally, if our objective is to manage all of our IT configuration with a GitOps approach, this should also apply to the Key Management System. Operators such as ExternalSecrets do not solve the challenges involved in managing secrets; they just move them up a level, from Secret creation in Kubernetes to secret creation in the Key Management System.

To break this recursive loop, the Key Management System must be able to generate secrets dynamically, in coordination with the endpoint that needs to authenticate the credentials. This removes the need to feed externally generated secrets, which often end up being handled by humans, into the Key Management System. For example, a Key Management System can coordinate with a database to dynamically create narrowly scoped, short-lived database credentials. Implementations of this capability can be found in HashiCorp Vault (secrets engines) and Akeyless (dynamic secrets).
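To make the idea concrete, the sketch below shows what the Key Management System generates instead of a human: a unique user name, a random password, an expiration time, and the SQL statement that creates a narrowly scoped role. The statement follows the style of the PostgreSQL examples for Vault's database secrets engine (`{{name}}`, `{{password}}`, `{{expiration}}` placeholders); `issue_credentials` and `Lease` are hypothetical names, and a real engine would also execute the statement and revoke the role when the lease expires.

```python
import secrets
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional

# Creation statement in the style of Vault's PostgreSQL examples:
# a login role that expires, with read-only access to one schema.
CREATION_STATEMENT = (
    "CREATE ROLE \"{{name}}\" WITH LOGIN PASSWORD '{{password}}' "
    "VALID UNTIL '{{expiration}}'; "
    "GRANT SELECT ON ALL TABLES IN SCHEMA public TO \"{{name}}\";"
)


@dataclass
class Lease:
    username: str
    password: str
    expires_at: datetime
    statement: str


def issue_credentials(role: str, ttl: timedelta, now: Optional[datetime] = None) -> Lease:
    """Generate a unique, short-lived, narrowly scoped database credential."""
    now = now or datetime.now(timezone.utc)
    username = f"v-{role}-{secrets.token_hex(4)}"  # unique per request
    password = secrets.token_urlsafe(24)  # URL-safe alphabet, no SQL quotes
    expires_at = now + ttl
    statement = (
        CREATION_STATEMENT
        .replace("{{name}}", username)
        .replace("{{password}}", password)
        .replace("{{expiration}}", expires_at.strftime("%Y-%m-%d %H:%M:%S%z"))
    )
    return Lease(username, password, expires_at, statement)
```

No human ever sees or stores the password: it exists only in the generated statement and in the lease handed to the requesting workload.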

In conclusion, to achieve end-to-end GitOps-based secret management, we need a Key Management System that supports dynamic secrets and can itself be configured via GitOps. We have shown how this can be achieved with Vault in a separate article.

About the authors

Raffaele is a full-stack enterprise architect with 20+ years of experience. Raffaele started his career in Italy as a Java Architect, then gradually moved to Integration Architect and then Enterprise Architect. Later he moved to the United States, where he eventually became an OpenShift Architect for Red Hat consulting services, acquiring, in the process, knowledge of the infrastructure side of IT.

Raffaele currently holds a consulting position as a cross-portfolio application architect with a focus on OpenShift. For most of his career, Raffaele has worked with large financial institutions, which has allowed him to acquire an understanding of enterprise processes and of the security and compliance requirements of large enterprise customers.

Raffaele has become part of the CNCF TAG Storage and contributed to the Cloud Native Disaster Recovery whitepaper.

Recently, Raffaele has been focusing on how to improve the developer experience by implementing internal developer platforms (IDPs).
