TL;DR ;-)

In this blog, I will explain how Ingress Controllers can be used to enable public access to OpenShift private clusters, with a step-by-step guide to a request I often see from customers running OpenShift. Over a series of short blogs, I will present a few options that may help with these types of scenarios. In this first article, I cover some of the foundations we need, including DNS within a Kubernetes cluster and, importantly, how the Ingress Operator gets traffic into OpenShift clusters. Then, we will see how this works for a cluster deployed with the Installer Provisioned Infrastructure (IPI) method.

So, why enable public access to private clusters?

The use case is the following: my customers often deploy OpenShift as a private cluster. By “private”, I mean that the OpenShift cluster can only be accessed from an internal network and is neither visible nor reachable from the internet.

However, some of the applications on the cluster may also need to be exposed to users who are not on the company’s internal network, such as customers, remote workers, and partners.

All right, so how do we get external access to a “private” cluster?

One of the easiest ways to deploy OpenShift yourself is the IPI installation method. IPI uses a single configuration file (install-config.yaml) as its source of truth, which makes it easy to customize a complex deployment with a few lines of YAML.

If the target infrastructure is a public cloud provider (AWS, Azure, or Google Cloud), customers can also use managed OpenShift offerings, such as Red Hat OpenShift Service on AWS (ROSA), Azure Red Hat OpenShift (ARO), and OpenShift Dedicated (OSD), instead of managing OpenShift themselves. OSD, ROSA, and ARO will be covered in a different blog.

Throughout this blog, I will use AWS as the basis for the infrastructure, but the same approach can be followed with any other public cloud provider.

I will start with a private-cluster IPI install. The OpenShift documentation includes a section that covers deploying a private cluster on AWS using the IPI method.

For a detailed description of how to do it, you can refer to the following link. It is a step-by-step installation guide that shows you how to create a private cluster on AWS.
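For reference, here is a minimal sketch of what the install-config.yaml for such a private cluster could look like, using the cluster name, domain, and region from this post. The key setting is publish: Internal. The subnet IDs, pull secret, and SSH key are placeholders I made up; a real file also needs compute, controlPlane, and networking sections that match your VPC, and field names can differ between OpenShift releases, so check this against the documentation for your version.

```shell
#!/usr/bin/env bash
# Sketch only: writes an assumed minimal install-config.yaml for a private
# AWS IPI cluster. Subnet IDs, pull secret, and SSH key are placeholders.
set -euo pipefail

mkdir -p ipi-private
cat > ipi-private/install-config.yaml <<'EOF'
apiVersion: v1
baseDomain: privatesimon.com
metadata:
  name: ipi-private
platform:
  aws:
    region: eu-west-2
    subnets:              # existing private subnets, one per AZ (placeholders)
      - subnet-aaaaaaaa
      - subnet-bbbbbbbb
      - subnet-cccccccc
publish: Internal         # no public DNS records or internet-facing load balancers
pullSecret: '<your-pull-secret>'
sshKey: '<your-ssh-public-key>'
EOF

# Then, from a host with network access to the VPC (the installer consumes
# install-config.yaml, so keep a copy first):
# openshift-install create cluster --dir ipi-private
```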

Once this deployment has completed successfully, this is what the overall topology of a private cluster looks like:

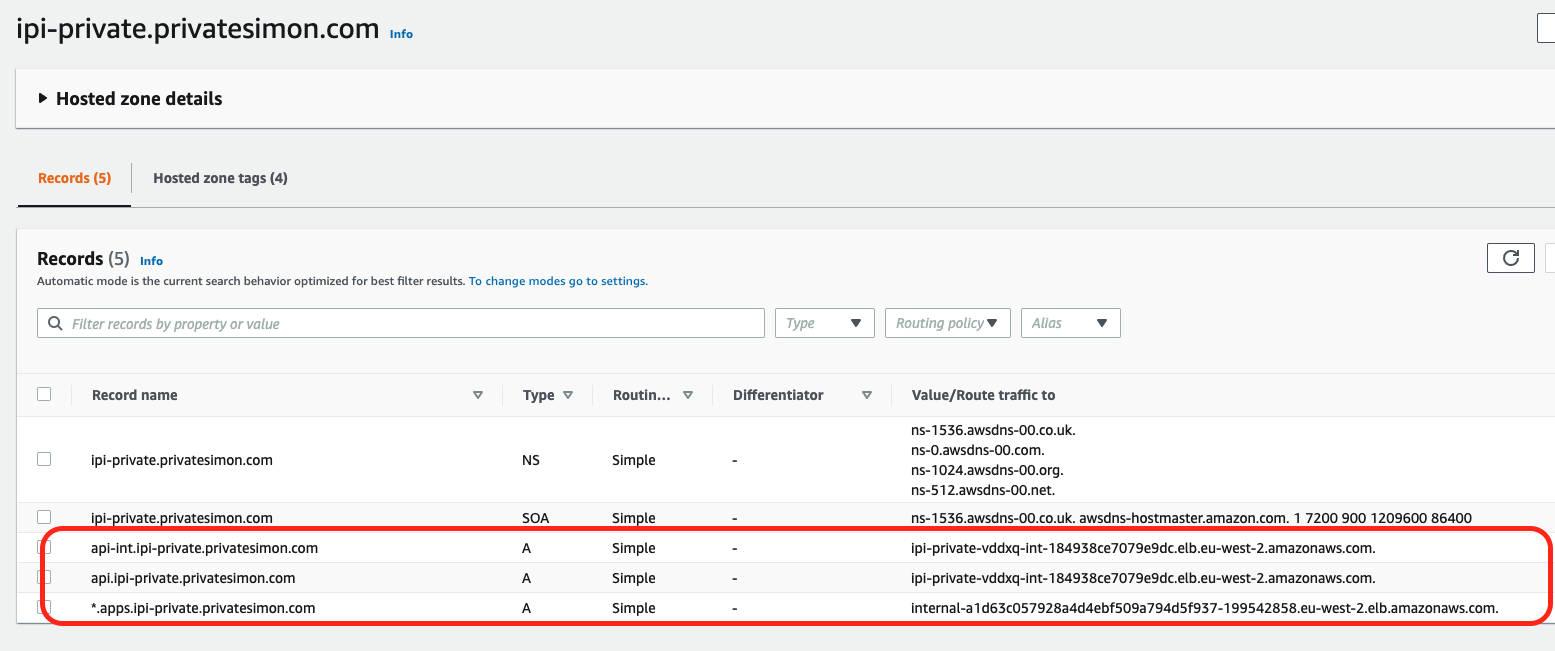

I did the installation in a multi-AZ AWS region (eu-west-2). My cluster is named ipi-private and the associated domain name is privatesimon.com.

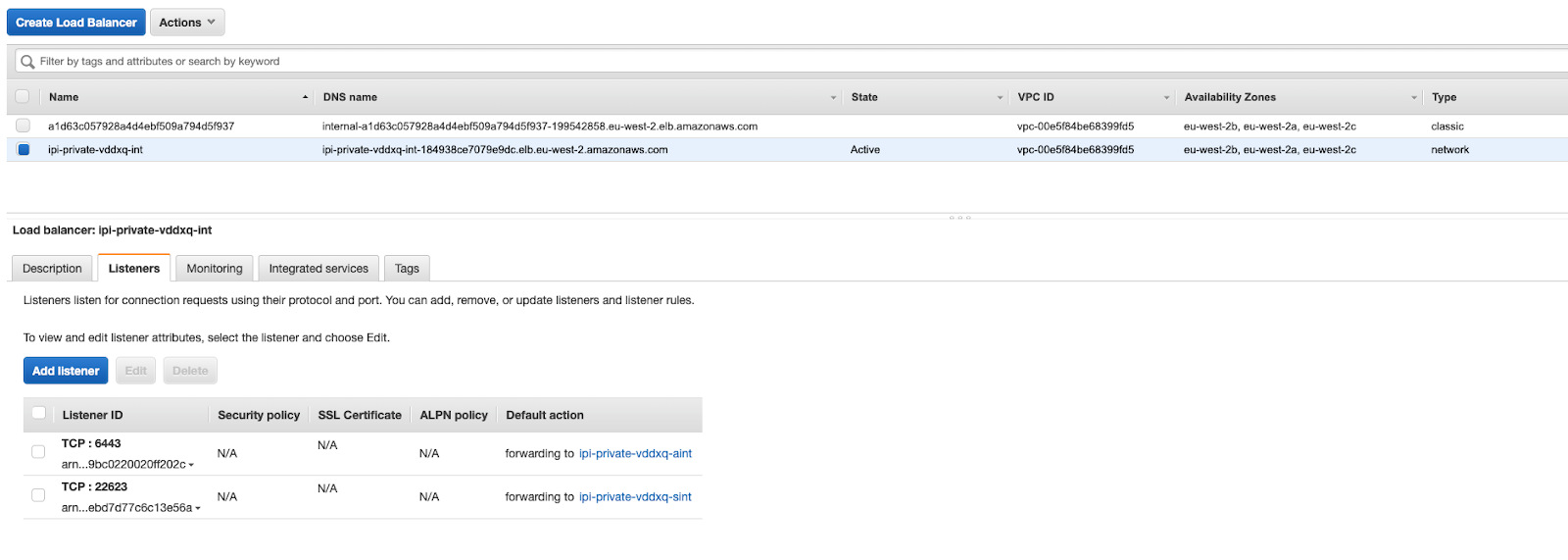

As the figure above shows, two load balancers have been created: one for ports 443 and 80 (that is, for accessing the applications), and the other for port 6443 (the OpenShift API server) and port 22623 (used by the Machine Config Server, part of the Machine Config Operator, to serve configuration in the form of Ignition files to master and worker nodes).
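To make the split between the two load balancers explicit, here is a toy bash lookup that mirrors the paragraph above. It only illustrates which load balancer handles which port on this cluster; it is not something OpenShift or AWS runs, and lb_for_port is a hypothetical name.

```shell
# Toy illustration (hypothetical helper): which of the two internal load
# balancers of this private cluster handles a given destination port.
lb_for_port() {
  case "$1" in
    80|443) echo "ingress load balancer (application routes)" ;;
    6443)   echo "API load balancer (OpenShift API server)" ;;
    22623)  echo "API load balancer (Machine Config Server)" ;;
    *)      echo "no listener for port $1" >&2; return 1 ;;
  esac
}

# Example: lb_for_port 443
```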

Here are screen captures of the load balancers that were created, as well as the DNS entries for this cluster:

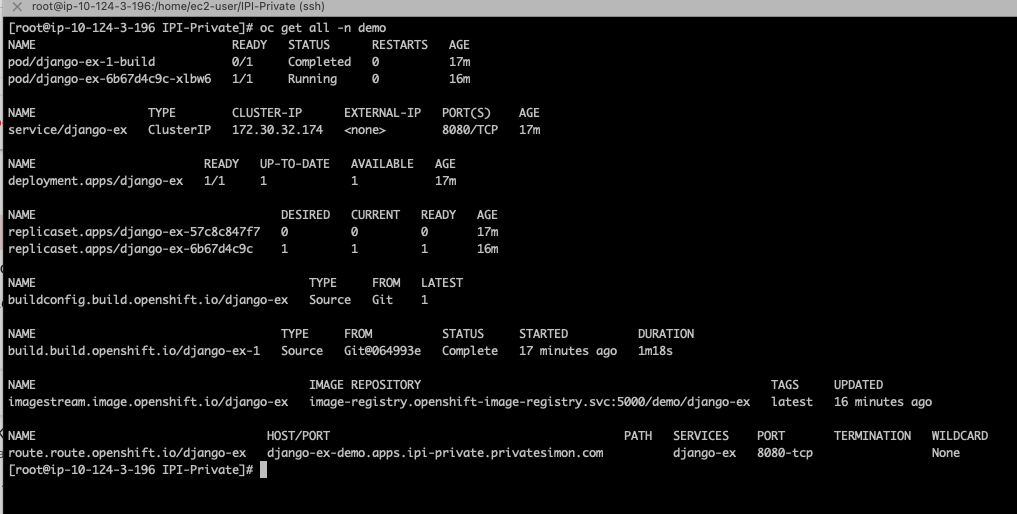

Now, let’s deploy a sample application on this cluster (taken from here):

```shell
oc new-project demo
oc new-app https://github.com/sclorg/django-ex.git
```

Wait a bit and then check that the application has deployed properly:

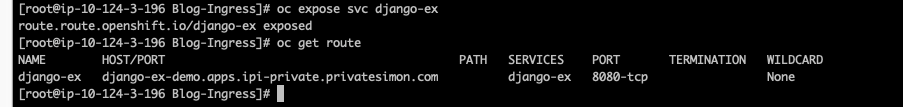

I will now use the OpenShift CLI command “oc expose” to give this application a route that is reachable from the internal network.

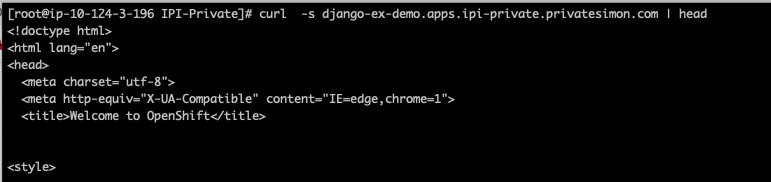
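The “oc expose” step can be reproduced with the commands below, wrapped in a hypothetical helper function so they can be pasted as-is. I am assuming the defaults: the route takes the service name (django-ex), and its host follows the <route>-<namespace>.apps.<cluster>.<baseDomain> pattern, which here would give django-ex-demo.apps.ipi-private.privatesimon.com.

```shell
# Hypothetical helper; assumes `oc` is logged in to the private cluster.
expose_demo_app() {
  # Create a route for the django-ex service (the route gets the same name).
  oc expose service/django-ex -n demo
  # Print the hostname that the default Ingress Controller assigned to it.
  oc get route django-ex -n demo -o jsonpath='{.spec.host}{"\n"}'
}
```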

To reach the application from the internal network, I am using a jump host that sits in the same private environment as my OpenShift cluster:

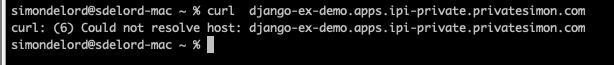

Remember that this URL is completely unreachable from the outside world. This is what I get when I try to reach it from my laptop (that is, from the internet):

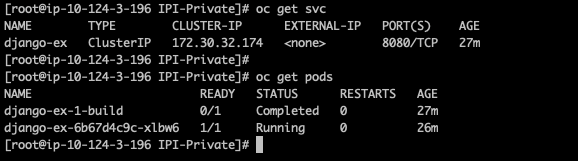
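To repeat the reachability check from both the jump host and the laptop, a small curl-based helper (probe_route, a name I made up) can report the result. The hostname in the usage comment is the assumed default route host for this cluster. From outside, I would expect curl exit code 6 (could not resolve host), since the name is only published in the private DNS zone; exit code 7 or 28 would instead point at a network path problem.

```shell
# Hypothetical helper: any HTTP response counts as "reachable", because curl
# without --fail returns 0 even for 4xx/5xx answers.
probe_route() {
  local url="$1" rc=0
  curl --silent --output /dev/null --max-time 5 "$url" || rc=$?
  if [ "$rc" -eq 0 ]; then
    echo "reachable: $url"
  else
    # 6 = could not resolve host, 7 = failed to connect, 28 = timed out
    echo "unreachable: $url (curl exit code $rc)"
  fi
}

# Usage (assumed default route host):
# probe_route "http://django-ex-demo.apps.ipi-private.privatesimon.com"
```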

Because we will need it later, let’s also get the service in this namespace and its ports (that is, the port to which traffic is sent to the pods).

As you can see, there is one service (called django-ex), and it sends traffic to the associated pod on port 8080.
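The service and its ports can be listed with oc and a JSONPath template. show_app_service_ports is a hypothetical wrapper, and the one-line-per-port output format is my own choice.

```shell
# Hypothetical helper; assumes `oc` is logged in to the cluster.
show_app_service_ports() {
  # Prints "<port name>: <service port> -> <targetPort on the pod>" per port.
  oc get service django-ex -n demo \
    -o jsonpath='{range .spec.ports[*]}{.name}{": "}{.port}{" -> "}{.targetPort}{"\n"}{end}'
}
```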

Now, to expose applications running on the cluster to the outside world (that is, the internet), two main components need to be in place:

- DNS: a private cluster does not need a globally resolvable domain name (that is, one that resolves from outside the private network), but for our application to be reachable from the internet, we will need to create one.

- A way of getting traffic into the cluster. Let’s dive a bit deeper into how OpenShift does this.
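One way to see where the cluster’s DNS records live is the cluster-wide DNS configuration resource (dns.config.openshift.io, instance cluster), which holds the base domain and the private and public hosted zones used by the Ingress Operator. My assumption is that on a cluster installed with publish: Internal, publicZone is empty, which is consistent with nothing resolving from the internet; verify this on your own cluster. The helper name is hypothetical.

```shell
# Hypothetical helper; assumes `oc` is logged in with cluster-admin rights.
show_cluster_dns_zones() {
  local field
  for field in baseDomain privateZone publicZone; do
    # Assumption: an empty publicZone suggests no public DNS records are managed.
    printf '%-12s %s\n' "$field:" \
      "$(oc get dns.config.openshift.io cluster -o jsonpath="{.spec.$field}")"
  done
}
```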

How does traffic get into a “private” cluster in the first place?

OpenShift uses the Ingress Operator (see here) as the component responsible for enabling external access to the cluster. By “external”, we mean from outside the Kubernetes cluster itself (in this case, the OpenShift cluster).

Behind the scenes, the Ingress Operator deploys and manages one or more HAProxy-based Ingress Controllers to handle routing.

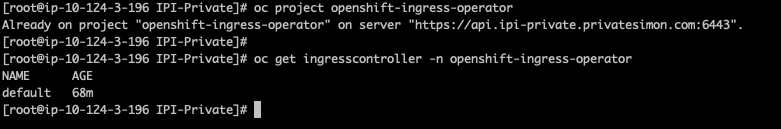

By default, the operator creates an IngressController named “default” (in the openshift-ingress-operator namespace):

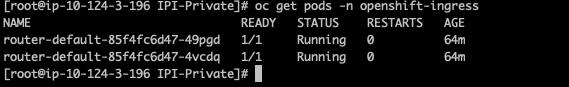
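The IngressControllers and the way “default” is published can be inspected with the commands below (show_ingress_controllers is a hypothetical wrapper). On a private AWS cluster I would expect a LoadBalancerService strategy with an Internal scope, but treat that as an assumption to verify.

```shell
# Hypothetical helper; assumes `oc` is logged in with cluster-admin rights.
show_ingress_controllers() {
  # List the IngressControllers managed by the Ingress Operator.
  oc get ingresscontroller -n openshift-ingress-operator
  # Show how "default" publishes its endpoints (type and load balancer scope).
  oc get ingresscontroller default -n openshift-ingress-operator \
    -o jsonpath='{.status.endpointPublishingStrategy}{"\n"}'
}
```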

This Ingress Controller creates two pods running HAProxy in the openshift-ingress namespace. Notice that the pod names start with “router-default”, after the name of the Ingress Controller (default):

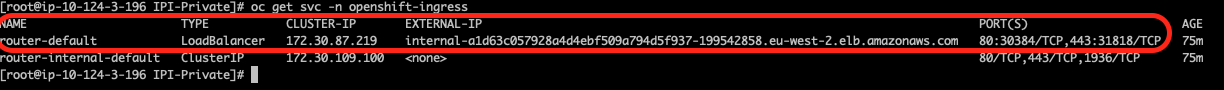
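The router pods and the deployment that owns them can be listed as follows (show_router_pods is a hypothetical wrapper). The deployment name follows the router-<IngressController name> convention that is visible in the pod names.

```shell
# Hypothetical helper; assumes `oc` is logged in to the cluster.
show_router_pods() {
  # Router pods, with the nodes they run on.
  oc get pods -n openshift-ingress -o wide
  # The deployment behind them, named after the "default" IngressController.
  oc get deployment router-default -n openshift-ingress
}
```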

Among the services associated with those two pods, the one we are interested in is “router-default”. Its external IP is internal-a1d….elb.amazonaws.com (that is, the load balancer we saw in a previous step), and it maps ports 80 and 443 to NodePorts 30384 and 31818 respectively (compare with the screen capture of the internal-a1d….elb.amazonaws.com load balancer and the port numbers of its listeners). NodePorts are described in the Kubernetes documentation here:

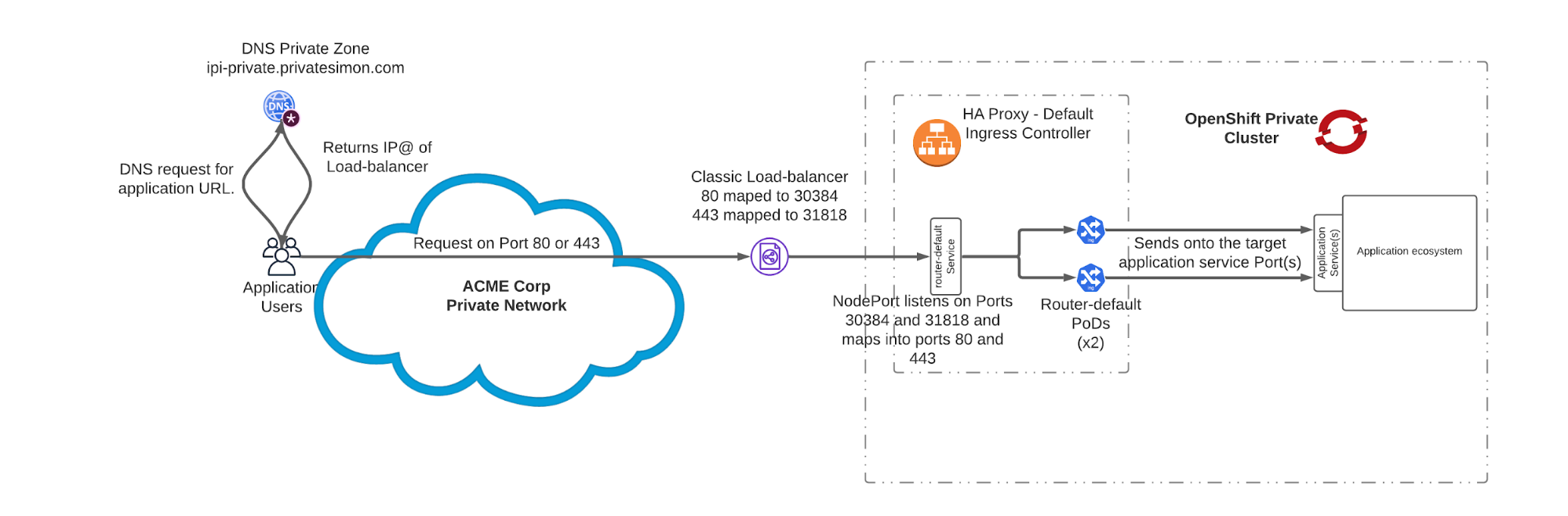
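The same information can be pulled from the router-default service with JSONPath (show_router_service is a hypothetical wrapper). Keep in mind that NodePort numbers are allocated per cluster, so 30384 and 31818 are specific to this one.

```shell
# Hypothetical helper; assumes `oc` is logged in to the cluster.
show_router_service() {
  local svc=(service router-default -n openshift-ingress)
  # Service type and the load balancer hostname it is published on.
  oc get "${svc[@]}" \
    -o jsonpath='{.spec.type}{" "}{.status.loadBalancer.ingress[0].hostname}{"\n"}'
  # One line per port: name, service port, and the NodePort allocated to it.
  oc get "${svc[@]}" \
    -o jsonpath='{range .spec.ports[*]}{.name}{": "}{.port}{" -> nodePort "}{.nodePort}{"\n"}{end}'
}
```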

When a user sends a request on port 80 or 443 to an application URL, this is what happens:

1. DNS resolves the hostname in the URL to the IP address of the load balancer.
2. The load balancer receives the packet on port 80 or 443 and forwards it to a worker node on port 30384 (HTTP) or 31818 (HTTPS).
3. Because the router-default service uses NodePorts, every node listens on the same port numbers and proxies that traffic into the router-default service.
4. The router-default service forwards the packet to one of the two router-default pods on port 80 or 443.
5. The router-default pod (HAProxy) then forwards the request to the application the user is trying to reach, using the endpoints (pods) behind that application’s service.
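To tie the steps together, here is a toy bash model of the request path described above. It only prints the hops using this cluster’s port numbers and does no real networking; trace_request and the variable names are hypothetical.

```shell
# Toy model (bash 4+): traces the path of a request to the demo app using the
# port numbers seen on this particular cluster. It does no real networking.
declare -A NODEPORT_FOR_LB_PORT=([80]=30384 [443]=31818)  # from router-default
APP_TARGET_PORT=8080                                      # from django-ex service

trace_request() {
  local scheme="$1" lb_port node_port
  case "$scheme" in
    http)  lb_port=80 ;;
    https) lb_port=443 ;;
    *)     echo "unsupported scheme: $scheme" >&2; return 1 ;;
  esac
  node_port="${NODEPORT_FOR_LB_PORT[$lb_port]}"
  echo "1. DNS: application hostname -> internal load balancer IP"
  echo "2. load balancer :$lb_port -> worker node :$node_port"
  echo "3. NodePort :$node_port (open on every node) -> service router-default"
  echo "4. service router-default -> router-default pod :$lb_port"
  echo "5. HAProxy -> django-ex pod :$APP_TARGET_PORT (via service endpoints)"
}

# Example: trace_request https
```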

The diagram below shows how this works:

What’s next?

In this blog, we have looked at how Ingress Controllers work. This is required to understand what we are about to do for IPI, ARO, and ROSA environments, which we will cover in the subsequent blogs.

I would also like to thank my colleagues Mark Whithouse, August Simonelli, Adam Goossens, and Shane Boulden for their feedback and suggestions with this series of blogs.

About the author

Simon Delord is a Solution Architect at Red Hat. He works with enterprises on their container/Kubernetes practices and driving business value from open source technology. The majority of his time is spent on introducing OpenShift Container Platform (OCP) to teams and helping break down silos to create cultures of collaboration. Prior to Red Hat, Simon worked with many Telco carriers and vendors in Europe and APAC specializing in networking, data-centres and hybrid cloud architectures. Simon is also a regular speaker at public conferences and has co-authored multiple RFCs in the IETF and other standard bodies.
