Application modernization is the process of refactoring legacy applications and systems to drive faster time to market and to improve application performance and scalability. There are multiple modernization strategies, and the one you select depends on how much change your organization needs. Each strategy requires a different level of involvement from the IT team, as well as access to a modern application development platform that provides a variety of modern tools, technologies and frameworks.

Most modernization efforts today center on migrating monolithic applications to cloud-native microservices applications that can support open collaboration between IT teams, automated application deployment and life-cycle management. Modern application development platforms, like Red Hat OpenShift, were designed to deliver a more consistent experience for building, deploying and running applications across the hybrid cloud. However, other technologies and application services are needed to facilitate the development process. This post goes over a few considerations to keep in mind when modernizing applications.

Application modernization and microservices communication

With the rise of microservices, and the success many organizations have had building new applications with them, application modernization has taken a different direction. It now calls for a methodical approach to transforming existing applications into a more modular and granular architecture.

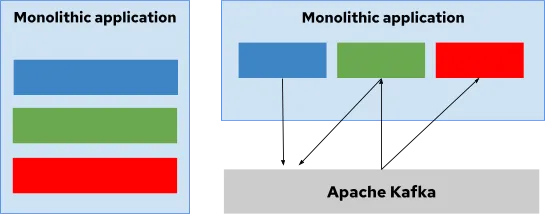

One method is to decompose monolithic applications into independent, loosely coupled microservices that talk to each other through a defined communication layer. This communication layer could be based on HTTP, messaging or event-driven communication. Organizations could also choose to use all three, depending on their business requirements.

Apache Kafka has become a common option for message-driven communication between microservices. Apache Kafka is a distributed stream-processing platform that uses the publish/subscribe method to move data between microservices, cloud-native or traditional applications and other systems. This technology differentiates itself from others by its ability to send and receive messages at a very fast rate, scale horizontally as the number of requests increases and retain data even after messages have been received.
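To make the publish/subscribe and retention ideas concrete, here is a minimal in-memory sketch. It is not the Kafka API: `ToyTopic`, the group names and the message strings are all hypothetical, and it assumes a single partition with no persistence. It only illustrates that messages stay in the log after being read and that each consumer group tracks its own position.

```python
from collections import defaultdict


class ToyTopic:
    """In-memory stand-in for a single-partition topic (illustrative, not the Kafka API)."""

    def __init__(self):
        self._log = []                     # append-only list of messages
        self._offsets = defaultdict(int)   # next offset to read, per consumer group

    def publish(self, message):
        """Append a message and return its offset in the log."""
        self._log.append(message)
        return len(self._log) - 1

    def poll(self, group, max_messages=10):
        """Return unread messages for a group and advance only that group's offset."""
        start = self._offsets[group]
        batch = self._log[start:start + max_messages]
        self._offsets[group] = start + len(batch)
        return batch


topic = ToyTopic()
topic.publish("order-created:1001")
topic.publish("order-created:1002")

billing_batch = topic.poll("billing")     # billing reads the first two messages
topic.publish("order-created:1003")
shipping_batch = topic.poll("shipping")   # shipping subscribes later and replays from the start
billing_next = topic.poll("billing")      # billing only receives what it has not read yet
```

Because reading does not remove anything from the log, a service added months into a modernization effort could, in principle, catch up on earlier messages without the publishers changing anything.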

For microservices communication, Apache Kafka provides a scalable and reliable method that supports scenarios where large amounts of data need to be moved from multiple data sources to microservices, or where near-real-time delivery of messages is required. Its broker architecture also keeps microservices loosely coupled and decentralized, and allows them to communicate asynchronously.
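The asynchronous, broker-mediated style described above can be sketched with the Python standard library. In this hedged example an in-process `queue.Queue` stands in for the broker, and `order_service` and `inventory_service` are hypothetical service names; a real deployment would use a Kafka client and a running cluster instead.

```python
import queue
import threading

broker = queue.Queue()   # stands in for the broker; in-process only, an assumption of this sketch
processed = []


def order_service():
    """Producer: emits events and returns without waiting for any consumer."""
    for order_id in (1, 2, 3):
        broker.put({"type": "order-created", "order_id": order_id})


def inventory_service():
    """Consumer: handles events at its own pace until it sees the stop marker."""
    while True:
        event = broker.get()
        if event is None:
            break
        processed.append(event["order_id"])


order_service()            # the producer finishes before any consumer exists
pending = broker.qsize()   # events wait in the broker in the meantime

worker = threading.Thread(target=inventory_service)
worker.start()
broker.put(None)           # stop marker so the sketch terminates
worker.join()
```

The producer never references the consumer directly; both only know about the broker. That is the property that lets one side be rewritten or scaled without touching the other.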

Apache Kafka is not only good for connecting legacy applications with other systems. It also helps teams build new applications faster, since developers can reuse streaming pipelines to communicate between microservices. This technology allows you to respond to modern requirements around digital experiences and streaming data.

About Red Hat OpenShift Streams for Apache Kafka

Red Hat has recently made available a fully managed Kafka service designed for IT development teams that want to incorporate streaming data into applications to deliver real-time experiences and improve application velocity. Red Hat OpenShift Streams for Apache Kafka allows development teams to keep the communication between microservices loosely coupled.

Red Hat OpenShift Streams for Apache Kafka provides a streamlined developer experience for building, deploying and scaling real-time applications in hybrid cloud environments. Because the service is fully managed, teams can focus on core competencies, accelerate application velocity and reduce operational cost.

To learn more, visit the Red Hat OpenShift Streams for Apache Kafka page. The "Understanding Kafka in the Enterprise" webinar series also covers application modernization and microservices communication.

About the author

Jennifer Vargas is a marketer, with previous experience in consulting and sales, who enjoys solving business and technical challenges that seem disconnected at first. For the last five years, she has worked at Red Hat as a product marketing manager supporting the launch of a new set of cloud services. Her areas of expertise are AI/ML, IoT, Integration and Mobile Solutions.
