In our last post we introduced Davie Street Enterprises (DSE), a fictional company at the start of its digital transformation. We'll be looking at some of their problems, and solutions, through a series of posts.

DSE, a leading provider of widgets, has many large manufacturing plants around the world. Traditionally, each plant’s local IT staff was in charge of its own operations, with little oversight from corporate IT. As the company grew, each plant became its own de-facto data center. Early attempts at connecting them over a wide area network (WAN) produced mixed results.

In one instance, an IP address overlap combined with a misconfigured load balancer caused an outage with an inventory tracking system. This took almost two days to resolve and cost the company more than $100,000 in lost revenue. As a result, the WAN deployment was delayed and eventually scaled back. This also contributed to delays in migrating services to the cloud.

During the root cause analysis of the outage, it was discovered that human error in a tracking spreadsheet led to the same IP address being allocated to two different systems. Like many companies, DSE was using spreadsheets to track and allocate IP addresses, a tedious and error-prone process. DSE soon realized that an automated system to track IP addresses was critical.

Incidents like this were not uncommon at DSE. The company also faced growing pressure from competitors who had fully embraced digital transformation and recently introduced new virtual widgets (VWs). Something needed to be done.

DSE was under pressure to reduce costs, improve operational efficiencies through technology and deliver on the promise of VWs. The company needed someone with the expertise to drive this effort, so it hired Daniel Mitchell as Chief Architect. One of his first tasks would be to lead DSE’s automation efforts as part of the overall digital transformation. DSE was already a customer of Red Hat and Daniel had collaborated with Red Hat on several occasions, so he hired us as consultants.

Gloria Fenderson, Sr. Manager of Network Engineering at DSE, was in charge of the original WAN migration project. She knew first-hand how manual changes and updates could lead to outages and was eager to embrace automation. When the head of IT operations, Ranbir Ahuja, asked her to work directly with Daniel on the automation plan, she jumped at the opportunity to help guide DSE’s digital transformation.

She worked with Mitchell to make sure any proposed solutions met the company’s needs. They met with department heads and front-line IT staff to formulate a plan. After some initial demos and internal conversations, they chose Red Hat Ansible Automation Platform as DSE’s automation platform. DSE has many disparate systems, and Ansible’s agentless architecture makes it easier to integrate with them.

The network engineering team, headed by Fenderson, is in charge of keeping track of IP assignments. Besides the manufacturing plants, DSE also has hundreds of regional sales offices whose IT operations the team manages. Keeping track of these IP assignments with a spreadsheet was almost a full-time job. Care had to be taken not to duplicate IPs, which often meant multiple engineers double-checking assignments. Fenderson wanted to automate this process to reduce errors and free the network engineers to work on bigger projects. Because IP Address Management (IPAM) is so closely tied to DNS and DHCP, they also needed a solution that was well integrated with these services.

Infoblox, a leading provider of DNS, DHCP, and IPAM (DDI) enterprise solutions and a real-life Red Hat partner, was brought in to provide a proof of concept for a centralized solution. Infoblox is also Red Hat Ansible certified, which means its Ansible modules are tested and backed by Red Hat and Infoblox support.

A tiger team, dubbed Alpha, was formed from Red Hat and Infoblox consultants and DSE network engineers to work on the proof of concept.

One of DSE’s requirements for the automated solution was that it needed to be self-service. The Alpha team decided to use Ansible Tower surveys to demonstrate self-service. After deploying the stand-alone Infoblox NIOS appliance, they prepared the Ansible Tower server.

First, they installed the infoblox-client on the Ansible Tower server. This installs the Python libraries necessary for Ansible to communicate with NIOS.

$ sudo pip install infoblox-client

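The NIOS connection details went into a file called nios.yml:

    ---
    nios_provider:
      host: 192.168.1.20
      username: admin
      password: infoblox

The uri tasks later in the playbook also read nios_provider.wapi_version, so that key needs to be set here as well, to the WAPI version of the NIOS release in use.

They created a project folder called ansible-infoblox. Since they wanted to use the latest certified Ansible collection from Infoblox, they created a file called requirements.yml and put it in a folder called collections. Ansible Tower then pulls in the collection when the project is synced.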

    ---
    collections:
      - infoblox.nios_modules

They created a new playbook file called nios_add_ipv4_network.yml and assigned some default values for testing. These can be overridden later in Ansible Tower with a survey. The parent container, 10.0.0.0/16, was created during the initial setup of NIOS.

    ---
    - name: create next available network in nios
      hosts: localhost
      connection: local
      collections:
        - infoblox.nios_modules
      vars:
        cidr: 24
        parent_container: 10.0.0.0/16
        start_dhcp_range: 100
        end_dhcp_range: 254
        region: North America
        country: USA
        state: CA

NIOS needs to be queried for the next available subnet within the parent container. The following task returns the next available /24 (the cidr variable above), for example 10.0.12.0/24, and assigns it to the networkaddr variable. The result is stored as a list, which is why later tasks reference networkaddr[0] or loop over networkaddr.

      tasks:
        - name: RETURN NEXT AVAILABLE NETWORK
          set_fact:
            networkaddr: "{{ lookup('nios_next_network', parent_container, cidr=cidr, provider=nios_provider) }}"

While the Alpha team could have created the network with the nios_network module, the module does not support assigning the network to an NIOS grid member at this time. For that, they needed to use the Infoblox API and the Ansible uri module to make the change.
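For readers less familiar with the Infoblox API, the kind of request the uri module ends up sending can be sketched in a few lines of Python. This is only an illustration and not part of the Alpha team's playbook: the build_network_request helper is hypothetical, the WAPI version 2.9 is an assumed value, and the request is built but never sent.

```python
import base64
import json
import urllib.request

# Values mirror the nios_provider variables in the post. The WAPI version
# ("2.9") is an assumption and should match your NIOS release.
provider = {
    "host": "192.168.1.20",
    "username": "admin",
    "password": "infoblox",
    "wapi_version": "2.9",
}


def build_network_request(provider, network):
    """Build (but do not send) a POST that creates a network on a grid member."""
    url = "https://{host}/wapi/v{wapi_version}/network".format(**provider)
    payload = {
        "network": network,
        "members": [{"_struct": "dhcpmember", "ipv4addr": provider["host"]}],
    }
    credentials = "{username}:{password}".format(**provider).encode()
    headers = {
        "Content-Type": "application/json",
        "Authorization": "Basic " + base64.b64encode(credentials).decode(),
    }
    return urllib.request.Request(
        url, data=json.dumps(payload).encode(), headers=headers, method="POST"
    )


request = build_network_request(provider, "10.0.12.0/24")
print(request.get_method(), request.full_url)
print(request.data.decode())
```

Keeping the payload in a separate file, as the team does next with a template, makes it easier to review and reuse than building it inline.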

They used a Jinja2 template to create the JSON file necessary for the change. They created a file called templates/new_network.j2:

    {
      "network": "{{ networkaddr[0] }}",
      "members": [
        {
          "_struct": "dhcpmember",
          "ipv4addr": "{{ nios_provider.host }}"
        }
      ]
    }

Then they added these tasks to the playbook:

        - name: CREATE JSON FILE FOR NEW NETWORK
          template:
            src: templates/new_network.j2
            dest: new_network.json

        - name: CREATE NETWORK OBJECT AND ASSIGN GRID MEMBER
          uri:
            url: "https://{{ nios_provider.host }}/wapi/v{{ nios_provider.wapi_version }}/network"
            method: POST
            user: "{{ nios_provider.username }}"
            password: "{{ nios_provider.password }}"
            body: "{{ lookup('file','new_network.json') }}"
            body_format: json
            status_code: 201
            validate_certs: false

The template produces a JSON file that looks like this:

    {
      "network": "10.0.12.0/24",
      "members": [
        {
          "_struct": "dhcpmember",
          "ipv4addr": "192.168.1.20"
        }
      ]
    }

Now that they had a network established, they created a DHCP range using the NIOS REST API.

First, they created a template file called templates/new_lan_range.j2:

    {
      "start_addr": "{{ networkaddr[0] | ipaddr(start_dhcp_range) | ipaddr('address') }}",
      "end_addr": "{{ networkaddr[0] | ipaddr(end_dhcp_range) | ipaddr('address') }}",
      "server_association_type": "MEMBER",
      "member": {
        "_struct": "dhcpmember",
        "ipv4addr": "{{ nios_provider.host }}"
      }
    }

Then they added this to the playbook:

        - name: CREATE JSON FILE FOR NEW DHCP RANGE
          template:
            src: templates/new_lan_range.j2
            dest: new_lan_range.json

        - name: CREATE DHCP RANGE FOR NEW NETWORK
          uri:
            url: "https://{{ nios_provider.host }}/wapi/v{{ nios_provider.wapi_version }}/range"
            method: POST
            user: "{{ nios_provider.username }}"
            password: "{{ nios_provider.password }}"
            body: "{{ lookup('file','new_lan_range.json') }}"
            body_format: json
            status_code: 201
            validate_certs: false

The JSON file will look like this example:

    {
      "start_addr": "10.0.12.100",
      "end_addr": "10.0.12.254",
      "server_association_type": "MEMBER",
      "member": {
        "_struct": "dhcpmember",
        "ipv4addr": "192.168.1.20"
      }
    }

Now that they had a new network created and assigned a DHCP range, they added in some details with the nios_network module. Here is where they configured DHCP options and updated the extensible attributes:

        - name: ADD DHCP OPTIONS AND EXTENSIBLE ATTRIBUTES
          nios_network:
            network: "{{ item }}"
            comment: Added from Ansible
            extattrs:
              Region: "{{ region }}"
              Country: "{{ country }}"
              State: "{{ state }}"
            options:
              - name: domain-name
                value: example.com
              - name: routers
                value: "{{ item | ipaddr('1') | ipaddr('address') }}"
            state: present
            provider: "{{ nios_provider }}"
          loop: "{{ networkaddr }}"

The final task restarts the DHCP service. The grid reference in its URL, grid/b25lLmNsdXN0ZXIkMA, may differ for your installation. Refer to the Infoblox NIOS documentation on how to determine this value in your own environment.

        - name: RESTART DHCP SERVICE
          uri:
            url: "https://{{ nios_provider.host }}/wapi/v{{ nios_provider.wapi_version }}/grid/b25lLmNsdXN0ZXIkMA:Infoblox?_function=restartservices"
            method: POST
            user: "{{ nios_provider.username }}"
            password: "{{ nios_provider.password }}"
            body: "{{ lookup('file','restart_dhcp_service.json') }}"
            body_format: json
            status_code: 200
            validate_certs: false

Next, they created the JSON file restart_dhcp_service.json:

{

"member_order" : "SIMULTANEOUSLY",

"service_option": "DHCP"

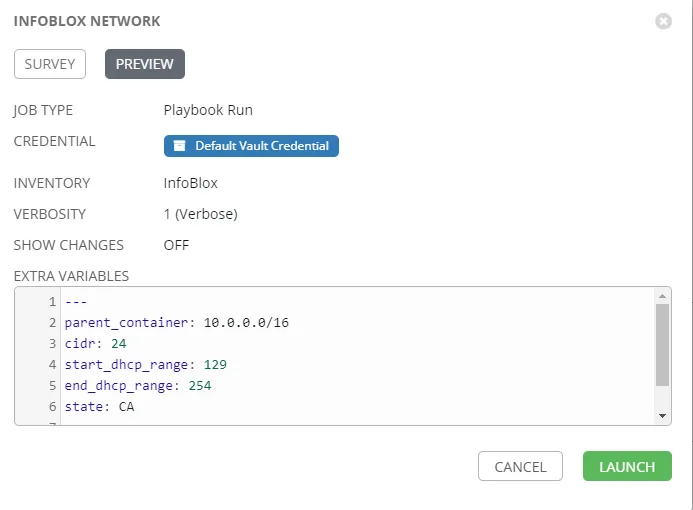

}In Ansible Tower, they created a job template and survey so anyone can create a new network in NIOS. They created a new inventory in Ansible Tower with localhost as the host. The contents of the nios.yml file were added as extra variables to the inventory.

The team decided to use a private GitHub repo for source control and created a project in Ansible Tower that will pull in that repo.

They then created a job template for the new playbook.

Next, they added a survey so a user can choose where they need the new subnet created and customize any extensible attributes, satisfying the self-service requirement. A feature enhancement to the playbook would be to add logic that restricts where subnets can be created based on state, region, or other criteria. An example survey is shown here.

Before testing the job template, they checked NIOS to see which networks were already configured and found that 10.0.12.0/24 was the next available network.
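The idea of a "next available network" can be illustrated with Python's standard ipaddress module. The sketch below is a simple first-fit search for intuition only, not how NIOS implements it. The next_available_network function and the list of existing networks (10.0.0.0/24 through 10.0.11.0/24) are assumptions made for the example.

```python
import ipaddress


def next_available_network(parent, prefixlen, allocated):
    """Return the first subnet of the given size inside parent that overlaps
    none of the allocated networks, or None if the container is full."""
    parent_net = ipaddress.ip_network(parent)
    used = [ipaddress.ip_network(n) for n in allocated]
    for candidate in parent_net.subnets(new_prefix=prefixlen):
        if not any(candidate.overlaps(u) for u in used):
            return candidate
    return None


# Hypothetical state: 10.0.0.0/24 through 10.0.11.0/24 are already in use.
existing = ["10.0.{}.0/24".format(i) for i in range(12)]
print(next_available_network("10.0.0.0/16", 24, existing))
```

With those twelve networks taken, the search lands on 10.0.12.0/24, matching what the team saw in NIOS.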

Back in Ansible Tower, they set the verbosity of the job to 1 and pressed launch.

The team then checked the next available network in the output:

In NIOS, they examined the network to verify the changes had been made. Success.
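The values to look for in NIOS follow from the playbook defaults. As a rough cross-check, the same offsets can be computed with Python's ipaddress module. Here host_at is a hypothetical helper, and the snippet only mirrors the arithmetic of the ipaddr filter chain used in the templates; it does not talk to NIOS.

```python
import ipaddress

# Defaults from the playbook vars; the network is the one returned by NIOS.
network = ipaddress.ip_network("10.0.12.0/24")
start_dhcp_range = 100
end_dhcp_range = 254


def host_at(net, offset):
    """Return the address at the given offset from the network address,
    mirroring the ipaddr(<index>) | ipaddr('address') filter chain."""
    return str(net[offset])


expected = {
    "router": host_at(network, 1),
    "start_addr": host_at(network, start_dhcp_range),
    "end_addr": host_at(network, end_dhcp_range),
}
print(expected)
```

The router option should therefore show 10.0.12.1, and the DHCP range should run from 10.0.12.100 to 10.0.12.254.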

Next steps

After presenting the solution to DSE management, Mitchell and Fenderson were given the go-ahead to roll out the Infoblox NIOS solution to the entire organization. They can now work with Red Hat and Infoblox to further develop the IPAM solution and migrate existing data into Infoblox. With IT leadership at DSE now having a solid understanding of automating IPAM, they decided to roll out Ansible to other segments of the company, starting with their F5 BIG-IP instances. Keep up to date on DSE's journey here.

About the author

George James came to Red Hat with more than 20 years of experience in IT for financial services companies. He specializes in network and Windows automation with Ansible.
