As organizations strive to meet the demands of today's fast-paced business landscape, they are increasingly turning to container orchestration platforms and Database-as-a-Service (DBaaS) solutions to empower their developers and streamline their application development processes.

Container orchestration platforms enable organizations to build, deploy, and manage applications in a more agile and scalable manner. They promote consistency, portability, and resilience in application deployments while enabling automation and simplifying complex operational tasks.

On the other hand, DBaaS solutions offer managed and scalable databases that reduce the overhead of manual database provisioning and administration, allowing development teams to focus on delivering value-added features.

Couchbase Capella is a popular, fully managed DBaaS offering now available in AWS, GCP, and Azure. Red Hat OpenShift is the de facto standard for enterprise Kubernetes, available on-premises, in public clouds, and as a managed offering in AWS, GCP, Azure, and IBM Cloud.

Many organizations have adopted hybrid and multi-cloud architectures to achieve their availability requirements. By leveraging the strengths of architectures that rely on different cloud environments and providers, organizations can build highly available applications and services that can withstand failures, scale dynamically, and meet the demands of modern business requirements.

This blog will introduce three potential reference architectures based on existing Couchbase customer use cases to demonstrate how Red Hat OpenShift and Couchbase Capella can be incorporated as complementary technologies within your enterprise cloud environment.

Architecture 1: Hybrid and Multi-Cloud

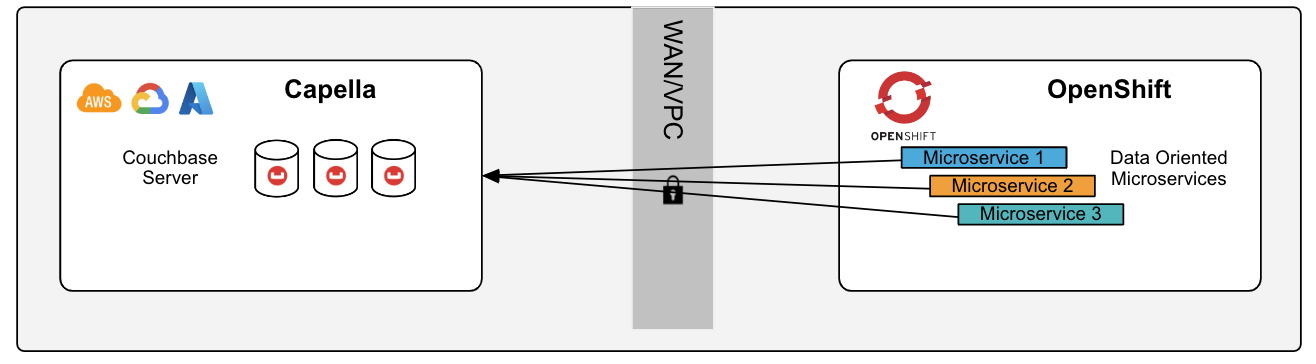

Many existing Couchbase customers run Couchbase on Red Hat OpenShift, powered by the Couchbase Autonomous Operator. The Couchbase Autonomous Operator automates and manages Couchbase Server running on OpenShift and open-source Kubernetes. Customers are adopting multi-cloud and hybrid-cloud architectures to achieve their availability and regulatory goals, whether they run in multiple cloud environments or run on-premises and replicate to a cloud environment. This architecture describes Couchbase Server running on OpenShift and replicating to Couchbase Capella running on AWS, GCP, or Azure.

The OpenShift cluster can run on-premises, self-managed in a public cloud, or as a managed service. Data is replicated and fully encrypted between the Couchbase cluster running on OpenShift and the Capella cluster using Cross Data Center Replication (XDCR). Replications can be configured bi-directionally from Capella to the Couchbase cluster running in OpenShift and from the Couchbase cluster running in OpenShift to Capella. Data in Couchbase is logically separated into buckets, scopes, and collections. Think of a bucket as the equivalent of a database in the relational world, with a scope the equivalent of a schema, and a collection the equivalent of a table.

You can configure replications with fine-grained controls at the bucket, scope, or collection level. The OpenShift cluster may be located in a different cloud or on-premises. XDCR protects against data center/cloud region failure and provides high-performance data access for globally distributed, mission-critical applications.

Architecture 1: The remote cluster is configured from Capella to the Couchbase Cluster running in OpenShift

The components required for this architecture begin with a Couchbase Capella cluster running Couchbase Server 7.0 or 7.1 in AWS, GCP, or Azure. On the other side is Couchbase Server 7.0 or 7.1, managed by the Couchbase Autonomous Operator 2.3 or 2.4, running on OpenShift 4.10, 4.11, or 4.12. The OpenShift cluster can run on-premises, in a public cloud, or as a managed offering.

Cross Data Center Replication (XDCR) can be configured and managed via the Couchbase Web UI in the Couchbase OpenShift environment or fully managed by the Couchbase Autonomous Operator. However you decide to manage the replications, the configuration steps are the same as for any Couchbase Server deployment: configure the remote cluster (the Capella connection string) with authentication, then define the data to be replicated (bucket, scope, or collection) along with filtering and conflict resolution.
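As a rough illustration of those two steps, the sketch below builds the form fields that Couchbase Server's REST API accepts for a remote-cluster reference (`POST /pools/default/remoteClusters`) and for a replication (`POST /controller/createReplication`). It only constructs the payloads and does not contact a cluster. The hostname, credentials, bucket names, and filter expression are hypothetical placeholders, and the field names should be checked against the REST API reference for your Couchbase Server version before use.

```python
from urllib.parse import urlencode


def remote_cluster_form(name, hostname, username, password):
    """Form fields for a remote-cluster reference (step 1)."""
    return {
        "name": name,
        "hostname": hostname,
        "username": username,
        "password": password,
        # Request a fully encrypted connection to the remote cluster.
        "demandEncryption": 1,
        "encryptionType": "full",
    }


def replication_form(from_bucket, to_cluster, to_bucket, filter_expression=None):
    """Form fields for a replication from a local bucket (step 2)."""
    form = {
        "fromBucket": from_bucket,
        "toCluster": to_cluster,
        "toBucket": to_bucket,
        "replicationType": "continuous",
    }
    if filter_expression:
        # Optional: replicate only the documents matching the expression.
        form["filterExpression"] = filter_expression
    return form


if __name__ == "__main__":
    # All values below are placeholders for illustration only.
    reference = remote_cluster_form(
        "capella", "cb.example.cloud.couchbase.com", "xdcr_user", "change-me"
    )
    replication = replication_form(
        "orders", "capella", "orders", 'REGEXP_CONTAINS(META().id, "^order::")'
    )
    print(urlencode(reference))
    print(urlencode(replication))
```

The same information maps onto the fields you fill in through the Web UI, so the sketch is mainly useful as a checklist of what each step needs.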

There are several network considerations when implementing this architecture. Let's start with a few questions. Where is your OpenShift cluster deployed, on-premises or in a public cloud? If it is in a public cloud, is it the same public cloud as your Capella cluster? Is there a requirement for traffic not to traverse the public internet? Do you plan to replicate data bi-directionally when the feature is available?

Several options are available, depending on the answers. If the Capella and OpenShift clusters both reside in the same public cloud, you can configure either a VPC peering connection or a private link between the Capella and OpenShift clusters. Private links can also be used between an on-premises OpenShift cluster and Capella. If the end goal is to replicate data in both directions, we recommend that the Couchbase cluster running on OpenShift be exposed via LoadBalancer networking and external DNS.
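The decision logic in the two paragraphs above can be restated as a small helper. This is only a sketch of the guidance given here, not an official sizing or networking tool; the enum, function name, and returned keys are made up for illustration, and the case of OpenShift running in a different public cloud is left empty because the text does not cover it.

```python
from enum import Enum


class OpenShiftLocation(Enum):
    ON_PREMISES = "on-premises"
    SAME_CLOUD_AS_CAPELLA = "same public cloud as Capella"
    DIFFERENT_CLOUD = "different public cloud"


def plan_connectivity(location, bidirectional):
    """Map the answers to the questions above onto the options in the text."""
    if location is OpenShiftLocation.SAME_CLOUD_AS_CAPELLA:
        private_options = ["VPC peering", "private link"]
    elif location is OpenShiftLocation.ON_PREMISES:
        private_options = ["private link"]
    else:
        # Not addressed in this article; consult the networking documentation.
        private_options = []

    # Bi-directional replication needs the OpenShift-hosted cluster to be
    # reachable from Capella, hence the LoadBalancer recommendation.
    expose_via = "LoadBalancer networking with external DNS" if bidirectional else None
    return {
        "private_options": private_options,
        "expose_openshift_cluster_via": expose_via,
    }


if __name__ == "__main__":
    print(plan_connectivity(OpenShiftLocation.SAME_CLOUD_AS_CAPELLA, bidirectional=True))
```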

To learn more, please refer to the Couchbase Networking page in the Couchbase documentation.

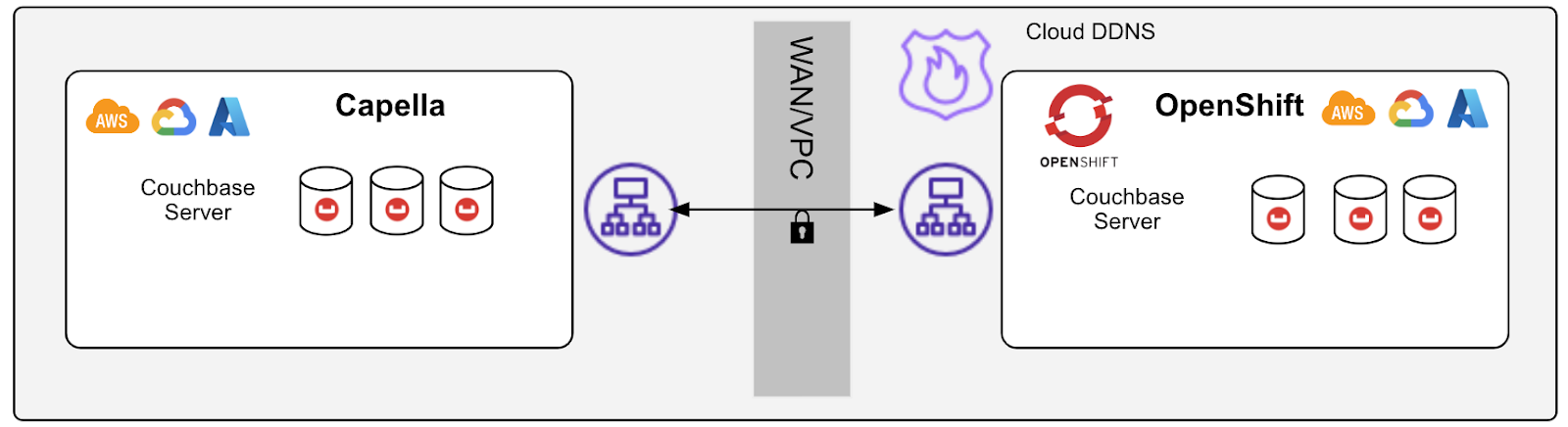

Architecture 2: Microservice Applications with a Capella Backend

Microservices architectures enable organizations to build complex, scalable, and resilient applications that align well with modern software development and deployment practices. They are an attractive choice for modern, agile, and business-aligned application development initiatives.

Many of our customers have embraced running microservices as part of an application modernization or digital transformation initiative. They also utilize OpenShift's integrated developer tools to deploy and manage their applications but may not have the capacity within their OpenShift cluster to also run a large persistent database like Couchbase. In that case, Capella can serve as the persistent datastore for your data-oriented microservices.

This blueprint describes running data-oriented microservices within OpenShift, which read and write to Capella on AWS, GCP, or Azure. The OpenShift cluster will optimally be deployed in a peered VPC within the same cloud provider (AWS, GCP, or Azure). Peering the OpenShift cluster with the Capella cluster improves throughput, reduces latency, and adds security by keeping traffic off the public internet.

A good microservice should be lightweight and stateless. The goal of a microservices architecture is to break application functionality into discrete, independently operating components. Each microservice should serve one functional area of the overall application. Most importantly, each microservice should be able to evolve independently from the rest of the application landscape, which means that changes or updates to one microservice should not affect the functionality or operation of another. This approach provides flexibility and agility in development and maintenance. Couchbase Capella supports multiple data models within the same cluster, whether several microservices share a central database or each microservice has its own database.
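To make the two layouts concrete, here is a minimal sketch that names the keyspaces each microservice would own, using the bucket, scope, and collection hierarchy described earlier. Giving each service its own scope inside a shared bucket is one plausible convention assumed for this example rather than a Couchbase requirement, and the service and collection names are invented.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Keyspace:
    bucket: str
    scope: str
    collection: str

    def path(self):
        return f"{self.bucket}.{self.scope}.{self.collection}"


def shared_bucket_layout(bucket, services):
    """Central database: one bucket, one scope per microservice (assumed convention)."""
    return {
        service: [Keyspace(bucket, service, name) for name in collections]
        for service, collections in services.items()
    }


def bucket_per_service_layout(services):
    """Database per microservice: each service gets its own bucket."""
    return {
        service: [Keyspace(service, "_default", name) for name in collections]
        for service, collections in services.items()
    }


if __name__ == "__main__":
    # Hypothetical services and collections.
    services = {"orders": ["order", "invoice"], "catalog": ["product"]}
    for layout in (shared_bucket_layout("retail", services),
                   bucket_per_service_layout(services)):
        for service, keyspaces in layout.items():
            print(service, [k.path() for k in keyspaces])
```

Either way, each service touches only its own keyspaces, which preserves the independence between services described above.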

Architecture 2: Microservice applications connect to Capella using VPC peering, private links, or the public internet. All traffic will be encrypted.

The components for this architecture are a Capella cluster running Couchbase 7.0 or 7.1 and a current OpenShift cluster running on-premises or in a public cloud. The OpenShift cluster should be deployed in the same public cloud and region as the Capella cluster to minimize latency and maximize performance. As with Architecture 1, use VPC peering or private links.

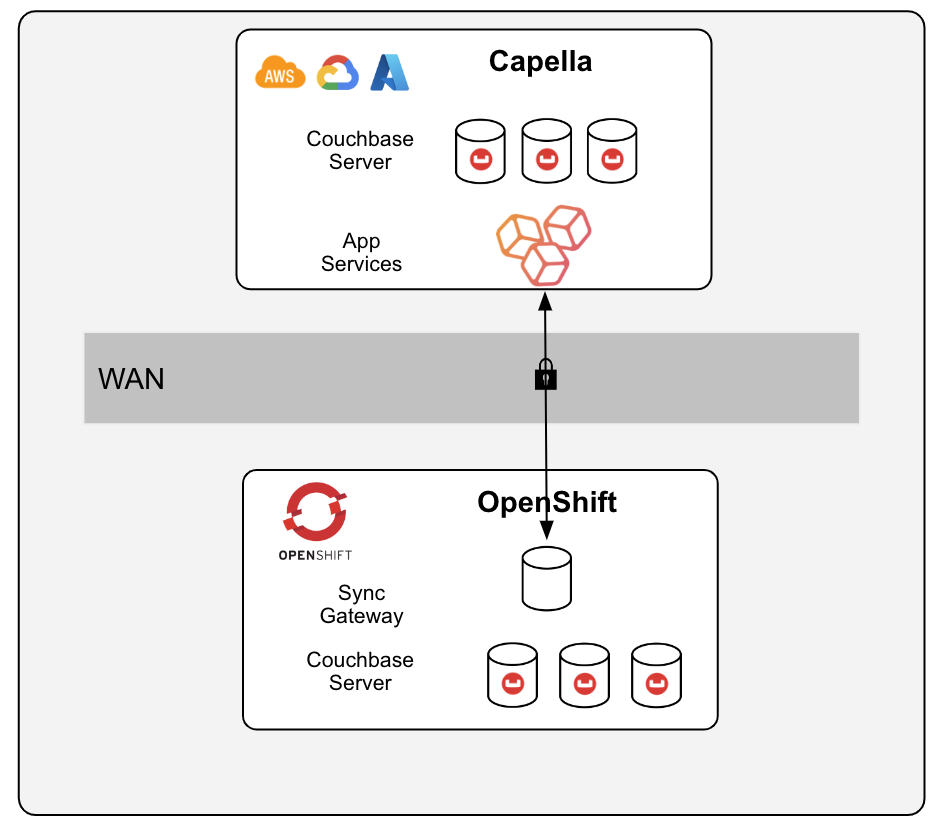

Architecture 3: Capella Centralized Database and OpenShift at the Edge

Couchbase customers have found that running Couchbase Server at the edge, where data is produced and consumed, offers substantial benefits across industries and use cases, including reduced data transfer latency, improved data access, enhanced scalability, increased data privacy and security, and offline capabilities. Red Hat OpenShift, for its part, offers several features and capabilities that can accommodate smaller footprint installations on-premises or in regional cloud datacenters, including three-node clusters combining control plane and worker nodes, single-node clusters, and remote worker nodes.

These features make OpenShift a flexible and scalable container platform that can be tailored to meet the requirements of many different deployment scenarios, including edge environments with limited resources or space. Couchbase recommends at least three OpenShift worker nodes to achieve high availability. Running Couchbase alongside your applications within an OpenShift cluster can further reduce the size of the server footprint in edge locations by not requiring separate machines or VMs.

This architecture deploys a small footprint OpenShift cluster at the edge, running Couchbase Autonomous Operator, Couchbase Server, and Couchbase Sync Gateway. The edge cluster may host web and mobile applications for various use cases, including Customer360, Catalog & Inventory, Field Service, and IoT Data Management. Application data is synchronized to a centralized Capella cluster via inter-sync-gateway replication with Capella App Services. Bi-directional replications are configured at the edge. Data replications can be configured as continuous or started/stopped manually.

Couchbase Sync Gateway's inter-sync gateway replication feature supports cloud-to-edge synchronization use cases, where data changes must be synchronized between a centralized Capella cluster and a potentially large number of edge clusters while still enforcing fine-grained access control. This helps organizations maintain data integrity, privacy, and security, all while enabling efficient and controlled data synchronization between the cloud and edge environments. This is an increasingly important enterprise-level requirement.

Architecture 3: The edge cluster Sync Gateway defines the Inter-Sync-Gateway replication to Capella App Services. Replication can be continuous or started and stopped via REST.

The components required for this architecture are Couchbase Capella running 7.0 or 7.1 with Capella App Services. The OpenShift cluster running at the edge should run OpenShift 4.10, 4.11, or 4.12, with the Couchbase Autonomous Operator 2.3 or 2.4, Couchbase Server 7.0 or 7.1, and Sync Gateway 3.0. Configure the inter-Sync Gateway replication at the edge to replicate to Capella App Services, choosing continuous or manually controlled replication based on network bandwidth and availability. This architecture is intended to be deployed with several edge locations and a single centralized Capella database.
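The edge-side configuration can be sketched as follows. The helper builds a replication definition of the kind Sync Gateway's Admin REST API accepts, plus the path used to start or stop a replication. It only constructs the JSON body and paths, without calling Sync Gateway. The database name, replication ID, and App Services URL are hypothetical, credentials are deliberately left out, and the property names and endpoints should be confirmed against the Sync Gateway 3.0 documentation.

```python
import json


def isgr_replication(replication_id, remote_url, continuous=True):
    """Replication definition created on the edge Sync Gateway."""
    return {
        "replication_id": replication_id,
        "remote": remote_url,
        # Bi-directional replication, configured at the edge.
        "direction": "pushAndPull",
        "continuous": continuous,
    }


def replication_status_path(db, replication_id, action):
    """Admin REST path for starting or stopping a replication."""
    if action not in ("start", "stop"):
        raise ValueError("action must be 'start' or 'stop'")
    return f"/{db}/_replicationStatus/{replication_id}?action={action}"


if __name__ == "__main__":
    # Placeholder endpoint for illustration only.
    body = isgr_replication(
        "edge-store-001", "https://app-services.example.com:4984/inventory"
    )
    print(json.dumps(body, indent=2))
    print(replication_status_path("inventory", "edge-store-001", "stop"))
```

A site with an intermittent or metered link might set `continuous` to false and trigger the start action on a schedule, while a well-connected site can leave the replication running continuously.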

The Capella App Services instances must be reachable from the OpenShift edge cluster. The Sync Gateway pod(s) running in the OpenShift edge cluster should be exposed through OpenShift Routes for connectivity. Inter-Sync Gateway replication can substantially reduce network bandwidth consumption through its compression and delta-sync capabilities.

Wrap up

This blog introduced three reference architectures demonstrating how Capella, the fully managed database-as-a-service solution from Couchbase, can be integrated into a hybrid cloud environment running Red Hat OpenShift, the industry-standard enterprise Kubernetes platform. When leveraged as complementary technologies in an enterprise cloud environment, Capella and OpenShift allow developers to deploy applications easily, scale resources dynamically, and leverage the benefits of a managed database for seamless data management, ultimately accelerating application development and delivery processes.

Stay tuned for follow-on blogs covering each of the three architectures discussed here in detail.
