Last year, we announced how Verizon and Red Hat are teaming up to deliver a hybrid mobile edge computing (MEC) solution using Verizon 5G Edge and Red Hat OpenShift – a novel approach to converge both public 5G networks with AWS Wavelength and private 5G networks with AWS Outposts under a single compute mesh using Kubernetes.

Today, we want to introduce an additional layer of abstraction in multi-cloud hybrid MEC. As enterprise customers seek greater choice across their edge deployments, we believe that choices across physical geography, network type and cloud provider are equally important.

While we primarily focused on the architecture of Red Hat OpenShift during re:Invent itself, we wanted to take the time to highlight the theory behind “why hybrid MEC” across three key areas:

- How MEC brings the best of both recent compute and network optimizations
- How the underlying Red Hat OpenShift design is inherently flexible to accommodate emergent architectures and technologies
- How enterprises can leverage hybrid MEC to redesign their end-to-end value chains

Red Hat OpenShift on 5G Edge

Using Red Hat OpenShift on Verizon 5G Edge, enterprises can flexibly deploy low-latency applications on demand anywhere across more than 13 geographies (e.g., Wavelength Zones) on the public network and expand to a limitless number of locations on their private managed networks.

Red Hat OpenShift helps standardize the developer and application experience anywhere on Verizon 5G Edge. Perhaps even more importantly, Verizon 5G Edge customers can operate their deployments from a single pane of glass using Red Hat Advanced Cluster Management for Kubernetes (ACM) for more seamless operations. Additionally, with Red Hat OpenShift they have the same tools to develop, manage and deploy their applications thanks to pre-integration work done with an extensive list of Red Hat partners. Plus, as Verizon 5G Edge expands to new geographies or even new cloud providers, the experience remains common.

This is great news for enterprises: The ability to deliver lower latency experiences simultaneously across private and public networks will allow enterprises to rethink applications from their data architectures to the personalized experiences afforded by the increasingly geo-distributed application designs. Using this hybrid edge architecture, for example, a logistics company could connect its fleet and its branch offices under a single compute mesh, driving cost efficiencies and deployment ease.

Additionally, enterprises can leverage opportunities to transform not only the cloud itself but also the resources around the cloud. Purpose-built hardware can be disintermediated and offloaded to the cloud, resulting in increasingly flexible, lightweight, and dynamic device pools.

The future of edge applications is more than just new infrastructure patterns or even new modes of 5G-driven network intelligence. We believe, rather, that it is about building a compute mesh from the cloud to the edge across both public and private networks to unify the application experience under a single architecture. By using Red Hat OpenShift, Verizon has begun to explore how such an experience might be possible.

But how did the mobile edge come about in the first place?

The shift to the edge

With the advent of high-speed 5G networks, enterprises have begun to embrace the edge like never before. But this was not always the case.

Over the past decade, as mobile devices sought ever-increasing data volumes, lower latency and best-in-class reliability, network paradigms struggled to keep up with customers’ demands.

To address this challenge, cloud providers have taken two complementary approaches: bringing compute closer to the end user and bringing the network closer to its downstream devices. As an example of the former, content delivery networks (CDNs) have used their densified points of presence to enrich otherwise static storage resources with Function-as-a-Service (FaaS) capabilities. While this has unlocked increasingly lightweight deployment models for compute and storage, non-deterministic network behavior can still create latency issues for edge-based FaaS.

That’s where network optimizations began to play a similar role. In the same way that interconnect services brought an enterprise’s network edge topologically closer to the cloud, solutions such as AWS Global Accelerator have developed useful approaches to enhance performance at scale. Instead of bringing compute closer to the user, services could bring the user closer to the network.

While both solutions provide tremendous value to customer architectures, neither is positioned as the most performant solution for the mobile domain. In a world where the vast majority of the latency is consumed by the air interface, only a carrier-native service could truly deliver the performance of a network accelerator with compute flexibility at the edge.

The mobile edge has become one of the most compelling opportunities to date. Using Verizon 5G Edge, a real-time cloud computing platform, developers have been able to harness flexibility by extending their existing virtual private cloud (VPC) environments to the edge of public 5G networks with a single pane of glass for management of edge and non-edge workloads. Developers have used these on-demand, pay-as-you-go compute environments to build solutions such as crowd analytics, predictive maintenance, and vision-enhancement technology for the visually impaired.

However, developers have yet to create this same public cloud experience at scale in a related model of edge computing: extending VPC environments to the edge of private 5G networks. Why might this matter?

As enterprises build application workflows today, they often inadvertently introduce compute silos whereby application architectures (like Kubernetes) are constrained to a given environment and cannot extend beyond a given geographic scope. But what if there were a way to reuse latency-tolerant components (e.g., control plane) and distribute latency-critical components, within a single compute cluster, across public and private networks separated by thousands of miles?

Why Red Hat OpenShift is edge-friendly

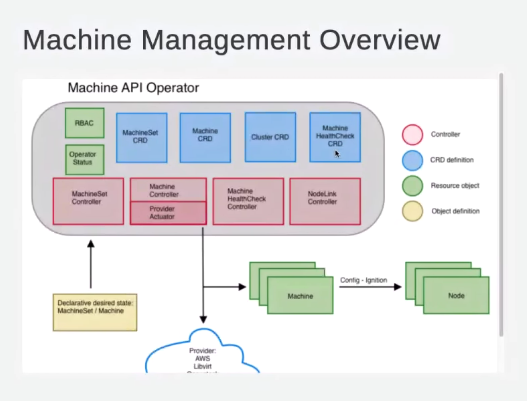

In a cloud-native architecture built on Red Hat OpenShift, one of the most fundamental software abstractions is the MachineSet. A MachineSet declares the desired number of nodes for part of a cluster and manages the corresponding machines, which in turn govern the underlying resources (i.e., VMs).

In the same way that ReplicaSets maintain a stable set of pods in traditional Kubernetes environments, MachineSets maintain a stable set of machines. Each machine is a representation of the underlying physical hardware, including the cloud provider, image, and other flavors of the logical node itself.
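To make the ReplicaSet analogy concrete, here is a minimal conceptual sketch in Python of the reconcile idea: compare a desired replica count against the machines that exist and create or delete the difference. This is a toy model, not the OpenShift controller's code; the `MachineSet` dataclass, the `reconcile` function, and the naming scheme are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class MachineSet:
    """Toy stand-in for a MachineSet: a name, a zone, and a desired replica count."""
    name: str
    zone: str
    replicas: int
    machines: list = field(default_factory=list)  # names of machines that currently exist


def reconcile(ms: MachineSet) -> list:
    """Bring the machine list in line with the desired replica count.

    Returns the list of actions taken so the caller can see what changed.
    """
    actions = []
    # Scale up: create machines until the desired count is reached.
    while len(ms.machines) < ms.replicas:
        name = f"{ms.name}-{len(ms.machines)}"
        ms.machines.append(name)
        actions.append(("create", name))
    # Scale down: remove the most recently added machines first.
    while len(ms.machines) > ms.replicas:
        actions.append(("delete", ms.machines.pop()))
    return actions


# Hypothetical worker pool in a single zone.
workers = MachineSet(name="worker-us-east-1a", zone="us-east-1a", replicas=3)
print(reconcile(workers))  # three "create" actions
workers.replicas = 2
print(reconcile(workers))  # one "delete" action
```

The point of the sketch is that operators only change the desired count; the loop works out which machines to add or remove.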

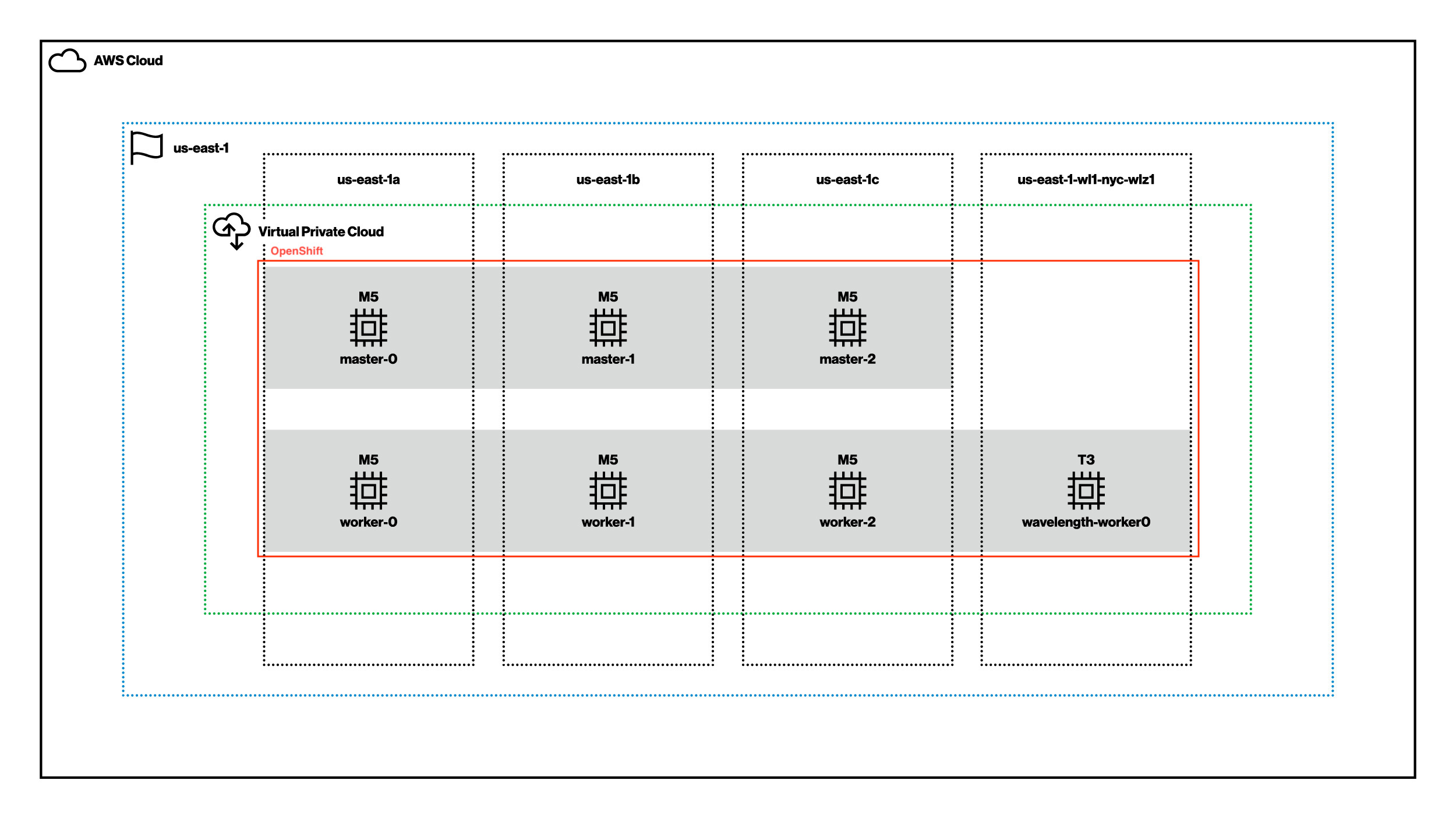

The beauty of this MachineSet abstraction is that, while each machine object may be unique to a given cloud provider or deployment model, together MachineSets can abstract away complexities of the network itself. Take, for example, the following deployment presented at re:Invent 2021: Build & deploy applications faster with Red Hat OpenShift Service.

Now, let's use high availability as an example of how Red Hat OpenShift can simplify implementation. Traditionally on a single cloud provider like AWS, a highly available architecture might consist of MachineSets in each of three or more availability zones (AZs) within a region, providing more failure domains.

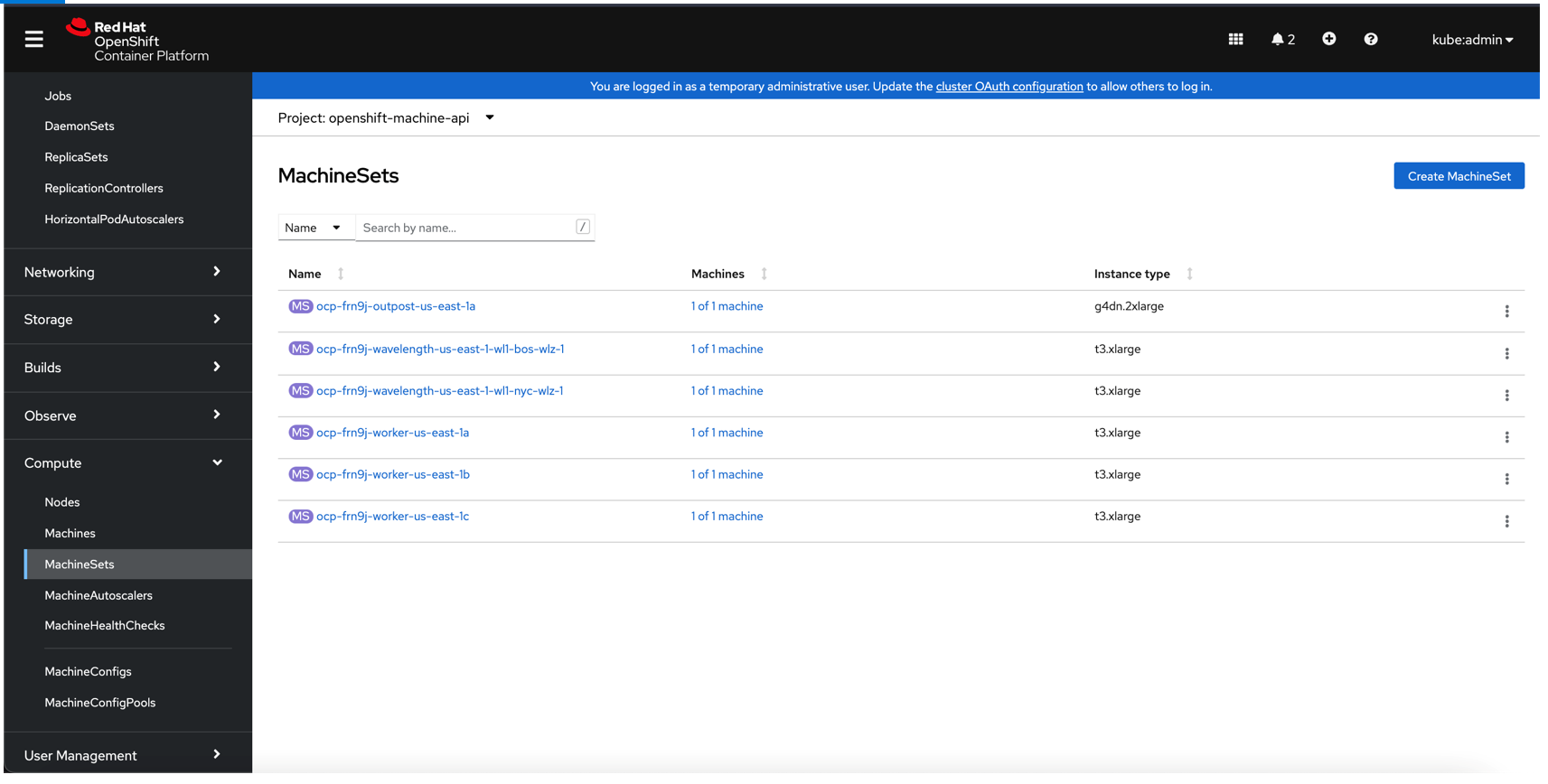

Add in the hybrid MEC world and each edge – across both public MEC (e.g., AWS Wavelength) and private MEC (e.g., AWS Outposts) – could become a failure domain as well. In this way, we can instantiate additional MachineSets in each of these edge compute zones without any incremental complexity. With Red Hat OpenShift, the inclusion of the edge is just another deployment manifest file – even if the underlying infrastructure specifications correspond to zones over 1,000 miles apart.

To prove this design out, we created a Red Hat OpenShift cluster spanning three AZs in Northern Virginia, Wavelength Zones in New York City and Boston, and a dedicated Outpost in Texas, all from fewer than 200 lines of YAML (YAML Ain't Markup Language).
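As a rough illustration of why each additional edge is "just another manifest," the Python sketch below generates one trimmed-down MachineSet manifest per failure domain for a topology like the one described: three AZs, two Wavelength Zones, and one Outpost. It is not the configuration used in the deployment above. The cluster ID, zone and subnet names, instance type, and the `machineset_manifest` helper are hypothetical placeholders, and a real manifest needs additional fields (for example the machine image, IAM profile, and security groups) that are omitted here.

```python
# Hypothetical failure domains mirroring the topology described above.
# Every name, zone and subnet is a placeholder, not a value from the
# original deployment.
FAILURE_DOMAINS = [
    {"name": "az-a", "zone": "us-east-1a", "subnet": "private-az-a"},
    {"name": "az-b", "zone": "us-east-1b", "subnet": "private-az-b"},
    {"name": "az-c", "zone": "us-east-1c", "subnet": "private-az-c"},
    {"name": "wlz-nyc", "zone": "us-east-1-wl1-nyc-wlz-1", "subnet": "wavelength-nyc"},
    {"name": "wlz-bos", "zone": "us-east-1-wl1-bos-wlz-1", "subnet": "wavelength-bos"},
    # An Outpost subnet is anchored to an AZ in its parent region (placeholder).
    {"name": "outpost-tx", "zone": "us-east-1a", "subnet": "outpost-texas"},
]


def machineset_manifest(cluster_id, domain, region="us-east-1",
                        replicas=1, instance_type="t3.xlarge"):
    """Build a simplified MachineSet manifest (as a dict) for one failure domain."""
    name = f"{cluster_id}-{domain['name']}"
    selector = {"machine.openshift.io/cluster-api-machineset": name}
    return {
        "apiVersion": "machine.openshift.io/v1beta1",
        "kind": "MachineSet",
        "metadata": {"name": name, "namespace": "openshift-machine-api"},
        "spec": {
            "replicas": replicas,
            # The selector must match the labels on the machine template.
            "selector": {"matchLabels": dict(selector)},
            "template": {
                "metadata": {"labels": dict(selector)},
                "spec": {
                    "providerSpec": {
                        "value": {
                            "instanceType": instance_type,
                            "placement": {
                                "availabilityZone": domain["zone"],
                                "region": region,
                            },
                            "subnet": {
                                "filters": [
                                    {"name": "tag:Name", "values": [domain["subnet"]]}
                                ]
                            },
                        }
                    }
                },
            },
        },
    }


manifests = [machineset_manifest("demo-cluster", d) for d in FAILURE_DOMAINS]
for m in manifests:
    placement = m["spec"]["template"]["spec"]["providerSpec"]["value"]["placement"]
    print(m["metadata"]["name"], "->", placement["availabilityZone"])
```

In this sketch, the only things that differ between a regional AZ, a Wavelength Zone, and an Outpost are the zone and subnet entries, which is the property the MachineSet abstraction is meant to provide.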

This is just one of the increasingly complex orchestration scenarios that Red Hat OpenShift makes more seamless. MachineSets can be extended beyond a single cloud provider into other clouds to introduce additional edge topologies. As long as the machines in the other cloud providers have a direct connection to the control plane via the internet, this extension can even be done as part of the same cluster, further reducing operational overhead.

Build on Verizon 5G Edge with Red Hat OpenShift today

There’s no denying the mobile edge is in its infancy. Even so, just last year Verizon saw more than 100 submissions to our 5G Edge Computing Challenge from 22 countries, demonstrating the power of the mobile edge applied to healthcare, retail, gaming and beyond.

While the Verizon 5G Edge footprint continues to grow, one thing has remained consistent: Across enterprise application modernization journeys in the years to come, moving to the edge will no longer be a yes or no question. Rather, the edge will continue to encapsulate a limitless set of permutations as customers explore architectures that best fit their needs.

To learn more about Red Hat OpenShift on Verizon 5G Edge, check out the infrastructure fundamentals or join us at this year’s MWC Barcelona.

About the authors

Robert Belson is a Developer Relations Lead at Verizon.

Vijay Chintalapati has been with Red Hat since 2014. Over the years, he has helped numerous customers and prospective clients with deep expertise in OpenShift, automation and middleware offerings from Red Hat.
