This is a guest post by Nils Buer, Director of Product Management at Stonebranch.

Managing Kubernetes can be complex, which is why many organizations turn to Red Hat OpenShift to run their most important applications.

Even with a powerful platform like OpenShift, however, it’s not always easy for enterprises to connect applications running in containers to data sources that are outside of those containers. Complexity, once again, is the culprit—in this case, the ever-increasing complexity of hybrid IT environments running a mix of on-prem, cloud and containerized solutions.

For IT Ops, DevOps and DataOps teams, simplicity is the ultimate goal, and it’s closer than they might think: an automated data pipeline that connects the Kubernetes clusters managed by OpenShift with the data sources their applications need in order to work.

In this blog post, we’ll illustrate how to use Stonebranch’s Universal Automation Center (UAC) to extend the capabilities of Red Hat OpenShift and enable on-demand, more secure transfer of business data located in cloud storage, on a mainframe or in a hybrid environment to an application running on OpenShift, and vice versa.

We'll cover how to:

- Automatically trigger a file transfer in real time based on various events, such as the arrival of a file, an email or a message in a message queue. Time-based transfers can also be triggered.

- Trigger the file transfer from any application calling a REST web service.

- Successfully implement cluster management (specifically, the more secure distribution of data to all PODs in an OpenShift cluster).

- Satisfy the need for real-time monitoring and auditing of the entire file transfer process.

- Functionally integrate applications running in OpenShift into the legacy IT landscape.
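As a rough illustration of the REST-based trigger mentioned in the list above, the following Python sketch builds (but does not send) an HTTP request that asks a controller to launch a task by name. The host name, endpoint path, credentials and JSON payload shape are all assumptions made for illustration; consult the Universal Controller REST API documentation for the actual resource and fields.

```python
import base64
import json
import urllib.request

# Hypothetical values: replace them with the ones from your own environment.
CONTROLLER_URL = "https://uc.example.com/uc"      # assumed base URL
LAUNCH_PATH = "/resources/task/ops-task-launch"   # assumed endpoint path
TASK_NAME = "openshift_mf_2_server"               # name of the task to launch


def build_launch_request(base_url, path, task_name, user, password):
    """Build a POST request asking the controller to launch a task by name."""
    payload = json.dumps({"name": task_name}).encode("utf-8")
    token = base64.b64encode(f"{user}:{password}".encode("utf-8")).decode("ascii")
    return urllib.request.Request(
        url=base_url.rstrip("/") + path,
        data=payload,
        method="POST",
        headers={
            "Content-Type": "application/json",
            "Accept": "application/json",
            "Authorization": f"Basic {token}",
        },
    )


request = build_launch_request(
    CONTROLLER_URL, LAUNCH_PATH, TASK_NAME, "api_user", "secret"
)
# To actually send the request: urllib.request.urlopen(request)
```

Because the request is plain HTTP with a JSON body, any application that can make a web service call could trigger the transfer in the same way.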

Brief Introduction to Universal Automation Center

Universal Automation Center is a comprehensive Service Orchestration and Automation Platform. A key solution within UAC is real-time data pipeline orchestration, which offers out-of-the-box functionality to transfer data from any source to one or multiple OpenShift PODs in parallel.

By combining Red Hat OpenShift with UAC, enterprises can:

- Provide access to the most up-to-date business data across the entire hybrid IT environment.

- Enable end-users to schedule applications running on OpenShift in the same way as applications running in the cloud, on a (virtual) server or on the mainframe.

- Provide cluster scenario support by sending and receiving data simultaneously to all PODs related to an application or only to the one with the lightest current load (round robin and other scenarios are also supported).

- Integrate applications run on OpenShift with any current business process automation flows consisting of both OpenShift and non-OpenShift applications, such as an SAP order-to-cash process.

Scheduling applications this way provides all the benefits of an application deployed on the OpenShift orchestration platform, including significantly reduced resource consumption, scalability, performance, and fast deployment and testing.

Universal Automation Center Platform Overview

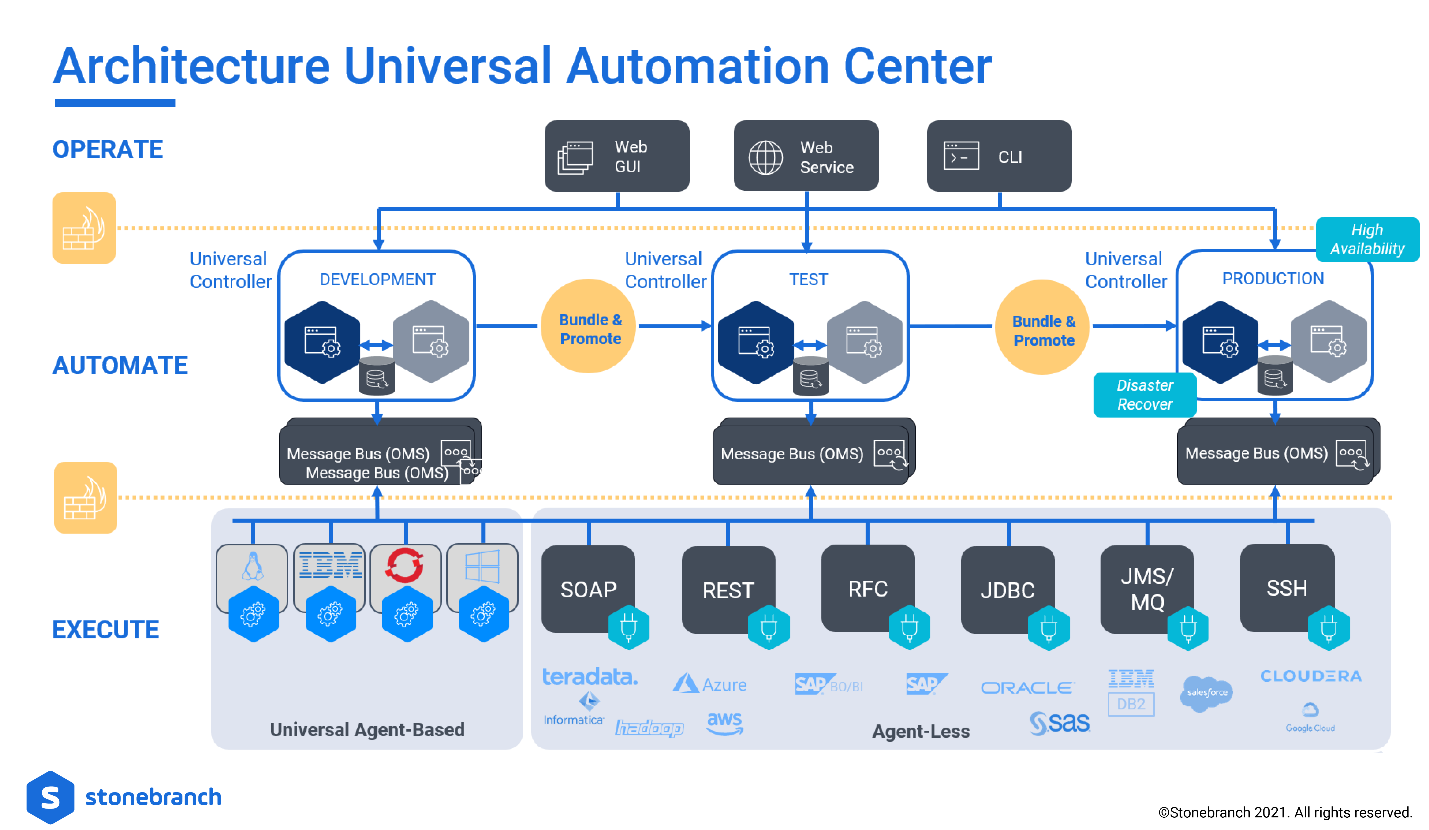

The two key components of UAC are:

- Universal Controller (UC): A browser-based workflow, reporting and orchestration engine. The UC is the central workload scheduler and serves as the centralized command center within the platform.

- Universal Agents (UA): This workload execution agent is designed to execute and control any remote system’s automation process.

As soon as an agent is installed on a server, it automatically connects to the Universal Controller’s middleware message bus (OMS) and is ready to execute commands, scripts and file transfers. Keep in mind that the Universal Controller can also connect to third-party systems via API. In that scenario, no agent needs to be installed. We call this an agent-less connection.

File Transfer Workflow

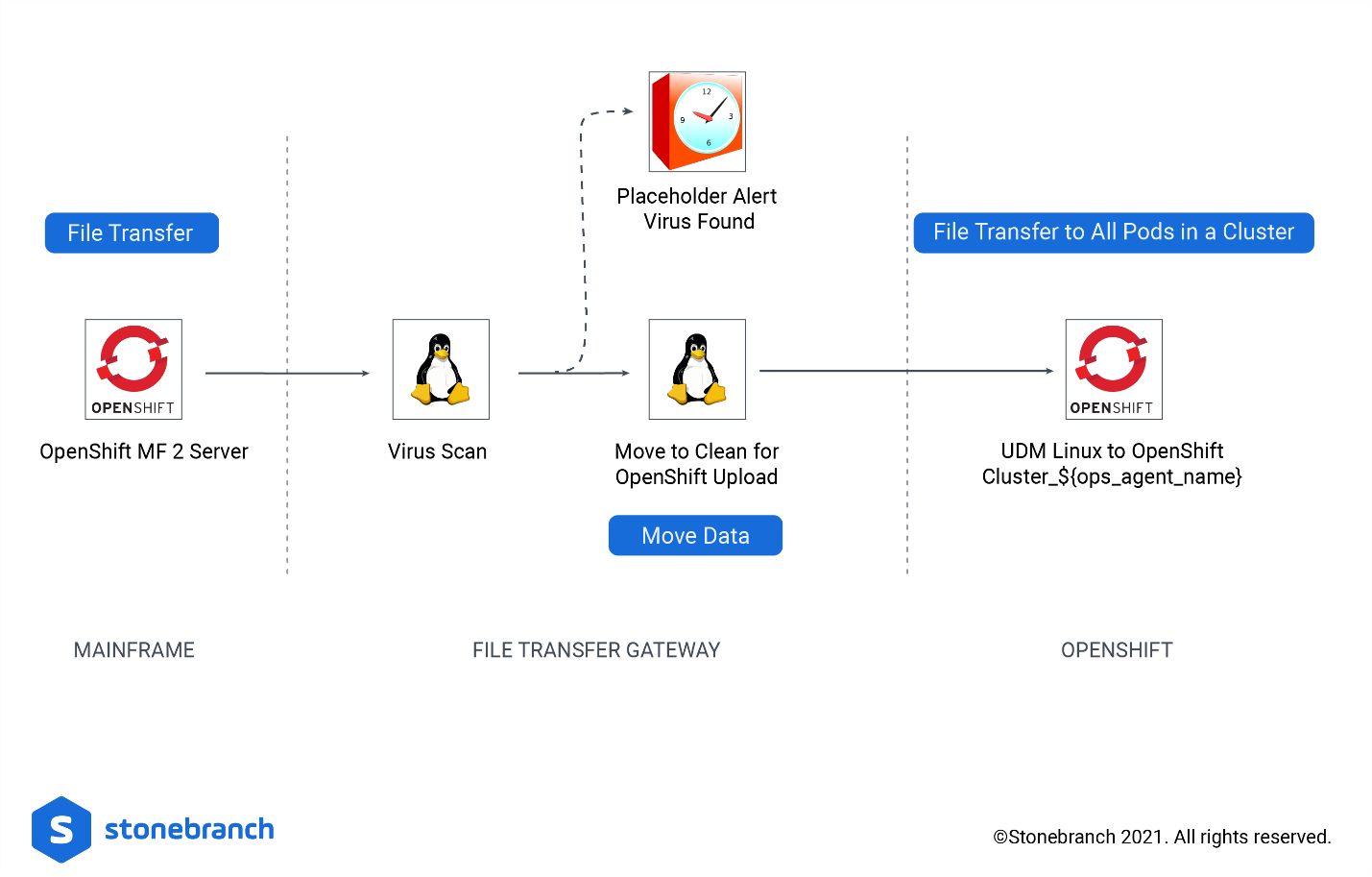

Now, let's look at a simple OpenShift file transfer workflow designed within the Universal Controller.

In this example workflow, we deliver data from the mainframe to all instances of the same application.

- Task 1: The first task "openshift_mf_2_server" transfers the data from the mainframe to an intermediate file transfer gateway.

- Task 2: On the file transfer gateway (FTG) server, the task "VirusScan" starts the enterprise virus scanner on the received data.

- Task 3: If the virus scan was successful, the received data is moved to the folder "clean" using task "move_to_clean_for_OpenShift_upload".

- Task 4: If the move was successful, task "UDM_Linux_to_OpenShift Cluster_${ops_agent_name}" is started and loads the data to all PODs started for a specific application. All assigned PODs of an agent cluster, therefore, will get the same data at the same time.
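The success-gated chaining of the four tasks above can be sketched in a few lines of Python. This is only a toy model of the control flow, not how the Universal Controller is implemented: the `run_workflow` helper is hypothetical, and the lambdas are placeholders for the real transfer, scan, move and upload actions.

```python
from typing import Callable, List, Tuple

# A step is a task name plus an action that returns True on success.
Step = Tuple[str, Callable[[], bool]]


def run_workflow(steps: List[Step]) -> List[str]:
    """Run steps in order and stop at the first one that reports failure.

    Returns the names of the steps that completed successfully.
    """
    completed = []
    for name, action in steps:
        if not action():
            break  # downstream tasks are never started
        completed.append(name)
    return completed


# Placeholder actions: each one simply reports success.
workflow = [
    ("openshift_mf_2_server", lambda: True),
    ("VirusScan", lambda: True),
    ("move_to_clean_for_OpenShift_upload", lambda: True),
    ("UDM_Linux_to_OpenShift Cluster", lambda: True),
]

print(run_workflow(workflow))
```

If the virus scan were to fail, only the first task would appear in the result, and the data would never reach the "clean" folder or the PODs.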

Setting Up and Using UAC with OpenShift (the Technical Stuff)

Universal Automation Center and Red Hat OpenShift are currently integrated with one another. In fact, UAC is a certified Red Hat partner. So, once you have both platforms, it’s pretty simple to get started. Let’s go ahead and drill into the basic setup:

- For OpenShift, a dedicated OpenShift image containing the Stonebranch Universal Agent, which is Red Hat–certified and has passed multiple vulnerability checks, can be found on the Red Hat Ecosystem Catalog.

- The end user can deploy the OpenShift image as a sidecar container in any POD running an application. The deployment can be done via the command line or directly from the OpenShift dashboard.

- As soon as a POD is started, the sidecar container is automatically initiated, and the contained Universal Agent automatically connects to Universal Automation Center’s message bus (OMS).

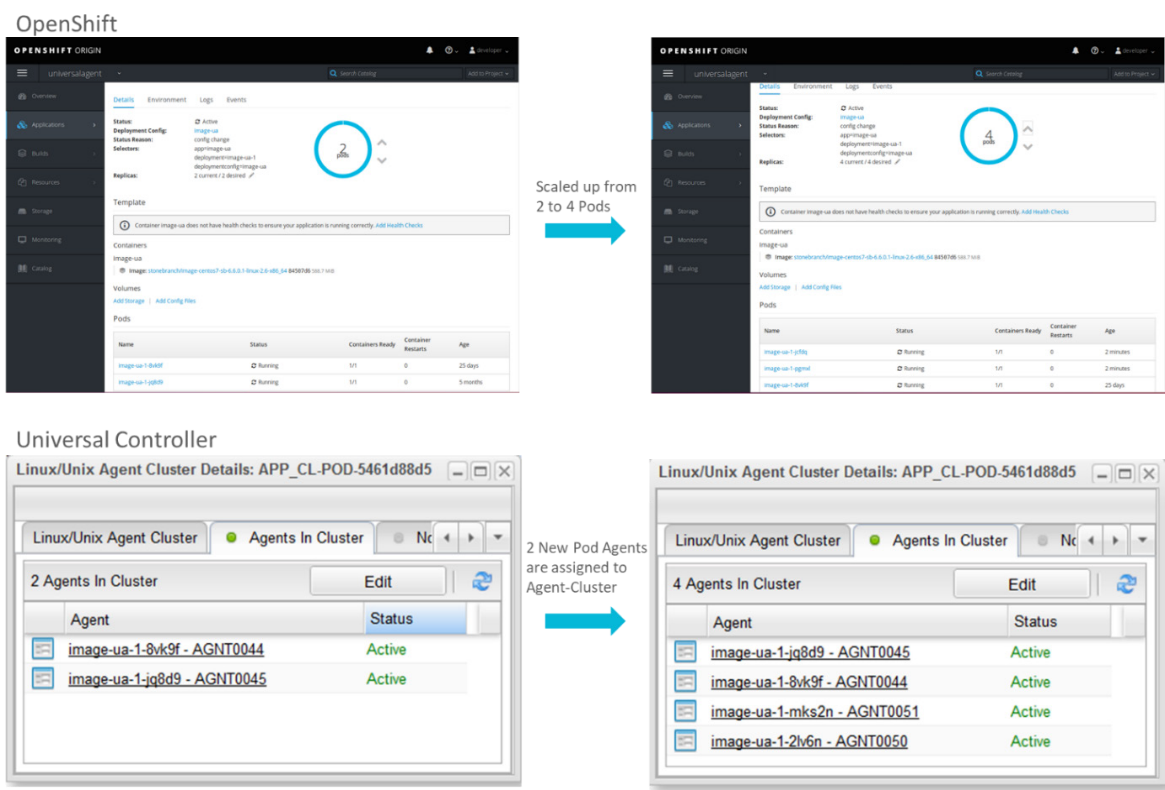
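To make the sidecar pattern more concrete, here is a minimal Python sketch that generates a Pod manifest with an application container and an agent container side by side. The image references, container names and the shared `emptyDir` volume are assumptions for illustration only, and the agent's own configuration (for example, how it locates the OMS message bus) is deliberately left out; use the image name and settings published in the Red Hat Ecosystem Catalog.

```python
import json


def pod_with_agent_sidecar(app_name, app_image, agent_image, shared_path="/data"):
    """Return a Pod manifest with an application container plus an agent sidecar.

    Both containers mount the same emptyDir volume (an assumption of this
    sketch) so that files received by the agent are visible to the application.
    """
    shared_mount = {"name": "shared-data", "mountPath": shared_path}
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": app_name, "labels": {"app": app_name}},
        "spec": {
            "containers": [
                {"name": app_name, "image": app_image,
                 "volumeMounts": [shared_mount]},
                {"name": "universal-agent", "image": agent_image,
                 "volumeMounts": [shared_mount]},
            ],
            "volumes": [{"name": "shared-data", "emptyDir": {}}],
        },
    }


manifest = pod_with_agent_sidecar(
    app_name="web-portal",
    app_image="registry.example.com/web-portal:1.0",             # hypothetical image
    agent_image="registry.example.com/universal-agent:latest",   # hypothetical image
)
print(json.dumps(manifest, indent=2))
```

The printed JSON could be saved to a file and applied with `oc apply -f`, or the same structure could be entered through the OpenShift dashboard.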

During the start of the POD, the Universal Agent is automatically assigned to a Universal Controller application cluster, providing various cluster functionalities like load-balancing or broadcast cluster, which allows users to send a file or command to all agents in the cluster simultaneously. In addition, file monitoring is supported. This allows for the automatic triggering of a file transfer (or other action) based on a file arrival in one or multiple PODs.
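The idea behind file-arrival triggering can be illustrated with a small Python sketch that compares two snapshots of a directory and fires a callback for each new file. This is a generic polling approach shown under our own assumptions, not a description of how the Universal Agent's file monitor works internally; the function names and the `orders.csv` file are hypothetical.

```python
import os
import tempfile


def snapshot(directory):
    """Return the set of file names currently present in the directory."""
    return {entry.name for entry in os.scandir(directory) if entry.is_file()}


def detect_arrivals(directory, known):
    """Compare against a previous snapshot; return (new_files, updated_snapshot)."""
    current = snapshot(directory)
    return sorted(current - known), current


def on_arrival(file_name):
    """Placeholder for the action to trigger, such as starting a file transfer."""
    return f"transfer triggered for {file_name}"


with tempfile.TemporaryDirectory() as watched:
    known = snapshot(watched)  # initial state: empty directory

    # Simulate a file arriving in the watched directory.
    with open(os.path.join(watched, "orders.csv"), "w") as handle:
        handle.write("id,amount\n1,100\n")

    arrivals, known = detect_arrivals(watched, known)
    events = [on_arrival(name) for name in arrivals]

print(events)
```

In a long-running monitor, `detect_arrivals` would be called in a loop at a fixed interval, with the returned snapshot passed into the next call.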

One dedicated Universal Controller application cluster is created for each OpenShift application. As soon as the Universal Agent registers with the Universal Controller message bus, it can send files to and receive files from any other Universal Agent installed on a server or mainframe within the IT landscape, or agent-less to and from any cloud storage (e.g., AWS). In addition, the application running in the POD can be scheduled like any other application and included in any business process automation workflow.

If the web-portal load increases, the OpenShift orchestration platform allows users to dynamically scale up the number of application instances by starting an additional POD. When doing this, all newly started PODs are automatically added to the Universal Controller agent cluster related to the application.
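The cluster behaviors described in this section (broadcast, lightest load, round robin, and new PODs joining automatically) can be modeled with a short Python sketch. The `AgentCluster` class, the POD names and the numeric load values are all hypothetical; this is a toy model for intuition rather than the Universal Controller's actual selection logic.

```python
class AgentCluster:
    """Toy model of an agent cluster with one member per running POD."""

    def __init__(self):
        self.loads = {}      # agent name -> current load (hypothetical metric)
        self._rr_index = 0   # position of the next round-robin pick

    def register(self, agent, load=0):
        """Add an agent, for example when a new POD starts."""
        self.loads[agent] = load

    def broadcast(self):
        """Every member receives the file or command."""
        return sorted(self.loads)

    def least_loaded(self):
        """Pick the member with the lightest current load."""
        return min(self.loads, key=self.loads.get)

    def round_robin(self):
        """Cycle through the members in name order."""
        agents = sorted(self.loads)
        agent = agents[self._rr_index % len(agents)]
        self._rr_index += 1
        return agent


cluster = AgentCluster()
cluster.register("pod-a", load=5)
cluster.register("pod-b", load=2)
cluster.register("pod-c", load=7)   # a POD added after scaling up

print(cluster.broadcast())
print(cluster.least_loaded())
print([cluster.round_robin() for _ in range(4)])
```

Because targets are resolved from the cluster's current membership at send time, a POD that registers after a scale-up is included in the next broadcast without any change to the workflow.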

Next Steps

If you already have Stonebranch Universal Automation Center and Red Hat OpenShift, you have two options.

- Head over to the Red Hat Ecosystem Catalog and download the Universal Agent for OpenShift. Then deploy the Red Hat certified Universal Agent container image as a sidecar container to any POD to which you want the data transferred.

- For a more turnkey approach, where you can install a pre-configured agent as an OpenShift Operator from within the OpenShift platform, you may download the agent from the Red Hat Marketplace, where the Agent as Operator will be available in the coming weeks.

If you don’t already work with Stonebranch, we invite you to take a look at the platform. Working with Kubernetes and OpenShift is just part of a much broader service orchestration and automation platform. You may request a free trial here. Once you have the UAC up and running, you can follow the same instructions above.

Ready to Try It Yourself?

To try out the more secure transfer of business data located on the mainframe, in the cloud, in a Hadoop cluster or on any (virtual) server, to or from an application running on OpenShift, only two steps are required:

- Deploy the Red Hat certified Universal Agent container image (provided through the public Docker Hub registry) as a sidecar container to any POD to which you want the data transferred.

- Request a Universal Controller cloud account on stonebranch.com.

If you want to transfer data from cloud storage or a Hadoop cluster to a POD, no additional Universal Agent is required. This can be done as part of a big data pipeline automation and orchestration initiative. If you want to transfer data from a mainframe or (virtual) server, you will need to install a Universal Agent on each (virtual) server or mainframe. The Universal Agent can be downloaded from stonebranch.com.

About the author

Red Hatter since 2018, technology historian and founder of The Museum of Art and Digital Entertainment. Two decades of journalism mixed with technology expertise, storytelling and oodles of computing experience from inception to ewaste recycling. I have taught or had my work used in classes at USF, SFSU, AAU, UC Law Hastings and Harvard Law.

I have worked with the EFF, Stanford, MIT, and Archive.org to brief the US Copyright Office and change US copyright law. We won multiple exemptions to the DMCA, accepted and implemented by the Librarian of Congress. My writings have appeared in Wired, Bloomberg, Make Magazine, SD Times, The Austin American Statesman, The Atlanta Journal Constitution and many other outlets.

I have been written about by the Wall Street Journal, The Washington Post, Wired and The Atlantic. I have been called "The Gertrude Stein of Video Games," an honor I accept, as I live less than a mile from her childhood home in Oakland, CA. I was project lead on the first successful institutional preservation and rebooting of the first massively multiplayer game, Habitat, for the C64, from 1986: https://neohabitat.org . I've consulted and collaborated with the NY MOMA, the Oakland Museum of California, Cisco, Semtech, Twilio, Game Developers Conference, NGNX, the Anti-Defamation League, the Library of Congress and the Oakland Public Library System on projects, contracts, and exhibitions.
