Open source software is the foundation of most applications deployed today, and containers have become the standard delivery mechanism for these applications. For many organizations, however, this raises a question: How do we secure our software supply chain and make sure the latest fixes for CVEs and other vulnerabilities are applied to our application stack?

The Red Hat container catalog contains a large number of certified container images for language runtimes, databases, and application services, giving organizations a trusted source for the container images they consume within their applications. The images that come from Red Hat are always signed and verified to ensure origin and integrity, and they are regularly updated to address bugs, CVEs, and vulnerabilities. Red Hat Universal Base Image (UBI) took this offering a step further and made a trusted set of base images available to all organizations, regardless of whether they are Red Hat customers.

While this addresses trust in the source of the images, it does not solve the challenge of keeping up with image updates, nor that of rebuilding application images on top of the updated base images.

OpenShift Container Platform has historically addressed this challenge with image streams. An image stream is an abstraction for referencing container images from within OpenShift, while the referenced images reside in an image registry such as the OpenShift internal registry, Quay, or another external registry. Image streams can define triggers that automatically invoke your builds and deployments when a new version of an image is available in the backing image registry. In effect, this enables rebuilding all images that are based on a particular base image as soon as a new version of that base image is available in the Red Hat container catalog, so that all images pick up the latest bug, CVE, and vulnerability fixes delivered in the latest base image.

The challenge, however, is that this capability is limited to BuildConfigs in OpenShift and does not allow more complex workflows to be triggered when images are updated in the Red Hat container catalog. Furthermore, it is limited to the scope of a single cluster and its internal OpenShift registry.

Fortunately, using Red Hat Quay as a central registry in combination with OpenShift Pipelines makes it possible to design sophisticated workflows for a secure software supply chain and to automatically perform any set of actions whenever images are pushed or updated, or when security vulnerabilities are discovered, in the Red Hat container catalog.

In this blog post, we will highlight how Red Hat Quay can be integrated with Tekton pipelines to trigger application rebuilds when images are updated in the Red Hat container catalog. At a high level, the flow will look like this:

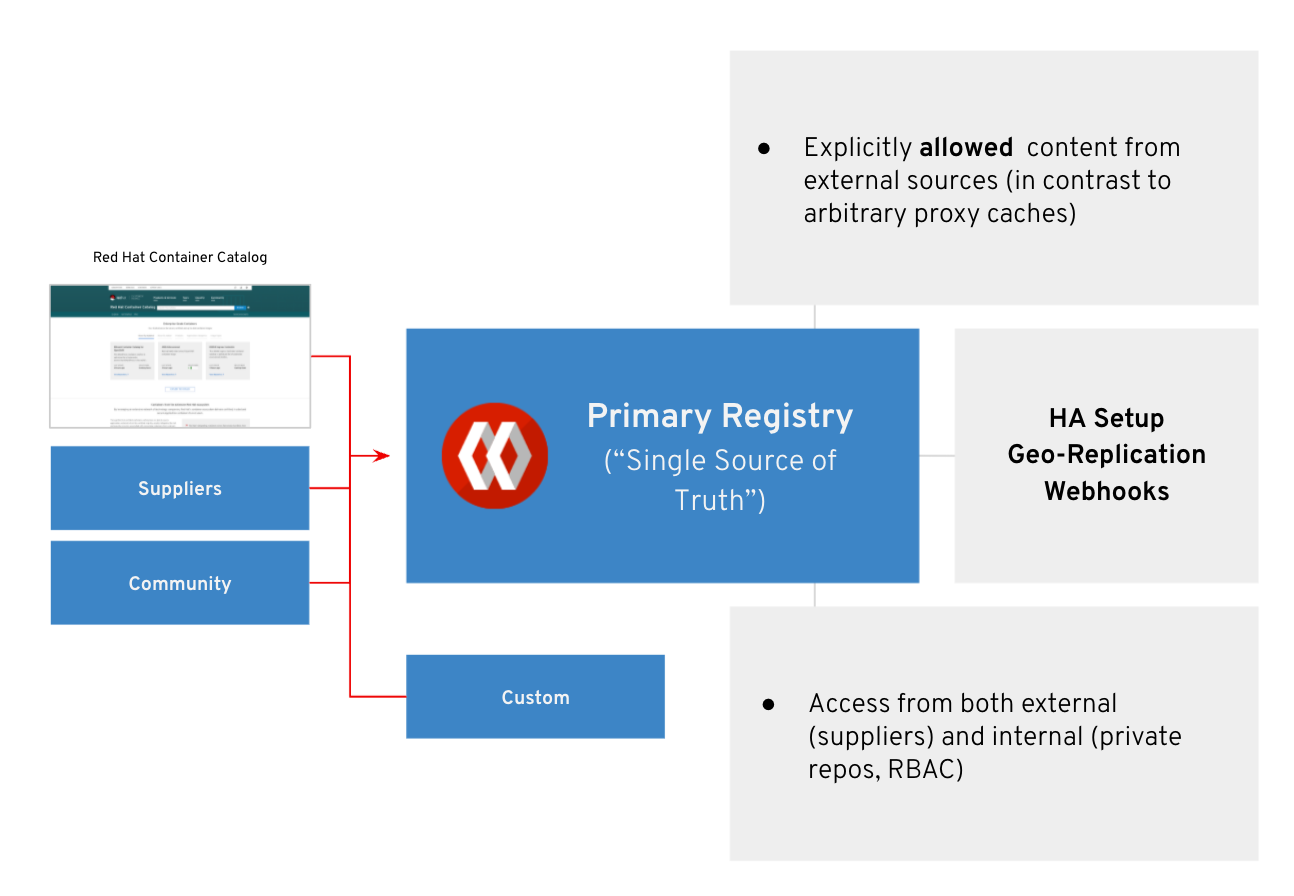

Red Hat Quay as a Content Ingress Point

The Red Hat container catalog is the official source for Red Hat’s containerized products. Because pulling from the catalog requires an active internet connection, many Red Hat customers already use a private registry like Red Hat Quay to automatically download newly released images from the catalog. In turn, these images are usually served internally to developers and to production OpenShift clusters that are not allowed to have a direct internet connection.

This form of automated content ingress is something that Red Hat Quay supports via its repository mirroring feature. It allows users to stay in sync with an arbitrary public container image repository. It is also often used as a way to bring in untrusted content from public container registries for further introspection by using Quay’s image scanning feature.

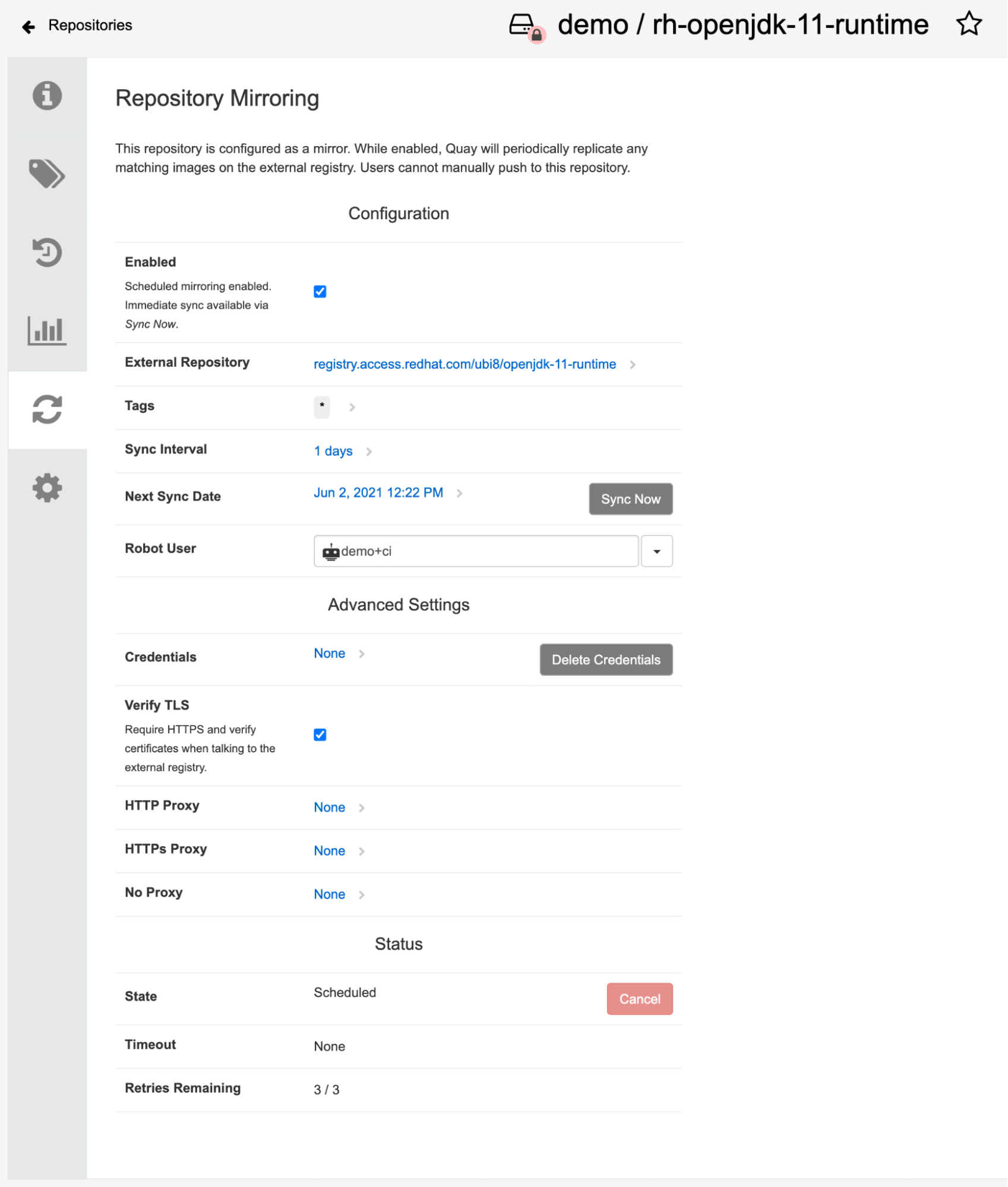

Below is an example of Quay repository mirroring configuration for a popular base image for Java developers:

This configuration would mirror the UBI8-based OpenJDK 11 runtime base image once a day and sync any new tags or updated tag references into this Quay repository. Note that additional images cannot be pushed manually to such a mirror repository, since Quay strives to keep its content in sync with the upstream registry.

As such, image tags that were deleted in the upstream repository will also be deleted in the mirror; tags that were overwritten in the upstream are also overwritten in the mirror, and so on.
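The sync behavior described above can be pictured as a comparison of two tag-to-digest maps. The following is only a minimal sketch of that idea, not Quay's actual implementation; the function name `plan_mirror_sync`, the tags, and the digests are made up for illustration.

```python
def plan_mirror_sync(upstream, mirror):
    """Compare tag -> digest maps and report what a sync would change in the mirror."""
    added = sorted(tag for tag in upstream if tag not in mirror)
    overwritten = sorted(
        tag for tag in upstream if tag in mirror and upstream[tag] != mirror[tag]
    )
    deleted = sorted(tag for tag in mirror if tag not in upstream)
    return {"added": added, "overwritten": overwritten, "deleted": deleted}


# Hypothetical state: upstream released 1.9-2, moved 1.9 to it, and dropped 1.8.
upstream = {"1.9": "sha256:bbb", "1.9-1": "sha256:aaa", "1.9-2": "sha256:bbb"}
mirror = {"1.9": "sha256:aaa", "1.9-1": "sha256:aaa", "1.8": "sha256:000"}

plan = plan_mirror_sync(upstream, mirror)
print(plan)
# {'added': ['1.9-2'], 'overwritten': ['1.9'], 'deleted': ['1.8']}
```

The overwritten floating tag (`1.9` in this made-up data) is the change that matters for the rebuild scenario discussed in this post.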

When a repository is configured as a mirror, Quay continuously discovers and mirrors all tags as they are added or removed from the remote source repository to make sure it stays in sync with the source repository. As an example, the following report on the mirrored repository shows the tags and images that are updated after a sync is completed:

Once images are mirrored into Quay, they are introspected for security vulnerabilities using Quay’s image scanning feature. This information can be used in other pipelines to make decisions about whether an image is acceptable for production usage.

In the scenario of this blog post, we want to automate the rebuild of a Java-based application whenever a new version of its base image is released. Two requirements need to be met for this to work:

- The application image build instructions (usually a Dockerfile) have to refer to the base image using a floating tag that is overwritten when the image is patched, for example, 1.9 instead of 1.9-1 or 1.9.2.

- The build process for the application has to be triggered automatically when the floating tag is overwritten.

The first requirement is handled by Red Hat. Stable major/minor version releases are maintained via floating tags. When vulnerabilities are patched, a new patch release is published, for example, 1.9-1. The tag 1.9 is maintained and updated to point to a newer image when, for example, 1.9-2 is released. For reproducibility and consistency, each patch release is also published under its own tag.
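On the application side, one way to check the first requirement is to inspect the `FROM` lines of the Dockerfile. The sketch below is a rough heuristic rather than an official tool: it assumes that a floating tag consists only of a major or major.minor version (as in the 1.9 versus 1.9-1 and 1.9.2 examples above), and the helper names and the sample Dockerfile are hypothetical.

```python
import re

# Assumption: a floating tag carries only a major or major.minor version ("1", "1.9").
# Tags such as "latest" are not covered by this heuristic.
FLOATING_TAG = re.compile(r"\d+(\.\d+)?")


def is_floating(tag):
    return FLOATING_TAG.fullmatch(tag) is not None


def base_image_tags(dockerfile_text):
    """Return (image, tag) for every FROM line that carries an explicit tag."""
    refs = []
    for line in dockerfile_text.splitlines():
        parts = line.split()
        if not parts or parts[0].upper() != "FROM":
            continue
        # Skip options such as --platform=... that may precede the image reference.
        args = [part for part in parts[1:] if not part.startswith("--")]
        if not args:
            continue
        ref = args[0]
        name, sep, tag = ref.rpartition(":")
        # A colon before the last "/" belongs to a registry port, not to a tag,
        # and digest references (image@sha256:...) carry no tag to check.
        if sep and "/" not in tag and "@" not in ref:
            refs.append((name, tag))
    return refs


dockerfile = """\
FROM quay.io/demo/rh-openjdk-11-runtime:1.9
COPY target/app.jar /deployments/
"""

for image, tag in base_image_tags(dockerfile):
    print(image, tag, "floating" if is_floating(tag) else "pinned")
```

A Dockerfile pinned to 1.9-1 would be reported as pinned, which means a rebuild triggered by a new patch release would still pick up the old base image.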

The second requirement can be fulfilled by Quay’s event notifications. This is a very flexible feature that can trigger various actions on different event types in a repository. For instance, it could send a Slack message when a new build job is scheduled for this image, or a Quay notification when a new security vulnerability is discovered, since Quay continuously updates the vulnerability information for its images.

When integrating with OpenShift Pipelines, Quay can call a webhook URL when a new image tag has been pushed and use a custom HTTP POST operation to supply interesting information about the new image:

Triggering Application Build Pipeline From Quay

OpenShift Pipelines enables using Tekton on OpenShift for creating Kubernetes-native continuous integration pipelines that automate various steps of application delivery. Using Tekton, you can create a pipeline that uses buildah for building the application image based on the Dockerfile that is provided with the application.

Tekton triggers provide a flexible mechanism for defining webhooks that conform to any source and are able to consume the payload provided by the source in the pipeline. Taking advantage of Tekton triggers, one can define webhooks that are adjusted to Quay notifications and can start the application build pipeline based on the information provided by Quay notifications.

The following is an example of the JSON payload provided by Quay notifications when new images are pushed to the mirror registry, which happens whenever images are updated in the Red Hat container catalog:

    {
      "name": "rh-openjdk-11-runtime",
      "repository": "demo/rh-openjdk-11-runtime",
      "namespace": "demo",
      "docker_url": "quay.io/demo/rh-openjdk-11-runtime",
      "homepage": "https://quay.io/repository/demo/rh-openjdk-11-runtime",
      "updated_tags": [
        "1.9-1"
      ]
    }

Using Tekton, a TriggerBinding can be defined to capture the Quay notification payload as parameters to be then made available to the application build pipeline:

    apiVersion: triggers.tekton.dev/v1alpha1
    kind: TriggerBinding
    metadata:
      name: quay-push
    spec:
      params:
        - name: repository_name
          value: $(body.name)
        - name: repository
          value: $(body.repository)
        - name: repository_namespace
          value: $(body.namespace)
        - name: repository_url
          value: $(body.docker_url)
        - name: repository_homepage
          value: $(body.homepage)
        - name: repository_updated_tag
          value: $(body.updated_tags[0])
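To make the mapping concrete, here is a small Python sketch that mimics what the binding does with the sample payload. It only illustrates the field extraction and is not how Tekton evaluates bindings internally; the `BINDING` table and the `resolve` helper are names invented for this example.

```python
import json

payload = json.loads("""
{
  "name": "rh-openjdk-11-runtime",
  "repository": "demo/rh-openjdk-11-runtime",
  "namespace": "demo",
  "docker_url": "quay.io/demo/rh-openjdk-11-runtime",
  "homepage": "https://quay.io/repository/demo/rh-openjdk-11-runtime",
  "updated_tags": ["1.9-1"]
}
""")

# Mirrors the TriggerBinding: parameter name -> path into the request body.
BINDING = {
    "repository_name": ["name"],
    "repository": ["repository"],
    "repository_namespace": ["namespace"],
    "repository_url": ["docker_url"],
    "repository_homepage": ["homepage"],
    "repository_updated_tag": ["updated_tags", 0],
}


def resolve(body, path):
    """Walk a list of keys and indexes into the parsed JSON body."""
    value = body
    for key in path:
        value = value[key]
    return value


params = {name: resolve(payload, path) for name, path in BINDING.items()}
print(params["repository_url"])          # quay.io/demo/rh-openjdk-11-runtime
print(params["repository_updated_tag"])  # 1.9-1
```

Note that the binding reads only the first entry of `updated_tags`, so a notification that lists several tags would pass just the first one on to the pipeline.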

The next step is to define a TriggerTemplate which specifies how these Quay notifications should be used when starting the application build pipeline:

    apiVersion: triggers.tekton.dev/v1alpha1
    kind: TriggerTemplate
    metadata:
      name: build-pipeline
    spec:
      params:
        - name: repository_name
        - name: repository
        - name: repository_namespace
        - name: repository_url
        - name: repository_homepage
        - name: repository_updated_tag
      resourcetemplates:
        - apiVersion: tekton.dev/v1beta1
          kind: PipelineRun
          metadata:
            generateName: build-pipeline-
            labels:
              tekton.dev/pipeline: build-pipeline
          spec:
            pipelineRef:
              name: build-pipeline
            params:
              - name: APP_IMAGE_TAG
                value: latest-$(tt.params.repository_updated_tag)
              - name: BASE_IMAGE_NAME
                value: $(tt.params.repository_url)
              - name: BASE_IMAGE_TAG
                value: $(tt.params.repository_updated_tag)
            ...

In the above example, the application build pipeline has three parameters that define how the application image should be tagged and which base image URL and tag should be used for building it. The values come from the Quay notification: the base image URL and the tag that became available after the new image was mirrored from the Red Hat container catalog are passed to the pipeline as the base image for rebuilding the application image.
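As a rough illustration of the resulting values, the following Python sketch substitutes the `$(tt.params.…)` placeholders the way the template above would for the sample notification. It is a simplified stand-in for Tekton's own substitution logic, and the `render` helper is a name made up for this example.

```python
import re

PLACEHOLDER = re.compile(r"\$\(tt\.params\.([A-Za-z0-9_]+)\)")


def render(value, params):
    """Replace every $(tt.params.<name>) placeholder with the matching parameter."""
    return PLACEHOLDER.sub(lambda match: params[match.group(1)], value)


# Values captured from the sample Quay notification shown earlier.
binding_params = {
    "repository_url": "quay.io/demo/rh-openjdk-11-runtime",
    "repository_updated_tag": "1.9-1",
}

pipeline_params = {
    "APP_IMAGE_TAG": render("latest-$(tt.params.repository_updated_tag)", binding_params),
    "BASE_IMAGE_NAME": render("$(tt.params.repository_url)", binding_params),
    "BASE_IMAGE_TAG": render("$(tt.params.repository_updated_tag)", binding_params),
}
base_image = f"{pipeline_params['BASE_IMAGE_NAME']}:{pipeline_params['BASE_IMAGE_TAG']}"

print(pipeline_params["APP_IMAGE_TAG"])  # latest-1.9-1
print(base_image)                        # quay.io/demo/rh-openjdk-11-runtime:1.9-1
```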

After creating a Tekton event listener that uses the Quay push TriggerBinding and the application build pipeline TriggerTemplate, you could go to the settings of the mirrored base image repository on Quay and add a notification on “Push to Repository” events to send a webhook to the webhook URL that is exposed by the Tekton event listener.
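Before wiring up the real notification in Quay, it can help to send the event listener a hand-crafted request that imitates the payload shown earlier. The sketch below uses only the Python standard library; the listener URL is a placeholder for whatever route or service address your event listener exposes, and the function names are hypothetical.

```python
import json
import urllib.request

# Hypothetical URL: replace with the address exposed by your Tekton event listener.
LISTENER_URL = "http://el-quay-listener.example.com"

# Same shape as the sample Quay notification payload shown earlier.
SAMPLE_PAYLOAD = {
    "name": "rh-openjdk-11-runtime",
    "repository": "demo/rh-openjdk-11-runtime",
    "namespace": "demo",
    "docker_url": "quay.io/demo/rh-openjdk-11-runtime",
    "homepage": "https://quay.io/repository/demo/rh-openjdk-11-runtime",
    "updated_tags": ["1.9-1"],
}


def build_notification_request(url, payload):
    """Build an HTTP POST request carrying the payload as a JSON body."""
    body = json.dumps(payload).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send_notification(url, payload, timeout=10):
    """Send the request and return the HTTP status code of the response."""
    request = build_notification_request(url, payload)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.status


request = build_notification_request(LISTENER_URL, SAMPLE_PAYLOAD)
print(request.get_method(), request.full_url)
# To actually fire the webhook against a reachable listener:
# send_notification(LISTENER_URL, SAMPLE_PAYLOAD)
```

If the listener accepts the request, a new PipelineRun with the `build-pipeline-` prefix should appear in the namespace, which confirms the binding and template are wired together before Quay is involved.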

For a complete example of the Tekton pipeline and trigger definitions, refer to the following GitHub repository: https://github.com/siamaksade/quay-mirror-pipeline.

This setup effectively chains image push events with container image (re)builds in Tekton and relieves the owner of the application from the burden of manually discovering and adopting new base image versions. Since there are also notifications available for newly discovered vulnerabilities in existing images, this mechanism could also be used to rebuild the application using newer patch versions of application dependencies, but we will save this topic for another blog post.

About the authors

Siamak Sadeghianfar is a member of the Hybrid Cloud product management team at Red Hat leading the cloud-native application build and delivery on OpenShift.
