In an earlier blog, we wrote about how very low latencies in Java-based microservices can be achieved through our plug-in wrapper. That solution was general in nature, applicable to any API service.

In this blog, we show that the plug-in wrapper works with a specific open source microservices framework, Light-4-J. In particular, we took an implementation of a microservices chaining tutorial, extended it, and applied our Java plug-in wrapper API management component to it.

As we stated in our first blog, this approach is well suited to one particular use case: internal API traffic, typically microservice to microservice. Services exposed to external parties, outside the DMZ, can continue to use the API gateway deployment for its routing and security capabilities. Indeed, this differentiates Red Hat 3scale from other vendors, in that both the plug-in deployment and the gateway deployment are feasible.

Figure 1 - Plug-in approach: API Management intelligence and configuration are decoupled from traffic enforcement and reporting

Context

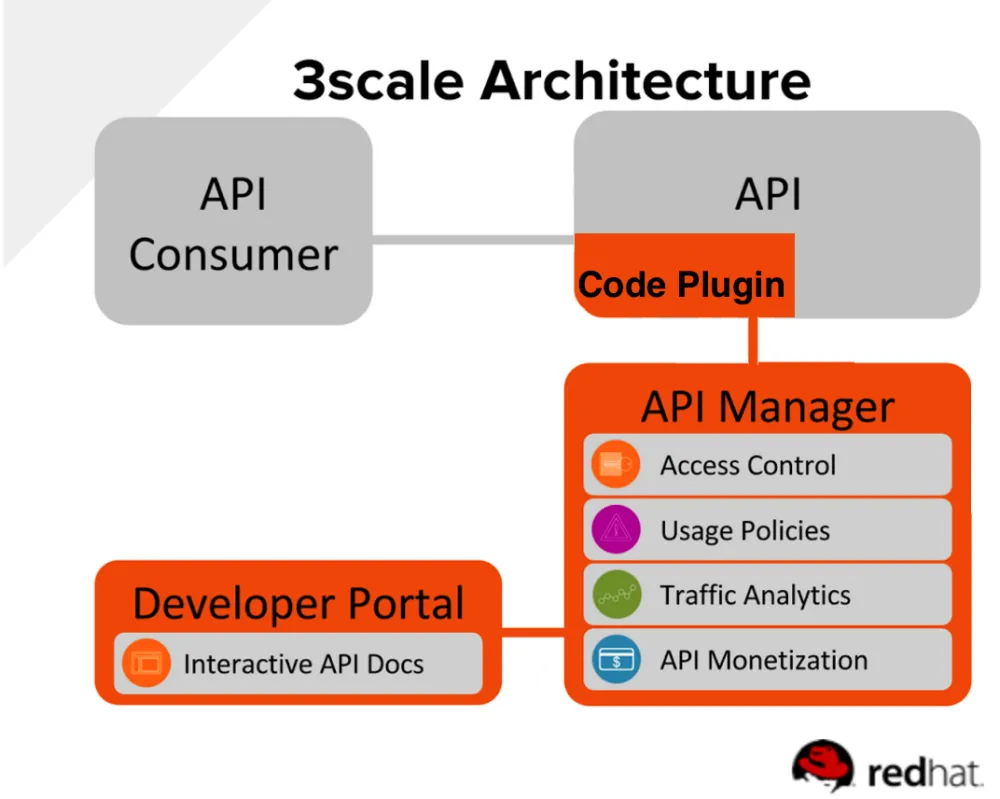

First, let’s add some context on Red Hat 3scale through a depiction of our architecture.

With Red Hat 3scale, you have total flexibility as to how you implement API traffic authorization and reporting. As you can see in Figure 1, we separate API managerial concerns from API traffic gatekeeping or enforcement concerns. The diagram shows the approach we describe in this blog, i.e. code plug-in architecture. It represents an alternative to an API gateway for use in the specific cases mentioned above. This flexibility of choice allows you to architect the optimal topology for your requirements without the need to consider costs (we don’t charge for gateways or plug-ins).

The other element, decoupled from the enforcement components, is the central API Manager. It allows API providers to:

- Apply access control and security. Multiple authentication modes are available, including API key, OAuth 2.0 (including OpenID Connect), and delegation to external identity providers.

- Create usage policies. Consumers can be segmented at the application and individual level, so that different classes of API user (e.g. internal users, trusted partners, or anonymous web signup users) can be granted different levels of API access.

- Get high level or detailed views into API traffic usage patterns and analytics.

- Apply API monetization. This can be direct API monetization or internal chargebacks between departments.

- Configure and expose a first class developer portal - the external face of the API, typically branded to the corporate look and feel of the provider. This allows API consumers to onboard and learn about the API, for example, using Swagger.

Objective

We wanted to make the five managerial features above available to a Light-4-J microservices architecture, while keeping latency and complexity to an absolute minimum. Of the five features, our most immediate requirements were usage policies and traffic analytics. Specifically, we needed to:

- Authorize traffic - ensuring calling clients have access to the services and endpoints they’re trying to hit, according to the policies defined.

- Report the traffic - so it can be viewed in the analytics component of 3scale API Management.

Our customer favored the code plug-in approach over the gateway approach for use with the Light-4-J microservices framework.

Solution

On the microservices API side, we used the Light-4-J interceptor component. What is neat about this component is that it is not only pluggable but also configuration based. This makes the plug-in itself very non-intrusive to the API that delivers the functionality.
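To illustrate why a pluggable, configuration-based interceptor keeps security out of the functional API, here is a minimal sketch in plain Java. It does not use Light-4-J's actual handler API: `Handler`, `InterceptorChain`, and `ApiKeyInterceptor` are hypothetical names invented for this example, and the list of enabled interceptors stands in for whatever the framework would read from its configuration.

```java
import java.util.List;
import java.util.Map;
import java.util.function.Function;

/** Hypothetical minimal handler type; Light-4-J's real handler API differs. */
interface Handler {
    String handle(Map<String, String> request);
}

final class InterceptorChain {
    /**
     * Wraps the functional handler with the interceptors named in `enabled`
     * (assumed to come from configuration), so the first name runs first.
     */
    static Handler build(Handler functionalApi,
                         List<String> enabled,
                         Map<String, Function<Handler, Handler>> registry) {
        Handler chain = functionalApi;
        // Wrap from last to first so the first configured interceptor is outermost.
        for (int i = enabled.size() - 1; i >= 0; i--) {
            String name = enabled.get(i);
            Function<Handler, Handler> wrap = registry.get(name);
            if (wrap == null) {
                throw new IllegalArgumentException("Unknown interceptor: " + name);
            }
            chain = wrap.apply(chain);
        }
        return chain;
    }
}

final class ApiKeyInterceptor {
    /** Illustrative check only: passes the request on if the API key matches. */
    static Function<Handler, Handler> requiring(String expectedKey) {
        return next -> request ->
                expectedKey.equals(request.get("apikey"))
                        ? next.handle(request)
                        : "403 Forbidden";
    }
}
```

The functional handler never references the interceptor. Removing the interceptor's name from the configured list, or swapping in a different one, changes the security behavior without touching the API code.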

We then invoked a custom wrapper on top of the standard out-of-the-box (OOTB) plug-ins offered by 3scale.

The custom wrapper over the supported plug-in provides the following functionality:

- Cache credentials. This helped tremendously with the latency requirements.

- Asynchronous API manager invocation for updating metrics.

To reiterate, the solution is noteworthy for the following reasons:

- The entire security implementation is non-intrusive to the functional API.

- As a result, the security implementation can be changed (say, from an API key to an API key pair to a JWT) without touching the functional API.

- It facilitates parallel development, with one set of developers focusing on one area, such as creating the functional API, and another team focusing on another area, such as the security implementation.

- It is applicable across microservices frameworks, which helps when a customer uses more than one.

Implementation

Let’s take a look at our API calls before we add the 3scale plug-in wrappers. We have four services; let’s call them A, B, C and D. Some are chained and some are self-contained. (Initial code was taken from this GitHub repo.)

Figure 2 - API call flow for microservices A, B, C and D

As we can see, calls to A and B involve chained calls to C and D. Calls to C and D are self-contained. We’ve expanded the implementation by adding 25 endpoints to each microservice. There are therefore 100 endpoints in total.

Next we’ll depict the call flow when we add the plug-in wrappers to our four microservices. This shows the most complex API call - a call to one of A’s endpoints. As shown above, a call to A ultimately results in a call to B, C, and D through chaining.

Figure 3 - API call flow to one of A’s endpoints, including 3scale

NOTE: 3JPW stands for the 3scale Java plug-in wrapper.

Here the client, which is itself likely to be a microservice, makes a call to one of microservice A’s 25 endpoints, possibly using the URL path /apia/data25?apikey=xxx. There are 100 clients, each with its own API key. The call flow shows each microservice making a call to the 3scale API manager to authorize and report that request. As the next diagram shows, we cache 3scale’s authorization responses inside the plug-in wrapper.

Figure 4 - Plug-in wrapper communication with 3scale API manager and its effects

NOTE: MS body refers to the actual body of the microservice, i.e., its implementation code.

If the cached response indicates:

1) A successful authorization, we allow the call straight through to the microservice body to process the request. 3scale is contacted asynchronously, i.e. after the request has been passed to the microservice.

2) An unsuccessful or no authorization, the plug-in wrapper will make a blocking or synchronous call to 3scale. Access to the microservice will only be granted if 3scale allows it.

As the vast majority of API calls are successfully authorized, the vast majority of calls don’t wait for 3scale to respond, which is what makes this extremely low latency solution possible. 3scale still keeps track of all calls and only allows authorized calls through.
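The two cases above can be sketched in Java as follows. This is a simplified illustration under stated assumptions, not the wrapper's actual code: `ApiManagerClient` is a hypothetical stand-in for the authorize-and-report call to the 3scale API manager, `CachingAuthorizer` is an invented name, and keying the cache on API key plus endpoint is an assumption made for the example.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.Executor;

/** Hypothetical stand-in for the authorize-and-report call to the API manager. */
interface ApiManagerClient {
    boolean authorizeAndReport(String apiKey, String endpoint);
}

final class CachingAuthorizer {
    private final ApiManagerClient client;
    private final Executor background; // runs the asynchronous calls
    private final Map<String, Boolean> cache = new ConcurrentHashMap<>();

    CachingAuthorizer(ApiManagerClient client, Executor background) {
        this.client = client;
        this.background = background;
    }

    /** Returns true if the request may proceed to the microservice body. */
    boolean isAllowed(String apiKey, String endpoint) {
        String cacheKey = apiKey + "|" + endpoint; // assumed cache key shape
        if (Boolean.TRUE.equals(cache.get(cacheKey))) {
            // Case 1: cached success. Let the request through immediately and
            // contact the API manager in the background to report the hit and
            // refresh the cached authorization.
            background.execute(() -> refresh(cacheKey, apiKey, endpoint));
            return true;
        }
        // Case 2: cached failure or nothing cached. Block on the API manager
        // and grant access only if it allows the call.
        return refresh(cacheKey, apiKey, endpoint);
    }

    private boolean refresh(String cacheKey, String apiKey, String endpoint) {
        boolean allowed = client.authorizeAndReport(apiKey, endpoint);
        cache.put(cacheKey, allowed);
        return allowed;
    }
}
```

In a real deployment the `Executor` would typically be a thread pool, so that the background call never delays the request. Because the background call also updates the cache, a client whose access is revoked is let through at most until that update lands, after which its calls fall back to the blocking path.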

Results

We ran several tests ranging from 20,000 requests to 1,000,000 requests. We consistently achieved average latencies of approximately 1ms per call.

Here are some test parameters:

- JMeter as our load testing tool

- 10 concurrent threads

- 100 clients, each with its own API key

Figure 5 - Latencies were consistently 1ms per call on average

We also tested the real-life scenario of authorization failures by making a small percentage of requests fail authorization. This did not materially alter our average throughput or latency values.

Conclusion

We’ve demonstrated the use of the Java plug-in wrapper as a traffic management agent to authorize and report on API traffic to the Light-4-J microservices framework. This mechanism enables us to tap into the API management capabilities provided by Red Hat 3scale with very low latencies. The approach is particularly applicable to microservice to microservice API calls, such as those in our example. In these situations, the plug-in wrapper approach is a suitable alternative to an API gateway, as its overhead in terms of latency and complexity is lower.

Red Hat 3scale API Management provides a combination of deployment options that few other vendors offer:

- Gateway deployment for traditional brownfield services

- Plug-in deployment for greenfield microservices

The code for this example is available at https://github.com/tnscorcoran/light-4-j-plugin-wrapper.

Future

Watch this space. In future posts, we plan to:

- Demonstrate such integrations with other microservice frameworks.

- Provide other enhancements, such as batched writes to the API manager.

- Provide more performance tuning pointers.
