Scaling your Kubernetes infrastructure across multiple clusters brings the challenge of efficient lifecycle management. Luckily, the solution lies within the Multicluster Engine for Kubernetes operator (MCE). Whether you opt for this standalone operator or Red Hat Advanced Cluster Management for Kubernetes, MCE empowers you to handle your clusters' lifecycle seamlessly.

The latest update of the Assisted Installer lets you deploy the MCE operator on your cluster as part of the installation. With this integration, your new OpenShift cluster becomes the cluster that manages all your clusters (your "hub cluster"), and it also becomes the focal point for day 2 lifecycle management of the hub cluster itself. Even if you initially plan to have only one cluster, MCE is handy for tasks such as adding worker nodes to your cluster and removing them.

Key Points

- Deploy the multicluster engine for Kubernetes operator (MCE) with Assisted Installer.

- Add nodes to your hub cluster.

- Transform your new OpenShift cluster into a central hub of clusters from the installation.

- Manage the lifecycle of multiple OpenShift clusters, including your hub cluster.

Deploy Your Cluster and Enable MCE

Let’s deploy a new cluster and enable MCE along with the Logical Volume Manager operator (LVMS), which provides the storage that MCE needs to be able to manage bare metal clusters.

Head to https://console.redhat.com/preview/openshift/create/datacenter and create a cluster. I'll call this cluster 'pm-cluster' (you will see references to this cluster name in the rest of this guide) and for simplicity I'll start with a Single-Node OpenShift cluster (SNO).

Go through the rest of the installation process, and when you first log in as 'kubeadmin', you’ll be presented with the MCE user interface.

Log Into Your New Cluster

The first screen you’ll see as the kubeadmin user is the MCE user interface.

Note that it shows a cluster with the name 'local-cluster'. This is actually the cluster I named pm-cluster: MCE sees it as its local cluster and uses the name local-cluster, regardless of what you named the cluster at installation time. We will use this local-cluster name later in this blog post to complete the configuration.

While you may think that the cluster is already managed by MCE since it’s in the cluster list, it’s not yet, and we will import it in the next steps.

Verify that LVMS Works

We'll get back to the UI later. Now let's check on the command line that MCE has its storage.

Go to a terminal, in my case a macOS terminal, and point the KUBECONFIG environment variable at the kubeconfig file to get ready to use 'oc' against your new cluster.

Verify that the LVMS storage has created the default storage class:

% ./oc get storageclass
NAME                 PROVISIONER   RECLAIMPOLICY   VOLUMEBINDINGMODE      ALLOWVOLUMEEXPANSION   AGE
lvms-vg1 (default)   topolvm.io    Delete          WaitForFirstConsumer   true                   82m

Verify that the LVMCluster is ready (see docs):

% ./oc get lvmclusters.lvm.topolvm.io -n openshift-storage -ojsonpath='{.items[*].status.deviceClassStatuses[*]}'
{"name":"vg1","nodeStatus":[{"devices":["/dev/sdb"],"node":"node47.pemlab.rdu2.redhat.com","status":"Ready"}]}

Note that the output line says that its status is Ready, so we have storage ready and are good to go.
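If you would rather script this check than read the JSON by eye, here is a minimal Python sketch. It assumes the jsonpath query prints one or more JSON objects separated by whitespace, as in the output above; the function names are my own and are not part of any Red Hat tooling.

```python
import json


def device_class_statuses(jsonpath_output):
    """Parse the whitespace-separated JSON objects printed by the jsonpath query."""
    decoder = json.JSONDecoder()
    # Drop the "%" that zsh prints after output lacking a trailing newline, if it was copied.
    text = jsonpath_output.strip().rstrip("%").strip()
    statuses, pos = [], 0
    while pos < len(text):
        obj, pos = decoder.raw_decode(text, pos)
        statuses.append(obj)
        # Skip whitespace between concatenated objects.
        while pos < len(text) and text[pos].isspace():
            pos += 1
    return statuses


def lvm_storage_ready(jsonpath_output):
    """True only if at least one node is listed and every listed node reports Ready."""
    statuses = device_class_statuses(jsonpath_output)
    nodes = [n for s in statuses for n in s.get("nodeStatus", [])]
    return bool(nodes) and all(n.get("status") == "Ready" for n in nodes)


sample = '{"name":"vg1","nodeStatus":[{"devices":["/dev/sdb"],"node":"node47.pemlab.rdu2.redhat.com","status":"Ready"}]}'
print(lvm_storage_ready(sample))
```

Treating an empty result as "not ready" is a deliberate choice here: no device class status at all usually means the LVMCluster has not been reconciled yet.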

Enable the Bare Metal Operator (and Metal3)

The Bare Metal Operator is part of the Metal3 Kubernetes Project (Metal3.io) and it will be used by MCE to deploy OpenShift nodes. It's pre-installed and is enabled by creating a Provisioning CR as follows:

Provisioning.yaml

apiVersion: metal3.io/v1alpha1
kind: Provisioning
metadata:
  name: provisioning-configuration
spec:
  provisioningNetwork: "Disabled"
  watchAllNamespaces: false

% ./oc apply -f Provisioning.yaml

You should now see the Metal3 pods (metal3, metal3-image-customization, and ironic-proxy) starting up:

% ./oc get pods -n openshift-machine-api
NAME                                                  READY   STATUS              RESTARTS   AGE
cluster-autoscaler-operator-7465989c57-52dxv          2/2     Running             0          5h34m
cluster-baremetal-operator-b66949cdd-9wdrc            2/2     Running             0          5h34m
control-plane-machine-set-operator-7985fcb674-8ch5l   1/1     Running             0          5h34m
ironic-proxy-cb66b                                    0/1     ContainerCreating   0          1s
machine-api-operator-5b58f77484-2qc7n                 2/2     Running             0          5h34m
metal3-6698bf578-xhw7t                                0/5     Init:0/1            0          4s
metal3-image-customization-5747947db4-4wbpq           0/1     Init:0/1            0          2s
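The new pods take a little while to initialize. As a rough sketch, the following Python helper lists the pods that are not fully up yet, given the text printed by 'oc get pods'. It assumes the default column layout shown above (NAME, READY, STATUS first), and the function name is hypothetical. Note that it would also flag pods in a Completed state, which is fine for this namespace but may not suit others.

```python
def pods_not_ready(get_pods_output):
    """Return names of pods whose READY count is incomplete or whose STATUS is not Running."""
    pending = []
    lines = [ln for ln in get_pods_output.splitlines() if ln.strip()]
    for line in lines[1:]:  # skip the header row
        name, ready, status = line.split()[:3]
        have, want = ready.split("/")
        if status != "Running" or int(have) < int(want):
            pending.append(name)
    return pending


# A shortened copy of the output above.
sample = """\
NAME                                          READY   STATUS              RESTARTS   AGE
cluster-baremetal-operator-b66949cdd-9wdrc    2/2     Running             0          5h34m
ironic-proxy-cb66b                            0/1     ContainerCreating   0          1s
metal3-6698bf578-xhw7t                        0/5     Init:0/1            0          4s
metal3-image-customization-5747947db4-4wbpq   0/1     Init:0/1            0          2s
"""
print(pods_not_ready(sample))
```

Re-run the 'oc get pods' command until this list comes back empty before moving on.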

Make Your Cluster Manageable by MCE

Now you want to have your cluster managed by MCE. To do that, you need to create and connect a series of pieces (custom resources) together:

- A Secret containing your OpenShift pull secret, so that nodes can be installed into your cluster.

- A Secret containing the kubeconfig file of your cluster, so that MCE can manage it.

- An AgentClusterInstall and a ClusterDeployment: these CRs represent your cluster in MCE and hold information such as the reference to the kubeconfig Secret.

More information about this can be found here.

While this allows you to get to know the internals of MCE and its components, we expect this to become much easier in future releases.

Let's get to it.

Create the Secret for Your Pull Secret

Get your pull secret from console.redhat.com and paste it into the following file:

Secret.yaml

apiVersion: v1
kind: Secret
type: kubernetes.io/dockerconfigjson
metadata:
  name: pull-secret
  namespace: local-cluster
stringData:
  .dockerconfigjson: 'YOUR_PULL_SECRET_JSON_GOES_HERE'

% ./oc apply -f Secret.yaml
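Pasting a long JSON string into YAML by hand is easy to get wrong. As an alternative, this Python sketch builds the same Secret as a JSON manifest and rejects input that does not look like a pull secret. It assumes the pull secret is a JSON object with a top-level "auths" key, which is the format console.redhat.com provides; the function name and the example registry are made up for illustration.

```python
import json


def pull_secret_manifest(pull_secret_json, namespace="local-cluster"):
    """Build the Secret manifest, refusing input that is not a pull secret."""
    parsed = json.loads(pull_secret_json)  # raises ValueError on malformed JSON
    if not isinstance(parsed, dict) or "auths" not in parsed:
        raise ValueError("pull secret must be a JSON object with an 'auths' key")
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "type": "kubernetes.io/dockerconfigjson",
        "metadata": {"name": "pull-secret", "namespace": namespace},
        # Re-serialize compactly so stray whitespace or newlines from pasting are dropped.
        "stringData": {".dockerconfigjson": json.dumps(parsed, separators=(",", ":"))},
    }


# Placeholder value for illustration only; a real pull secret has one entry per registry.
example = '{"auths": {"registry.example.com": {"auth": "ZXhhbXBsZQ=="}}}'
print(json.dumps(pull_secret_manifest(example), indent=2))
```

Because 'oc apply -f' accepts JSON as well as YAML, you can save the printed manifest to a file and apply it the same way as Secret.yaml.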

Create the Secret for Your Cluster's kubeconfig

Pass the path to your cluster's kubeconfig file to this command (in my case the file is named kubeconfig-noingress):

% ./oc -n local-cluster create secret generic local-cluster --from-file=kubeconfig=kubeconfig-noingress
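To make it clearer what this command creates, here is a small Python sketch of the equivalent manifest: an Opaque Secret whose single data key is the part before the "=" in --from-file, holding the base64-encoded file content. The function name is hypothetical and the kubeconfig bytes are a stand-in.

```python
import base64


def kubeconfig_secret_manifest(kubeconfig_bytes, name="local-cluster",
                               namespace="local-cluster"):
    """Mirror what `create secret generic --from-file=kubeconfig=<path>` produces."""
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "type": "Opaque",
        "metadata": {"name": name, "namespace": namespace},
        # The key name comes from the left side of --from-file; the value is base64-encoded.
        "data": {"kubeconfig": base64.b64encode(kubeconfig_bytes).decode("ascii")},
    }


# Stand-in content for illustration; in practice read the bytes from your kubeconfig file.
manifest = kubeconfig_secret_manifest(b"apiVersion: v1\nkind: Config\n")
print(manifest["data"]["kubeconfig"])
```

The Secret name matters as much as the key: the ClusterDeployment created later refers to this Secret by the name local-cluster.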

Create the AgentClusterInstall

AgentClusterInstall.yaml

apiVersion: extensions.hive.openshift.io/v1beta1
kind: AgentClusterInstall
metadata:
  name: local-cluster
  namespace: local-cluster
spec:
  networking:
    userManagedNetworking: true
  clusterDeploymentRef:
    name: local-cluster
  imageSetRef:
    name: openshift-v4.13.6
  provisionRequirements:
    controlPlaneAgents: 1

% ./oc apply -f AgentClusterInstall.yaml

Create the ClusterDeployment

Note that it uses the actual cluster name 'pm-cluster' and that it refers to the pull secret at the bottom.

ClusterDeployment.yaml

apiVersion: hive.openshift.io/v1
kind: ClusterDeployment
metadata:
  name: local-cluster
  namespace: local-cluster
spec:
  baseDomain: pemlab.rdu2.redhat.com
  installed: true
  clusterMetadata:
    adminKubeconfigSecretRef:
      name: local-cluster
    clusterID: ""
    infraID: ""
  clusterInstallRef:
    group: extensions.hive.openshift.io
    kind: AgentClusterInstall
    name: local-cluster
    version: v1beta1
  clusterName: pm-cluster
  platform:
    agentBareMetal:
      agentSelector:
        matchLabels:
          foo: bar
  pullSecretRef:
    name: pull-secret

% ./oc apply -f ClusterDeployment.yaml
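The four resources only work if their names line up: the ClusterDeployment and AgentClusterInstall point at each other, and the ClusterDeployment points at both Secrets. The following Python sketch spells out those cross-references as a consistency check. It is an illustration of how the pieces connect, not a validation that MCE itself performs, and the function name is my own.

```python
def reference_problems(cluster_deployment, agent_cluster_install, secret_names):
    """Return human-readable mismatches between the manifests; an empty list means consistent."""
    cd_meta, cd_spec = cluster_deployment["metadata"], cluster_deployment["spec"]
    aci_meta, aci_spec = agent_cluster_install["metadata"], agent_cluster_install["spec"]
    problems = []
    if cd_meta["namespace"] != aci_meta["namespace"]:
        problems.append("ClusterDeployment and AgentClusterInstall are in different namespaces")
    if cd_spec["clusterInstallRef"]["name"] != aci_meta["name"]:
        problems.append("clusterInstallRef.name does not match the AgentClusterInstall name")
    if aci_spec["clusterDeploymentRef"]["name"] != cd_meta["name"]:
        problems.append("clusterDeploymentRef.name does not match the ClusterDeployment name")
    for ref in (cd_spec["pullSecretRef"]["name"],
                cd_spec["clusterMetadata"]["adminKubeconfigSecretRef"]["name"]):
        if ref not in secret_names:
            problems.append(f"Secret '{ref}' is referenced but was not created")
    return problems


# Only the fields relevant to the check, copied from the manifests in this post.
cd = {
    "metadata": {"name": "local-cluster", "namespace": "local-cluster"},
    "spec": {
        "clusterInstallRef": {"name": "local-cluster"},
        "clusterMetadata": {"adminKubeconfigSecretRef": {"name": "local-cluster"}},
        "pullSecretRef": {"name": "pull-secret"},
    },
}
aci = {
    "metadata": {"name": "local-cluster", "namespace": "local-cluster"},
    "spec": {"clusterDeploymentRef": {"name": "local-cluster"}},
}
print(reference_problems(cd, aci, {"pull-secret", "local-cluster"}))
```

If you rename anything while adapting the manifests, these are the fields to update together.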

Configure the Host Inventory in MCE

Now that pm-cluster (local-cluster to MCE) is managed by MCE, you can test the power of MCE by adding a node to it. To do this, you need a host inventory, an MCE construct that keeps track of the hosts available for use in clusters.

Click Infrastructure -> Host Inventory -> Configure host inventory settings. Use defaults as in the screenshot below and click Configure. It will take a few minutes for the configuration to take effect. You’ll eventually see "Host inventory configured successfully".

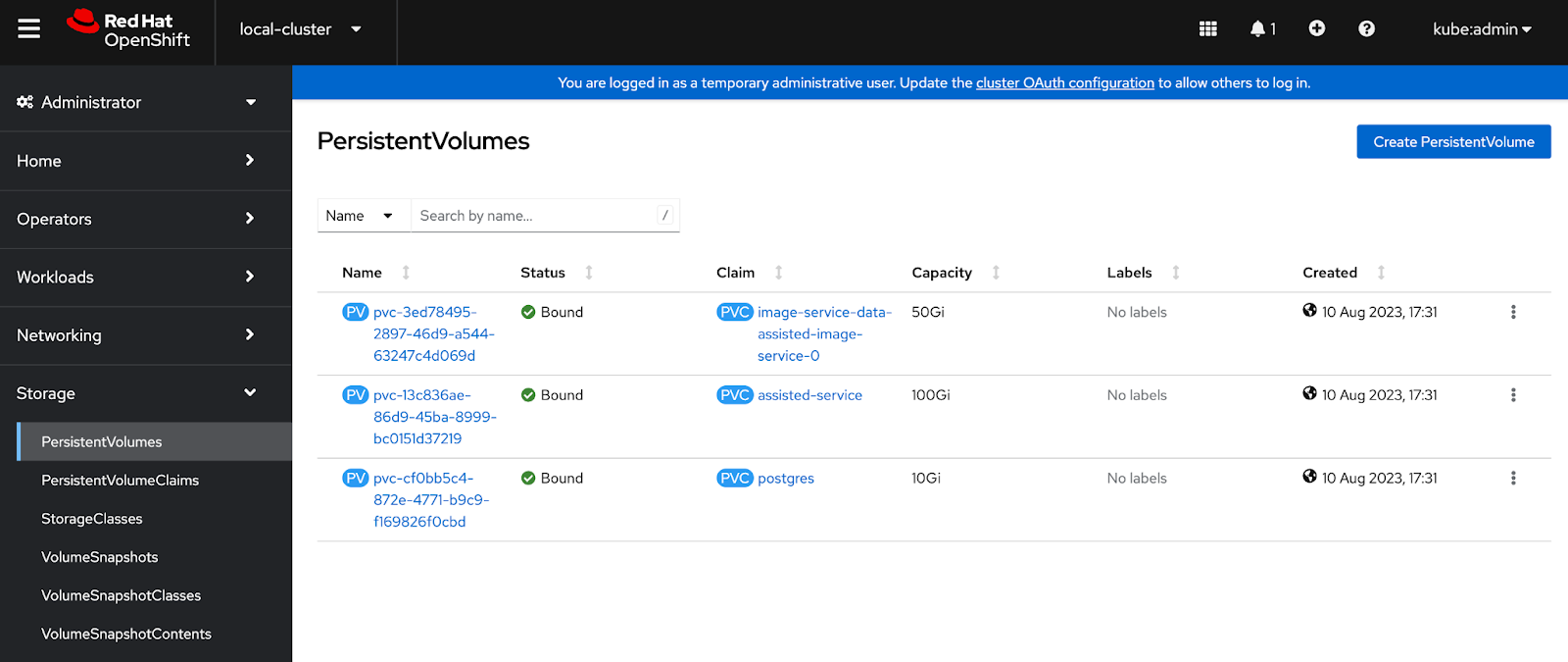

Note that the storage required by MCE and provided by LVMS, which we installed along with it, has been created now.

Create an Infrastructure Environment

You have configured the infrastructure environment capability in MCE. Now let’s create one to add a host to it, which you will then add to the cluster.

Have your pull secret and SSH public key ready to pass to the infrastructure environment you are about to create. Click 'Create infrastructure environment', fill in the required fields, and click Create.

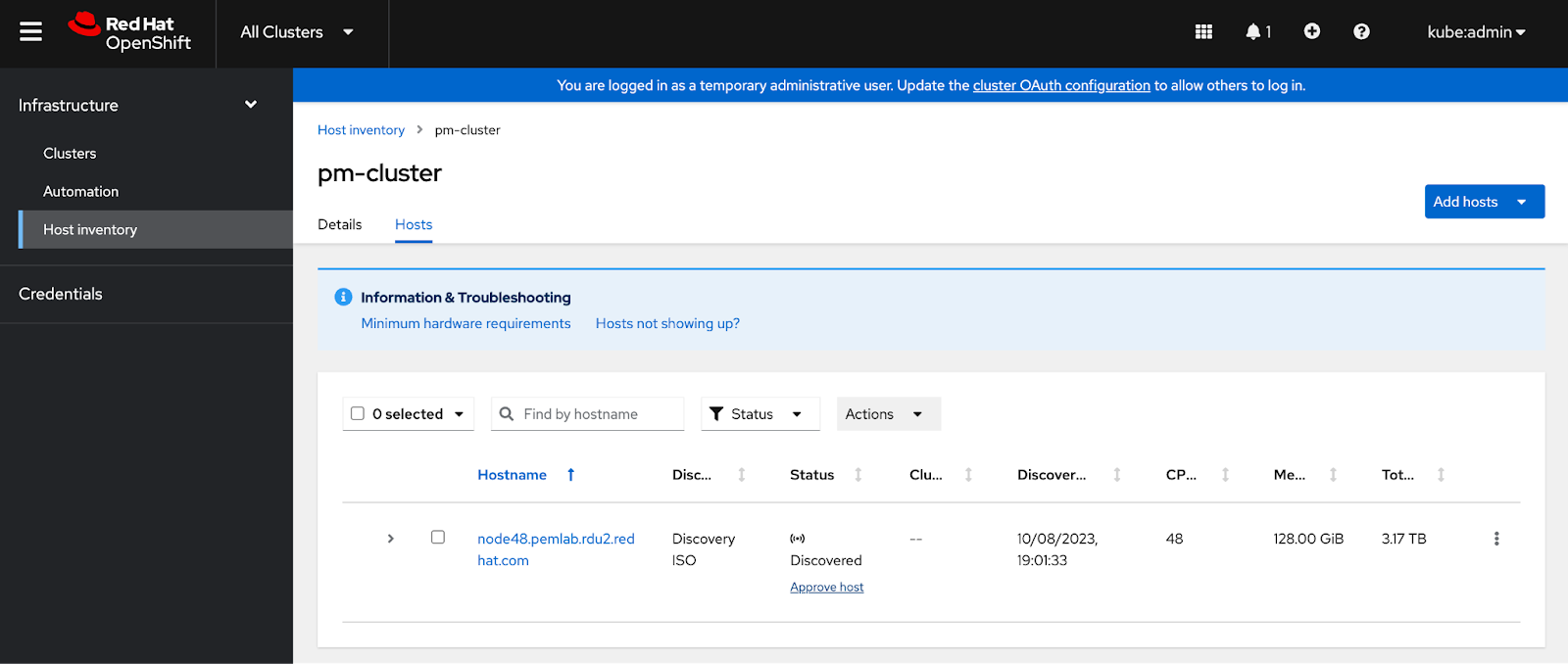

Then, from the Hosts tab, add a new host to the infrastructure environment.

I will use the discovery ISO and boot a host from it manually. You can also put Metal3 to work and use the BMC (e.g., iDRAC), in which case Metal3 boots the host from the image automatically for you.

When the host is discovered and appears in the Hosts tab, click Approve Host. It is then ready to be added to your cluster.

Add the Node to the Cluster

The host that you added can now be used to expand your cluster.

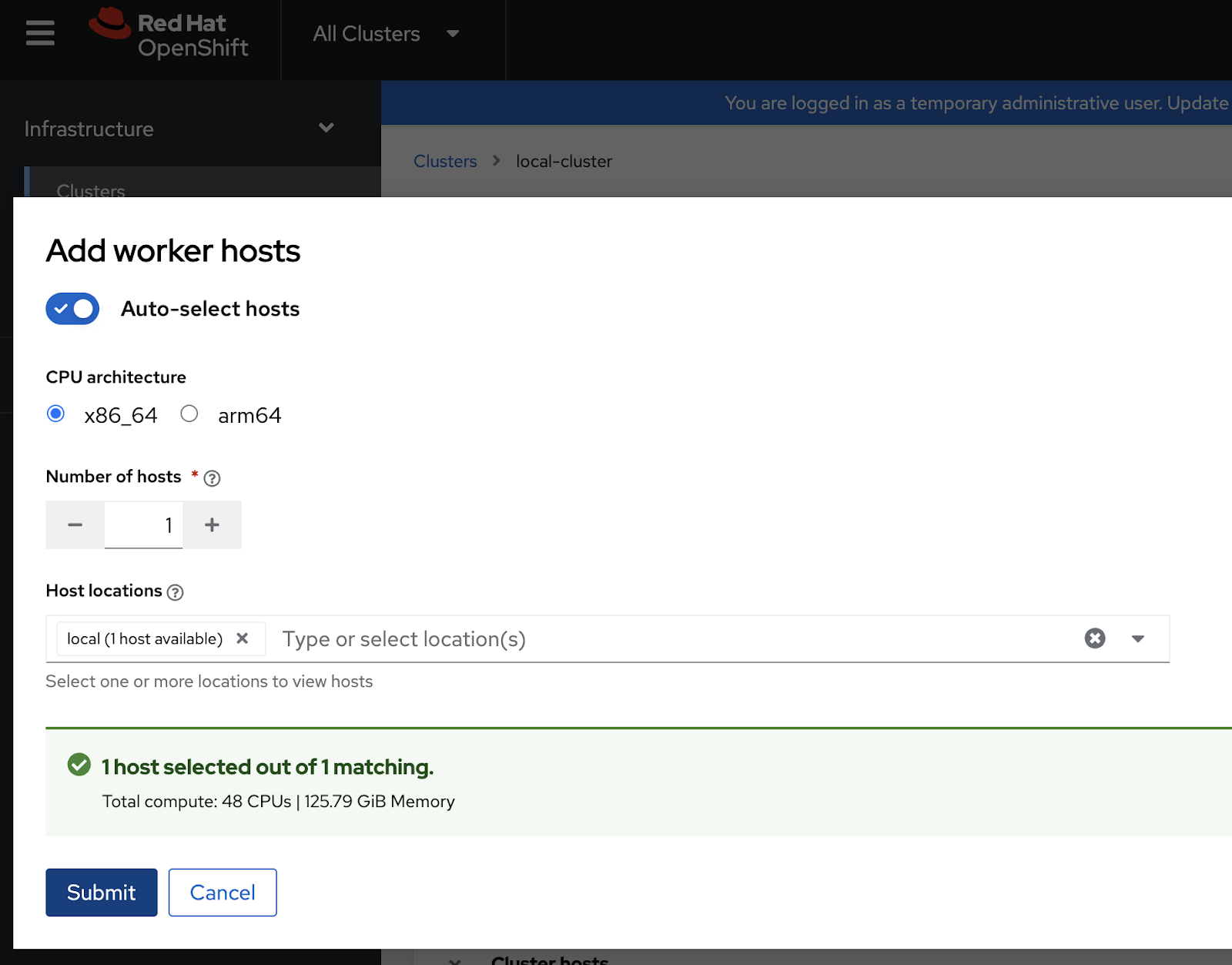

Go to Infrastructure, then Clusters, and click "local-cluster". Then click on Actions and Add hosts.

The host you added to the infrastructure environment will be automatically selected.

After you click Submit, the host will be added as a compute node to pm-cluster (local-cluster to MCE). Don't worry if the status says "Insufficient". This is temporary while the host is being discovered.

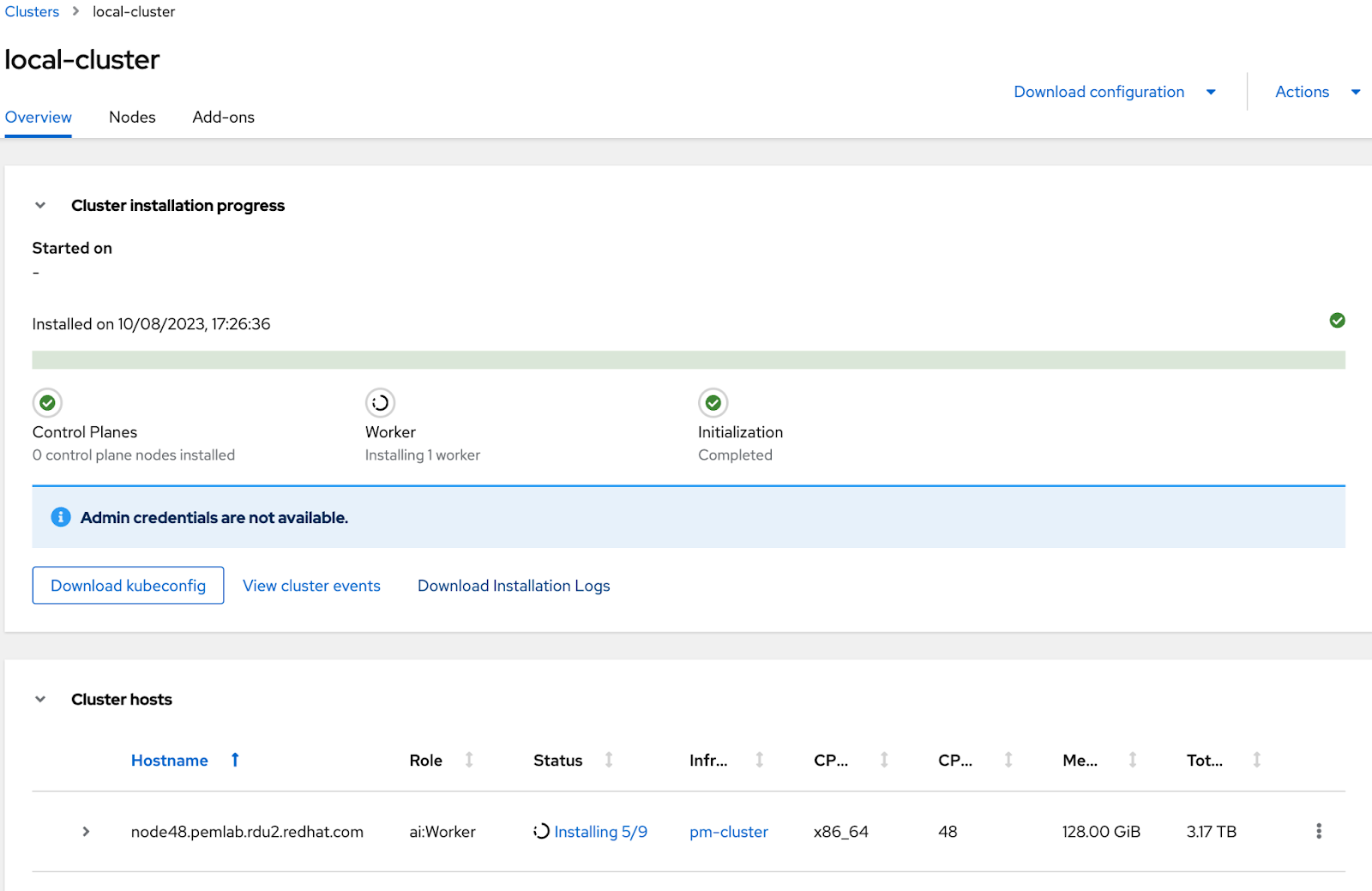

The host will be installed as a compute node, showing the progress until the installation is complete.

After it finishes, your OpenShift cluster will have one more node for compute.

Final Notes

This post has shown the power that the MCE operator provides for cluster management. Now, with the Assisted Installer making installations effortless, you can deploy MCE in any cluster to manage one or multiple clusters and your host infrastructure for them. This allows you to expand existing clusters or create new ones as needed. Tasks such as adding or removing hosts (note that to remove hosts they must have been added with MCE, not with the initial cluster installation) can be easily accomplished, and full automation, including Zero Touch Provisioning, is also an option.

We are actively working on automating the import of the local-cluster, enabling you to initiate infrastructure management immediately. Stay tuned for further enhancements to these powerful technologies, which we are currently developing.
