In previous articles, we introduced vDPA (virtio Data Path Acceleration), which replaces closed, proprietary SR-IOV interfaces with open, standard virtio interfaces for packet steering (as opposed to packet processing). When we say vDPA we actually mean virtio/vDPA, where virtio is used for the data plane while vDPA focuses on the control plane. vDPA is essentially a kernel shim layer that translates the vendor-specific control plane into a virtio control plane, which simplifies adoption for NIC vendors. In this context, it’s also important to point out that some NIC vendors implement virtio full hardware (HW) offloading, where both the data plane and the control plane are implemented in the NIC and are fully virtio-compliant. In that case, there is no need for the vDPA shim layer to translate the control plane.

In this article we will focus on the work being done to integrate virtio/vDPA into Kubernetes as the primary interface for pods. This solution is part of a larger approach of offloading the networking from the host to the network interface card (NIC). The HW offloading solution includes the packet processing (for example moving from Open vSwitch (OVS) running in the kernel to a HW implementation) and the packet steering where the virtio/vDPA comes into play.

We will be describing the main building blocks of the solution and how they fit together. We assume that the reader has an overall understanding of Kubernetes, the Container Network Interface (CNI), device plugins and operators.

Note that virtio/vDPA can also be part of a VDUSE (vDPA Device in Userspace) solution, where a standard virtio interface is provided to the pod. That topic is covered in depth in Introducing VDUSE: a software-defined datapath for virtio.

virtio/vDPA and the pod’s primary interface

Now let’s focus on the architecture of this solution. We’re running a container orchestration platform such as Kubernetes or OpenShift and we have at least one bare metal worker node where workloads can exploit the high bandwidth and low latency offered by virtio/vDPA interfaces.

We will start by describing how the bare metal worker node is configured in practice.

Worker node and HW offload

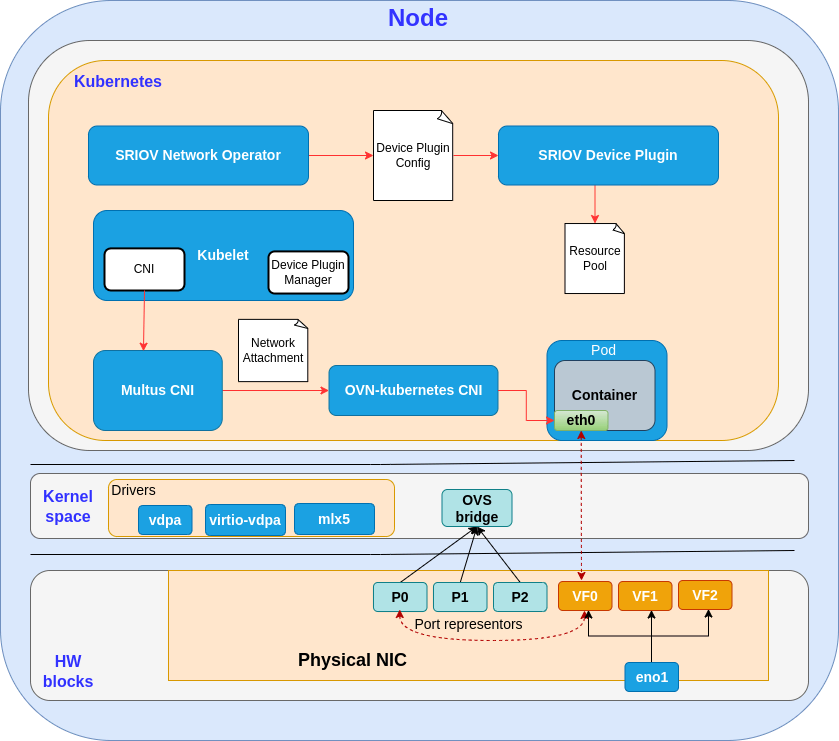

The diagram above shows the target configuration on the worker node. Note that all the Open Virtual Network (OVN) and OVS components have to be installed in the system before the NIC provisioning happens. This can be done manually along with the Kubernetes installation, or you can have everything configured automatically by OpenShift using the Machine Config Operator (MCO).

We will now review the OVN and OVS related blocks (for more information on the vDPA path see How vDPA can help network service providers simplify CNF/VNF certification).

OVN controller

The OVN project is an open source project originally developed by the Open vSwitch team at Nicira. It complements the existing capabilities of OVS and provides virtual network abstractions such as virtual L2 and L3 overlays, security groups and DHCP services.

OVN controller is the local controller daemon running on every worker node. It connects up to the OVN Southbound database over the OVSDB protocol, down to the Open vSwitch database server over the OVSDB protocol and to ovs-vswitchd via OpenFlow.

OVS

Open vSwitch is a multilayer virtual switch that is designed to function in virtual machine (VM) environments. In this solution, OVS forwards traffic between pods on the same physical host and between pods and the physical network. The OVS implementation comprises a vSwitch daemon (ovs-vswitchd), a database server (ovsdb-server) and a kernel datapath module. OVS hardware offloading is enabled in order to offload the packet processing to the NIC.

HW offload to NIC

The solution described in this post integrates an NVIDIA ConnectX-6 Dx card. However, the implementation is vendor-agnostic, and cards from other vendors are also in the process of being integrated.

NVIDIA’s Linux Switch uses the Switchdev driver as an abstraction layer which provides open, standard Linux interfaces and ensures that any Linux application can run on top of it.

Once the Switchdev driver is loaded into the Linux kernel, each of the switch’s physical ports and virtual functions (VFs) is registered as a net_device within the kernel.

In switchdev mode, a port representor is created for each of the VFs and added to the OVS bridge. Without HW offloading, OVS does the packet processing in software, but this activity is CPU intensive, affecting system performance and preventing full utilization of the available bandwidth.

This is where the HW offloading comes into play.

When the first packet is received (packet miss), it is still handled by the OVS software (slow path), but any subsequent packet will be matched by the offloaded flow installed directly in the NIC (fast path). This mechanism is implemented by OVS and TC flower. As a result, we can achieve significantly higher OVS performance without the associated CPU load.
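The slow path/fast path split can be illustrated with a toy model. This is only a sketch: `OffloadModel`, `software_lookup` and the flow key format are hypothetical names chosen for illustration, and nothing here calls the real OVS or TC flower interfaces.

```python
from dataclasses import dataclass, field


@dataclass
class OffloadModel:
    """Toy model of flow offload: a software slow path plus a NIC flow table."""

    hw_flows: dict = field(default_factory=dict)  # flow key -> output port
    slow_path_hits: int = 0
    fast_path_hits: int = 0

    def software_lookup(self, key):
        # Stand-in for the software datapath deciding where a flow goes.
        return f"port-for-{key[1]}"

    def handle(self, key):
        # Fast path: the flow is already installed in the (modelled) NIC.
        if key in self.hw_flows:
            self.fast_path_hits += 1
            return self.hw_flows[key]
        # Slow path: packet miss, software decides and installs the flow.
        self.slow_path_hits += 1
        port = self.software_lookup(key)
        self.hw_flows[key] = port
        return port


model = OffloadModel()
for _ in range(5):
    model.handle(("10.0.0.1", "10.0.0.2"))
print(model.slow_path_hits, model.fast_path_hits)  # 1 4
```

Only the first packet of the flow pays the software cost; the remaining four are matched by the installed entry, which is the behavior that frees the CPU in the real system.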

vDPA workflow in Kubernetes

Now let’s go step by step through the vDPA/Kubernetes workflow, describing the overall architecture and components that take part in the implementation.

SR-IOV Network Operator

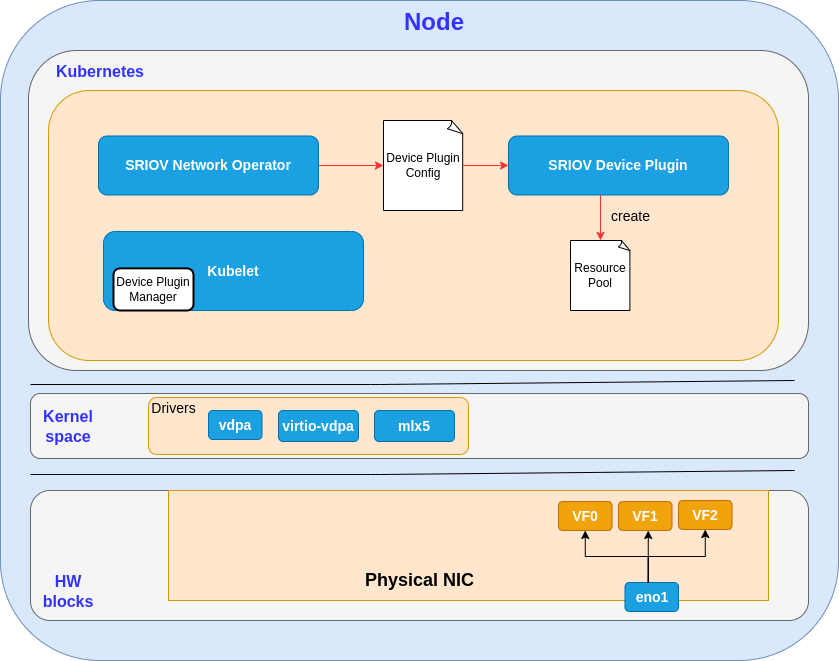

The Single Root I/O Virtualization (SR-IOV) Network Operator is a software extension to Kubernetes that follows the operator pattern. It’s in charge of coordinating all of the different components, as shown in the diagram above.

Kubelet is the primary "node agent" that runs on each node of the cluster.

The operator is triggered by a policy applied by the cluster administrator and is mainly responsible for the NIC configuration:

- The user creates a policy and submits it to the operator

- The NIC is configured in switchdev mode with HW offload enabled on the Physical Function (PF), VFs and port representors

- The NIC is partitioned into SR-IOV VFs

- The vDPA drivers are installed in the Linux kernel (i.e., vdpa, virtio-vdpa)

- The vendor drivers are installed in the Linux kernel (e.g., mlx5_core for NVIDIA cards) and bound to the VFs

- The vDPA devices are created and bound to the virtio-vdpa driver

- A YAML configuration manifest is generated for the SR-IOV-network-device-plugin to operate
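To make the first step more concrete, here is a sketch of what such a policy might look like, built as a Python dictionary and printed as JSON. The field names (`eSwitchMode`, `vdpaType`, `deviceType`, `nicSelector`) reflect our understanding of the operator’s SriovNetworkNodePolicy resource and should be checked against the operator version in use. The policy name, resource name, PF name, namespace and node selector label are assumptions made up for this example.

```python
import json


def build_vdpa_policy(name, resource_name, pf_name, num_vfs):
    """Return a dict shaped like an SriovNetworkNodePolicy for virtio/vDPA.

    All argument values are supplied by the caller; the field names are an
    assumption to verify against the installed operator's CRD.
    """
    return {
        "apiVersion": "sriovnetwork.openshift.io/v1",
        "kind": "SriovNetworkNodePolicy",
        "metadata": {
            "name": name,
            # Assumed namespace; it differs between OpenShift and upstream.
            "namespace": "openshift-sriov-network-operator",
        },
        "spec": {
            "resourceName": resource_name,
            # Assumed node label used to select SR-IOV capable workers.
            "nodeSelector": {
                "feature.node.kubernetes.io/network-sriov.capable": "true"
            },
            "numVfs": num_vfs,                      # partition the NIC into VFs
            "nicSelector": {"pfNames": [pf_name]},  # which PF to configure
            "deviceType": "netdevice",
            "eSwitchMode": "switchdev",             # switchdev mode for HW offload
            "vdpaType": "virtio",                   # bind to virtio-vdpa
        },
    }


policy = build_vdpa_policy("vdpa-policy", "vdpa_pool1", "ens1f0", 8)
print(json.dumps(policy, indent=2))
```

The point of the sketch is that a single declarative object carries everything the operator needs for the steps listed above: the switchdev mode, the number of VFs and the vDPA driver type.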

SR-IOV Network Device Plugin

The SR-IOV network device plugin is a Kubernetes device plugin for discovering and advertising networking resources in the form of SR-IOV VFs.

The following diagram shows the connection between the SR-IOV device plugin and the SR-IOV operator:

The following are the detailed actions the SR-IOV device plugin performs in the context of virtio/vDPA:

- Discover vDPA devices and create resource pools as per the configuration manifest coming from the operator. The configuration tells how to arrange the SR-IOV resources into pools. For example, we can say VFs #0-3 go to pool 1 and VFs #4-7 go to pool 2.

- Register the vDPA devices to Kubelet (device plugin manager), so that these resources can be allocated to pods.

- Allocate vDPA devices when requested by pod creation. The pod manifest must have a reference to the resource pool for the injection of the requested network interface.
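The pool arrangement and allocation described above can be modelled in a few lines. This is a toy sketch rather than the device plugin’s implementation: `ResourcePool`, `build_pools` and the `vdpaN` device names are hypothetical and serve only to show the bookkeeping.

```python
from dataclasses import dataclass, field


@dataclass
class ResourcePool:
    """Toy resource pool: tracks free devices and which pod holds each one."""

    name: str
    free: list = field(default_factory=list)
    allocated: dict = field(default_factory=dict)  # device -> pod name

    def allocate(self, pod):
        # Hand out the first free device, or fail if the pool is exhausted.
        if not self.free:
            raise RuntimeError(f"pool {self.name} is exhausted")
        device = self.free.pop(0)
        self.allocated[device] = pod
        return device


def build_pools(layout):
    """Build pools from a mapping of pool name -> inclusive (first, last) VF range."""
    return {
        name: ResourcePool(name, [f"vdpa{i}" for i in range(first, last + 1)])
        for name, (first, last) in layout.items()
    }


# VFs #0-3 go to pool 1 and VFs #4-7 go to pool 2, as in the example above.
pools = build_pools({"pool1": (0, 3), "pool2": (4, 7)})
print(pools["pool2"].allocate("pod-a"))  # vdpa4
```

A pod that references `pool2` therefore receives a device from VFs #4-7 only, and the request fails once all four devices in that pool are taken.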

CNI, OVN-Kubernetes and the pod

Finally, we have all the components in place and we can describe the end-to-end solution.

CNI (Container Network Interface) consists of a specification and libraries for writing plugins to configure network interfaces in Linux containers, along with a number of supported plugins.

Multus CNI is a CNI plugin for Kubernetes that enables attaching multiple network interfaces to pods. Typically, in Kubernetes, each pod only has one network interface (apart from a loopback). With Multus you can create a multi-homed pod that has multiple interfaces. This is accomplished by Multus acting as a "meta-plugin", a CNI plugin that can call multiple other CNI plugins. The current solution attaches virtio/vDPA only to the pod’s primary interface, but this limitation will be addressed in the near future (more details are provided in the “Connecting vDPA to the pod secondary interface (future work)” section below).

OVN-Kubernetes CNI plugin is a network provider for the default cluster network. OVN-Kubernetes is based on OVN and provides an overlay-based networking implementation. A cluster that uses the OVN-Kubernetes network provider also runs OVS on each node. OVN configures OVS on each node to implement the declared network configuration.

Let’s have a look at the final flow described in the diagram below.

A recap of the whole workflow:

- The SR-IOV network operator configures the NIC, loads the drivers in the kernel and generates a configuration manifest for the device plugin

- The SR-IOV network device plugin creates the resource pools and allocates vDPA devices to pods when requested by Kubelet

- The user creates a network attachment, a custom resource that brings together the resource pool and the designated CNI plugin for the pod network configuration

- The user creates the pod manifest and selects the resource pool for the network interface

- When the pod is created, Multus CNI takes the network attachment as input and delegates the pod network configuration to the OVN-Kubernetes CNI

- OVN-Kubernetes moves the virtio/vDPA device into the container namespace (eth0 in the illustration above) and adds the corresponding port representor to the OVS bridge
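The two user-facing objects in the recap, the network attachment and the pod manifest, are tied together by the resource pool name. The sketch below builds both as Python dictionaries. The annotation keys and the CNI type string follow our understanding of Multus and OVN-Kubernetes and should be verified against the versions deployed. The resource name, object names and image are hypothetical.

```python
import json

RESOURCE = "openshift.io/vdpa_pool1"  # hypothetical resource pool name


def network_attachment(name, namespace, resource):
    """Network attachment linking a resource pool to the OVN-Kubernetes CNI."""
    cni_config = {
        "cniVersion": "0.3.1",
        "name": "ovn-kubernetes",
        "type": "ovn-k8s-cni-overlay",  # assumed CNI plugin type
        "ipam": {},
        "dns": {},
    }
    return {
        "apiVersion": "k8s.cni.cncf.io/v1",
        "kind": "NetworkAttachmentDefinition",
        "metadata": {
            "name": name,
            "namespace": namespace,
            "annotations": {"k8s.v1.cni.cncf.io/resourceName": resource},
        },
        # The CNI configuration is carried as a JSON string.
        "spec": {"config": json.dumps(cni_config)},
    }


def pod_manifest(name, namespace, nad_name, resource, image):
    """Pod that uses the attachment for its primary interface."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {
            "name": name,
            "namespace": namespace,
            "annotations": {
                # Tells Multus which attachment backs the default network.
                "v1.multus-cni.io/default-network": f"{namespace}/{nad_name}"
            },
        },
        "spec": {
            "containers": [{
                "name": "app",
                "image": image,
                # Requesting one device from the pool triggers the allocation.
                "resources": {
                    "requests": {resource: "1"},
                    "limits": {resource: "1"},
                },
            }]
        },
    }


nad = network_attachment("ovn-vdpa", "default", RESOURCE)
pod = pod_manifest("vdpa-demo", "default", "ovn-vdpa", RESOURCE,
                   "registry.example/app:latest")
print(json.dumps([nad, pod], indent=2))
```

Note how the same resource name appears twice: once in the attachment annotation and once in the pod’s resource request. That shared name is what lets the device plugin allocation and the CNI configuration refer to the same device.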

Connecting vDPA to the pod secondary interface (future work)

As already mentioned, the current solution provisions virtio/vDPA on the pod’s primary interface.

Why is it so important to have vDPA on the secondary interface as well?

For a number of reasons:

- Customers need to define isolated tenant networks that form a single L2 domain. The primary interface isn’t fit for this purpose since it is used for both the Kubernetes control plane and the dataplane overlay.

- Customers, including CNF developers, have advanced networking requirements for secondary interface networking. Extending the OVN-Kubernetes CNI to manage multiple interfaces seems the natural way to go.

- Accelerated CNFs running user space DPDK applications cannot use the primary interface, because it goes through the kernel.

In the current solution the packet processing still happens in the Linux kernel, but what if we want to run our applications in userspace?

Data Plane Development Kit (DPDK) aims to provide a simple and complete framework for fast packet processing in data plane applications, offering better performance in terms of throughput and lower latency. The devices are accessed by constant polling, thus avoiding excessive context switching and interrupt processing overhead.
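The polling model can be sketched in plain Python. This is a conceptual illustration only and does not use DPDK: `rx_burst` and `poll_loop` are hypothetical helpers, and a `deque` stands in for a device receive queue.

```python
from collections import deque


def rx_burst(queue, max_burst):
    """Return up to max_burst packets without blocking (empty list if none)."""
    burst = []
    while queue and len(burst) < max_burst:
        burst.append(queue.popleft())
    return burst


def poll_loop(queue, max_burst, max_polls):
    """Poll the queue a fixed number of times; never sleep or wait for interrupts."""
    processed = 0
    empty_polls = 0
    for _ in range(max_polls):
        burst = rx_burst(queue, max_burst)
        if not burst:
            empty_polls += 1  # nothing arrived, so simply poll again
            continue
        processed += len(burst)  # a real application would process each packet here
    return processed, empty_polls


rx_queue = deque(f"pkt-{i}" for i in range(10))
print(poll_loop(rx_queue, max_burst=4, max_polls=5))  # (10, 2)
```

The loop never blocks: when the queue is empty it just tries again. That trades CPU cycles (the empty polls) for the absence of interrupt and context-switch overhead, which is the design choice behind poll-mode processing.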

There are at least two possible scenarios:

- Container-based workload - the vhost-vdpa device is mapped into the pod namespace and consumed by a DPDK application

- VM-based workload - the vhost-vdpa device is mapped directly to QEMU for KVM-based VMs. It is worth mentioning KubeVirt, a technology that provides a unified development platform where developers can build, modify and deploy applications residing in both application containers and virtual machines in a common, shared environment.

What is needed for deploying vDPA as a secondary interface?

- Extend the OVN-Kubernetes CNI to manage multiple interfaces

- Extend the SR-IOV network operator to provision vhost-vdpa interfaces

This work will be the focus of our team for our next deliverables.

Summary

In this post we have covered the high-level solution and architecture for providing wire-speed, wire-latency virtio/vDPA interfaces to containers. This solution works for both Kubernetes and OpenShift, assuming that the two orchestration platforms are using the same building blocks.

In our next posts, we will dive into the technical details of the virtio/vDPA integration in Kubernetes/OpenShift and show you how things actually work under the hood.

About the authors

Leonardo Milleri works at Red Hat primarily on vDPA and its integration in Kubernetes/OpenShift. He has contributed to SRIOV-network-operator and govdpa projects and he is a member of the Network Plumbing working group. Recently he has joined the Confidential Containers project.
