We are excited to announce that OpenShift sandboxed containers is now available on the OpenShift Container Platform as a technology preview feature.

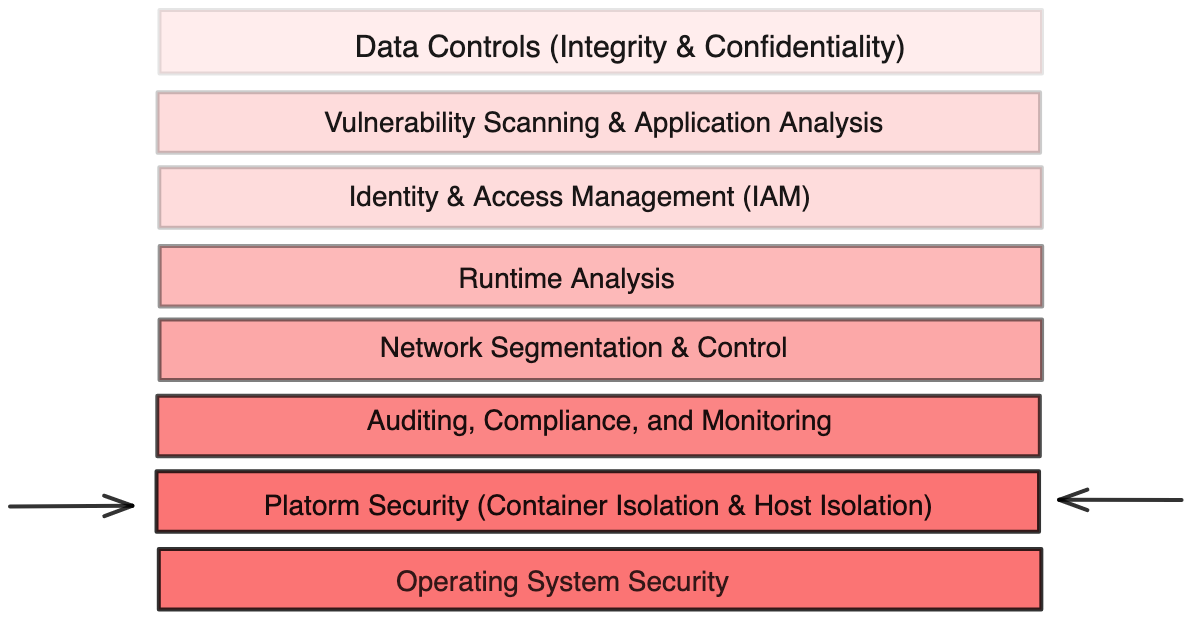

It should come as no surprise that Red Hat has always invested in a layered approach to security with a Defense-in-Depth mindset, from the operating system (OS) layer with Red Hat Enterprise Linux CoreOS to the application and platform layers with OpenShift’s comprehensive DevSecOps strategy. Defense-in-Depth begins by assuming that exceptions can and do happen; as a result, various independent security measures are implemented at every layer of the stack to account for those exceptions.

OpenShift sandboxed containers, based on the Kata Containers open source project, provides an Open Container Initiative (OCI) compliant container runtime using lightweight virtual machines, running your workloads in their own isolated kernel and therefore contributing an additional layer of isolation back to OpenShift’s Defense-in-Depth strategy.

Why does it matter?

OpenShift sandboxed containers provides value on multiple fronts for different personas and use-cases. Below are some examples of where you can benefit from the additional layer of isolation delivered using OpenShift sandboxed containers, Kata Containers, and hardware-assisted virtualization.

Are you a developer?

As a developer debugging an application with state-of-the-art tooling such as extended Berkeley Packet Filter (eBPF), you might need elevated privileges such as CAP_SYS_ADMIN or CAP_BPF. Granting these privileges may make cluster administrators uneasy. After all, with great power comes great responsibility. If your development environment is compromised for any reason (exceptions do happen), the attacker could gain access to the entire host and every process running on it, tap the system, or even load custom kernel modules.

With OpenShift sandboxed containers, any impact will be limited to a transient lightweight virtual machine with almost no software installed.

Are you a cluster administrator?

You are guarding the fortress. Using OpenShift’s layered approach to security, you have already done a great job getting your cluster to a secure state. However, sometimes exceptions do happen, tickets are opened, and you are required to run potentially vulnerable code. For example, legacy applications that have not been patched or maintained for some time might put the system at risk.

With OpenShift sandboxed containers, you can run such software in its own isolated kernel.

Are you a service provider?

As a service provider, you might be responsible for providing your service to multiple tenants. In an OpenShift or Kubernetes world, this could mean that the service workloads share the same worker node. An example would be operating a CI/CD pipeline: each job runs its own build command, and the output of one job might affect other jobs on the same node, particularly if the job needs to install software that requires elevated privileges.

With OpenShift sandboxed containers, you can safely install the software you need while only granting it access to a transient virtual machine that contains no other accessible software.

Another example would be if you are a telco adopting Network Function Virtualization (NFV). This requires a transformation phase to get all Network Functions (NFs) running as containerized Cloud-native Network Functions (CNFs). While you might already be halfway through, there will be exceptions where you want to run NFs that were not designed to be cloud-native and that require additional privileges. Running privileged workloads on a shared kernel might lead to privilege escalation, especially if other security controls are not properly configured. Additionally, those CNFs could be developed by third parties or different vendors, which adds to the need for isolation boundaries between them.

With OpenShift sandboxed containers, this additional software can also be installed only inside a transient lightweight virtual machine dedicated to it, limiting interaction with any other software.

What is OpenShift sandboxed containers really?

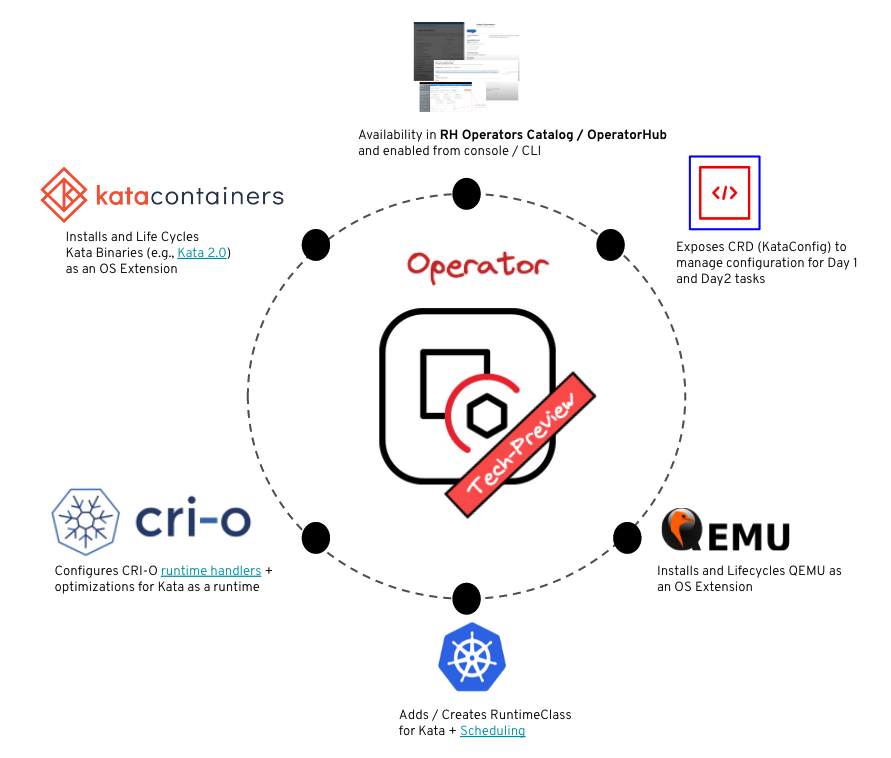

OpenShift sandboxed containers provides a means to deliver Kata Containers to OpenShift Container Platform cluster nodes. This adds a new runtime to the platform, which runs each pod in its own lightweight virtual machine and then starts the pod’s containers inside that virtual machine. OpenShift sandboxed containers accomplishes this through an OpenShift Operator, which is deployed, managed, and updated using the OpenShift Operator Framework.

The OpenShift sandboxed containers Operator delivers and life-cycles all the required bits and pieces to make Kata Containers consumable as an optional runtime on the cluster. That includes but is not limited to:

- The installation of Kata Containers RPMs as well as QEMU as Red Hat Enterprise Linux CoreOS extensions on the node.

- The configuration of the Kata Containers runtime at the runtime level using CRI-O runtime handlers and at the cluster level by adding and configuring a dedicated RuntimeClass for Kata Containers.

- A declarative configuration to customize the installation, such as selecting which nodes to deploy Kata Containers on.

- Ensuring the health of the overall deployment and reporting problems during the installation.
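To make the cluster-level piece of the list above more concrete, here is a minimal sketch (Python 3, standard library only) that builds a Kubernetes RuntimeClass manifest and prints it as JSON. The Operator creates and manages this object for you, so you would not normally write it by hand. The name `kata`, the handler `kata`, and the node label `example.com/kata-enabled` are assumptions made for illustration; check the objects on your own cluster for the actual values.

```python
import json
from typing import Dict, Optional


def runtime_class(name: str, handler: str,
                  node_selector: Optional[Dict[str, str]] = None) -> dict:
    """Build a RuntimeClass manifest that maps a name to a CRI-O runtime handler."""
    manifest = {
        "apiVersion": "node.k8s.io/v1",
        "kind": "RuntimeClass",
        "metadata": {"name": name},
        "handler": handler,
    }
    if node_selector:
        # Pods that use this RuntimeClass are only scheduled to matching nodes.
        manifest["scheduling"] = {"nodeSelector": dict(node_selector)}
    return manifest


# Assumed values for illustration only; the label key is hypothetical.
kata_runtime_class = runtime_class(
    name="kata",
    handler="kata",
    node_selector={"example.com/kata-enabled": "true"},
)
print(json.dumps(kata_runtime_class, indent=2))
```

The `handler` value is what ties the cluster-level RuntimeClass to the runtime handler configured in CRI-O on each node.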

The end result is that all you need to run isolated containers is a one-line addition, the runtimeClassName field, to a workload spec, and off you go running a kernel-isolated application.
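As a rough illustration of that one-line addition, the sketch below (Python 3, standard library only) builds a minimal Pod manifest and prints it as JSON. The RuntimeClass name `kata`, the pod name, and the image reference are assumptions chosen for the example, not values taken from this post.

```python
import json


def sandboxed_pod(name: str, image: str, runtime_class_name: str = "kata") -> dict:
    """Build a minimal Pod manifest that requests a sandboxed runtime."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            # The one-line addition: everything else is a regular Pod spec.
            "runtimeClassName": runtime_class_name,
            "containers": [{"name": name, "image": image}],
        },
    }


# Hypothetical pod name and image reference.
pod = sandboxed_pod("example-app", "registry.example.com/example-app:latest")
print(json.dumps(pod, indent=2))
```

The printed JSON can be saved to a file and applied like any other manifest; removing the `runtimeClassName` line gives back an ordinary pod that runs on the default runtime.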

It is important to note that the Kata Containers runtime cannot be used as the primary or default runtime. By design, Kata Containers does not allow host-level access. For example, access to host networking (hostNetwork) is not supported. This could mean that Container Network Interface (CNI) plugins requiring this privilege would not function properly; the same applies to any other workload that requires access to host networking.
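The host networking restriction can be caught before a workload is submitted. The following is a minimal sketch of such a pre-flight check in Python; the function name and the RuntimeClass name `kata` are hypothetical, and the check covers only the `hostNetwork` case mentioned above, not every form of host-level access.

```python
from typing import List


def host_access_problems(pod_spec: dict, sandboxed_runtime: str = "kata") -> List[str]:
    """Return reasons why a pod spec is unsuitable for the sandboxed runtime."""
    problems: List[str] = []
    if pod_spec.get("runtimeClassName") != sandboxed_runtime:
        # The pod does not request the sandboxed runtime, so nothing to check.
        return problems
    if pod_spec.get("hostNetwork", False):
        problems.append("hostNetwork: true is not supported with the sandboxed runtime")
    return problems


# A sandboxed pod spec that (incorrectly) asks for host networking.
bad_spec = {"runtimeClassName": "kata", "hostNetwork": True, "containers": []}
# The same request on the default runtime is outside the scope of this check.
default_spec = {"hostNetwork": True, "containers": []}

print(host_access_problems(bad_spec))
print(host_access_problems(default_spec))
```

A check like this could run in a CI step or a review script so that incompatible workloads are flagged early rather than failing at pod start.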

Benefits of OpenShift sandboxed containers

Since all you need to do is specify a runtime class, sandboxed containers behave essentially like regular containers, except that they run in their own isolated kernel. Adopting a Kubernetes-native User Experience (UX) to run sandboxed workloads has numerous advantages, including but not limited to:

- Since Kata Containers is offered as another runtime, many operational patterns used with normal containers are still preserved (e.g., GitOps, S2I, and so on).

- Many of the existing tools that apply to container images can simply be re-used with sandboxed containers as a side-effect of being a native Kubernetes runtime:

  - Tooling for trusted container content, such as the Container Health Index, which exposes the “grade” of each container image and details how container images should be curated, consumed, and evaluated to meet the needs of the production system.

  - Access control to images with enterprise container registries (Red Hat Quay and Red Hat OpenShift Container Security Operator integration with Quay).

  - Control and automation for building container images using tools such as Source-to-Image (S2I) and Image Streams to keep images secure and up to date.

  - Least privilege through per-workload Role-Based Access Control (RBAC) for identity management and network policies for network isolation.

With OpenShift sandboxed containers, we are NOT reinventing the wheel. We are adding another gear to help you move faster in addressing the diverse demands of your workloads!

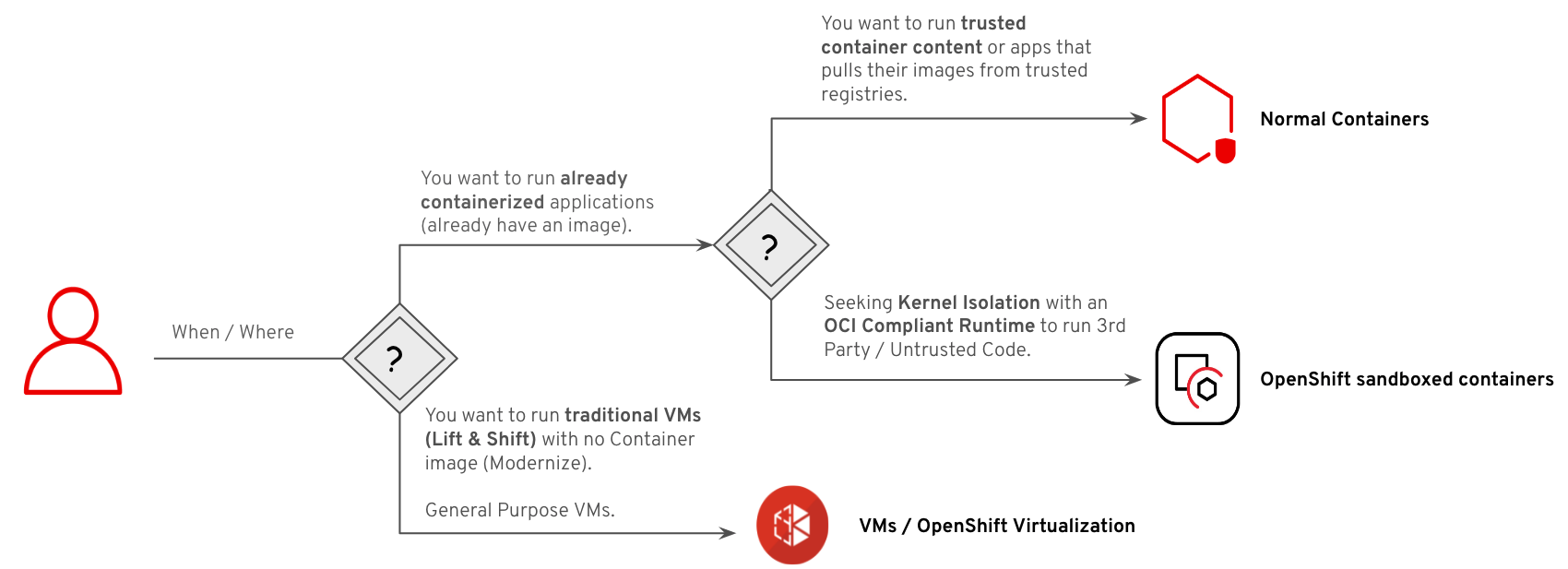

How does it compare to OpenShift Virtualization?

So far, we have covered the basics of what OpenShift sandboxed containers is and why it could be a viable choice for certain types of workloads. As mentioned earlier, Kata Containers (brought to you by OpenShift sandboxed containers) lowers the barrier to entry for kernel-isolated containers based on hardware-assisted virtualization. However, OpenShift sandboxed containers is not the only OpenShift feature that brings hardware-assisted virtualization to the Kubernetes and OpenShift layers. OpenShift Virtualization is a feature in OpenShift that helps bring traditional Virtual Machine (VM) applications and workloads to OpenShift. It allows traditional VM workloads to run side-by-side with containers.

Running containers as opposed to running virtual machine workloads is the key distinction that makes the two OpenShift features different and both necessary, and their respective architectures derive from this difference. While OpenShift Virtualization targets the modernization of traditional VM workloads, OpenShift sandboxed containers targets additional isolation for already containerized applications. Targeting traditional VM workloads typically adds requirements that shape how the virtualization layer is built (e.g., traditional VMs might still rely on legacy devices). The target workloads for OpenShift sandboxed containers, on the other hand, are already containerized apps, which have very different needs than traditional VMs. The main goal of OpenShift sandboxed containers is additional isolation, not running general-purpose VMs or migrating VMs to OpenShift, so there are fewer requirements on what the virtualization layer looks like and more emphasis on how “lightweight” it is.

Kata Containers is an open-source container runtime that builds lightweight virtual machines that seamlessly plug into the container ecosystem.

Another distinction is how the two features are consumed after their Operators are installed. For OpenShift Virtualization, additional Custom Resources (CRs) are used to configure and run a VM declaratively, and a dedicated CLI tool helps customize VM behavior (e.g., boot order, machine states, consoles, and so on). To run a workload with OpenShift sandboxed containers, existing OpenShift resources (e.g., Pods, Deployments, StatefulSets, etc.) are re-used and configured to use the Kata Containers RuntimeClass; no CRs are introduced for running workloads.
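To illustrate what re-using existing resources looks like in practice, here is a minimal Python sketch that takes an ordinary manifest (a Pod, or a controller with a pod template) and returns a copy that requests the sandboxed runtime. The helper name, the RuntimeClass name `kata`, and the example Deployment are all assumptions made for this illustration.

```python
import copy

# Controller kinds whose pod spec lives under spec.template.spec.
POD_TEMPLATE_KINDS = {"Deployment", "StatefulSet", "DaemonSet", "ReplicaSet", "Job"}


def use_runtime_class(manifest: dict, runtime_class_name: str = "kata") -> dict:
    """Return a copy of a workload manifest that requests the given RuntimeClass."""
    result = copy.deepcopy(manifest)  # leave the caller's manifest untouched
    kind = result.get("kind")
    if kind == "Pod":
        pod_spec = result.setdefault("spec", {})
    elif kind in POD_TEMPLATE_KINDS:
        template = result.setdefault("spec", {}).setdefault("template", {})
        pod_spec = template.setdefault("spec", {})
    else:
        raise ValueError(f"unsupported kind: {kind!r}")
    pod_spec["runtimeClassName"] = runtime_class_name
    return result


# Hypothetical Deployment; nothing in it is specific to sandboxed containers.
deployment = {
    "apiVersion": "apps/v1",
    "kind": "Deployment",
    "metadata": {"name": "example-app"},
    "spec": {
        "replicas": 2,
        "selector": {"matchLabels": {"app": "example-app"}},
        "template": {
            "metadata": {"labels": {"app": "example-app"}},
            "spec": {"containers": [{"name": "app", "image": "registry.example.com/app:latest"}]},
        },
    },
}

sandboxed = use_runtime_class(deployment)
print(sandboxed["spec"]["template"]["spec"]["runtimeClassName"])
```

The only difference between the two manifests is the `runtimeClassName` field in the pod spec; the resource kind, API version, and the rest of the spec stay the same.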

In simple terms, OpenShift Virtualization tries to cater to the needs of VMs and provide a similar experience on Kubernetes. OpenShift sandboxed containers, on the other hand, focuses on additional isolation while staying as close to a container as possible when it comes to performance.

Try it Out

OpenShift sandboxed containers is available now in OpenShift Container Platform 4.8 on bare-metal Red Hat Enterprise Linux CoreOS nodes. If you are a current OpenShift customer, you can access it as part of your existing subscription; no additional cost or entitlement is needed to begin using it. OpenShift sandboxed containers is currently a Technology Preview feature. We are hard at work to promote it to General Availability (GA), which is targeted for the first half of calendar year 2022.

What’s Next

This post covered the basics of OpenShift sandboxed containers at a high level, its use cases, and a brief overview of how the Operator works. It also briefly described how OpenShift sandboxed containers compares to OpenShift Virtualization, another OpenShift feature that relies on a virtualization stack.

In our future posts, we will go deeper into Kata Containers, the OpenShift sandboxed containers Operator machinery, and the concepts behind each.

Some resources you may find useful are here:

- OpenShift sandboxed containers documentation: This contains all the information required to deploy OpenShift sandboxed containers and set up your installation.

- OpenShift Commons Talk for OpenShift sandboxed containers.

- Finally, please continue to follow the OpenShift blog for more posts on OpenShift sandboxed containers.

About the author

Adel Zaalouk is a product manager at Red Hat who enjoys blending business and technology to achieve meaningful outcomes. He has experience working in research and industry, and he's passionate about Red Hat OpenShift, cloud, AI and cloud-native technologies. He's interested in how businesses use OpenShift to solve problems, from helping them get started with containerization to scaling their applications to meet demand.
