This is a guest post written by Bala Ramesh, Technical Marketing Engineer, NetApp

Trident is an open-source storage provisioner for Kubernetes and Kubernetes-based container orchestrators such as Red Hat OpenShift. Trident v19.10 is optimized for OpenShift 4. Applications generate data, and access to storage should be painless and on demand. The simplicity of using Trident to dynamically provision volumes for PVCs, coupled with its production-grade CSI drivers and data management capabilities, makes it a key option for stateful storage on OpenShift.

Here’s how to install Trident in an OpenShift cluster and use Trident to orchestrate storage on NetApp backends.

My environment

This blog post uses a multi-node OpenShift 4.2 cluster (3 masters + 2 worker nodes) deployed using the bare-metal method; my OpenShift nodes are virtual machines on a KVM hypervisor. You can choose to install OpenShift on other infrastructure providers (for example, your choice of a hyperscaler, Red Hat OpenStack Platform, or VMware vSphere). The Trident install procedure detailed here remains the same, regardless of where you choose to run OpenShift.

    # export KUBECONFIG=/root/ocp4/auth/kubeconfig
    # oc login -u kubeadmin -p
    Login successful.

    You have access to 53 projects, the list has been suppressed. You can list all projects with 'oc projects'

    Using project "default".

    # oc get nodes
    NAME                       STATUS   ROLES    AGE   VERSION
    master0.ocp4.example.com   Ready    master   39d   v1.14.6+463c73f1f
    master1.ocp4.example.com   Ready    master   39d   v1.14.6+463c73f1f
    master2.ocp4.example.com   Ready    master   39d   v1.14.6+463c73f1f
    worker0.ocp4.example.com   Ready    worker   39d   v1.14.6+463c73f1f
    worker1.ocp4.example.com   Ready    worker   39d   v1.14.6+463c73f1f

On the storage side, I have a NetApp All Flash FAS (AFF) cluster that runs ONTAP 9.5, with dedicated Storage Virtual Machines (SVMs) created for NFS and iSCSI workloads. Think of an SVM as a logical container meant to isolate data between teams and manage lifecycles independently. Each storage cluster can house multiple SVMs. Ideally you should create multiple SVMs to isolate your workloads and create backends on a per-SVM basis. In this blog, I have one SVM that I will use for creating NFS PVCs.

Installing Trident

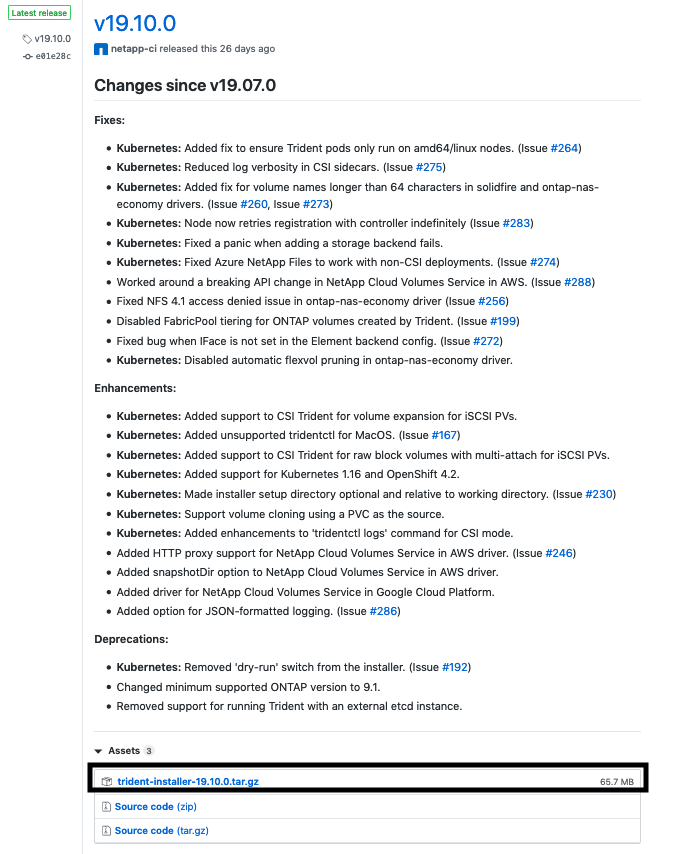

The installation procedure for Trident is straightforward and is covered in the Trident documentation. Start by retrieving the Trident installer from the GitHub site (https://github.com/NetApp/trident/releases). I am using the latest version available at the time of writing, Trident 19.10.

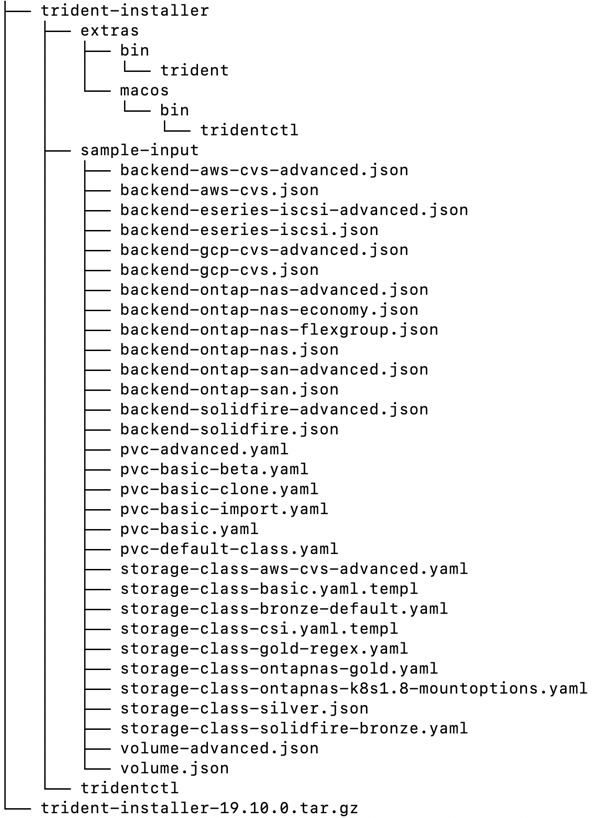

After downloading and extracting the installer, your directory should look like this:

The sample-input directory contains a number of sample definitions for StorageClasses, PVCs and Trident backends to help you get started with Trident. There are a couple of ways you can install Trident:

- Generic install: This is the easiest way to install Trident. If your OpenShift cluster does not have any network restrictions and has access to pull images from the outside world, this is the way to go.

- Customized install: You can also choose to customize your install. In air-gapped environments, you can point to a private image repository for Trident to pull its images from. Take a look at https://netapp-trident.readthedocs.io/en/stable-v19.10/kubernetes/deplo… to get started.

I’m performing a generic installation in this blog. This is what I do to install Trident:

1. Add the tridentctl binary to my path.

PATH=$PATH:$PWD/trident-installer

2. Install Trident by running the tridentctl install command. I specify a namespace (“trident-ns”) in which Trident installs its resources. As part of the installation, Trident creates CRDs to maintain its state; the resulting CRD objects are namespaced and accessible only in “trident-ns”.

    # tridentctl install -n trident-ns
    INFO Starting Trident installation. namespace=trident-ns
    INFO Created service account.
    INFO Created cluster role.
    INFO Created cluster role binding.
    INFO Added security context constraint user. scc=privileged user=trident-csi
    INFO Added finalizers to custom resource definitions.
    INFO Created Trident pod security policy.
    INFO Created Trident service.
    INFO Created Trident secret.
    INFO Created Trident deployment.
    INFO Created Trident daemonset.
    INFO Waiting for Trident pod to start.
    INFO Trident pod started. namespace=trident-ns pod=trident-csi-7c88dd6588-x95bz
    INFO Waiting for Trident REST interface.
    INFO Trident REST interface is up. version=19.10.0
    INFO Trident installation succeeded.

As the log output shows, Trident sets up its CRDs and creates a Service, a Deployment and a DaemonSet that runs a pod on the cluster's nodes. Once the Trident pod is running and its REST interface responds, the installation completes and you can move on to the next step.
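The same check can be scripted. The function below is a minimal sketch that assumes a logged-in oc session, tridentctl on the PATH, and the trident-ns namespace used above. The function name check_trident is made up for illustration, and trident-csi is the name of the Deployment this installation created.

```shell
# Confirm that the Trident controller Deployment has rolled out, then print
# the Trident version.
# Assumptions: logged-in oc session, tridentctl on the PATH, namespace
# trident-ns by default. The function name is illustrative only.
check_trident() {
  ns="${1:-trident-ns}"
  # Blocks until the trident-csi Deployment reports a completed rollout.
  oc rollout status deployment/trident-csi -n "$ns" &&
    tridentctl version -n "$ns"
}
```

Calling check_trident with no argument checks trident-ns; pass a different namespace as the first argument if you installed Trident elsewhere.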

I can examine the resources that Trident has created.

    # oc get pods -n trident-ns -o wide
    NAME                           READY   STATUS    RESTARTS   AGE   IP             NODE                       NOMINATED NODE   READINESS GATES
    trident-csi-7c88dd6588-x95bz   4/4     Running   0          17m   10.254.3.22    worker0.ocp4.example.com
    trident-csi-7v2zj              2/2     Running   0          17m   192.168.7.11   worker0.ocp4.example.com
    trident-csi-c5czk              2/2     Running   0          17m   192.168.7.21   master0.ocp4.example.com
    trident-csi-lxrw9              2/2     Running   0          17m   192.168.7.12   worker1.ocp4.example.com
    trident-csi-mwhdq              2/2     Running   0          17m   192.168.7.22   master1.ocp4.example.com

Trident creates a Controller server, which runs on one of the worker nodes. It is managed by a Deployment (named “trident-csi”) that creates and maintains a single replica of the controller pod (trident-csi-7c88dd6588-x95bz). The Controller pod contains the Trident container and the CSI sidecars needed to use NetApp’s CSI drivers. The other pods are created by Trident’s DaemonSet, one per node; in the output above they run on master nodes as well as worker nodes. Each of these pods contains a Trident container that talks to the Controller server to provision and attach volumes. You can choose the node(s) the Controller server is scheduled on by generating custom YAMLs and modifying the selector.
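The custom-YAML route can be sketched as two steps. This is a hedged example that assumes the --generate-custom-yaml and --use-custom-yaml flags of tridentctl install as described in the Trident 19.10 customized-installation documentation. The two function names are made up for illustration.

```shell
# Step 1: write the installer's Kubernetes manifests to disk instead of
# applying them, so they can be edited.
# Assumption: tridentctl 19.10 on the PATH; namespace defaults to trident-ns.
generate_trident_yaml() {
  tridentctl install --generate-custom-yaml -n "${1:-trident-ns}"
}

# Step 2: install Trident from the edited manifests.
install_from_custom_yaml() {
  tridentctl install --use-custom-yaml -n "${1:-trident-ns}"
}
```

Run generate_trident_yaml first, edit the generated Deployment manifest (the files should land in a setup directory under the installer directory) to add the node selector you want, then run install_from_custom_yaml.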

Creating a backend

Now that Trident is installed, I proceed to create a Trident backend. Before continuing any further, let’s take a look at what a Trident backend is:

A Trident backend represents a storage cluster that Trident uses to provision volumes for PVCs. Each instance of Trident can manage multiple backends and orchestrate storage across them. After you install Trident, you must (1) create a backend and (2) map StorageClasses to backends. Once this is done, creating a PVC with one of these StorageClasses instructs Trident to provision a volume on the storage cluster and expose it as a PV to the OpenShift cluster. This way, Trident manages the lifecycle of the PV from creation to deletion.

The sample-input directory contains sample definitions for all supported Trident backends. Here is my backend definition:

    # cat backend.json
    {
      "debug": true,
      "managementLIF": "10.11.12.13",
      "dataLIF": "10.11.12.14",
      "svm": "test",
      "backendName": "nas_backend",
      "aggregate": "aggr_01",
      "username": "admin",
      "password": "",
      "storageDriverName": "ontap-nas",
      "storagePrefix": "bala_",
      "version": 1
    }

    # tridentctl create backend -f backend.json -n trident-ns
    +-------------+----------------+--------------------------------------+--------+---------+
    |    NAME     | STORAGE DRIVER |                 UUID                 | STATE  | VOLUMES |
    +-------------+----------------+--------------------------------------+--------+---------+
    | nas_backend | ontap-nas      | 2d55fcc6-dec3-4c5f-960a-c1cc7a3678df | online |       0 |
    +-------------+----------------+--------------------------------------+--------+---------+

My backend definition names the storage driver to use (“ontap-nas”) and contains the connection details for the storage cluster. Trident offers a number of storage drivers that target different use cases and scalability requirements.
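To illustrate the one-backend-per-SVM approach with a different driver, here is a hedged sketch of a second backend for an iSCSI SVM using the ontap-san driver. It is not part of my setup: the management LIF address, SVM name and backend name are hypothetical placeholders, and the password is left empty just as in the definition above.

```shell
# Write a second backend definition that targets a dedicated iSCSI SVM.
# Every value below is a placeholder: the management LIF address, the SVM
# name and the backend name are hypothetical, and the password is left blank.
cat > backend-san.json <<'EOF'
{
  "version": 1,
  "storageDriverName": "ontap-san",
  "backendName": "san_backend",
  "managementLIF": "192.0.2.10",
  "svm": "iscsi_svm",
  "username": "admin",
  "password": ""
}
EOF

# Register the backend only when tridentctl is available on this machine.
if command -v tridentctl >/dev/null 2>&1; then
  tridentctl create backend -f backend-san.json -n trident-ns
fi
```

A StorageClass with backendType set to "ontap-san" would then select this backend in the same way the "nas" StorageClass below selects the NFS one.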

Creating a StorageClass and PVCs

Once a backend is available, I can create StorageClasses that map to backends and use them to create PVCs. Here’s a simple StorageClass definition that I use to create a “nas” StorageClass:

    # cat sc.yaml
    apiVersion: storage.k8s.io/v1
    kind: StorageClass
    metadata:
      name: nas
    provisioner: csi.trident.netapp.io
    parameters:
      backendType: "ontap-nas"
      snapshots: "True"
      provisioningType: "thin"
      encryption: "true"

The provisioner string is set to “csi.trident.netapp.io”, so that requests for this StorageClass are handled by Trident’s CSI drivers. Parameters can also be specified as key-value pairs to narrow down the candidate list of backends. This StorageClass will be used to create PVCs whose volumes are encrypted and support snapshot creation.

You can always examine the list of backends that satisfy a StorageClass by running tridentctl get backend -o json. Each backend has a storage.storageClasses attribute indicating the StorageClasses which can be used to provision a volume.
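Reading that JSON by eye gets tedious with several backends. The sketch below assumes jq is installed, which is not part of the setup described here, and uses a made-up function name. It searches the output recursively for any storageClasses attribute, so it does not depend on the exact layout of the JSON.

```shell
# Print every storageClasses list found in the backend JSON.
# Assumptions: jq is installed, tridentctl is on the PATH, and Trident runs
# in trident-ns by default. The function name is illustrative only.
list_backend_storageclasses() {
  tridentctl get backend -o json -n "${1:-trident-ns}" |
    jq -c '.. | objects | select(has("storageClasses")) | .storageClasses'
}
```

Each output line is one JSON array of StorageClass names. An empty array means nothing on that part of the backend matches any StorageClass.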

Here’s my PVC definition:

    # cat pvc.yaml
    kind: PersistentVolumeClaim
    apiVersion: v1
    metadata:
      name: nas-volume-claim
      annotations:
        trident.netapp.io/exportPolicy: "default"
        trident.netapp.io/snapshotPolicy: "default"
    spec:
      accessModes:
        - ReadWriteOnce
      resources:
        requests:
          storage: 5Gi
      storageClassName: "nas"

Trident supports annotations that let users pass feature arguments on a per-PVC basis. In this example, I explicitly set the export policy (which controls client access to the volume on the storage cluster) and the snapshot policy (for taking automatic storage-level snapshots) to reference pre-created policies.

    # oc create -f sc.yaml
    storageclass.storage.k8s.io/nas created

    # oc create -f pvc.yaml
    persistentvolumeclaim/nas-volume-claim created

    # oc get pvc
    NAME               STATUS   VOLUME                                     CAPACITY   ACCESS MODES   STORAGECLASS   AGE
    nas-volume-claim   Bound    pvc-9e72871d-0fc2-11ea-9d76-525400af4de6   5Gi        RWO            nas            12s

There it is! Trident took care of creating a volume and presenting it as a PV to my OpenShift cluster, and I can reference this PVC in my pod/deployment definitions just like any other.
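As a minimal sketch of that last step, the snippet below writes a pod manifest that mounts the claim. Only the claim name nas-volume-claim comes from the PVC above. The pod name, container image, command and mount path are illustrative assumptions.

```shell
# Write a pod manifest that mounts the PVC created above.
# Assumptions: the pod name (nas-demo), the image, the sleep command and
# the mount path (/data) are hypothetical placeholders.
cat > pod.yaml <<'EOF'
apiVersion: v1
kind: Pod
metadata:
  name: nas-demo
spec:
  containers:
    - name: app
      image: registry.access.redhat.com/ubi8/ubi-minimal
      command: ["sleep", "3600"]
      volumeMounts:
        - name: data
          mountPath: /data
  volumes:
    - name: data
      persistentVolumeClaim:
        claimName: nas-volume-claim
EOF

# Create the pod only when the oc client is available on this machine.
if command -v oc >/dev/null 2>&1; then
  oc create -f pod.yaml
fi
```

Once the pod is running, anything written under /data lands on the Trident-provisioned volume and outlives the pod.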

By now, it’s easy to see how Trident can add significant value to a stateful application. Persistent storage is a key requirement for successfully adopting container-centric DevOps, and the focus must be on simplifying the consumption of storage natively at the Kubernetes layer. This eliminates the hard dependency on infrastructure and storage admins, freeing developers to focus on application development and DevOps/SRE professionals to keep operations running.

NetApp is a leader in the storage industry and provides a comprehensive suite of data management solutions to meet performance and efficiency service levels. With Trident, OpenShift users can scale workloads with less effort and avoid the storage bottleneck, for a more seamless end-to-end experience.

Trident’s documentation and Slack workspace are great places to get started and ask questions. In addition, netapp.io is home to NetApp’s contributions in the Open Ecosystems space and contains a rich set of blogs on Trident.

