In this post:

- Learn the differences between AI, ML, and Deep Learning
- Common challenges in adopting ML
- How Red Hat can help you on your AI/ML journey

Machine learning has many applications and benefits for a variety of businesses. However, it’s complicated and requires collaboration to bring you value. In this post, we'll talk about how Red Hat can assist you in being successful with artificial intelligence and machine learning (AI/ML), no matter what phase of your journey you’re in.

What is the difference between Artificial Intelligence, Machine Learning, and Deep Learning?

You can think of artificial intelligence, machine learning, and deep learning as a stack of nested dolls. In other words, deep learning is a part of machine learning, which itself is a part of artificial intelligence.

To define these concepts more closely: AI is a broad term for machines that mimic what humans can do, such as learning and problem solving. It encapsulates both machine learning and deep learning.

Machine learning allows programs to automatically improve through experience, while deep learning uses neural networks to learn complex patterns in huge amounts of data. You might use deep learning for chatbots, translation or virtual assistants.

What is the difference between traditional programming and machine learning?

Traditional programming could be described as a process in which a person (or people) creates a program that takes in a set of rules and data and produces answers as an output.

Machine learning, on the other hand, is an automated process that takes in data and answers as inputs and then produces rules as outputs. Some real-world examples of machine learning include image recognition, speech recognition, and even medical diagnosis.

Think about your favorite streaming platform's recommendation system. By feeding in demographic factors as input data and user watch history as output data, for example, an algorithm can create a program that tries to predict whether a user will like a certain movie.
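To make the contrast concrete, here is a minimal sketch in Python. It is a toy illustration, not how a real recommendation system is built: the viewer ages, the "liked" labels, and both function names are made up for this example. The first function is a rule a person wrote by hand; the second takes data and answers and produces the rule itself, using a simple one-feature threshold search.

```python
from typing import Callable, List, Tuple


# Traditional programming: a person writes the rule by hand.
def handwritten_rule(age: int) -> bool:
    """Hypothetical hand-coded rule: recommend the movie to viewers under 30."""
    return age < 30


# Machine learning: data and answers go in, a rule comes out.
def learn_threshold_rule(
    ages: List[int], liked: List[bool]
) -> Tuple[int, Callable[[int], bool]]:
    """Pick the age cutoff that classifies the most training examples correctly.

    Simplifying assumption: the rule always has the form
    "viewers at or below the cutoff like the movie".
    """
    best_cutoff, best_correct = 0, -1
    for cutoff in sorted(set(ages)):
        # Count how many known answers the candidate rule reproduces.
        correct = sum((age <= cutoff) == label for age, label in zip(ages, liked))
        if correct > best_correct:
            best_cutoff, best_correct = cutoff, correct

    def learned_rule(age: int) -> bool:
        return age <= best_cutoff

    return best_cutoff, learned_rule


# Made-up training data: viewer ages (input) and whether they liked the movie (answers).
ages = [18, 22, 25, 31, 40, 52, 60]
liked = [True, True, True, True, False, False, False]

cutoff, learned_rule = learn_threshold_rule(ages, liked)
print(cutoff)                               # 31
print(learned_rule(28), learned_rule(45))   # True False
print(handwritten_rule(31))                 # False: the hand-written rule misses this viewer
```

The hand-written rule encodes someone's guess about the audience, while the learned rule is derived from the examples and changes when the data changes. Real systems use many more features and far more capable models, but the direction of the flow is the same.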

Common problems in adopting ML

Let's start with two common problems that we see in the market.

#1: No one wants to work with the data

Once a machine learning initiative is underway, it’s common to get lost in model building and tuning instead of thinking about collecting, storing, or processing data.

But data is very, very important.

A paper written by researchers at Google pointed out that 92% of AI practitioners reported experiencing one or more “data cascades” with their AI systems, defined as “compounding events causing negative, downstream effects from data issues, resulting in technical debt over time” (5). These data cascades are often avoidable, and stem from undervaluing data.

Instead of viewing data as grunt work in ML workflows, the researchers urge AI practitioners to prioritize data excellence: using certain processes, standards, infrastructure, and incentives to “improve the quality and sanctity of data” (10).

You can learn more about data excellence on the website for the 1st Data Excellence conference.

#2: Collaboration

The second problem that we see in machine learning is that it requires strong collaboration across a variety of different teams.

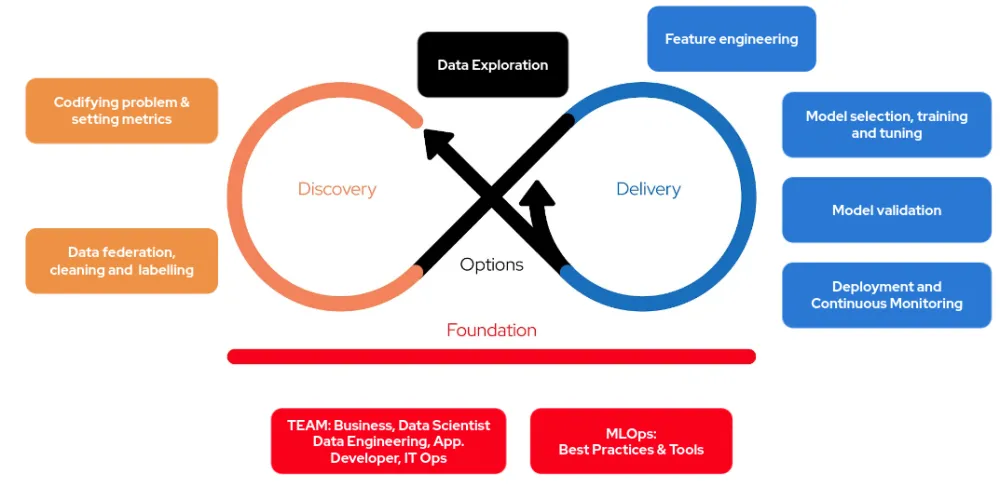

As you can see in the chart above, a healthy machine learning lifecycle needs many different personas working together, including data scientists, ML engineers, business analysts, and developers.

Adding to this challenge, each persona has a different focus and different priorities, and may even work in a different team within your company.

In other words, to be successful with machine learning, you need a collaborative, cross-functional team that spans multiple business verticals. One way to think about the importance of cross-team collaboration is with what we call a Mobius loop.

With a Mobius Loop, we have a methodical way of understanding an AI/ML initiative while collaborating across different verticals.

As you can see above, on the left hand side, we first codify the problem, explore the data, and understand how it will be federated.

Once we are happy with that, then we go to ML model building and deployment in different environments. This iterative approach allows us to gradually experiment with our hypothesis and make sure that we are creating continuous value for the business. But as you can see, it all starts with a solid foundation between the business team, data scientists, IT ops, and so on.

Team culture, collaborative environments, and technical practices are all foundational: they support fast, iterative journeys through the other parts of the loop.

Without the foundation, teams cannot reach sustainable, continuous delivery.

Red Hat uses these Open Practice Library principles during customer engagements to help ensure that your initiative is successful. The library contains 107 product lifecycle practices, contributed by 73 individuals under a Creative Commons license.

Learn more about AI/ML and Red Hat

Red Hat OpenShift Data Science is a managed cloud service for data scientists and developers of intelligent applications. It provides a fully supported sandbox in which to rapidly develop, train, and test machine learning (ML) models in the public cloud before deploying in production.

Find out how Red Hat Consulting is helping customers operationalize their ML models through machine learning operations (MLOps) practices and see how it all runs on OpenShift. Whether you are deploying ML models into production, looking for a data science research platform, or just starting to explore AI/ML, this three-part webinar series is for you.

About the authors

Faisal Masood is a Principal Architect and AI/ML lead at Red Hat Asia Pacific and has over 20 years of IT experience. His primary role is to assist customers in defining and executing tactical and strategic goals. Masood works with lighthouse clients in the APAC region and helps design and develop evolutionary architectures. These architectures are related to data life cycle, machine learning, microservices, continuous delivery, and infrastructure as code. He has an engineering degree from NED University and has completed continuing education courses from MIT Sloan and the University of New Mexico.

Ross Brigoli, a Senior Architect at Red Hat, has over 10 years of experience working as a Software Engineer and Technical Architect at companies such as Accenture and Crédit Agricole. Well-versed in agile principles, he has a passion for solution design and holds an M.S. in Computer Applications from Mindanao State University.
