In my previous article, 4 good habits for building Ansible Automation Platform execution environments, I discussed Ansible execution environments (EEs). That article contains best practices around what to put into your EEs for Red Hat Ansible Automation Platform.

As a brief recap, an automation execution environment includes:

- A version of Python

- A version of ansible-core

- Python modules/dependencies

- Ansible Content Collections (optional)

The previous article describes several ways of building these containers. This article covers the basics of creating an execution environment and focuses on encapsulating the process entirely on Ansible Automation Platform machines.

This article goes into detail about building execution environments as code using collections. It is not an exhaustive dive into all options, so I provide links to documentation for further reading. In addition, I created a repository for code reference. The steps below are advanced practices that assemble many pieces, and they assume you have experience using Ansible automation controller and automation hub.

Configuration as Code: A brief recap

Ansible is an automation tool, yet all too often I see people create and configure their automation platform by hand. Several collections are dedicated to managing Ansible Automation Platform with Ansible using Configuration as Code (CaC). I reference a few in this article.

The benefits of CaC are:

- Standardized settings across multiple instances, such as development and production

- Version control is inherent to storing your configuration in a Git repository

- Easy to track changes and troubleshoot problems caused by a change

- Ability to use CI/CD processes to keep deployments current and prevent drift

The following summarizes the collections for managing CaC:

- ansible.controller and awx.awx are module collections that interact with their respective products (automation controller and AWX). Both are built on the same code base and are designed specifically to make changes through the API.

- infra.controller_configuration is a role-based collection. It is a set of roles built to use one of the collections above to take object definitions and push their configurations to automation controller or AWX.

- infra.ah_configuration manages automation hub. It contains modules and roles that push configuration to the hub. It builds on the code from the previous collections but is tailored explicitly to automation hub.

- infra.ee_utilities helps build execution environments. It helps you migrate from Tower to automation controller and create execution environments from definition variables.

The infra collections are part of the new validated content available with the bundled install of Ansible Automation Platform and on Ansible Galaxy.
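If you want to use these collections outside the bundled installer, a requirements file along the following lines could pull them in. This is a sketch based on two assumptions. First, your ansible.cfg points at automation hub, which hosts the certified ansible.controller collection. Second, if you use only Ansible Galaxy, you swap in awx.awx instead.

```yaml
---
# collections/requirements.yml (sketch)
# ansible.controller is certified content from automation hub;
# replace it with awx.awx when installing only from Ansible Galaxy.
collections:
  - name: ansible.controller
  - name: infra.controller_configuration
  - name: infra.ah_configuration
  - name: infra.ee_utilities
```

To install them, run `ansible-galaxy collection install -r collections/requirements.yml`. By default, automation controller also installs a project's collections/requirements.yml when it syncs the project.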

Define an execution environment in code

The first step is to create an execution environment definition playbook. I include the code here and a link to its repository file. You could store this definition as a variable file, in an inventory, or in any other way Ansible stores variables. This format is easy to use and compact.

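I'll go over the parts of the ee_builder_controller.yaml playbook. At the top, set gather_facts to false to skip fact gathering, and declare the ee_utilities collection. The playbook refers to the collections by their earlier redhat_cop namespace. They have since moved to the infra namespace, so if you install them from there, use infra.ee_utilities and so on.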

```yaml
---
- name: Playbook to create custom EE
  hosts: all
  gather_facts: false
  collections:
    - redhat_cop.ee_utilities
  vars:
    # For controller configuration definition
    ee_reg_credential: Automation Hub Container Registry
    ee_list:
      - name: custom_ee
        alt_name: Custom EE
        tag: 1-11-21-2
        # base_image
        bindep:
          - python38-requests [platform:centos-8 platform:rhel-8]
          - python38-pyyaml [platform:centos-8 platform:rhel-8]
        python:
          - pytz  # for schedule_rrule lookup plugin
          - python-dateutil>=2.7.0  # schedule_rrule
          - awxkit  # For import and export modules
        collections:
          - name: awx.awx
            version: 21.9.0
          - name: redhat_cop.controller_configuration
          - name: redhat_cop.ah_configuration
        prepend:
          - RUN whoami
          - RUN cat /etc/os-release
        append:
          - RUN echo This is a post-install command!
  roles:
    - redhat_cop.ee_utilities.ee_builder
```

In the variables above:

- ee_reg_credential is the name of the automation controller credential used to access the container registry. The execution environment definition created on automation controller references it.

- ee_list is the list of execution environments to build. It can contain one or more EEs; defining it as a list provides flexibility.

Inside the ee_list variable are additional variables to consider:

- name is the name of the image to be created.

- alt_name is the alternate name for the image used in the automation controller definition.

- tag is the container image tag. You have many choices, but it helps to use a date or another consistent convention.

- bindep is the variable list that provides bindep requirements to be passed to dnf.

- python is the variable list to provide Python requirements in pip freeze format.

- collections is the list of required collections in the format required for Galaxy.

- prepend includes commands to use before the main build steps.

- append includes commands to use after the main build steps.

These variables correspond to the parameters of an ansible-builder execution environment definition file. The prepend and append values are not required; they are included only to illustrate the example. In general, you can use them to add build steps such as proxy settings or additional files and configuration.
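For comparison, here is roughly what the custom_ee entry would look like as a standalone ansible-builder definition file. This sketch assumes ansible-builder's version 1 schema, which still has separate base and builder images, and it assumes the `:latest` tags. The role may generate something slightly different. The galaxy, python, and system entries point to files that would hold the collections, python, and bindep lists from ee_list.

```yaml
---
# execution-environment.yml (sketch, ansible-builder version 1 schema)
version: 1

build_arg_defaults:
  # Image tags are assumptions; pin the versions you actually use.
  EE_BASE_IMAGE: registry.redhat.io/ansible-automation-platform-23/ee-minimal-rhel8:latest
  EE_BUILDER_IMAGE: registry.redhat.io/ansible-automation-platform-23/ansible-builder-rhel8:latest

dependencies:
  galaxy: requirements.yml   # the "collections" list from ee_list
  python: requirements.txt   # the "python" list from ee_list
  system: bindep.txt         # the "bindep" list from ee_list

additional_build_steps:
  prepend:
    - RUN whoami
    - RUN cat /etc/os-release
  append:
    - RUN echo This is a post-install command!
```

With those files in place, `ansible-builder build --file execution-environment.yml --tag hub.nas/custom_ee:1-11-21-2` would build the image locally. The ee_builder role automates this step and also pushes the result to the destination registry.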

To understand more about ansible-builder, please refer to its documentation or this Introduction to Ansible Builder.

Additional variables for creating an EE in code

Running this playbook on its own does not create an execution environment. You must set several other variables for it to run. In this example, Ansible automation controller sets these required variables on the Job Template or in a Credential.

These variables represent three important aspects of building an execution environment.

1. Where are images pulled from?

I like to download from the Red Hat registry, which the role and the ansible-builder program do by default, but you can set alternate sources.

The two images used in the example come from the registry.redhat.io/ansible-automation-platform-23 image registry. They are:

- base_image: ee-minimal-rhel8

- builder_image: ansible-builder-rhel8

2. Where are images pushed to?

Specify the fully qualified domain name (FQDN) of the destination container registry, such as quay.io, your automation hub, or another image registry your company uses.

3. Where are collections pulled from?

Define the automation hub host and token to use.

Set the following variables to answer these questions:

- base_registery_username is the username for the registry to pull container images from. This can be the Red Hat registry or a local one.

- base_registery_password is the password for the base registry.

- ee_registry_dest is the destination registry FQDN such as hub.nas.

- ee_registry_username is the destination registry username (where it will push the image).

- ee_registry_password is the password for the destination registry.

- ee_validate_certs indicates whether to validate certificates for both of the above registries.

- ah_host is the FQDN of the automation hub to pull collections from, such as hub.nas.

- ah_token is the token to use for the automation hub.

- ee_builder_dir_clean removes the files that the role automatically creates in a randomly named /tmp folder.
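To test the playbook from the command line before you wire it into automation controller, you can put the same answers in an extra-vars file. The following is a minimal sketch. The file name and the environment variable names it reads secrets from are hypothetical.

```yaml
---
# ee_build_vars.yml (hypothetical name)
# Secrets come from environment variables instead of being written here.
base_registery_username: rhn-username
base_registery_password: "{{ lookup('ansible.builtin.env', 'BASE_REGISTRY_PASSWORD') }}"
ee_registry_dest: hub.nas
ee_registry_username: admin
ee_registry_password: "{{ lookup('ansible.builtin.env', 'EE_REGISTRY_PASSWORD') }}"
ee_validate_certs: false
ah_host: hub.nas
ah_token: "{{ lookup('ansible.builtin.env', 'AH_TOKEN') }}"
ee_builder_dir_clean: true
```

You could then run `ansible-playbook -i hub.nas, ee_builder_controller.yaml -e @ee_build_vars.yml`. The trailing comma tells Ansible that hub.nas is a host list rather than an inventory file.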

Build an execution environment with the automation controller

So far, you've seen how to define an execution environment in code. The next step is finding a suitable place to run the playbook.

You can build an execution environment from a command-line interface (CLI) or in a privileged container that is set up to run Podman or Docker inside it. The latter can be done on Red Hat OpenShift or other build environments, but it should never be done on Ansible automation controller.

I'll demonstrate this process using the automation hub host to run the builder playbook in this example. To run this example, make sure to install ansible-builder on the host.
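One way to handle that prerequisite as code is a small preparation playbook like the following. The sketch assumes a RHEL 8 build host that is already subscribed to a repository shipping the ansible-builder package, such as the Ansible Automation Platform repository. It also installs Podman, because ansible-builder needs a container runtime to build images.

```yaml
---
# Hypothetical preparation playbook for the EE build host (RHEL 8 assumed).
- name: Prepare the execution environment build host
  hosts: all
  become: true
  gather_facts: false
  tasks:
    - name: Install ansible-builder and Podman
      ansible.builtin.dnf:
        name:
          - ansible-builder
          - podman
        state: present

    - name: Confirm ansible-builder runs
      ansible.builtin.command: ansible-builder --version
      register: builder_version
      changed_when: false  # read-only check

    - name: Show the installed version
      ansible.builtin.debug:
        var: builder_version.stdout
```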

To configure Ansible automation controller as code, I use the infra.controller_configuration collection. In this section, I refer to the file configure_controller_no_survey.yaml.

The configure_controller_no_survey.yaml file sets up variables for all required roles and objects in the Ansible automation controller.
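The variables only describe objects; the roles in infra.controller_configuration do the work. The sketch below shows one way to hand those variables to the roles. It assumes they sit in a hypothetical controller_objects.yml and that the controller connection variables described under Additional variables are set. The loop order matters because later objects refer to earlier ones. For example, a Job Template needs its project, inventory, and credentials to exist first.

```yaml
---
# Sketch: apply object definitions with infra.controller_configuration roles.
- name: Configure automation controller as code
  hosts: localhost
  connection: local
  gather_facts: false
  vars_files:
    - controller_objects.yml  # hypothetical file with the controller_* lists
  tasks:
    - name: Apply each object type, dependencies first
      ansible.builtin.include_role:
        name: "infra.controller_configuration.{{ item }}"
      loop:
        - credential_types        # reads controller_credential_types
        - credentials             # reads controller_credentials
        - projects                # reads controller_projects
        - inventories             # reads controller_inventories
        - hosts                   # reads controller_hosts
        - job_templates           # reads controller_templates
        - workflow_job_templates  # reads controller_workflows
```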

Examine the parts below.

Projects

These fields correspond to the ones on the automation controller's project page:

```yaml
controller_projects:
  - name: EE build project
    scm_type: git
    scm_url: https://github.com/sean-m-sullivan/ee_definition_config.git
    scm_branch: main
    scm_update_on_launch: false
    description: EE build project
    organization: Default
    wait: true
    update: false
```

For a Git project, set scm_type, scm_url, scm_branch, and several other fields. For additional information, consult the documentation for the projects role.

Inventories

This example requires two inventories, specifying their name and the organization to which they belong:

```yaml
controller_inventories:
  - name: AAP Hosts
    organization: Default
  - name: Blank (localhost only)
    organization: Default
```

In the next step, I will add hosts to them. For reference, consult the documentation for the inventories role.

Hosts

The hosts require the name, inventory, and variables:

```yaml
controller_hosts:
  - name: 10.242.42.143
    inventory: AAP Hosts
    variables:
      ansible_connection: ssh
      ansible_ssh_user: awx
      ansible_ssh_pass: password
  - name: localhost
    inventory: Blank (localhost only)
    variables:
      ansible_connection: local
```

In this case, I set the IP address and connection details for the hub host and a localhost. Alternatively, you could set these with a machine credential. For details, consult the documentation for the hosts role.
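Here is a sketch of the machine credential alternative. It assumes a vault-encrypted variable named vault_hub_ssh_password (hypothetical) holds the password.

```yaml
controller_credentials:
  - name: Hub host SSH  # hypothetical credential name
    description: SSH login for the EE build host
    credential_type: Machine
    organization: Default
    inputs:
      username: awx
      password: "{{ vault_hub_ssh_password }}"  # hypothetical vaulted variable
```

You would then add Hub host SSH to the credentials list of the Execution Environment Builder Job Template and drop ansible_ssh_user and ansible_ssh_pass from the host variables.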

Credential_types and credentials

Defining credentials as code can be complicated because passwords must be protected. In the reference file, I created a custom credential type with the requisite inputs and injectors. Automation controller stores the credential's values encrypted, and the playbook still receives them as the clear-text variables the role expects.

```yaml
controller_credentials:
  - credential_type: Registry Credentials
    name: Execution Build Credentials
    organization: Default
    inputs:
      base_registery_username: rhn-username
      # Placeholder: substitute a "!vault |" encrypted string here
      base_registery_password: !vaulted
      ee_registry_username: admin
      ee_registry_password: secret123
      ee_validate_certs: false
      ah_host: hub.nas
      ah_token: 55987617133a0ef2585446c73bd8340e5b17e07f
```

When using the controller_configuration role for all credentials, I recommend using Ansible Vault or another credential source. For an example of using Ansible Vault, read How to encrypt sensitive data in playbooks with Ansible Vault. For simplicity in this article, some fields show clear-text passwords; in practice, it's recommended to encrypt passwords.

The credential configuration is long, so this snippet shows only the beginning. For the full example, refer to the file configure_controller_no_survey.yaml in the source code repository.

This credential type and credential take the variables listed in the Additional variables section above and make them available to a Job Template. For more information, consult the documentation for the credential types role and the credentials role.
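The credential type itself is not shown above. A definition along these lines would expose the variables from the Additional variables section. Treat it as a sketch: the field labels and the required list are my assumptions, not the contents of the reference file. The `!unsafe` tag stops Ansible from templating the injector strings while the configuration playbook runs. As a result, automation controller receives them literally and renders them at job launch.

```yaml
controller_credential_types:
  - name: Registry Credentials
    description: Variables consumed by ee_builder_controller.yaml
    kind: cloud  # custom credential types use kind cloud or net
    inputs:
      fields:
        - {id: base_registery_username, type: string, label: Base registry username}
        - {id: base_registery_password, type: string, label: Base registry password, secret: true}
        - {id: ee_registry_username, type: string, label: Destination registry username}
        - {id: ee_registry_password, type: string, label: Destination registry password, secret: true}
        - {id: ee_validate_certs, type: boolean, label: Validate registry certificates}
        - {id: ah_host, type: string, label: Automation hub host}
        - {id: ah_token, type: string, label: Automation hub token, secret: true}
      required:
        - base_registery_username
        - base_registery_password
        - ee_registry_username
        - ee_registry_password
        - ah_host
        - ah_token
    injectors:
      # Passed through unrendered; the controller fills these in at launch.
      extra_vars:
        base_registery_username: !unsafe "{{ base_registery_username }}"
        base_registery_password: !unsafe "{{ base_registery_password }}"
        ee_registry_username: !unsafe "{{ ee_registry_username }}"
        ee_registry_password: !unsafe "{{ ee_registry_password }}"
        ee_validate_certs: !unsafe "{{ ee_validate_certs }}"
        ah_host: !unsafe "{{ ah_host }}"
        ah_token: !unsafe "{{ ah_token }}"
```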

Job_templates

These two Job Templates run playbooks in the project defined above:

```yaml
controller_templates:
  - name: Execution Environment Builder
    job_type: run
    inventory: AAP Hosts
    credentials:
      - Execution Build Credentials
    project: EE build project
    execution_environment: Automation Hub Minimal execution environment
    playbook: ee_builder_controller.yaml
    extra_vars:
      ee_registry_dest: hub.nas
      ee_builder_dir_clean: true
  - name: Push Execution Environments to Controller
    job_type: run
    inventory: Blank (localhost only)
    credentials:
      - Controller Credential
    project: EE build project
    execution_environment: Automation Hub Minimal execution environment
    playbook: push_to_controller.yaml
```

These Job Templates use objects defined in the previous steps:

- Inventories

- Credentials

- Minimal execution environment installed by default on the automation controller

- Project

- Playbook to use

- Variables to set for the Job Template

You can find the second playbook in the source code repository.

All the previous steps lead up to this one. This pair of Job Templates builds the EE and then pushes it to the controller. You could combine them into a single Job Template; however, I split them up for illustration and then chain them in a workflow.

For details, consult the documentation for the job_templates role.
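The push_to_controller.yaml playbook lives in the repository and is not reproduced here. To give a rough idea of what such a playbook can do, the sketch below registers each image from ee_list as an execution environment on automation controller. It rests on three assumptions:

- A hypothetical ee_definitions.yml is shared with the builder playbook.
- The ansible.controller collection is available to the job, for example through the project's collections/requirements.yml.
- The Controller Credential injects the CONTROLLER_HOST, CONTROLLER_USERNAME, and CONTROLLER_PASSWORD environment variables that the module reads.

```yaml
---
# Hypothetical stand-in for push_to_controller.yaml.
- name: Push execution environments to automation controller
  hosts: localhost
  gather_facts: false
  vars_files:
    - ee_definitions.yml  # hypothetical: ee_list, ee_registry_dest, ee_reg_credential
  tasks:
    - name: Create or update one controller EE per ee_list entry
      ansible.controller.execution_environment:
        name: "{{ item.alt_name }}"
        image: "{{ ee_registry_dest }}/{{ item.name }}:{{ item.tag }}"
        credential: "{{ ee_reg_credential }}"
        pull: always  # re-pull so jobs pick up rebuilt images
        state: present
      loop: "{{ ee_list }}"
      loop_control:
        label: "{{ item.alt_name }}"
```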

Workflow_job_templates

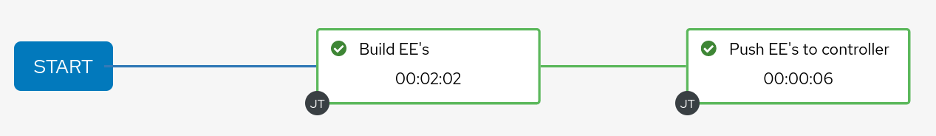

The last step is to create a Workflow to execute the two Job Templates defined in the previous step:

```yaml
controller_workflows:
  - name: Update Execution Environments
    workflow_nodes:
      - identifier: Build EE's
        unified_job_template:
          name: Execution Environment Builder
          type: job_template
          organization:
            name: Default
        related:
          success_nodes:
            - identifier: Push EE's to controller
      - identifier: Push EE's to controller
        unified_job_template:
          name: Push Execution Environments to Controller
          type: job_template
          organization:
            name: Default
```

This Workflow sets an identifier for each workflow node to be used as both a name in the diagram and a unique identifier for future reference. It gives information on how to find the Job Template by setting the type and which organization it belongs to. Finally, the "related" field specifies how the two nodes are linked.

You can find more information about defining workflows in the documentation for the workflow_job_templates role and my article Automation controller workflow deployment as code.

Additional variables

Additional variables are set in this playbook to connect to the automation controller:

```yaml
controller_hostname: https://controller.nas/
controller_username: admin
controller_password: secret123
controller_validate_certs: false
```

These include the hostname, username, password, and whether to validate certificates. These variables are important and can be set as a Red Hat Ansible Automation Platform credential in the automation controller.
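Here is a sketch of keeping the controller password out of plain text. It assumes a hypothetical vault_controller_password variable, defined in a separate vars file that you encrypt with `ansible-vault encrypt`.

```yaml
---
# vars/controller_auth.yml (hypothetical): references the secret only;
# vault_controller_password lives in a separate vault-encrypted file.
controller_hostname: https://controller.nas/
controller_username: admin
controller_password: "{{ vault_controller_password }}"
controller_validate_certs: false
```

Load both files through vars_files, and run the configuration playbook with `--ask-vault-pass` or `--vault-password-file` so Ansible can decrypt the value.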

Run the build workflow

You have now created everything automation controller needs to run the build. Navigate to the Update Execution Environments workflow and launch it. If you want to limit access, I recommend creating roles to control access to the workflow and credentials. If you created everything successfully, the workflow should run without issue. The only "gotchas" are making sure ansible-builder is installed on the target machine and resolving any firewall or proxy issues in your environment.
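You can also launch the workflow without the web UI, for example from a CI pipeline. The following sketch assumes the ansible.controller collection is installed where the playbook runs. It reuses the connection variables from the Additional variables section.

```yaml
---
# Sketch: launch the EE build workflow from a playbook.
- name: Launch the EE build workflow
  hosts: localhost
  gather_facts: false
  tasks:
    - name: Run Update Execution Environments and wait for it to finish
      ansible.controller.workflow_launch:
        name: Update Execution Environments
        organization: Default
        wait: true
        timeout: 3600  # seconds; image builds can take a while
        controller_host: "{{ controller_hostname }}"
        controller_username: "{{ controller_username }}"
        controller_password: "{{ controller_password }}"
        validate_certs: "{{ controller_validate_certs }}"
```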

I also recommend you set this up on a schedule to run weekly and create webhooks to update the EE whenever you update any of your custom collections.
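For the weekly schedule, infra.controller_configuration provides a schedules role that reads controller_schedules. In the following sketch, the schedule name, day, time, and start date are placeholder assumptions.

```yaml
controller_schedules:
  - name: Weekly EE rebuild  # hypothetical name
    unified_job_template: Update Execution Environments
    # Every Sunday at 02:00 UTC, starting Sunday 1 January 2023 (placeholder values)
    rrule: "DTSTART:20230101T020000Z RRULE:FREQ=WEEKLY;INTERVAL=1;BYDAY=SU"
    enabled: true
```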

Wrap up

I hope these articles show you the power of using Configuration as Code and some tools to help you build execution environments. You can use these same methods to bring the benefits of Git to making changes to your automation controller and hub.

Where to go next

Each section in this series links you to additional documentation using the described roles. The following are some more links that can provide useful documentation:

In addition, for AnsibleFest 2022, my colleagues and I put together a lab workshop that covers these topics. The environment is not provided, but you should be able to complete it with an automation controller and hub.

Check out the self-paced interactive hands-on labs with Ansible Automation Platform.

Finally, if you want a deep dive into the Ansible Automation Platform, look for my book from Packt, Demystifying Ansible Automation Platform.
