Red Hat Ansible Automation Platform introduced execution environments (EEs) with the 2.0 release. Previously, Ansible Automation Platform relied on Python virtual environments to manage Python dependencies. Execution environments package dependencies in container images, which makes them portable. Automation controller runs its jobs in EEs, and you can run them locally using ansible-navigator.

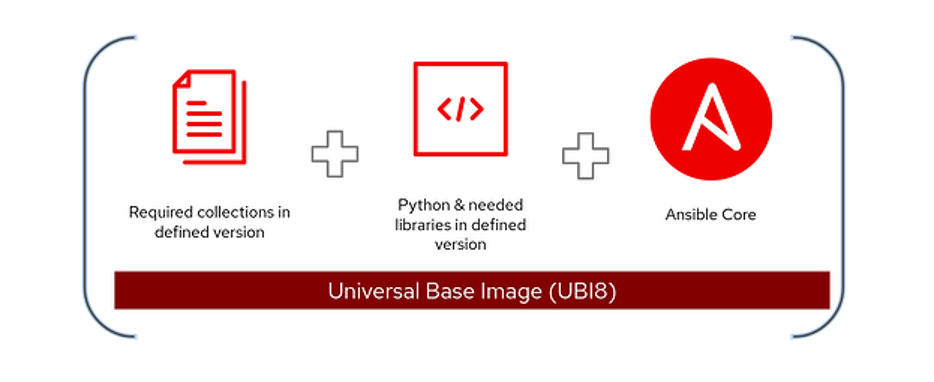

Ansible Automation Platform includes a command-line tool for building EEs called ansible-builder. The builder bundles the following into an EE container image:

- A version of Python

- A version of ansible-core

- Python modules and dependencies

- System dependencies

- Ansible Content Collections (optional)
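
As a rough sketch, the items in this list map onto an ansible-builder definition file (version 3 schema) along the following lines. The base image, the Python and ansible-core versions, and the dependency file names are assumptions chosen for illustration, not values from this article.

```yaml
---
# execution-environment.yml (ansible-builder version 3 schema)
version: 3

images:
  base_image:
    # Assumed upstream base image; substitute the image you actually use.
    name: quay.io/centos/centos:stream9

dependencies:
  # A version of Python (assumed package name and path for this base image)
  python_interpreter:
    package_system: python3.11
    python_path: /usr/bin/python3.11
  # A version of ansible-core (hypothetical version range)
  ansible_core:
    package_pip: "ansible-core>=2.15,<2.16"
  ansible_runner:
    package_pip: ansible-runner
  # Python modules and dependencies
  python: requirements.txt
  # System dependencies
  system: bindep.txt
  # Ansible Content Collections (optional)
  galaxy: requirements.yml
```

The three referenced files sit next to the definition file. Each can also be written inline as a list under the same key.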

Over time, good practices for building EEs have emerged. This article goes over the recommended practices around builds. A follow-up article explains how to use Ansible Automation Platform to automate the process.

For more detail about what goes into EEs, you can refer to:

- The anatomy of automation execution environments

- What's new in Ansible Automation Platform 2: automation execution environments

Execution environment good practices

There are a few practices to keep in mind when building EEs:

- Restrict images to only what is needed.

- Update EEs regularly.

- Do not create monoliths.

- Keep track of versions and why you included pieces.

I'll cover these four practices in more detail, beginning with image management.

1. Restrict images to only what is needed

When ansible-builder builds an execution environment, it gathers the additional requirements declared by the included collections on its own. If you only use ansible-navigator, it's easier to include all collections needed when building an image.

However, a project can pull collections from Ansible automation hub or Galaxy when using Ansible automation controller. If your deployment includes a hub, you can shrink your images and make them more versatile by building in only the collections that carry additional requirements and listing any others in the project's requirements file.
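
As an illustration, automation controller installs the collections listed in a project's collections/requirements.yml file when the project is updated. The collection name and version below are hypothetical placeholders for a collection that has no Python or system requirements of its own.

```yaml
---
# collections/requirements.yml in the project repository
collections:
  # Hypothetical collection with no extra Python or system requirements,
  # so it does not need to be built into the EE image.
  - name: my_namespace.my_collection
    version: "1.2.3"   # hypothetical pin; track the version you have tested
```

Pinning the version here is what the tradeoff discussed next refers to: the image stays small, but the project now carries the version bookkeeping.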

Collections declare these dependencies in three files: requirements.txt (Python packages), bindep.txt (system packages), and galaxy.yml (other collections). You can find examples of these files in the netcommon collection, which includes all three.

There is a tradeoff in restricting the collection list in the image to the essentials: It keeps the images small; however, it requires keeping track of dependencies and versions in the project. There are edge cases where you may want to have every collection you use built into the image. Ensure that whenever you update a collection, you also rebuild the image.

There is also a tradeoff because automation controller requires you to update the project whenever collections are updated. The project update does not need to run every time a job launches, only when an underlying collection or the project source changes. The alternative is to build a larger image with the required collections and push that as necessary. Using the project update sync can also be a way to test a new collection release without rebuilding the entire container, assuming that the collection's dependencies have not changed.

2. Update execution environments regularly

The upstream and downstream base images get updated regularly. Recently, releases with updates, CVE fixes, and other improvements have arrived roughly every two weeks. Because of this, it is important to keep your builds up to date. A CI/CD build process helps because you can schedule it. The images that ship with the Ansible Automation Platform installer are not updated on their own. To get regular updates, you can set up registry.redhat.io as an external registry and pull the images from there into automation hub on a regular basis.

Collections release on their own cadence. Even if the base image doesn't have a new release, a collection might have released a new version with bug fixes and updates. It is also important to keep your collections current. Having a process to regularly build a new EE with updated base images and collections can be highly beneficial.
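
One way to schedule such a build is a CI workflow with a cron trigger. The following is a minimal sketch for GitHub Actions, not a tested pipeline. The workflow path, the image tag, and the definition file name are hypothetical, and it assumes podman is available on the runner. Registry login and push steps are left out because they depend on your registry.

```yaml
# .github/workflows/build-ee.yml (hypothetical path)
name: Scheduled EE build

on:
  schedule:
    - cron: "0 3 * * 1"   # every Monday at 03:00 UTC
  workflow_dispatch: {}    # also allow manual runs

jobs:
  build:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4

      - uses: actions/setup-python@v5
        with:
          python-version: "3.11"

      - name: Install ansible-builder
        run: python -m pip install ansible-builder

      - name: Build the EE image
        # The tag is a placeholder; add login and push steps for your registry.
        run: >
          ansible-builder build
          --file execution-environment.yml
          --tag my-registry.example.com/ee/custom-ee:latest
          --container-runtime podman
```

A weekly schedule is an arbitrary choice here; pick an interval that matches how often your base images and collections change.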

3. Do not create monoliths

It can be tempting to create a single EE to hold everything required. However, this can lead to bloat and dependency conflicts. Creating logical groupings of EEs with similar use cases and requirements is a better practice.

These groupings could follow common domains such as networking, cloud, and Windows, because the requirements and inclusions differ between them. For example, a networking EE has some Python dependencies, while a Windows EE requires built-in Kerberos configuration to authenticate to servers.
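
To make the grouping concrete, here is a sketch of two small definition files, shown as two YAML documents, instead of one monolith. The file paths, the base image tag, and the exact package lists are assumptions. A real Windows EE may need further build-time packages and a site-specific krb5.conf, which are not shown.

```yaml
---
# network-ee/execution-environment.yml (hypothetical path)
version: 3
images:
  base_image:
    name: registry.redhat.io/ansible-automation-platform-24/ee-minimal-rhel8:latest
dependencies:
  galaxy:
    collections:
      # The builder gathers this collection's own Python and system
      # requirements during the build.
      - name: ansible.netcommon
options:
  # The minimal base image ships microdnf instead of dnf.
  package_manager_path: /usr/bin/microdnf

---
# windows-ee/execution-environment.yml (hypothetical path)
version: 3
images:
  base_image:
    name: registry.redhat.io/ansible-automation-platform-24/ee-minimal-rhel8:latest
dependencies:
  galaxy:
    collections:
      - name: ansible.windows
  python:
    # Assumed: WinRM client library with its Kerberos extra.
    - pywinrm[kerberos]
  system:
    # Assumed Kerberos packages, written in bindep syntax.
    - krb5-workstation [platform:rpm]
    - krb5-devel [compile platform:rpm]
options:
  package_manager_path: /usr/bin/microdnf
```

Keeping the Kerberos pieces out of the networking image avoids carrying packages that the networking jobs never use.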

Avoiding monoliths also applies to your project repositories and collections. Far too often, I have seen customers with a single playbook repository that contains hundreds of playbooks or role repositories that contain everything under the sun. Having just one or two monoliths makes it hard to keep track of changes and versions of the roles. It's better to keep them in logical groupings and have many repositories. Roles and collections can include dependencies when there are common tasks.

4. Keep track of versions and why you included pieces

It can be tempting to use the latest version of everything, but the best practice is to keep track of what versions work, test these against the latest releases, and update after testing. This process can be frustrating and difficult to control, but testing against newer versions before putting them into production is a good habit. You can expect the Python in the official execution environments to be tested against the latest Ansible version. You should do the same for your custom modules or collections.

It can be helpful to add a comment explaining why you include a particular dependency in your custom collections or EE definition files. A year later, you can refer back to the note instead of trying to figure out why you added a particular Python library.
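
A small sketch of what such notes might look like in the dependencies section of a version 3 EE definition file. The version pins and the stated reasons are hypothetical examples.

```yaml
# Fragment of execution-environment.yml (version 3 schema)
dependencies:
  galaxy:
    collections:
      # Used by the network backup playbooks (hypothetical reason).
      # Hypothetical pin: the last version tested against those playbooks.
      - name: ansible.netcommon
        version: "6.1.0"
  python:
    # jmespath is required by the json_query filter, which the
    # reporting playbooks use (hypothetical reason).
    - jmespath==1.0.1
```

Because the comments live next to the pins, they are versioned with the definition and appear in the same diff when a pin changes.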

Build options for execution environments

The ansible-builder tool creates execution environments, but other utilities can also help build them. There are many ways to build container images; covering them all is impossible.

The builder tool relies on files being populated and updated before running. It creates an image you can publish to a container repository for later use. Read Automating execution environment image builds with GitHub Actions for an example of keeping all definitions for multiple EEs. This example uses GitHub CI/CD to do the publishing.

Another option is to use a container image system like Shipwright or OpenShift Builds, as covered in the article Creating automation execution environments using ansible-builder and Shipwright. You can combine this approach with other container images needed, but the example focuses on OpenShift. You could also use Tekton or other CI/CD pipelines.

I am a fan of automating your automation with automation. It's a mouthful, but why not use Ansible to automate the platform it runs on? I've worked with various people to craft the infra.controller_configuration collection, infra.ee_utilities, and a few other collections based around doing that. In the next section, I'll describe using these collections to automate building images and provide a way to use Ansible automation controller to build, publish, and update EEs. This practice is also useful for scheduling builds of EEs with new base images and collections.

Use Configuration as Code

Ansible is a method of automation, yet time and time again, I have seen people create and configure their automation by hand. Various collections are dedicated to managing Ansible Automation Platform with Ansible itself, an approach known as Configuration as Code (CaC).

The benefits of CaC include:

- Standardized settings across multiple instances, such as development and production

- Inherent version control by storing your configuration in a Git repository

- Simplified change tracking and easier troubleshooting of problems caused by a change

- Ability to use CI/CD processes to keep deployments current and prevent drift

The collections for managing CaC are:

- ansible.controller and awx.awx are module collections that interact with their respective products, automation controller and AWX. Both are built from the same code base and are specifically designed to make changes through the API.

- infra.controller_configuration is a role-based collection. It is a set of roles built to use one of the collections above to take object definitions and push their configurations to automation controller or AWX.

- infra.ah_configuration collection manages the automation hub. It contains modules and roles to manage and push configuration to the hub. It is built on the code from both of the previous collections but is specifically tailored to the automation hub.

- infra.ee_utilities helps build execution environments from definition variables and migrate from Tower to the automation controller.

- infra.aap_utilities helps install, back up, and restore the automation controller, and it includes other useful tools that don't belong in the other controller collections.

The infra collections are part of the new validated content available with the bundled install of Ansible Automation Platform and on Ansible Galaxy.
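
As a minimal sketch of the CaC idea, the playbook below uses the execution_environment module from ansible.controller to make sure an EE is registered in automation controller. The EE name, image location, and connection variable names are hypothetical; in practice the role-based collections wrap this kind of call behind variable definitions.

```yaml
---
- name: Register an execution environment in automation controller
  hosts: localhost
  connection: local
  gather_facts: false
  tasks:
    - name: Ensure the custom EE is defined
      ansible.controller.execution_environment:
        name: Custom Network EE                                # hypothetical name
        image: my-hub.example.com/custom-network-ee:latest     # hypothetical image
        pull: always
        state: present
        # Connection details come from variables you supply (hypothetical names).
        controller_host: "{{ controller_hostname }}"
        controller_username: "{{ controller_username }}"
        controller_password: "{{ controller_password }}"
        validate_certs: true
```

Storing a playbook like this in Git gives you the version history and change tracking listed among the CaC benefits.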

The two primary collections for this article are infra.ee_utilities and infra.ah_configuration. They are key for automating the EE build process and scheduling EE builds. In my next article, I demonstrate how you can put all of this into practice.

Learn more

I hope this article offers some guidance and tools for building execution environments and managing Ansible Automation Platform. In the next article, I cover building the execution environments using Ansible and a few of the collections mentioned above.

In addition, for AnsibleFest 2022, my colleagues and I put together a lab workshop that covers these topics. The environment is not provided, but you should be able to complete it with an automation controller and hub.

Experience self-paced interactive hands-on labs with Ansible Automation Platform to gain more knowledge.

Finally, if you want a deep dive into the Ansible Automation Platform, look for my book from Packt, Demystifying Ansible Automation Platform.
