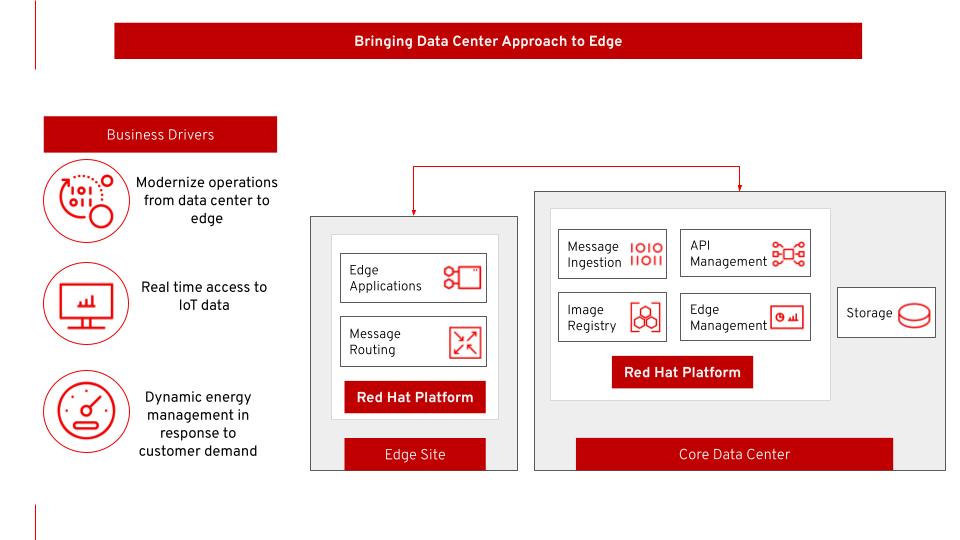

As companies adopt cloud-native computing for their enterprise workloads, many want to extend their cloud datacenter processes to edge sites so they can apply agile development methodologies, continuous integration (CI), continuous deployment (CD), and automation there too. With cloud computing, a company can rapidly deploy and manage resources across multiple locations, whether at a central datacenter, in the cloud, or at an edge site.

In particular, operations-intensive industries like manufacturing and transportation are transforming to become operationally agile. These companies are driven by the business imperative to manage distributed operations better across the supply chain, factory floor, or work sites.

The following architecture is based on a company operating across a vast geographical area that connects remote onsite operations with downstream processing centers and delivery. The company needs to monitor the condition of its physical pipeline and other infrastructure for operational safety and optimization.

This architecture takes advantage of Internet of Things (IoT) technologies for acquiring telemetry data and metrics.

Conceptually, the datacenter-to-edge solution stack is deployed on an open cloud-native infrastructure (such as Red Hat OpenShift) and is split between:

- IoT functions distributed across edge sites

- Core functions located at a centralized site

You can access these diagrams and other resources at the Red Hat Portfolio Architecture Center.

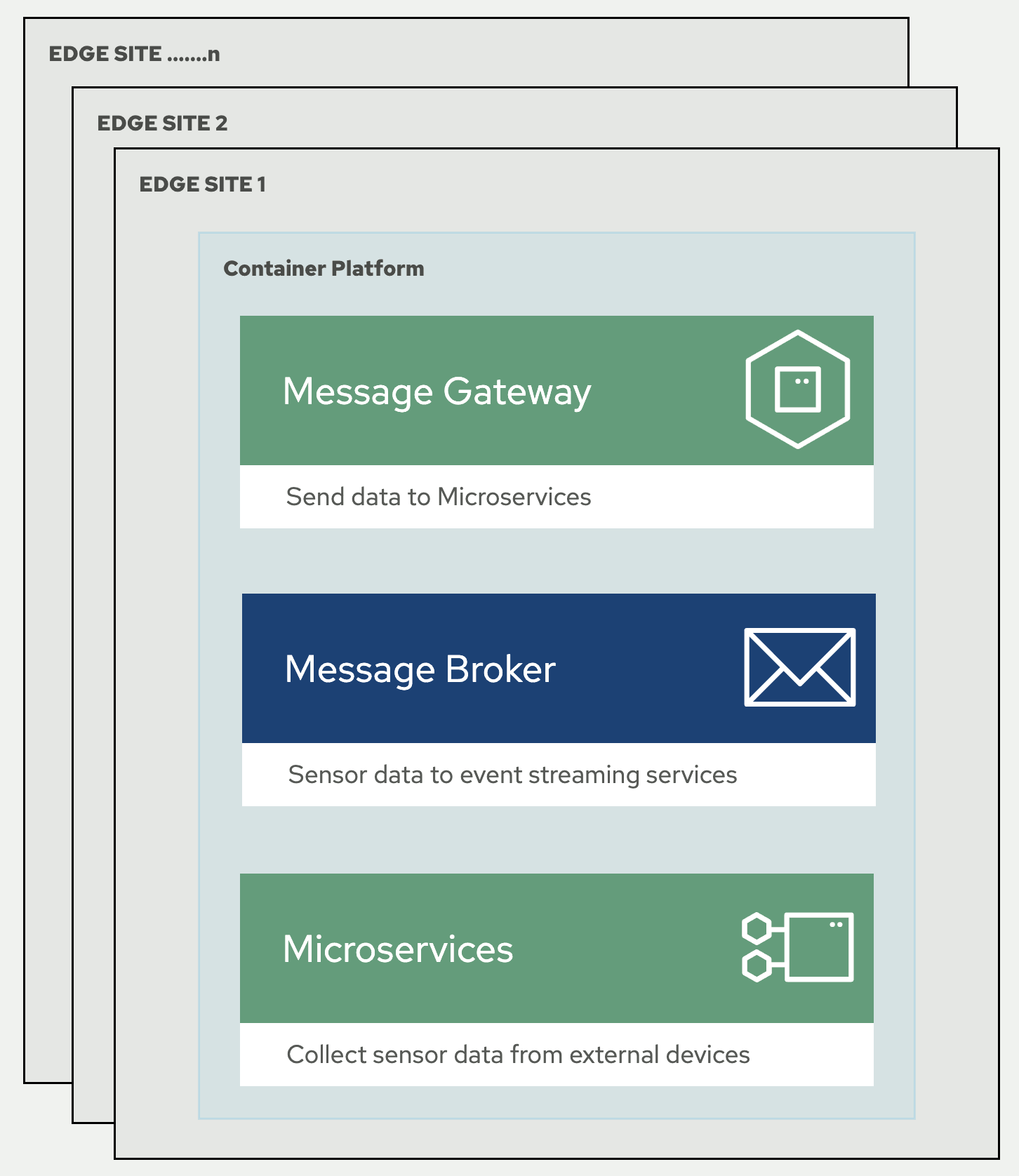

Edge sites

At edge sites distributed across wide geographic locations, telemetry data from sensors is acquired, normalized, and processed before it's transmitted to the core data center. New edge applications can be deployed in the future to add capabilities such as real-time analytics.
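As a rough illustration of the acquire, normalize, and process steps described above, the following Python sketch maps vendor-specific sensor payloads onto one schema and reduces a window of readings to a summary before it is sent to the core site. The payload fields (`id`, `metric`, `value`, `unit`, `ts`), the `Reading` class, and the choice of kPa as the common pressure unit are assumptions made for this example, not part of the reference architecture.

```python
from dataclasses import dataclass
from statistics import mean

# Conversion factor used to bring psi readings onto a common kPa scale.
PSI_TO_KPA = 6.894757


@dataclass(frozen=True)
class Reading:
    """Hypothetical common schema for one normalized sensor reading."""
    sensor_id: str
    metric: str      # for example "pressure"
    value: float     # pressure values are stored in kPa after normalization
    timestamp: int   # seconds since the epoch


def normalize(raw: dict) -> Reading:
    """Map a vendor-specific payload onto the common schema."""
    value = float(raw["value"])
    if raw.get("unit") == "psi":
        value *= PSI_TO_KPA
    return Reading(raw["id"], raw["metric"], value, int(raw["ts"]))


def summarize(readings: list[Reading]) -> dict:
    """Reduce a non-empty window of readings to the summary sent upstream."""
    values = [r.value for r in readings]
    return {
        "sensor_id": readings[0].sensor_id,
        "metric": readings[0].metric,
        "count": len(values),
        "mean": mean(values),
        "max": max(values),
        "window_start": min(r.timestamp for r in readings),
        "window_end": max(r.timestamp for r in readings),
    }


raw_window = [
    {"id": "p1", "metric": "pressure", "value": "100", "unit": "kpa", "ts": 100},
    {"id": "p1", "metric": "pressure", "value": "10", "unit": "psi", "ts": 160},
]
summary = summarize([normalize(r) for r in raw_window])
```

Sending one summary per window instead of every raw reading is one way to reduce traffic on constrained links between edge sites and the core; whether that trade-off is appropriate depends on the workload.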

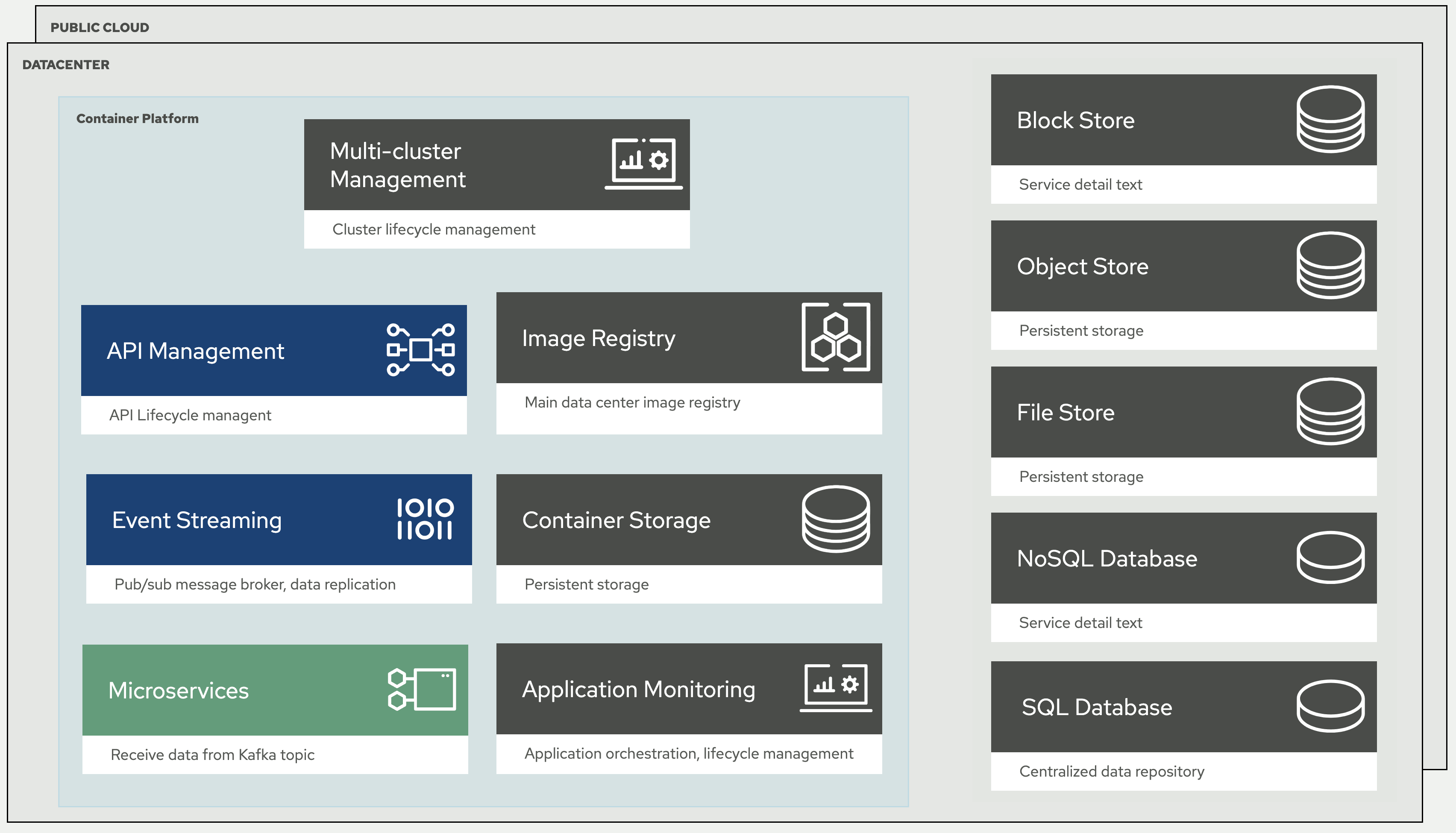

Centralized sites

In addition to core enterprise functions, the core data center and cloud are also responsible for processing and long-term storage of edge data. The cluster and application life cycle management functions, including the ones at edge sites, are also handled at the central location.

Infrastructure

An extensible, software-defined infrastructure provides a consistent framework that extends from central to edge sites. The virtualization element enables greater flexibility and automation of computing resources.

By taking a comprehensive approach, a company can integrate IoT data with other enterprise data to gain insights. It can also automate the provisioning and configuration management of edge resources, which makes application deployment more agile.
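One common way to automate configuration of many edge sites is to declare the desired state centrally and compute what each site needs to change. The sketch below shows that idea in plain Python. The function name `plan_changes`, the application names, and the version strings are all hypothetical, and this is not how any particular Red Hat product implements it.

```python
def plan_changes(desired: dict[str, str], actual: dict[str, str]) -> dict[str, list[str]]:
    """Compare desired application versions with what an edge site reports.

    Both arguments map an application name to a version string.
    """
    return {
        # Declared centrally but not yet running at the site.
        "deploy": sorted(app for app in desired if app not in actual),
        # Running at the site, but at a different version than declared.
        "update": sorted(
            app for app in desired if app in actual and actual[app] != desired[app]
        ),
        # Running at the site but no longer declared.
        "remove": sorted(app for app in actual if app not in desired),
    }


# Hypothetical desired state held at the central site.
desired_state = {"telemetry-normalizer": "1.2", "analytics": "0.9"}
# Hypothetical state reported by one edge site.
site_state = {"telemetry-normalizer": "1.1", "legacy-agent": "3.0"}

plan = plan_changes(desired_state, site_state)
```

Because the plan is derived from a comparison rather than from a list of manual steps, the same declaration can be applied to any number of edge sites.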

Technology components

The following technology was chosen for this architecture:

- Red Hat OpenShift is an enterprise-ready Kubernetes container platform built for an open hybrid cloud strategy. It provides a consistent application platform to manage hybrid cloud, multicloud, and edge deployments.

- Red Hat Application Services helps an organization use the cloud delivery model and simplify continuous delivery of applications the cloud-native way. It's built on open source technologies and provides development teams multiple modernization options to enable a smooth transition to the cloud for existing applications.

- Red Hat AMQ Streams is a data-streaming platform with high throughput and low latency. It streams sensor data to corresponding microservices and automated diagnosis.

- Red Hat Advanced Cluster Management controls clusters and applications from a single console with built-in security policies. It extends the value of OpenShift by deploying applications, managing multiple clusters, and enforcing policies across multiple clusters at scale.

- Red Hat Quay is a private container registry that stores, builds, and deploys container images. It analyzes images for security vulnerabilities and identifies potential issues so that teams can mitigate risks.

- Red Hat OpenShift Data Foundation is software-defined storage for containers. As the data and storage services platform for OpenShift, OpenShift Data Foundation helps teams develop and deploy applications quickly and efficiently across clouds.

- Red Hat Enterprise Linux (RHEL) is an open source Linux operating system providing a foundation to scale existing apps—and roll out emerging technologies—across bare-metal, virtual, container, and all types of cloud environments.
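To make the AMQ Streams role in the list above more concrete, here is a minimal in-memory sketch of topic-based routing, where sensor summaries published to a topic are delivered to every microservice subscribed to it. AMQ Streams is based on Apache Kafka, but this sketch deliberately uses no Kafka client. The `InMemoryBroker` class, the topic name `pipeline.pressure`, and the 500 kPa threshold are invented for illustration.

```python
from collections import defaultdict
from typing import Callable

Handler = Callable[[dict], None]


class InMemoryBroker:
    """Toy stand-in for a streaming platform; not a Kafka client."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        """Register a handler that receives every message on the topic."""
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, message: dict) -> int:
        """Deliver the message to each subscriber; return how many received it."""
        handlers = self._subscribers.get(topic, [])
        for handler in handlers:
            handler(message)
        return len(handlers)


archive: list[dict] = []   # stands in for a long-term storage service
alerts: list[dict] = []    # stands in for an automated diagnosis service


def diagnose(message: dict) -> None:
    """Flag summaries whose mean pressure exceeds a hypothetical threshold."""
    if message["mean"] > 500.0:
        alerts.append(message)


broker = InMemoryBroker()
broker.subscribe("pipeline.pressure", archive.append)
broker.subscribe("pipeline.pressure", diagnose)

broker.publish("pipeline.pressure", {"sensor_id": "p1", "mean": 420.0})
delivered = broker.publish("pipeline.pressure", {"sensor_id": "p2", "mean": 615.0})
```

A real streaming platform adds persistence, partitioning, and consumer groups on top of this basic publish and subscribe pattern, which is what lets producers at the edge and consumers at the core scale independently.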

Using edge technology

Edge computing recognizes that modern industries are global ventures in a physical sense. You can use edge computing in conjunction with your cloud and datacenter strategies to become more agile and discover new ways to improve existing processes. Edge computing and the IoT are built on trusted and emerging open source technologies, so they are as flexible as you need them to be, which makes them a good fit for many industries.

About the author

Ishu Verma is an AI Solution Architect at Red Hat dabbling in emerging technologies like AI Ops and AI safety and security. He, along with fellow open source hackers, works on building enterprise-focused solutions with open source technologies. Prior to Red Hat, Ishu worked in technical marketing at Intel on IoT Gateways and building end-to-end IoT solutions with partners.
