This article is the third of a four-part series about the emergence of the modern data center. The previous installment explained the history of the networked PC. That article described the introduction of the personal computer, from the first Altair 8800 hobbyist machine to mass-marketed commodity PCs manufactured by companies such as IBM and Dell.

This installment looks at the transformation of the PC from a standalone computer meant for personal use to a networked machine that became as commonplace as the telephone on the desktop of every employee working in companies large and small.

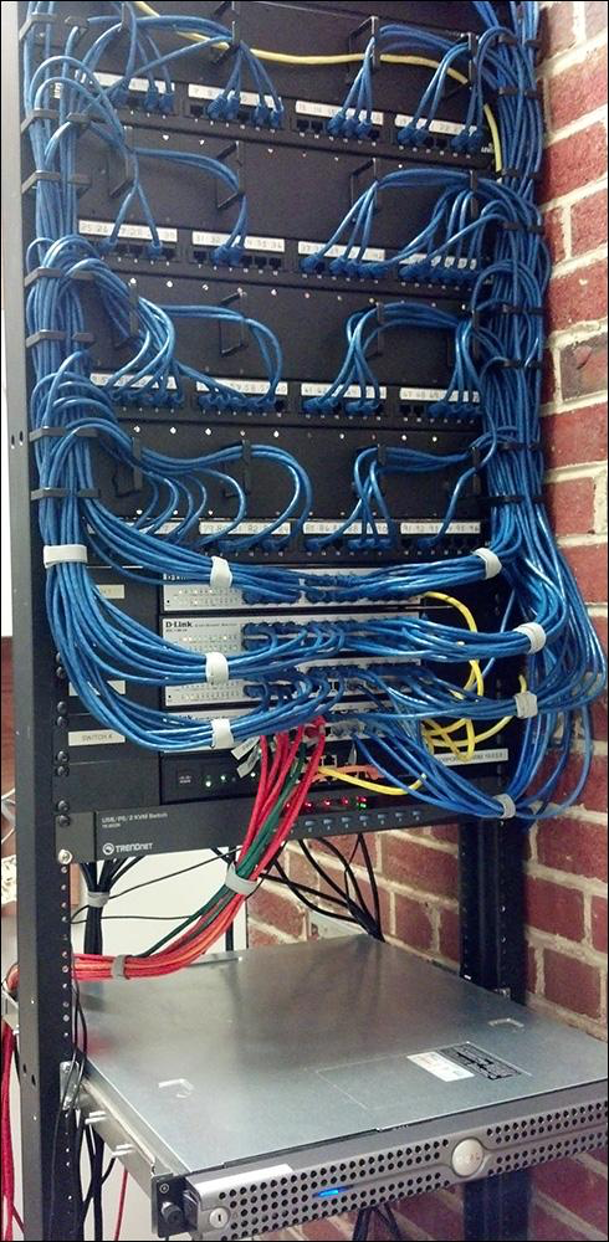

Anatomy of a server closet

If you were to visit a medium-size law firm back in 1998, you might have found a company with 200 lawyers and another 300 support staff spread across a few offices in the United States. Each employee in an office would have a computer. Some of those computers would be desktop boxes. Others would be laptops. Each computer would have a cable connected to it via an RJ-45 plug in the back. (See Figure 1.)

Figure 1: The RJ-45 is the standard connector for an Ethernet cable

The other end of the cable would be plugged into a wall socket using another RJ-45 plug. Inside the wall, another cable ran from the socket up to another floor into another room. That room was the server closet.

In the server closet, you'd find a rack of switches and routers into which hundreds of cables were connected. Each cable connected back to an employee's computer via the sockets installed in the wall throughout the floors occupied by the law firm. Each switch or router would be connected to another switch or router until eventually, it all reduced down to one cable coming from a single point. That one cable would be connected to a master computer—the server. That server ran the law firm's entire network at that location. (See Figure 2.)

Figure 2: In the early days of PC networking, cables connected everything

That server closet was one of many. Each business in the building where the law firm was a tenant would have its own server closet that contained the one or many servers that supported the given business. Not only would each business have its own server closet, but it also had its own staff whose job it was to make sure the server closet operated the way it was supposed to.

In a way, it was like the early days of electric power. At first, each home had its own generator, producing only the power it needed. After a while, municipalities had their own generators to power their surrounding areas. Each generator had to be staffed and maintained according to a variety of specifications. Construction varied; even output varied. Some generated direct current (DC), others alternating current (AC). While producing electricity using a decentralized approach made independence possible, it also made costly inefficiency inevitable.

Want to learn more about the history of all-purpose technologies such as mass-produced electricity and computing as a service? Read Nicholas Carr's book, The Big Switch: Rewiring the World, from Edison to Google.

Over time, small independent power plants gave way to massive giants that could generate power for an entire multi-state region. As these enormous power plants came online, the revenue model changed. Energy businesses went from making money selling generators to selling the electricity itself. The "pay for what you use" model created by the electric companies would later become the business model for modern cloud providers.

Clearly, creating one big room with all the servers for an entire building, maybe even a number of buildings, provided an efficiency of scale that made sense financially. Under the right circumstances, a staff that managed a single server closet could just as easily take care of a room full of servers, or even a floor full of servers, for that matter. However, to realize the efficiency, all networks that connected the servers everywhere had to work the same way. Sadly, they didn't.

The road to ubiquitous networking

As described in the previous installment, the computer networking industry was a divided landscape. Several companies were competing for a share and hopefully dominance of a market that was potentially valued at tens of billions of dollars. Each company had its way of doing things. The lack of standardization impeded growth. For the modern data center to emerge at a worldwide scale, a common standard was required. Standardization was realized using two technologies: Ethernet and TCP/IP.

Ethernet is a technology that started in 1973 at Xerox PARC and was made public in a 1976 paper by PARC employees Bob Metcalfe and David Boggs. (PARC was the place that inspired Steve Jobs to make the mouse the central device for interacting with the GUI-driven Macintosh computer Apple would release in 1984.) At the time, Ethernet was but one of many technologies for moving binary data over a network in a bi-directional manner. Others included token ring, introduced by IBM in 1984; ARCnet, created by Datapoint Corporation, an early manufacturer of computer terminals; and LocalTalk, created by Apple Computer.

Ethernet caught on for two reasons. First, it was an open standard that actually worked for a variety of machines, from mainframes to PCs. IBM's token ring was an open standard too, but when put into practice, non-IBM token ring technology did not work with IBM machines. Second, Ethernet was evolving to be faster than the other protocols at moving data over the network.

Metcalfe left PARC in 1979 to form 3Com, a company focused on Ethernet technologies. A short time later, Digital Equipment Corporation, Xerox, and Intel (the manufacturer of the chips that drove IBM and IBM-compatible PCs) agreed to manufacture technologies that used Ethernet. In 1983, the IEEE approved Ethernet as a standard. As a result, the protocol leaped to the forefront. By the mid-1990s, if a company had a networked computer, chances were there was an Ethernet card inside it.

TCP/IP's road to dominance was similar to that of Ethernet. The role of TCP/IP is to package and address data moving to and fro over the network. Using the postal service analogy, you can think of TCP/IP as the technology that puts a letter in an envelope and then writes the to and from addresses on the envelope. Once addressed, the letter is dropped off at the post office. In this case, the post office is the network. The envelope is a series of binary data packets, with each packet carrying the IP address of its destination in the packet's header.
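The envelope analogy can be sketched in a few lines of Python. This is only a toy illustration, not real TCP/IP: the `Packet` class, the `packetize` and `reassemble` helpers, the 8-byte slice size, and the example addresses are all assumptions made up for this sketch. It shows a message being cut into slices, with each slice stamped with "from" and "to" addresses and a sequence number so the receiver can put the message back together.

```python
from dataclasses import dataclass
from ipaddress import IPv4Address


@dataclass(frozen=True)
class Packet:
    """A toy packet: a small header plus one slice of the message."""
    source: IPv4Address       # the "from" address on the envelope
    destination: IPv4Address  # the "to" address on the envelope
    sequence: int             # position of this slice in the original message
    payload: bytes            # the slice of data carried by this packet


def packetize(message: bytes, source: str, destination: str, size: int = 8) -> list[Packet]:
    """Split a message into slices of at most `size` bytes, each addressed on its own."""
    src, dst = IPv4Address(source), IPv4Address(destination)
    return [
        Packet(src, dst, seq, message[offset:offset + size])
        for seq, offset in enumerate(range(0, len(message), size))
    ]


def reassemble(packets: list[Packet]) -> bytes:
    """Rebuild the message by sequence number, even if packets arrived out of order."""
    return b"".join(p.payload for p in sorted(packets, key=lambda p: p.sequence))


message = b"Please review the attached contract."
packets = packetize(message, "192.0.2.10", "198.51.100.7")
shuffled = list(reversed(packets))  # simulate out-of-order delivery
print(len(packets), reassemble(shuffled))
```

Because every packet carries its own addresses, the network can route each one independently. The sequence number is what lets the receiving side restore the original order.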

TCP/IP emerged from ARPANET, a project funded by the Defense Advanced Research Projects Agency (DARPA) in 1969. There were also other protocols in play that served the same purpose as TCP/IP. For example, there was IPX/SPX, which was used in early versions of Microsoft's DOS and Windows operating systems. IPX/SPX was core to Novell's NetWare. There was also a version for Macintosh computers, MacIPX.

These data transport protocols, and others like them, competed side by side throughout the 1980s and 1990s, with each experiencing an ebb and flow in popularity. However, when the World Wide Web came along, TCP/IP became the networking standard worldwide.

The age of ubiquitous networking had arrived. In 1995, there were 16 million people on the internet. Today, there are over 4 billion. Ethernet and TCP/IP are the engines that have powered this growth.

However, one more piece was needed to complete the puzzle. While moving data to and fro over a network is a critical aspect of universal computing, networking at the time was mostly about point-to-point computing: for example, accessing documents on a network or sending emails between sender and recipient. The notion of applications made up of components hosted throughout the network, so that the data center becomes the actual computer, was still an emerging idea. All that was needed were applications that could take advantage of this type of distributed architecture. There were, and the puzzle was about to be completed in an interesting way.

About the author

Bob Reselman is a nationally known software developer, system architect, industry analyst, and technical writer/journalist. Over a career that spans 30 years, Bob has worked for companies such as Gateway, Cap Gemini, The Los Angeles Weekly, Edmunds.com and the Academy of Recording Arts and Sciences, to name a few. He has held roles with significant responsibility, including but not limited to, Platform Architect (Consumer) at Gateway, Principal Consultant with Cap Gemini and CTO at the international trade finance company, ItFex.
