The amount of data we're generating is growing faster than any traditional datacenter has ever seen. The number of devices connected to the internet and the volume of data generated are growing far too quickly for legacy and conventional datacenters to handle. Moving these large volumes of data into a single centralized location puts a massive strain on the global internet infrastructure. Hence, architects are looking to process data near where the data is generated, at an edge location.

"Around 10% of enterprise-generated data is created and processed outside a traditional centralized datacenter or cloud. By 2025, Gartner predicts this figure will reach 75%" —Gartner

Edge computing is about where the data is processed. It is a distributed computing paradigm, and the idea is to deliver low-latency responses as near as possible to where the data is produced.

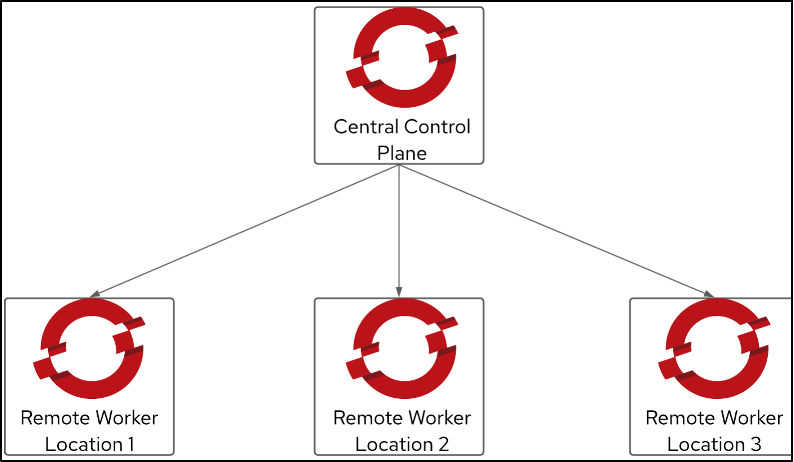

OpenShift is a container platform that is conformant with Kubernetes. OpenShift worker nodes can be deployed at edge locations using a remote worker node configuration. This article looks at the challenges and mitigations in an OpenShift cluster with remote worker nodes.

Challenges

There are two primary challenges for this type of deployment. The first is managing the provisioning of remote nodes. The second is handling node isolation situations.

1. Provisioning remote nodes

Edge computing solutions typically run on the physical bare-metal machine. I use Red Hat Advanced Cluster Management for Kubernetes (RHACM) to help manage the cluster lifecycle for installer-provisioned (IPI) clusters on bare metal. With this, I can quickly discover the hardware and provision (or reprovision) the remote worker nodes when required from a centralized management console. RHACM also has application lifecycle management capabilities. Using RHACM GitOps, I can place the application on the remote worker node where I need it to be available while still managing its lifecycle.

2. Node isolation

When I deploy a remote worker node, the distance between the node and the control plane can introduce a network disruption issue. Power loss can be another contributing factor to a disruption. Remote worker nodes send heartbeats to the control plane every 10 seconds. The cluster will respond using several default recovery mechanisms if the control plane does not receive the heartbeat: The control plane will mark the node health Unhealthy and set the node from Ready to Unknown. Pod eviction will start five minutes after this because the control plane taints the node with node.kubernetes.io/unreachable=NoExecute. There are several mitigation options to overcome this challenge and avoid workload disruption.

How to mitigate pod eviction challenges

Consider the following five options for mitigating workload disruption.

1. Use Kubernetes zones

I can use Kubernetes zones to alter pod eviction behavior. The control plane does not apply the node.kubernetes.io/unreachable=NoExecute taint to the nodes if the zone is fully disrupted.

Here is an example of the Kubernetes zone definition for the node:

kind: Node

apiVersion: v1

metadata:

labels:

topology.kubernetes.io/region=zone-a

2. Use a static pod deployment

I can use a static pod deployment if I need to make sure the pod automatically restarts whenever the node starts. Some limitations apply to static pods; for example, they can't use secrets and config maps.

3. Adjust the time for kubelet checks

I use KubeletConfig and change the node-status-update-frequency and node-status-report-frequency settings to configure the interval that affects the timing when kubelet and the controller check the state of each node.

4. Mitigate pod tolerations

To mitigate the effect of the tainting mechanism when the node is marked as Unhealthy, I can deploy pods with tolerances that address the NoExecute setting. I also can use tolerationSeconds to delay the eviction. This value delays removal after the pod-eviction-timeout value lapses. Therefore, I can avoid or delay a pod eviction by configuring pod tolerations properly.

5. Daemon sets

I believe this option is the best approach if I want to deploy and run the pod on remote worker nodes for several reasons:

- It does not change state when the node changes state to

Unhealthy. It also does not typically need rescheduling behavior. NoExecutetolerations are configured when the daemon set pod is placed.- With labels, I can ensure that a workload runs on a remote worker node matching the one where I want this pod to run.

Wrap up

The edge architecture approach brings challenges, and enterprise architects should think strategically about ways to mitigate them. When you want to run OpenShift with remote worker nodes, the main concern is to make sure that the workload, deployment, and platform can handle disruptions due to the distributed nature of the whole architecture. The strategies above are common examples of mitigating disruption using default OpenShift configurations and managing infrastructure and application lifecycles using centralized RHACM.

About the author

Muhammad has spent almost 15 years in the IT industry at organizations, including a PCI-DSS secure hosting company, a system integrator, a managed services organization, and a principal vendor. He has a deep interest in emerging technology, especially in containers and the security domain. Currently, he is part of the Red Hat Global Professional Services (GPS) organization as an Associate Principal Consultant, where he helps organizations adopt container technology and DevSecOps practices

More like this

4 reasons to start using image mode for Red Hat Enterprise Linux right now

Hardened, ready, and no cost: Container security evolved

Becoming a Coder | Command Line Heroes

Are Big Mistakes That Big Of A Deal? | Compiler

Browse by channel

Automation

The latest on IT automation for tech, teams, and environments

Artificial intelligence

Updates on the platforms that free customers to run AI workloads anywhere

Open hybrid cloud

Explore how we build a more flexible future with hybrid cloud

Security

The latest on how we reduce risks across environments and technologies

Edge computing

Updates on the platforms that simplify operations at the edge

Infrastructure

The latest on the world’s leading enterprise Linux platform

Applications

Inside our solutions to the toughest application challenges

Virtualization

The future of enterprise virtualization for your workloads on-premise or across clouds